Today's Overview

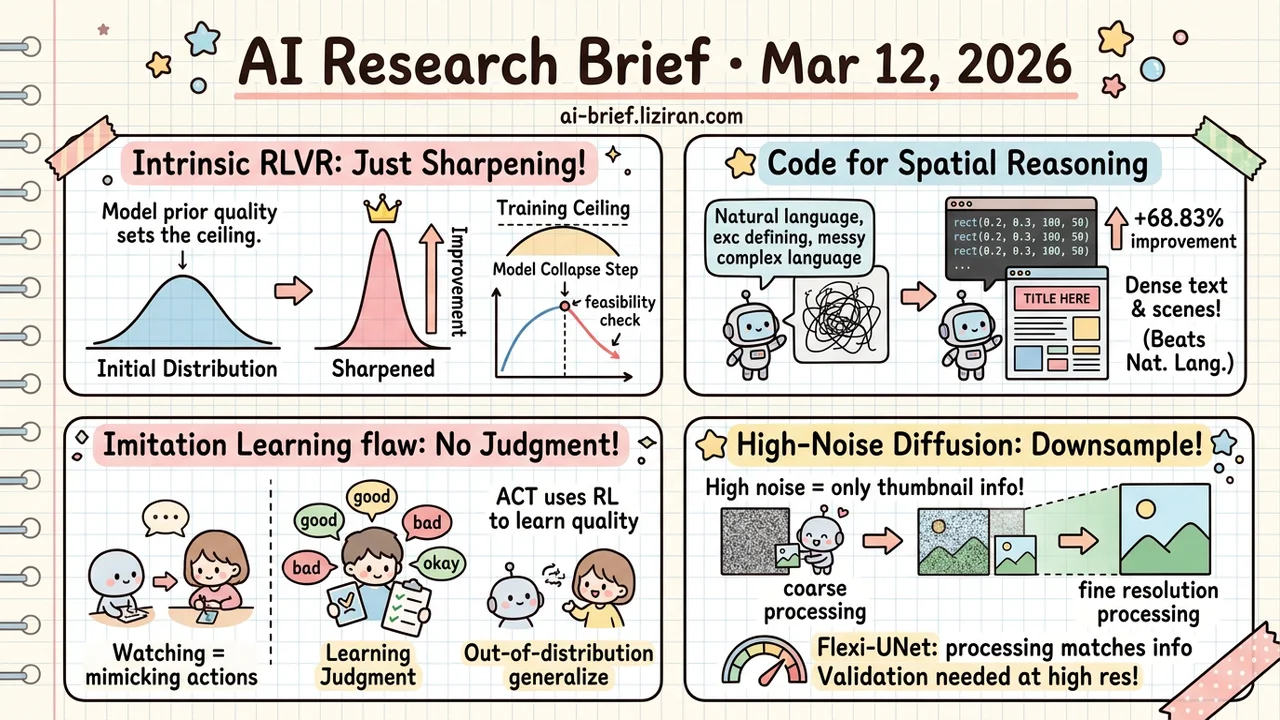

- All Intrinsic RLVR Is Just Sharpening the Initial Distribution. Model prior quality sets the training ceiling. Model Collapse Step can predict feasibility before you commit resources.

- Code Beats Natural Language as a Spatial Reasoning Chain. CoCo improves on direct generation by 68.83% on structured layout benchmarks, with the largest gains on dense text and multi-element scenes.

- Imitation Learning's Structural Flaw Is Missing Judgment Training. ACT uses RL to make models compare and evaluate candidate actions. The critical thinking transfers to out-of-distribution tasks.

- High-Noise Diffusion Steps Only Need a Thumbnail. The information content equals a downsampled low-res image — full-resolution processing is wasted compute. Theory is solid, but quality tradeoffs at high resolution need validation.

Featured

01 Training Where Does Unsupervised RL Hit Its Ceiling?

Intrinsic RLVR, meaning unsupervised reinforcement learning that uses the model's own signals as reward, has seen plenty of experiments lately. Most show that "it improves"; none explain where it stops. This paper provides the most systematic analysis yet. It classifies unsupervised RLVR (URLVR) methods into intrinsic (the model's own signals) and external (outside computational verification), then proves under a unified framework that all intrinsic methods do the same thing: sharpen the model's initial distribution.

When the model's initial confidence aligns with correctness, sharpening helps. When it doesn't, training collapses. Experiments confirm this: intrinsic reward follows a rise-then-fall pattern across all methods. The collapse point depends on model prior quality, not hyperparameter tuning.
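To make the "sharpening" intuition concrete, here is a minimal toy sketch. It is not the paper's formalism: the power-and-renormalize rule, the `sharpen` function, and both example priors are assumptions chosen only for illustration.

```python
def sharpen(probs, alpha):
    """Raise each probability to the power alpha and renormalize.

    alpha > 1 concentrates mass on whatever the distribution already favors.
    This is an illustrative stand-in for sharpening, not the paper's method.
    """
    powered = [p ** alpha for p in probs]
    total = sum(powered)
    return [p / total for p in powered]


# Hypothetical priors over three candidate answers; index 0 is the correct one.
aligned_prior = [0.5, 0.3, 0.2]     # the model already favors the correct answer
misaligned_prior = [0.3, 0.5, 0.2]  # the model favors a wrong answer

for name, prior in [("aligned", aligned_prior), ("misaligned", misaligned_prior)]:
    for alpha in (1, 2, 4):
        p_correct = sharpen(prior, alpha)[0]
        print(f"{name:10s} alpha={alpha}: P(correct) = {p_correct:.3f}")
```

With the aligned prior, the probability of the correct answer grows as `alpha` increases; with the misaligned prior, it shrinks. In this toy, no choice of `alpha` rescues the misaligned case, because sharpening only amplifies what the prior already prefers.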

Intrinsic reward still works well for test-time training on small datasets. The paper also introduces Model Collapse Step as a predictive metric — essentially a feasibility check before committing to an RL run. On the external reward side, early experiments exploit computational asymmetry (generation is hard, verification is easy) to build reward signals that may bypass the confidence ceiling. Still early evidence, but a direction worth tracking.
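The generation/verification asymmetry can be illustrated with a toy task. The sketch below is an assumption-laden example, not something taken from the paper: integer factorization stands in for a "hard to generate, easy to verify" problem, and `verify_factorization` and `external_reward` are hypothetical names.

```python
def verify_factorization(n, factors):
    """Cheap check that `factors` is a nontrivial factorization of n.

    Verification is a handful of multiplications, while finding the
    factors of a large n is much harder. That gap is the asymmetry.
    """
    if len(factors) < 2 or any(f <= 1 for f in factors):
        return False
    product = 1
    for f in factors:
        product *= f
    return product == n


def external_reward(n, proposed_factors):
    """Binary reward that does not depend on the model's own confidence."""
    return 1.0 if verify_factorization(n, proposed_factors) else 0.0


print(external_reward(91, [7, 13]))  # valid factorization
print(external_reward(91, [7, 12]))  # product is wrong
print(external_reward(91, [91]))     # trivial answer is rejected
```

The point of the design is that the reward comes from a check outside the model, so a confidently wrong answer still scores zero.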

Key takeaways:

- All intrinsic RLVR sharpens the initial distribution; model prior quality sets the ceiling, not engineering tricks

- Model Collapse Step predicts RL training viability. Run it before investing compute

- External reward via computational asymmetry is a promising escape route, but needs more validation

Source: How Far Can Unsupervised RLVR Scale LLM Training?

02 Image Gen Code as Reasoning Chain: Write a Program, Then Draw

Having a model write code before generating an image sounds like a detour. CoCo shows it's a shortcut. The model generates executable code defining the scene's spatial layout, renders a deterministic sketch in a sandbox, then refines it into a high-fidelity image. Code natively handles precise coordinates, loops, and conditionals — exactly what natural language chain-of-thought can't express. The gap is largest on dense typography and complex multi-element scenes.

On structured layout benchmarks, CoCo improves 68.83% over direct generation and beats other CoT approaches across the board. Programmatic expression is a better spatial reasoning language than free-form text.
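As a rough illustration of why code suits layout, the sketch below expresses a grid with exact gaps using a loop, then renders a deterministic coarse preview. It is not CoCo's actual pipeline: `Box`, `grid_layout`, `render_ascii`, and all the sizes are hypothetical, and an ASCII canvas stands in for the sandbox-rendered sketch.

```python
from dataclasses import dataclass


@dataclass
class Box:
    label: str
    x: int
    y: int
    width: int
    height: int


def grid_layout(labels, columns, cell_w, cell_h, gap):
    """Place labeled boxes on a grid with an exact pixel gap between cells."""
    boxes = []
    for i, label in enumerate(labels):
        row, col = divmod(i, columns)
        boxes.append(Box(label, col * (cell_w + gap), row * (cell_h + gap), cell_w, cell_h))
    return boxes


def render_ascii(boxes, scale=10):
    """Deterministic preview: one character per `scale` pixels."""
    cols = max(b.x + b.width for b in boxes) // scale
    rows = max(b.y + b.height for b in boxes) // scale
    canvas = [["." for _ in range(cols)] for _ in range(rows)]
    for b in boxes:
        for r in range(b.y // scale, (b.y + b.height) // scale):
            for c in range(b.x // scale, (b.x + b.width) // scale):
                canvas[r][c] = b.label[0]
    return "\n".join("".join(row) for row in canvas)


boxes = grid_layout(["A", "B", "C", "D"], columns=2, cell_w=100, cell_h=50, gap=30)
print(render_ascii(boxes))
```

The 30-pixel gap is a single parameter here, and it holds exactly for every pair of neighbors. A free-text plan would have to restate that constraint for each element and hope the generator honors it.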

Key takeaways:

- Code is a natural fit for spatial layout: more precise and controllable than natural language reasoning chains

- Biggest gains on dense text and multi-element scenes; the structured layout benchmark improvement is 68.83% over direct generation

- Teams doing complex text-to-image generation should watch the "code as reasoning chain" pattern

Source: CoCo: Code as CoT for Text-to-Image Preview and Rare Concept Generation

03 Agent Can You Learn Judgment From Perfect Demonstrations Alone?

You can learn execution from watching correct demonstrations. But execution isn't understanding. The model mimics expert actions without ever comparing good against bad — it never develops judgment about action quality. Some work tries to patch this by having models imitate pre-written reflections, but imitating reflection and actually learning to reflect are different things.

ACT takes a different path. RL trains the model to judge which of two candidate actions is better, rewarding correct judgments. The model develops its own ability to evaluate action quality rather than mimicking scripted self-critique. On three agent benchmarks, ACT beats imitation learning by 5 points on average and direct RL by 4.6 points. It generalizes to out-of-distribution tasks and general reasoning — the model learned judgment itself, not task-specific reaction patterns.
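The reward described above can be sketched as a pairwise comparison task. This is a minimal sketch under stated assumptions, not ACT's implementation: the summary does not say how candidate pairs are built, so pairing an expert action with some alternative and shuffling their order are assumptions, and `make_pair` and `judgment_reward` are hypothetical names.

```python
import random


def make_pair(expert_action, alternative_action, rng):
    """Return (candidates, index_of_better) with the order randomized.

    Assumption: the expert action is treated as the better one.
    Shuffling keeps the model from learning a position shortcut.
    """
    if rng.random() < 0.5:
        return (expert_action, alternative_action), 0
    return (alternative_action, expert_action), 1


def judgment_reward(chosen_index, better_index):
    """Reward 1.0 only when the model picks the better candidate."""
    return 1.0 if chosen_index == better_index else 0.0


rng = random.Random(0)
candidates, better = make_pair("click(submit)", "scroll(down)", rng)
print(candidates, "better index:", better)
print("reward if correct:", judgment_reward(better, better))
print("reward if wrong:", judgment_reward(1 - better, better))
```

Only the final judgment is scored. The reasoning the model uses to reach it is left free, which is the contrast with imitating pre-written reflections.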

Key takeaways:

- Imitation learning's structural blind spot: exposure only to correct actions, no basis for developing judgment

- ACT uses RL for the model to autonomously learn action quality evaluation, not imitate pre-written reflections

- Strong out-of-distribution generalization confirms the critical thinking ability transfers across tasks

Source: Agentic Critical Training

04 Architecture Noisy Diffusion Steps Only Need a Thumbnail

Scale-space theory and diffusion models seem like separate fields. Scale Space Diffusion draws a formal connection with a counterintuitive conclusion: the information in a high-noise diffusion state is mathematically equivalent to a downsampled low-res image. Full-resolution denoising at early steps is doing useless work on details that don't exist yet.

Their solution, Flexi-UNet, denoises at only the resolution and network depth the current step actually needs. Coarse processing when noise is high, finer resolution as details emerge. The theory is clean, but experiments on CelebA and ImageNet leave a key question open: does the efficiency gain come at a quality cost? The paper shows scaling behavior analysis, not high-resolution generation benchmarks. Quality tradeoffs at higher resolutions remain unverified.
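The "coarse when noisy, fine when detailed" idea can be sketched as a schedule that maps noise level to working resolution. This is only an illustration: the paper derives its schedule from scale-space theory, whereas the linear mapping below, the `resolution_for_noise` function, and the default sizes are all assumptions.

```python
def resolution_for_noise(noise_level, full_res=256, min_res=16):
    """Pick a power-of-two working resolution for a given noise level.

    noise_level is in [0, 1], where 1.0 means pure noise.
    The linear bucket mapping is an arbitrary illustrative choice.
    """
    if not 0.0 <= noise_level <= 1.0:
        raise ValueError("noise_level must be in [0, 1]")
    levels = []
    res = full_res
    while res >= min_res:
        levels.append(res)  # e.g. 256, 128, 64, 32, 16
        res //= 2
    index = min(int(noise_level * len(levels)), len(levels) - 1)
    return levels[index]


for noise in (1.0, 0.75, 0.5, 0.25, 0.0):
    side = resolution_for_noise(noise)
    print(f"noise={noise:.2f} -> denoise at {side}x{side}")
```

Since pixel count scales with the square of the side length, each halving of resolution cuts the number of pixels processed by four. That is where the compute saving at high-noise steps would come from in this toy.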

Key takeaways:

- High-noise diffusion states carry information equivalent to low-res images; full-resolution processing is redundant

- Flexi-UNet dynamically matches processing resolution to information density: coarse when noisy, fine when detailed

- Theoretical foundation is strong, but generation quality tradeoffs need verification at higher resolutions

Source: Scale Space Diffusion

Today's Observation

Three of today's Featured papers did the same thing: they replaced a conventional supervision signal. Intrinsic RLVR replaces ground-truth labels with intrinsic model reward. ACT replaces expert demonstrations with learned critical judgment. CoCo swaps natural language planning for code structure.

The surface-level topics are unrelated. The underlying problem is the same: standard supervision formats lack expressive power. Labels say right or wrong, never why. Demonstrations show what to do, never why not something else. Natural language descriptions like "place A on the left, B on the right, leave 30 pixels between them" are inherently imprecise.

What's happening isn't the removal of supervision. It's a systematic upgrade of supervision signals from low-fidelity to high-fidelity — from single-bit correct/incorrect judgments toward signal forms that carry structure, causality, and quantitative relationships.

If you're designing a training pipeline, pause and ask: does your current supervision signal actually express what you want the model to learn? Labels, demonstrations, and natural language descriptions each have expressive limits. Choose the wrong signal form, and no amount of data will compensate.