Today's Overview

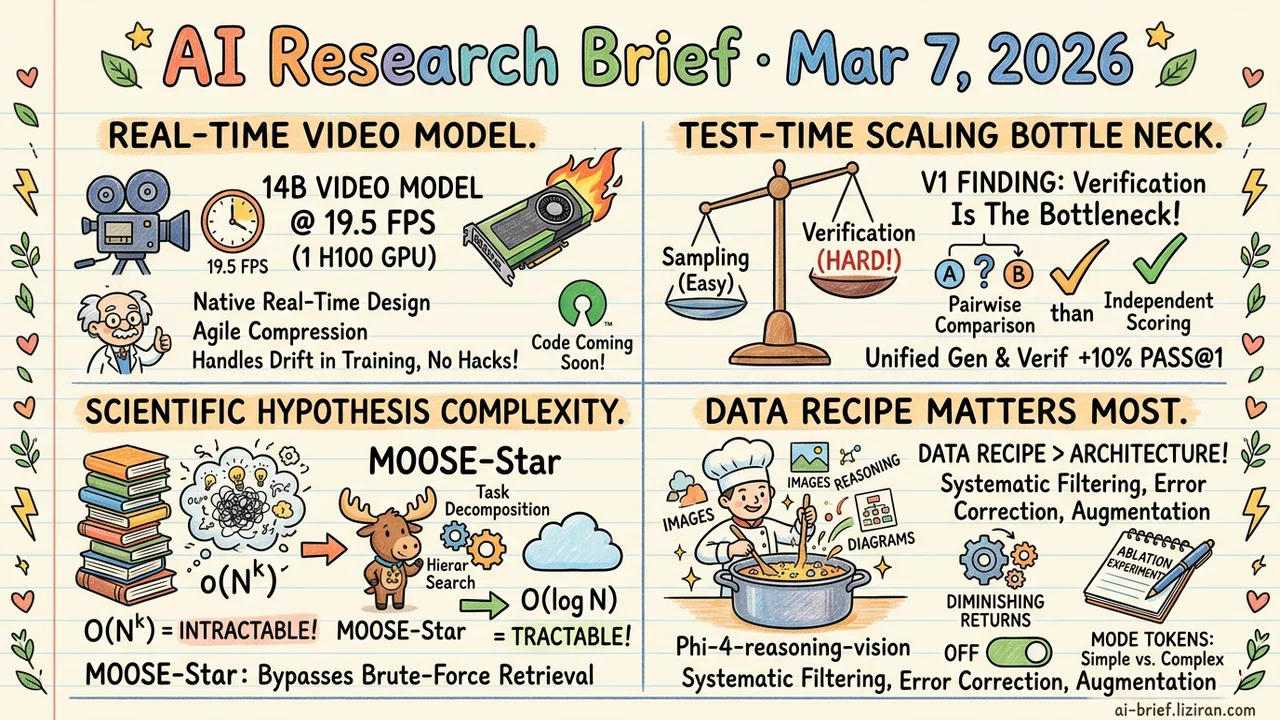

- 14B Video Model at 19.5 FPS on One GPU. No KV-cache, no sparse attention, no quantized inference. The architecture is natively designed for real-time generation, not patched after the fact.

- Verification Is the Real Test-Time Scaling Bottleneck. V1 finds that pairwise comparison far outperforms independent scoring. With generation and verification unified in one model, pairwise verification lifts Pass@1 by up to 10% over independent scoring.

- Scientific Hypothesis Search Complexity Drops from O(N^k) to O(log N) in the Best Case. MOOSE-Star first proves that brute-force retrieval is mathematically intractable, then bypasses it with task decomposition and hierarchical search.

- Data Recipe Matters Most for Multimodal Reasoning Models. The ablation experiments and design tradeoffs in the Phi-4-reasoning-vision-15B report deserve more attention than the model weights themselves.

Featured

01 Video Gen 14B Video Model Runs Real-Time, No Tricks

14B parameters, 19.5 FPS on a single H100, minute-long continuous generation. The numbers are impressive, but the path to them matters more. Helios uses no KV-cache, sparse attention, linear attention, or quantized inference. It also drops the common anti-drifting tricks: self-forcing, error banks, and keyframe sampling are all absent. This isn't an acceleration patch on an existing architecture. It's an autoregressive diffusion architecture designed from scratch for real-time generation.

The core idea: aggressively compress the historical frame and noise context representations while reducing the number of sampling steps. The compute cost of the 14B model drops to roughly that of a 1.3B model. Long-video drift isn't handled by inference-time heuristics either: training explicitly simulates drift and removes the root causes of repetitive motion. Infrastructure-level optimization fits four 14B models in 80GB of VRAM without parallelism or sharding frameworks.

127 community upvotes reflect more than approval of quality. They signal excitement about deployment feasibility. When a model runs real-time without specialized acceleration infrastructure, the deployment bar drops by an order of magnitude. Code and weights will be open-sourced.

Key takeaways:

- 14B model at 19.5 FPS on one GPU, compute cost reduced to 1.3B levels. Architecture natively designed for real-time, not retrofitted.

- Anti-drifting handled through training strategy, not inference heuristics. Drift eliminated at the source.

- Code and model will be open-sourced. Teams working on video generation deployment should watch closely.

Source: Helios: Real Real-Time Long Video Generation Model

02 Reasoning The Real Bottleneck Is Verification, Not Sampling

Test-time scaling typically means sampling multiple candidate solutions in parallel, then picking the best. The bottleneck is rarely the sampling itself. Berkeley's V1 found a telling asymmetry: models score individual solutions far less accurately than they compare pairs head-to-head.

V1 unifies generation and verification in one model, replacing per-solution scoring with tournament-style pairwise ranking. Uncertainty guidance focuses verification compute on the hardest-to-distinguish candidate pairs. On code generation and math reasoning, pairwise verification beats independent scoring by up to 10% on Pass@1 while using less compute than other test-time scaling methods.
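To make the tournament idea concrete, here is a minimal sketch of knockout-style pairwise selection with extra comparisons spent only on close calls. It is not V1's actual algorithm. The `compare` callback, the `margin` and `extra_votes` parameters, and the toy quality scores are all assumptions made up for illustration.

```python
from typing import Callable, List, Sequence


def pick_best(
    candidates: Sequence[str],
    compare: Callable[[str, str], float],
    margin: float = 0.2,
    extra_votes: int = 2,
) -> str:
    """Single-elimination tournament over candidate solutions.

    `compare(a, b)` is a hypothetical verifier call that returns the
    estimated probability that `a` is better than `b`.
    """
    if not candidates:
        raise ValueError("need at least one candidate")
    pool: List[str] = list(candidates)
    while len(pool) > 1:
        next_round: List[str] = []
        # Pair up neighbours; with an odd pool the last candidate gets a bye.
        for i in range(0, len(pool) - 1, 2):
            a, b = pool[i], pool[i + 1]
            votes = [compare(a, b)]
            # Uncertainty guidance: re-query only when the first call is close to 0.5.
            if abs(votes[0] - 0.5) < margin:
                votes += [compare(a, b) for _ in range(extra_votes)]
            p_a_wins = sum(votes) / len(votes)
            next_round.append(a if p_a_wins >= 0.5 else b)
        if len(pool) % 2 == 1:
            next_round.append(pool[-1])
        pool = next_round
    return pool[0]


# Toy stand-in for a model-based verifier (assumed scores, illustration only).
quality = {"sol_a": 0.2, "sol_b": 0.9, "sol_c": 0.5, "sol_d": 0.55, "sol_e": 0.1}


def toy_compare(a: str, b: str) -> float:
    return 0.5 + (quality[a] - quality[b]) / 2


best = pick_best(list(quality), toy_compare)
```

A knockout bracket needs only N - 1 matches for N candidates, so the extra votes on uncertain pairs are where most of the remaining verification budget goes in this sketch.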

Key takeaways:

- The real test-time scaling bottleneck is verification, not sampling volume.

- Pairwise comparison far outperforms independent scoring and can be unified with generation training to avoid distribution drift.

- Investing in better verification strategies beats stacking more samples.

Source: $V_1$: Unifying Generation and Self-Verification for Parallel Reasoners

03 AI for Science First Prove It's Impossible, Then Make It Work

Most work on LLMs for scientific discovery focuses on prompt design or feedback fine-tuning. MOOSE-Star asks a more fundamental question: can you directly train a model to generate hypotheses from background knowledge? The authors start with a discouraging proof: retrieving and combining inspiration from large knowledge bases has O(N^k) complexity, which is mathematically intractable.

Then they break the problem apart. The discovery process is decomposed into subtasks. Motivation-guided hierarchical search makes retrieval logarithmic, and bounded combination resists noise. In the best case, complexity drops from O(N^k) to O(log N). The accompanying TOMATO-Star dataset (108K decomposed papers, 38K GPU-hours) makes the framework trainable in practice. Where brute-force sampling hits the complexity wall, MOOSE-Star still shows continued test-time scaling gains.
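A quick back-of-the-envelope count shows how wide the gap between O(N^k) and O(log N) is. The sketch below is plain arithmetic, not MOOSE-Star's search procedure. It assumes that k is the number of knowledge-base items combined into one hypothesis and that the hierarchy is a balanced tree. The function names and the example sizes are hypothetical.

```python
import math


def brute_force_combinations(n: int, k: int) -> int:
    """Ways to pick k items out of n, which grows like n**k for fixed k."""
    return math.comb(n, k)


def tree_search_steps(n: int, branching: int = 2) -> int:
    """Levels descended in a balanced tree with `branching` children per node
    before a single one of n leaves is reached."""
    if n < 1 or branching < 2:
        raise ValueError("need n >= 1 and branching >= 2")
    depth = 0
    capacity = 1
    while capacity < n:
        capacity *= branching
        depth += 1
    return depth


# Assumed sizes for illustration: one million items, three combined per hypothesis.
n_items, k_items = 1_000_000, 3
print(f"brute-force combinations: {brute_force_combinations(n_items, k_items):.3e}")
print(f"binary-tree search depth: {tree_search_steps(n_items)}")
```

Under these assumptions the brute-force count is on the order of 10^17 combinations, while a binary hierarchy over the same million items is only 20 levels deep.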

Key takeaways:

- Directly modeling "background to hypothesis" generation is exponentially intractable, a more fundamental limitation than anything prompt engineering can fix.

- Task decomposition plus hierarchical retrieval compresses complexity from O(N^k) to O(log N) in the best case.

- The framework shows continued test-time scaling where brute-force sampling hits its ceiling.

Source: MOOSE-Star: Unlocking Tractable Training for Scientific Discovery by Breaking the Complexity Barrier

04 Multimodal Data Recipe Beats Architecture for Multimodal Reasoning

When building a compact multimodal reasoning model, the biggest unknown isn't which architecture to pick. It's how to mix the data. Phi-4-reasoning-vision-15B's technical report documents this in detail: systematic data filtering, error correction, and synthetic augmentation deliver larger gains than architectural innovation.

On the architecture side, high-resolution, dynamic-resolution encoders provide stable improvements, because accurate visual perception is a prerequisite for quality reasoning. One practical design choice: explicit mode tokens distinguish reasoning from non-reasoning data, so a single model can answer simple questions quickly or work through complex ones with chain-of-thought. For teams exploring this direction, the report's systematic ablations (what worked, what was abandoned) may be more valuable than the 15B model weights.
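The mode-token idea can be sketched as a data-formatting step. The token strings, the `Example` fields, and the template below are assumptions for illustration only and are not taken from the Phi-4-reasoning-vision-15B report.

```python
from dataclasses import dataclass

# Hypothetical mode tokens; the real model's token strings may differ.
THINK_TOKEN = "<|think|>"
DIRECT_TOKEN = "<|direct|>"


@dataclass
class Example:
    question: str
    answer: str
    reasoning: str = ""  # left empty for non-reasoning data


def format_example(ex: Example) -> str:
    """Tag each training example with a mode token so one model can learn
    both a fast direct-answer mode and a chain-of-thought mode."""
    if ex.reasoning:
        return f"{ex.question}\n{THINK_TOKEN}\n{ex.reasoning}\n{ex.answer}"
    return f"{ex.question}\n{DIRECT_TOKEN}\n{ex.answer}"


simple = Example("What color is the stop sign?", "Red.")
hard = Example(
    "How many legs do the three chairs in the image have in total?",
    "12",
    reasoning="Each chair has 4 legs. 3 * 4 = 12.",
)
formatted = [format_example(simple), format_example(hard)]
```

At inference time the same token could be placed in the prompt to request one mode or the other, which is how simple tasks would skip the reasoning overhead in this sketch.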

Key takeaways:

- Data filtering, error correction, and synthetic augmentation are the top performance lever. Architectural innovation shows diminishing returns.

- Explicit mode tokens enable dual reasoning/non-reasoning modes. Simple tasks skip reasoning overhead.

- The ablation experiments and design tradeoff documentation offer direct reference value for teams training similar models.

Also Worth Noting

Today's Observation

V1 samples 100 candidate solutions and can't pick the best one. MOOSE-Star hits exponential combinatorial blowup when retrieving inspiration from knowledge bases. Long-horizon agents in Memex(RL) find critical information buried in their own history. Three systems, three different problems, one shared premise: generation is already strong enough. The bottleneck has shifted to locating the right answer among generated results.

Over the past two years, the industry poured resources into larger models and more sampling. Generation capacity now overflows. Verification, retrieval, and knowledge-combination infrastructure hasn't kept pace. V1's pairwise verification beats independent scoring by up to 10% on Pass@1. MOOSE-Star compresses retrieval complexity from O(N^k) to O(log N) in the best case. Indexed memory in Memex(RL) makes distant evidence reachable. None of these improve generation. All of them improve selection.

If you're planning product or infrastructure investments, do one thing: audit the compute ratio between generation and selection in your system. When the generation side already produces enough candidates, shifting more budget toward verification and retrieval may yield higher returns.
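One way to start that audit is to total logged compute per pipeline stage. The sketch below assumes you already log GPU-seconds per stage. The stage names, the log format, and the numbers are hypothetical.

```python
from typing import Iterable, Tuple

# Hypothetical stage labels; map your own pipeline stages into these two sets.
GENERATION_STAGES = {"sample", "generate"}
SELECTION_STAGES = {"verify", "rank", "retrieve"}


def selection_share(log: Iterable[Tuple[str, float]]) -> float:
    """Fraction of total logged compute that goes to selection stages."""
    generation = 0.0
    selection = 0.0
    for stage, gpu_seconds in log:
        if stage in GENERATION_STAGES:
            generation += gpu_seconds
        elif stage in SELECTION_STAGES:
            selection += gpu_seconds
        else:
            raise ValueError(f"unknown stage: {stage}")
    total = generation + selection
    if total == 0:
        raise ValueError("log contains no compute")
    return selection / total


# Made-up numbers for illustration.
example_log = [("sample", 900.0), ("verify", 60.0), ("retrieve", 40.0)]
share = selection_share(example_log)
print(f"selection share of compute: {share:.0%}")
```

A low share is not a problem by itself. It only becomes a signal when the generation side already produces more candidates than the selection side can reliably sort through.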