Today's Overview

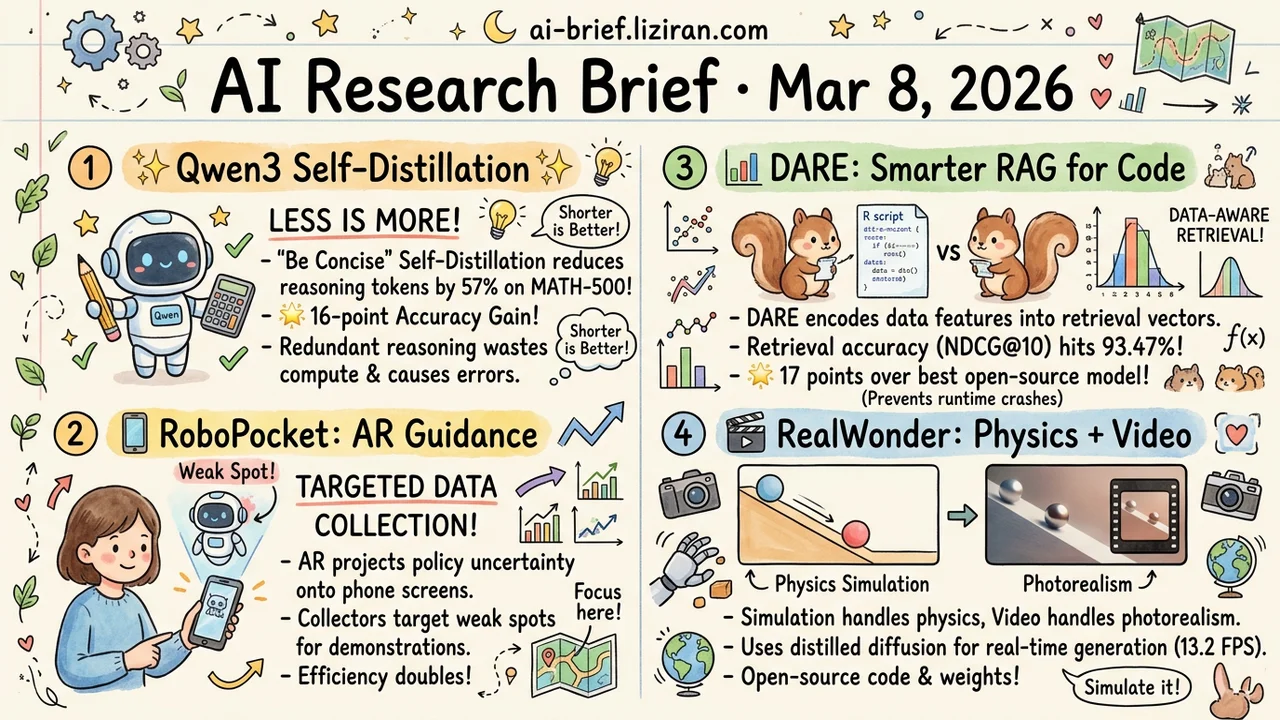

- "Be Concise" Self-Distillation Halves Tokens and Raises Accuracy. Qwen3 on MATH-500: 57% fewer reasoning tokens, 16-point accuracy gain. Redundant reasoning doesn't just waste compute — it actively introduces errors.

- Policy Already Knows Where It Fails. RoboPocket projects the model's uncertainty via AR onto a phone screen, letting data collectors target weak spots. Collection efficiency doubles.

- Semantic Match Scores High, but the Retrieved Function Crashes. DARE encodes data distribution features into retrieval vectors. NDCG@10 hits 93.47% — 17 points above the best open-source embedding model.

- Video Models Can't Do Physics, So Don't Teach Them. RealWonder offloads physical interactions to simulation, then translates to photorealism. 13.2 FPS at 480×832 resolution.

Featured

01 Efficiency "Be Concise" Halves Tokens, Accuracy Goes Up

The entire method fits in one sentence: prompt the same model with "please answer concisely," use its logits as the teacher signal, and run reverse KL distillation on the model's own rollouts. No external teacher, no human-labeled answers, no token budget, no difficulty estimator. Pure self-distillation — the model's concise mode teaching its default mode.
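The objective described above can be sketched in a few lines. This is a minimal illustration, not the paper's implementation: it assumes the student is the model under its default prompt, the teacher is the same model under a "please answer concisely" prompt, and both are scored on the same student-sampled rollout. The function names and toy logits are hypothetical, and a real training loop would compute this on tensors with gradients flowing only through the student.

```python
import math

def log_softmax(logits):
    """Numerically stable log-softmax over one vocabulary-sized logit vector."""
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]

def reverse_kl(student_logits, teacher_logits):
    """KL(student || teacher) at a single token position."""
    log_p = log_softmax(student_logits)  # default-prompt distribution (assumed student)
    log_q = log_softmax(teacher_logits)  # concise-prompt distribution (assumed teacher)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))

def sequence_loss(student_steps, teacher_steps):
    """Mean per-token reverse KL over one rollout sampled from the student."""
    per_token = [reverse_kl(s, t) for s, t in zip(student_steps, teacher_steps)]
    return sum(per_token) / len(per_token)

# Toy rollout: 2 token positions, vocabulary of 3 (made-up numbers).
student = [[2.0, 0.5, 0.1], [0.3, 0.3, 1.5]]
teacher = [[2.5, 0.1, 0.1], [0.3, 0.3, 1.5]]
print(sequence_loss(student, teacher))
```

Reverse KL weights the gap by the student's own probabilities, so the penalty concentrates on tokens the student actually tends to produce, which matches the on-policy framing of training on the model's own rollouts.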

Results are surprisingly strong. Qwen3-8B and 14B cut 57–59% of reasoning tokens on MATH-500 while accuracy rises 9–16 points. The 14B model also gains 10 points on competition-level AIME 2024 while cutting 41% of tokens. The paper's explanation is compelling: excessive intermediate steps don't just waste compute, they accumulate errors, because each unnecessary token is another chance to drift. The model already knows how to reason concisely. This method distills that ability from "prompted mode" back into default behavior.

Key takeaways:

- Self-distillation requires no external model or labeled data. Deployment barrier is near zero.
- 50%+ token reduction with accuracy gains confirms redundant reasoning actively harms quality.
- The method auto-calibrates by difficulty: aggressive compression on easy problems, full reasoning depth on hard ones.

Source: On-Policy Self-Distillation for Reasoning Compression

02 Robotics More Demos Won't Help Without Targeting Weak Spots

The data bottleneck in imitation learning isn't volume. It's targeting. Handheld devices made "in-the-wild" data collection scalable, but operators have no idea which actions the current policy has mastered and which it hasn't. Most demonstrations get wasted on scenarios the model already handles.

RoboPocket projects the policy's predicted trajectory onto the phone screen via AR. Collectors see exactly where the policy is likely to fail, then add demonstrations for those specific cases. Paired with async online fine-tuning, the correction loop closes in minutes. Data efficiency doubles compared to offline collection strategies.
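The targeting idea can be illustrated with a small ranking sketch. The digest does not say how RoboPocket estimates uncertainty, so this assumes one common proxy: sample several trajectories from the policy for each scene and treat their disagreement as uncertainty. The scene names, the `trajectory_spread` helper, and the collection budget are all hypothetical.

```python
import math

def trajectory_spread(samples):
    """Mean distance of sampled trajectories from their pointwise average.

    samples: list of trajectories, each a list of (x, y, z) waypoints
    of equal length. A larger spread means the samples disagree more.
    """
    n, steps = len(samples), len(samples[0])
    total = 0.0
    for t in range(steps):
        mean = [sum(s[t][d] for s in samples) / n for d in range(3)]
        dists = [
            math.sqrt(sum((s[t][d] - mean[d]) ** 2 for d in range(3)))
            for s in samples
        ]
        total += sum(dists) / n
    return total / steps

def pick_targets(scenes, budget):
    """Return the `budget` scene names whose sampled trajectories disagree most."""
    ranked = sorted(scenes, key=lambda name: trajectory_spread(scenes[name]), reverse=True)
    return ranked[:budget]

# Toy data: two policy samples per scene (made-up waypoints).
scenes = {
    "open_drawer": [[(0, 0, 0), (1, 0, 0)], [(0, 0, 0), (1, 0, 0)]],  # samples agree
    "pour_cup": [[(0, 0, 0), (1, 0, 0)], [(0, 0, 0), (0, 1, 0)]],     # samples diverge
}
print(pick_targets(scenes, budget=1))
```

A collector working from this ranking would spend the next demonstrations on the scenes at the top of the list rather than on ones where the samples already agree.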

Key takeaways:

- The core data collection bottleneck is targeting, not scale. Blind demonstrations waste effort on already-learned behaviors.
- AR visualization lets collectors "see" policy weaknesses, moving interactive correction from the lab to the field.
- The 2x efficiency gain depends on accurate uncertainty estimation, a claim that needs validation across more scenarios.

Source: RoboPocket: Improve Robot Policies Instantly with Your Phone

03 Retrieval Semantic Match Is Perfect, but the Function Crashes

When LLM agents do statistical analysis, RAG retrieval of R functions has a blind spot: existing methods match only on the semantics of the function description and ignore the actual data distribution. A retrieved function's description can fit the task perfectly while the data violates its assumptions, for example skewed data paired with a test that assumes normality.

DARE's fix is direct: encode data distribution features into the retrieval vector so the system understands both "what you want to do" and "what the data looks like." On a knowledge base of 8,191 CRAN packages, retrieval quality (NDCG@10) reaches 93.47%. That's 17 points above the best open-source embedding model, with fewer parameters. The idea is simple, but it exposes a broader problem: most RAG systems encode only static semantics and ignore runtime context entirely. That blind spot extends far beyond statistics.
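A toy version of the idea shows why distribution features change the ranking. This is a sketch under loud assumptions, not DARE's architecture: it simply concatenates two summary statistics (skewness and excess kurtosis) onto a stand-in semantic vector and ranks by cosine similarity. The semantic vectors and the per-function "expected data profile" numbers are invented for illustration; only the R function names `t.test` and `wilcox.test` are real.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def distribution_features(values):
    """Return (skewness, excess kurtosis) from population moments."""
    n = len(values)
    mean = sum(values) / n
    m2 = sum((v - mean) ** 2 for v in values) / n
    if m2 == 0:
        return (0.0, 0.0)
    m3 = sum((v - mean) ** 3 for v in values) / n
    m4 = sum((v - mean) ** 4 for v in values) / n
    return (m3 / m2 ** 1.5, m4 / m2 ** 2 - 3.0)

def build_vector(semantic, dist):
    """Concatenate a semantic vector with distribution features."""
    return list(semantic) + list(dist)

def retrieve(query_vec, index):
    """Return the name of the indexed function closest to the query."""
    return max(index, key=lambda name: cosine(query_vec, index[name]))

# Both functions share the same (hypothetical) semantics: "compare two groups".
compare_groups = [1.0, 0.0]
index = {
    "t.test": build_vector(compare_groups, (0.0, 0.0)),       # assumed profile: near-normal data
    "wilcox.test": build_vector(compare_groups, (2.0, 6.0)),  # assumed profile: skewed, heavy-tailed data
}

skewed = [1, 1, 1, 2, 2, 3, 50]
symmetric = [1, 2, 3, 4, 5, 6, 7]
print(retrieve(build_vector(compare_groups, distribution_features(skewed)), index))
print(retrieve(build_vector(compare_groups, distribution_features(symmetric)), index))
```

With semantics alone the two candidates tie. Once the data's shape enters the vector, the skewed sample pulls the query toward the rank-based test and the symmetric sample toward the normal-theory one.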

Key takeaways:

- Code retrieval based solely on semantic descriptions misses whether data satisfies a function's statistical preconditions, producing unusable recommendations.
- Encoding data distributions into retrieval vectors is a simple, overlooked direction with large gains.
- Any team building RAG systems should audit whether they're ignoring runtime context.

Source: DARE: Aligning LLM Agents with the R Statistical Ecosystem via Distribution-Aware Retrieval

04 Video Gen Video Models Can't Do Physics? Don't Teach Them

Video generation models produce photorealistic frames but have zero understanding of physical interactions like "push this cup and see what happens." They lack 3D scene structure. RealWonder's approach: stop trying to teach video models physics. Insert a physics simulation layer instead.

The pipeline splits into two stages. A physics engine converts actions (forces, robotic manipulation) into simulated visuals (optical flow and RGB). The video model handles only the "simulation to photorealism" translation. Physics engines simulate forces; video models generate realistic imagery. Each does what it's good at. The system integrates single-image 3D reconstruction, physics simulation, and a 4-step distilled diffusion generator. It runs at 13.2 FPS at 480×832 resolution, covering rigid bodies, deformable objects, fluids, and granular materials.
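To put the throughput figure in perspective, the arithmetic below converts 13.2 FPS into a per-frame time budget. It assumes frames are produced one after another with no pipelining, so the per-step figure is only an upper bound: 3D reconstruction and physics simulation also draw on the same budget, and the digest does not report how time is split between stages.

```python
FPS = 13.2            # reported throughput
DIFFUSION_STEPS = 4   # reported number of distilled diffusion steps

frame_budget_ms = 1000.0 / FPS
# Upper bound only: assumes the whole frame budget went to the diffusion steps.
per_step_ceiling_ms = frame_budget_ms / DIFFUSION_STEPS

print(f"{frame_budget_ms:.1f} ms per frame")               # 75.8 ms per frame
print(f"{per_step_ceiling_ms:.1f} ms per step at most")    # 18.9 ms per step at most
```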

Key takeaways:

- Physics simulation as a bridge decomposes one hard problem into two tractable ones, each solved by the right tool.
- 4-step distilled diffusion enables real-time generation at 13.2 FPS for interactive exploration.
- Code and weights are open-source. Worth tracking for AR/VR and robot learning applications.

Source: RealWonder: Real-Time Physical Action-Conditioned Video Generation

Today's Observation

OPSDC (the on-policy self-distillation method from item 01) extracts concise reasoning with a "be concise" prompt. RoboPocket projects policy uncertainty to human collectors. DARE encodes data distributions into retrieval vectors. Three systems, three different problems, one shared structure: the signal needed for improvement already exists inside the system. Nobody was using it.

The model already reasons concisely when prompted — default mode just doesn't activate it. Policy networks already signal where they're uncertain — nobody reads that output. Data distributions make the best retrieval context — nobody encodes them. All three teams did the same thing: connected an existing internal signal to the decision loop.

This pattern has direct engineering value. Next time a system underperforms, resist the urge to add modules, swap models, or pile on data. Audit the signals your system already produces but doesn't use: logit distributions, intermediate representations, prediction uncertainty, input data statistics. Pick the worst-performing component, print its intermediate outputs, and look for signals you can tap directly. Wiring up existing signals deploys faster, carries less risk, and often outperforms adding new capabilities.
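As one concrete way to run such an audit, the sketch below turns raw logits into per-step entropy and flags the steps where the model is least sure. It assumes you can dump per-step logits from the component under inspection. The function names and the threshold are hypothetical and would need tuning per system.

```python
import math

def token_entropy(logits):
    """Shannon entropy (in nats) of the softmax distribution over one logit vector."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    probs = [e / z for e in exps]
    return -sum(p * math.log(p) for p in probs if p > 0)

def flag_uncertain(steps, threshold):
    """Return indices of steps whose entropy exceeds `threshold`."""
    return [i for i, logits in enumerate(steps) if token_entropy(logits) > threshold]

# Toy dump: step 0 is confident, step 1 is a four-way coin flip (made-up numbers).
steps = [[10.0, 0.0, 0.0, 0.0], [0.0, 0.0, 0.0, 0.0]]
print(flag_uncertain(steps, threshold=1.0))
```

High-entropy steps are a cheap first place to look before deciding whether a new module, model, or dataset is needed.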