Today's Overview

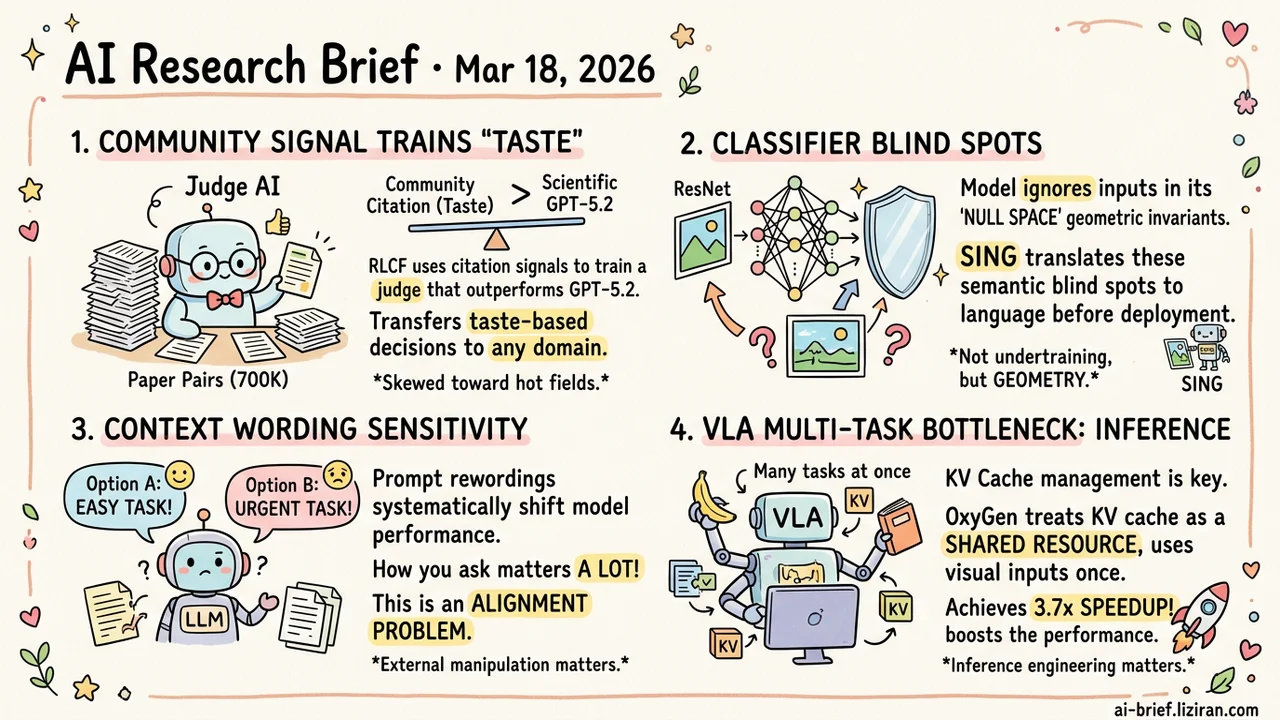

- Community citation signals can train "taste." RLCF uses 700K paper pairs for preference modeling, producing a judge that outperforms GPT-5.2. The paradigm transfers to any domain requiring taste-based decisions.

- Classifier blind spots hide in the null space. SING translates geometric invariants of linear mappings into natural language. Auditing what a model ignores before deployment beats chasing accuracy.

- Model behavior is far more sensitive to context wording than expected. Changing task descriptions systematically shifts performance. Whether or not this constitutes "motivation," the manipulability itself is an alignment problem.

- The VLA multi-task bottleneck is the inference system, not the model architecture. OxyGen manages the cross-task KV cache as a shared resource, computes shared visual observations once, and achieves up to a 3.7x speedup.

Featured

01 AI for Science Research Taste Trained on 700K Paper Pairs

Citation counts measure a paper's impact. Can you reverse-engineer that signal to teach a model what research is worth doing? RLCF (Reinforcement Learning from Community Feedback) tries exactly this: 700K pairs of high-citation vs. low-citation papers become training data for judging research potential.

The pipeline has two stages. First, train a Scientific Judge on preference modeling. Then use that judge as a reward model to RL-train a Scientific Thinker that generates high-potential research ideas. The Judge outperforms GPT-5.2 and Gemini 3 Pro at predicting paper impact, generalizes to future years and unseen fields, and passes peer-review preference tests.
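The first stage can be pictured with a small sketch. The summary only says the Judge is trained by "preference modeling"; the Bradley-Terry pairwise loss below is an assumption about what that might look like, not the paper's confirmed objective, and the scores are hypothetical placeholders for what a judge model would emit.

```python
import math

def pairwise_preference_loss(score_high: float, score_low: float) -> float:
    """Bradley-Terry negative log-likelihood that the high-citation paper wins.

    Equals -log(sigmoid(score_high - score_low)), computed as a stable softplus.
    """
    margin = score_high - score_low
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))

def pairwise_accuracy(pairs) -> float:
    """Fraction of (score_high, score_low) pairs the judge ranks correctly."""
    return sum(1 for high, low in pairs if high > low) / len(pairs)

# Hypothetical judge scores for three (high-citation, low-citation) pairs.
pairs = [(2.0, 0.5), (0.1, 0.4), (1.2, 1.0)]
mean_loss = sum(pairwise_preference_loss(h, l) for h, l in pairs) / len(pairs)
accuracy = pairwise_accuracy(pairs)
```

A judge trained this way outputs a scalar score per paper, which is also the shape a reward model needs for the second, RL stage.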

The bigger takeaway extends beyond academia. Any decision requiring "taste" — picking technical directions, evaluating proposals, prioritizing roadmaps — could use a similar community-feedback paradigm. The caveat: citation count ≠ scientific value. Training on it inevitably reinforces popularity bias toward hot fields. How well this generalizes to niche domains needs verification.

Key takeaways:

- Community citation signals train preference models that turn "taste" from subjective judgment into a learnable capability.
- The paradigm applies beyond academia: any domain with community feedback signals and taste-based decisions is a candidate.
- Training signal is citation count, which naturally skews toward popular fields. Generalization to niche directions needs validation.

Source: AI Can Learn Scientific Taste

02 Interpretability Accuracy Misses What Hides in the Null Space

A linear classification head that maps high-dimensional features to a smaller number of class logits necessarily has a nontrivial null space. Input variations along these directions are completely ignored, no matter how semantically important they are, so certain visual attributes will never affect model output. This isn't undertraining. It's geometry.

SING exploits this property. It constructs equivalent images within the null space, then uses a vision-language model to translate the differences into natural language: which semantics were preserved, which were discarded. ResNet50 leaks critical semantic attributes into its null space. DINO-pretrained ViTs do significantly better.
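The geometric fact behind this is easy to check on a toy example. The sketch below is not SING itself (which builds equivalent images and describes them with a vision-language model); it only shows, for a hypothetical 2-class linear head over 3-dimensional features, that moving an input along a null-space direction leaves the logits unchanged. The matrix `W` and the feature vector `x` are made-up values.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def cross(a, b):
    """Cross product of two 3-vectors; the result is orthogonal to both."""
    return [a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0]]

# Hypothetical linear head: 3 features in, 2 class logits out.
W = [[1.0, 2.0, 0.0],
     [0.0, 1.0, 1.0]]

# For a full-rank 2x3 matrix, the cross product of the rows spans the null space.
null_dir = cross(W[0], W[1])

x = [0.5, -1.0, 2.0]
x_equiv = [xi + 3.0 * ni for xi, ni in zip(x, null_dir)]  # large shift, same logits

logits = matvec(W, x)
logits_equiv = matvec(W, x_equiv)
```

Here `x` and `x_equiv` differ substantially, yet the head cannot tell them apart. Whatever semantic difference that shift encodes is invisible to the classifier.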

For deployment, knowing what a model is blind to prevents systematic failures that accuracy metrics will never catch.

Key takeaways:

- Null space invariants are structural blind spots determined by linear mapping geometry, not insufficient training.
- SING converts blind spots into natural language descriptions, supporting both single-image analysis and class-level statistical audits.
- Auditing what a model ignores before deployment prevents production incidents better than chasing accuracy numbers.

Source: Make it SING: Analyzing Semantic Invariants in Classifiers

03 Safety Skip the "Motivation" Debate — Behavioral Manipulability Is Real

This paper asks whether LLMs have human-like "motivation." The more interesting finding isn't the philosophical question but the behavioral patterns the experiments expose. Models' self-reported motivation levels correlate structurally with task performance. External manipulation — rewording task descriptions — systematically shifts these patterns.

The deployment implications are concrete. If simple context framing changes a model's effort level and output quality, prompt engineering's blast radius may be larger than assumed. Whether this counts as "motivation" needs more rigorous causal analysis. Whatever you call it, model behavior's sensitivity to context wording is an alignment problem.
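One way to make that audit concrete is to score the same tasks under several wordings and look at the gap. The sketch below is an illustration only: the framing names and scores are hypothetical, and `framing_sensitivity` is a made-up helper, not something from the paper.

```python
from statistics import mean

def framing_sensitivity(scores_by_framing):
    """Return (spread, per-framing means) for task scores grouped by prompt framing.

    spread is the gap between the best and worst framing's mean score.
    """
    means = {name: mean(scores) for name, scores in scores_by_framing.items()}
    spread = max(means.values()) - min(means.values())
    return spread, means

# Hypothetical per-item scores (0 to 1) for the same tasks under three wordings.
scores = {
    "neutral": [0.70, 0.80, 0.75],
    "high_stakes": [0.85, 0.90, 0.80],
    "dismissive": [0.60, 0.65, 0.55],
}
spread, means = framing_sensitivity(scores)
```

A large spread on identical tasks is the signal worth tracking: it means wording alone, not task difficulty, is moving output quality.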

Key takeaways:

- Structured correlations between model "self-reported motivation" and behavior can be externally manipulated.
- Deployments need to audit how prompt wording systematically affects model behavior patterns.
- Whether it's called "motivation" is beside the point. Behavioral manipulability itself is the alignment concern.

Source: Motivation in Large Language Models

04 Robotics VLA Multi-Task Bottleneck Is Inference, Not Architecture

VLAs (Vision-Language-Action models) with MoT architectures already handle manipulation commands, dialogue, and memory simultaneously. Deploying all tasks at once on-device is another story. Each task maintains its own KV cache, shared visual observations get redundantly prefilled, and resources fight each other.

OxyGen treats KV cache as a first-class shared resource across tasks. Identical observations compute once and get reused. Cross-frame continuous batching decouples variable-length language decoding from fixed-frequency action generation. Implemented on π₀.₅, it achieves up to 3.7x speedup over isolated execution in multi-task scenarios, sustaining 200+ tokens/s language throughput and 70Hz action frequency without degrading action quality.
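The "compute once, reuse across tasks" idea can be sketched in a few lines. This is a toy illustration under stated assumptions, not OxyGen's implementation or API: `SharedPrefixCache` and `fake_prefill` are hypothetical names, the "KV" object is a placeholder, and eviction and cross-frame batching are left out entirely.

```python
import hashlib

class SharedPrefixCache:
    """Toy cache: one prefill result per distinct observation, shared by all tasks."""

    def __init__(self, prefill_fn):
        self._prefill_fn = prefill_fn
        self._entries = {}
        self.prefill_calls = 0  # counts how often the expensive step actually ran

    def get(self, observation: bytes):
        key = hashlib.sha256(observation).hexdigest()
        if key not in self._entries:
            self._entries[key] = self._prefill_fn(observation)
            self.prefill_calls += 1
        return self._entries[key]

def fake_prefill(observation: bytes):
    # Stand-in for the expensive visual prefill; returns a placeholder "KV" object.
    return {"kv": len(observation)}

cache = SharedPrefixCache(fake_prefill)
frame = b"camera-frame-0001"

# Three tasks ask for the same observation; only the first request triggers prefill.
kv_by_task = {task: cache.get(frame) for task in ("action", "dialogue", "memory")}
```

With per-task caches, the same frame would be prefilled three times. Keying the cache on the observation rather than on the task is what removes that redundancy.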

Shared cache management isn't new in LLM serving. Systematically adapting it to VLA multi-task workloads is a solid engineering contribution.

Key takeaways:

- The real bottleneck for VLA multi-task parallelism is KV cache redundancy in the inference system, not model architecture.
- Unified cache management computes shared visual observations once and reuses them across tasks.
- For on-device VLAs to be truly multi-task capable, inference system engineering matters as much as model architecture.

Source: OxyGen: Unified KV Cache Management for Vision-Language-Action Models under Multi-Task Parallelism

Today's Observation

Two papers today approach the same question from orthogonal directions: what constitutes judgment in AI systems?

RLCF works from the outside in, distilling "taste" from community citation signals. It encodes "what the crowd considers important" into model capability. SING works from the inside out, using null space analysis to reveal blind spots mathematically inevitable in a classifier's linear structure. One answers "what to pay attention to." The other reveals "what gets inevitably ignored."

This pairing points to a practical framework. Evaluating AI judgment requires auditing two orthogonal dimensions. The preference dimension: where does the training signal come from, and do those external signals represent what you actually care about? High citations don't equal scientific value. High engagement doesn't mean the product direction is right. The structural dimension: what does the model's geometry make it permanently blind to, and are those blind spots acceptable in your use case? Accuracy metrics catch neither.

Next time you evaluate a decision-critical system, add two audit steps beyond benchmarks. Check its preference signal sources: does the training data represent real value? Probe its structural immunities: construct adversarial inputs to find what it can't see. The first determines whether its taste is trustworthy. The second determines whether its blind spots are fatal.