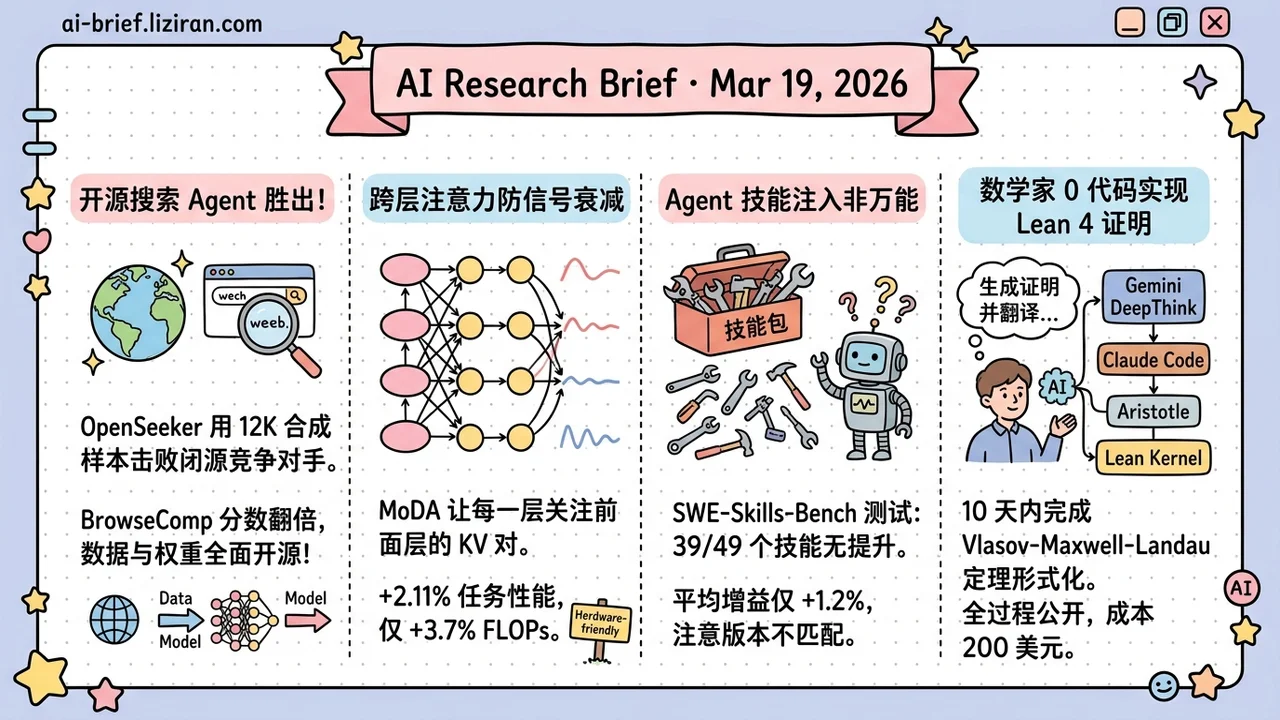

Today's Overview

- An open-source search agent trained on 12K synthetic samples beats closed-source competitors. OpenSeeker nearly doubles the second-best score on BrowseComp with fully open data and weights. Deep Research is no longer a big-lab monopoly.

- Cross-layer attention keeps deep signals from fading. MoDA lets each attention head attend to KV pairs from preceding layers, trading 3.7% extra FLOPs for +2.11% on downstream tasks. Open-sourced.

- Agent skill injection sounds great; 39 of 49 skills produce zero improvement. SWE-Skills-Bench is the first rigorous evaluation of agent skills in real-world SWE. Average gain: +1.2%.

- A mathematician formalized a plasma physics theorem in Lean 4 in 10 days without writing a single line of code, at a total cost of $200. The full AI-assisted workflow is publicly archived.

Featured

01 Agent 12K Samples Is All You Need: How an Open-Source Search Agent Beat Closed Rivals

Deep search has become table stakes for LLM agents, yet high-performance search agents remain locked behind big-lab walls. The bottleneck isn't models. It's training data. OpenSeeker tackles this directly. It reverse-engineers the web graph through topological expansion and entity obfuscation to synthesize multi-hop reasoning tasks, then denoises the resulting trajectories through retrospective summarization.

The result: 11.7K synthesized SFT samples yield 29.5% on BrowseComp, nearly doubling DeepDive's 15.3%. OpenSeeker even edges out Tongyi DeepResearch on BrowseComp-ZH (48.4% vs. 46.7%), despite the latter's extensive continual pre-training, SFT, and RL pipeline. Both data and weights are fully open.

For teams building their own search agents, the data synthesis pipeline may be more valuable than the model itself.
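As a rough illustration of the synthesis idea described above, here is a minimal Python sketch. It is not OpenSeeker's pipeline: the toy graph, the function names `expand_path` and `obfuscate_question`, and the way intermediate entities are replaced by descriptions are all assumptions made for illustration, and the retrospective denoising step is not covered.

```python
import random

# Hypothetical toy "web graph": page -> list of (relation, linked page).
# A real pipeline would work from crawled pages, not a hand-written dict.
TOY_GRAPH = {
    "Ada Lovelace": [("collaborated with", "Charles Babbage")],
    "Charles Babbage": [("designed", "Analytical Engine")],
    "Analytical Engine": [],
}


def expand_path(graph, seed, hops, rng):
    """Walk outward from a seed page, following one outgoing link per hop."""
    path, node = [], seed
    for _ in range(hops):
        edges = graph.get(node, [])
        if not edges:
            break  # dead end: stop expanding early
        relation, nxt = rng.choice(edges)
        path.append((node, relation, nxt))
        node = nxt
    return path


def obfuscate_question(path):
    """Build a multi-hop question that names only the seed entity.

    Intermediate entities are replaced by nested descriptions, so a solver
    has to resolve every hop instead of looking up the answer directly.
    """
    if not path:
        raise ValueError("path must contain at least one hop")
    description = path[0][0]
    for _, relation, _ in path:
        description = f"the entity that {description} {relation}"
    answer = path[-1][2]
    return f"What is {description}?", answer


rng = random.Random(0)
path = expand_path(TOY_GRAPH, "Ada Lovelace", hops=2, rng=rng)
question, answer = obfuscate_question(path)
print(question)
print(answer)
```

The design point the sketch tries to capture is that the answer is known by construction (it is the last node on the path), while the question hides the intermediate nodes, which is what forces multi-hop search.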

Key takeaways:

- 11.7K synthetic SFT samples reach frontier search agent performance. Data efficiency is the competitive edge.
- The synthesis pipeline (topological expansion, entity obfuscation, retrospective denoising) is the real contribution for teams wanting to build their own.
- Full open-source release lowers the entry barrier for search agent development.

Source: OpenSeeker: Democratizing Frontier Search Agents by Fully Open-Sourcing Training Data

02 Architecture Deeper Models, Weaker Signals? Let Attention Look Back Across Layers

LLMs keep getting deeper, but depth brings an old problem. Useful features formed in shallow layers get gradually diluted by repeated residual updates. Deeper layers need them but can't recover them. MoDA (Mixture-of-Depths Attention) fixes this with a straightforward idea: let each attention head attend to KV pairs from both the current layer and preceding layers.

The engineering matters as much as the concept. A hardware-efficient algorithm resolves non-contiguous memory access patterns, achieving 97.3% of FlashAttention-2's throughput at 64K sequence length. On 1.5B-parameter models, average perplexity drops by 0.2 and downstream tasks improve by 2.11%, with only 3.7% FLOPs overhead. A side finding: MoDA works better with post-norm than pre-norm. Code is open-sourced.
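The core idea can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions, not the MoDA implementation: it handles a single query vector for a single head, simply concatenates the KV pairs of every layer in the cache, and ignores causal masking, any selection or mixing over depths, and the hardware-efficient kernel. The names `attend` and `cross_layer_attend` are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    output = [sum(w * v[d] for w, v in zip(weights, values)) for d in range(dim)]
    return output, weights


def cross_layer_attend(query, layer_kv_cache):
    """Attend over KV pairs pooled from the current and preceding layers.

    layer_kv_cache is a list of (keys, values) tuples, one per layer,
    ordered from the shallowest layer to the current one.
    """
    keys = [k for layer_keys, _ in layer_kv_cache for k in layer_keys]
    values = [v for _, layer_values in layer_kv_cache for v in layer_values]
    return attend(query, keys, values)


# Made-up 2-dimensional example: one KV pair from a shallow layer,
# one from the current layer.
shallow_layer = ([[1.0, 0.0]], [[10.0, 0.0]])
current_layer = ([[0.0, 1.0]], [[0.0, 10.0]])
output, weights = cross_layer_attend([4.0, 0.0], [shallow_layer, current_layer])
print(weights)
print(output)
```

In this toy case the query aligns with the shallow-layer key, so most of the attention weight lands on the shallow-layer value. That is the behavior the paragraph above describes: a deep layer reading a feature directly from an earlier layer instead of relying on what survives in the residual stream.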

Key takeaways:

- Cross-layer attention lets deeper layers reuse shallow-layer features, directly addressing signal degradation.
- Hardware-friendly implementation reaches 97.3% of FlashAttention-2 efficiency. Practical barrier is low.
- 3.7% extra FLOPs for 2.11% downstream improvement makes the cost-benefit ratio attractive.

Source: Mixture-of-Depths Attention

03 Code Intelligence Agent Skills Sound Great. 39 of 49 Showed Zero Improvement

Injecting structured "skill packages" into LLM agents has become a popular way to boost coding performance. SWE-Skills-Bench offers the first rigorous reality check. It pairs 49 public SWE skills with real GitHub repositories pinned at fixed commits, yielding 565 task instances across six subdomains.

The results are sobering. 39 skills produced zero pass-rate improvement. Average gain across all skills: +1.2%. Token overhead ranged from modest savings to a 451% increase, with pass rates unchanged. Only 7 highly specialized skills showed meaningful gains (up to +30%). Three skills actually degraded performance (up to -10%) because version-mismatched guidance conflicted with project context.

Agent skills are a narrow intervention. Effectiveness depends heavily on domain fit, abstraction level, and contextual compatibility. Run ablation studies before adding skill packages.
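A per-skill ablation can be as simple as comparing two runs over the same task instances. The sketch below is a minimal example of that comparison, not the SWE-Skills-Bench harness. The function names, the result format (one boolean per task), and all numbers are made up for illustration.

```python
def pass_rate(results):
    """Fraction of task instances that passed (results is a list of bools)."""
    return sum(results) / len(results)


def ablate_skill(baseline, with_skill, baseline_tokens, skill_tokens):
    """Compare runs on the same tasks with and without a skill injected."""
    if len(baseline) != len(with_skill):
        raise ValueError("both runs must cover the same task instances")
    return {
        # Positive means the skill helped, negative means it hurt.
        "pass_rate_delta": pass_rate(with_skill) - pass_rate(baseline),
        # Relative change in tokens consumed; 0.5 means 50% more tokens.
        "token_overhead": (skill_tokens - baseline_tokens) / baseline_tokens,
    }


# Hypothetical results for four task instances.
baseline_run = [True, False, True, False]
skill_run = [True, False, True, False]
report = ablate_skill(baseline_run, skill_run,
                      baseline_tokens=2000, skill_tokens=3000)
print(report)
```

A skill whose report shows a zero or negative `pass_rate_delta` alongside a positive `token_overhead`, as in this made-up case, is a candidate for removal.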

Key takeaways:

- 39 of 49 agent skills yield zero improvement on real SWE tasks. Average gain is just +1.2%.
- Effective skills require tight domain match and context compatibility. Generic skills are essentially dead weight.
- Version-mismatched skill guidance can hurt performance. Blind injection is worse than no injection.

Source: SWE-Skills-Bench: Do Agent Skills Actually Help in Real-World Software Engineering?

04 AI for Science Zero Code, 10 Days, $200: A Full Replay of AI-Assisted Formal Proof

The equilibrium characterization of the Vlasov-Maxwell-Landau system describes charged plasma motion. One mathematician completed its full Lean 4 formalization without writing a single line of code. The workflow: Gemini DeepThink generated the proof from a conjecture, Claude Code translated it from natural language into Lean, a specialized prover (Aristotle) closed 111 lemmas, and Lean's kernel verified the result. Total: 10 days, 229 human prompts, 213 git commits, $200.

The formalization finished before the corresponding math paper's final draft. The author documents AI failure modes in detail: hypothesis creep, definition-alignment bugs, and agent avoidance behaviors. The entire development process is publicly archived.

For teams exploring AI-assisted rigorous reasoning, this is a rare field report with full transparency.
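For readers unfamiliar with Lean, the toy example below shows what "closing a lemma" and "kernel verification" mean in practice. It is unrelated to the actual Vlasov-Maxwell-Landau development, and the two-stage layout with a `sorry` placeholder is an assumption about how such a handoff could look, not a description of the author's repository.

```lean
-- Toy illustration only; not taken from the archived formalization.

-- Stage 1: a statement is written down in Lean with its proof left open.
-- `sorry` makes Lean accept the file while flagging the gap with a warning.
theorem toy_add_comm_open (a b : Nat) : a + b = b + a := by
  sorry

-- Stage 2: the open goal is closed with an actual proof term.
-- Lean's kernel checks this term, so no trust in the prover is needed.
theorem toy_add_comm (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b
```

The point of the final kernel check is that it does not matter which model or tool produced the proof. Once no `sorry` remains and the file compiles, the result has been verified against the statements as written.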

Key takeaways:

- Complete AI-assisted formalization workflow: reasoning model generates proof, coding tool translates, specialized prover closes lemmas, kernel verifies.
- The human role is supervisor, not coder. 229 prompts, zero lines of code written.
- Detailed failure mode documentation (hypothesis creep, definition alignment, avoidance behaviors) has direct practical value.

Source: Semi-Autonomous Formalization of the Vlasov-Maxwell-Landau Equilibrium

Today's Observation

OpenSeeker beat heavily RL-trained closed-source search agents with 11.7K synthetic samples. SWE-Skills-Bench found 39 of 49 agent skill packages completely ineffective. Both stories point to the same conclusion: data quality and task fit matter far more than data volume and feature stacking.

OpenSeeker won on synthesis design: topological expansion ensures coverage, entity obfuscation controls complexity, retrospective denoising raises trajectory quality. The failed agent skills lost on their generality assumption: generic packages meet specific project contexts and either miss the mark or actively conflict. Teams building agents would be better served investing in a small number of high-fit data points and carefully designed skills than pursuing comprehensive arsenals.