Today's Overview

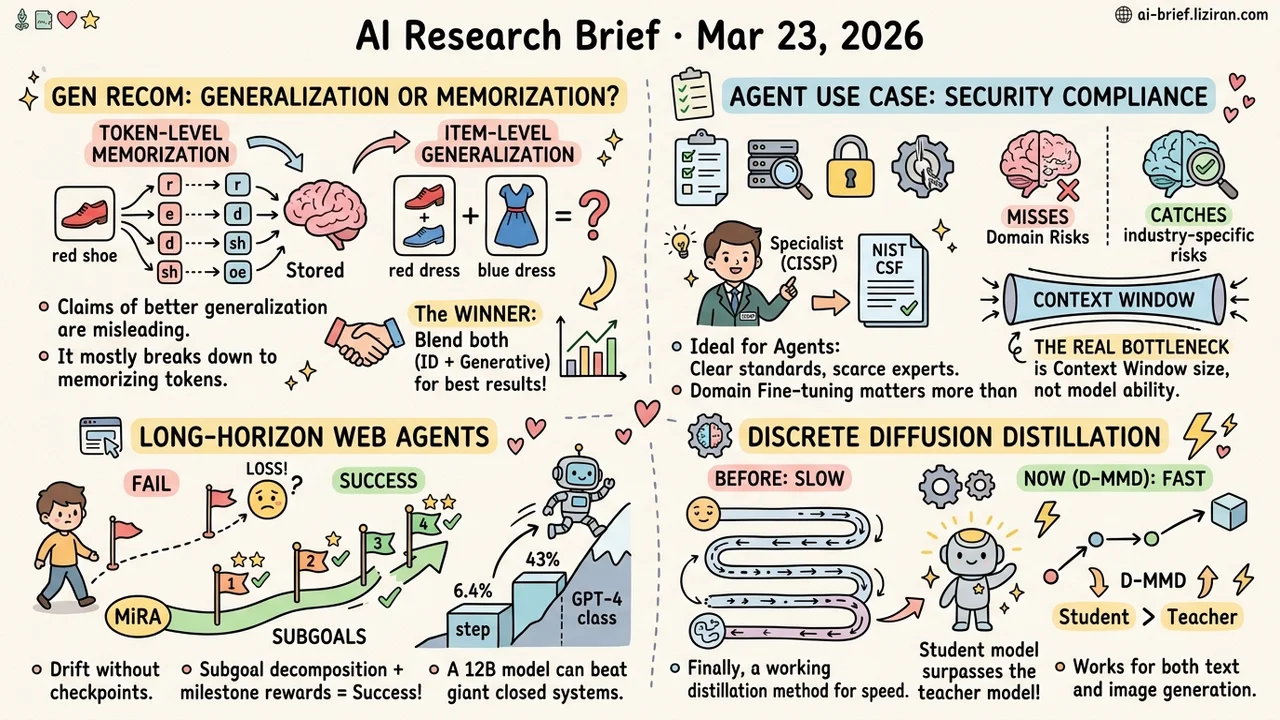

- Generative recommendation's "generalization advantage" reduces to token-level memorization on closer inspection. Per-instance fusion of both paradigms beats picking sides.

- Security compliance audits may be the ideal agent use case thanks to explicit standards and scarce human experts. Domain fine-tuning catches risks general models miss, but context windows are the real bottleneck.

- Long-horizon web agents fail because they lack intermediate checkpoints. Subgoal decomposition lifts a 12B open model from 6.4% to 43% success rate, surpassing GPT-4-class systems.

- Discrete diffusion finally has a working distillation method. D-MMD validates on both text and image domains, with the student model outperforming its teacher.

Featured

01 Retrieval Generative Recommendation's Generalization Edge May Just Be Finer-Grained Memorization

One core argument for generative recommendation (GR) over traditional ID-based models is stronger generalization: GR can predict item combinations unseen during training. This paper builds a decomposition framework that classifies each prediction instance by whether it requires memorization or generalization, then evaluates both paradigms separately.

The surface result is unsurprising: GR wins on generalization-heavy instances, ID models win on memorization-heavy ones. The real finding sits one level deeper. Zoom from item-level to token-level analysis, and much of GR's apparent "generalization" turns out to be token-level memorization reshuffled. The model didn't learn compositional reasoning about user preferences; it just memorized at a finer granularity once items were tokenized.

For teams replacing traditional recommenders with GR: the generalization gains you're measuring may not mean what you think. The paper also shows the two paradigms are complementary and proposes a memorization-strength metric for per-instance adaptive fusion. The blended approach outperforms either paradigm alone, which is a more practical conclusion than declaring a winner.
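As a rough illustration of what per-instance fusion could look like, the sketch below blends the two models' item scores with a weight derived from a memorization-strength value. The function name `fuse_scores`, the [0, 1] range of `mem_strength`, and the linear blend are all assumptions made for illustration; the paper's actual metric and fusion rule may differ.

```python
import numpy as np

def fuse_scores(id_scores, gr_scores, mem_strength):
    """Blend per-item scores from an ID-based model and a GR model.

    mem_strength is a hypothetical per-instance value in [0, 1]: higher means
    the instance looks more memorization-heavy, so the ID model gets more weight.
    """
    id_scores = np.asarray(id_scores, dtype=float)
    gr_scores = np.asarray(gr_scores, dtype=float)
    w = float(np.clip(mem_strength, 0.0, 1.0))  # guard against out-of-range input
    return w * id_scores + (1.0 - w) * gr_scores

# Toy scores over three candidate items (made-up numbers, not from the paper).
id_scores = [0.7, 0.2, 0.1]
gr_scores = [0.1, 0.3, 0.6]

# Memorization-heavy instance: the ID model's top item wins.
print(np.argmax(fuse_scores(id_scores, gr_scores, mem_strength=0.9)))
# Generalization-heavy instance: the GR model's top item wins.
print(np.argmax(fuse_scores(id_scores, gr_scores, mem_strength=0.1)))
```

The point of the sketch is only that the weight is chosen per instance rather than once for the whole system.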

Key takeaways:

- GR's item-level generalization largely degrades to token-level memorization; the "stronger generalization" claim needs re-examination.

- ID-based models still hold an edge in memorization-dense scenarios. A wholesale switch risks losing those gains.

- Per-instance adaptive fusion based on memorization strength beats picking one paradigm over the other.

Source: How Well Does Generative Recommendation Generalize?

02 Safety Why Security Audits May Be the Best Entry Point for AI Agents

Security compliance assessment hits a sweet spot for agentic AI. Evaluation criteria are explicitly defined by the NIST Cybersecurity Framework (CSF), and qualified human experts (CISSP holders) are scarce. Microsoft built six agents handling organizational profiling, asset mapping, threat analysis, control evaluation, risk scoring, and recommendation generation, then tested them on a 15-person healthcare compliance firm. Compared against three independent CISSP expert assessments, the system reached 85% agreement on severity classification and 92% risk coverage.

Domain-tuned models caught risks that general-purpose models missed entirely: PHI exposure in healthcare, OT/IIoT vulnerabilities in manufacturing. General capability isn't enough here; industry knowledge is what matters. One caveat: the multi-agent pipeline failed completely under T4's 4096-token context window. Context capacity, not model ability, is the actual bottleneck.

Key takeaways:

- Security compliance evaluation is an ideal agent scenario: explicit standards, scarce experts, structured outputs.

- Domain fine-tuning matters more than general capability. It catches industry-specific risks that generalist models overlook.

- Context window size, not model intelligence, is the binding constraint for multi-agent pipelines today.

Source: An Agentic Multi-Agent Architecture for Cybersecurity Risk Management

03 Agent Web Agents Keep Getting Lost. Intermediate Checkpoints Are the Missing Piece

LLM agents drift off course on long-horizon web tasks. An underappreciated reason: they fixate on the final goal with no intermediate checkpoints to correct course. MiRA breaks long tasks into subgoals and delivers a reward signal after each one, rather than waiting until the end to learn whether the attempt succeeded.

The idea isn't new. The results are. Gemma3-12B trained with MiRA jumps from 6.4% to 43.0% on WebArena-Lite, blowing past GPT-4-Turbo (17.6%) and GPT-4o (13.9%). Even adding subgoal planning at inference time alone, without RL training, lifts Gemini by roughly 10 percentage points. The bottleneck really is planning, not raw model capability.
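To make the contrast with end-of-episode rewards concrete, here is a minimal sketch of per-subgoal reward assignment. It is not MiRA's implementation: the function `milestone_rewards`, the predicate-based subgoal checks, and the reward values of 1.0 are assumptions chosen for illustration.

```python
def milestone_rewards(trajectory, subgoal_checks, final_check):
    """Return one reward per step of a trajectory.

    trajectory: list of states (any objects the check functions understand).
    subgoal_checks: ordered list of predicates (state -> bool), one per subgoal.
    final_check: predicate (state -> bool) for overall task success.
    """
    rewards = []
    next_subgoal = 0
    for state in trajectory:
        r = 0.0
        # Credit the first step at which the next pending subgoal is satisfied.
        if next_subgoal < len(subgoal_checks) and subgoal_checks[next_subgoal](state):
            r += 1.0
            next_subgoal += 1
        rewards.append(r)
    # Terminal bonus if the last state completes the task.
    if trajectory and final_check(trajectory[-1]):
        rewards[-1] += 1.0
    return rewards

# Hypothetical shopping task: reach the results page, open a product, add it to the cart.
trajectory = [
    {"page": "home"},
    {"page": "results"},
    {"page": "product"},
    {"page": "cart", "items": 1},
]
subgoals = [
    lambda s: s["page"] == "results",
    lambda s: s["page"] == "product",
]
task_done = lambda s: s.get("items", 0) >= 1

print(milestone_rewards(trajectory, subgoals, task_done))  # [0.0, 1.0, 1.0, 1.0]
```

With a final-outcome reward only, the same trajectory would yield `[0.0, 0.0, 0.0, 1.0]`, so an agent that stalls after step two gets no signal about the progress it did make.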

Key takeaways:

- Long-horizon agent tasks stall from missing intermediate feedback, not insufficient model capability.

- Subgoal decomposition plus milestone rewards lets a 12B open model surpass GPT-4-class closed systems.

- Inference-time planning alone adds ~10 points. Training-time and inference-time improvements stack.

Source: A Subgoal-driven Framework for Improving Long-Horizon LLM Agents

04 Efficiency Discrete Diffusion Models Finally Have a Distillation Path

Discrete diffusion models face an awkward situation: generation quality is catching up, but sampling speed keeps dragging behind. Distillation, the most direct acceleration route, has been a dead end. Every prior attempt destroyed generation quality. D-MMD breaks through by adapting moment matching to discrete token spaces, bypassing token-by-token alignment to match teacher and student distributions directly.

The results are surprising: quality and diversity hold in both text and image domains, and the distilled student actually outperforms its teacher. Discrete diffusion architectures, increasingly adopted in text generation, now have a viable inference acceleration path.

Key takeaways:

- First effective distillation method for discrete diffusion, filling a long-standing gap in acceleration tooling.

- The student surpassing its teacher suggests distillation optimizes sampling paths, not just compresses them.

- Validated across text and image. Teams working on discrete diffusion can adopt this directly.

Source: Beyond Single Tokens: Distilling Discrete Diffusion Models via Discrete MMD

Also Worth Noting

Today's Observation

The recommendation paper classifies each prediction instance as "memorization" or "generalization" and finds token-level memorization masquerading as generalization. The same day, an autonomous driving mapping diagnostic does nearly the same thing: it classifies model performance by how well training data covers the input features, and discovers that seemingly strong generalization is really memorization of frequently seen road structures. Two fields, two independent diagnostic tools, same conclusion once you look inside: good test-set metrics do not equal generalization.

Standard train/test splits can't distinguish the two. Test sets always contain samples that look different on the surface but are fundamentally memorizable. Both papers converge on a shared approach: classify prediction instances by the capability they require, then evaluate memorization and generalization performance separately. Only then does a model's true capability profile become visible.

Something you can do right now: For any model you care about, split test samples into "near" and "far" groups by nearest-neighbor distance to the training set. No fancy framework needed. A single embedding distance threshold will do. If the "near" group's metrics far exceed the "far" group's, your model is likely running on memorization.
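The near/far split described above can be sketched in a few lines of NumPy. This is one possible version under stated assumptions: embeddings are already computed, Euclidean distance is used, and the helper names `split_near_far` and `grouped_accuracy` as well as the threshold value are hypothetical.

```python
import numpy as np

def split_near_far(train_emb, test_emb, threshold):
    """Return a boolean mask over test samples: True means 'near' the training set."""
    train_emb = np.asarray(train_emb, dtype=float)
    test_emb = np.asarray(test_emb, dtype=float)
    # Pairwise Euclidean distances via broadcasting, shape (n_test, n_train).
    diffs = test_emb[:, None, :] - train_emb[None, :, :]
    dists = np.linalg.norm(diffs, axis=-1)
    # Distance from each test sample to its nearest training sample.
    nn_dist = dists.min(axis=1)
    return nn_dist <= threshold

def grouped_accuracy(correct, near_mask):
    """Accuracy on the near group and the far group (NaN if a group is empty)."""
    correct = np.asarray(correct, dtype=bool)
    near = correct[near_mask].mean() if near_mask.any() else float("nan")
    far = correct[~near_mask].mean() if (~near_mask).any() else float("nan")
    return near, far

# Toy 2-D "embeddings" and per-sample correctness flags (made-up data).
train = [[0.0, 0.0], [1.0, 1.0]]
test = [[0.1, 0.0], [1.0, 0.9], [5.0, 5.0], [6.0, 4.0]]
correct = [1, 1, 0, 1]

mask = split_near_far(train, test, threshold=0.5)
near_acc, far_acc = grouped_accuracy(correct, mask)
print(mask.tolist())      # [True, True, False, False]
print(near_acc, far_acc)  # 1.0 0.5
```

The brute-force distance matrix grows with the product of the two set sizes, so for large datasets an approximate nearest-neighbor index would be the more realistic choice. The threshold itself is a judgment call, and checking a few values is worthwhile before reading much into the gap.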