Today's Overview

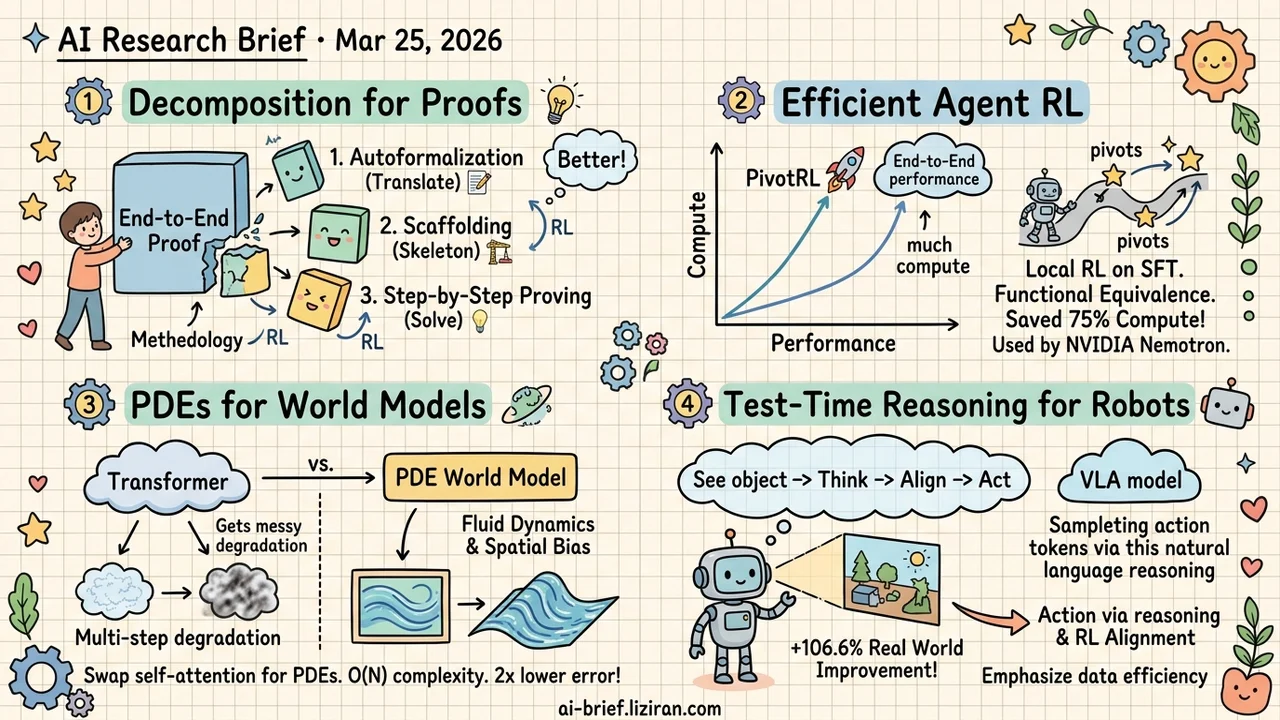

- Decomposing formal proofs into three independent RL tasks beats end-to-end training. LongCat-Flash-Prover separates autoformalization, scaffolding, and step-by-step proving, each with its own RL loop. HisPO stabilizes MoE long-chain training. The methodology transfers regardless of model scale.

- Layering local RL on SFT trajectories approaches end-to-end RL performance on coding tasks at one quarter of the rollout budget. PivotRL only rolls out at high-variance "pivot" steps. On OOD tasks it beats standard SFT by 10%. NVIDIA has already adopted it for Nemotron's production post-training.

- Replacing self-attention with a PDE in world model prediction yields 2x lower reconstruction error. FluidWorld uses reaction-diffusion equations for spatial inductive bias and O(N) complexity. Multi-step predictions stay stable where Transformers degrade.

- Aligning language and actions at inference time beats baking reasoning supervision into training. RoboAlign samples action tokens via natural language reasoning at test time, then applies RL alignment. Under 1% of the data, used for RL after SFT, yields significant gains.

Featured

01 Reasoning Formal Proof Isn't One Task — It's Three

End-to-end training treats formal proving as a single capability. LongCat-Flash-Prover splits it into three: autoformalization (translating natural language to Lean 4), scaffolding (writing proof skeletons), and step-by-step proving. Each gets its own training trajectories and RL optimization. The split works because bottlenecks aren't uniform. Sometimes translation is the blocker; sometimes proof strategy is. Mixed training blurs the signal about which skill needs improvement.
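To make the three stages concrete, here is a small illustrative Lean 4 example. It is not taken from LongCat-Flash-Prover; the statement, theorem names, and proof are assumptions chosen for brevity, and the block assumes Mathlib is available. The informal claim is "the sum of two even natural numbers is even".

```lean
import Mathlib

-- Stage 1, autoformalization: the informal claim becomes a Lean statement.
-- The proof is left open on purpose.
theorem even_add_even_statement (a b : ℕ) (ha : Even a) (hb : Even b) :
    Even (a + b) := by
  sorry

-- Stage 2, scaffolding: the proof skeleton is fixed, one subgoal remains open.
theorem even_add_even_scaffold (a b : ℕ) (ha : Even a) (hb : Even b) :
    Even (a + b) := by
  obtain ⟨m, hm⟩ := ha   -- hm : a = m + m
  obtain ⟨n, hn⟩ := hb   -- hn : b = n + n
  have h : a + b = (m + n) + (m + n) := by
    sorry
  exact ⟨m + n, h⟩

-- Stage 3, step-by-step proving: the remaining subgoal is discharged.
theorem even_add_even_proved (a b : ℕ) (ha : Even a) (hb : Even b) :
    Even (a + b) := by
  obtain ⟨m, hm⟩ := ha
  obtain ⟨n, hn⟩ := hb
  have h : a + b = (m + n) + (m + n) := by
    omega
  exact ⟨m + n, h⟩
```

A failure at stage 1 (wrong statement) looks very different from a failure at stage 3 (unclosed subgoal), which is the intuition behind training each skill separately.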

For agentic RL on MoE models, the authors introduce HisPO. Its gradient masking handles policy staleness and mismatches between the training and inference engines. Theorem consistency checks block reward hacking directly.
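The general idea of gradient masking can be sketched as follows. This is not HisPO's actual formulation, which this digest does not spell out; it is a minimal illustration assuming that tokens are dropped from the loss when the importance ratio between the training policy and the rollout policy leaves a trust band. The function names and the `low`/`high` thresholds are hypothetical.

```python
import math

def gradient_mask(train_logps, rollout_logps, low=0.5, high=2.0):
    """Return 1.0 for tokens kept in the loss and 0.0 for masked tokens.

    exp(train_logp - rollout_logp) measures how far the policy being trained
    has drifted from the policy (or inference engine) that produced the token.
    The band [low, high] is a hypothetical choice for illustration.
    """
    mask = []
    for lp_train, lp_rollout in zip(train_logps, rollout_logps):
        ratio = math.exp(lp_train - lp_rollout)
        mask.append(1.0 if low <= ratio <= high else 0.0)
    return mask

def masked_policy_loss(train_logps, rollout_logps, advantages, low=0.5, high=2.0):
    """Importance-weighted policy-gradient loss averaged over unmasked tokens."""
    mask = gradient_mask(train_logps, rollout_logps, low, high)
    kept = sum(mask)
    if kept == 0:
        return 0.0  # every token was too stale; nothing contributes
    total = 0.0
    for lp_t, lp_r, adv, m in zip(train_logps, rollout_logps, advantages, mask):
        total += -m * math.exp(lp_t - lp_r) * adv
    return total / kept
```

In a real trainer the same mask would multiply a differentiable per-token loss, so masked tokens contribute zero gradient instead of a large, noisy one.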

The decomposition methodology matters more than the 560B parameter count. "Split complex capabilities into independent subtasks, reinforce each separately" transfers to any compound reasoning scenario, regardless of model scale.

Key takeaways:

- Formal proofs decomposed into autoformalization, scaffolding, and proving, each trained independently via RL, outperform end-to-end approaches.

- HisPO solves MoE long-chain training instability. The gradient masking approach is reusable.

- The "decompose then reinforce" methodology is model-scale-agnostic. Apply it to your own compound reasoning tasks.

02 Training Cost vs. Generalization Isn't a Binary Choice

SFT and end-to-end RL sit at opposite extremes for agent post-training. SFT is cheap but degrades out-of-distribution. End-to-end RL generalizes well but multi-turn rollout costs are brutal. PivotRL threads the needle: run local on-policy rollouts on existing SFT trajectories, focusing only on "pivots" — intermediate steps where sampled actions cause the largest outcome variance.
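Pivot selection as described above can be sketched in a few lines. This is a minimal illustration, not PivotRL's implementation: it assumes you have already sampled several alternative actions at each step of an SFT trajectory and recorded the final outcome of each. The function name and the `top_k` and `min_variance` parameters are hypothetical.

```python
from statistics import pvariance

def select_pivots(outcomes_by_step, top_k=2, min_variance=0.0):
    """Rank trajectory steps by outcome variance across sampled actions.

    outcomes_by_step[i] holds the final rewards observed after sampling
    several alternative actions at step i. Steps whose outcomes barely change
    are skipped; the top_k highest-variance steps are returned as pivots.
    """
    scored = []
    for step, rewards in enumerate(outcomes_by_step):
        if len(rewards) < 2:
            continue  # variance is undefined for a single sample
        variance = pvariance(rewards)
        if variance > min_variance:
            scored.append((variance, step))
    # Highest variance first; ties broken by earlier step index.
    scored.sort(key=lambda item: (-item[0], item[1]))
    return [step for _, step in scored[:top_k]]

# Four steps, four sampled actions each, binary task success as the outcome.
outcomes = [
    [1, 1, 1, 1],  # step 0: any action succeeds
    [0, 1, 0, 1],  # step 1: the action decides the outcome
    [1, 1, 1, 0],  # step 2: occasionally matters
    [0, 0, 0, 0],  # step 3: nothing helps
]
pivots = select_pivots(outcomes)  # [1, 2]
```

Steps 0 and 3 get no rollouts at all, which is where the compute saving comes from.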

Functional equivalence rewards help too. Instead of requiring exact string matches against SFT data, PivotRL rewards behavioral correctness. The model learns what to do, not how to spell it. Across four agent domains: +4.17% over standard SFT on average, +10% on OOD tasks. Coding tasks reach near end-to-end RL performance at one quarter the rollout budget.
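The difference between exact-match and functional-equivalence rewards can be illustrated with tool calls. The digest does not say how PivotRL checks equivalence, so the comparison below (parse both calls as JSON, normalize whitespace, compare structure) is a stand-in assumption, and all function names are hypothetical.

```python
import json

def exact_match_reward(predicted: str, reference: str) -> float:
    """Reward 1.0 only if the strings are identical character for character."""
    return 1.0 if predicted == reference else 0.0

def _normalize(value):
    """Recursively collapse whitespace in strings so formatting does not matter."""
    if isinstance(value, dict):
        return {key: _normalize(item) for key, item in value.items()}
    if isinstance(value, list):
        return [_normalize(item) for item in value]
    if isinstance(value, str):
        return " ".join(value.split())
    return value

def functional_equivalence_reward(predicted: str, reference: str) -> float:
    """Reward 1.0 if both strings parse to the same tool call."""
    try:
        predicted_call = json.loads(predicted)
        reference_call = json.loads(reference)
    except json.JSONDecodeError:
        return 0.0  # an unparseable action cannot be judged equivalent
    return 1.0 if _normalize(predicted_call) == _normalize(reference_call) else 0.0

reference = '{"tool": "search", "args": {"query": "lean4 docs", "limit": 5}}'
predicted = '{"args": {"limit": 5, "query": "lean4  docs"}, "tool": "search"}'
```

Here `exact_match_reward(predicted, reference)` is 0.0 while `functional_equivalence_reward(predicted, reference)` is 1.0: the key order and spacing differ, but the call does the same thing.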

NVIDIA adopted this for Nemotron's production post-training. If you're building agents, the "SFT trajectory + local RL" playbook is ready to try.

Key takeaways:

- Local RL on existing SFT trajectories bridges the cost-generalization gap. Only high-variance pivot steps get rollouts.

- Functional equivalence rewards teach behavioral logic, not surface-level imitation.

- Deployed in NVIDIA's production pipeline. Validated beyond academic benchmarks.

Source: PivotRL: High Accuracy Agentic Post-Training at Low Compute Cost

03 Architecture PDEs Replace Attention in World Models

World model predictors default to Transformers. That assumption rarely gets questioned. FluidWorld runs a direct experiment: swap self-attention for reaction-diffusion PDEs. Physical diffusion processes become the computational substrate for prediction. Complexity drops from O(N²) to O(N).
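To see why a reaction-diffusion update costs O(N), consider one explicit time step on a 2D grid. This is a generic textbook sketch, not FluidWorld's model: the logistic reaction term, the periodic boundaries, and the `diffusion`, `rate`, and `dt` values are all assumptions made for illustration.

```python
def laplacian(grid):
    """5-point stencil Laplacian with periodic boundaries.

    Each cell reads only its four neighbours, so the cost is linear in the
    number of cells.
    """
    height, width = len(grid), len(grid[0])
    out = [[0.0] * width for _ in range(height)]
    for i in range(height):
        for j in range(width):
            out[i][j] = (
                grid[(i - 1) % height][j]
                + grid[(i + 1) % height][j]
                + grid[i][(j - 1) % width]
                + grid[i][(j + 1) % width]
                - 4.0 * grid[i][j]
            )
    return out

def reaction_diffusion_step(grid, diffusion=0.2, rate=0.1, dt=1.0):
    """One explicit Euler step of du/dt = diffusion * lap(u) + rate * u * (1 - u)."""
    lap = laplacian(grid)
    return [
        [u + dt * (diffusion * l + rate * u * (1.0 - u)) for u, l in zip(row_u, row_l)]
        for row_u, row_l in zip(grid, lap)
    ]

# A single spike spreads to its four neighbours in one step.
spike = [[0.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 0.0]]
spread = reaction_diffusion_step(spike, rate=0.0)
```

With `rate=0.0` the step is pure diffusion: the centre drops to roughly 0.2, each direct neighbour rises to 0.2, and the total mass stays at 1. Self-attention would instead compare every cell with every other cell, which is where the O(N²) cost comes from. The explicit scheme is only stable for small steps (in 2D, `dt * diffusion` should not exceed 0.25).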

In parameter-matched comparisons (roughly 800K parameters, identical encoders, decoders, and loss functions), the PDE approach achieves 2x lower reconstruction error and 10–15% better spatial structure preservation. The standout result is multi-step prediction. Transformer and ConvLSTM outputs degrade rapidly over rollout steps. The PDE variant stays coherent.

Still proof-of-concept: UCF-101 at 64×64, trained on a single RTX 4070 Ti. Production viability is far off. But the question deserves consideration: the computational substrate for world models may have been prematurely locked to attention.

Key takeaways:

- PDEs provide spatial inductive bias and linear complexity natively. Transformers need extra design to achieve either.

- Multi-step rollout stability is the standout result, even at proof-of-concept scale.

- Teams building world models should reconsider whether attention is the right default for prediction.

Source: FluidWorld: Reaction-Diffusion Dynamics as a Predictive Substrate for World Models

04 Robotics Reasoning Alignment Works Better at Inference Time

Adding VQA-style reasoning supervision to multimodal models for robot manipulation has been unreliable — sometimes harmful. RoboAlign skips reasoning supervision during training entirely. At inference time, it samples action tokens through natural language reasoning, then uses RL to align that reasoning process. This bridges the gap between language understanding and low-level motor actions.

After SFT, under 1% of data for RL alignment yields +17.5% on LIBERO, +18.9% on CALVIN, and +106.6% in real-world environments. The 106.6% gain needs context: if the baseline is low, doubling it isn't hard. The direction is clear regardless. Test-time reasoning for embodied intelligence may be more practical than training-time supervision.
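A quick calculation shows why the baseline matters. The baseline success rates below are hypothetical, chosen only to illustrate the arithmetic; the digest does not report RoboAlign's absolute real-world numbers.

```python
def apply_relative_gain(baseline_rate, relative_gain_pct):
    """Return the success rate after a relative improvement given in percent."""
    return baseline_rate * (1.0 + relative_gain_pct / 100.0)

# Hypothetical baselines: the same +106.6% relative gain means very
# different things in absolute terms.
low_baseline_after = apply_relative_gain(0.10, 106.6)   # about 0.21
high_baseline_after = apply_relative_gain(0.40, 106.6)  # about 0.83
```

Going from 10% to about 21% success is a modest absolute change; going from 40% to about 83% would be a different story. The relative figure alone cannot distinguish the two.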

Key takeaways:

- VQA-style reasoning supervision for VLAs is unreliable. Inference-time alignment offers a more stable path.

- Under 1% of data for post-SFT RL alignment produces significant gains. Data efficiency stands out.

- The 106.6% real-world improvement requires checking baseline absolute performance before drawing conclusions.

Today's Observation

LongCat decomposes formal proofs into three sub-capabilities for independent RL. PivotRL runs local RL on existing SFT trajectories to skip full rollouts. Both attack the same bottleneck: end-to-end agentic RL costs too much compute.

One reduces per-task RL complexity through decomposition. The other cuts rollout cost by reusing SFT trajectories. The signal isn't "RL works" — that's settled. The focus has shifted to making RL affordable for teams without massive compute budgets.

Audit your existing SFT data and task structure. If your task decomposes into independent sub-capabilities (LongCat's approach), or your SFT trajectories contain high-variance pivot points (PivotRL's approach), you can start small-scale experiments now. No need to wait for enough compute to run end-to-end RL.