Today's Overview

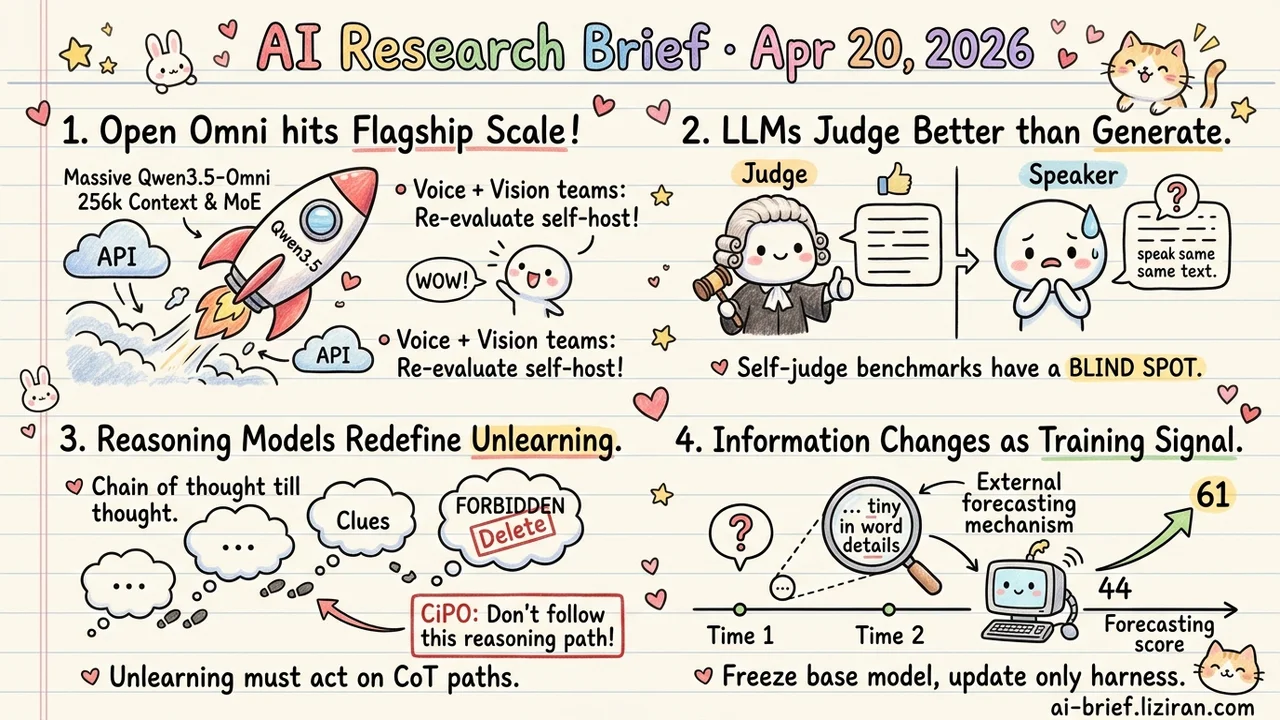

- Open omni models finally reach closed-flagship scale. Qwen3.5-Omni pushes parameter count into the tens of billions, with a 256k context window and an MoE architecture aimed at inference latency, modality-switching cost, and long-context compute. Voice and vision teams should re-evaluate their self-hosting plans.

- LLMs judge better than they generate. On three pragmatic tasks, the same model scores higher as listener than as speaker. Any benchmark or reward signal built on a model judging itself inherits that asymmetry as a blind spot.

- Reasoning models redefine unlearning. Even when the final answer is scrubbed, the chain of thought reconstructs the forbidden knowledge step by step. CiPO extends "don't output this" to "don't follow this reasoning path."

- How public information changes over time is itself a training signal. Milkyway freezes the base model, updates only an external forecasting harness, and lifts FutureX from 44 to 61.

Featured

01 Open Omni Catches Up on Scale

Qwen3.5-Omni pushes open omni models to tens of billions of parameters with a 256k context window, which matters directly to teams building voice and vision applications. The architecture uses Hybrid Attention MoE across both its Thinker and Talker components. It's the first time an open-source omni model matches closed flagships on raw size.

The previous generation drew complaints on three points: inference latency, modality-switching cost, and long-context compute. This round's engineering priorities map cleanly onto those. MoE cuts long-sequence inference overhead. A new ARIA module adaptively aligns text and speech tokens, and the team credits it with fixing streaming TTS instability and prosody problems rooted in encoding mismatch.
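Why MoE helps with long sequences is easiest to see in a generic top-k router: each token only runs through the k experts its router selects, so expert compute per token scales with k rather than with the total expert count. The sketch below is a generic illustration, not Qwen3.5-Omni's Hybrid Attention MoE; the eight scalar "experts", the router scores, and k=2 are all assumptions chosen for readability.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights to sum to 1."""
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    weights = softmax([gate_logits[i] for i in ranked])
    return list(zip(ranked, weights))


def moe_forward(x, experts, gate_logits, k):
    """Mix outputs of the selected experts only; unselected experts are never evaluated."""
    out, calls = 0.0, 0
    for idx, weight in top_k_route(gate_logits, k):
        out += weight * experts[idx](x)
        calls += 1
    return out, calls


if __name__ == "__main__":
    experts = [lambda x, s=s: s * x for s in range(8)]  # toy scalar "experts"
    gate = [0.1, 2.0, -1.0, 3.0, 0.0, 0.5, -2.0, 1.0]   # hypothetical router scores for one token
    y, active = moe_forward(1.0, experts, gate, k=2)
    print(f"output={y:.3f}, experts evaluated={active} of {len(experts)}")
```

In a real model the router scores come from a learned projection of each token, and the experts are feed-forward blocks. The point carries over: total parameters can grow while per-token compute stays tied to k.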

The claimed numbers: SOTA on 215 audio and audio-visual subtasks, beating Gemini-3.1 Pro on key audio tasks and matching it on combined audio-visual understanding. Technical reports skew optimistic, so treat these as ceiling estimates. One emergent ability stands out: writing code directly from audio-visual instructions, which the team calls Audio-Visual Vibe Coding. The report also claims comprehension of 10 hours of audio and of 400 seconds of 720p video sampled at 1 FPS. If those hold up under load, they enable product shapes that used to require stitching several models together. For teams deciding whether the closed API can become optional, don't rely on the score table. Run your own worst cases: streaming TTS stability, multimodal switching latency, and actual throughput and cost on long audio-video understanding.
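For the streaming TTS check, two numbers usually matter more than averages: time to first audio chunk and the longest stall between chunks. The sketch below measures both for any iterable of chunks. `fake_stream` is a hypothetical stand-in for a real streaming client (its chunk size, delays, and injected stall are assumptions); swap in your own client's chunk iterator.

```python
import time
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class StreamStats:
    time_to_first_chunk: float  # seconds from the start of iteration to the first chunk
    max_gap: float              # longest stall between consecutive chunks, in seconds
    chunks: int


def measure_stream(chunks: Iterable[bytes]) -> StreamStats:
    """Timestamp every chunk as it arrives and summarize first-chunk latency and stalls."""
    start = time.perf_counter()
    stamps: List[float] = []
    for _ in chunks:
        stamps.append(time.perf_counter())
    if not stamps:
        raise ValueError("stream produced no chunks")
    gaps = [b - a for a, b in zip(stamps, stamps[1:])]
    return StreamStats(
        time_to_first_chunk=stamps[0] - start,
        max_gap=max(gaps, default=0.0),
        chunks=len(stamps),
    )


def fake_stream(n: int, delay: float, stall_at: int, stall: float):
    """Hypothetical streaming client: n chunks of silence, with one injected stall."""
    for i in range(n):
        time.sleep(stall if i == stall_at else delay)
        yield b"\x00" * 320


if __name__ == "__main__":
    stats = measure_stream(fake_stream(n=20, delay=0.02, stall_at=10, stall=0.3))
    print(stats)
```

With a real client, start the clock when the request is sent rather than when iteration begins, and run many long or modality-switching inputs so you can compare tail percentiles instead of single runs.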

Key takeaways:
- Open omni now matches closed flagships on parameter count and context length. Teams shipping voice or vision apps should re-examine their API dependency.
- ARIA streaming-speech alignment and MoE long-sequence inference are the deployment-relevant improvements. Weight those more heavily than headline scores.
- SOTA claims don't replace your own latency and stability tests. Discount the technical report's conclusions.

Source: Qwen3.5-Omni Technical Report

02 The Judge Is Better Than the Speaker

LLM-as-judge has been the default infrastructure for two years — for evaluation, for RLAIF, for preference learning. Strong models score weaker ones, act as process reward models, filter training data. This ACL paper tests that assumption on three pragmatic tasks.

The setup is direct. Take the same pool of models. Have each act first as listener, judging whether language is contextually appropriate. Then have it act as speaker, generating appropriate language itself. Most models score higher as judge than as speaker. On the surface it's good news: at least the judge works. Read the other way: this "competence" may not exist as a generative capability. If judgment and generation correlate only weakly, self-judge benchmarks and reward signals might be measuring a phantom.
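The comparison itself is simple bookkeeping. The sketch below assumes you already have each model's listener (judging) accuracy and speaker (generation) accuracy on the same task; it counts how many models judge better than they generate and computes the correlation between the two abilities across models. Function names are hypothetical, and the paper's own metrics may differ.

```python
from statistics import mean, pstdev


def judge_speaker_gaps(scores):
    """scores maps model name -> (listener_accuracy, speaker_accuracy).
    Returns model name -> listener minus speaker (positive = judges better than it generates)."""
    return {model: listener - speaker for model, (listener, speaker) in scores.items()}


def pearson(xs, ys):
    """Population Pearson correlation between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 items")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / len(xs)
    sx, sy = pstdev(xs), pstdev(ys)
    if sx == 0 or sy == 0:
        raise ValueError("correlation is undefined for a constant list")
    return cov / (sx * sy)


def summarize(scores):
    """Headline numbers for a listener-vs-speaker comparison across a model pool."""
    gaps = judge_speaker_gaps(scores)
    listener = [l for l, _ in scores.values()]
    speaker = [s for _, s in scores.values()]
    return {
        "judge_better_count": sum(g > 0 for g in gaps.values()),
        "mean_gap": mean(list(gaps.values())),
        "listener_speaker_r": pearson(listener, speaker),
    }
```

A high `judge_better_count` paired with a low or negative `listener_speaker_r` is the pattern the paper warns about: strong judging says little about whether the same model can produce what it rewards.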

The finding is restricted to pragmatics, so domains with clean ground truth like math and code may not show the same gap. Any team using a judge to filter training data or build preference models should verify judge-generator agreement on pragmatic and stylistic tasks before trusting it.
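One way to run that verification, sketched under assumptions: collect the model's own generations, have humans label each as appropriate or not, and have the model judge the same outputs. Raw agreement, Cohen's kappa, and especially the false-acceptance rate (judge approves what humans reject) show whether the judge is waving through outputs below the standard it claims to enforce. The report layout is hypothetical.

```python
from __future__ import annotations


def agreement_report(judge: list[bool], human: list[bool]) -> dict:
    """Compare a model's verdicts on its own outputs against human labels (True = appropriate)."""
    if len(judge) != len(human) or not judge:
        raise ValueError("need two non-empty label lists of equal length")
    n = len(judge)
    observed = sum(j == h for j, h in zip(judge, human)) / n
    # Chance agreement for Cohen's kappa, from each rater's marginal "appropriate" rate.
    pj, ph = sum(judge) / n, sum(human) / n
    expected = pj * ph + (1 - pj) * (1 - ph)
    # expected == 1 only when both raters give one identical constant label; treat as perfect agreement.
    kappa = (observed - expected) / (1 - expected) if expected < 1 else 1.0
    # Of the outputs humans rejected, how many did the judge accept?
    judge_on_rejected = [j for j, h in zip(judge, human) if not h]
    false_accept = sum(judge_on_rejected) / len(judge_on_rejected) if judge_on_rejected else 0.0
    return {"agreement": observed, "kappa": kappa, "false_accept_rate": false_accept}
```

Kappa is used instead of raw agreement because a judge that approves almost everything can still post high agreement when most outputs happen to be fine.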

Key takeaways:
- Judgment and generation are only weakly aligned in current LLMs. One cannot substitute for the other.
- Self-judge-based benchmarks and reward models may be amplifying standards the model itself cannot meet.
- PRM and data-filtering teams should separately verify judge-generator consistency on pragmatic and stylistic tasks.

03 Reasoning Models Break Unlearning

Classical unlearning has one goal: stop the model from emitting some private or copyrighted fact. Plug the mouth, done. Large reasoning models complicate this. Even when the final answer is scrubbed, the chain of thought reconstructs the forbidden knowledge step by step.

CiPO reframes the target. Don't just block the output. Block the reasoning path itself. The method generates a self-consistent counterfactual reasoning trajectory, then uses iterative preference optimization to push the model away from the original trajectory. Reasoning ability stays intact, the authors claim, while the targeted knowledge vanishes from intermediate steps. Methods in this class have historically been fragile under jailbreaks and leading prompts, so the adversarial tests in the full paper are what actually matter.
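The paper's exact objective isn't reproduced here. As an assumption, one round of "preference optimization" can be pictured as a standard DPO-style loss in which the counterfactual trajectory is the preferred response and the original, knowledge-revealing trajectory is the rejected one. The function name and `beta=0.1` are illustrative, not CiPO's settings.

```python
import math


def softplus(z):
    """Numerically stable log(1 + exp(z))."""
    return z + math.log1p(math.exp(-z)) if z > 0 else math.log1p(math.exp(z))


def unlearning_dpo_loss(logp_counterfactual, logp_original,
                        ref_logp_counterfactual, ref_logp_original, beta=0.1):
    """DPO-style loss for one (chosen, rejected) pair of reasoning trajectories.

    chosen   = counterfactual trajectory that never uses the target knowledge
    rejected = original trajectory that reconstructs it
    Each argument is the summed token log-probability of a full trajectory under
    the policy being trained or under the frozen reference model.
    """
    margin = beta * ((logp_counterfactual - ref_logp_counterfactual)
                     - (logp_original - ref_logp_original))
    return softplus(-margin)  # equals -log(sigmoid(margin))
```

The loss starts at log 2 when the policy matches the reference and falls as the policy shifts probability from the original trajectory to the counterfactual one. An iterative version would regenerate counterfactual trajectories from the updated policy and repeat the update; that outer loop needs a real model and is left out.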

Remember the problem framing more than this particular fix. In the reasoning-model era, unlearning, safety alignment, and copyright compliance need new definitions. Regulators won't be auditing just final answers — they'll be auditing the full chain.

Key takeaways:
- Reasoning chains reconstruct "forgotten" knowledge. Deleting answers isn't enough.
- Unlearning has to act on reasoning paths, not just on terminal outputs.
- Compliance and safety teams should extend evaluation to intermediate CoT steps, not only final answers.
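Extending evaluation to intermediate steps can start very simply: scan every reasoning step, not just the answer, for the facts that were supposed to be forgotten. The sketch below uses whitespace- and case-normalized substring matching as a crude, hypothetical leak detector; a production audit would more likely use a classifier or a judge model, since paraphrases slip past string matching.

```python
import re
from dataclasses import dataclass


@dataclass
class LeakReport:
    leaked_steps: list  # indices of reasoning steps that mention a forbidden fact
    answer_leaks: bool  # whether the final answer mentions one


def normalize(text):
    """Lowercase and collapse runs of whitespace so trivial formatting can't hide a match."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def audit_trace(steps, answer, forbidden):
    """Check each chain-of-thought step and the final answer against a list of forbidden facts."""
    targets = [normalize(f) for f in forbidden]

    def leaks(text):
        t = normalize(text)
        return any(target in t for target in targets)

    return LeakReport(
        leaked_steps=[i for i, step in enumerate(steps) if leaks(step)],
        answer_leaks=leaks(answer),
    )
```

The case this catches is exactly the one described above: `answer_leaks` is False, yet `leaked_steps` is non-empty because the chain of thought walked through the forbidden fact on the way to a clean-looking refusal.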

Source: CiPO: Counterfactual Unlearning for Large Reasoning Models through Iterative Preference Optimization

04 Public Information Leaks the Future

Public information leaks the future. When the same open question is reported, requoted, and revised at different moments, the way its wording shifts carries a statistical signal about the real outcome.

Milkyway turns that observation into a mechanism. Predicting the same question at two different times exposes what got missed: untracked factors, overlooked evidence, underestimated uncertainties. That's an internal feedback loop that doesn't wait for the event to resolve. What's surprising is the setup: the base model stays frozen, only an external forecasting harness updates — factor tracking, evidence collection, uncertainty handling. On FutureX the score climbs from 44 to 61. FutureWorld goes from 62 to 78.
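A toy version of that loop, to make the moving parts concrete. Everything here is a hypothetical illustration, not Milkyway's algorithm: the "frozen model" is a fixed function, the harness is just a set of tracked factors that filters evidence, and evidence items are dicts with a factor name and a signed weight. A large revision between the t1 forecast and a t2 hindsight pass flags that something was missed, and untracked factors with enough weight get added. Only the harness changes, and no resolved outcome is needed.

```python
from dataclasses import dataclass, field


@dataclass
class Harness:
    """The only component that updates; the model it wraps stays frozen."""
    tracked_factors: set = field(default_factory=set)

    def select(self, evidence):
        # Pass through only evidence about factors the harness currently tracks.
        return [e for e in evidence if e["factor"] in self.tracked_factors]


def frozen_model(evidence):
    """Hypothetical frozen forecaster: 0.5 base rate shifted by each item's signed weight, clipped to [0, 1]."""
    return min(max(0.5 + sum(e["weight"] for e in evidence), 0.0), 1.0)


def evolve(harness, evidence_t1, evidence_t2, min_revision=0.1, min_weight=0.05):
    """Forecast one question at t1 (through the harness) and at t2 (hindsight pass over all evidence).

    If the forecast moved by at least min_revision, add every untracked factor in the t2
    evidence whose absolute weight is at least min_weight. Returns (p_t1, p_t2, newly_tracked).
    """
    p_t1 = frozen_model(harness.select(evidence_t1))
    p_t2 = frozen_model(evidence_t2)
    missed = set()
    if abs(p_t2 - p_t1) >= min_revision:
        missed = {e["factor"] for e in evidence_t2
                  if e["factor"] not in harness.tracked_factors and abs(e["weight"]) >= min_weight}
        harness.tracked_factors |= missed
    return p_t1, p_t2, missed


if __name__ == "__main__":
    h = Harness({"polls"})
    t1 = [{"factor": "polls", "weight": 0.1}, {"factor": "turnout", "weight": 0.2}]
    t2 = t1 + [{"factor": "weather", "weight": -0.02}]
    print(evolve(h, t1, t2), h.tracked_factors)
```

The design choice mirrors the claim in the text: improvement comes from what the harness chooses to track and feed the model, while the forecasting function itself never changes.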

That repositions the bottleneck for forecasting agents. The constraint isn't reasoning capability. It's how finely the system tracks information changes over time.

Key takeaways:
- Time-dimension information shifts are a supervision signal on their own. No need to wait for ground truth before iterating.
- Freezing the base and updating only an external harness already produces large gains. Friendly for teams building agents on closed APIs.
- Teams working on forecasting products or prediction-agent evaluation can treat "same question across time" as a design primitive.
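Treating "same question across time" as a primitive mostly means storing forecasts so they can be compared later. A minimal sketch, with a hypothetical `ForecastLog` class: it keeps timestamped probabilities per question, derives revisions between consecutive forecasts, and ranks questions by total revision so the most unstable ones can be reviewed first.

```python
from collections import defaultdict
from datetime import datetime


class ForecastLog:
    """Stores repeated forecasts of the same question so revisions across time can be inspected."""

    def __init__(self):
        self._entries = defaultdict(list)  # question_id -> [(timestamp, probability), ...]

    def record(self, question_id, when: datetime, probability: float):
        if not 0.0 <= probability <= 1.0:
            raise ValueError("probability must be in [0, 1]")
        self._entries[question_id].append((when, probability))

    def revisions(self, question_id):
        """(timestamp, change) for each consecutive pair of forecasts, in time order."""
        series = sorted(self._entries.get(question_id, []))
        return [(t2, p2 - p1) for (_, p1), (t2, p2) in zip(series, series[1:])]

    def most_revised(self, n=5):
        """Question ids ranked by total absolute revision, largest first."""
        totals = {q: sum(abs(d) for _, d in self.revisions(q)) for q in self._entries}
        return sorted(totals, key=totals.get, reverse=True)[:n]
```

Sorting inside `revisions` means forecasts can be recorded out of order, which is common when backfilling snapshots from archived sources.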

Source: The World Leaks the Future: Harness Evolution for Future Prediction Agents