Today's Overview

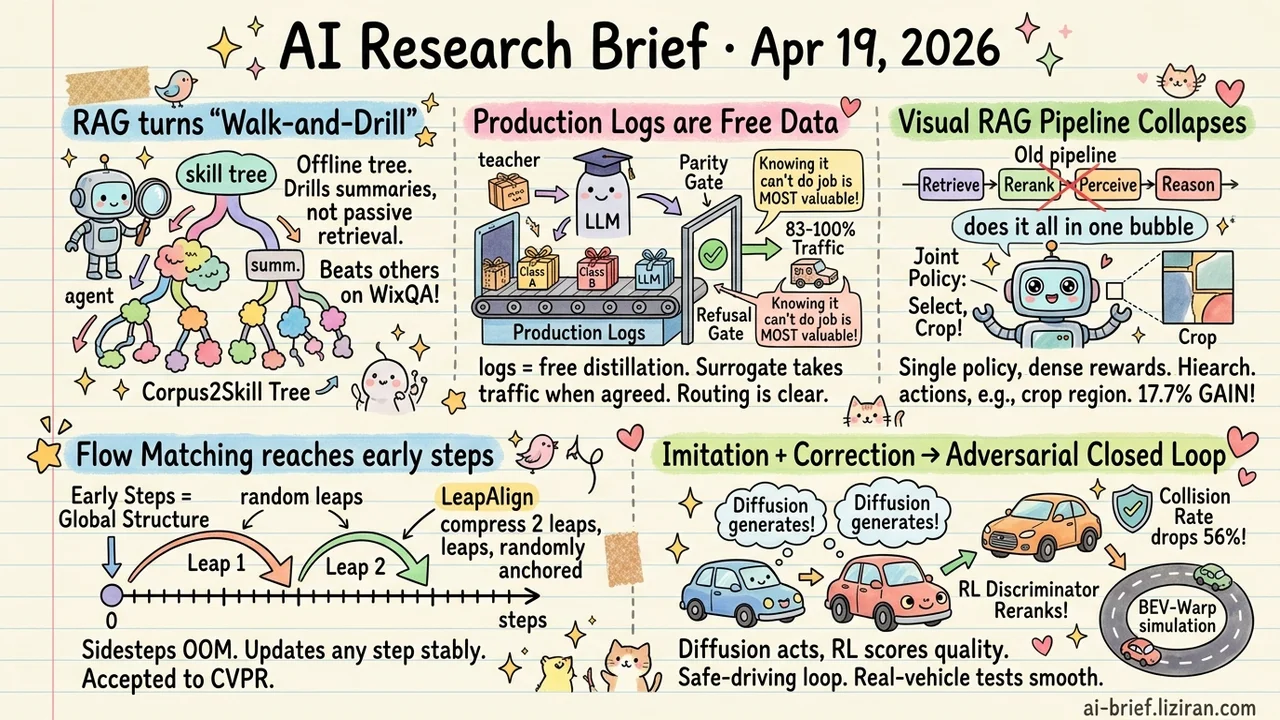

- RAG shifts from "retrieve-consume" to "walk-and-drill." Corpus2Skill compiles the entire corpus offline into a hierarchical skill tree; the agent drills down along summaries rather than passively receiving results, and beats dense retrieval, RAPTOR, and agentic RAG on WixQA.

- Production logs are free distillation data. TRACER uses a parity gate to hand 83-100% of traffic across 77 intent classes to a lightweight surrogate, and on NLI it refuses deployment outright — "knowing it can't do the job" is the most valuable capability in the system.

- Visual RAG's four-stage pipeline collapses into one joint policy. UniDoc-RL trains hierarchical actions with dense rewards end-to-end, folds "actively crop a region" into the action space, and gains up to 17.7% across three benchmarks.

- Flow matching post-training finally reaches the early generation steps. LeapAlign compresses long trajectories into two randomly anchored leaps, sidestepping the dilemma between OOM backprop and direct-gradient methods that can't touch early steps. Accepted to CVPR.

- Imitation plus rule-based correction gives way to an adversarial closed loop. RAD-2 has diffusion generate candidates while an RL discriminator reranks them, paired with BEV feature-space closed-loop simulation; collision rate drops 56% vs a strong diffusion baseline.

Featured

01 Compile the Corpus, Let the Agent Walk It

Standard RAG treats the LLM as a consumer sitting behind a search box. It gets retrieval results, it answers based on them, but it never sees how the corpus is organized, and it has no idea what didn't come back or whether it should try a different angle.

Corpus2Skill flips this. Offline, it compiles the document store into a hierarchical skill tree: it clusters the documents, has an LLM summarize them layer by layer, and writes the result out as a navigable tree of skill files. At serve time the agent starts from a global view, drills down topic branches, reads progressively finer summaries, decides whether to backtrack or combine evidence across branches, and pulls source text by ID at the leaves. The cost is a sharp rise in index-time compute, since every layer needs LLM summaries. The payoff is an agent that actually perceives corpus structure.
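To make the compile-then-navigate shape concrete, here is a minimal Python sketch. It is not Corpus2Skill's implementation. Clustering is replaced by grouping consecutive documents, the LLM summarizer is a hypothetical `summarize` that simply concatenates child summaries, and the agent's branch choice is a hypothetical word-overlap score. What carries over is the structure: a summary at every internal node, document IDs at the leaves, and a top-down walk that reads summaries before fetching any source text.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Node:
    summary: str
    children: list[Node] = field(default_factory=list)
    doc_id: str | None = None  # set only on leaves


def summarize(texts: list[str]) -> str:
    # Hypothetical stand-in for the LLM summarizer: it just concatenates the
    # child summaries. A real system would ask an LLM for a short abstract.
    return " ".join(texts)


def compile_tree(docs: dict[str, str], branching: int = 2) -> Node:
    """Offline step: build the tree bottom-up, one summary per internal node."""
    # Leaves hold the document ID; their "summary" is the document text itself.
    level = [Node(summary=text, doc_id=doc_id) for doc_id, text in docs.items()]
    # Group consecutive nodes (a stand-in for real clustering) until one root remains.
    while len(level) > 1:
        level = [
            Node(summary=summarize([n.summary for n in group]), children=group)
            for group in (level[i:i + branching] for i in range(0, len(level), branching))
        ]
    return level[0]


def overlap(query: str, text: str) -> int:
    # Hypothetical branch-scoring rule: count shared lowercase words.
    return len(set(query.lower().split()) & set(text.lower().split()))


def navigate(root: Node, query: str) -> tuple[str | None, list[str]]:
    """Serve-time step: greedy top-down walk that reads summaries, then returns a leaf ID."""
    node, path = root, []
    while node.children:
        node = max(node.children, key=lambda child: overlap(query, child.summary))
        path.append(node.summary)
    return node.doc_id, path
```

On four toy support articles, a query such as "how do I connect my domain" descends through the branch whose summary mentions domains and returns that article's ID. The greedy walk leaves out backtracking and cross-branch evidence combination, which a real agent would decide on itself.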

On the enterprise-support benchmark WixQA, it beats dense retrieval, RAPTOR, and agentic RAG on every quality metric.

Key takeaways:

- Shifting work from serve time to index time is the real cost of letting an agent see corpus structure.

- Best fit for stable corpora where queries need evidence combined across documents, such as enterprise knowledge bases and product docs.

- If the corpus updates constantly or query volume is tiny, recalculate the payback window before committing.

Source: Don't Retrieve, Navigate: Distilling Enterprise Knowledge into Navigable Agent Skills for QA and RAG

02 Your Production Logs Are a Training Set

Every LLM classification call already produces a labeled pair. The input is the user request, the label is the LLM's response, and both sit in your logs. TRACER pushes this to its logical end: train a lightweight surrogate on production traces, then use a parity gate (the surrogate takes traffic only where its agreement with the LLM exceeds a threshold α) to decide what the small model handles and what falls back to the large one.
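A minimal Python sketch of such a gate follows. It is not TRACER's implementation: measuring agreement per class keyed on the surrogate's own prediction, the `min_support` floor, the cost model, and every function name are assumptions chosen for illustration. It replays held-out traces, measures how often the surrogate matches the LLM label within each predicted class, and trusts only classes that clear α. If no class clears it, nothing is deployed, mirroring the refusal described for NLI.

```python
from collections import defaultdict
from collections.abc import Iterable


def fit_gate(traces: Iterable[tuple[str, str]], alpha: float = 0.95,
             min_support: int = 20) -> set[str]:
    """Return the surrogate-predicted classes trusted to skip the LLM.

    traces: (surrogate_label, llm_label) pairs replayed from held-out production logs.
    A class is trusted when it has at least `min_support` examples and the surrogate
    agrees with the LLM on at least `alpha` of them. An empty set means the gate
    refuses deployment altogether.
    """
    hits: dict[str, int] = defaultdict(int)
    totals: dict[str, int] = defaultdict(int)
    for pred, teacher in traces:
        totals[pred] += 1
        hits[pred] += int(pred == teacher)
    return {c for c, n in totals.items() if n >= min_support and hits[c] / n >= alpha}


def route(surrogate_pred: str, trusted: set[str]) -> str:
    """Serve from the surrogate only when its prediction lands in a trusted class."""
    return "surrogate" if surrogate_pred in trusted else "llm"


def coverage(preds: list[str], trusted: set[str]) -> float:
    """Share of traffic the surrogate would absorb."""
    return sum(p in trusted for p in preds) / len(preds) if preds else 0.0


def blended_cost(cov: float, c_small: float, c_large: float) -> float:
    # Assumes the surrogate runs on every request and the LLM only on the fallback share.
    return c_small + (1.0 - cov) * c_large
```

Keying the gate on the surrogate's prediction, rather than the true label, is what makes it usable at serve time: the LLM label is unknown until you pay for the call.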

On 77-way intent classification, the surrogate covers 83-100% of traffic. On a 150-class benchmark it fully replaces the Sonnet 4.6 teacher. On NLI tasks the gate refuses deployment outright, because embeddings alone can't support reliable separation. That refusal is the most valuable part of the system.

Cost reduction stops being "swap in a cheaper model" and becomes "let the big model train its own stand-in," with the stand-in's boundaries drifting as logs accumulate.

Key takeaways:

- Production logs from classification LLMs are free distillation data. No extra annotation needed.

- The parity gate turns "when is it safe to ship a surrogate" from a guess into a measurable decision.

- Routing is transparent and auditable. You can see which inputs the small model handles well and which it doesn't.

Source: TRACER: Trace-Based Adaptive Cost-Efficient Routing for LLM Classification

03 One Joint Policy Beats a Four-Stage Pipeline

Visual RAG over complex documents is usually a pipeline: retrieve documents, rerank images, do visual perception, then reason. Each stage has its own loss and none of them talk to each other. Signal the early stages throw away, the later stages can't recover.

UniDoc-RL fuses all four into a single agent policy. Hierarchical actions (document level → image level → region crop) progressively refine the visual evidence. Dense multi-term rewards score each action. The whole thing trains end-to-end with GRPO. Across three benchmarks it gains up to 17.7% over prior RL methods. The idea itself isn't new; it is the agentification-plus-RL wave finally reaching RAG. But pulling "actively crop a region" into the action space is a useful step.
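The sketch below illustrates two ingredients in Python: a dense reward with one term per action level, and the group-relative advantage that GRPO uses in place of a learned value baseline (each sampled rollout's reward is normalized against the mean and standard deviation of its group). The three reward terms, their weights, and the use of crop IoU against a gold region are hypothetical placeholders, not UniDoc-RL's actual reward design.

```python
from statistics import mean, pstdev

# Hypothetical weights for the three levels of the hierarchical action.
WEIGHTS = {"doc": 0.2, "crop": 0.3, "answer": 0.5}

Box = tuple[float, float, float, float]  # (x0, y0, x1, y1)


def box_area(b: Box) -> float:
    return max(0.0, b[2] - b[0]) * max(0.0, b[3] - b[1])


def box_iou(a: Box, b: Box) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    inter = box_area((max(a[0], b[0]), max(a[1], b[1]), min(a[2], b[2]), min(a[3], b[3])))
    union = box_area(a) + box_area(b) - inter
    return inter / union if union > 0 else 0.0


def rollout_reward(doc_hit: bool, crop: Box, gold_crop: Box, answer_correct: bool) -> float:
    """Dense reward: one term per action level instead of a single end-of-episode score."""
    return (WEIGHTS["doc"] * float(doc_hit)
            + WEIGHTS["crop"] * box_iou(crop, gold_crop)
            + WEIGHTS["answer"] * float(answer_correct))


def group_advantages(rewards: list[float], eps: float = 1e-6) -> list[float]:
    """GRPO-style advantage: each rollout's reward relative to its sampled group."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]
```

When every rollout in a group earns the same reward, all advantages are zero and the group contributes no gradient, which is plausibly one reason partially credited terms such as crop IoU help.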

Key takeaways:

- Visual RAG stages can be trained jointly with RL, and the end-to-end signal preserves global semantics better than stage-wise losses.

- A hierarchical action space (retrieve → select image → crop) lets the model zoom into information-dense regions on its own, rather than passively consuming whatever image got fed in.

- The 17.7% gain looks solid, but it needs validation on longer documents and out-of-distribution inputs before anyone claims generality.

Source: UniDoc-RL: Coarse-to-Fine Visual RAG with Hierarchical Actions and Dense Rewards

04 Flow Matching Finally Reaches the Early Steps

Post-training flow matching has been stuck on a structural conflict. Backpropagating reward gradients along the full trajectory blows up memory or gradients. Direct-gradient methods sidestep that but can't touch the early generation steps, which are the ones that set the image's global structure.

LeapAlign compresses the long trajectory into two leaps. Each leap spans many ODE sampling steps and predicts a future latent in one shot. Randomizing the start and end times of the two leaps means any generation step can be updated stably. The abstract also mentions decaying large-magnitude gradients rather than clipping them, a common failure point for prior direct-gradient methods.
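A leap is easiest to picture as one large Euler step of the flow ODE. The Python sketch assumes a convention where t runs from 0 (noise) to 1 (data) with dx/dt = v(x, t), so a leap from t_a to t_b predicts x(t_b) ≈ x(t_a) + (t_b - t_a)·v(x(t_a), t_a). The anchoring scheme (three sorted grid times) and the toy straight-line velocity field are assumptions for illustration; LeapAlign's actual anchoring, reward, and gradient-decay rules are not reproduced. In post-training, the reward gradient would flow back through just the two velocity evaluations instead of every sampling step.

```python
import random
from collections.abc import Callable

Vec = list[float]
Velocity = Callable[[Vec, float], Vec]


def leap(x: Vec, t_a: float, t_b: float, velocity: Velocity) -> Vec:
    """Predict x(t_b) from x(t_a) in one shot: a single Euler step across [t_a, t_b]."""
    v = velocity(x, t_a)
    return [xi + (t_b - t_a) * vi for xi, vi in zip(x, v)]


def straight_flow(target: Vec) -> Velocity:
    """Hypothetical field whose trajectories are straight lines reaching `target` at t = 1."""
    def velocity(x: Vec, t: float) -> Vec:
        return [(g - xi) / (1.0 - t) for xi, g in zip(x, target)]
    return velocity


def sample_anchors(num_steps: int, rng: random.Random) -> tuple[float, float, float]:
    """Assumed anchoring: three distinct grid times t_a < t_b < t_c (needs num_steps >= 2)."""
    i, j, k = sorted(rng.sample(range(num_steps + 1), 3))
    return i / num_steps, j / num_steps, k / num_steps


def two_leaps(x: Vec, anchors: tuple[float, float, float], velocity: Velocity) -> Vec:
    """Leap t_a -> t_b, then t_b -> t_c: only two velocity evaluations to differentiate through."""
    t_a, t_b, t_c = anchors
    return leap(leap(x, t_a, t_b, velocity), t_b, t_c, velocity)
```

Because every trajectory of this idealized field is a straight line, each leap is exact and matches the closed-form solution. With a learned, curved field a leap is only an approximation, and its error grows with leap length.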

Fine-tuned on Flux, LeapAlign outperforms GRPO-based and direct-gradient baselines on image quality and prompt alignment. The abstract doesn't give specific numbers. CVPR acceptance suggests reviewers bought the engineering path.

Key takeaways:

- Early generation steps are the blind spot of flow matching post-training. They set global structure and are the hardest to reach with reward gradients.

- The two-leap trajectory with randomized endpoints is a practical workaround for long-trajectory backprop. Worth a look for image teams doing preference alignment.

- Specific gains aren't disclosed in the abstract. Wait for full-paper ablations before drawing conclusions.

05 Diffusion Plus a Judge Halves the Collision Rate

Diffusion planners generate diverse candidate trajectories, but pure imitation learning gives them no negative feedback. The model learns how the expert drives; it doesn't learn which of its own candidates would crash.

RAD-2 splits generation from discrimination. The diffusion model produces candidates. An RL-trained discriminator reranks them, scoring by long-term driving quality. Sparse scalar rewards no longer act directly on high-dimensional trajectory space, which stabilizes optimization. The team also built BEV-Warp, a closed-loop simulator that evaluates in bird's-eye-view feature space to raise training throughput.
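The generate-then-rerank split amounts to best-of-N selection, sketched here in Python. Both components are hypothetical toys: `generate_candidates` stands in for the diffusion planner by sampling straight paths with random lateral drift, and `discriminator_score` stands in for the RL-trained discriminator with a hand-written score that heavily penalizes passing within `safe_radius` of an obstacle. The structural point survives the simplification: the scoring model only ranks a small candidate set and never acts directly on trajectory space.

```python
import math
import random

Traj = list[tuple[float, float]]


def generate_candidates(n: int, horizon: int, rng: random.Random) -> list[Traj]:
    """Toy stand-in for the diffusion planner: n forward paths with random lateral drift."""
    candidates = []
    for _ in range(n):
        lateral = rng.uniform(-4.0, 4.0)  # lateral offset reached at the end of the horizon
        candidates.append([(float(t), lateral * t / horizon) for t in range(1, horizon + 1)])
    return candidates


def discriminator_score(traj: Traj, obstacle: tuple[float, float],
                        safe_radius: float = 1.5) -> float:
    """Toy stand-in for the RL-trained discriminator; higher is better."""
    min_dist = min(math.dist(p, obstacle) for p in traj)
    collision_penalty = 10.0 if min_dist < safe_radius else 0.0
    # Prefer gentler paths: penalize total lateral movement between waypoints.
    lateral_cost = sum(abs(b[1] - a[1]) for a, b in zip(traj, traj[1:]))
    return -collision_penalty - lateral_cost


def plan(obstacle: tuple[float, float], n: int = 32, horizon: int = 10, seed: int = 0) -> Traj:
    """Generate candidates, then let the discriminator pick the best one."""
    rng = random.Random(seed)
    candidates = generate_candidates(n, horizon, rng)
    return max(candidates, key=lambda traj: discriminator_score(traj, obstacle))
```

Because the collision penalty outweighs any possible lateral cost in this toy, the planner returns a collision-free candidate whenever the generator produced one. If none did, reranking cannot help, which is why candidate diversity from the generator still matters.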

Collision rate drops 56% vs a strong diffusion baseline. Real-vehicle road tests also showed smoother, safer-feeling driving.

Key takeaways:

- Generator-discriminator separation is a workable route to wire RL into diffusion planners, avoiding the instability of sparse rewards applied directly to trajectory space.

- Closed-loop simulation in BEV feature space is the throughput unlock. Pure trajectory replay can't scale this way.

- For autonomous driving and long-horizon robotics teams, this adversarial closed-loop pattern is more extensible than rule-based correction.

Source: RAD-2: Scaling Reinforcement Learning in a Generator-Discriminator Framework


Today's Observation

Corpus2Skill and UniDoc-RL landed the same day, and each reads at first like a narrow optimization: one rebuilds the index, the other rebuilds the training objective. But both push against the same default, the LLM as a passive consumer of RAG retrieval results. Corpus2Skill has the agent actively walk a pre-compiled skill tree at serve time. UniDoc-RL collapses retrieval, reranking, visual perception, and reasoning into one end-to-end joint policy. The signal is the same: for complex tasks, the traditional pipeline that separates retrieval from reasoning has gone from infrastructure to bottleneck.

The interesting part isn't which approach wins, or a sweeping claim that a new architecture is on the rise. It's that "RAG pipeline = default architecture" is being questioned from several directions at once. For teams building a RAG system, the concrete next step is small: in the next evaluation round, add an end-to-end joint-policy baseline alongside your staged one (a single-agent loop with function calls is a fine proxy) and measure how much global signal the staged design is losing. Measure first, then decide whether to switch.
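One way to set up that comparison, sketched in Python under the assumption that each system can be wrapped as a function from question to answer. `compare`, the `System` alias, and the exact-match metric are hypothetical placeholders for your own pipelines and scorer. Reporting per-query wins and losses, not just the two means, points straight at the questions where the staged design dropped evidence.

```python
from collections.abc import Callable
from statistics import mean

System = Callable[[str], str]  # hypothetical: question in, answer out


def exact_match(pred: str, gold: str) -> float:
    """Placeholder metric; swap in your own scorer."""
    return float(pred.strip().lower() == gold.strip().lower())


def compare(staged: System, joint: System, dataset: list[tuple[str, str]],
            metric: Callable[[str, str], float] = exact_match) -> dict:
    """Run both systems on the same (question, gold) pairs and report where they diverge."""
    staged_scores: list[float] = []
    joint_scores: list[float] = []
    wins: list[int] = []    # indices where the joint policy beats the staged pipeline
    losses: list[int] = []  # indices where the staged pipeline wins
    for i, (question, gold) in enumerate(dataset):
        s = metric(staged(question), gold)
        j = metric(joint(question), gold)
        staged_scores.append(s)
        joint_scores.append(j)
        if j > s:
            wins.append(i)
        elif s > j:
            losses.append(i)
    return {"staged": mean(staged_scores), "joint": mean(joint_scores),
            "joint_wins": wins, "joint_losses": losses}
```

Reading the `joint_wins` cases by hand is usually the cheapest way to see which evidence the staged design lost.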