Today's Overview

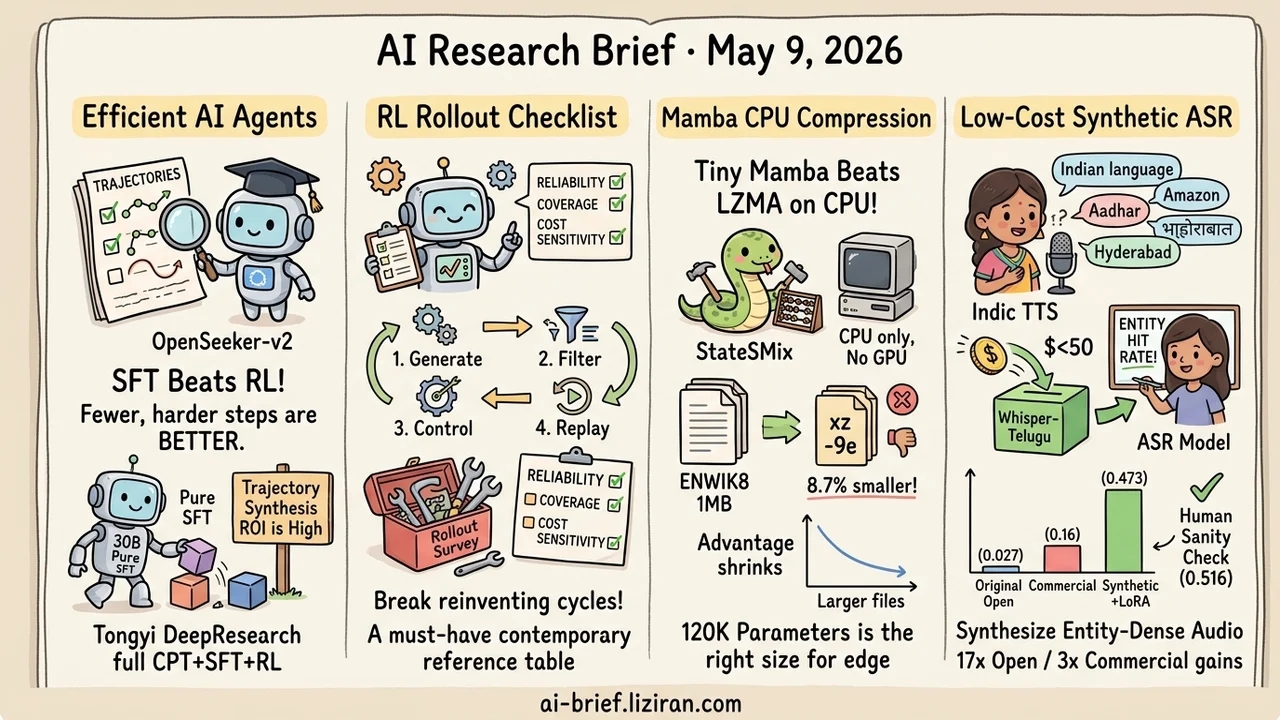

- 10.6k Curated Trajectories Match a Full RL Pipeline. OpenSeeker-v2 expands the knowledge graph and tool set and applies strict low-step filtering. Pure SFT on a 30B model beats Tongyi DeepResearch's full CPT+SFT+RL on BrowseComp/HLE/xbench. The investment-worthy step is moving from optimizers to trajectory synthesis.

- RL Post-Training Rollout Finally Has a Checklist. A new survey breaks the lifecycle into Generate/Filter/Control/Replay, with three-axis evaluation across reliability, coverage, and cost sensitivity, plus a symptom-to-module diagnostic index.

- A 120K-Parameter Mamba Beats LZMA on Plain CPU. StateSMix combines online training, sparse n-gram, and arithmetic coding in pure C. No GPU needed. Beats xz -9e by 8.7% on 1MB enwik8, but the gap collapses to 0.7% by 10MB.

- Under $50 of Synthetic Data Lifts Open ASR to 3x Commercial on Long-Tail Languages. Indic TTS synthesizes ~22K entity-dense utterances. LoRA fine-tuning Whisper-Telugu pushes Entity-Hit-Rate from 0.027 to 0.473. A 20-utterance human sanity check addresses the same-TTS self-loop concern.

Featured

01 10.6k Curated Trajectories Match a Full RL Pipeline

OpenSeeker-v2 is a frontier search agent trained with pure SFT on 10.6k examples. What sets it apart isn't algorithmic; it's how the trajectories are synthesized and filtered.

Three simple changes drove the result. The authors scaled the knowledge graph for broader exploration, expanded the tool set for wider coverage, and applied strict low-step filtering to drop easy samples. The resulting 30B model beat Tongyi DeepResearch, which ran the full CPT+SFT+RL pipeline, across BrowseComp, BrowseComp-ZH, HLE, and xbench.
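To make the low-step filtering idea concrete, here is a minimal sketch. The `Trajectory` class, the `min_steps` threshold of 8, and the extra correctness check are all assumptions for illustration; the summary above does not give the paper's actual cutoff or data format.

```python
from dataclasses import dataclass, field


@dataclass
class Trajectory:
    """Hypothetical record for one synthesized agent run."""
    question: str
    steps: list = field(default_factory=list)  # one entry per tool call
    correct: bool = False                       # did the run reach the right answer?


def filter_low_step(trajectories, min_steps=8):
    """Drop easy samples: keep runs that needed at least `min_steps` tool calls.

    Requiring `correct` is an added assumption, not something stated in the source.
    """
    return [t for t in trajectories if t.correct and len(t.steps) >= min_steps]


pool = [
    Trajectory("easy lookup", steps=["search"] * 2, correct=True),
    Trajectory("multi-hop question", steps=["search", "visit"] * 6, correct=True),
    Trajectory("long but wrong", steps=["search"] * 15, correct=False),
]
kept = filter_low_step(pool, min_steps=8)
print([t.question for t in kept])
```

Only the 12-step correct run survives. In practice the threshold would be tuned against the step-count distribution of the synthesized pool rather than fixed up front.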

Under a fixed budget, spending on harder, more informative trajectories may beat stacking another RL stage. One scope limit is worth keeping in mind: the comparisons all involve relatively structured deep-search agent tasks. Whether the conclusion carries over to longer-horizon or open-ended agent settings is not validated in the paper.

Key takeaways:

- For search agents, optimizing trajectory synthesis and filtering may have higher ROI than extending the pipeline.
- SFT's ceiling on structured agent tasks is higher than expected, provided trajectories are hard and informative.
- The comparison is only on deep search. Don't transplant the conclusion to long-horizon or open-ended agents.

Source: OpenSeeker-v2: Pushing the Limits of Search Agents with Informative and High-Difficulty Trajectories

02 Which Rollout Step Is Your RL Pipeline Missing?

Most teams running RL post-training are reinventing rollout, but few document the practice. Optimizer papers are everywhere; rollout design gets passed around by word of mouth.

This survey breaks the lifecycle into four steps. Generate produces candidate trajectories and topology. Filter applies verifiers or judges to mid-rollout signals. Control allocates compute under budget — continue, branch, or stop. Replay retains and reuses artifacts, including self-improving curricula. Alongside that, a three-dimensional evaluation framework covers reliability, coverage, and cost sensitivity, plus a diagnostic index mapping common rollout pathologies to specific modules.
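The four steps can be read as one loop. The sketch below is a toy illustration, not the survey's reference design: `generate`, `passes_filter`, the 3-step acceptance rule, and the one-unit-per-sample budget are all hypothetical stand-ins.

```python
import random


def generate(prompt, rng):
    """Generate: produce one candidate trajectory (here, a toy list of steps)."""
    n = rng.randint(1, 6)
    return {"prompt": prompt, "steps": [f"step-{i}" for i in range(n)]}


def passes_filter(traj):
    """Filter: stand-in verifier that accepts trajectories with at least 3 steps."""
    return len(traj["steps"]) >= 3


def rollout(prompts, budget, seed=0):
    """Run a budgeted rollout and return (replay_buffer, samples_spent)."""
    rng = random.Random(seed)
    replay_buffer = []  # Replay: retained trajectories available for later reuse
    spent = 0
    for prompt in prompts:
        while spent < budget:          # Control: stop once the budget is exhausted
            traj = generate(prompt, rng)
            spent += 1
            if passes_filter(traj):
                replay_buffer.append(traj)
                break                  # Control: stop sampling this prompt
            # Control: otherwise continue sampling the same prompt
    return replay_buffer, spent


buffer, spent = rollout(["q1", "q2", "q3"], budget=10)
print(len(buffer), "kept after", spent, "samples")
```

Walking a real pipeline through the same four labels is a quick way to see which step is implicit or missing. Branching, the third Control option, is left out here to keep the sketch short.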

The value isn't a new method. It's the first contemporary reference table for what was scattered engineering practice.

Key takeaways:

- Use it as a checklist. Walk your RL pipeline through GFCR and gaps surface fast.
- Replay is the most-skipped step. Self-improving curricula squeeze training value from old trajectories without weight updates.
- Rollout design is underrated in most papers. This saves teams from compiling the survey themselves.

03 A 120K-Parameter Mamba Beats LZMA on Your CPU

Intuition says beating an industrial compressor takes a big model and a GPU. StateSMix runs the other way. A 120K-parameter Mamba SSM trains token-by-token from scratch on the file being compressed, paired with nine sparse n-gram hash tables and arithmetic coding.
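The mechanism behind "train on the file while compressing it" can be shown with a far simpler model. The sketch below swaps the Mamba SSM and n-gram tables for an adaptive order-0 byte counter (an assumption made purely for brevity) and reports the ideal arithmetic-coding cost, the sum of -log2 p(symbol), instead of running a real coder.

```python
import math


def adaptive_code_length_bits(data: bytes) -> float:
    """Ideal arithmetic-coding cost under an order-0 model that learns online."""
    counts = [1] * 256  # Laplace prior: every byte value starts with count 1
    total = 256
    bits = 0.0
    for b in data:
        # Cost of coding this byte under the model as it stands right now.
        bits += -math.log2(counts[b] / total)
        # Update only after coding, so a decoder can mirror the same state.
        counts[b] += 1
        total += 1
    return bits


sample = b"abracadabra " * 200
estimate = adaptive_code_length_bits(sample) / 8
print(f"raw: {len(sample)} bytes, adaptive order-0 estimate: {estimate:.0f} bytes")
```

No weights ship with the file because the decoder rebuilds the same model symbol by symbol. This toy counter is much weaker than the model described above and is not expected to beat xz; it only illustrates the predict, code, update cycle.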

The implementation is pure C with AVX2 SIMD. No GPU, no pre-trained weights, ~2000 tokens/second on a regular x86-64. On enwik8 it beats xz -9e by 8.7% at 1MB, 5.4% at 3MB, and only 0.7% at 10MB. The gap shrinks fast as files grow, but the small-file delta is real. Ablation shows the SSM alone already beats xz; n-gram adds another 4.1%.

Two years of LLM dominance made "small model + online learning + classical coding" look like a path to skip by default. For edge devices, privacy-sensitive data, and long-tail file types, a 120K-parameter adaptive model may actually be the right size.

Key takeaways:

- An online-trained tiny SSM can beat LZMA on CPU. Small-plus-online has real space in edge and privacy settings.
- Advantage decays sharply with file size (only 0.7% at 10MB). Applicability depends on data scale.
- Pure C, AVX2, no external dependencies. The engineering recipe transfers directly to on-device compression and coding tasks.

Source: StateSMix: Online Lossless Compression via Mamba State Space Models and Sparse N-gram Context Mixing

04 $50 of Synthetic Data Lifts Open ASR to 3x Commercial on Long-Tail Languages

Open-source Whisper-Telugu hits an Entity-Hit-Rate (EHR) of 0.027 on number strings, addresses, brand names, and English-Indic codemix. Commercial Deepgram Nova-3 hits 0.16. The combination of a long-tail language and entity-dense audio defeats both open and commercial systems.

The authors built a TTS↔STT flywheel. Open-source Indic TTS synthesizes ~22,000 entity-dense utterances at a marginal cost under $50. LoRA fine-tuning Whisper-Telugu pushes EHR to 0.473, roughly 17x the open baseline and 3x the commercial one. Read-style WER on FLEURS-Te degrades by only 6.6 points. The obvious self-loop risk is that the held-out set comes from the same TTS system. The authors recorded 20 real Telugu utterances as a sanity check; EHR there is 0.516, which actually beats the synthetic test and dampens the "only works on its own synthetic data" concern.
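For readers who want to sanity-check the numbers, here is a minimal sketch of an entity-hit-rate style metric and the two ratios quoted above. The definition used here (the fraction of reference entities found verbatim, case-insensitively, in the transcript) is an assumption; the paper's exact matching rules may differ, and the sample strings are invented.

```python
def entity_hit_rate(references, hypotheses):
    """Fraction of reference entities that appear verbatim in the paired transcript.

    Assumed definition for illustration; real scoring may normalize text differently.
    """
    hits = total = 0
    for entities, hyp in zip(references, hypotheses):
        hyp_norm = hyp.casefold()
        for ent in entities:
            total += 1
            hits += ent.casefold() in hyp_norm  # bool counts as 0 or 1
    return hits / total if total else 0.0


# Invented examples: entities expected per utterance, and the ASR output.
refs = [["500034", "Banjara Hills"], ["Flipkart"]]
hyps = ["deliver to banjara hills 500034", "order from flip cart"]
ehr = entity_hit_rate(refs, hyps)
print(f"EHR on toy data: {ehr:.3f}")

# Ratios behind the "17x open, 3x commercial" summary, from the reported scores.
open_base, commercial, finetuned = 0.027, 0.16, 0.473
print(f"vs open: {finetuned / open_base:.1f}x, vs commercial: {finetuned / commercial:.1f}x")
```

A metric like this rewards getting the pin code or brand name exactly right, which is why it can diverge sharply from WER on the same audio.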

Hindi and Tamil also see significant gains. Hindi still trails commercial. All three languages fall short of the pre-registered EHR target. The authors report it straight.

Key takeaways:

- Long-tail language plus entity-dense audio is a shared blind spot for open and commercial ASR. Teams targeting long-tail markets have to fill it themselves.
- $50 synthetic data plus LoRA is a reproducible recipe for low-resource settings, not just a narrative.
- Same-TTS held-out has self-loop risk. Look at the human sanity check and high-resource control (Hindi still loses to commercial) to judge real generalization.

Also Worth Noting

Today's Observation

Read three of today's papers together and the shift is consistent. What determines agent capability is moving from "algorithm/loss" to "trajectory/environment/data." OpenSeeker-v2 argues high-quality trajectories plus simple SFT can approach a heavy RL pipeline. The rollout survey breaks RL's core into generate/filter/control/replay rather than optimizer details. Add Healthcare AI GYM from the notable list — gym environments across 10 clinical domains, not new algorithms — and three different angles point to the same place. Trajectories and environment determine the ceiling. Algorithms are just the implementation.

The over-reach to avoid: this isn't "RL is dead, SFT is back." Two of the three still operate inside the RL frame. OpenSeeker-v2 only compares on structured deep-search tasks, with no validation on longer-horizon settings.

Concrete next step. If you're doing agent post-training, the next iteration shouldn't open with swapping the RL algorithm. Check whether your trajectory-pipeline levers are saturated first. Knowledge graph and tool set coverage on the generation side. Difficulty distribution and low-step filtering on the selection side. Replay strategy on the reuse side. Any one of those gaps may be more worth fixing than a different optimizer.