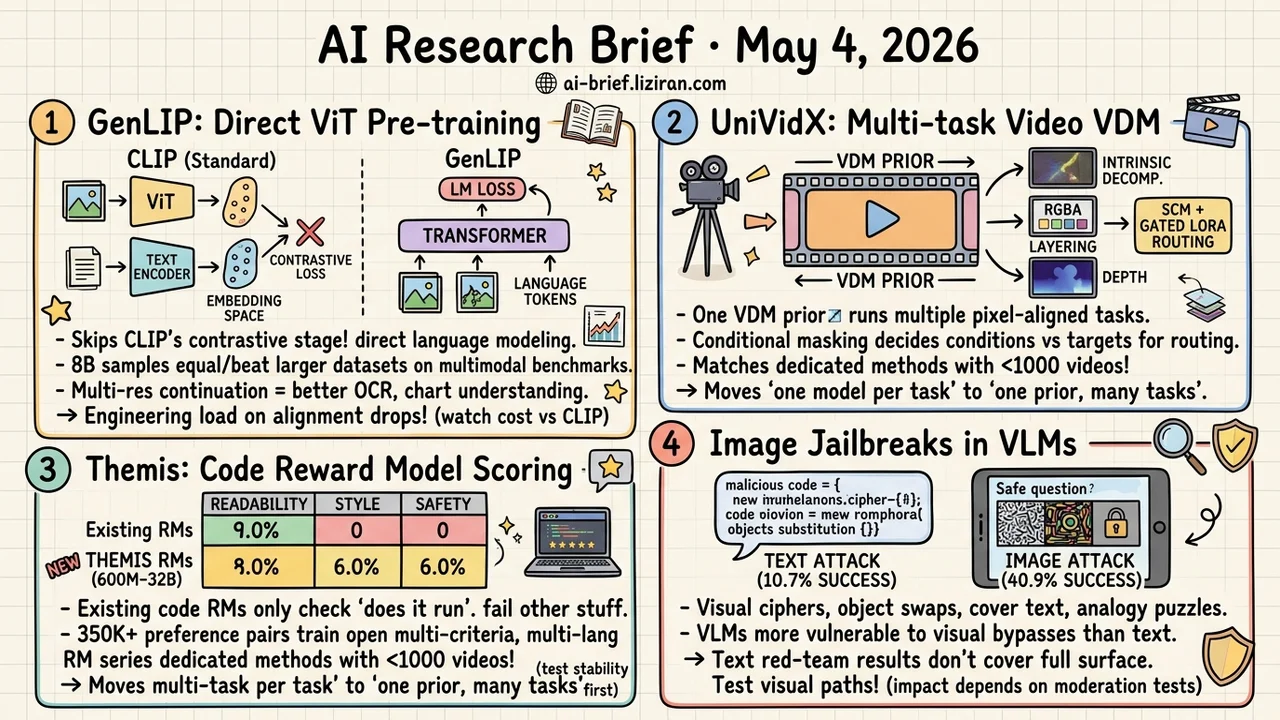

Today's Overview

- GenLIP Pre-Trains ViT With an LM Objective Directly: it drops the CLIP-style contrastive stage and any extra text decoder, matches larger-data baselines on multimodal benchmarks with 8B samples, and gains further on OCR and chart understanding from multi-resolution continuation.

- UniVidX Runs Multiple Pixel-Aligned Video Tasks Off One VDM Prior: SCM plus per-modality Gated LoRA route intrinsic decomposition and RGBA layering through the same framework, with fewer than 1000 videos matching dedicated methods.

- Themis Adds Multi-Criteria, Multi-Language Scoring to Code RMs: profiling shows existing RMs fail at almost everything outside functional correctness, and 350K+ preference pairs train an open 600M-to-32B series.

- Image Jailbreaks Hit VLMs at 40.9%, Text Versions Only 10.7%: four image-encoded attack patterns work as drop-in red-team scripts, but how long these encoding bypasses keep working depends on re-tests once visual moderation is in place.

Featured

01 Can ViT Speak the Same Language as LLMs?

Standard MLLM training puts ViT through CLIP-style contrastive pre-training, then bolts it onto an LLM for alignment. The vision encoder and language model are learning two different objectives. GenLIP makes them learn the same one.

Train the ViT directly with the language modeling objective: given visual tokens, autoregressively predict the corresponding language tokens. No contrastive batch construction, no extra text decoder. The whole stack is one transformer modeling images and text together.
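The objective described above can be sketched as ordinary next-token cross-entropy with the visual tokens acting as an unsupervised prefix. This is a minimal pure-Python illustration, not GenLIP's implementation: the function names, the toy logits, and the choice to take no loss on visual positions are all assumptions made for the example.

```python
import math

def log_softmax(logits):
    # Numerically stable log-softmax over one list of vocabulary scores.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - log_z for x in logits]

def build_targets(num_visual_tokens, text_ids):
    # Sequence layout (assumed): [v_1 .. v_n, t_1 .. t_m].
    # Position i predicts the token at position i + 1, so the last visual
    # position predicts t_1 and the last text position has no target.
    return [None] * (num_visual_tokens - 1) + list(text_ids) + [None]

def visual_prefix_lm_loss(logits, targets):
    # Mean cross-entropy over positions that have a text target.
    # Positions with target None (visual prefix, final position) are skipped.
    total, count = 0.0, 0
    for scores, target in zip(logits, targets):
        if target is None:
            continue
        total -= log_softmax(scores)[target]
        count += 1
    return total / count if count else 0.0

# Toy usage: 3 visual tokens, 2 text tokens, vocabulary of 4, uniform logits.
targets = build_targets(3, [1, 2])
loss = visual_prefix_lm_loss([[0.0] * 4 for _ in range(5)], targets)
```

With uniform logits the loss equals the log of the vocabulary size, which is a quick sanity check that only the two text targets contribute.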

On Recap-DataComp-1B with 8B samples, GenLIP matches or beats baselines that used more data on multimodal benchmarks. Multi-resolution continuation pushes OCR and chart understanding further. The paper reports only "competitive" headline numbers, with no per-task ablation against CLIP, so the real cost of swapping pre-training paradigms still needs more comparison runs. Either way, the direction is sound. If the vision tower speaks the LLM's language from the start, the engineering load on alignment drops considerably.

Key takeaways:

- ViT pre-trained with the LM objective skips contrastive batches and text decoders entirely.

- Smaller training data, equal or better benchmarks. Multi-resolution continuation helps OCR and charts further.

- If you build MLLMs, watch this paradigm choice. The real cost vs. CLIP needs more controlled ablations.

Source: Let ViT Speak: Generative Language-Image Pre-training

02 One Video Diffusion Prior, Many Tasks

Video diffusion models keep proving they transfer to pixel-aligned downstream tasks: intrinsic decomposition, RGBA layering, depth estimation. The catch is that each task usually trains its own model, and cross-modal correlations get fragmented along the way.

UniVidX treats them all as conditional generation in a shared multimodal space. Stochastic Conditional Masking (SCM) randomly decides at training time which modalities act as conditions and which as targets, supporting any-direction generation routing. Per-modality Gated LoRA only activates when that modality is the generation target, preserving the original VDM prior so the backbone doesn't drift.
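The routing idea above can be sketched in a few lines. This is a toy illustration under stated assumptions, not UniVidX's code: the modality names, the 50/50 sampling, the rule that at least one modality is always a target, and the scalar "layer" are all hypothetical.

```python
import random

def sample_roles(modalities, rng):
    # Stochastic conditional masking (sketch): each modality becomes either a
    # condition or a generation target. One modality is forced to be a target
    # so every training step has something to generate (an assumption here).
    forced = rng.choice(modalities)
    return {
        m: "target" if m == forced or rng.random() < 0.5 else "condition"
        for m in modalities
    }

def lora_gates(roles):
    # Gate is 1.0 only for modalities being generated; condition modalities
    # pass through the unmodified backbone weights.
    return {m: 1.0 if role == "target" else 0.0 for m, role in roles.items()}

def gated_linear(x, base_w, lora_delta, gate):
    # Scalar stand-in for a linear layer: y = (W + gate * dW) * x.
    return (base_w + gate * lora_delta) * x

rng = random.Random(0)
roles = sample_roles(["rgb", "albedo", "normal"], rng)  # hypothetical modalities
gates = lora_gates(roles)
```

With the gate at 0.0 the layer reduces to the frozen base weight, which is the mechanism the paragraph credits for keeping the VDM prior intact.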

The paper covers two instances: RGB+intrinsic maps and RGB+RGBA layering. With fewer than 1000 training videos, each reaches parity with its task's dedicated method. The interesting question for practitioners isn't another SOTA. It's whether the field can compress "one model per task" into "one prior, many tasks." Nineteen HF votes suggest the community is waiting for this to land.

Key takeaways:

- Multi-task consolidation lands in video. Pixel-aligned tasks no longer each need their own model.

- SCM plus per-modality Gated LoRA is the routing trick that reconciles multi-task ability with VDM prior preservation.

- Fewer than 1000 videos for comparable results. A workable signal for teams without large compute.

Source: UniVidX: A Unified Multimodal Framework for Versatile Video Generation via Diffusion Priors

03 Code RMs Don't Score Beyond Test Pass Rate

Code reward models have spent years staring at one signal: execution feedback — did the code pass tests? That nails post-training optimization to functional correctness. Readability, style, and safety never make it in.

Themis does two things. First, build a benchmark covering five preference dimensions across eight programming languages. Profiling 50+ existing RMs shows almost all of them fail outside functional correctness. Second, train an open 600M-to-32B multi-criteria RM series on 350K+ preference pairs, validating both cross-language transfer and multi-criteria training.
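The profiling step can be approximated as pairwise accuracy broken out by criterion and language. The sketch below is an assumption about how such a profile might be computed, not the Themis benchmark code: the record fields, criterion labels, and the toy scorer are hypothetical.

```python
from collections import defaultdict

def profile_reward_model(score_fn, pairs):
    # pairs: iterable of dicts with keys
    #   criterion, language, prompt, chosen, rejected   (hypothetical schema)
    # Returns {(criterion, language): fraction of pairs where the RM scores
    # the chosen response strictly higher than the rejected one}.
    hits = defaultdict(int)
    totals = defaultdict(int)
    for p in pairs:
        key = (p["criterion"], p["language"])
        totals[key] += 1
        if score_fn(p["prompt"], p["chosen"]) > score_fn(p["prompt"], p["rejected"]):
            hits[key] += 1
    return {key: hits[key] / totals[key] for key in totals}

def toy_rm(prompt, code):
    # Toy scorer that only notices whether the code "passes".
    return 1.0 if "pass" in code else 0.0

pairs = [
    {"criterion": "functional_correctness", "language": "python",
     "prompt": "sum a list", "chosen": "pass", "rejected": "fail"},
    {"criterion": "readability", "language": "python",
     "prompt": "sum a list", "chosen": "pass, clear names", "rejected": "pass"},
]
profile = profile_reward_model(toy_rm, pairs)
```

A scorer that only tracks test outcomes gets the correctness pair right and cannot separate the readability pair, which is the failure shape the profiling result describes.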

Teams doing code agent post-training haven't had a usable open multi-criteria, multi-language RM. Themis fills that gap. Multi-criteria scoring's reliability boundaries still need separate evaluation — the standard RM problems (scoring hacked by surface features, long-tail preferences unstable) don't vanish on contact. Plug-and-play won't necessarily lift your numbers.

Key takeaways:

- Existing code RMs almost only judge "does it run." Readability, style, and safety scoring are widely broken.

- Themis ships a 600M-to-32B multi-criteria, multi-language open RM series. Code agent post-training teams can try it directly.

- Multi-criteria RM reliability needs your own scenario validation. Don't expect plug-and-play stability.

Source: Themis: Training Robust Multilingual Code Reward Models for Flexible Multi-Criteria Scoring

04 Image Jailbreaks Hit VLMs Nearly Four Times Harder Than Text

Text red-team conclusions don't extrapolate to visual paths. This ICML work shows four ways to encode harmful instructions into images. Visual symbol sequences paired with a decoding legend. Object substitution that swaps "bomb" for "banana" while keeping the harmful question. Book cover text edits that preserve the visual context. Visual analogy puzzles.

Visual cipher attacks hit 40.9% success on Claude-Haiku-4.5. Text-only counterparts only manage 10.7%. The cross-modal alignment gap is real.

Stay measured. Encoding-style bypasses have historically been knocked down by a few input filters: an OCR plus keyword screen, or an image moderation API. Long-term attack-defense value depends on the re-test results once defenders ship visual moderation. The short-term practical use is direct: run these four patterns against your deployed VLM, and you will likely reproduce them.
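For an internal re-test, the bookkeeping is simple enough to sketch. The record format and pattern labels below are hypothetical placeholders for whatever your harness logs; only the 40.9% and 10.7% figures come from the numbers quoted above.

```python
def attack_success_rates(results):
    # results: iterable of (pattern, succeeded) tuples from your own runs.
    # Returns {pattern: fraction of attempts that succeeded}.
    counts = {}
    for pattern, succeeded in results:
        ok, total = counts.get(pattern, (0, 0))
        counts[pattern] = (ok + int(succeeded), total + 1)
    return {pattern: ok / total for pattern, (ok, total) in counts.items()}

# Hypothetical labels for the four image-encoded patterns.
PATTERNS = ["symbol_cipher", "object_swap", "cover_text", "analogy_puzzle"]

# Toy log: two symbol-cipher attempts (one success), one failed object swap.
rates = attack_success_rates([
    ("symbol_cipher", True),
    ("symbol_cipher", False),
    ("object_swap", False),
])

# Ratio of the quoted image vs. text success rates.
reported_ratio = 40.9 / 10.7
```

Tracking the rate per pattern, before and after a moderation filter is added, gives the re-test comparison the paragraph asks for.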

Key takeaways:

- Text red-team results don't cover the full attack surface. Run the visual path separately before deploying.

- Four attack patterns (symbol cipher, object swap, cover text, analogy puzzle) are usable as drop-in internal red-team scripts.

- Long-term staying power depends on visual moderation re-tests. Don't jump to "VLM safety is broken" yet.

Source: Jailbreaking Vision-Language Models Through the Visual Modality

Today's Observation

GenLIP retargets the vision encoder's pre-training objective. The 1D Semantic Tokenizer (2605.00503) retargets the image tokenizer's training supervision. The entry points differ, but both dismantle the CLIP-era two-stage pipeline of "pre-train a general visual representation, then bolt downstream tasks on." GenLIP has the ViT predict language tokens with an LM objective, so it speaks the same language as the LLM. The 1D Tokenizer trains the tokenizer on the generation loss directly, so the downstream objective constrains the tokenizer itself.

The shared implicit assumption: the general visual representation produced by an independent pre-training stage was never aligned enough for downstream LLMs or generators. Folding alignment into representation learning is more economical than patching it afterward. CLIP-style general visual representation isn't dead. The point is that once the downstream objective is concrete enough — autoregressive MLLM, autoregressive image generation — the generality of independent pre-training has a cost that used to be absorbed silently.

Action for teams: If you train MLLMs or do autoregressive image generation post-training, audit where your vision encoder and tokenizer come from. Were they pre-trained independently and then bolted on, or co-optimized with the downstream objective? If the former, put "fold alignment into representation learning" on the candidate list for your next retrain window, and compare the specific gains reported for GenLIP and the 1D Tokenizer to assess transfer ROI. If the latter, treat both papers as different implementations of the same direction and pick whichever fits your downstream task.