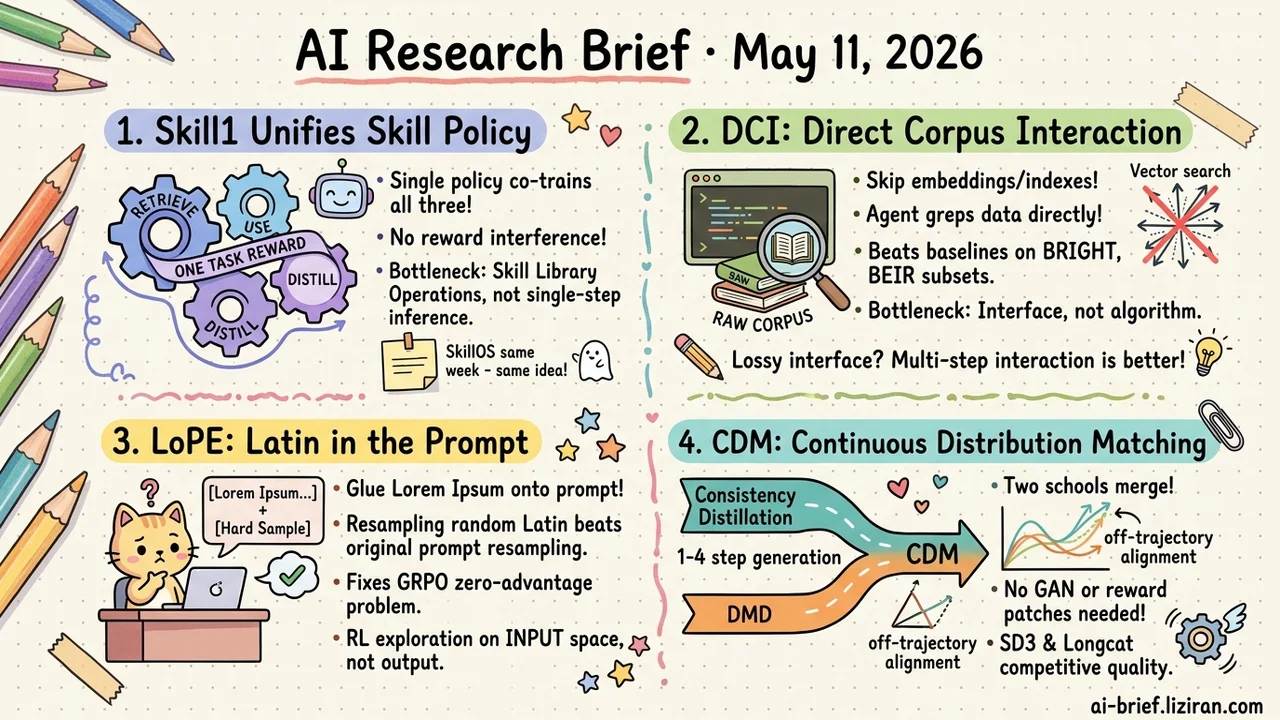

Today's Overview

- Skill1 Unifies Skill Retrieval, Use, and Distillation in One Policy. A single task reward co-trains all three, avoiding interference between competing reward signals. SkillOS attacks the same problem from a different angle in the same week. The bottleneck in agent continual learning is shifting from single-step inference to skill library operations.

- DCI Lets Agents Grep the Raw Corpus Directly. Skip embeddings, vector indexes, and retrieval APIs. Beats sparse, dense, and reranking baselines on BRIGHT, BEIR subsets, and BrowseComp-Plus. The retrieval bottleneck moves from algorithm to interface.

- LoPE Glues Lorem Ipsum Onto the Prompt. Across models from 1.7B to 7B parameters, prepending random pseudo-Latin text beats resampling the original prompt for rescuing GRPO's zero-advantage hard samples. Moving RL exploration from output to input was a path almost nobody had tried seriously.

- CDM Brings DMD into Continuous Time. Trajectory density and distribution matching, two competing schools, collapse into one framework. 1-4 step generation no longer leans on GAN or reward patches.

Featured

01 The Real Bottleneck in Self-Improving Agents Is the Skill Library

Skill1 does something simple to describe. One policy learns three things at once: retrieve and rerank candidates from a skill library, use the chosen skill to complete the task, then distill new skills from the resulting trajectory. The trick is that a single task-outcome signal trains all three. Low-frequency reward trends credit "did you pick the right skill," while high-frequency variance credits "is the newly distilled skill any good."
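To make the "low-frequency trend vs. high-frequency variance" split concrete, here is a minimal sketch that separates a per-episode task-reward series into a slow exponential-moving-average (EMA) trend and a fast residual. The EMA filter, the `alpha` value, and the name `split_reward_signal` are assumptions for illustration; Skill1's actual credit-assignment scheme may work differently.

```python
from typing import List, Tuple


def split_reward_signal(rewards: List[float], alpha: float = 0.1) -> Tuple[List[float], List[float]]:
    """Split a reward series into a slow trend and a fast residual.

    Hypothetical illustration: an EMA stands in for the low-frequency
    component, and (reward - EMA) for the high-frequency component.
    """
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    trend: List[float] = []
    residual: List[float] = []
    ema = rewards[0] if rewards else 0.0
    for r in rewards:
        # Slowly tracks the running level of task success (low frequency).
        ema = (1.0 - alpha) * ema + alpha * r
        trend.append(ema)
        # Episode-to-episode deviation from that level (high frequency).
        residual.append(r - ema)
    return trend, residual


if __name__ == "__main__":
    rewards = [0.0, 0.0, 1.0, 0.0, 1.0, 1.0, 1.0, 0.0, 1.0, 1.0]
    trend, residual = split_reward_signal(rewards, alpha=0.2)
    for r, t, e in zip(rewards, trend, residual):
        print(f"reward={r:.0f}  trend={t:.3f}  residual={e:+.3f}")
```

Trend plus residual always sums back to the raw reward, so one task signal carries both components; which stage each component should credit is the design choice the paragraph above attributes to Skill1, not something this sketch decides.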

The mainstream alternative trains them separately: a skill manager with its own retrieval reward, a main policy with the task reward, and distillation run offline. That gives three optimization signals that pull against each other, and overall progress stalls on whichever step ends up as the weakest link. Skill1's gains on ALFWorld and WebShop come specifically from this unified credit assignment, and ablations confirm that cutting any single credit path collapses the joint behavior.

SkillOS (2605.06614) landed the same day from a different angle: make the skill library's curation operator itself a learnable object. The mechanism differs, but the target is the same: make skill library management trainable alongside the main policy. Two independent papers betting on the same direction in the same week is the more telling signal. Agent continual learning's real bottleneck sits in how the skill library is operated, not in optimizing single-task inference.

Key takeaways:
- Skill1 co-trains skill selection, use, and distillation under one task reward, sidestepping multi-reward interference.
- SkillOS attacks the same problem via a learnable curation operator. Different route, same goal: skill libraries should learn alongside the policy.
- For teams building self-improving agents, the bolted-on "external skill manager + main policy + offline distillation" architecture deserves a second look.

Source: Skill1: Unified Evolution of Skill-Augmented Agents via Reinforcement Learning

02 Let the Agent Grep the Raw Corpus

For agents, the retrieval bottleneck may not be the algorithm. It's the interface. Compressing a corpus into a single top-k similarity query strips out exact lexical constraints, conjunctive sparse cues, local context inspection, and multi-step hypothesis revision. Evidence filtered out early can't be recovered, no matter how strong the downstream reasoning.

DCI (direct corpus interaction) simply gives the agent grep, file reads, a shell, and lightweight scripts to operate on the raw corpus directly. No embeddings, no vector index, no retrieval API. Across BRIGHT, several BEIR datasets, BrowseComp-Plus, and multi-hop QA, DCI beats sparse, dense, and reranking baselines. The paper reports gains on "several" BEIR subsets rather than a clean sweep, so broader validation is still pending.
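The tool surface described here (grep, file reads, small scripts) can be approximated in a few lines. Below is a minimal sketch over an in-memory corpus, assuming a `dict` mapping document id to raw text; the helper names `grep_corpus` and `read_context` are hypothetical, not the paper's API. It shows two things a single top-k call cannot express: a conjunctive exact-match constraint, and a follow-up read of the local context around a hit.

```python
import re
from typing import Dict, List, Tuple

Corpus = Dict[str, str]  # doc_id -> raw text (assumed in-memory layout)


def grep_corpus(corpus: Corpus, patterns: List[str], ignore_case: bool = True) -> List[Tuple[str, int, str]]:
    """Return (doc_id, line_no, line) for every line matching ALL patterns.

    A conjunctive exact-match filter, roughly like `grep -iE p1 | grep -iE p2`.
    """
    flags = re.IGNORECASE if ignore_case else 0
    compiled = [re.compile(p, flags) for p in patterns]
    hits: List[Tuple[str, int, str]] = []
    for doc_id in sorted(corpus):  # deterministic order
        for line_no, line in enumerate(corpus[doc_id].splitlines(), start=1):
            if all(rx.search(line) for rx in compiled):
                hits.append((doc_id, line_no, line))
    return hits


def read_context(corpus: Corpus, doc_id: str, line_no: int, window: int = 1) -> str:
    """Return the lines around a 1-based hit, like `grep -C window`, for local inspection."""
    lines = corpus[doc_id].splitlines()
    start = max(0, line_no - 1 - window)
    end = min(len(lines), line_no + window)
    return "\n".join(lines[start:end])


if __name__ == "__main__":
    corpus = {
        "a.txt": "Intro\nThe 2019 audit flagged vendor Acme.\nFollow-up pending.",
        "b.txt": "Acme signed in 2021.\nNo audit issues.",
    }
    # Step 1: exact, conjunctive lexical constraint.
    hits = grep_corpus(corpus, [r"\bAcme\b", r"\b2019\b"])
    print(hits)
    # Step 2: inspect local context before revising the hypothesis.
    for doc_id, line_no, _ in hits:
        print(read_context(corpus, doc_id, line_no))
```

An agent would call these as tools across several turns, tightening or loosening patterns based on what it reads, which is the multi-step revision that a one-shot similarity query skips.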

The author's read: as agent reasoning improves, the bottleneck in retrieval quality shifts from the algorithm to the resolution of the interface. A single top-k call is too coarse-grained. Multi-step interaction is what recovers the signal that early compression destroys.

Key takeaways:
- "One-shot top-k retrieval" is itself a lossy interface. The exact matching and multi-step revision that agents need most are precisely what gets squeezed out at this step.
- DCI doesn't need embeddings, indexes, or APIs. Local or fast-changing corpora fit naturally, and the offline indexing engineering goes away.
- When evaluating agent retrieval, treat "interface design" as its own dimension. Don't only push on the embedding and rerank layers.

Source: Beyond Semantic Similarity: Rethinking Retrieval for Agentic Search via Direct Corpus Interaction

03 Lorem Ipsum Rescues GRPO's Wasted Samples

GRPO has a familiar problem. When a question gets sampled N times and every rollout fails, relative advantage is zero. That batch and its compute are wasted. Conventional fixes work on the output side — adjust reward, add curriculum, expand sampling budget.
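The zero-advantage case falls straight out of GRPO's group-normalized advantage, commonly written as each rollout's reward minus the group mean, divided by the group standard deviation. A minimal sketch follows; the `eps` term and the use of population standard deviation are common implementation choices assumed here.

```python
from statistics import fmean, pstdev
from typing import List


def group_relative_advantages(rewards: List[float], eps: float = 1e-6) -> List[float]:
    """GRPO-style advantage within one prompt's group: (r_i - mean) / (std + eps)."""
    mu = fmean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def is_zero_advantage_group(rewards: List[float]) -> bool:
    """True when every rollout got the same reward, so no policy-gradient signal remains."""
    return max(rewards) == min(rewards)


if __name__ == "__main__":
    # All rollouts failed: every advantage is 0.0, so the group contributes nothing.
    print(group_relative_advantages([0.0, 0.0, 0.0, 0.0]))  # [0.0, 0.0, 0.0, 0.0]
    # Mixed outcomes: successes get positive advantage, failures negative.
    print(group_relative_advantages([0.0, 1.0, 0.0, 1.0]))
```

The same collapse happens when every rollout succeeds; the all-fail case is the costly one here because those are exactly the hard samples.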

LoPE goes the other direction. Glue a random run of Lorem Ipsum tokens in front of the prompt, and the model gets pushed onto reasoning paths the original prompt couldn't reach. Across models from 1.7B to 7B parameters, this beats resampling the original prompt. More surprisingly, other low-perplexity Latin gibberish works too.
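Mechanically, the input-side move is just a string operation at resampling time. The sketch below prepends a random run of Lorem Ipsum words to a prompt. The word list, prefix length, separator, and the function name `lorem_prefix_prompt` are illustrative assumptions, not LoPE's published settings; in a GRPO loop it would be applied only to groups whose rollouts all failed, before resampling.

```python
import random
from typing import Optional

# A small Lorem Ipsum vocabulary (assumed; not the paper's exact text source).
LOREM_WORDS = (
    "lorem ipsum dolor sit amet consectetur adipiscing elit sed do eiusmod "
    "tempor incididunt ut labore et dolore magna aliqua"
).split()


def lorem_prefix_prompt(prompt: str, n_words: int = 16, rng: Optional[random.Random] = None) -> str:
    """Return the original prompt with a random Lorem Ipsum prefix prepended.

    The prompt text itself is untouched, so the task and its reward are
    unchanged; only the model's input context is perturbed.
    """
    rng = rng or random.Random()
    prefix = " ".join(rng.choice(LOREM_WORDS) for _ in range(n_words))
    return f"{prefix}\n\n{prompt}"


if __name__ == "__main__":
    rng = random.Random(0)
    question = "Find all integers x such that x^2 - 5x + 6 = 0."
    # Hypothetical resampling step for a zero-advantage group:
    # each new rollout sees a different random prefix.
    for _ in range(2):
        print(lorem_prefix_prompt(question, n_words=8, rng=rng))
        print("---")
```

Passing a seeded `random.Random` keeps the perturbation reproducible across runs, which helps when comparing against plain resampling.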

Moving RL exploration from output space to input space is a direction almost nobody had tried seriously. The unexplored room here may be larger than it looks.

Key takeaways:
- GRPO's zero-advantage problem wastes hard samples and compute. Prompt-side perturbation is an overlooked solution.
- LoPE just prepends a random prefix during resampling. No reward changes, no architecture changes. The engineering cost is trivial.
- For RL training teams, input-space perturbation belongs on the experiment list. It may pay off more than another curriculum pass.

Source: Nonsense Helps: Prompt Space Perturbation Broadens Reasoning Exploration

04 Two Few-Step Diffusion Distillation Routes Merge

Few-step diffusion distillation has had two competing schools. DMD (Distribution Matching Distillation) runs distribution matching at fixed time steps, but sparse supervision plus reverse KL's mode-seeking tendency tends to yield artifacts and over-smoothing, and fixes usually require GAN or reward-model patches. Consistency Distillation instead enforces self-consistency along the entire PF-ODE trajectory: denser supervision, but a different framework.
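For reference, the reverse KL divergence in question runs from the student (generator) distribution p_θ to the data distribution, with the expectation taken over student samples. Only the textbook definition is shown; DMD estimates its gradient through score functions on noised samples, which this block does not reproduce.

```latex
D_{\mathrm{KL}}\left(p_\theta \,\|\, p_{\mathrm{data}}\right)
  = \mathbb{E}_{x \sim p_\theta}\left[\log p_\theta(x) - \log p_{\mathrm{data}}(x)\right]
```

Because samples come only from p_θ, the objective punishes placing mass where p_data is small but pays little for leaving some of p_data's modes uncovered. That is the mode-seeking bias the paragraph pairs with sparse supervision as a source of artifacts and over-smoothing.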

CDM (Continuous-Time Distribution Matching) ports DMD from fixed discrete time steps into continuous time. A random-length continuous schedule lets distribution matching happen at any point on the trajectory, and latents extrapolated along the student's velocity field then provide off-trajectory alignment. On SD3-Medium and Longcat-Image, it reaches competitive visual quality without GAN or reward assistance.
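The two moves in this paragraph can be illustrated generically. The sketch below contrasts a fixed discrete time grid with a randomly sampled continuous schedule of random length, then extrapolates a latent one Euler step along a velocity field to a time the schedule did not visit. The schedule distribution, the time convention (t = 1 is noise, t = 0 is data), the Euler update x_s = x_t + (s - t) * v(x_t, t), and all function names are assumptions for illustration; CDM's actual schedule and alignment loss may differ.

```python
import random
from typing import Callable, List

Latent = List[float]
# v(x_t, t); in practice this would be the student network's velocity prediction (assumed).
VelocityFn = Callable[[Latent, float], Latent]


def fixed_schedule(n_steps: int) -> List[float]:
    """Fixed discrete grid: the same n_steps time points every call, from noise (t=1) toward data."""
    return [1.0 - i / n_steps for i in range(n_steps)]


def random_continuous_schedule(max_steps: int, rng: random.Random) -> List[float]:
    """Random-length continuous schedule: K ~ Uniform{1..max_steps}.

    Starts at t=1.0 (pure noise); the remaining K-1 times are drawn from
    [0, 1) and sorted so time never increases.
    """
    k = rng.randint(1, max_steps)
    interior = sorted((rng.random() for _ in range(k - 1)), reverse=True)
    return [1.0] + interior


def extrapolate_latent(x_t: Latent, t: float, s: float, velocity: VelocityFn) -> Latent:
    """One Euler step along the velocity field: x_s ~= x_t + (s - t) * v(x_t, t).

    Yields a latent at a time s the sampled schedule did not visit,
    i.e. an off-trajectory point that could be used for alignment.
    """
    v = velocity(x_t, t)
    return [xi + (s - t) * vi for xi, vi in zip(x_t, v)]


def toy_velocity(x: Latent, t: float) -> Latent:
    """Constant velocity field standing in for a trained student (assumed)."""
    return [1.0 for _ in x]


if __name__ == "__main__":
    rng = random.Random(0)
    print("fixed: ", fixed_schedule(4))  # [1.0, 0.75, 0.5, 0.25]
    print("random:", random_continuous_schedule(4, rng))
    print(extrapolate_latent([0.5, 0.5], t=0.6, s=0.5, velocity=toy_velocity))
```

The fixed grid supervises the same few points on every call, while the random schedule spreads supervision across the whole time interval over many calls, which is the density argument the section makes.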

The point isn't another metric bump. It's that "trajectory density" and "distribution matching" — previously two camps' core ideas — now sit inside one framework.

Key takeaways:
- Teams chasing 1-4 step inference cost can re-evaluate the quality ceiling. The DMD route no longer needs GAN or reward patches.
- Continuous-time scheduling plus off-trajectory alignment is the key technical move. Worth tracking and reproducing.
- Two distillation routes now share an explanatory frame. Follow-up work will likely keep consolidating along this axis.

Source: Continuous-Time Distribution Matching for Few-Step Diffusion Distillation

Also Worth Noting

Today's Observation

Read Skill1, SkillOS, and StraTA together and the same move surfaces. Components inside agent systems that used to live as hand-tuned scripts or hard-coded rules are getting rebuilt as learnable parts trained alongside the main policy via RL. Different papers move different parts. Skill1 moves the skill operator: retrieve, use, distill. SkillOS moves the curation operator: which skills earn a slot. StraTA moves strategy generation: the trajectory-level exploration policy. All three rest on the same idea: the "fixed logic" pieces of an agent system that look like they don't need learning actually can, and should, be co-optimized with the policy. This shared thread explains the jump in agent RL papers over the last few weeks better than any single paper does.

Concrete next step. If you build agents, spend ten minutes on a list. Which "fixed logic" modules in your system — routing, filtering, memory selection, tool selection, rewriting, fallback strategy — currently run on hand-tuned scripts or hard-coded heuristics? Walk down the list and judge which ones are worth folding into RL co-training and which actually don't need it. Prioritize the modules where reward signal can flow back directly and where current heuristics make obvious mistakes. That's the easiest place to pick up wins.
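The ten-minute audit can live in a tiny script. This is a hypothetical sketch: the module names come from the list above, but the two fields (`reward_flows_back`, `heuristic_failure_rate`), the 0.05 threshold, and the sort order are assumptions for illustration, and the numbers in the example are made-up placeholders to replace with figures from your own logs.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Module:
    name: str
    reward_flows_back: bool        # can task reward be credited to this module's decision?
    heuristic_failure_rate: float  # observed rate of obvious mistakes by the current heuristic (0-1)


def rl_candidates(modules: List[Module], min_failure_rate: float = 0.05) -> List[str]:
    """Return module names worth trying in RL co-training, most error-prone first.

    Keeps only modules where reward can flow back directly AND the current
    heuristic fails noticeably; everything else stays hand-written for now.
    """
    picked = [
        m for m in modules
        if m.reward_flows_back and m.heuristic_failure_rate >= min_failure_rate
    ]
    picked.sort(key=lambda m: m.heuristic_failure_rate, reverse=True)
    return [m.name for m in picked]


if __name__ == "__main__":
    # Placeholder values only, not measurements.
    audit = [
        Module("routing", True, 0.12),
        Module("filtering", True, 0.02),
        Module("memory selection", True, 0.20),
        Module("tool selection", True, 0.08),
        Module("rewriting", False, 0.15),
        Module("fallback strategy", False, 0.01),
    ]
    print(rl_candidates(audit))  # ['memory selection', 'routing', 'tool selection']
```

Treating "reward flows back" as a hard filter rather than a weight reflects the advice above: without a direct credit path, RL co-training has nothing to learn from, however bad the heuristic is.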