Today's Overview

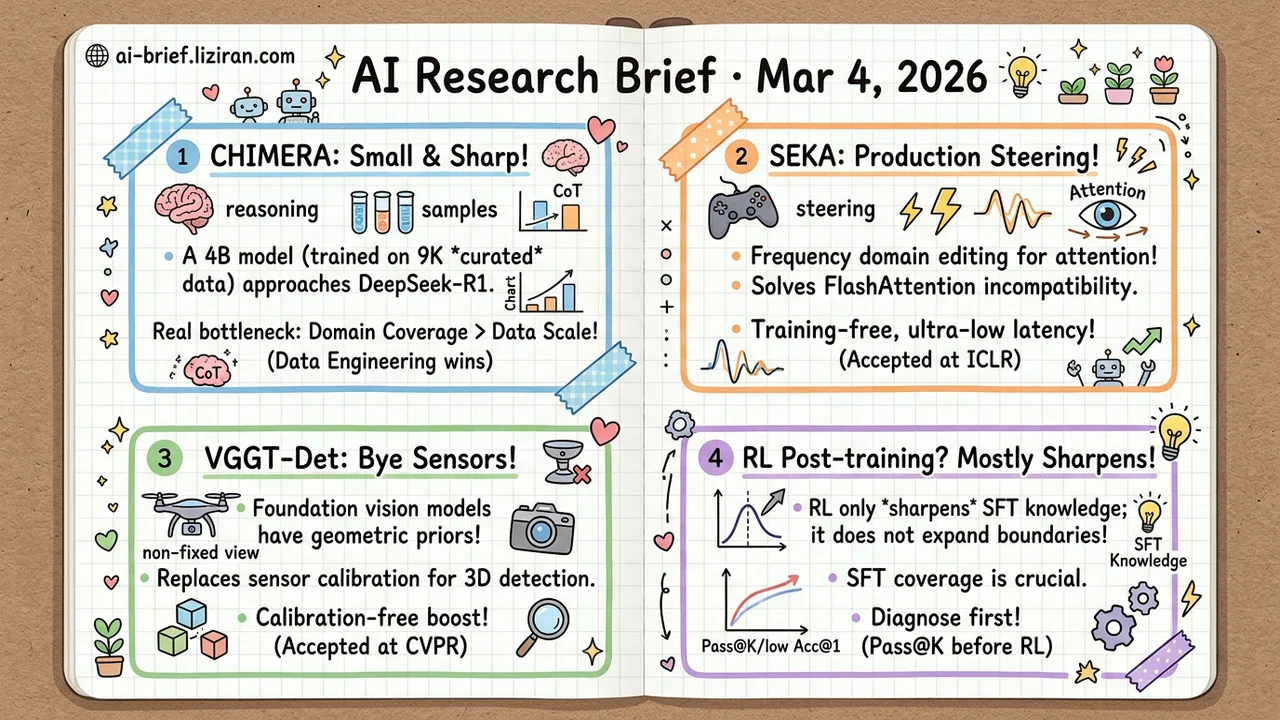

- A 4B reasoning model trained on 9K curated samples approaches DeepSeek-R1. CHIMERA shows the real bottleneck in reasoning training is domain coverage and data curation, not scale.

- Attention steering is finally production-ready. SEKA edits key embeddings in the frequency domain, bypassing FlashAttention compatibility issues. Training-free, negligible latency. Accepted at ICLR.

- Foundation vision models carry geometric priors strong enough to replace sensor calibration. VGGT-Det beats the prior best by 4.4 and 8.6 mAP@0.25 on calibration-free 3D detection (ScanNet and ARKitScenes). Accepted at CVPR.

- RL post-training mostly sharpens output distributions rather than expanding capability boundaries. Controlled experiments show SFT's coverage is the real prerequisite for performance gains.

Featured

01 Reasoning A 4B Model Trained on 9K Samples Rivals DeepSeek-R1

Training a reasoning model hits a cold-start problem immediately: you need chain-of-thought (CoT) traces as training data, but generating reliable CoT traces requires a model that already reasons well. Open-source reasoning datasets skew almost entirely toward math, and human annotation for hard problems doesn't scale.

CHIMERA uses strong existing models to synthesize seed data, but the real contribution is the data engineering pipeline. It builds a hierarchical taxonomy covering 8 disciplines and 1,000+ fine-grained topics to ensure domain balance, then cross-validates with strong models to filter question validity and answer correctness. Only 9K samples survive. A 4B Qwen3 model post-trained on this data matches or approaches DeepSeek-R1 and Qwen3-235B on GPQA-Diamond, AIME 24/25/26, and other benchmarks.
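The two filtering ideas above (cross-validation against strong models, then domain balance across the taxonomy) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not CHIMERA's actual pipeline: the field names, `min_agree`, `per_topic_cap`, and the toy verifier standing in for a strong model are all hypothetical.

```python
from collections import defaultdict

def cross_validated(sample, verifiers, min_agree=2):
    """Keep a sample only if enough verifiers reproduce its reference answer."""
    votes = sum(1 for verify in verifiers
                if verify(sample["question"]) == sample["answer"])
    return votes >= min_agree

def balanced_select(samples, verifiers, per_topic_cap):
    """Filter by verifier agreement, then cap each (discipline, topic) bucket."""
    buckets = defaultdict(list)
    for s in samples:
        if not cross_validated(s, verifiers):
            continue  # question invalid or reference answer not reproduced
        key = (s["discipline"], s["topic"])
        if len(buckets[key]) < per_topic_cap:
            buckets[key].append(s)
    return [s for bucket in buckets.values() for s in bucket]

# Toy verifier standing in for a strong model (hypothetical): it "solves" a+b.
def toy_verifier(question):
    a, b = question.split("+")
    return str(int(a) + int(b))

samples = [
    {"discipline": "math", "topic": "algebra", "question": "2+2", "answer": "4"},
    {"discipline": "math", "topic": "algebra", "question": "3+3", "answer": "6"},
    {"discipline": "math", "topic": "algebra", "question": "1+1", "answer": "3"},  # bad reference answer
    {"discipline": "physics", "topic": "kinematics", "question": "5+5", "answer": "10"},
]
kept = balanced_select(samples, [toy_verifier, toy_verifier], per_topic_cap=1)
```

With a cap of one sample per topic, the sketch keeps one algebra item and one kinematics item: the cap enforces balance, and the verifier vote drops the item with the wrong reference answer.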

That 9K samples suffice suggests the bottleneck in reasoning training is coverage and curation quality, not volume. For teams that want reasoning capabilities but lack data infrastructure, this is a reproducible path with a significantly lower barrier.

Key takeaways:

- Cold-start solved by synthesizing CoT traces from strong models; the real work is domain coverage and cross-validation filtering.
- 9K curated samples let a 4B model approach models 50x+ its size. Data engineering beats data volume.
- Fully automated and reproducible, lowering the barrier to reasoning training.

Source: CHIMERA: Compact Synthetic Data for Generalizable LLM Reasoning

02 Architecture Attention Steering Is Finally Production-Ready

FlashAttention saves memory by never materializing the full attention matrix. That same shortcut blocks every steering method that needs to edit that matrix. Directing a model's focus to specific tokens (highlighting key instructions, emphasizing context passages) works in research but has not been deployable on efficient inference stacks.

SEKA takes a different route: spectral decomposition on key embeddings in the frequency domain, amplifying latent directions for target tokens. Same effect, full compatibility with efficient inference. AdaSEKA goes further by dynamically combining expert subspaces based on prompt semantics, auto-selecting steering strategies per intent. Training-free, negligible added latency, minimal memory overhead. Accepted at ICLR.
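Why editing keys sidesteps the FlashAttention problem is easier to see in a toy example. The sketch below is not SEKA's method: it skips the spectral decomposition entirely and assumes a single hand-picked unit `direction` and a scalar `gain` (both hypothetical). It only illustrates that the edit happens on the keys before attention runs, so a fused kernel can consume the edited keys without exposing the attention matrix.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def steer_keys(keys, highlight, direction, gain):
    """Amplify highlighted keys along a unit direction d: k' = k + gain * (k . d) * d."""
    norm = math.sqrt(dot(direction, direction))
    d = [x / norm for x in direction]
    steered = []
    for i, k in enumerate(keys):
        if i in highlight:
            coeff = gain * dot(k, d)
            k = [ki + coeff * di for ki, di in zip(k, d)]
        steered.append(k)
    return steered

def attention_weights(query, keys):
    """Plain scaled dot-product attention weights, used here only for inspection."""
    scale = math.sqrt(len(query))
    return softmax([dot(query, k) / scale for k in keys])

query = [1.0, 0.0]
keys = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
before = attention_weights(query, keys)
steered = steer_keys(keys, highlight={1}, direction=[1.0, 0.0], gain=1.0)
after = attention_weights(query, steered)  # token 1 now receives more weight
```

In this toy setup the highlighted token gains attention because the query is aligned with the amplified direction. Choosing directions that work across real prompts is the part the paper's decomposition handles, and it is not reproduced here.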

Key takeaways:

- Existing attention steering methods require the full attention matrix, making them incompatible with FlashAttention and stuck in research.
- SEKA edits key embeddings in the frequency domain, bypassing this constraint with zero training and negligible overhead.
- Teams working on prompt controllability and model guidance now have a deployable solution.

Source: Spectral Attention Steering for Prompt Highlighting

03 Multimodal Foundation Models Already Know Camera Poses

Large-scale visual geometry models like VGGT encode strong 3D geometric priors in their internal features. VGGT-Det extracts these priors through two modules: one initializes detection queries from VGGT's attention maps using semantic information, the other aggregates geometric features across layers to lift 2D information into 3D.

This eliminates multi-view 3D detection's hard dependency on precise camera calibration. On ScanNet and ARKitScenes in calibration-free settings, it beats the previous best by 4.4 and 8.6 mAP@0.25 respectively. Accepted at CVPR. Skip calibration pipelines; extract geometry from foundation models instead.
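For readers less familiar with the metric: assuming mAP@0.25 carries its usual meaning in 3D detection, a predicted box counts as a true positive when its 3D IoU with a ground-truth box is at least 0.25. The sketch below computes IoU for axis-aligned boxes only, a simplification (benchmarks may use oriented boxes), and the box layout `(xmin, ymin, zmin, xmax, ymax, zmax)` is an assumption for illustration.

```python
def volume(box):
    """Volume of an axis-aligned box (xmin, ymin, zmin, xmax, ymax, zmax)."""
    return (box[3] - box[0]) * (box[4] - box[1]) * (box[5] - box[2])

def iou_3d(a, b):
    """Intersection-over-union of two axis-aligned 3D boxes."""
    inter = 1.0
    for axis in range(3):
        lo = max(a[axis], b[axis])
        hi = min(a[axis + 3], b[axis + 3])
        if hi <= lo:
            return 0.0  # no overlap along this axis
        inter *= hi - lo
    return inter / (volume(a) + volume(b) - inter)

def is_match(pred, gt, threshold=0.25):
    """True positive test at a given IoU threshold."""
    return iou_3d(pred, gt) >= threshold

gt = (0.0, 0.0, 0.0, 2.0, 2.0, 2.0)
pred = (1.0, 0.0, 0.0, 3.0, 2.0, 2.0)  # shifted by half the box width
iou = iou_3d(pred, gt)                 # intersection 4, union 12
```

A prediction shifted by half a box width has IoU 1/3, so it counts as a match at the 0.25 threshold but would miss at 0.5.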

Key takeaways:

- Foundation vision models carry geometric priors strong enough to replace sensor calibration, changing deployment requirements for 3D detection.
- Gains of 4.4 (ScanNet) and 8.6 (ARKitScenes) mAP@0.25 in calibration-free settings, with code available.
- For phones, drones, and other non-fixed-viewpoint scenarios, eliminating calibration dependency matters more than boosting detection accuracy.

Source: VGGT-Det: Mining VGGT Internal Priors for Sensor-Geometry-Free Multi-View Indoor 3D Object Detection

04 Training Most RL Post-Training Gains Actually Belong to SFT

A controlled experiment delivers an uncomfortable answer: on medical VLMs, RL mostly "sharpens." It makes capabilities learned during SFT more reliably expressed, rather than teaching new ones. Researchers isolated visual perception, SFT, and RL axes on MedMNIST. RL only helps when the model already has high Pass@K (can answer correctly if sampled multiple times). What improves is Acc@1 (first-try accuracy) and sampling efficiency.

SFT's coverage is the prerequisite. Without it, no amount of RL training helps. The finding comes from medical imaging, but the logic applies broadly: are RL gains from learning something new, or just expressing SFT's knowledge more consistently? Diagnose with Pass@K before committing compute to RL.
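The Pass@K diagnostic is cheap to run. A minimal sketch, assuming you sample `n` answers per question and count `c` correct ones: it uses the standard unbiased pass@k estimator and treats pass@1 as a stand-in for Acc@1. The `rl_headroom` helper is a hypothetical name for the gap RL could plausibly close, not something from the paper.

```python
from math import comb

def pass_at_k(n, c, k):
    """Unbiased pass@k: chance that at least one of k samples, drawn without
    replacement from n generations of which c are correct, is correct."""
    if n - c < k:
        return 1.0  # too few wrong samples to fill all k draws
    return 1.0 - comb(n - c, k) / comb(n, k)

def rl_headroom(n, c, k):
    """Gap between pass@k and pass@1 (hypothetical helper): large when the
    model can solve the problem but rarely does so on the first try."""
    return pass_at_k(n, c, k) - pass_at_k(n, c, 1)

# 2 correct answers out of 16 samples: low first-try accuracy, decent coverage.
acc1 = pass_at_k(16, 2, 1)       # 0.125
pass8 = pass_at_k(16, 2, 8)      # 23/30, about 0.767
headroom = rl_headroom(16, 2, 8)

# 0 correct out of 16: nothing for RL to sharpen.
dead = pass_at_k(16, 0, 8)       # 0.0
```

The first case is the regime the paper describes as favorable for RL (high Pass@K, low Acc@1). The second is the regime where SFT coverage is missing.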

Key takeaways:

- RL on VLMs primarily sharpens output distributions rather than expanding capability boundaries. SFT coverage is the prerequisite.
- RL works best when Pass@K is high but Acc@1 is low. Otherwise, it wastes compute.
- Before investing in RL post-training, diagnose with Pass@K whether the model has a foundation worth reinforcing.

Source: When Does RL Help Medical VLMs? Disentangling Vision, SFT, and RL Gains

Today's Observation

RL-for-reasoning has a specific engineering bottleneck: when problem difficulty exceeds the model's current capability, sampling produces no correct trajectories, reward signal drops to zero, and training stalls in that difficulty range. Three independent papers today attack this problem at different levels.

CHIMERA solves it at the data layer. Synthetic data with difficulty calibration ensures enough samples near the model's capability boundary for correct trajectories to be sampled in the first place. "Learn Hard Problems" solves it at the search strategy layer: when the model can't find correct solutions on its own, human reference proofs guide RL exploration. The proofs are not used for direct SFT imitation (out-of-distribution proofs can't be imitated); they serve as anchor points in the search space. DIVA-GRPO solves it at the loss function layer: standard GRPO's advantage approaches zero on both too-hard and too-easy problems, and difficulty-adaptive advantage computation restores the gradient signal.
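The vanishing-advantage problem is easy to reproduce. The sketch below computes group-normalized advantages the way standard GRPO is usually described, (reward minus group mean) divided by group standard deviation. The use of population standard deviation and the `eps` value are assumptions, and the sketch does not implement DIVA-GRPO's difficulty-adaptive correction.

```python
from statistics import fmean, pstdev

def grpo_advantages(rewards, eps=1e-6):
    """Group-relative advantages: (r - mean) / (std + eps) within one prompt's rollouts."""
    mu = fmean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]

too_hard = grpo_advantages([0.0, 0.0, 0.0, 0.0])  # no rollout succeeds
too_easy = grpo_advantages([1.0, 1.0, 1.0, 1.0])  # every rollout succeeds
mixed = grpo_advantages([1.0, 0.0, 0.0, 0.0])     # one success in four
```

Both uniform groups yield all-zero advantages, so those prompts contribute no gradient. Only the mixed group produces a learning signal, which is why problems near the capability boundary matter so much.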

Data engineering, search strategy, loss function: three approaches at three levels of the training pipeline. Their simultaneous appearance points to a shared judgment: reward sparsity is the biggest engineering bottleneck in RL-for-reasoning right now, more important than the choice of RL algorithm (GRPO vs. PPO vs. REINFORCE). This convergence carries more information than any single paper's results. It reflects different teams repeatedly hitting the same wall in practice.

If you're training reasoning models, build a difficulty-bucketed diagnostic first: check Pass@K and non-zero reward ratios per difficulty tier. Where the signal breaks is where you need to invest: data coverage, exploration guidance, or loss correction. Pick at least one.
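A minimal sketch of such a diagnostic, assuming each record is a `(tier, rewards)` pair holding the rewards of K sampled rollouts for one problem. The record layout and tier labels are hypothetical, and `pass_at_k` here is the simple empirical rate of problems with at least one successful rollout.

```python
from collections import defaultdict

def bucket_diagnostic(records):
    """Per difficulty tier: share of problems solved by any rollout, and
    share of individual rollouts that earned a non-zero reward."""
    stats = defaultdict(lambda: {"problems": 0, "solved_any": 0,
                                 "rollouts": 0, "nonzero": 0})
    for tier, rewards in records:
        t = stats[tier]
        t["problems"] += 1
        t["solved_any"] += int(any(r > 0 for r in rewards))
        t["rollouts"] += len(rewards)
        t["nonzero"] += sum(1 for r in rewards if r > 0)
    return {
        tier: {
            "pass_at_k": t["solved_any"] / t["problems"],
            "nonzero_reward_ratio": t["nonzero"] / t["rollouts"],
        }
        for tier, t in stats.items()
    }

records = [
    ("easy", [1, 1, 0, 1]),
    ("easy", [1, 0, 1, 1]),
    ("hard", [0, 0, 0, 0]),
    ("hard", [0, 0, 1, 0]),
]
report = bucket_diagnostic(records)
```

In this toy data the hard tier shows a non-zero reward ratio of 0.125 against 0.75 for the easy tier. A tier where the ratio approaches zero is where reward signal has effectively disappeared.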