Today's Overview

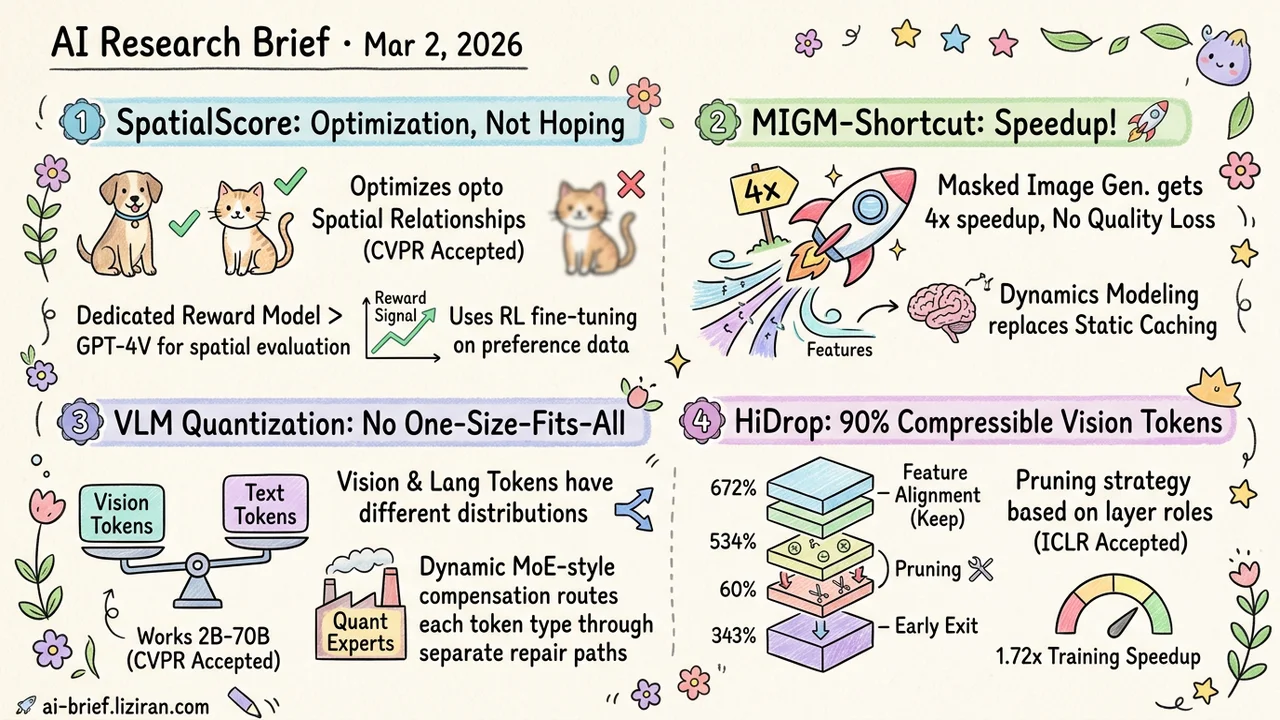

- Spatial relationships in image generation can now be optimized, not just hoped for. SpatialScore trains a reward model that outperforms GPT-4V on spatial evaluation, then uses it to RL-fine-tune generators. CVPR accepted, dataset open-sourced.

- Masked image generation gets a 4x speedup with no quality loss. Learning feature dynamics replaces static caching, recovering semantic information that discrete sampling throws away.

- VLM quantization can't be one-size-fits-all. Vision and language tokens have different distributions. MoE-style dynamic error compensation routes each token type through separate repair paths. Works from 2B to 70B, CVPR accepted.

- 90% of vision tokens are compressible. HiDrop finds that early layers handle feature alignment and shouldn't be pruned. A layer-aware strategy that matches each layer's actual role is the key. ICLR accepted.

Featured

01 Image Gen Turn "Cat Left, Dog Right" Into an Optimization Target

Text-to-image models understand semantics fine, but spatial relationships remain a coin flip. "A to the left of B" often takes multiple samples to get right. The root cause: no explicit feedback signal for spatial correctness during generation.

SpatialScore fixes this by modeling spatial accuracy as a reward signal. The authors train a dedicated reward model on 80K+ preference pairs, then use it in online RL to directly optimize the generator's spatial understanding. The reward model beats GPT-4V and other closed-source models on spatial evaluation, which suggests that a focused small model with good data can still win on vertical tasks.
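To make "train a reward model on preference pairs" concrete, here is a minimal sketch of a pairwise preference loss. It assumes the common Bradley-Terry formulation; the digest does not say which loss SpatialScore actually uses, and the function names and toy scores below are hypothetical.

```python
import math


def bradley_terry_loss(score_preferred: float, score_rejected: float) -> float:
    """-log(sigmoid(score_preferred - score_rejected)), computed stably."""
    margin = score_preferred - score_rejected
    # softplus(-margin) = max(-margin, 0) + log(1 + exp(-|margin|))
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def mean_pair_loss(pairs: list[tuple[float, float]]) -> float:
    """Average loss over (preferred_score, rejected_score) pairs."""
    return sum(bradley_terry_loss(p, r) for p, r in pairs) / len(pairs)


# Hypothetical reward scores for three preference pairs (assumption, not paper data).
toy_pairs = [(2.1, 0.3), (0.4, 0.9), (1.5, 1.5)]
loss = mean_pair_loss(toy_pairs)
```

The loss shrinks as the reward model scores the spatially correct image above the incorrect one, which is the signal an RL stage could then maximize.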

CVPR accepted, dataset and code open-sourced. Engineering barrier to adoption is low. The bigger story is transferability: spatial reasoning is just one of many text-to-image weak spots. Text rendering, counting, and attribute binding could all follow the same "build preference data → train reward model → RL fine-tune" playbook.

Key takeaways:

- Turning spatial correctness from a sampling lottery into an optimizable reward signal is the real shift
- A dedicated reward model outperforms GPT-4V on spatial evaluation; small model + good data still works
- The same reward-modeling pattern could generalize to text rendering, counting, and attribute binding

Source: Enhancing Spatial Understanding in Image Generation via Reward Modeling

02 Efficiency Discrete Sampling Throws Away Semantic Information. You Can Learn It Back.

Masked image generation models (MIGM) run full bidirectional attention at every step. The rich semantics in continuous features get discarded when sampling discrete tokens. Previous speedup methods cache old features to approximate future ones, but error compounds fast at higher acceleration ratios.

MIGM-Shortcut takes a different angle: train a lightweight model that consumes both previous features and already-sampled tokens to regress the average velocity field of feature evolution. Dynamics modeling replaces static caching. On the current best MIGM (Lumina-DiMOO), it achieves 4x+ speedup with no quality drop, pushing the efficiency-quality frontier forward.
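A toy comparison shows why predicting how features move can beat reusing stale ones. This is a sketch under loud assumptions: the feature trajectory is invented, and a finite difference of the last two features stands in for the paper's learned velocity regressor (which also consumes sampled tokens, not modeled here).

```python
def feature(t: float) -> list[float]:
    """Hypothetical 2-D feature trajectory: steady drift with mild curvature."""
    return [1.0 + 0.5 * t + 0.01 * t * t, -2.0 + 0.3 * t]


def cached_prediction(f_t: list[float]) -> list[float]:
    # Static caching: reuse the old feature unchanged for the skipped steps.
    return list(f_t)


def velocity_prediction(f_t: list[float], avg_velocity: list[float], k: int) -> list[float]:
    # Dynamics view: advance the feature k steps along an average velocity.
    return [x + k * v for x, v in zip(f_t, avg_velocity)]


def l2_error(a: list[float], b: list[float]) -> float:
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5


def compare(t: int, k: int) -> tuple[float, float]:
    """Return (caching error, velocity error) when skipping k steps from step t."""
    f_prev, f_t, target = feature(t - 1), feature(t), feature(t + k)
    # Stand-in for the learned regressor: finite difference of the last two features.
    avg_velocity = [b - a for a, b in zip(f_prev, f_t)]
    return (
        l2_error(cached_prediction(f_t), target),
        l2_error(velocity_prediction(f_t, avg_velocity, k), target),
    )


cache_err, velocity_err = compare(t=5, k=4)
```

In this toy setup the caching error grows with the number of skipped steps, while the velocity-based estimate only pays for the curvature it failed to capture.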

The same day, SenCache attacked a similar problem from the angle of sensitivity analysis: learn which computations matter, not how they evolve. Two independent approaches, both answering "what can we skip," with completely different methodologies.

Key takeaways:

- Feature caching fails because it ignores sampling information; dynamics modeling is a more expressive alternative
- 4x speedup with no quality loss has direct implications for MIGM deployment
- Two parallel efforts on skipping computation in iterative generation signal that this problem is getting concentrated attention

Source: Accelerating Masked Image Generation by Learning Latent Controlled Dynamics

03 Efficiency Multimodal Quantization Needs Separate Repair Paths for Vision and Language

Running VLMs on-device almost always means quantization. An underappreciated problem: image tokens and text tokens have very different numerical distributions. Standard PTQ applies uniform error compensation across all channels, which leaves accuracy on the table.

Quant Experts borrows from MoE architecture. It splits important channels into "globally shared" and "token-dependent" groups. The first group gets a shared low-rank adapter for repair. The second routes dynamically to specialized expert modules based on the specific token. Vision tokens and language tokens each get their own repair path instead of compromising on a shared one.
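The intuition behind token-dependent repair can be shown with a toy example. This sketch is heavily simplified and is not the Quant Experts method: it repairs selected channels exactly instead of using low-rank adapters, it routes on a known token-type label instead of a learned router, and every weight and activation value is hypothetical.

```python
def quantize(w: float, step: float = 0.5) -> float:
    """Round a weight to a uniform grid (toy stand-in for real PTQ)."""
    return round(w / step) * step


def channel_error(weights: list[float], x: list[float]) -> list[float]:
    """Per-channel contribution to the output error for one token."""
    return [abs((w - quantize(w)) * xi) for w, xi in zip(weights, x)]


def top_channels(scores: list[float], k: int) -> set[int]:
    """Indices of the k highest-scoring channels."""
    return set(sorted(range(len(scores)), key=lambda i: -scores[i])[:k])


def residual_error(weights: list[float], x: list[float], repaired: set[int]) -> float:
    """Output error left after exactly repairing the chosen channels."""
    return abs(sum((w - quantize(w)) * xi
                   for i, (w, xi) in enumerate(zip(weights, x))
                   if i not in repaired))


# One output row of a weight matrix and one typical token per modality (all invented).
weights = [0.2, 0.7, 1.2, 1.7]
tokens = {"vision": [4.0, 3.0, 0.1, 0.1], "text": [0.1, 0.1, 3.0, 4.0]}

# Shared strategy: pick channels by error averaged over both token types.
per_type = {name: channel_error(weights, x) for name, x in tokens.items()}
avg_scores = [sum(col) / len(col) for col in zip(*per_type.values())]
shared = top_channels(avg_scores, k=2)

# Routed strategy: each token type gets its own set of repaired channels.
experts = {name: top_channels(scores, k=2) for name, scores in per_type.items()}

shared_err = {n: residual_error(weights, x, shared) for n, x in tokens.items()}
routed_err = {n: residual_error(weights, x, experts[n]) for n, x in tokens.items()}
```

With the same repair budget of two channels per token, the shared choice is a compromise that fits neither modality, while the routed choice covers the channels that actually dominate each token type's error.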

Tested on VLMs from 2B to 70B parameters, the quantized models approach full-precision accuracy. CVPR accepted.

Key takeaways:

- The bottleneck in VLM quantization is cross-modal distribution mismatch, not just the precision-compression tradeoff
- MoE-style dynamic compensation beats static global strategies for heterogeneous inputs
- Validated across 2B to 70B scale, directly applicable to on-device multimodal deployment

04 Multimodal Drop 90% of Vision Tokens and MLLMs Don't Get Worse?

Previous token pruning methods share a common mistake: treating early layers as redundant and pruning there. Early layers actually perform vision-language feature alignment. Pruning them breaks the fusion process.

HiDrop corrects this. Early layers stay untouched. Vision tokens get injected only when actual multimodal fusion begins (Late Injection). Middle layers apply a concave pyramid pruning curve. Deep layers allow early exit. This layered strategy removes roughly 90% of vision tokens with near-flat performance and a 1.72x training speedup.
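The three-stage schedule can be sketched as a per-layer vision-token budget. Everything numeric here is an assumption for illustration: the layer indices, the token count, the final keep ratio, and the power-law shape of the curve are hypothetical, and Late Injection is read as "vision tokens enter the language model at layer `inject_at`".

```python
def vision_tokens_at_layer(
    layer: int,
    n_tokens: int = 576,      # hypothetical number of vision tokens
    inject_at: int = 8,       # hypothetical layer where fusion begins
    exit_at: int = 24,        # hypothetical layer where vision tokens exit
    final_keep: float = 0.1,  # hypothetical keep ratio at the last pruned layer
    power: float = 2.0,       # power > 1 gives a concave keep curve
) -> int:
    """Number of vision tokens processed at a given layer."""
    # Before injection and after early exit, no vision tokens are processed.
    if layer < inject_at or layer >= exit_at:
        return 0
    # Normalized depth inside the pruned span, from 0.0 to 1.0.
    s = (layer - inject_at) / max(exit_at - 1 - inject_at, 1)
    # Concave decay: prune slowly at first, faster toward the exit layer.
    keep = 1.0 - (1.0 - final_keep) * s ** power
    return max(1, round(n_tokens * keep))


schedule = [vision_tokens_at_layer(layer) for layer in range(32)]
```

A schedule like this makes the pruning budget an explicit function of depth, so it can be tuned per model rather than applied uniformly.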

The engineering details matter too: persistent positional encoding and FlashAttention-compatible token selection avoid the hidden overhead that dynamic pruning usually introduces. ICLR accepted.

Key takeaways:

- Early layers do feature alignment and should not be pruned; prior methods got this wrong
- 90% of vision tokens are compressible when pruning strategy matches each layer's actual function
- Directly useful for deploying MLLMs under resource constraints

Today's Observation

"Redundancy" gets thrown around too loosely in efficiency research. Fixed-interval caching assumes every Nth step can be skipped. Uniform pruning assumes all vision tokens are equally disposable. Several papers today independently undercut these assumptions.

SenCache finds that sensitivity varies enormously across diffusion steps; blindly caching certain critical steps causes quality cliffs. MIGM-Shortcut finds that feature evolution is predictable enough for dynamics modeling to beat static snapshots, especially at high acceleration ratios. HiDrop shows that early layers handle feature alignment and should not be pruned, while mid-to-deep redundancy varies nonlinearly with depth.

Three independent results point the same direction: computational redundancy in models is dynamic. It shifts with input content, timestep, and layer depth. Static strategies approximate every specific case with the average case. When models get complex enough, that approximation costs real quality.

If your inference pipeline still uses fixed-interval caching or uniform token dropping, run a profiling pass on your actual production data. Measure sensitivity distributions across steps and layers. You may well find that a small share of the computation drives most of the quality; the familiar 80/20 split is a rule of thumb here, not a measured result. Reallocating saved compute to those critical positions should beat uniform acceleration in most cases.
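A profiling pass like this can be turned into a compute plan with very little code. The sketch below assumes you already have a per-step sensitivity score (for example, the quality drop measured when that step alone is skipped); the numbers are invented, and summing skipped sensitivities is only a rough proxy because real steps interact.

```python
def fixed_interval_plan(n_steps: int, interval: int) -> list[bool]:
    """True means the step is fully computed; every `interval`-th step is kept."""
    return [i % interval == 0 for i in range(n_steps)]


def sensitivity_plan(sensitivity: list[float], budget: int) -> list[bool]:
    """Fully compute the `budget` most sensitive steps and skip the rest."""
    ranked = sorted(range(len(sensitivity)), key=lambda i: -sensitivity[i])
    keep = set(ranked[:budget])
    return [i in keep for i in range(len(sensitivity))]


def skipped_sensitivity(sensitivity: list[float], plan: list[bool]) -> float:
    """Total sensitivity of the skipped steps (rough proxy for quality loss)."""
    return sum(s for s, computed in zip(sensitivity, plan) if not computed)


# Hypothetical profiling result for an 8-step sampler.
profile = [0.9, 0.8, 0.1, 0.05, 0.05, 0.6, 0.05, 0.05]

uniform = fixed_interval_plan(len(profile), interval=2)
adaptive = sensitivity_plan(profile, budget=sum(uniform))

uniform_cost = skipped_sensitivity(profile, uniform)
adaptive_cost = skipped_sensitivity(profile, adaptive)
```

Both plans compute the same number of steps; the only difference is which ones, and that choice is where the profiling data pays off.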