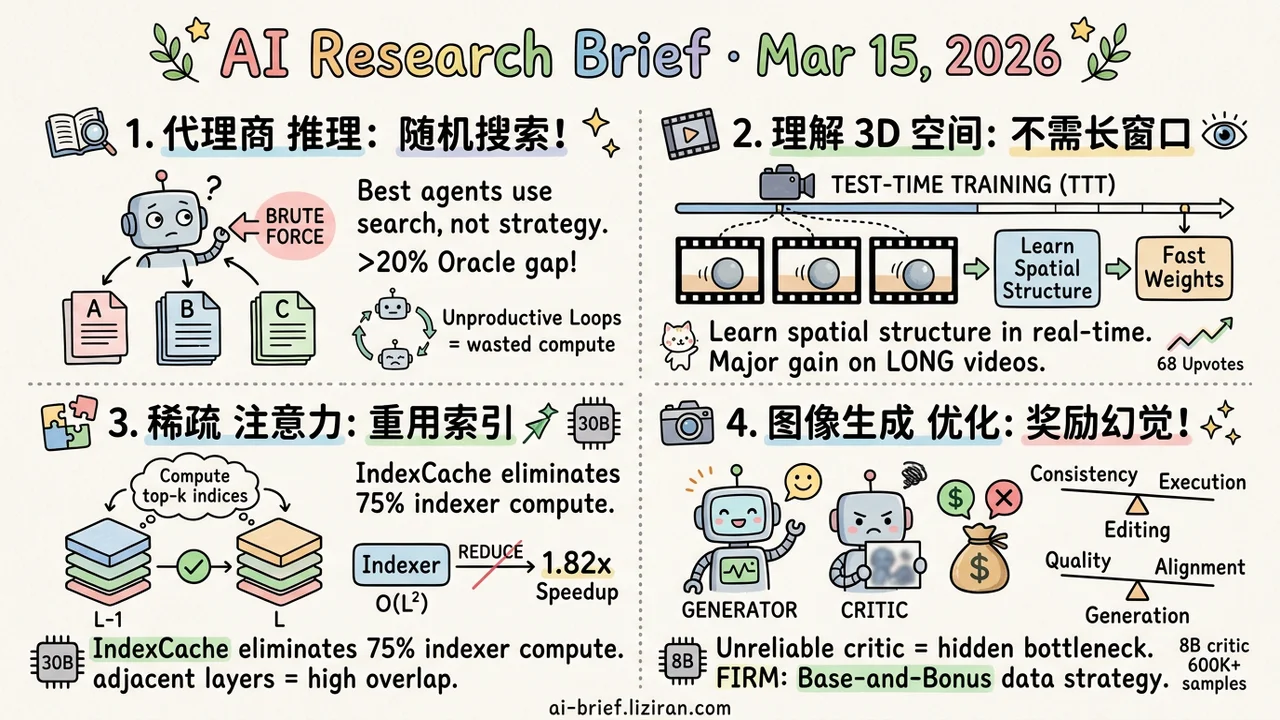

Today's Overview

- Document Agents' Reasoning Is Overestimated. MADQA, a benchmark designed with classical test theory, shows that the best multimodal agents match human accuracy but navigate more like random search than strategic reasoning. A gap of nearly 20% to Oracle performance remains.

- Understanding 3D Space Doesn't Need Longer Context Windows. Spatial-TTT updates model parameters at test time, learning spatial structure on the fly. Major gains on long-video tasks.

- Sparse Attention's Indexer Became the New Bottleneck. IndexCache reuses indices across layers by exploiting high overlap in adjacent-layer attention patterns. 75% indexer compute eliminated. 1.82x prefill speedup on a 30B model with near-zero quality loss.

- Reward Model Hallucinations Are the Hidden Bottleneck in RL-Optimized Image Generation. FIRM trains an 8B critic on 600K+ purpose-built samples using a Base-and-Bonus strategy to prevent single-metric misguidance. Fully open-sourced.

Featured

01 Agent Document Agents Navigate by Luck

Multimodal agents look increasingly capable on document-heavy tasks. A new benchmark offers a more sober diagnosis. MADQA contains 2,250 questions built from 800 heterogeneous PDFs, with items designed using classical test theory (CTT) to maximize discrimination between agent capabilities.

The best agents match human searchers on accuracy, but the two groups answer different questions correctly. Agents compensate for weak strategic planning with brute-force retrieval. The harder number: agents still trail Oracle performance by nearly 20%, and they repeatedly fall into unproductive loops, burning compute without extracting new information.

For teams building agent products, this is a product risk signal. Your agent may look smart in demos, but its "reasoning" over real document workflows could be a random walk through the search space. The benchmark and evaluation tools are open-sourced, so it is worth running them against your own system to check whether it's navigating or guessing.
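One cheap way to start checking for the unproductive loops described above is to measure how often an agent repeats itself in its own logs. The sketch below is not MADQA's evaluation tooling; the trace format (a list of `(action, target)` pairs) and both function names are assumptions made for illustration.

```python
def redundancy_rate(trace):
    """Fraction of steps that repeat an earlier (action, target) pair."""
    seen = set()
    repeats = 0
    for step in trace:
        if step in seen:
            repeats += 1
        seen.add(step)
    return repeats / len(trace) if trace else 0.0


def longest_repeat_run(trace):
    """Length of the longest streak of consecutive repeated steps."""
    seen = set()
    longest = current = 0
    for step in trace:
        if step in seen:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
        seen.add(step)
    return longest


# Hypothetical trace: the agent re-issues a query and re-opens a page.
trace = [
    ("search", "invoice total 2021"),
    ("open", "a.pdf#3"),
    ("search", "invoice total 2021"),
    ("open", "a.pdf#3"),
    ("open", "b.pdf#1"),
]
print(redundancy_rate(trace))     # 0.4
print(longest_repeat_run(trace))  # 2
```

A high redundancy rate alongside acceptable accuracy would be consistent with the brute-force pattern the benchmark reports, though it is only a rough proxy.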

Key takeaways:

- Top multimodal agents match human accuracy but rely on brute-force search, not strategic reasoning
- The ~20% gap to Oracle exposes a structural weakness in document navigation
- Teams building agent products should distinguish "can produce an answer" from "can reason efficiently"

Source: Strategic Navigation or Stochastic Search? How Agents and Humans Reason Over Document Collections

02 Multimodal 3D Spatial Understanding Through Test-Time Weight Updates

Long videos with spatial information seem to demand longer context windows. Spatial-TTT takes a different path: the model continuously updates a subset of its parameters ("fast weights") via test-time training, learning the current scene's spatial structure as it watches each frame. A 3D spatiotemporal convolution drives spatial prediction, guiding the model to actively capture geometric correspondences between frames rather than passively memorizing more of them.
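The fast-weight idea can be illustrated with a deliberately tiny stand-in. The sketch below is not Spatial-TTT's architecture: it swaps the 3D spatiotemporal convolution for a single linear map and assumes a next-frame feature prediction loss, purely to show what "updating parameters while watching" means. All names (`ttt_step`, `stream`, `rotate`) are hypothetical.

```python
def matvec(W, x):
    """Multiply matrix W (list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def ttt_step(W, x, target, lr):
    """One gradient step on 0.5 * ||W x - target||^2 with respect to W."""
    err = [p - t for p, t in zip(matvec(W, x), target)]
    loss = 0.5 * sum(e * e for e in err)
    W_new = [[w - lr * e * xi for w, xi in zip(row, x)]
             for row, e in zip(W, err)]
    return W_new, loss


def stream(frames, dim, lr):
    """Update fast weights once per incoming frame; no frame is stored."""
    W = [[0.0] * dim for _ in range(dim)]  # fast weights reset per video
    losses = []
    for current, nxt in zip(frames, frames[1:]):
        W, loss = ttt_step(W, current, nxt, lr)
        losses.append(loss)
    return W, losses


def rotate(v):
    """90-degree 2D rotation, standing in for steady camera motion."""
    return [-v[1], v[0]]


# Toy "video": each frame feature is the previous one rotated.
frames = [[1.0, 0.0]]
for _ in range(8):
    frames.append(rotate(frames[-1]))

fast_weights, losses = stream(frames, dim=2, lr=0.5)
print(losses[0], losses[-1])  # prediction error shrinks as the scene is learned
```

The point of the toy is that memory cost stays constant in the number of frames: the scene's structure lives in `fast_weights`, not in a growing context.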

The approach reaches state-of-the-art results on video spatial understanding benchmarks, with especially strong gains on longer videos. Its 68 upvotes on Hugging Face suggest the community senses a new direction here. The core insight: spatial information isn't about remembering more frames. It's about selectively retaining and updating what matters.

Key takeaways:

- Test-time parameter updates replace context window expansion as a more fundamental solution for long-video spatial understanding
- 3D spatiotemporal convolutions guide active learning of geometric correspondences, not passive frame memorization
- Code is available; teams working on embodied AI or video understanding should evaluate this approach

Source: Spatial-TTT: Streaming Visual-based Spatial Intelligence with Test-Time Training

03 Efficiency Who Optimizes the Optimizer Inside Sparse Attention?

DeepSeek Sparse Attention (DSA) uses a lightweight indexer to select top-k tokens, reducing core attention from O(L²) to O(Lk). The indexer itself still runs at O(L²), and it runs independently at every layer.

IndexCache's observation is straightforward: adjacent layers select highly overlapping top-k tokens, so there is no need to recompute the selection at every layer. The fix splits layers into a few "full layers" (which run their own indexer) and many "shared layers" (which reuse a nearby full layer's indices). Both training-free and training-aware configurations are available. On a 30B DSA model, this eliminates 75% of indexer compute, yielding a 1.82x prefill speedup and a 1.48x decode speedup with near-zero quality degradation. Early experiments on the GLM-5 production model confirm the findings.
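The full/shared split can be sketched in a few lines. This is a minimal illustration, not the IndexCache implementation: the `indexer(layer)` callable, the rule "every fourth layer is full", and the choice to reuse the nearest preceding full layer are all assumptions, picked so that the toy count lands on the 75% figure quoted above. How the paper actually places its full layers is not specified here.

```python
import heapq


def top_k(scores, k):
    """Indices of the k largest scores, returned in ascending order."""
    return sorted(heapq.nlargest(k, range(len(scores)), key=scores.__getitem__))


def select_per_layer(indexer, num_layers, k, full_every):
    """Pick top-k token indices per layer, reusing them on shared layers.

    `indexer(layer)` is a hypothetical callable returning one relevance
    score per token; it is invoked only on full layers.
    """
    selected, runs, cached = [], 0, None
    for layer in range(num_layers):
        if layer % full_every == 0:  # full layer: run its own indexer
            cached = top_k(indexer(layer), k)
            runs += 1
        selected.append(cached)      # shared layers receive the cached indices
    return selected, runs


def overlap(a, b):
    """Share of indices in `a` that also appear in `b`."""
    return len(set(a) & set(b)) / len(a)


# Toy indexer: scores drift slightly with depth, so adjacent layers agree.
def toy_indexer(layer):
    base = [0.9, 0.1, 0.8, 0.2, 0.7, 0.3, 0.6, 0.4]
    return [s + 0.01 * layer * (i % 2) for i, s in enumerate(base)]


selected, runs = select_per_layer(toy_indexer, num_layers=8, k=3, full_every=4)
saved = 1 - runs / 8
print(runs, saved)  # 2 0.75
```

Before adopting a schedule like this, the `overlap` helper can be run on real per-layer selections to confirm that adjacent layers agree as much as the paper reports for its models.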

Key takeaways:

- The indexer inside sparse attention has become the new bottleneck; cross-layer index reuse is a low-cost fix
- Pure engineering optimization, no architecture changes, already validated on production-scale models
- The next round of long-context serving cost reduction lies in attention's auxiliary computations

Source: IndexCache: Accelerating Sparse Attention via Cross-Layer Index Reuse

04 Image Gen When the Reward Model Hallucinates, Harder Optimization Makes Things Worse

RL-based image generation optimization has an underappreciated failure mode: the reward model acting as judge produces hallucinated scores. The generator isn't lacking capability. It's being steered by bad signal.

FIRM attacks this from the data layer up. The team designed separate data construction pipelines for image editing and text-to-image, collecting 600K+ high-quality scoring samples to train a dedicated 8B critic. The key design is a "Base-and-Bonus" reward strategy: editing tasks use consistency to modulate execution scores; generation tasks use quality to modulate alignment scores. This prevents any single metric from hijacking the optimization direction. All data, models, and code are open-sourced.
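To see why modulating one score by another blocks single-metric exploitation, consider a toy comparison. The formula below is an illustrative assumption, not FIRM's published reward: it treats the modulated score (execution for editing) as the base and the modulating score (consistency) as a bonus that counts only in proportion to that base. The function names and the `bonus_weight` parameter are hypothetical.

```python
def base_and_bonus(base, bonus, bonus_weight=1.0):
    """Reward in which the bonus only counts in proportion to the base.

    Both inputs are assumed to lie in [0, 1]. The multiplicative form is an
    illustrative assumption, not FIRM's published formula.
    """
    return base * (1.0 + bonus_weight * bonus)


def plain_average(a, b):
    """Naive baseline: either metric can carry the reward on its own."""
    return (a + b) / 2.0


# Degenerate edit: the image is returned unchanged, so consistency is
# perfect but the instruction was not executed at all.
execution, consistency = 0.0, 1.0
print(plain_average(execution, consistency))   # 0.5
print(base_and_bonus(execution, consistency))  # 0.0
```

Under the plain average, a generator can collect half the reward by doing nothing; under the modulated form, that shortcut pays zero.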

Key takeaways:

- Reward model hallucination is the hidden bottleneck in RL-optimized image generation; an unreliable critic is more damaging than a weak generator
- 600K+ purpose-built scoring dataset and 8B critic model are open-sourced, ready for RL training pipelines
- Where TDM-R1 addresses non-differentiable rewards, FIRM addresses unreliable reward signals; the two problems are complementary

Today's Observation

MADQA evaluates document agents. FIRM trains image generation models. Two seemingly unrelated directions, same blind spot exposed: the component responsible for "judgment" inside the system is itself unreliable.

MADQA used classical test theory to build a high-discrimination benchmark and found that agents navigate document collections more like random walkers. The agent's internal planner produces plausible-looking retrieval decisions, but the outcomes barely beat random search. The problem isn't execution. It's planning. FIRM found the mirror image: when RL optimizes image generation, the reward model hallucinates scores, and the optimizer faithfully marches toward noise. More effort, worse results. The problem isn't the generator. It's the judge.

Both cases point to the same thing: the critic components inside compound systems have never been independently validated.

If you're building compound AI systems, here's one concrete step. List every component in your pipeline that plays a "judge" role: planners, scorers, routers, validators. Run adversarial tests on each one independently. If its judgment accuracy can't support the decision weight you've assigned to it, either replace it or architecturally reduce the system's dependence on it.
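A minimal sketch of such an audit, assuming each judge can be called in isolation and that you have a small set of labeled adversarial cases per judge. The judges, cases, and thresholds below are all hypothetical placeholders.

```python
def audit_judges(judges, cases, required_accuracy):
    """Score each judge in isolation against labeled adversarial cases."""
    report = {}
    for name, judge in judges.items():
        labeled = cases[name]
        correct = sum(judge(x) == expected for x, expected in labeled)
        accuracy = correct / len(labeled)
        report[name] = (accuracy, accuracy >= required_accuracy[name])
    return report


# Hypothetical judges: a scorer that prefers longer answers, a keyword router.
judges = {
    "scorer": lambda pair: "a" if len(pair[0]) >= len(pair[1]) else "b",
    "router": lambda query: "docs" if "pdf" in query.lower() else "web",
}
# Adversarial cases target each judge's likely shortcut (verbosity, keywords).
cases = {
    "scorer": [
        (("Paris", "It might perhaps be Lyon, or somewhere else entirely"), "a"),
        (("42", "The answer is hard to say without more context"), "a"),
        (("The total is 17, per page 3", "17?"), "a"),
        (("Unknown", "Revenue was 4.2M in the 2021 annual report"), "b"),
    ],
    "router": [
        ("Summarize the attached PDF", "docs"),
        ("Latest news on GPUs", "web"),
    ],
}
# Thresholds encode how much decision weight each judge carries.
required_accuracy = {"scorer": 0.9, "router": 0.8}

report = audit_judges(judges, cases, required_accuracy)
print(report["scorer"])  # (0.5, False)
print(report["router"])  # (1.0, True)
```

Here the verbosity-biased scorer fails its threshold and would be a candidate for replacement or for reduced decision weight, while the router passes on this small sample.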