Today's Overview

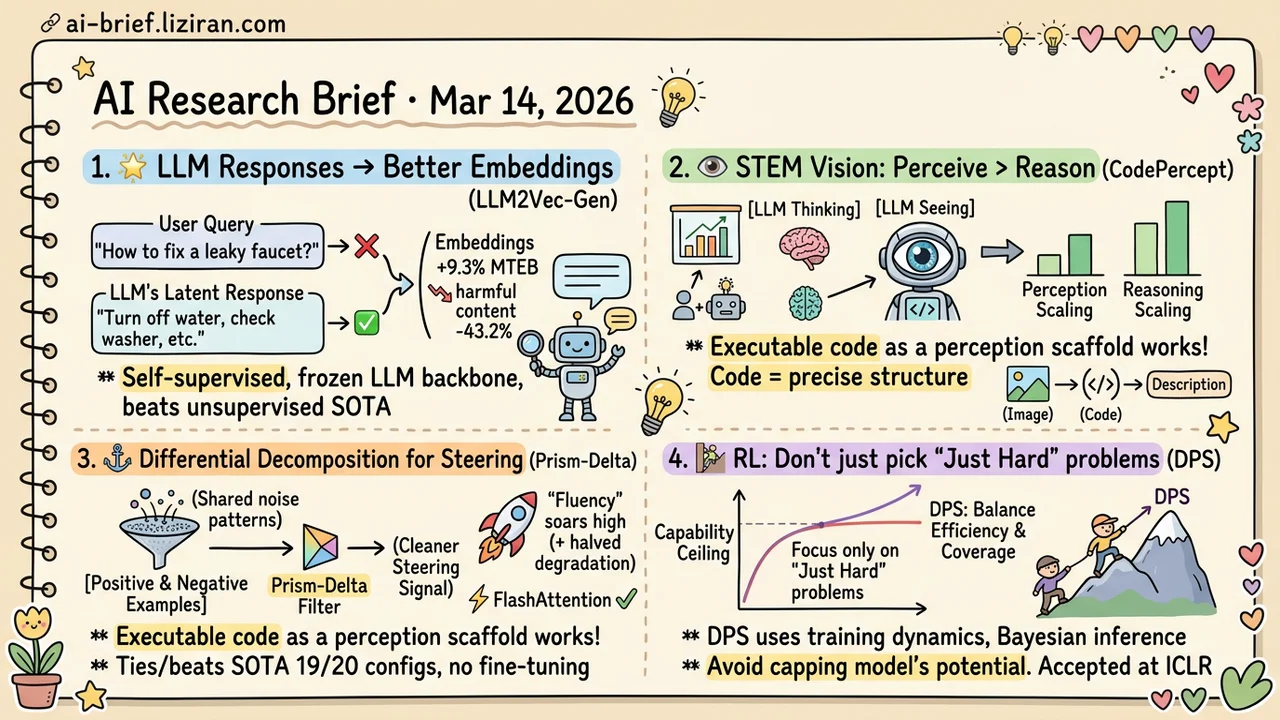

- Encoding LLM Responses Instead of User Queries Lifts MTEB Scores by 9.3%. LLM2Vec-Gen uses purely self-supervised training to beat the best unsupervised method on MTEB by that margin. Safety alignment transfers into embedding space as a bonus.

- The Real Bottleneck in STEM Visual Reasoning Is Perception, Not Reasoning. CodePercept's ablations show that scaling the perception component consistently outperforms scaling reasoning. Executable code as a perception scaffold works remarkably well.

- Differential Decomposition of Cross-Covariance Matrices for Attention Steering. Prism-Delta ties or beats SOTA on 19 of 20 evaluation configs, halves fluency degradation, and works with FlashAttention out of the box.

- Selecting Only "Just Hard Enough" Problems Caps Your RL Model's Ceiling. DPS uses training dynamics to balance efficiency and coverage. Validated across math, planning, and visual geometry tasks. Accepted at ICLR.

Featured

01 Retrieval: Encode the Answer, Not the Question

The core challenge of text embedding is mapping wildly varied inputs to a coherent vector space. LLM2Vec-Gen flips the usual approach: instead of encoding what the user asked, encode how the model would answer. Different phrasings of the same question produce different input embeddings, but a good LLM's answers to those phrasings converge.

The method appends a few trainable special tokens to the input, optimizing them to represent the LLM's latent response. An unsupervised embedding teacher provides distillation targets. The LLM backbone stays completely frozen. No paired data. Purely self-supervised. On MTEB it beats the best unsupervised method by 9.3%, while harmful content retrieval drops 43.2% — the LLM's safety alignment carries over into embedding space. The generated embeddings can also be decoded back to text, so you can inspect what the model is actually representing. Traditional contrastive embeddings can't do that.
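To make the training setup concrete, here is a toy sketch of its shape: a frozen backbone, a few trainable special-token vectors appended to the input, and a loss that pulls the resulting embedding toward a teacher's embedding. Everything here is a hypothetical stand-in, not the paper's architecture: the "backbone" is a fixed linear map `W` over mean-pooled token vectors, `teacher_target` plays the role of the unsupervised teacher's embedding of the LLM's response, and the loss is plain squared error with hand-derived gradients.

```python
import random

DIM = 4  # toy hidden size (assumption)

def matvec(W, v):
    return [sum(w * x for w, x in zip(row, v)) for row in W]

def mean_pool(vectors):
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]

def frozen_backbone(W, token_vectors):
    """Hypothetical stand-in for the frozen LLM: mean-pool the sequence, then apply a fixed map W."""
    return matvec(W, mean_pool(token_vectors))

def squared_error(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_special_tokens(W, input_tokens, teacher_target, num_special=2, lr=0.5, steps=200, seed=0):
    """Optimize only the appended special tokens; W and the input tokens are never modified."""
    rng = random.Random(seed)
    special = [[rng.uniform(-0.1, 0.1) for _ in range(DIM)] for _ in range(num_special)]
    n_total = len(input_tokens) + num_special
    losses = []
    for _ in range(steps):
        emb = frozen_backbone(W, input_tokens + special)
        losses.append(squared_error(emb, teacher_target))
        # emb = W @ mean(tokens), so dL/d(special_j) = (2 / n_total) * W^T (emb - target),
        # which is the same for every special token.
        residual = [e - t for e, t in zip(emb, teacher_target)]
        grad = [2.0 / n_total * sum(W[r][c] * residual[r] for r in range(DIM)) for c in range(DIM)]
        for tok in special:
            for c in range(DIM):
                tok[c] -= lr * grad[c]
    return special, losses

W = [[1.0, 0.2, 0.0, 0.0], [0.0, 1.0, 0.2, 0.0], [0.0, 0.0, 1.0, 0.2], [0.2, 0.0, 0.0, 1.0]]
query_tokens = [[0.5, -0.1, 0.3, 0.0], [0.1, 0.4, -0.2, 0.2], [0.0, 0.0, 0.1, -0.3]]
teacher_target = [0.8, -0.5, 0.2, 0.6]  # hypothetical teacher embedding of the LLM's answer
special, losses = train_special_tokens(W, query_tokens, teacher_target)
print(f"distillation loss {losses[0]:.4f} -> {losses[-1]:.2e}")
```

The point of the toy is the parameter split: the loss falls only through the special tokens, while `W` (the "backbone") ends training exactly as it started.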

Key takeaways:
- Encoding "how the model would respond" instead of "what the user asked" naturally bridges the gap between input diversity and output consistency
- Purely self-supervised training eliminates the need for paired data, drastically lowering the barrier to training embedding models
- Teams doing RAG or semantic search should pay attention: building contrastive training sets may no longer be necessary

Source: LLM2Vec-Gen: Generative Embeddings from Large Language Models

02 Multimodal: STEM Visual Reasoning Fails at Seeing, Not Thinking

Intuition says models struggle with STEM visual problems because they can't reason well enough. CodePercept ran systematic ablations and found the opposite: independently scaling the perception component consistently outperforms scaling the reasoning component. The model isn't failing to think. It's failing to see.

Their fix is elegant: have the model generate executable code to parse visual information. Code's precise semantics replace natural language's vague descriptions, giving perception a structured scaffold. The team built a dataset of 1M image-description-code triplets to train this capability. They also designed a new benchmark that requires generating code to reconstruct the original image — a far more honest test of perception than answering multiple-choice questions.
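Why a reconstruction test is more honest can be shown with a toy: if perception is expressed as executable drawing code, that code can be rendered and scored pixel by pixel against the original, whereas a caption like "two boxes" cannot. This is a hypothetical sketch, not CodePercept's benchmark: the rectangle-only drawing vocabulary, the 8×8 binary grid, and pixel IoU as the score are assumptions chosen for illustration.

```python
from dataclasses import dataclass

GRID = 8  # toy canvas size (assumption)

@dataclass(frozen=True)
class Rect:
    """One primitive in a hypothetical drawing 'program'."""
    x: int
    y: int
    w: int
    h: int

def render(shapes, size=GRID):
    """Execute a list of primitives onto a binary grid (True = ink)."""
    canvas = [[False] * size for _ in range(size)]
    for s in shapes:
        for row in range(max(0, s.y), min(size, s.y + s.h)):
            for col in range(max(0, s.x), min(size, s.x + s.w)):
                canvas[row][col] = True
    return canvas

def pixel_iou(a, b):
    """Intersection-over-union of inked pixels; two empty canvases count as a perfect match."""
    inter = sum(pa and pb for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))
    union = sum(pa or pb for ra, rb in zip(a, b) for pa, pb in zip(ra, rb))
    return 1.0 if union == 0 else inter / union

original = render([Rect(1, 1, 3, 2), Rect(5, 4, 2, 3)])
exact = [Rect(1, 1, 3, 2), Rect(5, 4, 2, 3)]  # perception recovered every element
missed = [Rect(1, 1, 3, 2)]                   # perception dropped the second shape
print(pixel_iou(original, render(exact)))   # 1.0
print(pixel_iou(original, render(missed)))  # 0.5
```

Each rectangle covers 6 pixels and they do not overlap, so dropping one halves the score; a multiple-choice question about the same image might not notice the omission at all.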

Key takeaways:
- The real bottleneck in STEM visual reasoning is perception, not reasoning; scaling perception yields consistently higher returns
- Executable code as the perception medium naturally fits structured STEM diagrams and charts
- The reconstruction benchmark provides a more reliable perception evaluation than standard question-answering

Source: CodePercept: Code-Grounded Visual STEM Perception for MLLMs

03 Interpretability: Steering Attention Without Fine-Tuning

Attention steering methods typically extract important directions from positive examples. The problem: structural patterns shared between positive and negative examples get extracted too. That's the root of the noisy signal.

Prism-Delta decomposes the difference between positive and negative cross-covariance matrices, keeping only the most discriminative subspace directions and discarding shared components. Each attention head gets a continuous importance weight, so weak-but-useful heads participate at reduced intensity. The method extends to Value representations, capturing content-channel signals that Key-based approaches miss. Results: ties or beats SOTA on 19 of 20 evaluation configs, halves fluency degradation from steering, and adds 4.8% on long-context retrieval. No fine-tuning required. Compatible with FlashAttention. Nearly zero extra memory. A practical inference-time control tool for long-document scenarios.
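The differential step can be sketched in a few lines: compute a cross-covariance matrix for positive and for negative examples, subtract, and keep the top singular direction of the difference, so that structure present in both sets cancels. This is an illustrative sketch under stated assumptions, not Prism-Δ's implementation: the paired 3-d vectors stand in for whatever attention representations the paper pairs, only the single top direction is found (by power iteration on Δᵀ Δ from a fixed start vector), and `head_weights` (each head's top singular value divided by the largest) is a hypothetical stand-in for the paper's continuous head weights.

```python
import math

def cross_cov(pairs):
    """Centered cross-covariance (1/n) * sum (x - mean_x)(y - mean_y)^T over paired vectors."""
    n = len(pairs)
    dx, dy = len(pairs[0][0]), len(pairs[0][1])
    mx = [sum(x[i] for x, _ in pairs) / n for i in range(dx)]
    my = [sum(y[j] for _, y in pairs) / n for j in range(dy)]
    return [[sum((x[i] - mx[i]) * (y[j] - my[j]) for x, y in pairs) / n for j in range(dy)]
            for i in range(dx)]

def mat_sub(a, b):
    return [[p - q for p, q in zip(ra, rb)] for ra, rb in zip(a, b)]

def top_singular(m, iters=100):
    """Top singular triple (sigma, u, v) of m via power iteration on m^T m (fixed uniform start)."""
    rows, cols = len(m), len(m[0])
    v = [1.0 / math.sqrt(cols)] * cols
    for _ in range(iters):
        mv = [sum(m[r][c] * v[c] for c in range(cols)) for r in range(rows)]     # m v
        mtmv = [sum(m[r][c] * mv[r] for r in range(rows)) for c in range(cols)]  # m^T m v
        norm = math.sqrt(sum(t * t for t in mtmv))
        if norm == 0.0:
            return 0.0, [0.0] * rows, v
        v = [t / norm for t in mtmv]
    mv = [sum(m[r][c] * v[c] for c in range(cols)) for r in range(rows)]
    sigma = math.sqrt(sum(t * t for t in mv))
    u = [t / sigma for t in mv] if sigma > 0 else [0.0] * rows
    return sigma, u, v

def head_weights(deltas):
    """Hypothetical continuous weights: top singular value per head, scaled so the strongest is 1."""
    sigmas = [top_singular(d)[0] for d in deltas]
    top = max(sigmas)
    return [s / top if top > 0 else 0.0 for s in sigmas]

# Toy (x, y) pairs. Direction 0 has large variance in BOTH sets (shared structure);
# direction 1 varies only in the positive set (the discriminative signal).
pos = [([3, 1, 0], [3, 2, 0]), ([-3, -1, 0], [-3, -2, 0]),
       ([3, -1, 0], [3, -2, 0]), ([-3, 1, 0], [-3, 2, 0])]
neg = [([3, 0, 0], [3, 0, 0]), ([-3, 0, 0], [-3, 0, 0])]

_, _, v_pos_only = top_singular(cross_cov(pos))
_, _, v_delta = top_singular(mat_sub(cross_cov(pos), cross_cov(neg)))
print([round(t, 3) for t in v_pos_only])  # dominated by the shared direction 0
print([round(t, 3) for t in v_delta])     # isolates the discriminative direction 1
```

Positive-only extraction latches onto direction 0 because it carries the most variance, even though the negatives have it too; the difference matrix removes it entirely in this toy.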

Key takeaways:
- Differential decomposition of cross-covariance matrices strips out shared directions, producing cleaner steering signals
- Fluency degradation drops by half, so steering strength and output readability are much less of a tradeoff
- FlashAttention-compatible and needs no fine-tuning, making it plug-and-play for long-context RAG pipelines

Source: Prism-Δ: Differential Subspace Steering for Prompt Highlighting in Large Language Models

04 Training: Only Training on "Just Hard Enough" Problems Has a Hidden Cost

Online data selection for RL fine-tuning of reasoning models has a subtle blind spot. Current strategies concentrate compute on problems the model can barely solve — these produce the strongest gradient signal and fastest learning. The tradeoff: problems the model can't solve at all get systematically skipped. Short-term efficiency goes up. Long-term capability ceiling goes down.
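Why unsolvable problems drop out can be seen under one common setup, which is an assumption here rather than a detail from the paper: binary rewards with group-relative advantages as in GRPO-style training. If every sampled rollout for a problem fails (or every one succeeds), all advantages are zero and the problem contributes no gradient. The Bernoulli reward variance p(1 - p) is a simple proxy for how much signal a problem carries; filtering on it (threshold chosen arbitrarily here) reproduces the blind spot.

```python
def group_advantages(rewards):
    """Group-relative advantages: reward minus the group mean (std normalization omitted)."""
    mean = sum(rewards) / len(rewards)
    return [r - mean for r in rewards]

def signal_proxy(pass_rate):
    """Variance of a Bernoulli reward: peaks at p = 0.5, zero at p = 0 and p = 1."""
    return pass_rate * (1.0 - pass_rate)

def select_just_hard_enough(pass_rates, threshold=0.05):
    """Heuristic filter: keep problems whose estimated reward variance exceeds a threshold."""
    return [pid for pid, p in pass_rates.items() if signal_proxy(p) > threshold]

# Hypothetical per-problem pass rates estimated from sampled rollouts.
pass_rates = {"easy": 1.0, "medium": 0.5, "hard_but_learnable": 0.125, "currently_unsolved": 0.0}
print(group_advantages([0, 0, 0, 0]))       # [0.0, 0.0, 0.0, 0.0]: all rollouts fail, no signal
print(select_just_hard_enough(pass_rates))  # ['medium', 'hard_but_learnable']
```

The filter is efficient in the short run, but "currently_unsolved" is never trained on, so its pass rate has no way to rise and it stays excluded.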

DPS (Dynamics-Predictive Sampling) models each problem's solving progress as a dynamical system. A hidden Markov model tracks how the model's "solving state" evolves per problem. Bayesian inference then predicts which problems deserve compute, without needing a full rollout to decide. This keeps medium-difficulty problems in efficient use while also keeping problems that are unsolved today but close to being solved in the mix. Accepted at ICLR. Validated across math, planning, and visual geometry tasks.
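A minimal sketch of the "solving state as a dynamical system" idea: a three-state hidden Markov model per problem (unsolved, learning, solved), updated with the forward algorithm from each step's observed successes, then used to predict which problems will be in the informative "learning" state next step, without sampling new rollouts. All specifics are hypothetical: the state set, the transition matrix `T`, the Binomial emission model with per-state success rates in `SUCCESS_P`, and the ranking rule are illustrative choices, not the parameters or inference procedure DPS uses.

```python
from math import comb

STATES = ("unsolved", "learning", "solved")
# Hypothetical transitions T[i][j] = P(next state j | current state i); progress only moves forward.
T = [
    [0.80, 0.20, 0.00],
    [0.00, 0.70, 0.30],
    [0.00, 0.00, 1.00],
]
# Hypothetical single-rollout success probability in each hidden state.
SUCCESS_P = (0.02, 0.50, 0.98)

def emission(successes, rollouts, state):
    """Binomial likelihood of the observed successes given the hidden state."""
    p = SUCCESS_P[state]
    return comb(rollouts, successes) * p ** successes * (1 - p) ** (rollouts - successes)

def predict(belief):
    """One-step prediction of the hidden state: belief @ T."""
    return [sum(belief[i] * T[i][j] for i in range(3)) for j in range(3)]

def update(belief, successes, rollouts):
    """Forward-algorithm step: predict, weight by the observation likelihood, renormalize."""
    prior = predict(belief)
    post = [prior[s] * emission(successes, rollouts, s) for s in range(3)]
    z = sum(post)
    return [x / z for x in post]

def select(beliefs, k):
    """Rank problems by predicted probability of being in the 'learning' state next step."""
    scores = {pid: predict(b)[1] for pid, b in beliefs.items()}
    return sorted(scores, key=scores.get, reverse=True)[:k]

# Hypothetical observation histories: (successes, rollouts) per training step.
history = {
    "mastered": [(8, 8), (8, 8), (8, 8)],
    "stuck": [(0, 8), (0, 8), (0, 8)],
    "on_the_verge": [(0, 8), (1, 8), (2, 8)],
}
beliefs = {}
for pid, obs in history.items():
    b = [1.0, 0.0, 0.0]  # assumption: every problem starts in the unsolved state
    for s, n in obs:
        b = update(b, s, n)
    beliefs[pid] = b
print(select(beliefs, k=3))
```

Note that "stuck" still gets a nonzero score because the transition model allows it to start moving, which is the kind of coverage a pure pass-rate filter loses.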

Key takeaways:
- There's a hidden tradeoff between sampling efficiency and capability coverage in online data selection; picking only "just hard enough" problems caps the model's ceiling
- Training dynamics prediction replaces rollout-based filtering, dramatically reducing the compute cost of data selection itself
- Teams doing RL fine-tuning for reasoning models should consider this approach to data selection

Source: Dynamics-Predictive Sampling for Active RL Finetuning of Large Reasoning Models


Today's Observation

Three papers today independently hit the same engineering lesson. CodePercept found that STEM visual reasoning bottlenecks at perception, not reasoning — scaling the perception component yields consistently higher returns. LLM2Vec-Gen found that embeddings should encode the model's latent response, not the input itself. DPS found that optimizing sampling efficiency in RL data selection sacrifices coverage and caps the model's ceiling.

Three different fields. Same pattern: the assumed bottleneck isn't the actual bottleneck.

All three teams started with systematic ablations to verify their bottleneck hypothesis rather than pouring resources into the intuitive direction. If you're working on performance optimization, consider spending a day on controlled experiments: swap the component you believe is the bottleneck for an oracle that returns ground-truth output for that stage, keep everything else fixed, then measure the system-level impact. If the improvement is small, the real bottleneck is somewhere else.
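The oracle-swap experiment fits in a small harness: run the pipeline as-is, then once per stage with that stage replaced by an oracle, and compare end-to-end accuracy. This is a toy with made-up names and data (`perceive`, `reason`, `DATA`, and the "read a value, then double it" task are all hypothetical); in a real system the oracle is whatever ground truth you have for that stage's output, such as annotated values for a perception stage.

```python
from typing import Dict, List, Tuple

# Hypothetical task: read a value off a "diagram" (here just a string), then double it.
# Each example: (raw input, ground-truth perceived value, ground-truth final answer).
DATA: List[Tuple[str, int, int]] = [
    ("bar=3", 3, 6), ("bar=12", 12, 24), ("bar=15", 15, 30), ("bar=7", 7, 14),
]

def perceive(raw: str) -> int:
    """Toy perception stage with a systematic error: it keeps only the first digit."""
    return int(raw.split("=")[1][0])

def reason(value: int) -> int:
    """Toy reasoning stage with a rare error on one input."""
    return 15 if value == 7 else 2 * value

def accuracy(oracle_perception: bool, oracle_reasoning: bool) -> float:
    correct = 0
    for raw, true_value, true_answer in DATA:
        value = true_value if oracle_perception else perceive(raw)
        # The oracle reasoner answers correctly for whatever value it is handed.
        answer = 2 * value if oracle_reasoning else reason(value)
        correct += answer == true_answer
    return correct / len(DATA)

def oracle_gains() -> Dict[str, float]:
    """End-to-end gain from swapping one stage at a time for an oracle."""
    base = accuracy(False, False)
    return {
        "perception": accuracy(True, False) - base,
        "reasoning": accuracy(False, True) - base,
    }

print(oracle_gains())  # {'perception': 0.5, 'reasoning': 0.25}
```

Swapping in oracle perception lifts accuracy from 0.25 to 0.75, while oracle reasoning only reaches 0.5, so in this toy the intervention budget belongs on perception.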