Today's Overview

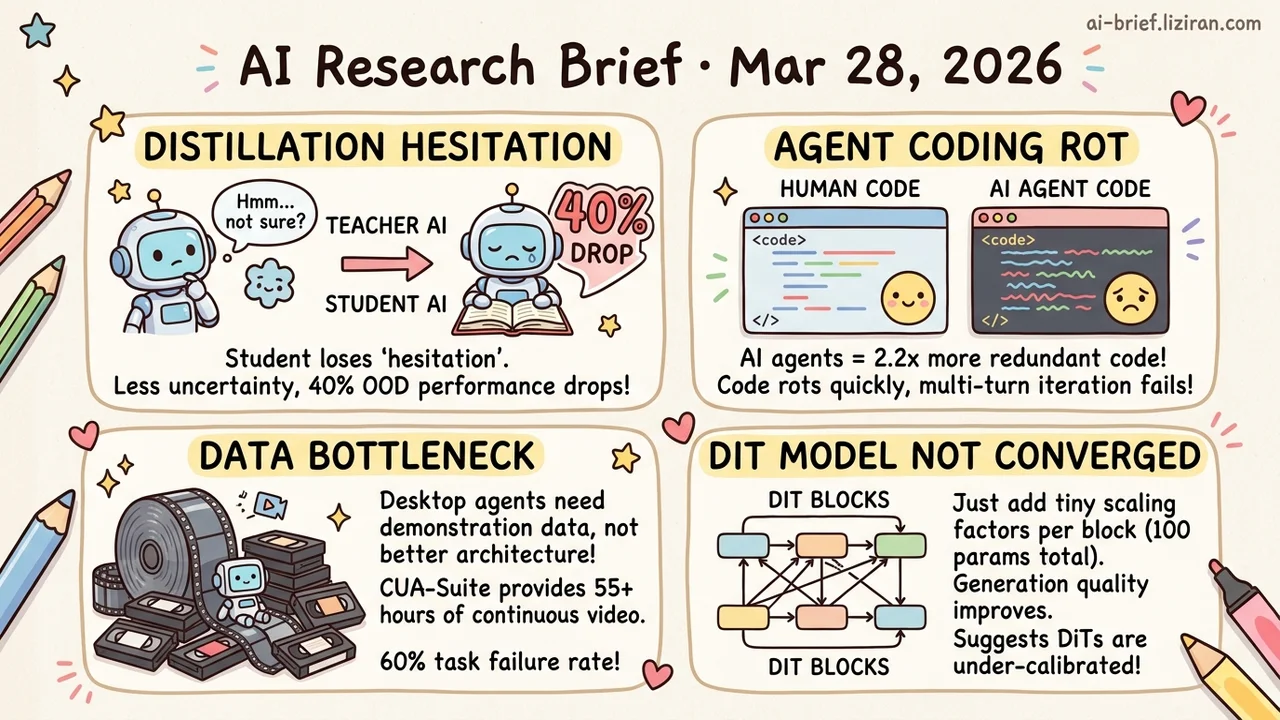

- Self-distillation strips out the model's ability to hesitate, not redundant steps. Once epistemic verbalization is suppressed, out-of-distribution (OOD) performance drops by up to 40%, and standard in-domain metrics won't catch it.

- Coding agents produce 2.2x more redundancy than human projects. SlopCodeBench is the first benchmark to quantify tech debt across multi-turn iterations: all 11 models failed every task end-to-end, and prompt tuning doesn't fix the root cause.

- The bottleneck for desktop agents is demonstration data, not model architecture. CUA-Suite pushes continuous human demo footage from under 20 hours to 55 hours. The best current model still fails about 60% of tasks.

- Trained DiT models haven't actually converged. Adding one scaling coefficient per block (roughly 100 parameters total) improves generation quality, suggesting current training pipelines are systematically under-calibrated.

Featured

01 Reasoning Shorter Chains, Smarter Models? The Opposite

When self-distillation shortens reasoning chains, you'd expect it to trim redundancy. This paper finds it removes something else: epistemic verbalization. That's the model's habit of saying "I'm not sure" or "let me try another approach" mid-reasoning. These hesitations look wasteful but actually help the model handle unfamiliar problems.

When the teacher model receives rich context, it stops expressing uncertainty. The distilled student inherits that blind confidence. In-domain performance improves quickly, but on out-of-distribution inputs, performance collapses by up to 40%. This pattern held across Qwen3-8B, DeepSeek-Distill-Qwen-7B, and Olmo3-7B-Instruct. Not a one-off.

The model didn't get dumber. It lost the ability to know what it doesn't know. If you're running distillation, your eval suite may not be tracking what's actually being lost.
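One way to make that loss visible is to count hedging phrases in reasoning traces before and after distillation. The sketch below is a minimal illustration, not the paper's metric: the marker list and the `marker_rate` helper are assumptions invented for this example, and a real check would likely need a vetted phrase list or a classifier.

```python
import re

# Hypothetical marker list; the paper's own definition of epistemic
# verbalization may be broader or narrower than these phrases.
EPISTEMIC_MARKERS = [
    r"\bi['’]?m not sure\b",
    r"\bnot certain\b",
    r"\blet me (?:try|rethink|reconsider|double-check)\b",
    r"\bwait\b",
    r"\bmaybe\b",
    r"\bperhaps\b",
]
_PATTERN = re.compile("|".join(EPISTEMIC_MARKERS), re.IGNORECASE)


def marker_rate(traces):
    """Return hedging markers per 100 words across a list of reasoning traces."""
    words = sum(len(trace.split()) for trace in traces)
    hits = sum(1 for trace in traces for _ in _PATTERN.finditer(trace))
    return 100.0 * hits / words if words else 0.0


# Made-up traces, only to show the comparison a team might run.
before = ["Wait, I'm not sure this holds. Let me try another approach."]
after = ["The answer is 42."]
print(marker_rate(before), marker_rate(after))
```

Tracking this rate separately on in-domain and OOD prompts, for both the base model and the distilled student, would show whether hedging disappears while accuracy metrics still look healthy.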

Key takeaways:

- Self-distillation suppresses epistemic verbalization, not redundant reasoning steps.
- In-domain metrics can mask OOD degradation of up to 40%. Eval suites need uncertainty-expression tracking.
- Teams running distillation should check whether the student model retains the ability to express doubt.

Source: Why Does Self-Distillation (Sometimes) Degrade the Reasoning Capability of LLMs?

02 Code Intelligence Passing Tests Doesn't Mean You Can Iterate

Anyone who's used AI for coding has the intuition: generated code runs, but after a few rounds of changes it starts rotting. SlopCodeBench puts numbers on that intuition. Twenty tasks requiring multi-turn iteration (93 checkpoints) force agents to revise their own code under evolving requirements, not write one-shot answers.

No model completed any task end-to-end. The best checkpoint pass rate was 17.2%. Code redundancy kept climbing in 89.8% of trajectories, and 80% showed structural decay, with complexity concentrating in a handful of functions. Compared to 48 open-source Python projects, agent code carried 2.2x more redundancy, while the human code stayed flat over time.
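To get a feel for what "redundancy kept climbing" means in practice, here is a rough sketch that tracks a duplicate-line ratio across successive checkpoints of a file. This is an assumed stand-in metric, not SlopCodeBench's actual measure: `duplicate_line_ratio`, `redundancy_trend`, and the `min_len` cutoff are hypothetical names and choices for illustration.

```python
from collections import Counter


def duplicate_line_ratio(source, min_len=10):
    """Fraction of non-trivial lines that repeat an earlier line verbatim.

    Lines are compared after stripping whitespace; short lines and comments
    are ignored so that `else:` or `return x` do not dominate the count.
    """
    lines = [line.strip() for line in source.splitlines()]
    lines = [ln for ln in lines if len(ln) >= min_len and not ln.startswith("#")]
    if not lines:
        return 0.0
    counts = Counter(lines)
    duplicates = sum(count - 1 for count in counts.values())
    return duplicates / len(lines)


def redundancy_trend(checkpoints):
    """Duplicate-line ratio for each checkpoint, in order."""
    return [round(duplicate_line_ratio(src), 3) for src in checkpoints]


# Made-up checkpoints: the second one copy-pastes logic instead of reusing it.
v1 = "def area(w, h):\n    return w * h\n"
v2 = v1 + "def area2(w, h):\n    return w * h\n"
print(redundancy_trend([v1, v2]))  # [0.0, 0.25]
```

A flat series would resemble the human baseline described above, and a rising one would resemble the agent trajectories. Verbatim matching misses near-duplicates, so treat the number as a lower bound.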

Prompt engineering improved initial code quality but couldn't prevent the subsequent rot. The problem isn't the starting point. Agents lack design discipline across iterations.

Key takeaways:

- Pass-rate benchmarks systematically underestimate maintainability issues in agent-generated code.
- Agent code carries 2.2x more redundancy than human open-source projects and degrades each turn. Human code stays stable.
- Prompt optimization is a surface fix. Iterative development requires agents with architectural planning ability.

Source: SlopCodeBench: Benchmarking How Coding Agents Degrade Over Long-Horizon Iterative Tasks

03 Agent Desktop Agents Need More Human Demos, Not Better Models

The biggest bottleneck for training computer-use agents isn't model architecture. It's demonstration data. The previous largest open-source dataset, ScaleCUA, had 2 million screenshots, equivalent to under 20 hours of video. CUA-Suite changes the scale: 10,000 human-annotated tasks across 87 professional applications, captured as continuous 30fps recordings totaling about 55 hours and 6 million frames.
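The frame and hour figures are easy to sanity-check. The snippet below assumes the ScaleCUA comparison treats each screenshot as one frame at the same 30fps rate; that conversion is an inference from the numbers, not something the source states.

```python
FPS = 30  # from the source: continuous 30fps recordings


def hours_to_frames(hours, fps=FPS):
    """Number of frames in `hours` of video at `fps`."""
    return hours * 3600 * fps


def frames_to_hours(frames, fps=FPS):
    """Hours of video represented by `frames` at `fps`."""
    return frames / fps / 3600


# CUA-Suite: 55 hours at 30fps.
print(hours_to_frames(55))                    # 5940000, i.e. about 6 million frames

# ScaleCUA: 2 million screenshots, assuming one screenshot per 30fps frame.
print(round(frames_to_hours(2_000_000), 1))   # 18.5, i.e. under 20 hours
```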

"Continuous" is the key word. Not sparse screenshots with final click coordinates, but full cursor trajectories, multi-layer reasoning annotations, and lossless video streams. The data converts directly into any existing agent framework's format.

Early benchmarks are sobering. The best foundation action model still fails about 60% of tasks on professional desktop apps. Models aren't incapable; they just haven't seen enough real human operations. 84 upvotes on Hugging Face suggest the community has been waiting for high-density demo data like this.

Key takeaways:

- The previous largest open-source CUA dataset had under 20 hours of video. CUA-Suite pushes continuous demos to 55 hours across 10,000 tasks.
- Continuous video preserves full interaction dynamics that sparse screenshots miss, a data format requirement for training general desktop agents.
- Current models still fail about 60% of tasks, pointing to demo data as the bottleneck rather than model capability.

Source: CUA-Suite: Massive Human-annotated Video Demonstrations for Computer-Use Agents

04 Image Gen Trained Diffusion Models Aren't at Their Best Yet

Add one learnable scaling coefficient per DiT block — about 100 parameters total — and generation quality improves visibly. Inference steps can even be reduced. Calibri's method is straightforward: model calibration framed as a black-box reward optimization problem, solved with evolutionary search.
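To make the idea concrete, here is a toy sketch of per-block scale calibration driven by a black-box reward and a simple (1+lambda) evolution strategy. It is not Calibri's implementation: the four-block "model", the `TARGET` value, the squared-error reward, and every function name are assumptions made up for illustration. A real setup would score generated images with a reward model instead.

```python
import random

# Toy stand-in for a trained DiT: each "block" contributes a fixed residual
# update, and calibration only rescales those contributions. All numbers
# here are invented for illustration.
BLOCK_OUTPUTS = [0.5, -0.2, 0.8, 0.1]  # frozen per-block contributions
TARGET = 2.0                            # output of a perfectly calibrated toy model


def forward(scales, x=1.0):
    """Run the toy model: x <- x + scale_i * block_i for each block."""
    for scale, block in zip(scales, BLOCK_OUTPUTS):
        x = x + scale * block
    return x


def reward(scales):
    """Black-box reward (higher is better); the search never sees gradients."""
    return -(forward(scales) - TARGET) ** 2


def evolve(reward_fn, num_scales, generations=200, children=8, sigma=0.05, seed=0):
    """(1+lambda) evolution strategy over the per-block scales only."""
    rng = random.Random(seed)
    parent = [1.0] * num_scales  # scale 1.0 reproduces the uncalibrated model
    parent_reward = reward_fn(parent)
    for _ in range(generations):
        candidates = [
            [s + rng.gauss(0.0, sigma) for s in parent] for _ in range(children)
        ]
        best = max(candidates, key=reward_fn)
        best_reward = reward_fn(best)
        if best_reward > parent_reward:  # elitism: never accept a worse setting
            parent, parent_reward = best, best_reward
    return parent, parent_reward


baseline = reward([1.0] * len(BLOCK_OUTPUTS))
scales, tuned = evolve(reward, len(BLOCK_OUTPUTS))
print(f"reward before: {baseline:.4f}, after: {tuned:.6f}")
```

The block weights stay frozen throughout; only the handful of scales change, which is why the search space stays small enough for a gradient-free method.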

The method matters less than what it reveals. If 100 scaling coefficients produce visible gains, current DiT training pipelines are likely under-calibrated systematically. The relative weights across blocks aren't being optimized well during training.

Every team training DiTs should ask: does the pipeline need a post-calibration step?

Key takeaways:

- Roughly 100 scaling parameters improve generation quality across multiple text-to-image models and reduce inference steps.
- The result suggests systematic under-calibration in DiT training. Models ship in a suboptimal state.
- Teams training DiTs should evaluate whether their pipelines need a calibration stage.

Source: Calibri: Enhancing Diffusion Transformers via Parameter-Efficient Calibration

Also Worth Noting

Today's Observation

Two seemingly unrelated papers today point to the same failure mechanism. The self-distillation paper shows that reasoning degradation doesn't come from reduced capability. Epistemic verbalization ("I'm not sure," "let me rethink") gets systematically suppressed. The model doesn't get dumber; it loses the habit of expressing uncertainty. The iterative-optimization survey finds that only 9% of self-improving agents actually use automated optimization. Not because the algorithms fail, but because engineers face too many implicit design decisions that no objective function can answer: which parameters to search, how to shape the feedback signal, when to stop.

The intersection is specific. Current post-training failures don't stem from the optimization algorithm itself. They stem from not identifying what must be preserved through the iterative process. Distillation keeps accuracy but drops uncertainty expression. Optimization loops keep the objective but lose controllability of the design space. Both show improving metrics. The signals that matter live outside those metrics.

If you're shipping any iterative post-training pipeline — distillation, RLHF, self-play — add one check to your eval suite. Sample 100 OOD inputs and manually inspect whether the model still expresses hesitation. If it's confident about everything, that's not capability gain. It's lost calibration.
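A small helper can keep that manual check reproducible. The sketch below only builds the review sheet and summarizes reviewer labels; `generate` is a hypothetical callable standing in for whatever inference call your pipeline uses, and the field names are assumptions.

```python
import random


def build_review_sheet(ood_inputs, generate, k=100, seed=0):
    """Sample up to k OOD inputs and pair each with the model's output."""
    pool = list(ood_inputs)
    rng = random.Random(seed)  # fixed seed so reruns review the same sample
    picked = rng.sample(pool, min(k, len(pool)))
    return [
        # `expresses_hesitation` is left empty for a human reviewer to fill in.
        {"input": x, "output": generate(x), "expresses_hesitation": None}
        for x in picked
    ]


def hesitation_rate(sheet):
    """Share of reviewed rows marked as expressing hesitation, or None if unreviewed."""
    reviewed = [row for row in sheet if row["expresses_hesitation"] is not None]
    if not reviewed:
        return None
    return sum(row["expresses_hesitation"] for row in reviewed) / len(reviewed)


# Usage with a stub model; swap the lambda for a real inference call.
sheet = build_review_sheet([f"question {i}" for i in range(500)], lambda x: x.upper())
print(len(sheet), hesitation_rate(sheet))
```

Running the same seed against the pre- and post-training checkpoints gives reviewers matched inputs, so a drop in the hesitation rate can be attributed to the training step rather than to sampling noise.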