Today's Overview

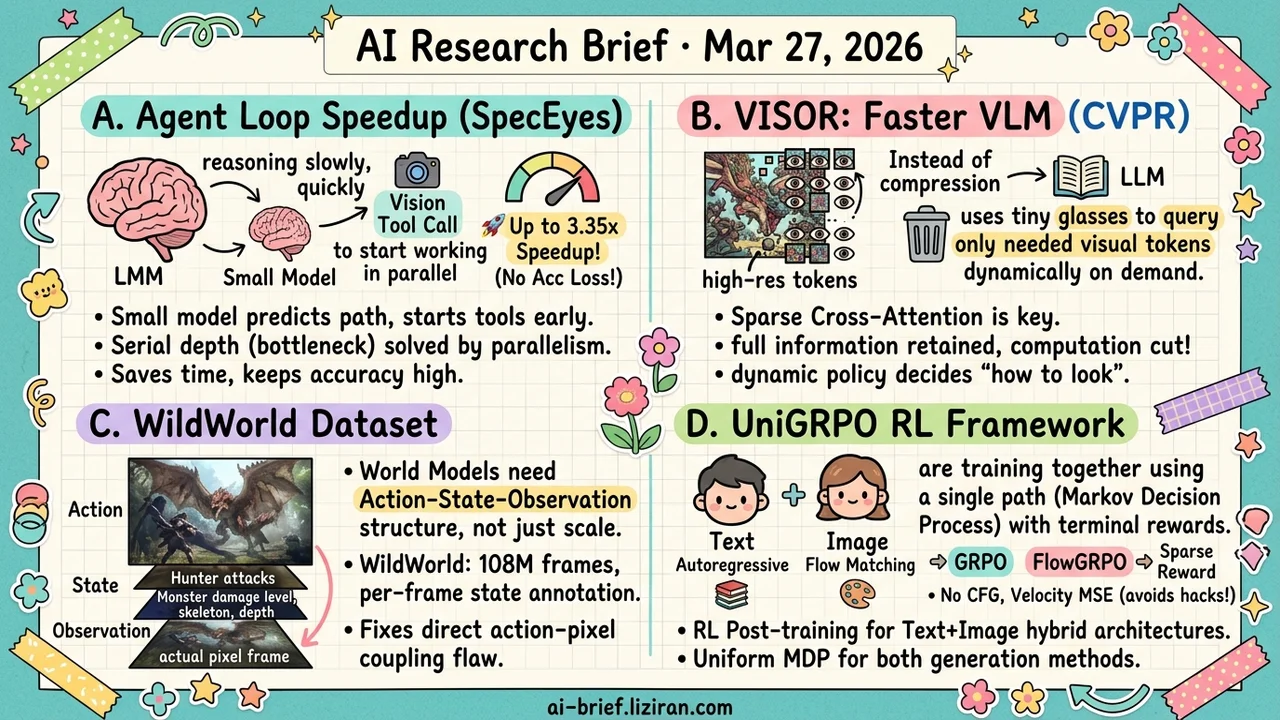

- Speculative Execution Comes to Agent Loops, Up to 3.35x Speedup. SpecEyes borrows CPU branch prediction for multimodal agents: a small model predicts trajectories and launches vision tool calls in parallel. Accuracy holds or improves.

- VLM Speedup Without Dropping Visual Tokens. VISOR replaces dense self-attention with sparse cross-attention, letting the language model query vision on demand. Full visual information retained, compute cost cut sharply. (CVPR)

- World Model Datasets Need Structure, Not Scale. WildWorld provides 108M frames with explicit action-state-observation decoupling, exposing the design flaw of coupling actions directly to pixels.

- RL Training Across Text and Image Generation Now Has a Unified Framework. UniGRPO models autoregressive text and flow-matching images as a single MDP, giving mixed-architecture post-training a reusable baseline.

Featured

01 Agent Speculative Execution for Multimodal Agents

CPUs have done this for decades: predict the next instruction, prefetch data, roll back if wrong. SpecEyes applies the same idea to multimodal agent systems. A lightweight, tool-free small model acts as a speculative planner, predicting the agent's execution trajectory and launching vision tool calls before the large model finishes its serial perceive-reason-act loop.

The core mechanism is a "cognitive gate" that uses answer separability as a confidence estimate and decides, without external verification, when an expensive tool chain can be terminated early. A heterogeneous parallel funnel lets the small model's stateless concurrency mask the large model's stateful serial execution. On V* Bench, HR-Bench, and POPE, speedups range from 1.1x to 3.35x. Accuracy doesn't drop; it rises by up to 6.7%.
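To make the gating idea concrete, here is a minimal sketch, not the paper's implementation. It assumes "answer separability" can be approximated by the probability margin between the two most likely candidate answers. The names `separability`, `speculative_answer`, `answer_batch`, the toy models, and the `threshold` value are all hypothetical.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def separability(logits):
    """Assumed gate score: margin between the top two answer probabilities."""
    probs = sorted(softmax(logits), reverse=True)
    return probs[0] - probs[1]


def speculative_answer(question, small_model, large_agent, threshold=0.5):
    """Accept the small model's answer when the gate is confident;
    otherwise fall back to the full tool-using agent."""
    answer, logits = small_model(question)
    if separability(logits) >= threshold:
        return answer, "speculative"
    return large_agent(question), "fallback"


def answer_batch(questions, small_model, large_agent, threshold=0.5):
    """The small-model path is stateless, so questions can run concurrently."""
    def solve(question):
        return speculative_answer(question, small_model, large_agent, threshold)

    with ThreadPoolExecutor(max_workers=4) as pool:
        return list(pool.map(solve, questions))


# Toy stand-ins (hypothetical): each returns what a real model call would.
def toy_small_model(question):
    if "easy" in question:
        return "A", [4.0, 0.0, 0.0]   # one answer clearly dominates
    return "A", [0.2, 0.1, 0.0]       # answers are barely separable


def toy_large_agent(question):
    return "B"                        # pretend this ran the full tool chain


results = answer_batch(["easy q", "hard q"], toy_small_model, toy_large_agent)
```

In this toy run the first question is accepted on the speculative path and the second falls through to the large agent. A real system would also have to decide what happens to tool calls already launched when a speculation is rejected, which this sketch leaves out.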

The broader signal matters more than any single benchmark. Agent loops are getting deeper (o3, Gemini Agentic Vision). Sequential depth, not single-step latency, is the real system bottleneck. Speculative parallelism may offer more leverage than optimizing individual models.

Key takeaways:

- CPU-style speculative execution applied to agent loops: a small model predicts trajectories and parallelizes vision tool calls.
- Serial depth is the true bottleneck in agent systems, and a better optimization target than single-step inference speed.
- Accuracy gains suggest many intermediate tool calls were redundant in the first place.

Source: SpecEyes: Accelerating Agentic Multimodal LLMs via Speculative Perception and Planning

02 Efficiency Keep All Visual Tokens, Still Run Faster

The standard approach to VLM acceleration is compressing visual tokens: squeeze images down before feeding them to the language model. VISOR (CVPR) goes the opposite direction. Keep every high-resolution visual token, but let the language model look only when it needs to.

The swap: replace dense image-text self-attention with sparse cross-attention in most layers. Only a dynamically selected subset of self-attention layers does fine-grained visual reasoning. A lightweight policy network allocates visual compute per sample: simple questions get fewer layers, hard questions get more. Compute drops substantially while fine-grained understanding tasks actually improve over compression-based methods. The information bottleneck disappears at the source.
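A rough back-of-the-envelope count shows why the interaction pattern matters more than the token count. The sketch below only counts attention multiply-adds (score computation plus weighted sum), ignoring projections and MLPs. The function names and the token, width, and layer counts are illustrative assumptions, not figures from the paper.

```python
def joint_self_attention_cost(n_text, n_visual, dim):
    """Every token attends to every token: cost grows with (T + V)^2."""
    n = n_text + n_visual
    return 2 * n * n * dim


def text_plus_cross_attention_cost(n_text, n_visual, dim):
    """Text self-attends; visual tokens serve only as keys and values."""
    text_self = 2 * n_text * n_text * dim
    cross = 2 * n_text * n_visual * dim
    return text_self + cross


def stack_cost(n_text, n_visual, dim, n_layers, n_dense_layers):
    """Attention cost of a stack where only n_dense_layers use joint attention."""
    if not 0 <= n_dense_layers <= n_layers:
        raise ValueError("n_dense_layers must be between 0 and n_layers")
    dense = n_dense_layers * joint_self_attention_cost(n_text, n_visual, dim)
    sparse = (n_layers - n_dense_layers) * text_plus_cross_attention_cost(
        n_text, n_visual, dim
    )
    return dense + sparse


# Illustrative sizes: short question, high-resolution image, 32 layers.
all_dense = stack_cost(64, 2048, 128, n_layers=32, n_dense_layers=32)
easy_sample = stack_cost(64, 2048, 128, n_layers=32, n_dense_layers=2)
hard_sample = stack_cost(64, 2048, 128, n_layers=32, n_dense_layers=8)
print(all_dense / easy_sample, all_dense / hard_sample)
```

Varying `n_dense_layers` per sample stands in for the policy network's decision: no visual token is dropped in either case, only the number of layers that pay the quadratic joint-attention cost changes.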

Key takeaways:

- The VLM acceleration bottleneck is the interaction pattern, not the token count. Sparse on-demand querying beats compress-then-feed.
- A dynamic policy network lets the model decide "how carefully to look," saving compute on easy samples without sacrificing accuracy on hard ones.
- Accepted at CVPR. Teams working on VLM deployment optimization should track this direction.

03 Multimodal World Models Struggle With Actions. The Data Structure Is Wrong.

Current video world model datasets have a structural flaw: actions map directly to pixel changes with no explicit state layer in between. Models learn "press A, pixels change to B" instead of "press A, state changes to S, state S produces observation O." That second decomposition is the correct abstraction for dynamical systems.

WildWorld extracts 108 million frames from Monster Hunter: Wilds, but scale isn't the point. Every frame is annotated with character skeleton, world state, camera pose, and depth map. Action, state, and observation are explicitly separated at the data level. The companion WildBench evaluation measures both action following and state alignment. Current models remain weak on semantically rich actions and long-horizon state consistency.
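The action-state-observation split can be sketched as a data structure. This is an illustration of the decomposition, not WildWorld's actual schema: the field names, the toy dynamics, and the choice to place camera information on the observation side are assumptions.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Action:
    name: str            # a button press or semantic action label


@dataclass(frozen=True)
class State:
    position: float      # stand-in for skeleton / world-state annotations
    stamina: int


@dataclass(frozen=True)
class Observation:
    frame_id: int        # stand-in for the rendered frame and depth map
    camera_x: float


@dataclass(frozen=True)
class Step:
    action: Action
    state: State
    observation: Observation


def rollout(initial, actions, transition, render) -> List[Step]:
    """Factored dynamics: the action updates the state, the state produces the frame."""
    steps = []
    state = initial
    for t, action in enumerate(actions):
        state = transition(state, action)
        steps.append(Step(action, state, render(state, t)))
    return steps


def toy_transition(state, action):
    # Dashing costs stamina; with none left the same action has no effect.
    if action.name == "dash" and state.stamina > 0:
        return State(state.position + 2.0, state.stamina - 1)
    return state


def toy_render(state, t):
    return Observation(frame_id=t, camera_x=state.position)


steps = rollout(
    State(position=0.0, stamina=1),
    [Action("dash"), Action("dash")],
    toy_transition,
    toy_render,
)
```

The second "dash" changes nothing because stamina is exhausted. A model trained on direct action-to-pixel pairs has no variable in which to store that fact, which is the kind of long-horizon state consistency the benchmark is probing.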

Key takeaways:

- The core deficiency in world model datasets is direct action-pixel coupling without an explicit state intermediate layer.
- WildWorld provides action-state-observation decoupled benchmarking with per-frame state annotations across 108M frames.
- Teams working on world models or game AI should study this data structure design, not just the scale.

04 Image Gen Unified RL for Text and Image Generation

The unified model trend is clear: autoregressive for text, flow matching for images. RL-based post-training for these hybrid architectures hits a wall, though. GRPO works on the text side, but flow matching's continuous generation process doesn't fit the same optimization framework.

UniGRPO models the entire multimodal generation as a Markov decision process. Text follows standard GRPO. Images follow FlowGRPO. Sparse terminal rewards drive both. Two design choices matter: removing classifier-free guidance to maintain linear rollouts (critical for multi-turn scalability), and replacing latent KL with velocity-field MSE penalties to suppress reward hacking. This is an engineering integration, not a theoretical breakthrough. It gives mixed-architecture post-training a reproducible baseline.
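Two of the ingredients named above can be written down in a few lines. The sketch assumes the standard GRPO group-relative advantage (reward minus group mean, divided by group standard deviation) and a plain mean-squared error between policy and reference velocities. The function names, the use of population standard deviation, and the `beta` weight are assumptions, not details taken from the paper.

```python
import statistics


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize terminal rewards within one group of rollouts for the same prompt."""
    mean = statistics.fmean(rewards)
    std = statistics.pstdev(rewards)
    return [(r - mean) / (std + eps) for r in rewards]


def velocity_mse(v_policy, v_reference):
    """Mean squared gap between policy and reference velocity predictions."""
    if len(v_policy) != len(v_reference):
        raise ValueError("velocity vectors must have the same length")
    squared = [(p - q) ** 2 for p, q in zip(v_policy, v_reference)]
    return sum(squared) / len(squared)


def penalized_advantage(advantage, v_policy, v_reference, beta=0.1):
    """Advantage minus a velocity-field penalty, used in place of a latent KL term."""
    return advantage - beta * velocity_mse(v_policy, v_reference)


# One scalar reward per rollout; the same advantage would be shared by that
# rollout's text tokens and its image denoising steps.
advantages = group_relative_advantages([1.0, 0.0, 1.0, 0.0])
penalty = velocity_mse([1.0, 2.0], [1.0, 4.0])
score = penalized_advantage(1.0, [1.0, 2.0], [1.0, 4.0], beta=0.1)
```

Because the reward arrives only at the end of the rollout, the text and image segments need no separate reward models in this picture. They differ only in how the shared advantage enters each side's policy-gradient loss.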

Key takeaways:

- Autoregressive text plus flow-matching images is the dominant hybrid architecture. Unified RL training is the next open problem.
- Dropping CFG and using velocity-field MSE regularization are the key modifications that make FlowGRPO scalable in multi-turn settings.
- Teams doing unified generation post-training can use this framework as a starting baseline.

Source: UniGRPO: Unified Policy Optimization for Reasoning-Driven Visual Generation

Also Worth Noting

Today's Observation

Three independent papers today converge on the same design principle: stop processing all inputs by default.

SpecEyes speculatively prefetches visual inputs in the agent loop, on the premise that most intermediate vision calls are redundant. VISOR lets the LLM query visual tokens on demand at the attention layer, keeping full information but accessing only what's needed. EVA uses RL to teach video agents which frames matter, instead of scanning every one. Three teams, three layers (agent orchestration, attention mechanism, data sampling), same conclusion. All report significant efficiency gains without accuracy loss.

The common thread isn't sparsification. Sparsification reduces data volume. These three works relocate the "what to look at" decision from the preprocessing pipeline to the inference process itself. The model forms a judgment first, then decides what inputs to fetch. That's the inverse of the standard pattern: process everything, then think.

As multimodal input scales keep growing (longer video, deeper tool chains, higher image resolution), the "process everything first, reason later" pipeline is giving way to "reason first, fetch on demand." If you're building multimodal systems, audit your pipeline for how much compute goes to just-in-case preprocessing. Those invisible preprocessing steps may be the largest optimization target you have.