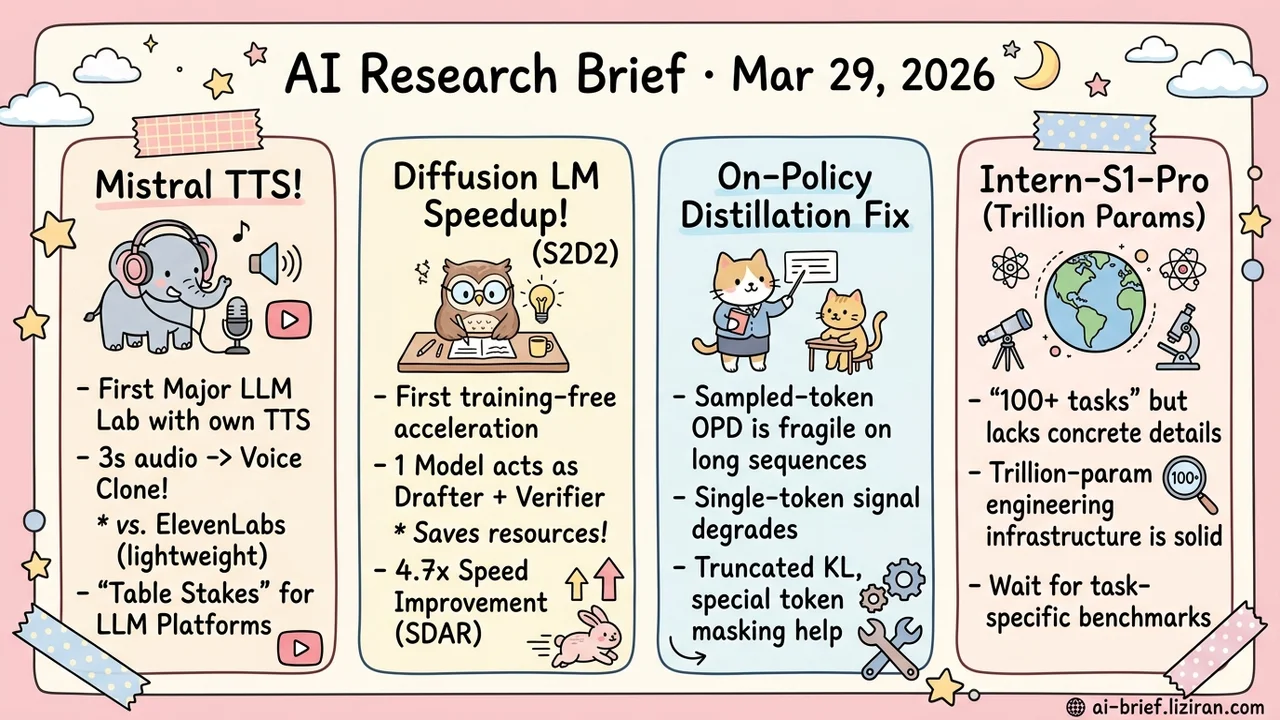

Today's Overview

- Mistral becomes the first major LLM lab to ship its own TTS. Three seconds of reference audio is enough for voice cloning. Speech synthesis is shifting from specialized vendors to LLM-platform table stakes.

- Diffusion language models get their first training-free speedup. S2D2 exploits a degeneracy at block size=1 to let the same model act as both drafter and verifier, hitting 4.7x acceleration.

- Sampled-token on-policy distillation is fundamentally fragile on long sequences. Three failure modes and their fixes make a ready-made checklist for any team doing knowledge transfer.

- Trillion-parameter science model Intern-S1-Pro claims 100+ tasks. The engineering infrastructure is solid, but domain coverage depth needs per-task benchmarks to judge.

Featured

01 Mistral Fires the First Shot on LLM-Native TTS

Mistral released Voxtral TTS, making it the first major LLM lab to build speech synthesis in-house. No single technical breakthrough here: autoregressive generation for semantic tokens, flow-matching for acoustic detail, plus a VQ-FSQ hybrid codec trained from scratch. All mature components, packaged into a system that clones voices from three seconds of reference audio.
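For readers unfamiliar with the FSQ half of that codec: finite scalar quantization, in its generic form, squashes each latent coordinate into a bounded range and rounds it to a small fixed grid, so the codebook is implicit rather than learned. The sketch below illustrates only that generic idea. The function names, the restriction to odd level counts, and the toy inputs are assumptions for illustration; nothing here is taken from Voxtral's actual codec.

```python
import math
from typing import List


def fsq_quantize(latent: List[float], levels: List[int]) -> List[int]:
    """Round each coordinate to one of `levels[i]` integer steps centered on 0.

    Assumption: every level count is odd, which keeps the grid symmetric.
    """
    codes = []
    for value, n_levels in zip(latent, levels):
        if n_levels % 2 == 0:
            raise ValueError("this sketch only handles odd level counts")
        half = (n_levels - 1) // 2
        bounded = half * math.tanh(value)  # squash into (-half, half)
        codes.append(int(round(bounded)))  # snap to the integer grid
    return codes


def fsq_index(codes: List[int], levels: List[int]) -> int:
    """Pack per-coordinate codes into one token id (mixed-radix encoding)."""
    index, base = 0, 1
    for code, n_levels in zip(codes, levels):
        half = (n_levels - 1) // 2
        index += (code + half) * base  # shift each code into [0, n_levels)
        base *= n_levels
    return index


codes = fsq_quantize([0.0, 10.0, -10.0], [5, 5, 5])
print(codes)                        # [0, 2, -2]
print(fsq_index(codes, [5, 5, 5]))  # 22, out of 5 * 5 * 5 = 125 possible ids
```

The implicit codebook size is just the product of the level counts, which is why this style of quantizer has no codebook to train or to collapse.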

In native-speaker evaluations, Voxtral beat ElevenLabs Flash v2.5 on naturalness and expressiveness with a 68.4% win rate. Context matters: Flash is ElevenLabs' speed-optimized lightweight model, not their flagship, so the result reads closer to "commercially viable" than "best in class." Weights are open under CC BY-NC: research and non-commercial use are free, while commercial deployment goes through Mistral's API.

The model itself matters less than the signal. Speech synthesis is moving from ElevenLabs-style specialist vendors to standard LLM platform capability — the same path image generation took two years ago. Teams building voice products should reassess their vendor strategy.

Key takeaways:

- Mistral is the first major LLM lab to ship in-house TTS; the strategic signal outweighs the technical novelty.

- Three seconds of reference audio for voice cloning, but the 68.4% win rate is against ElevenLabs' lightweight tier, not their best model.

- Speech synthesis is becoming LLM-platform table stakes. If you depend on a dedicated TTS vendor, your bargaining position just changed.

Source: Voxtral TTS

02 Diffusion LLMs Finally Get an Acceleration Toolkit

Block-diffusion language models can theoretically generate tokens in parallel, but their acceleration toolchain has been nearly empty. S2D2 found an elegant property: when block size shrinks to 1, a block-diffusion model degenerates into a standard autoregressive model. One pretrained model can serve as both the parallel drafter and the sequential verifier. No auxiliary model needed.

The key design is a lightweight routing policy that decides which positions need verification and which can trust the diffusion output. This avoids the brittleness of fixed thresholds that are either too aggressive or too conservative. Across three block-diffusion models, S2D2 consistently improved the speed-accuracy tradeoff: 4.7x on SDAR and 4.4x on LLaDA2.1-Mini over static baselines, with the latter also gaining slightly in accuracy.
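The draft-then-verify loop described above can be sketched with a toy model. This is a minimal illustration under stated assumptions, not S2D2's implementation: `toy_draft_block` stands in for one parallel block-diffusion pass, `toy_verify_next` stands in for the same model run at block size 1, and `below_block_mean` is a made-up stand-in for the routing policy (it checks any position whose confidence falls below the block average). All names, confidences, and the target sequence are hypothetical.

```python
from typing import Callable, List, Tuple

Draft = List[Tuple[int, float]]  # (token, drafter confidence) per block position


def self_speculative_step(
    prefix: List[int],
    draft_block: Callable[[List[int]], Draft],
    verify_next: Callable[[List[int]], int],
    needs_check: Callable[[int, Draft], bool],
) -> Tuple[List[int], int]:
    """Draft one block in parallel, then verify only the routed positions."""
    draft = draft_block(prefix)
    accepted: List[int] = []
    checks = 0
    for position, (token, _confidence) in enumerate(draft):
        if needs_check(position, draft):
            checks += 1
            verified = verify_next(prefix + accepted)
            if verified != token:
                accepted.append(verified)  # keep the verifier's token
                break                      # discard the rest of the draft
        accepted.append(token)
    return accepted, checks


TARGET = [3, 1, 4, 1, 5, 9, 2, 6]  # what the toy verifier would generate


def toy_verify_next(prefix: List[int]) -> int:
    return TARGET[len(prefix)]


def toy_draft_block(prefix: List[int], block_size: int = 4) -> Draft:
    tokens = TARGET[len(prefix):len(prefix) + block_size]  # slicing copies
    if len(tokens) > 2:
        tokens[2] += 1  # plant a drafting error at the low-confidence slot
    return list(zip(tokens, [0.9, 0.8, 0.3, 0.7]))


def below_block_mean(position: int, draft: Draft) -> bool:
    mean = sum(confidence for _, confidence in draft) / len(draft)
    return draft[position][1] < mean


def decode(length: int) -> Tuple[List[int], int]:
    out: List[int] = []
    total_checks = 0
    while len(out) < length:
        accepted, checks = self_speculative_step(
            out, toy_draft_block, toy_verify_next, below_block_mean
        )
        out.extend(accepted)
        total_checks += checks
    return out[:length], total_checks


print(decode(8))  # ([3, 1, 4, 1, 5, 9, 2, 6], 3)
```

In this toy run, eight tokens cost three verification passes instead of eight sequential ones. Positions the router skips are trusted outright, so output quality depends on how well the routing signal tracks real drafting errors.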

Key takeaways:

- First training-free acceleration method for diffusion language models, filling a real toolchain gap.

- Same model acts as both drafter and verifier by exploiting block size=1 degeneracy.

- Adaptive routing beats fixed thresholds for deciding when to verify, removing a manual tuning burden.

Source: S2D2: Fast Decoding for Diffusion LLMs via Training-Free Self-Speculation

03 On-Policy Distillation Breaks Down on Long Sequences

On-policy distillation (OPD) lets the student generate its own rollouts for the teacher to score. That is more flexible than training on fixed teacher trajectories, but the common sampled-token implementation has a fundamental weakness: it compresses distribution matching into a single-token signal. As student rollouts get longer and drift further from the teacher's distribution, that signal degrades. This is a structural problem, not something you can tune away.

The paper maps three failure modes: imbalanced single-token signals, misleading teacher guidance on student-generated prefixes, and gradient distortion from tokenizer and special-token mismatches. The fixes are direct: truncated reverse-KL combined with top-p rollout sampling, plus special-token masking. On both math reasoning and agent multitask training, the fixed recipe gave more stable results than standard sampled-token OPD. The three failure modes alone are a ready-made diagnostic checklist for any post-training pipeline.
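To make the contrast concrete, here is a minimal sketch of a single-token signal next to a distribution-level one. It reflects one plausible reading of "truncated reverse-KL", which is an assumption here: reverse KL computed over the student's top-p set, with both distributions renormalized over that set. The function names, the renormalization choice, and the example numbers are all hypothetical and not taken from the paper.

```python
import math
from typing import List, Sequence, Set


def sampled_token_signal(student: Sequence[float], teacher: Sequence[float], token: int) -> float:
    """Single-sample estimate of reverse KL: log q(x) - log p(x) at one token."""
    return math.log(student[token]) - math.log(teacher[token])


def top_p_support(probs: Sequence[float], top_p: float) -> List[int]:
    """Smallest set of highest-probability token ids whose mass reaches top_p."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, mass = [], 0.0
    for i in order:
        kept.append(i)
        mass += probs[i]
        if mass >= top_p:
            break
    return kept


def truncated_reverse_kl(student: Sequence[float], teacher: Sequence[float],
                         top_p: float = 0.9, eps: float = 1e-12) -> float:
    """KL(student || teacher) restricted to the student's top-p set (assumed form)."""
    kept = top_p_support(student, top_p)
    s_mass = sum(student[i] for i in kept)
    t_mass = max(sum(teacher[i] for i in kept), eps)
    kl = 0.0
    for i in kept:
        s = student[i] / s_mass
        if s == 0.0:
            continue  # zero-probability entries contribute nothing
        t = max(teacher[i] / t_mass, eps)
        kl += s * math.log(s / t)
    return kl


def sequence_loss(student_dists, teacher_dists, sampled_tokens: Sequence[int],
                  special_ids: Set[int], top_p: float = 0.9) -> float:
    """Average truncated reverse KL, skipping positions that hold special tokens."""
    terms = [
        truncated_reverse_kl(s, t, top_p)
        for s, t, token in zip(student_dists, teacher_dists, sampled_tokens)
        if token not in special_ids
    ]
    return sum(terms) / len(terms) if terms else 0.0


student = [0.5, 0.3, 0.2]
teacher = [0.2, 0.3, 0.5]
print(sampled_token_signal(student, teacher, token=1))  # 0.0: this one sample hides the mismatch
print(truncated_reverse_kl(student, teacher, top_p=0.75) > 0)  # True
```

The example is chosen so the sampled token happens to land where the two distributions agree: the single-token signal reports no disagreement, while the distribution-level term still sees it.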

Key takeaways:

- Sampled-token OPD fragility on long sequences stems from single-token signal degradation, not hyperparameter choices.

- Truncated reverse-KL plus top-p sampling and special-token masking is a drop-in fix.

- Teams doing post-training should audit their pipelines against these three failure modes.

Source: Revisiting On-Policy Distillation: Empirical Failure Modes and Simple Fixes

04 Trillion-Scale Science Model Claims 100+ Tasks, Details Pending

"Covering chemistry, materials, life sciences, earth sciences, and 100+ specialized tasks" — Intern-S1-Pro leads with this claim but barely unpacks it. Which tasks per domain? How does it compare to tuned domain-specific models? These questions stay vague.

What the paper does detail is engineering infrastructure: XTuner and LMDeploy supporting trillion-parameter RL training with guaranteed training-inference precision alignment. That is a genuine engineering contribution. But for teams doing scientific AI deployment, the core question is whether a "specializable generalist" actually beats a well-tuned domain model on your specific problem. That answer requires per-task breakdowns only the full paper can provide.

Key takeaways:

- "100+ specialized tasks" lacks concrete breakdown; depth of domain coverage is unverified.

- Trillion-parameter RL training infrastructure is the hard contribution here.

- Generalist vs. domain-specific model effectiveness: wait for full benchmark data before drawing conclusions.

Source: Intern-S1-Pro: Scientific Multimodal Foundation Model at Trillion Scale

Also Worth Noting

Today's Observation

Voxtral TTS in speech, S2D2 in text. Completely different problems, strikingly similar architecture: autoregressive modeling handles sequence-level semantic structure (Voxtral uses it for semantic token sequences; S2D2 relies on it for inter-block dependencies), while diffusion or flow-matching handles local high-dimensional detail (Voxtral's acoustic token reconstruction; S2D2's intra-block parallel denoising).

This convergence isn't coincidence. It reflects problem structure. When the generation target has both strong sequential dependencies (semantics must cohere) and high-dimensional local structure (acoustic detail, token-level parallelism), pure autoregressive is too slow and pure diffusion lacks long-range control. "Autoregressive at the sequence layer, diffusion at the detail layer" is becoming the default decomposition for this class of problems, and it already spans speech and text.

If you're designing a new generation system, start with one question: does your output have both sequential dependencies and local high-dimensional structure? If yes, this two-layer architecture is worth building as your baseline before iterating — rather than starting from pure autoregressive or pure diffusion and patching from there.