Today's Overview

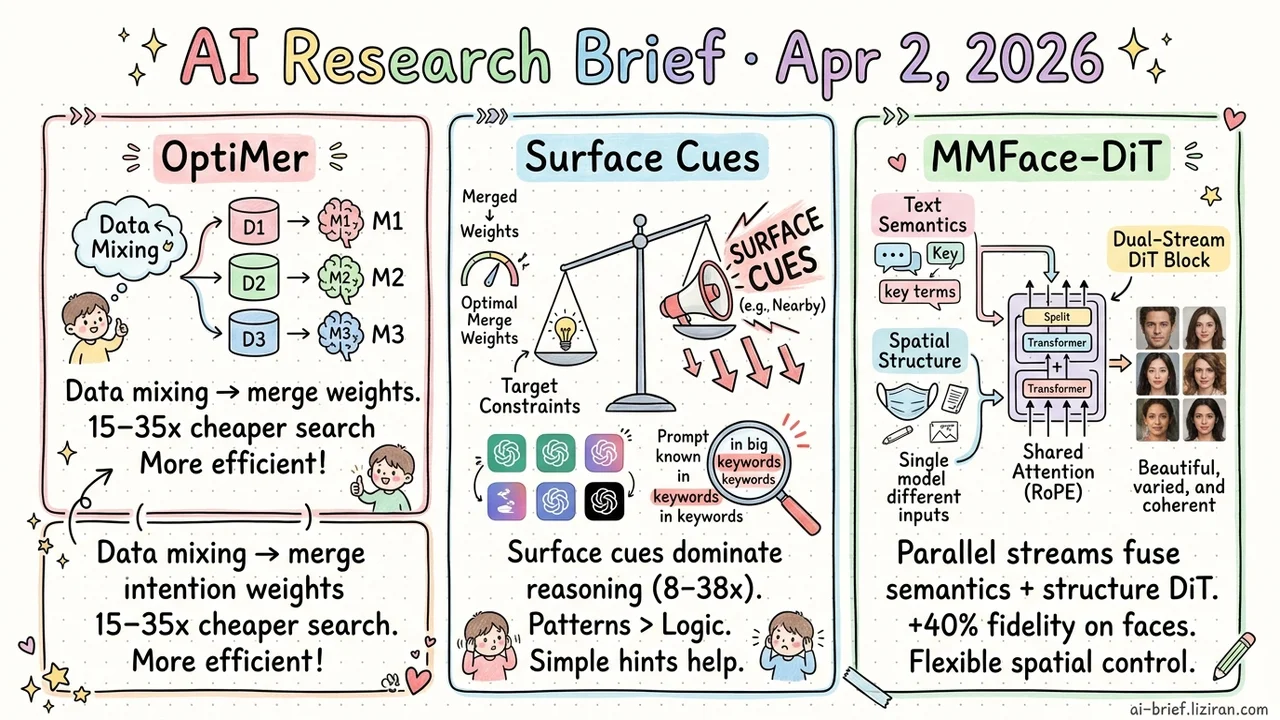

- Data mixing ratios move from a pre-training hyperparameter to a post-training optimization target. OptiMer trains one model per dataset, then searches for optimal merge weights in parameter space. Search cost drops 15–35x.

- Surface cues sway LLM reasoning 8–38x more strongly than target constraints do. The effect follows a stable sigmoid pattern across six models. One minimal hint recovers 15 percentage points.

- A dual-stream DiT unifies text semantics and spatial structure inside the architecture. MMFace-DiT beats six SOTA methods by 40% on face generation. One model handles multiple spatial conditions.

Featured

01 Data Mixing Ratios? Decide After Training.

OptiMer performs a clean variable substitution: it turns continual pre-training (CPT) data mixing ratios from a training hyperparameter into a post-hoc optimization target. Train a separate CPT model on each dataset, extract each one's distribution vector (the shift that CPT caused in parameter space), then run Bayesian optimization over the weights used to merge those vectors.
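A minimal sketch of the three steps, assuming each model is a flat dict of parameter lists and that a distribution vector is simply the CPT weights minus the base weights. The helper names (`distribution_vector`, `merge`, `search_weights`) are hypothetical, and plain random search stands in for Bayesian optimization to keep the example dependency-free; OptiMer itself uses Bayesian optimization.

```python
import random

# A toy "model": parameter name -> flat list of weights.
Params = dict[str, list[float]]


def distribution_vector(cpt: Params, base: Params) -> Params:
    """Shift that CPT on one dataset caused in parameter space: cpt - base."""
    return {k: [c - b for c, b in zip(cpt[k], base[k])] for k in base}


def merge(base: Params, vectors: list[Params], weights: list[float]) -> Params:
    """Return base + sum_i weights[i] * vectors[i], parameter by parameter."""
    merged = {k: list(v) for k, v in base.items()}  # copy so base is not mutated
    for vec, w in zip(vectors, weights):
        for k in merged:
            merged[k] = [m + w * d for m, d in zip(merged[k], vec[k])]
    return merged


def search_weights(base, vectors, objective, n_trials=200, seed=0):
    """Random search over merge weights in [0, 1] (stand-in for Bayesian optimization).

    `objective` scores a merged model; higher is better.
    Returns (best_weights, best_score).
    """
    rng = random.Random(seed)
    best_w, best_score = None, float("-inf")
    for _ in range(n_trials):
        w = [rng.uniform(0.0, 1.0) for _ in vectors]
        score = objective(merge(base, vectors, w))
        if score > best_score:
            best_w, best_score = w, score
    return best_w, best_score


if __name__ == "__main__":
    base = {"w": [0.0, 0.0]}
    # Hypothetical per-dataset CPT results (e.g. one language, one domain).
    vecs = [distribution_vector({"w": [1.0, 0.0]}, base),
            distribution_vector({"w": [0.0, 1.0]}, base)]

    def objective(p: Params) -> float:
        # Hypothetical validation score: closeness to an ideal parameter setting.
        target = [0.6, 0.3]
        return -sum((a - t) ** 2 for a, t in zip(p["w"], target))

    print(search_weights(base, vecs, objective, n_trials=500))
```

Because the per-dataset vectors are computed once, each trial costs only a merge plus an evaluation rather than a fresh training run.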

The search space shrinks from "retrain" to "reweight." On Gemma 3 27B across languages (Japanese, Chinese) and domains (math, code), search cost drops 15–35x versus traditional data mixing, with better results. Two side findings stand out: optimized merge weights can be read backward as data mixing ratios, and retraining with those ratios improves standard CPT. The same vectors can also be re-optimized for different objectives without retraining, producing custom models on demand.
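Reading merge weights backward as mixing ratios could be as simple as normalizing them to sum to 1. That normalization is an assumption for illustration, not necessarily the paper's exact conversion, and `weights_to_mixing_ratios` is a hypothetical helper.

```python
def weights_to_mixing_ratios(weights: dict[str, float]) -> dict[str, float]:
    """Normalize per-dataset merge weights into data mixing ratios that sum to 1.

    Assumes non-negative weights; the normalization itself is an illustrative assumption.
    """
    if any(w < 0 for w in weights.values()):
        raise ValueError("expected non-negative merge weights")
    total = sum(weights.values())
    if total == 0:
        raise ValueError("merge weights sum to zero")
    return {name: w / total for name, w in weights.items()}


if __name__ == "__main__":
    # Hypothetical optimized weights for three CPT datasets.
    print(weights_to_mixing_ratios({"ja": 0.9, "math": 0.6, "code": 0.3}))
```

The resulting ratios could then seed a conventional data-mixing CPT run.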

Clean idea, solid coverage. The catch: you need enough compute to run one CPT pass per dataset. For well-resourced teams, this slashes trial-and-error cost. For small teams, the upfront investment may not pay off.

Key takeaways:
- Data mixing ratios become a post-training optimization problem, cutting search cost 15–35x.
- Merge weights double as data mixing guidance, closing the loop from vector optimization to data strategy.
- Requires one CPT run per dataset upfront. Best suited for teams with compute budget to spare.

Source: OptiMer: Optimal Distribution Vector Merging Is Better than Data Mixing for Continual Pre-Training

02 One Salient Word Overrides Your Prompt's Actual Goal

LLMs should weigh all information in a prompt and reason holistically. This systematic study across six models shows they don't. When surface cues (e.g., "nearby") conflict with implicit constraints (e.g., "the target is unreachable"), the surface cue's influence is 8–38x stronger. These are not occasional errors: the effect traces a stable sigmoid curve.

Token-level attribution confirms the mechanism: models behave more like keyword matchers than compositional reasoners, pattern-matching instead of performing logical inference. The HOB benchmark (500 test cases, 14 models) validates that this is universal: no model exceeds 75% accuracy under strict evaluation. One surprising result: adding a minimal hint (e.g., emphasizing a key object) recovers 15 percentage points on average. The models "know" the constraint exists; surface cues just drown it out.
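Token-level attribution can take several forms, and the study's exact method is not described here. A minimal occlusion (leave-one-out) sketch makes the idea concrete: remove each token, re-score, and attribute the score change to that token. The scorer is a hypothetical keyword-weighted stand-in for a model, deliberately built to behave like a keyword matcher; the prompt is also hypothetical.

```python
# Hypothetical stand-in for a model's preference for answering "walk":
# a fixed keyword-weight table that never reasons about the implicit constraint.
CUE_WEIGHTS = {"nearby": 2.0, "short": 1.0, "car": -0.3}


def walk_score(tokens: list[str]) -> float:
    """Sum keyword weights; unknown tokens contribute nothing."""
    return sum(CUE_WEIGHTS.get(t, 0.0) for t in tokens)


def occlusion_attribution(tokens: list[str], score_fn) -> list[tuple[str, float]]:
    """Leave-one-out attribution: score change when each token is removed."""
    full = score_fn(tokens)
    return [(tok, full - score_fn(tokens[:i] + tokens[i + 1:]))
            for i, tok in enumerate(tokens)]


if __name__ == "__main__":
    prompt = "my car needs washing and the car wash is nearby should i walk or drive"
    attributions = occlusion_attribution(prompt.split(), walk_score)
    # Show the three tokens with the largest absolute influence.
    for tok, score in sorted(attributions, key=lambda p: -abs(p[1]))[:3]:
        print(f"{tok:>8} {score:+.2f}")
```

With a real model, `score_fn` would be something like the log-probability of a given answer, and the uneven spread of attributions is what exposes a single dominant cue word.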

Key takeaways:
- Surface cues steer LLM reasoning 8–38x more strongly than target constraints. This is systematic, not anecdotal.
- Keyword weight distribution in prompts is highly uneven. Know which words "steal the show" and explicitly reinforce constraints that get overlooked.
- The failure is in constraint inference, not knowledge. Simple prompting fixes recover significant accuracy.
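One way to act on the second takeaway, sketched under stated assumptions: scan a prompt for cue words known to pull the model toward a default answer and, if any appear, append a one-line hint that points at the key object and restates the constraint. The cue list, hint wording, example prompt, and `reinforce_constraint` helper are all hypothetical; the study's own hints may be phrased differently.

```python
import re

# Hypothetical cue words that tend to pull a model toward a default answer.
SURFACE_CUES = frozenset({"nearby", "close", "short", "quick"})


def reinforce_constraint(prompt: str, key_object: str, constraint: str,
                         cues=SURFACE_CUES) -> str:
    """Append a minimal hint when the prompt contains a known surface cue.

    The hint directs attention to `key_object` and restates `constraint`,
    so the implicit requirement is less likely to be drowned out by the cue word.
    """
    words = set(re.findall(r"[a-z']+", prompt.lower()))
    if not words & cues:
        return prompt  # no salient cue found: leave the prompt unchanged
    return f"{prompt}\nHint: focus on the {key_object}. {constraint}"


if __name__ == "__main__":
    print(reinforce_constraint(
        "The car wash is nearby. Should I walk or drive there to wash my car?",
        key_object="car",
        constraint="The car itself has to end up at the car wash.",
    ))
```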

Source: The Model Says Walk: How Surface Heuristics Override Implicit Constraints in LLM Reasoning

03 Dual-Stream Transformers Align Text and Spatial Control From the Inside

MMFace-DiT processes text semantics and spatial structure (masks, sketches) in parallel within the same Transformer block, fusing them through shared RoPE attention. The design choice: unify both signals inside the architecture instead of bolting control modules onto a frozen pipeline.

The payoff is direct. Text descriptions and structural priors negotiate in a shared latent space rather than being reconciled after the fact, which avoids modality dominance, where one signal overrides the other. Visual fidelity and prompt alignment improve 40% over six SOTA methods. A single model adapts dynamically to different spatial condition inputs without condition-specific training.
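To make "shared RoPE attention" concrete, here is a minimal single-head sketch in the style of MM-DiT joint attention: each stream keeps its own Q/K/V projections, the two token sequences are concatenated, rotary position embeddings are applied to queries and keys, and one attention pass lets every text token attend to every spatial token and vice versa. Giving spatial tokens 1-D positions that continue after the text tokens, and omitting norms, MLPs, multiple heads, and timestep modulation, are simplifying assumptions; this is not MMFace-DiT's published block.

```python
import math

Vec = list[float]
Mat = list[list[float]]  # shape (d_in, d_out), row-major


def matvec(x: Vec, w: Mat) -> Vec:
    """Compute x @ w for a single token vector."""
    return [sum(x[i] * w[i][j] for i in range(len(x))) for j in range(len(w[0]))]


def rope(x: Vec, pos: int, base: float = 10000.0) -> Vec:
    """Rotary embedding: rotate each (even, odd) pair by pos * base**(-i/d)."""
    d = len(x)
    out = list(x)
    for i in range(0, d, 2):
        theta = pos * base ** (-i / d)
        c, s = math.cos(theta), math.sin(theta)
        out[i] = x[i] * c - x[i + 1] * s
        out[i + 1] = x[i] * s + x[i + 1] * c
    return out


def softmax(xs: Vec) -> Vec:
    m = max(xs)
    e = [math.exp(v - m) for v in xs]
    z = sum(e)
    return [v / z for v in e]


def dual_stream_attention(text, spatial, w_text, w_spatial):
    """Joint attention over a text stream and a spatial stream.

    w_text / w_spatial: dicts with stream-specific "q", "k", "v" matrices.
    Returns (text_out, spatial_out), split back into the original streams.
    """
    q, k, v = [], [], []
    pos = 0  # assumption: one shared 1-D position index across both streams
    for tokens, w in ((text, w_text), (spatial, w_spatial)):
        for x in tokens:
            q.append(rope(matvec(x, w["q"]), pos))
            k.append(rope(matvec(x, w["k"]), pos))
            v.append(matvec(x, w["v"]))
            pos += 1
    scale = 1.0 / math.sqrt(len(q[0]))
    out = []
    for qi in q:  # every query sees keys from BOTH streams
        attn = softmax([scale * sum(a * b for a, b in zip(qi, kj)) for kj in k])
        out.append([sum(p * vj[c] for p, vj in zip(attn, v)) for c in range(len(v[0]))])
    return out[:len(text)], out[len(text):]


if __name__ == "__main__":
    eye = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    weights = {"q": eye, "k": eye, "v": eye}
    text_out, spatial_out = dual_stream_attention(
        text=[[1.0, 0.0, 0.0, 0.0]],
        spatial=[[0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]],
        w_text=weights, w_spatial=weights,
    )
    print(text_out)  # the text token now mixes in values from the spatial tokens
```

Fusing inside one attention pass, rather than in an external control module, is what lets each text token and each spatial token see each other at every block.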

Faces are highly structured and well-constrained. Whether dual-stream fusion holds its advantage on more open-ended generation tasks is the key open question.

Key takeaways:
- Dual-stream DiT unifies semantic and spatial fusion at the architecture level, avoiding the disconnect of external control modules.
- One model handles multiple spatial conditions (masks, sketches, etc.). Real deployment flexibility.
- Strong validation on faces. Generalization to unconstrained domains is the outstanding question.

Source: MMFace-DiT: A Dual-Stream Diffusion Transformer for High-Fidelity Multimodal Face Generation