Today's Overview

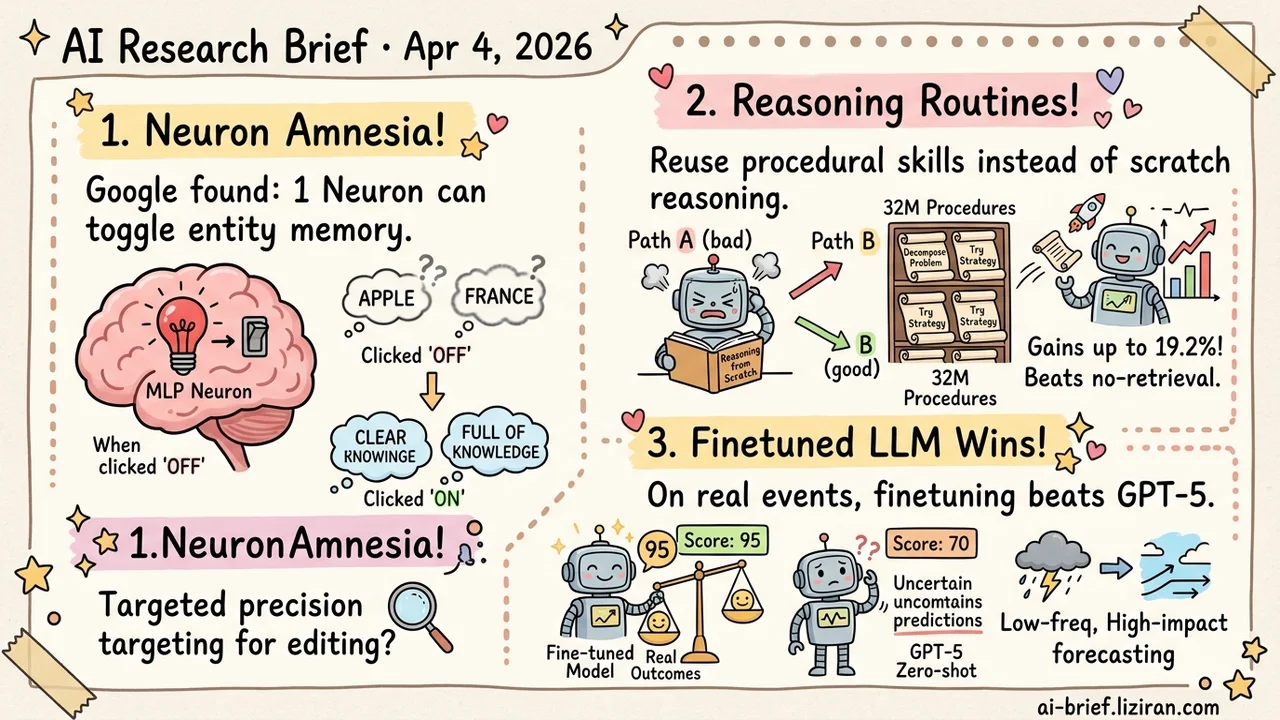

- Single MLP Neurons Can Trigger Entity-Level "Amnesia." Google verified causal links across 200 entities — knowledge editing may shift from broad surgery to precision targeting.

- Reusable Problem-Solving Routines Extracted From Reasoning Traces. A library of 32 million procedural knowledge entries frees models from reasoning from scratch, with gains of up to 19.2% over no-retrieval baselines.

- LLMs Fine-Tuned on Real Disruption Events Beat Zero-Shot GPT-5. They win on both accuracy and calibration for supply chain forecasting, and the general pattern for low-frequency, high-impact prediction deserves attention.

Featured

01 Interpretability Single Neurons Can "Remember" an Entity

Where does knowledge live inside an LLM — spread evenly, or concentrated in precise switches? Google's answer is surprisingly specific: for many entities, a single MLP neuron's activation suffices to recover related factual predictions. The team ran causal interventions on 200 entities. Ablate a specific neuron and the model forgets everything about that entity. Inject its activation at a placeholder token and correct recall returns.
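The two interventions described above, ablation and activation injection, can be illustrated on a toy MLP block. This is a minimal sketch under stated assumptions, not the paper's setup: the weights, the three input features, and the helper names (`mlp_forward`, `ablate`, `inject`) are all hypothetical and hand-picked so that hidden neuron 0 plays the role of an "entity neuron."

```python
from typing import Callable, List, Optional

Vector = List[float]
Edit = Callable[[Vector], Vector]

# Hypothetical toy weights, chosen by hand for illustration only.
# Input features: [entity token, placeholder token, unrelated token].
W_IN = [
    [1.0, 0.0, 0.0],  # hidden neuron 0: the toy "entity neuron"
    [0.0, 0.0, 1.0],  # hidden neuron 1: responds to the unrelated token
]
# Output logits: [fact about the entity, unrelated prediction].
W_OUT = [
    [2.0, 0.0],  # neuron 0 writes to the fact logit
    [0.0, 1.0],  # neuron 1 writes to the unrelated logit
]


def hidden_activations(x: Vector) -> Vector:
    """ReLU activations of the hidden layer for input x."""
    return [max(0.0, sum(w * xi for w, xi in zip(row, x))) for row in W_IN]


def mlp_forward(x: Vector, edit: Optional[Edit] = None) -> Vector:
    """Run the block, optionally editing hidden activations before the output."""
    hidden = hidden_activations(x)
    if edit is not None:
        hidden = edit(hidden)
    return [
        sum(h * row[k] for h, row in zip(hidden, W_OUT))
        for k in range(len(W_OUT[0]))
    ]


def ablate(index: int) -> Edit:
    """Zero out one hidden neuron."""
    return lambda h: [0.0 if i == index else v for i, v in enumerate(h)]


def inject(index: int, value: float) -> Edit:
    """Overwrite one hidden neuron with a recorded activation."""
    return lambda h: [value if i == index else v for i, v in enumerate(h)]


ENTITY = [1.0, 0.0, 0.0]
PLACEHOLDER = [0.0, 1.0, 0.0]

clean = mlp_forward(ENTITY)                       # fact logit is active
ablated = mlp_forward(ENTITY, ablate(0))          # fact logit is wiped out
recorded = hidden_activations(ENTITY)[0]          # activation seen on the entity
patched = mlp_forward(PLACEHOLDER, inject(0, recorded))  # fact logit restored

print(clean, ablated, patched)
```

In a real model the same idea would be applied with forward hooks on a specific MLP layer. The toy version only shows the logic of the causal test: remove the activation and the fact disappears, then restore it at a placeholder and the fact comes back.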

These entity neurons cluster in early layers. They respond to aliases, abbreviations, typos, and cross-lingual references — suggesting the model maintains a normalized entity representation internally. The parallel to neuroscience's "grandmother cell" hypothesis is hard to miss: decades of debate about whether single biological neurons encode specific concepts, now with silicon evidence.

200 entities is a small sample, and popular entities have much better coverage than obscure ones. Not every entity maps cleanly to a single neuron. But if the finding generalizes, knowledge editing gets dramatically cheaper: locate the right early-layer neuron, make a targeted fix, done.

Key takeaways:

- Causal intervention on single MLP neurons can trigger or reverse entity-level "amnesia." Knowledge storage is sparser and more locatable than expected.

- Entity neurons cluster in early layers and stay consistent across aliases, typos, and languages, offering scalpel-level precision for knowledge editing.

- Sample covers only 200 entities with better results for popular ones. Whether long-tail knowledge is equally locatable remains open.

Source: Friends and Grandmothers in Silico: Localizing Entity Cells in Language Models

02 Reasoning Teaching Models to Accumulate Problem-Solving Routines

Mainstream test-time scaling has models reason from scratch on every problem, with no reuse of experience, like an engineer debugging from first principles every time. Mila's Reasoning Memory takes a different path: extract "procedural knowledge" from existing reasoning traces, meaning how to decompose problems, which strategies to pick, and when to backtrack. The result is a retrievable library of 32 million solving subroutines.

At inference, the model articulates the core sub-problem, then retrieves relevant routines as an implicit prior. Across six math, science, and coding benchmarks, this beats no-retrieval baselines by up to 19.2% and the strongest equal-compute baseline by 7.9%. Ablations point to two factors: breadth of procedural coverage in source traces, and decompose-retrieve granularity that makes knowledge actually reusable.
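The articulate-then-retrieve step can be sketched in a few lines. This is an illustrative sketch only: the three-entry `ROUTINES` library, the bag-of-words cosine similarity, and the `retrieve` and `build_prompt` helpers are all assumptions standing in for whatever retriever and 32-million-entry store the authors actually use.

```python
import math
from collections import Counter
from typing import List, Tuple

# Hypothetical mini-library of (sub-problem description, routine) pairs.
ROUTINES: List[Tuple[str, str]] = [
    ("solve a quadratic equation",
     "Move all terms to one side, then factor or apply the quadratic formula."),
    ("find a bug in a recursive function",
     "Check the base case first, then confirm each call shrinks the input."),
    ("balance a chemical equation",
     "Count atoms per element on each side and adjust one element at a time."),
]


def bag_of_words(text: str) -> Counter:
    """Lowercased whitespace tokens with counts (a stand-in for an embedding)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two token-count vectors."""
    dot = sum(count * b[token] for token, count in a.items() if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(sub_problem: str, k: int = 1) -> List[str]:
    """Return the k routines whose descriptions best match the sub-problem."""
    query = bag_of_words(sub_problem)
    ranked = sorted(
        ROUTINES,
        key=lambda entry: cosine(query, bag_of_words(entry[0])),
        reverse=True,
    )
    return [routine for _, routine in ranked[:k]]


def build_prompt(problem: str, sub_problem: str, k: int = 1) -> str:
    """Prepend retrieved routines to the problem as a soft prior."""
    hints = "\n".join(f"- {routine}" for routine in retrieve(sub_problem, k))
    return f"Problem: {problem}\nRelevant routines:\n{hints}\nSolution:"


print(build_prompt(
    problem="Find all x with x^2 - 5x + 6 = 0.",
    sub_problem="solve the quadratic equation x^2 - 5x + 6 = 0",
))
```

The retrieval key is the articulated sub-problem rather than the full problem text, which mirrors the decompose-then-retrieve granularity the ablations highlight.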

The open question is generalization. These routines work well in distribution. How they perform on genuinely novel problem types needs more testing.

Key takeaways:

- Reusable solving strategies extracted from reasoning traces let models skip reasoning from scratch every time.

- 32 million procedural knowledge entries outperform retrieving raw documents or full traces.

- The bottleneck is generalization: performance on out-of-distribution problem types remains unverified.

Source: Procedural Knowledge at Scale Improves Reasoning

03 AI for Science Fine-Tuned LLMs Beat GPT-5 at Supply Chain Forecasting

Train on outcomes, not descriptions. This work fine-tunes LLMs end-to-end on real supply chain disruption events, producing calibrated probability forecasts. The setting is hard for two reasons: input signals are scattered across unstructured text, and target events are extremely rare. Generic large models can't extract useful patterns from this noise through zero-shot reasoning alone.

The fine-tuned model significantly outperforms GPT-5 on accuracy, calibration, and precision. It also spontaneously develops structured probabilistic reasoning — no manual prompt engineering needed.
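For readers less familiar with how accuracy and calibration differ on rare events, a small worked example may help. This is a sketch under assumptions: the Brier score is one common calibration-sensitive metric, not necessarily the one the paper reports, and the five forecasts below are made-up numbers, not results from the work.

```python
from typing import Sequence


def brier_score(probs: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared gap between forecast probability and realized outcome (0 or 1)."""
    if not probs or len(probs) != len(outcomes):
        raise ValueError("probs and outcomes must be non-empty and equal length")
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(probs)


def accuracy(
    probs: Sequence[float], outcomes: Sequence[int], threshold: float = 0.5
) -> float:
    """Share of forecasts on the correct side of the decision threshold."""
    hits = sum((p >= threshold) == bool(y) for p, y in zip(probs, outcomes))
    return hits / len(probs)


# Made-up toy data: one disruption in five periods (a rare event).
outcomes = [0, 0, 0, 0, 1]
uninformative = [0.5, 0.5, 0.5, 0.5, 0.5]  # hedges on every period
calibrated = [0.1, 0.1, 0.1, 0.1, 0.6]     # low by default, raised when signals appear

for name, probs in [("uninformative", uninformative), ("calibrated", calibrated)]:
    print(name, round(brier_score(probs, outcomes), 3), accuracy(probs, outcomes))
```

Lower Brier scores are better. The hedging forecaster is penalized on every quiet period, which is why outcome-supervised training on rare events can pay off in calibration as well as accuracy.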

The real value isn't the supply chain application itself. Supervising prediction models with realized outcomes could transfer to financial risk, geopolitical events, and other low-frequency, high-impact scenarios. Cross-domain generalization still needs validation.

Key takeaways:

- Supervised fine-tuning on actual disruption outcomes significantly beats GPT-5 zero-shot on accuracy and calibration.

- Fine-tuning automatically induces structured probabilistic reasoning without manual prompt engineering.

- The paradigm matters more than the application: any low-frequency, high-impact prediction task could benefit.

Source: Forecasting Supply Chain Disruptions with Foresight Learning