Today's Overview

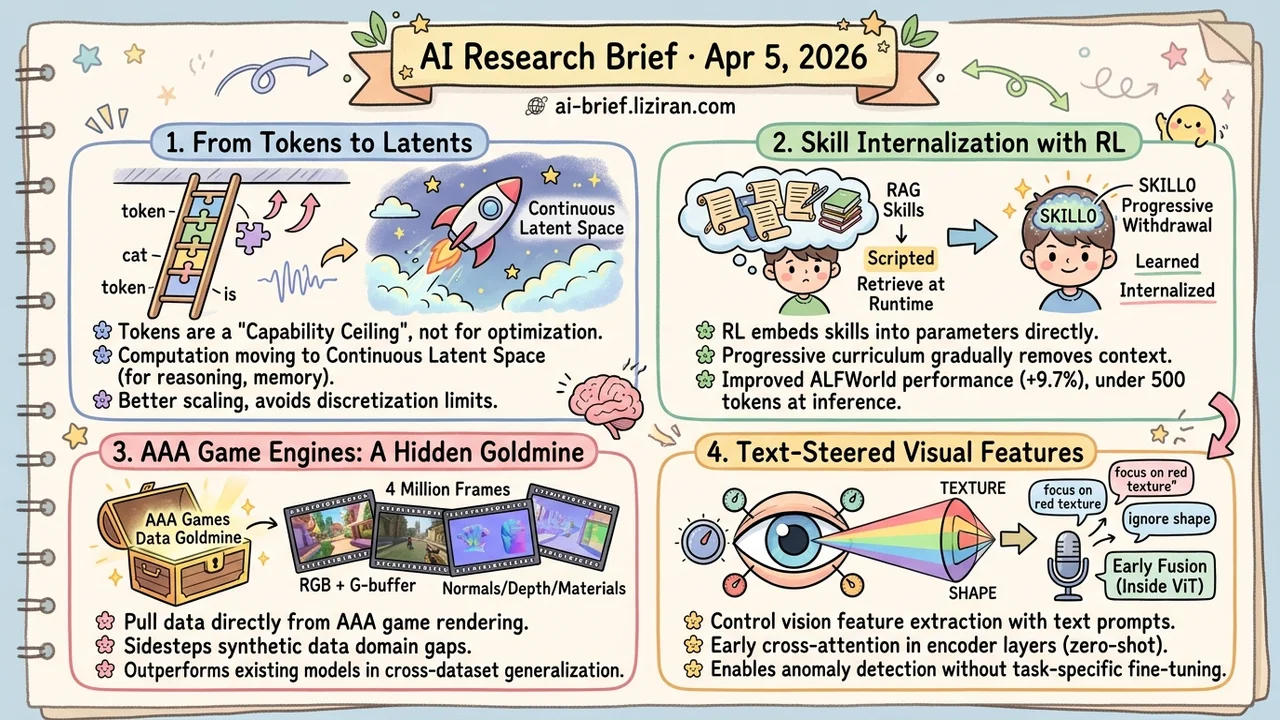

- Discrete Tokens Are an Architectural Ceiling for LLMs, Not an Optimization Target. A survey traces four technical threads showing core computation migrating from token sequences to continuous latent space.

- Agent Skills Work Better Internalized via RL Than Retrieved at Runtime. SKILL0's progressive withdrawal curriculum improves ALFWorld performance by 9.7% while keeping inference context under 500 tokens per step.

- AAA Game Engines Are a Hidden Data Goldmine for Generative Rendering. 4 million synchronized RGB + G-buffer frames produce models that clearly outperform existing solutions on cross-dataset generalization.

- Visual Features Can Be Steered in Real Time with Text Prompts. Injecting cross-attention inside ViT encoder layers enables zero-shot generalization on anomaly detection without degrading general capabilities.

Featured

01 Architecture Core LLM Computation Is Leaving Token Space

Discrete tokens are the basic unit of operation in current LLMs, but mounting evidence suggests they're also the capability ceiling. This survey traces a migration already underway: the model's internal computation — reasoning, planning, memory, multimodal perception — is shifting from human-readable token sequences to continuous latent space.

The driver isn't a single breakthrough. It's structural limitations of discretization itself. Language redundancy lowers information density. Tokenization introduces compression loss. Autoregressive token-by-token generation creates sequential efficiency bottlenecks. Better tokenizers or bigger models won't fix these. They're architectural limits. The survey tracks this trend along four axes — architecture, representation, computation, optimization — and maps progress across seven capability dimensions.

This isn't purely academic either. Coconut (Chain of Continuous Thought), latent reasoning, and similar approaches are now being validated at scale. The default assumption that tokens are the right computational substrate is being challenged. Does your current inference and training framework still operate at the token level by default? That may be capping your system's potential.
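To make the bottleneck concrete, here is a toy sketch of what is lost when a reasoning loop feeds a discrete token back to itself instead of its continuous state. It is not Coconut's implementation or anything from the survey: the three-token vocabulary, the 2-D embeddings, and every function name are hypothetical, chosen only to show two different internal states collapsing into one token.

```python
import math

# Hypothetical toy vocabulary: each token has a 2-D embedding.
EMBEDDINGS = {
    "left": (-1.0, 0.0),
    "right": (1.0, 0.0),
    "up": (0.0, 1.0),
}

def to_token(hidden):
    """Discretize: pick the token whose embedding best matches the hidden state."""
    def score(token):
        return sum(h * e for h, e in zip(hidden, EMBEDDINGS[token]))
    return max(EMBEDDINGS, key=score)

def token_feedback(hidden):
    """Token-space loop: the next step only sees the chosen token's embedding."""
    return EMBEDDINGS[to_token(hidden)]

def latent_feedback(hidden):
    """Latent-space loop: the next step sees the full continuous state."""
    return tuple(hidden)

# Two different internal states that decode to the same token.
state_a = (0.9, 0.4)
state_b = (0.6, -0.5)

print(to_token(state_a), to_token(state_b))
print("token loop keeps them apart: ", token_feedback(state_a) != token_feedback(state_b))
print("latent loop keeps them apart:", latent_feedback(state_a) != latent_feedback(state_b))
print(f"upper bound per discrete step: {math.log2(len(EMBEDDINGS)):.2f} bits")
```

In the token loop, everything that distinguished the two states is gone after a single step. A real model has a far larger vocabulary and hidden size, but each discrete step is still capped at log2 of the vocabulary size in bits.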

Key takeaways:

- The discrete token bottleneck is an architectural ceiling, not an optimization problem. Latent space is emerging as an alternative computational substrate for LLMs.

- Reasoning, planning, and memory show better scaling properties in continuous space.

- When choosing inference and training frameworks, evaluate whether they're locked into token-level operations.

Source: The Latent Space: Foundation, Evolution, Mechanism, Ability, and Outlook

02 Agent Skill Internalization Beats Retrieval Injection

The dominant approach to extending agent skills is basically RAG: retrieve skill descriptions, inject them into the prompt, have the model follow along. Retrieval is noisy, injection eats tokens, and the model never truly learns the skills. It's reading from a script. SKILL0 asks a more fundamental question: can RL internalize skills directly into parameters?

The approach uses a progressive withdrawal curriculum. Training starts with full skill context. A dynamic evaluator scores each skill file's contribution to the current policy, gradually removing skills the model has already absorbed. The endpoint: fully zero-shot execution. On ALFWorld, performance improved 9.7%. On Search-QA, 6.6%. Inference context stayed under 500 tokens per step. This is a direct fine-tuning vs. RAG showdown in the agent domain, and internalization won.
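The withdrawal loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not SKILL0's actual algorithm: the source only says a dynamic evaluator scores each skill file's contribution, so the leave-one-out scoring, the `tolerance` threshold, the one-skill-per-round rule, and the toy policy below (with made-up skill names and absorption rates) are all hypothetical.

```python
from typing import Callable, List, Set

def progressive_withdrawal(
    skills: List[str],
    evaluate: Callable[[Set[str]], float],
    train_step: Callable[[Set[str]], None],
    rounds: int,
    tolerance: float = 0.01,
) -> List[Set[str]]:
    """Return the skill context used in each round; it shrinks as skills are absorbed."""
    active = set(skills)
    history = []
    for _ in range(rounds):
        history.append(set(active))
        train_step(active)  # stand-in for an RL update with `active` in context
        if not active:
            continue
        baseline = evaluate(active)
        # Assumed scoring rule: contribution = score lost when one skill is withheld.
        contribution = {s: baseline - evaluate(active - {s}) for s in active}
        candidate = min(sorted(contribution), key=contribution.get)
        if contribution[candidate] <= tolerance:
            active.discard(candidate)  # the policy no longer needs this file
    return history

# Toy policy: each skill is absorbed at its own (made-up) rate while in context.
absorbed = {"find_item": 0.0, "heat_object": 0.0, "open_drawer": 0.0}
rate = {"find_item": 0.5, "heat_object": 0.25, "open_drawer": 0.125}

def train_step(context: Set[str]) -> None:
    for skill in context:
        absorbed[skill] = min(1.0, absorbed[skill] + rate[skill])

def evaluate(context: Set[str]) -> float:
    # A skill in context counts as fully available; otherwise only the absorbed part helps.
    return sum(1.0 if s in context else absorbed[s] for s in absorbed) / len(absorbed)

history = progressive_withdrawal(list(absorbed), evaluate, train_step, rounds=10)
print([sorted(context) for context in history])
print("zero-shot score:", evaluate(set()))
```

The context shrinks one file at a time, and only once withholding a file costs almost nothing. That is the contrast with removing everything at once, where the score would fall to whatever the policy had absorbed so far.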

Key takeaways:

- Skill internalization shifts agent capability from "runtime retrieval" to "training-time learning," a fundamentally different acquisition paradigm.

- The progressive withdrawal curriculum dynamically evaluates each skill's contribution, solving the problem where removing context cold-turkey collapses performance.

- Token overhead drops sharply at inference, with direct cost implications for deployed agents.

Source: SKILL0: In-Context Agentic Reinforcement Learning for Skill Internalization

03 Video Gen The Best Rendering Dataset Was Hiding in Games

Everyone working on inverse and forward rendering knows the domain gap between synthetic datasets and real scenes. This team's fix is unexpected: pull data straight from AAA games. It makes sense on reflection. Game engines have spent decades solving complex lighting, dynamic weather, and motion blur. Their rendering quality surpasses academic synthetic datasets by a wide margin.

Using a dual-screen capture method, they extracted 4 million continuous 720p frames, each with synchronized RGB and five G-buffer channels (normals, depth, materials, etc.). Inverse rendering models fine-tuned on this data clearly outperform existing approaches on cross-dataset generalization. The data also enables controllable video generation through G-buffer guidance. A VLM-based evaluation protocol addresses assessment on real-world footage, where no ground truth exists.

Key takeaways:

- AAA games as a rendering data source sidestep the synthetic dataset domain gap entirely.

- 4 million synchronized RGB + G-buffer frames deliver a scale and quality academic synthetic datasets can't match.

- Data strategy breakthroughs sometimes push a field forward more than model innovations.

Source: Generative World Renderer

04 Multimodal Visual Features You Can Steer on Demand

Image retrieval and anomaly detection often require the model to focus on specific attributes — texture over shape, for example. Existing vision encoders give you whatever features they consider most salient. No control knobs. Text guidance is the natural idea, but CLIP's approach fuses modalities after encoding, sacrificing spatial precision.

This work moves fusion earlier: lightweight cross-attention layers injected inside ViT encoder blocks let text prompts directly shape visual feature extraction. The result is steerable visual representations. On anomaly detection and personalized object discrimination, performance matches or beats specialized methods. General-purpose vision capabilities don't degrade.
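The early-fusion idea can be sketched as a single cross-attention step in which image patches act as queries and text tokens as keys and values. This is a dependency-free illustration, not the paper's architecture: learned query/key/value projections and multiple heads are omitted, and the gated residual (`gate`) is an assumption added here to show how such a layer can leave the original features untouched.

```python
import math
from typing import List

Vector = List[float]

def softmax(scores: Vector) -> Vector:
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a: Vector, b: Vector) -> float:
    return sum(x * y for x, y in zip(a, b))

def text_cross_attention(patches: List[Vector], text: List[Vector], gate: float) -> List[Vector]:
    """Each patch (query) attends over the text tokens (keys and values).

    The attended text vector is added back as a gated residual, so gate=0.0
    returns the visual features unchanged (the gate is an assumption here).
    """
    scale = 1.0 / math.sqrt(len(text[0]))
    steered = []
    for patch in patches:
        weights = softmax([dot(patch, token) * scale for token in text])
        context = [
            sum(w * token[i] for w, token in zip(weights, text))
            for i in range(len(patch))
        ]
        steered.append([p + gate * c for p, c in zip(patch, context)])
    return steered

# Two hypothetical patch features and a one-token prompt embedding.
patches = [[1.0, 0.0], [0.0, 1.0]]
prompt = [[2.0, 0.0]]

print(text_cross_attention(patches, prompt, gate=0.0))
print(text_cross_attention(patches, prompt, gate=0.5))
```

Because the mixing happens per patch, the text signal reaches each spatial location before features are pooled. That is the spatial precision a post-encoding fusion gives up.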

Key takeaways:

- Early fusion (injecting text inside encoder layers) preserves more spatial information than late fusion. This is the design choice that matters.

- Zero-shot generalization on anomaly detection and object discrimination shows steerability isn't bought through task-specific fine-tuning.

- Teams working on retrieval, segmentation, or any task needing semantically controllable visual features should track this.

Source: Steerable Visual Representations

Also Worth Noting

Today's Observation

The latent space survey, LatentUM, and SKILL0 span different subfields, but they expose the same structural problem: explicit intermediate representations degrade from information carriers to information bottlenecks as systems scale.

The survey's core argument isn't "latent space has potential." It's that discrete tokens have structural limits as a computational substrate: language redundancy, compression loss, sequential bottlenecks. These stem from discretization itself. No tokenizer improvement can eliminate them. LatentUM bypasses the default path of "describe the image as text, then reason" by performing cross-modal reasoning directly in latent space. SKILL0 moves agent skills from the RAG pattern — retrieve docs, inject into prompt, model follows instructions — to training-time internalization with zero-shot inference.

The convergence of these three directions isn't a coincidence. They face the same class of bottleneck: when the information complexity a model needs to process exceeds what explicit representations can carry, the translation layer becomes a source of loss. Tokens can't carry the full information density reasoning requires. Text descriptions can't carry spatial details of visual content. Skill documents can't capture an agent's behavioral patterns in complex environments. When models are capable enough to operate directly in continuous space, these explicit layers devolve from necessary bridges to unnecessary funnels.

Explicit representations won't disappear. Humans still need readable output. But the default assumption — "convert to human-readable form, then compute" — is being revised to "compute in continuous space internally, discretize only at I/O boundaries." The direction is consistent: remove explicit representations from the computation path, keep them at the interface layer.

Action item: Audit the explicit intermediate representations in your current systems: token-level reasoning chains, text-formatted retrieval results, prompt-injected tool descriptions. Distinguish which serve as interpretability interfaces for humans (keep those) and which exist only as architectural inertia. The latter are your most immediate optimization targets, from inference cost to information fidelity.