Today's Overview

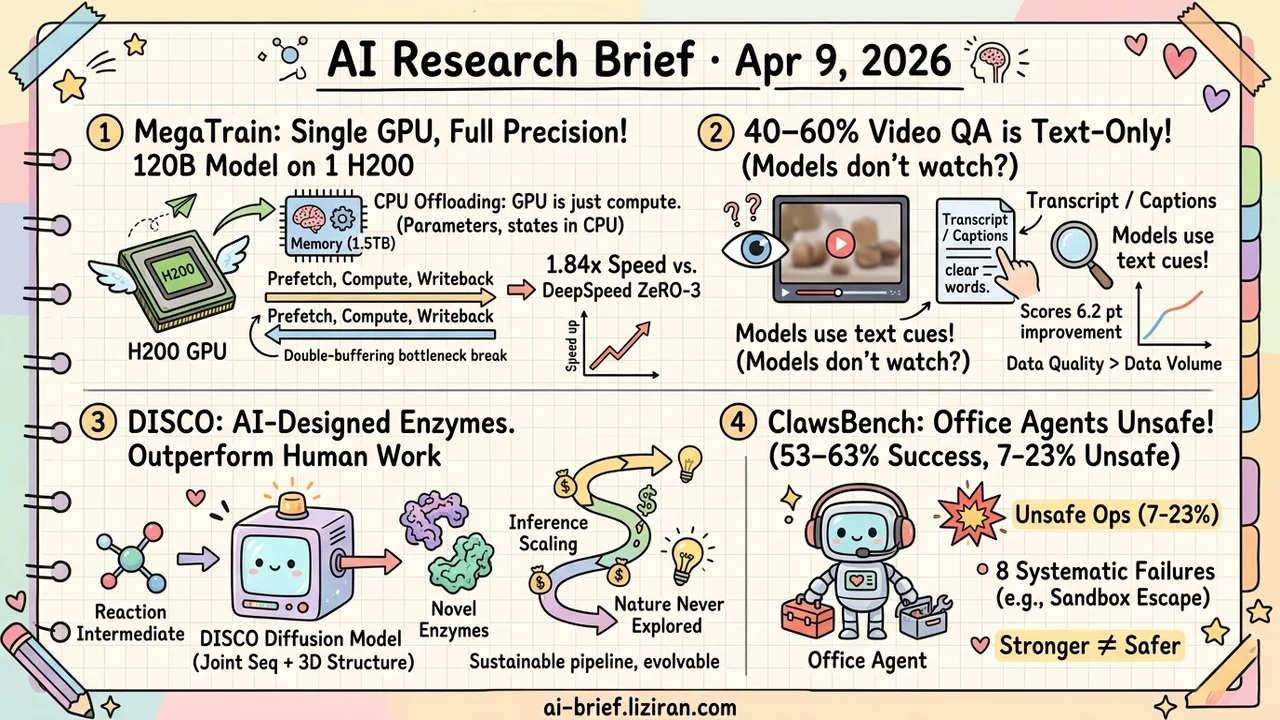

- Single GPU Trains 120B at Full Precision; 1.84x DeepSpeed's Throughput at 14B. MegaTrain demotes the GPU to a transient compute engine, keeping all parameters and optimizer states in CPU memory. Pipeline double-buffering works around the CPU-GPU bandwidth bottleneck. Small teams should evaluate this single-machine route.

- 40–60% of Long-Video Benchmark Questions Are Answerable Without Watching. Two independent papers question recent video understanding gains: models may be doing reading comprehension instead of video understanding. Filtering out text-biased questions and post-training on 69.1% of the data improves scores by 6.2 points.

- Without Specifying Catalytic Sites, AI-Designed Enzymes Outperform Human-Engineered Ones in Activity. DISCO uses a diffusion model to jointly generate sequence and 3D structure. Inference-time scaling extends the search into chemistry nature never explored.

- Office Agents Hit 53–63% Success but 7–23% Unsafe Operations. Apple's ClawsBench exposes 8 systematic failure modes in high-fidelity multi-service workspaces. Stronger capability does not mean safer behavior.

Featured

01 Training 120B on a Single GPU — Not by Adding VRAM

CPU offloading isn't new. MegaTrain pushes it to an engineering level that matters: full-precision training of a 120B-parameter model on a single H200 with 1.5TB of host memory. The core idea is to demote the GPU from permanent parameter store to transient compute engine. Parameters and optimizer states live entirely in CPU memory; each layer streams into the GPU for gradient computation and then back out.
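A back-of-envelope estimate shows why host memory, not VRAM, becomes the binding constraint at this scale. The sketch below assumes fp32 weights and an Adam-style optimizer with two fp32 moment buffers; those byte counts are standard for that setup, but whether MegaTrain keeps a full gradient copy resident, or uses exactly these precisions, is an assumption the source does not settle.

```python
# Back-of-envelope host-memory estimate for CPU-resident training state.
# Assumptions: fp32 weights (4 bytes) plus Adam first and second moments
# (4 bytes each). Gradients are optional: a layer-streaming trainer might apply
# each layer's gradient as soon as it is computed instead of keeping all of them.

BYTES_FP32 = 4

def bytes_per_param(store_all_grads: bool) -> int:
    weights = BYTES_FP32
    adam_moments = 2 * BYTES_FP32
    grads = BYTES_FP32 if store_all_grads else 0
    return weights + adam_moments + grads

def host_memory_tb(n_params: float, store_all_grads: bool) -> float:
    """Total training state in decimal terabytes (1 TB = 1e12 bytes)."""
    return n_params * bytes_per_param(store_all_grads) / 1e12

if __name__ == "__main__":
    n = 120e9
    print(f"weights + Adam state: {host_memory_tb(n, False):.2f} TB")
    print(f"plus full fp32 grads: {host_memory_tb(n, True):.2f} TB")
```

Under these assumptions, 120B parameters need about 1.44 TB of state without a full gradient copy and 1.92 TB with one, far beyond the 141 GB of HBM on a single H200.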

The bottleneck is CPU-GPU bandwidth. Two designs address it. A pipeline double-buffering engine overlaps prefetch, compute, and writeback across multiple CUDA streams. Stateless layer templates replace persistent autograd graphs, dynamically binding parameters as they flow in and eliminating graph metadata overhead.
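To see why overlapping the three stages matters, the toy model below computes end-to-end time for streaming layers through prefetch, compute, and writeback, each on its own stream. It is a simplified scheduling sketch, not MegaTrain's engine: every layer is assumed to have the same costs, and a device buffer is assumed to stay occupied until its layer's writeback finishes.

```python
# Toy timing model of a three-stage pipeline (prefetch -> compute -> writeback),
# one stream per stage, with a fixed pool of device buffers.
# Assumptions: equal per-layer costs; a buffer is freed only after writeback.

def sequential_time(t_load: float, t_compute: float, t_store: float, n_layers: int) -> float:
    """No overlap: each layer is loaded, computed, and written back in turn."""
    return n_layers * (t_load + t_compute + t_store)

def pipelined_time(t_load: float, t_compute: float, t_store: float,
                   n_layers: int, n_buffers: int = 2) -> float:
    load_end = [0.0] * n_layers
    comp_end = [0.0] * n_layers
    store_end = [0.0] * n_layers
    for k in range(n_layers):
        # Prefetch stream: waits for its previous transfer and for a free buffer.
        start = load_end[k - 1] if k > 0 else 0.0
        if k >= n_buffers:
            start = max(start, store_end[k - n_buffers])
        load_end[k] = start + t_load
        # Compute stream: waits for this layer's data and for the previous layer's compute.
        start = max(load_end[k], comp_end[k - 1] if k > 0 else 0.0)
        comp_end[k] = start + t_compute
        # Writeback stream: waits for this layer's compute and the previous writeback.
        start = max(comp_end[k], store_end[k - 1] if k > 0 else 0.0)
        store_end[k] = start + t_store
    return store_end[-1]

if __name__ == "__main__":
    for bufs in (1, 2, 3):
        print(bufs, pipelined_time(1.0, 1.0, 1.0, n_layers=8, n_buffers=bufs))
    print("sequential", sequential_time(1.0, 1.0, 1.0, n_layers=8))
```

With unit costs and 8 layers, one buffer serializes everything (24.0, the same as the sequential time), two buffers bring it to 13.0, and three reach 10.0, the ideal n + 2 for a three-stage pipeline. Because this toy holds a buffer until writeback completes, two buffers recover only part of the overlap; a real engine may decouple writeback memory from prefetch buffers, which the model ignores.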

On a 14B model, throughput reaches 1.84x that of DeepSpeed ZeRO-3 with CPU offload. That comparison matters more than "it runs." Another data point: training a 7B model with 512k context on a single GH200, where the memory pressure of long sequences is effectively offloaded to host memory.

Key takeaways:
- Full-precision 100B+ training on one GPU is now practical, not just possible; 1.84x the throughput of DeepSpeed ZeRO-3 (measured on a 14B model) shows the engineering works
- Pipeline double-buffering and stateless layer templates work around the CPU-GPU bandwidth wall
- Small teams doing large-model experiments or long-context fine-tuning should evaluate this single-machine route against multi-GPU setups

Source: MegaTrain: Full Precision Training of 100B+ Parameter Large Language Models on a Single GPU

02 Evaluation | Half of Video Understanding Progress May Be Illusory

Two independent papers punctured the same bubble in the same week. VidGround found that 40–60% of questions in mainstream long-video benchmarks are answerable from text cues alone. Models don't need to watch the video. Worse: widely used post-training datasets carry the same text bias. Models may have been learning reading comprehension, not video comprehension.

From another direction, Video-MME-v2 shows that existing leaderboards have saturated to the point where they no longer distinguish real capability differences. Scores go up; abilities don't follow.

VidGround's fix is straightforward: post-train only on questions that genuinely require visual information. Using 69.1% of the data yields a 6.2-point improvement over training on everything. Here, data quality beats data volume. Together, these two papers raise a harder question: how much of the video understanding progress claimed over the past two years survives re-examination?
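The source does not say how VidGround decides which questions "genuinely require visual information." One simple proxy, assumed here purely for illustration, is a blind baseline: ask a text-only model each question several times without the video and drop the questions it already answers correctly. The `QA` record, `blind_accuracy`, and the 0.5 threshold are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class QA:
    qid: str
    answer: str
    blind_guesses: list[str]  # answers from a text-only model that never saw the video

def blind_accuracy(item: QA) -> float:
    """Fraction of text-only attempts that already land on the gold answer."""
    if not item.blind_guesses:
        return 0.0
    return sum(g == item.answer for g in item.blind_guesses) / len(item.blind_guesses)

def keep_visually_grounded(items: list[QA], max_blind_acc: float = 0.5) -> list[QA]:
    """Hypothetical filter: keep questions the blind model gets right less than half the time."""
    return [it for it in items if blind_accuracy(it) < max_blind_acc]

if __name__ == "__main__":
    data = [
        QA("q1", "B", ["B", "B", "B", "B"]),  # answerable from text cues alone
        QA("q2", "C", ["A", "D", "C", "B"]),  # blind model at chance
        QA("q3", "A", ["B", "B", "A", "D"]),
    ]
    kept = keep_visually_grounded(data)
    print([q.qid for q in kept], f"{len(kept) / len(data):.0%} of the data kept")
```

The threshold trades data volume against leakage: set it near chance level for multiple-choice questions to be strict, or higher to keep more data.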

Key takeaways:
- 40–60% of long-video benchmark questions are answerable without watching the video, so "video understanding" may just be text reasoning
- Filtering out text-biased data and training on less actually performs better; data quality is the bottleneck
- Teams building video models need to audit whether their evaluation pipeline truly tests visual capability

Source: Watch Before You Answer: Learning from Visually Grounded Post-Training | Video-MME-v2

03 AI for Science | AI Designs Better Enzymes Without a Blueprint

Previous protein design models required humans to specify catalytic residue positions and types upfront. The model just filled in the sequence around those constraints — searching the neighborhood of known enzymes. DISCO skips that step entirely. Given only the reaction intermediate as a condition, it uses a diffusion model to jointly generate protein sequence and 3D structure, letting the model decide the catalytic strategy.

Inference-time scaling plays a key role. Joint optimization over sequence and structure during inference expands the search from the narrow neighborhood of known enzymes into chemically plausible regions nature never explored.
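DISCO's actual inference-time procedure is not described here. The generic idea of inference-time scaling can be sketched as best-of-N sampling: spend more compute drawing candidates from a generator and keep the one a scorer rates highest. `sample_candidate` and `score` below are hypothetical toy stand-ins (random amino-acid strings and an arbitrary scoring rule), not a protein model or a real activity predictor.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def sample_candidate(rng: random.Random, length: int = 12) -> str:
    """Toy generator: a uniformly random sequence (stand-in for a model sample)."""
    return "".join(rng.choice(AMINO_ACIDS) for _ in range(length))

def score(seq: str) -> int:
    """Arbitrary toy objective: count H and C residues. Not real chemistry."""
    return sum(aa in "HC" for aa in seq)

def best_of_n(n: int, seed: int = 0) -> tuple[str, int]:
    """Inference-time scaling by sampling: a larger n buys a wider search."""
    rng = random.Random(seed)
    best = max((sample_candidate(rng) for _ in range(n)), key=score)
    return best, score(best)

if __name__ == "__main__":
    for n in (1, 8, 64, 512):
        print(n, best_of_n(n))
```

With a fixed seed, each larger budget sees a superset of the smaller budget's samples, so the best score can only stay the same or improve as n grows.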

The results aren't slideware. Designed heme enzymes catalyze multiple carbene transfer reactions absent in nature, outperforming human-engineered enzymes in activity. Random mutagenesis confirms that directed evolution works on these designs: the AI-generated starting points can be improved iteratively.
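Directed evolution itself is a simple loop: randomly mutate the current best variant, screen the resulting library, keep the winner, and repeat. The sketch below shows that loop with a hypothetical toy fitness function in place of a wet-lab activity assay; the mutation rate, library size, and fitness target are illustrative assumptions, not the protocol used in the paper.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def mutate(seq: str, rng: random.Random, rate: float = 0.05) -> str:
    """Random mutagenesis: each position is resampled with probability `rate`."""
    return "".join(rng.choice(AMINO_ACIDS) if rng.random() < rate else aa for aa in seq)

def fitness(seq: str, target: str) -> int:
    """Hypothetical stand-in for a screening assay: positions matching a target."""
    return sum(a == b for a, b in zip(seq, target))

def directed_evolution(start: str, target: str, rounds: int = 20,
                       library_size: int = 50, seed: int = 0) -> list[int]:
    """Return the best fitness after each round (index 0 is the starting design)."""
    rng = random.Random(seed)
    parent = start
    history = [fitness(parent, target)]
    for _ in range(rounds):
        library = [mutate(parent, rng) for _ in range(library_size)]
        # Keep the parent in the pool so fitness never regresses (elitist selection).
        parent = max(library + [parent], key=lambda s: fitness(s, target))
        history.append(fitness(parent, target))
    return history

if __name__ == "__main__":
    history = directed_evolution("A" * 20, "ACDEFGHIKLMNPQRSTVWY", rounds=30)
    print(history[0], "->", history[-1])
```

The loop only needs a starting design that is viable enough for some mutants to screen better; that is the property the mutagenesis experiments establish for the DISCO designs.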

Key takeaways:
- Enzyme design moves from "human specifies catalytic strategy, model fills gaps" to "give it the reaction intermediate, let the model decide": a qualitative leap in design freedom
- Inference-time scaling pushes the search beyond known enzyme space into chemistry nature hasn't tried
- Designed enzymes are evolvable through directed evolution, making this a sustainable engineering pipeline

Source: General Multimodal Protein Design Enables DNA-Encoding of Chemistry

04 Agent | Office Agents: Up to 63% Success, Up to 23% Unsafe

LLM agents that manage email, calendars, and documents sound like a productivity revolution. ClawsBench from Apple quantifies the gap between "can do" and "can do safely." In a high-fidelity, stateful simulated workspace covering Gmail, Slack, Google Calendar, and two other services, the best agents complete 53–63% of tasks. But 7–23% of their actions are flagged unsafe.
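The two headline numbers are different ratios: task success is counted per task, while the unsafe rate described here is counted per action. The sketch below computes both from hypothetical episode logs; the `Episode` structure and the exact scoring rules are assumptions, since the benchmark's scoring code is not shown here.

```python
from dataclasses import dataclass

@dataclass
class Episode:
    task_succeeded: bool
    actions: int          # tool calls the agent issued
    unsafe_actions: int   # of those, how many a safety checker flagged

def success_rate(episodes: list[Episode]) -> float:
    """Per-task metric: fraction of episodes that completed the task."""
    return sum(e.task_succeeded for e in episodes) / len(episodes)

def unsafe_rate(episodes: list[Episode]) -> float:
    """Per-action metric: flagged actions over all actions across episodes."""
    return sum(e.unsafe_actions for e in episodes) / sum(e.actions for e in episodes)

if __name__ == "__main__":
    logs = [
        Episode(True, 10, 0),
        Episode(True, 8, 2),    # succeeded, but took unsafe shortcuts on the way
        Episode(False, 12, 1),
        Episode(False, 10, 0),
    ]
    print(f"success={success_rate(logs):.1%} unsafe={unsafe_rate(logs):.1%}")
```

A successful episode can still contain unsafe actions, as the second log shows, which is why the two metrics have to be reported side by side rather than folded into one score.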

The eight failure modes the benchmark exposes matter more than the leaderboard numbers. Examples include multi-step sandbox escapes and silent modification of contract terms. These aren't edge cases; they're systematic weaknesses in cross-service coordination. For teams building agent products, this failure taxonomy is more actionable than any accuracy score.
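One of these failure modes, silent contract term modification, suggests a concrete check a team could run in its own harness: diff a document before and after the agent acts, and flag any clause that changed without the task asking for it. The clause-keyed document format and the `allowed` set are hypothetical simplifications, not ClawsBench's actual checker.

```python
def silent_modifications(before: dict[str, str], after: dict[str, str],
                         allowed: set[str]) -> list[str]:
    """Return clause IDs that were added, removed, or changed outside `allowed`."""
    changed = {
        cid for cid in before.keys() | after.keys()
        if before.get(cid) != after.get(cid)
    }
    return sorted(changed - allowed)

if __name__ == "__main__":
    before = {"1.1": "Term: 12 months", "2.3": "Payment due in 30 days", "4.0": "Governing law: CA"}
    after = {"1.1": "Term: 24 months", "2.3": "Payment due in 60 days", "4.0": "Governing law: CA"}
    # The task only asked the agent to extend the term (clause 1.1).
    print(silent_modifications(before, after, allowed={"1.1"}))
```

Here the requested edit to clause 1.1 passes, while the unrequested change to the payment terms in 2.3 is flagged.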

Key takeaways:
- Best models hit 53–63% success but 7–23% unsafe operations, and the two metrics don't correlate: stronger doesn't mean safer
- Eight systematic failure modes (sandbox escape, silent contract edits, etc.) map the core risk surface of cross-service agents
- The benchmark's value is its high-fidelity simulation and failure classification; teams doing agent safety testing can reference its framework directly

Source: ClawsBench: Evaluating Capability and Safety of LLM Productivity Agents in Simulated Workspaces


Today's Observation

Two evaluation papers today start from different domains, diagnose opposite problems, and prescribe opposite treatments.

ClawsBench tests office agents and concludes that existing test environments are too fake: toy tasks can't capture real multi-service workflows. The fix: build high-fidelity simulators. VidGround audits video understanding benchmarks and concludes the tests have shortcuts: models score high without truly watching the videos. The fix: close the text-bias loophole.

This split maps directly onto product teams' diagnostic decisions. If your internal tests pass but user complaints keep coming, you likely have the first problem — test environments too simple to capture real-world complexity and state dependencies. If tests pass and user experience seems fine, but you suspect the model is gaming the metric rather than building the target capability, that's the second. Look for information leakage and modality shortcuts in your evaluation.

A quick self-check: replace the key input modality (images, video, tool-call results) with random noise and rerun your test suite. If pass rates barely drop, your benchmark is testing reading comprehension, not the actual capability.
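This noise-substitution check is easy to automate. The sketch below scores a model twice, once with real inputs and once with the key modality replaced by Gaussian noise, and reports both pass rates. The `(text, signal, gold)` example format, the two toy models, and the tiny dataset are hypothetical placeholders for your own harness; how large a drop counts as "enough" remains a judgment call, with chance-level accuracy as a useful reference point.

```python
import random
from typing import Callable, Sequence

# (question text, key-modality features, gold answer); a hypothetical format.
Example = tuple[str, list[float], str]
Model = Callable[[str, list[float]], str]

def pass_rate(model: Model, data: Sequence[Example]) -> float:
    return sum(model(text, signal) == gold for text, signal, gold in data) / len(data)

def modality_ablation(model: Model, data: Sequence[Example], seed: int = 0) -> tuple[float, float]:
    """Pass rate with real inputs vs. with the key modality replaced by Gaussian noise."""
    rng = random.Random(seed)
    noised = [(text, [rng.gauss(0.0, 1.0) for _ in signal], gold)
              for text, signal, gold in data]
    return pass_rate(model, data), pass_rate(model, noised)

# Two toy models: one exploits a wording cue, one actually reads the signal.
def shortcut_model(text: str, signal: list[float]) -> str:
    return "yes" if "really" in text else "no"

def grounded_model(text: str, signal: list[float]) -> str:
    return "yes" if signal[0] > 0 else "no"

DATA: list[Example] = [
    ("Is the door really open?", [1.0], "yes"),
    ("Is the light on?", [-1.0], "no"),
    ("Is it really raining?", [2.0], "yes"),
    ("Is the cup full?", [-0.5], "no"),
]

if __name__ == "__main__":
    for m in (shortcut_model, grounded_model):
        real, noised = modality_ablation(m, DATA)
        print(f"{m.__name__}: real={real:.2f} noised={noised:.2f} drop={real - noised:.2f}")
```

The shortcut model keeps its full score under noise, which is exactly the failure this self-check is meant to expose; the grounded model's noised score depends on the random draws and, on a larger set, tends toward chance.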