Today's Overview

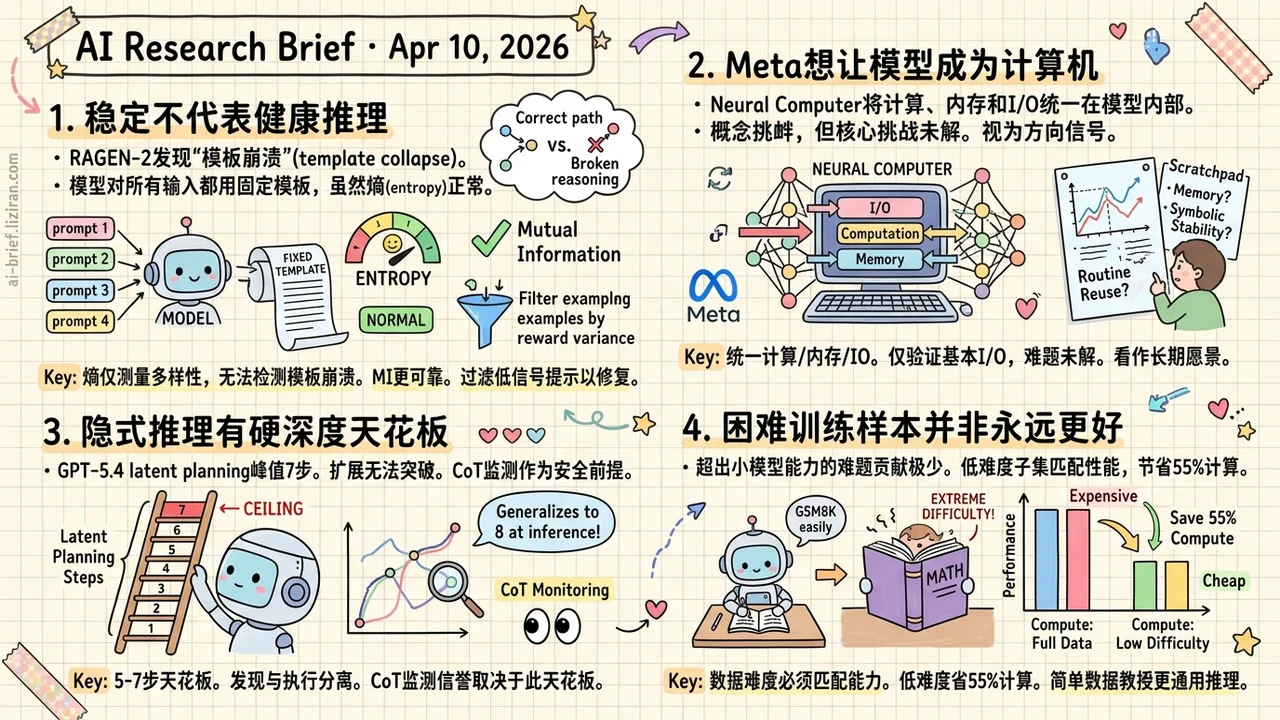

- Stable entropy doesn't mean healthy reasoning. RAGEN-2 exposes "template collapse" in agentic RL: models learn fixed templates for all inputs while entropy looks perfectly fine. Mutual information is the more reliable training signal.

- Meta wants the model to be the computer. Neural Computer unifies computation, memory, and I/O inside the model itself. The concept is provocative, but core challenges remain unsolved. Treat it as a directional signal.

- Implicit reasoning has a hard depth ceiling. Even the largest models top out at 7 latent planning steps. Scaling doesn't break through, which gives experimental backing to CoT monitoring as a safety premise.

- Harder training samples aren't always better for GRPO. Problems beyond a small model's capacity contribute almost no learning signal. A low-difficulty subset matches full-dataset performance while saving 55% of compute.

Featured

01 Entropy Looks Fine. Your Agent's Reasoning Isn't.

Entropy is the go-to health metric for RL-trained multi-turn agents. Stable entropy during training? The model is exploring normally. RAGEN-2 shows this assumption can be dangerously wrong. A model can maintain perfectly normal entropy while defaulting to a single fixed template for every input. Outputs look diverse, but the reasoning path is completely decoupled from the actual problem. The authors call this template collapse, and no existing training metric catches it.

The diagnosis introduces mutual information (MI) to measure whether reasoning actually distinguishes between different inputs. Across planning, math, web navigation, and code execution tasks, MI correlates with final task performance far more strongly than entropy. The underlying mechanism is clean: when reward variance is low, the task gradient signal is too weak, so regularization dominates the optimization and flattens reasoning differences across inputs.
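A toy sketch of why the two metrics can disagree. It assumes reasoning traces have already been discretized into labels (for example, cluster ids), which is a hypothetical simplification; the function names and the plug-in estimator are illustrative and not necessarily what RAGEN-2 uses.

```python
import math
from collections import Counter


def entropy_bits(counts):
    """Shannon entropy in bits of a Counter of label occurrences."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def mi_and_conditional_entropy(samples):
    """Plug-in estimates of I(X; Z) and H(Z | X) in bits.

    samples: list of (input_id, reasoning_label) pairs, where the label is a
    hypothetical discretization of a reasoning trace (e.g. a cluster id).
    """
    total = len(samples)
    h_z = entropy_bits(Counter(z for _, z in samples))
    per_input = {}
    for x, z in samples:
        per_input.setdefault(x, Counter())[z] += 1
    # H(Z | X): per-input entropy, weighted by how often each input appears.
    h_z_given_x = sum(
        (sum(c.values()) / total) * entropy_bits(c) for c in per_input.values()
    )
    return h_z - h_z_given_x, h_z_given_x


# Healthy: each input gets its own reasoning, with some sampling diversity.
healthy = [("a", "a1"), ("a", "a2"), ("b", "b1"), ("b", "b2")]
# Collapsed: both inputs draw from the same two templates.
collapsed = [("a", "t1"), ("a", "t2"), ("b", "t1"), ("b", "t2")]

for name, data in [("healthy", healthy), ("collapsed", collapsed)]:
    mi, h_cond = mi_and_conditional_entropy(data)
    print(f"{name}: per-input entropy={h_cond:.2f} bits, MI={mi:.2f} bits")
```

Both toy datasets show the same per-input entropy (1 bit), so an entropy dashboard cannot tell them apart. Only MI separates them: 1 bit for the healthy case, 0 bits for the collapsed one.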

The fix is straightforward. Filter prompts by reward variance each iteration, keeping only high-signal examples so task gradients regain control. The paper reports consistent improvements across all four task types. If you're doing agentic RL, your entropy dashboard may have been giving you false confidence all along.
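A minimal sketch of the filtering step, assuming each prompt has several rollouts with scalar rewards in the current iteration. The function name, the `keep_fraction` parameter, and the top-fraction selection rule are illustrative assumptions; the paper may use a different threshold or schedule.

```python
from statistics import pvariance


def filter_by_reward_variance(rollout_rewards, keep_fraction=0.5):
    """Keep the prompts whose rollouts disagree most on reward.

    rollout_rewards: dict mapping prompt_id -> list of rewards, one per
    rollout sampled for that prompt in the current iteration.
    keep_fraction: hypothetical knob for how many prompts survive.
    """
    ranked = sorted(
        rollout_rewards,
        key=lambda pid: pvariance(rollout_rewards[pid]),
        reverse=True,
    )
    n_keep = max(1, int(len(ranked) * keep_fraction))
    return ranked[:n_keep]


rewards = {
    "p1": [0, 0, 0, 0],  # always fails: zero variance
    "p2": [1, 0, 1, 0],  # mixed outcomes: highest variance
    "p3": [1, 1, 1, 1],  # always succeeds: zero variance
    "p4": [1, 0, 0, 0],  # occasionally succeeds
}
kept = filter_by_reward_variance(rewards, keep_fraction=0.5)
print(kept)
```

Prompts where every rollout gets the same reward are dropped first, since they are the ones where the task gradient has the least to say.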

Key takeaways:

- Entropy measures diversity within a single input but cannot detect "template collapse" where the model applies identical reasoning across different inputs
- Mutual information is a more reliable training health indicator for agentic RL; teams should add it to their monitoring stack
- The fix is simple: filter low-signal prompts by reward variance so task gradients dominate optimization again

Source: RAGEN-2: Reasoning Collapse in Agentic RL

02 Architecture: Meta Wants the Model to Be the Computer

Agents call tools. World models learn environment dynamics. Meta proposes a third path: make the model itself the computer, unifying computation, memory, and I/O in the model's runtime state. Bold framing. What's the actual implementation? A video model that generates screen frames one at a time, taking instructions and pixels as input, producing the next frame as output.

Experiments show it can learn basic I/O alignment and short-horizon control. Routine reuse, controlled updates, and symbolic stability are all still open problems. This is a vision paper describing a long-term direction, not a working system. The distance between the "Completely Neural Computer" they define and what they demonstrate is vast.

Key takeaways:

- Neural Computer aims to make the model itself serve as a computer, distinct from both agent and world-model paradigms
- Only the most basic I/O primitives are validated; memory reuse and symbolic stability remain unsolved
- The concept is thought-provoking but far from engineering reality. Read it as a directional signal, not a technical blueprint

Source: Neural Computers

03 Reasoning: How Deep Can Models Think Without Showing Their Work?

GPT-5.4's latent planning tops out at 7 steps on graph path-finding tasks. That's the current measured ceiling. The experimental setup precisely controls the required reasoning depth. Small Transformers trained from scratch discover 3-step strategies, and fine-tuned GPT-4o and Qwen3-32B reach 5 steps. Massive scaling doesn't yield an order-of-magnitude leap.

One interesting dissociation emerges. Models can only discover strategies up to 5 steps during training, but at inference time, a discovered strategy generalizes to 8 steps. Strategy discovery and strategy execution are separable capabilities.

This ceiling matters directly for AI safety. If complex reasoning can't be completed implicitly in a single forward pass, models must externalize their reasoning. That's exactly the condition under which CoT monitoring works. Whether this graph-task ceiling transfers to broader real-world scenarios is the open question for follow-up work.

Key takeaways:

- Latent planning has a depth ceiling of 5-7 steps that scaling alone does not break
- Strategy discovery and execution are separable: training finds 5-step strategies, but inference generalizes to 8 steps
- CoT monitoring's credibility depends on whether this ceiling holds across real-world tasks, not just graph search

Source: The Depth Ceiling: On the Limits of Large Language Models in Discovering Latent Planning

04 Training: Harder Problems Don't Help Small Models Learn Under GRPO

Sub-3B models aligned with GRPO for math reasoning hit a clear difficulty boundary. As problem difficulty increases, accuracy quickly plateaus. Samples beyond the model's current reasoning capacity contribute almost no learning signal. This ICLR workshop paper explains why: GRPO redistributes output preferences but can't conjure reasoning abilities the model doesn't have.
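One way to see why out-of-reach problems carry so little signal, assuming the commonly used GRPO formulation in which each rollout's advantage is its reward normalized within the group sampled for the same prompt. This is a sketch of that general mechanism, not a reproduction of the paper's analysis; the function name and the `eps` constant are illustrative.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each rollout's reward against its own group (one prompt)."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # population std; eps avoids division by zero
    return [(r - mu) / (sigma + eps) for r in rewards]


# A problem beyond the model's reach: every sampled answer is wrong.
too_hard = group_relative_advantages([0.0] * 8)
# A problem within reach: some samples succeed, some fail.
within_reach = group_relative_advantages([1.0, 0.0, 0.0, 1.0, 0.0, 1.0, 1.0, 0.0])

print(too_hard)      # all zeros: nothing to push up or down
print(within_reach)  # positive for correct samples, negative for incorrect
```

When no rollout in a group succeeds, every advantage is zero and the prompt contributes nothing to the policy update, however many steps are spent on it.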

The practical finding is sharper. Training on only low-difficulty problems matches full-dataset accuracy while requiring roughly 45% of the training steps. One unexpected transfer effect stands out: GRPO trained on GSM8K scores 3-5 percentage points higher on MATH's numeric subset than GRPO trained directly on MATH. Simpler data may teach more generalizable reasoning patterns.
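A hedged sketch of how a team might build a low-difficulty subset. It uses the current model's pass rate per problem as a stand-in for difficulty, which is an assumption; the paper may define difficulty differently (for example, by dataset-provided levels). The function name and thresholds are hypothetical.

```python
def stratify_by_pass_rate(pass_rates, easy_min=0.5, hard_max=0.1):
    """Bucket problems by how often the current model already solves them.

    pass_rates: dict mapping problem_id -> fraction of sampled attempts solved.
    easy_min / hard_max: hypothetical cut-offs; tune them per model and dataset.
    """
    strata = {"easy": [], "medium": [], "hard": []}
    for problem_id, rate in pass_rates.items():
        if rate >= easy_min:
            strata["easy"].append(problem_id)
        elif rate <= hard_max:
            strata["hard"].append(problem_id)
        else:
            strata["medium"].append(problem_id)
    return strata


pass_rates = {"q1": 0.9, "q2": 0.3, "q3": 0.0, "q4": 0.6}
strata = stratify_by_pass_rate(pass_rates)
training_subset = strata["easy"]  # train on the low-difficulty stratum only
print(strata)
```

Tracking success rate per stratum during training also shows whether the hard bucket is improving at all, or just consuming steps.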

Key takeaways:

- Hard samples beyond a small model's capacity yield diminishing returns; data difficulty must match model capacity
- Low-difficulty subsets save about 55% compute with no accuracy loss
- Teams using RL to align small models can adopt this difficulty-stratification strategy to optimize their data mix directly

Source: Limits of Difficulty Scaling: Hard Samples Yield Diminishing Returns in GRPO-Tuned SLMs

Today's Observation

Reasoning optimization is accumulating a specific kind of knowledge: where each improvement strategy stops working. Three failure modes from today cover three distinct layers. RAGEN-2 finds that entropy decouples from reasoning diversity when reward variance is low. Depth Ceiling finds that model scale hits a hard wall for latent planning depth. The GRPO difficulty-scaling paper finds that harder training data stops paying off once it exceeds model capacity. The common structure isn't "reasoning is hard." It's that every optimization axis has a proxy gap: a tipping point where standard metrics start deceiving you. Identifying these tipping points has more engineering value than continuing to climb the metric. A concrete recommendation: for each proxy metric in your RL training pipeline (entropy, loss, difficulty distribution), pair it with a direct behavioral measure (mutual information, stratified success rate) as a cross-check. When the two trends diverge, you've hit the boundary.
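The cross-check can be sketched as a simple divergence test over logged metric histories. Everything here is an illustrative assumption: the function names, the window size, and the tolerances would all need tuning against a real training run.

```python
def relative_change(series, window):
    """Relative change between the mean of the first and last `window` points."""
    start = sum(series[:window]) / window
    end = sum(series[-window:]) / window
    return (end - start) / max(abs(start), 1e-12)


def proxy_gap(proxy, behavior, window=3, stable_tol=0.05, drop_tol=0.2):
    """Flag a proxy gap: the proxy looks stable while the behavioral measure falls.

    proxy: history of a proxy metric (e.g. entropy).
    behavior: history of a direct behavioral measure (e.g. mutual information).
    stable_tol / drop_tol: hypothetical tolerances on relative change.
    """
    proxy_stable = abs(relative_change(proxy, window)) <= stable_tol
    behavior_dropped = relative_change(behavior, window) <= -drop_tol
    return proxy_stable and behavior_dropped


entropy_log = [2.0, 2.1, 1.9, 2.0, 2.05, 1.95]  # flat throughout
mi_falling = [1.0, 0.9, 0.8, 0.3, 0.2, 0.1]     # collapsing
mi_steady = [1.0, 0.9, 1.1, 1.0, 1.05, 0.95]    # flat throughout

print(proxy_gap(entropy_log, mi_falling))  # True: the trends diverge
print(proxy_gap(entropy_log, mi_steady))   # False: both look stable
```

The same check applies to other pairs, such as aggregate loss against per-stratum success rate.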