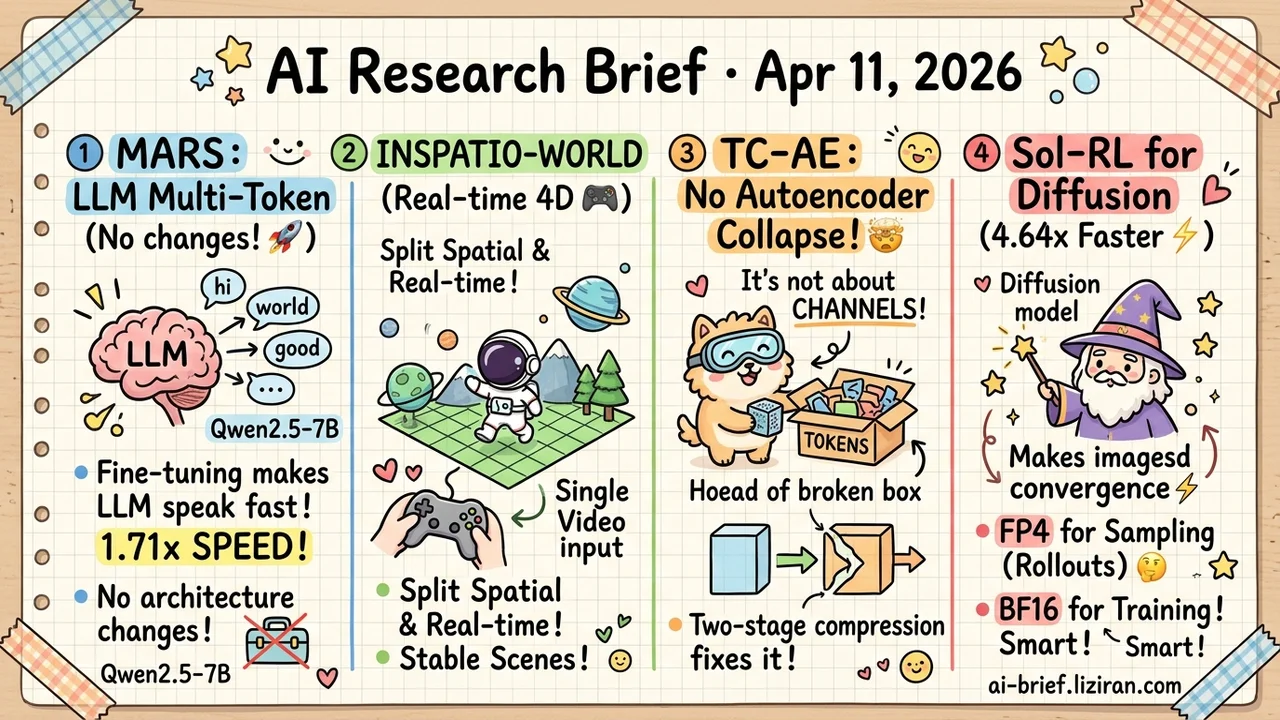

Today's Overview

- Fine-tuning alone teaches LLMs to output multiple tokens per step. MARS needs no architecture changes and no extra parameters. Qwen2.5-7B hits 1.71x wall-clock speedup with near-zero migration cost.

- Image autoencoder collapse isn't a channel problem. TC-AE shows the real bottleneck is token utilization. A two-stage compression path fixes it without adding complexity.

- World models no longer trade spatial consistency for real-time speed. INSPATIO-WORLD splits the two concerns into separate modules, generating navigable 4D scenes from a single video input.

- RL alignment for diffusion models doesn't need full precision everywhere. FP4 for exploration, BF16 for training. Convergence speeds up by up to 4.64x with no quality loss.

Featured

01 Efficiency Fine-Tuning Alone Unlocks Multi-Token Generation

Multi-token prediction isn't new. Previous approaches always carried overhead. Speculative decoding requires a separate draft model. Medusa adds extra prediction heads. MARS takes a cleaner path: no architecture changes, no added parameters, no new components. Standard instruction-tuning on existing data teaches the model to predict multiple tokens per step.

The fine-tuned model stays fully backward compatible. On six benchmarks, single-token mode matches or beats the original. Multi-token mode lifts throughput 1.5-1.7x, and wall-clock speedup on Qwen2.5-7B reaches 1.71x. A built-in confidence threshold controls how many tokens to accept per step at runtime, so a deployment under heavy load can speed up on the fly without a service restart.
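A minimal sketch of how a runtime confidence threshold could decide how many tokens to accept per step. This is an illustration under stated assumptions, not the MARS implementation: the function name `accept_prefix`, the (token, confidence) input format, and the rule "always keep the first token, then stop at the first low-confidence position" are all hypothetical.

```python
from typing import List, Tuple


def accept_prefix(candidates: List[Tuple[str, float]], threshold: float) -> List[str]:
    """Return the tokens accepted in one decoding step.

    `candidates` holds (token, confidence) pairs for consecutive positions.
    The first token is always kept so every step makes progress; later
    tokens are kept only while their confidence stays at or above `threshold`.
    (Hypothetical acceptance rule, chosen for illustration.)
    """
    if not candidates:
        return []
    accepted = [candidates[0][0]]
    for token, confidence in candidates[1:]:
        if confidence < threshold:
            break  # stop at the first low-confidence position
        accepted.append(token)
    return accepted


# Made-up confidences for one step of four predicted positions.
step = [("The", 0.99), ("quick", 0.93), ("brown", 0.71), ("fox", 0.95)]
print(accept_prefix(step, threshold=0.9))  # ['The', 'quick']
print(accept_prefix(step, threshold=0.7))  # ['The', 'quick', 'brown', 'fox']
print(accept_prefix(step, threshold=1.1))  # ['The']
```

In this toy version, lowering the threshold accepts more tokens per step, and a threshold above 1.0 falls back to one token per step. Because the threshold is just a runtime argument, changing it requires no reload.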

Inference acceleration just became a pure fine-tuning problem. Take your existing model, run one training pass. API unchanged, deployment unchanged, calling conventions unchanged. Teams running LLM serving should evaluate this seriously. Migration cost may be lower than expected.

Key takeaways:

- Pure fine-tuning achieves multi-token generation with zero extra parameters and zero architecture changes, making migration cost minimal
- Fully compatible with original single-token inference; multi-token mode delivers 1.5-1.7x throughput gains
- Runtime confidence threshold enables dynamic speed adjustment under load without service restart

Source: MARS: Enabling Autoregressive Models Multi-Token Generation

02 Multimodal World Models: Spatial Consistency Meets Real-Time Speed

World models face a persistent tradeoff. Spatial consistency demands heavy computation. Real-time interaction demands cutting corners. Walk a few steps and the scene falls apart. INSPATIO-WORLD decouples the two into separate modules: an implicit spatiotemporal cache maintains global scene consistency, while an explicit spatial constraint module handles geometry and camera trajectory.

Input is a single reference video. Output is a navigable 4D interactive scene. Joint distribution matching distillation (JDMD) deserves separate attention: it uses real data distributions to correct quality degradation from synthetic training data. That idea transfers to other world model work.

The system ranks first among real-time interactive methods on WorldScore-Dynamic, beating existing approaches in both spatial consistency and interaction precision.

Key takeaways:

- Spatial consistency and real-time interaction are no longer mutually exclusive; dedicated modules solve each independently
- Real-data distribution distillation mitigates synthetic training degradation, a transferable technique for other world model pipelines
- Teams building 3D/4D interactive scenes should watch this single-video-to-navigable-environment pipeline

Source: INSPATIO-WORLD: A Real-Time 4D World Simulator via Spatiotemporal Autoregressive Modeling

03 Image Gen Everyone Adds Channels. The Real Problem Is Token Utilization.

Image autoencoders collapse under high compression. The standard fix is adding more channels. TC-AE argues this instinct is wrong from the start. Under deep compression, most tokens collapse into near-identical representations. More channels just give broken tokens extra dimensions to repeat the same information.

The actual bottleneck is the token-to-latent compression step. It discards structural information too aggressively. TC-AE's fix is simple: split compression into two stages to preserve structure, then use self-supervised training to inject semantic content into tokens. No added architectural complexity. Reconstruction and generation quality both improve under deep compression.
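The collapse argument can be checked on toy data. The sketch below uses mean pairwise cosine similarity as a collapse diagnostic. That metric, the helper names, and the example vectors are assumptions for illustration, not something taken from TC-AE. It shows that widening near-identical tokens with channels that repeat the same information leaves them just as collapsed.

```python
import math
from itertools import combinations
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two non-zero vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def mean_pairwise_cosine(tokens: List[Sequence[float]]) -> float:
    """Average cosine similarity over all token pairs (1.0 means fully collapsed)."""
    pairs = list(combinations(tokens, 2))
    return sum(cosine(a, b) for a, b in pairs) / len(pairs)


# Three nearly identical 2-channel tokens (hypothetical values).
collapsed = [[1.0, 2.0], [1.01, 2.0], [1.0, 1.99]]
# "More channels": each token concatenated with a copy of itself.
widened = [t + t for t in collapsed]
# Tokens that actually carry different information.
diverse = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]

print(mean_pairwise_cosine(collapsed))  # very close to 1.0
print(mean_pairwise_cosine(widened))    # same score despite twice the channels
print(mean_pairwise_cosine(diverse))    # about -0.33
```

A tokenizer team could run a diagnostic like this on encoder outputs before deciding whether extra channels are worth the cost.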

Teams building visual tokenizers should revisit their compression paths rather than stacking channels.

Key takeaways:

- Representation collapse in high-compression autoencoders stems from token utilization, not channel count
- Two-stage token-to-latent compression preserves structural information that single-stage pipelines discard
- Visual tokenizer teams should rethink compression path design instead of adding channels

Source: TC-AE: Unlocking Token Capacity for Deep Compression Autoencoders

04 Training Exploration and Training Don't Need the Same Precision

RL alignment for diffusion models burns compute on rollouts. The rollout phase is pure sampling: generating candidate images in bulk, selecting contrastive pairs. No gradient computation involved. Numerical precision tolerance is far higher than during policy updates. Sol-RL exploits this asymmetry directly: FP4 for high-throughput rollout generation, BF16 for gradient optimization after selection.
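A toy sketch of the explore-cheap, train-precise split. Everything here is a stand-in: `fake_fp4` only simulates 4-bit rounding in ordinary Python floats (using the E2M1 magnitude grid with one shared scale), the "policy" is a four-weight dot product, and `reward` is a hypothetical function. It is not the Sol-RL pipeline and runs no real FP4 kernels.

```python
import math
import random
from typing import List, Tuple

# Magnitudes representable in the 4-bit E2M1 float format (sign stored separately).
FP4_GRID = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def fake_fp4(values: List[float]) -> List[float]:
    """Round values to the FP4 grid using one shared scale (simulation only)."""
    peak = max(abs(v) for v in values)
    if peak == 0.0:
        return list(values)
    scale = peak / FP4_GRID[-1]
    rounded = []
    for v in values:
        nearest = min(FP4_GRID, key=lambda g: abs(abs(v) / scale - g))
        rounded.append(math.copysign(nearest * scale, v))
    return rounded


def generate(weights: List[float], noise: List[float]) -> float:
    """Toy sampler: one scalar 'sample' per noise vector."""
    return sum(w * n for w, n in zip(weights, noise))


def reward(sample: float) -> float:
    """Hypothetical reward that prefers samples close to 1.0."""
    return -abs(sample - 1.0)


def select_pair(weights: List[float], noises: List[List[float]]) -> Tuple[int, int]:
    """Explore with FP4-rounded weights; return indices of the best and worst rollout."""
    cheap = fake_fp4(weights)
    scores = [reward(generate(cheap, n)) for n in noises]
    best = max(range(len(noises)), key=scores.__getitem__)
    worst = min(range(len(noises)), key=scores.__getitem__)
    return best, worst


rng = random.Random(0)
weights = [0.9, -0.3, 0.13, 1.2]
noises = [[rng.gauss(0.0, 1.0) for _ in weights] for _ in range(64)]
best, worst = select_pair(weights, noises)

# The update phase would recompute only the selected pair at full precision.
full_best = reward(generate(weights, noises[best]))
full_worst = reward(generate(weights, noises[worst]))
print(fake_fp4(weights))
print(full_best > full_worst)
```

The point of the sketch is the shape of the loop: the rounded weights only need to rank candidates well enough to pick a contrastive pair, while the values that would feed a gradient step come from the unrounded weights.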

Experiments on FLUX.1-12B, SANA, and SD3.5-L confirm training quality matches pure BF16. Convergence speed improves by up to 4.64x. The logic is simple but precise: exploration is not learning. Their compute requirements differ structurally.

Teams doing diffusion model RLHF can apply this split directly to cut rollout-phase costs.

Key takeaways:

- Exploration and learning have naturally asymmetric precision needs; FP4/BF16 split targets this structure precisely
- Not a general quantization scheme but one designed specifically for RL rollout sampling characteristics
- Diffusion model RLHF teams can directly adopt this approach to reduce rollout computation overhead

Source: FP4 Explore, BF16 Train: Diffusion Reinforcement Learning via Efficient Rollout Scaling

Also Worth Noting

Today's Observation

Four papers solving different problems share one principle: resources are unevenly needed across system components, and uniform allocation is waste. MARS finds consecutive token predictability varies enormously. High-confidence positions can be emitted together in a single step. Sol-RL finds the exploration phase is just sampling; FP4 precision is plenty. Save BF16 for gradient updates that actually need numerical accuracy. TC-AE finds most tokens collapse into near-identical representations under compression. The problem isn't insufficient channel capacity but uneven token utilization. Q-Zoom finds that relevant visual regions differ entirely by query. Global high resolution feeds attention mechanisms garbage.

An engineering intuition worth internalizing: when you hit a resource bottleneck, don't reach for "compress everything" or "downgrade uniformly." Ask which components don't actually need the resources they're getting. Non-uniform allocation almost always beats uniform compression, because uniform strategies assume all parts are equally important. That assumption is almost never true. Next time you face inference latency, memory limits, or slow training, profile the resource distribution first. As a rule of thumb, you'll likely find that only about 20% of positions genuinely need the resources they receive. Spending on the other 80% can be cut aggressively with minimal impact.
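One way to start profiling the resource distribution is to measure how concentrated spending already is. The sketch below is a minimal helper under stated assumptions: the function name `top_share`, the component names, and the millisecond figures are all hypothetical, and real numbers would come from your own profiler.

```python
from typing import Dict


def top_share(costs: Dict[str, float], fraction: float = 0.2) -> float:
    """Share of total cost consumed by the most expensive `fraction` of items."""
    ranked = sorted(costs.values(), reverse=True)
    k = max(1, round(len(ranked) * fraction))  # always look at one item or more
    return sum(ranked[:k]) / sum(ranked)


# Hypothetical per-component latency in milliseconds.
latency_ms = {
    "attention": 60.0,
    "mlp": 25.0,
    "sampling": 6.0,
    "embed": 5.0,
    "norm": 4.0,
}
print(top_share(latency_ms))  # 0.6
```

Concentration alone does not say which components need their budget. It only points to where a closer look, such as a precision or resolution ablation, would pay off first.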