Today's Overview

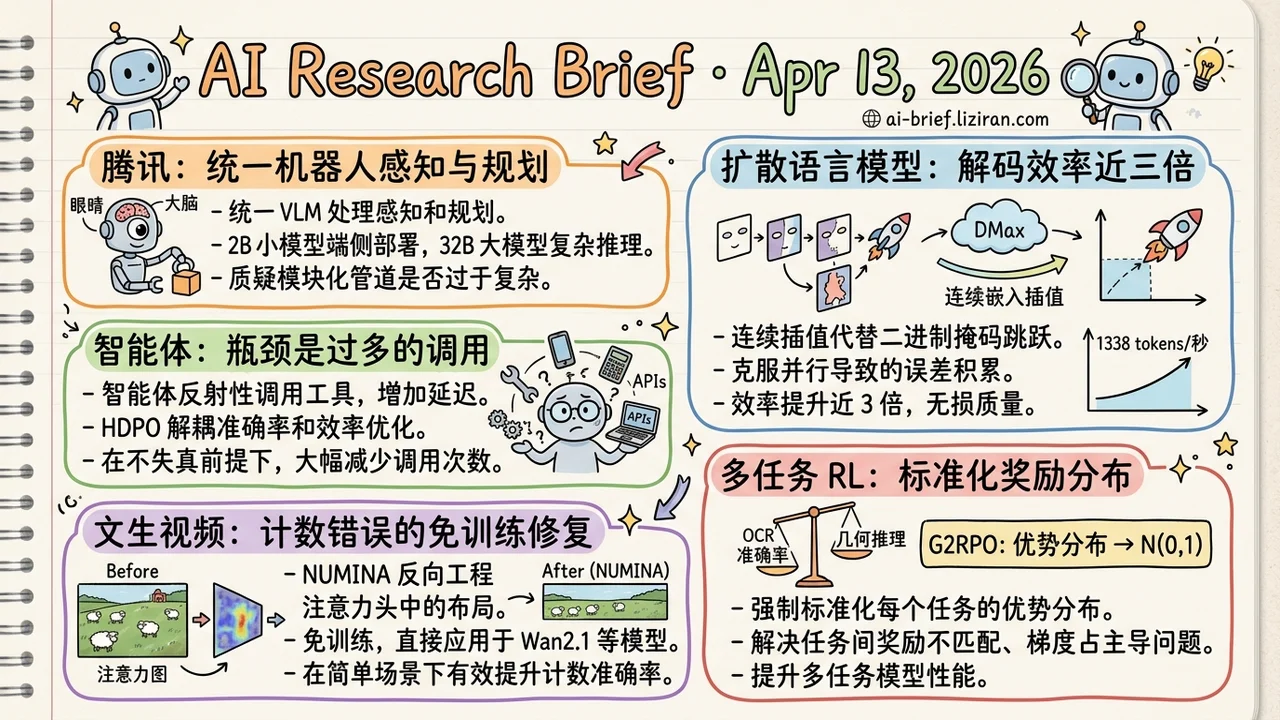

- Tencent unifies robot perception and planning in a single VLM. They release both a 2B on-device model and a 32B reasoning model, calling into question whether modular pipelines are still worth their complexity.

- Parallel decoding efficiency for diffusion language models nearly triples. DMax replaces binary mask flips with continuous embedding interpolation, hitting 1,338 tokens per second on two H200s.

- The real bottleneck for agents isn't too few tools; it's too many calls. HDPO decouples accuracy and efficiency into orthogonal channels, cutting tool calls by orders of magnitude while accuracy improves.

- Text-to-video counting errors get a training-free fix. NUMINA reverse-engineers object layouts from attention heads and corrects them, plugging directly into Wan2.1 with no retraining.

- Multi-task RL finally has a principled answer to mismatched reward distributions. G2RPO normalizes each task's advantage to N(0,1), beating comparable open-source models across 18 benchmarks.

Featured

01 Robotics Do Robots Still Need Separate Vision Modules? Tencent Says No

Embodied AI has favored modular architectures for years: one model for perception, one for planning, one for control, all stitched into a pipeline. Tencent's HY-Embodied goes the opposite direction. A single VLM handles both spatial perception and task planning, then feeds directly into a VLA (Vision-Language-Action model) for control.

Two model sizes ship: 2B active parameters for on-device deployment, 32B for complex reasoning. The large model distills its capabilities into the small one via on-policy distillation. A Mixture-of-Transformers (MoT) architecture routes different modalities through separate compute paths, preventing vision and language from competing for capacity.

Benchmark numbers look strong: the 2B model beats same-class SOTA on 16 of 22 benchmarks, and the 32B approaches Gemini 3.0 Pro. But embodied AI benchmarks and real-robot performance are notoriously far apart. The real-world experiment details need a close read. The architectural bet matters more than the scores: if VLMs get strong enough, the complexity and maintenance cost of modular pipelines may no longer be justified.

Key takeaways:

- Unifying perception and planning in a single VLM is a clear bet on where embodied AI is heading, trading modular pipeline complexity for one model.
- The dual-size design (2B on-device, 32B for reasoning) fits real deployment needs better than a single model.
- Benchmarks are encouraging, but real-robot validation remains the binding constraint. Full paper details matter here.

Source: HY-Embodied-0.5: Embodied Foundation Models for Real-World Agents

02 Efficiency Diffusion Language Models Can Finally Go Full Parallel

Diffusion language models (dLLMs) have a frustrating gap between theory and practice. They can generate all tokens in parallel, but cranking up parallelism causes error accumulation and quality collapse. DMax fixes this by replacing the binary mask-to-token jump with continuous self-refinement. Each decoding step interpolates between predicted embeddings and mask embeddings, letting the model gradually "purify" its output in embedding space instead of gambling on one-shot predictions.
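The interpolation idea can be sketched in a few lines. This is a minimal illustration, not DMax's actual update rule: the function name `soft_refine_step`, the use of the top softmax probability as the blending weight, and the tensor shapes are all assumptions made for the example.

```python
import numpy as np

def soft_refine_step(logits, token_emb, mask_emb):
    """One hypothetical refinement step: blend predicted and mask embeddings.

    logits:    (seq_len, vocab) model predictions for each position
    token_emb: (vocab, dim) token embedding table
    mask_emb:  (dim,) embedding of the mask token
    """
    # Numerically stable softmax over the vocabulary.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    probs = np.exp(shifted)
    probs /= probs.sum(axis=-1, keepdims=True)

    # Expected embedding under the predicted distribution.
    predicted = probs @ token_emb                    # (seq_len, dim)

    # Assumption: the top probability serves as the interpolation weight.
    confidence = probs.max(axis=-1, keepdims=True)   # (seq_len, 1)

    # Confident positions move toward their prediction;
    # uncertain positions stay close to the mask embedding.
    return confidence * predicted + (1.0 - confidence) * mask_emb
```

Feeding the blended embeddings back in as the next step's input is what would let a position be revised later instead of being locked in after a single binary unmasking decision.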

A new training strategy, On-Policy Uniform Training, teaches the model to correct its own mistakes. That's the actual prerequisite for aggressive parallelism. The results are concrete: effective tokens per step on GSM8K jump from 2.04 to 5.47, MBPP from 2.71 to 5.86. Two H200s hit 1,338 tokens per second on a single batch.
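As a quick sanity check on the size of the gain, the per-step ratios can be computed directly from the figures quoted above. Nothing here is new data; the dictionary simply restates the reported before and after values.

```python
# Effective tokens per decoding step, before and after, as reported above.
reported = {"GSM8K": (2.04, 5.47), "MBPP": (2.71, 5.86)}

speedups = {name: after / before for name, (before, after) in reported.items()}

for name, ratio in speedups.items():
    print(f"{name}: {ratio:.2f}x")
# GSM8K: 2.68x
# MBPP: 2.16x
```

On these two numbers alone, "nearly triples" describes the GSM8K figure best; the MBPP gain is closer to 2.2x.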

Key takeaways:

- Shifting from discrete mask switching to continuous embedding interpolation solves error accumulation under aggressive parallelism.
- Nearly 3x efficiency gain with no accuracy sacrifice. Parallelism can finally be pushed to the limit.
- The biggest engineering barriers to dLLMs challenging autoregressive models are being dismantled one by one.

Source: DMax: Aggressive Parallel Decoding for dLLMs

03 Agent The Skill to Learn Isn't Calling More Tools; It's Restraint

Excessive tool calling in agents isn't a bug. It's a systemic metacognition failure. Multimodal agents exhibit reflexive tool invocation: they'll call an external API even when the answer is already visible in the image, adding latency and noise. Existing RL approaches use scalar penalties to suppress overcalling, but that creates a dilemma. Penalize too hard and you suppress necessary calls; too soft and the accuracy reward's variance drowns out the signal.

HDPO splits accuracy and efficiency into two orthogonal optimization channels. First ensure the task is done correctly, then optimize call efficiency among correct trajectories. The resulting Metis model cuts tool calls by orders of magnitude while accuracy actually improves. The direction is sound, but training relies on synthetic counterfactual data. Generalization to open-ended scenarios needs a full-paper read to assess.
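A rough sketch of the two-channel idea, under stated assumptions: the function `decoupled_advantages`, the group-normalized form of each channel, and the rule that at least two correct rollouts are needed are illustrative choices, not the paper's confirmed formulation.

```python
import numpy as np

def decoupled_advantages(correct, tool_calls, eps=1e-8):
    """Hypothetical two-channel advantages for one group of rollouts.

    correct:    (n,) array of 0/1 task outcomes
    tool_calls: (n,) array of tool-call counts per rollout
    Returns (accuracy_adv, efficiency_adv), each of shape (n,).
    """
    correct = np.asarray(correct, dtype=float)
    tool_calls = np.asarray(tool_calls, dtype=float)

    # Channel 1: group-normalized accuracy advantage over all rollouts.
    acc_adv = (correct - correct.mean()) / (correct.std() + eps)

    # Channel 2: efficiency advantage, computed only among correct rollouts.
    eff_adv = np.zeros_like(correct)
    ok = correct == 1.0
    if ok.sum() >= 2:
        calls = tool_calls[ok]
        # Fewer calls than the correct-rollout average gives a positive value.
        eff_adv[ok] = (calls.mean() - calls) / (calls.std() + eps)
    return acc_adv, eff_adv
```

The design point this illustrates: a wrong rollout that happened to make zero tool calls earns no efficiency credit, so frugality is never rewarded at the expense of correctness. That is the failure mode a single scalar penalty struggles to avoid.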

Key takeaways:

- Reflexive tool calling is a hidden source of agent latency and error amplification.
- Decoupling accuracy and efficiency into orthogonal channels outperforms scalar penalty approaches.
- The method relies on synthetic training data; real-world generalization is unconfirmed.

Source: Act Wisely: Cultivating Meta-Cognitive Tool Use in Agentic Multimodal Models

04 Video Gen Attention Heads Already Encode Countable Object Layouts

NUMINA starts from a clever observation: text-to-video models' self-attention and cross-attention heads already encode spatial object layout information. The models just can't use it well. At inference time, NUMINA selects the most discriminative attention heads, reverse-engineers a countable latent layout from them, corrects it when the count is wrong, and modulates cross-attention to guide regeneration.
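To make "countable layout" concrete, here is a toy stand-in for the counting step. It assumes the selected heads have already been reduced to a single 2D attention map for the object token, and it uses plain thresholding plus connected-component counting. Both the reduction and the threshold are assumptions for illustration; the paper's actual head selection and layout extraction are not reproduced here.

```python
from collections import deque

def count_instances(attn, threshold=0.5):
    """Count 4-connected blobs at or above `threshold` in a 2D attention map."""
    rows, cols = len(attn), len(attn[0])
    seen = [[False] * cols for _ in range(rows)]
    count = 0
    for r in range(rows):
        for c in range(cols):
            if attn[r][c] < threshold or seen[r][c]:
                continue
            count += 1                  # found a new blob
            seen[r][c] = True
            queue = deque([(r, c)])
            while queue:                # flood-fill the rest of the blob
                y, x = queue.popleft()
                for ny, nx in ((y + 1, x), (y - 1, x), (y, x + 1), (y, x - 1)):
                    inside = 0 <= ny < rows and 0 <= nx < cols
                    if inside and not seen[ny][nx] and attn[ny][nx] >= threshold:
                        seen[ny][nx] = True
                        queue.append((ny, nx))
    return count

def needs_correction(attn, requested_count, threshold=0.5):
    """True when the implied layout disagrees with the prompt's numeral."""
    return count_instances(attn, threshold) != requested_count
```

A map with three separate high-attention regions and a prompt asking for "two cats" would be flagged, which is the point at which a method like this would adjust the layout and steer regeneration.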

No model modification, no retraining. It plugs directly into the Wan2.1 family. Counting accuracy improves 7.4% on the 1.3B model, 4.9% on 5B, and 5.5% on 14B. CLIP alignment scores also improve. The usual caveat for training-free methods applies: gains are clear on simple scenes, but robustness under occlusion and dynamic interaction needs more evidence.

Key takeaways:

- Extracting countable layouts from attention heads is a low-cost, practical approach that skips retraining entirely.
- Consistent gains across model scales (1.3B to 14B) suggest reasonable generality.
- The ceiling for training-free methods depends on how reliable attention signals remain in complex scenes.

Source: When Numbers Speak: Aligning Textual Numerals and Visual Instances in Text-to-Video Diffusion Models

05 Multimodal When Task Rewards Differ by an Order of Magnitude, Mixed Training Breaks

Mix OCR accuracy and geometric reasoning accuracy into the same RL training loop, and the reward distribution gap lets one task dominate gradient updates. That's the persistent failure mode when applying GRPO to multimodal models. OpenVLThinkerV2's G2RPO applies a mathematically intuitive fix: force-normalize each task's advantage distribution to standard normal N(0,1), putting all tasks' gradient contributions on the same scale.
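The simplest version of per-task normalization is a z-score within each task. The sketch below assumes exactly that; `per_task_normalize` and the sample reward values are hypothetical, and a z-score only matches mean and variance. Forcing the full distribution to N(0,1) may involve a different transform in the paper.

```python
import numpy as np

def per_task_normalize(rewards, task_ids, eps=1e-8):
    """Standardize rewards within each task to mean 0 and unit variance."""
    rewards = np.asarray(rewards, dtype=float)
    task_ids = np.asarray(task_ids)
    advantages = np.zeros_like(rewards)
    for task in np.unique(task_ids):
        sel = task_ids == task
        r = rewards[sel]
        advantages[sel] = (r - r.mean()) / (r.std() + eps)
    return advantages

# Illustrative rewards on very different scales (made-up values).
rewards = [0.90, 0.95, 1.00, 10.0, 50.0, 90.0]
tasks = ["ocr", "ocr", "ocr", "geo", "geo", "geo"]
adv = per_task_normalize(rewards, tasks)
```

After normalization both tasks produce the same spread of advantages, so neither dominates the gradient just because its raw rewards happen to be larger.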

Two task-level controls sit on top: complex reasoning tasks get encouraged toward longer chain-of-thought, while visual grounding tasks are constrained to short outputs. An entropy regularizer prevents exploration from diverging or collapsing. Evaluation across 18 benchmarks shows gains over comparable open-source and some commercial models, though the abstract omits specific comparison numbers. The full paper is needed to gauge the actual margin.

Key takeaways:

- Incomparable reward distributions across tasks are the core multi-task RL challenge. Per-task normalization is a general-purpose fix.
- Dual control over response length and entropy balances perceptual precision against reasoning depth.
- This approach isn't vision-specific. Any team doing multi-task reinforcement learning should take note.

Source: OpenVLThinkerV2: A Generalist Multimodal Reasoning Model for Multi-domain Visual Tasks

Also Worth Noting

Today's Observation

Five of today's ten highlights are agent papers. Break them down and a pattern emerges: none of them build new agent capabilities.

ClawBench tests 153 everyday tasks across 144 real websites. The best model succeeds less than half the time. KnowU-Bench doesn't test whether agents can act, but whether they know when not to. Act Wisely targets reflexive tool calling directly: after HDPO training, tool call volume drops by orders of magnitude while accuracy rises. MolmoWeb releases a fully open-source web agent baseline. Structured Distillation packs Gemini 3 Pro's capabilities into a 9B model using 3,000 trajectories, matching or exceeding the teacher across six web environments.

Five papers. They measure boundaries, define restraint, and reduce cost. None expand capability.

The same day, non-agent work tells a different story. DMax nearly triples parallel efficiency for diffusion LMs. NUMINA fixes video counting without touching the model. OpenVLThinkerV2 tackles the long-standing reward distribution mismatch in multi-task RL. These papers are still pushing performance ceilings upward.

A concrete suggestion for teams building agents: before investing in the next tool integration, run your existing system through ClawBench or a similar framework. Quantify which tasks don't need tool calls at all. Act Wisely's data suggests that cutting redundant calls may yield more than adding new tools.
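One way to run that audit, sketched under assumptions: each task is executed twice, once with tools enabled and once with them disabled, and the outcomes are logged. The record keys (`task_id`, `solved_without_tools`, `tool_calls`) are a hypothetical schema, not something ClawBench or any other framework is known to emit.

```python
def tool_free_tasks(results):
    """Return the IDs of tasks solved correctly with tools disabled."""
    return [r["task_id"] for r in results if r["solved_without_tools"]]

def redundant_call_share(results):
    """Fraction of all tool calls spent on tasks solvable without tools."""
    total = sum(r["tool_calls"] for r in results)
    redundant = sum(r["tool_calls"] for r in results if r["solved_without_tools"])
    return redundant / total if total else 0.0

# Hypothetical audit log: tool_calls is the count from the tools-enabled run.
results = [
    {"task_id": "a", "solved_without_tools": True, "tool_calls": 4},
    {"task_id": "b", "solved_without_tools": False, "tool_calls": 2},
    {"task_id": "c", "solved_without_tools": True, "tool_calls": 2},
]
```

A high redundant share suggests the first optimization target is restraint, not another integration.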