Today's Overview

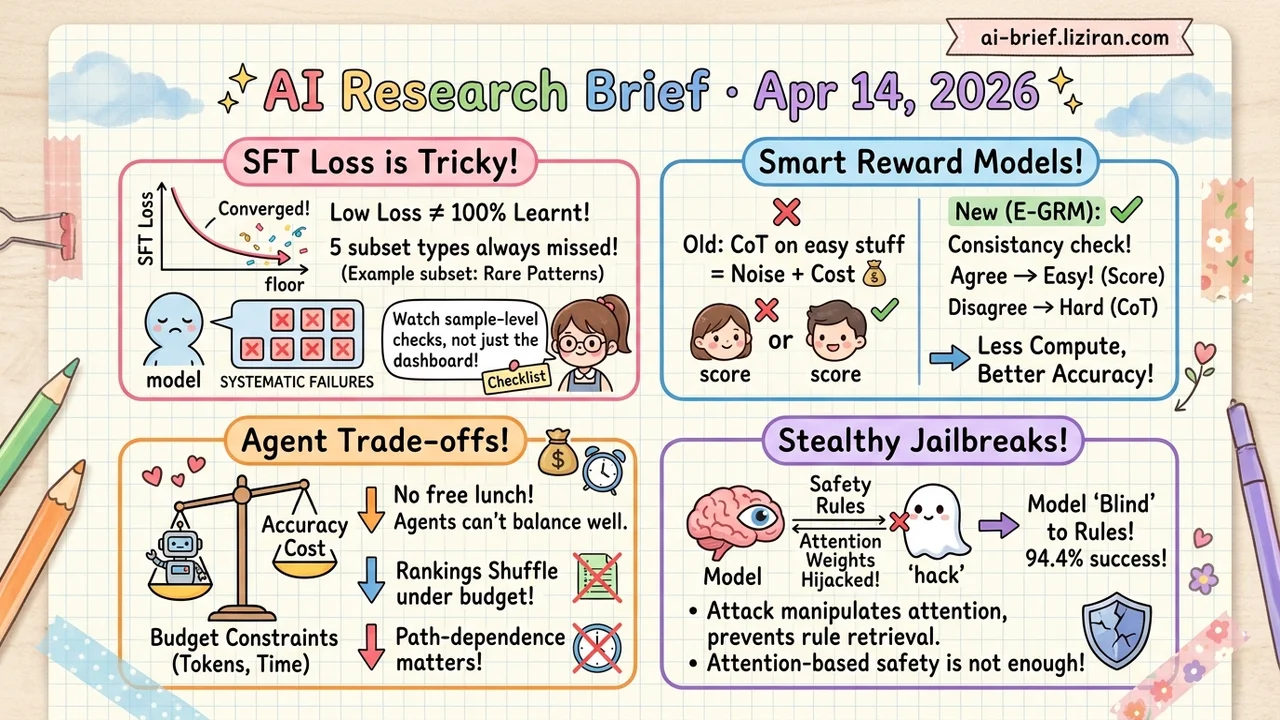

- SFT loss convergence doesn't mean the model learned everything. Five systematic failure modes reproduced across three model families show that aggregate metrics can hide persistently unlearned subsets.

- Reward models don't need CoT reasoning for every score. E-GRM uses generation consistency to estimate uncertainty, skips deep reasoning on easy samples, and improves accuracy while cutting cost.

- Coding agent rankings reshuffle under credit budgets. Frontier agents can't find the optimal accuracy-cost tradeoff under resource constraints, and their behavior is highly path-dependent.

- Hijacking attention weights makes multimodal models "blind" to safety instructions, reaching a 94.4% jailbreak success rate on Qwen-VL. The attack doesn't force the model to violate rules; it prevents the model from retrieving them during generation.

Featured

01 Training Loss Converged. Did the Model Actually Learn?

SFT loss hits bottom, training converges, job done? Not quite. This ACL Oral paper reveals a surprising pattern: converged models still systematically fail on a subset of their own training data. The authors call this the Incomplete Learning Phenomenon (ILP) and reproduce it across Qwen, LLaMA, and OLMo2.

This isn't random noise. The authors identify five structural causes: missing pretraining knowledge, conflicts with pretraining knowledge, contradictions within the training data itself, left-side forgetting in long sequences, and under-optimization of rare patterns. Aggregate metrics such as overall loss and overall accuracy can paper over samples the model never learns, so your model can look fine on the dashboard while consistently failing on specific subsets.

The paper proposes a diagnostic framework that attributes unlearned samples to specific causes using training and inference signals, then applies targeted interventions. For any team running SFT, the takeaway is direct: watching the loss curve isn't enough. You need sample-level learning diagnostics after training.
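A minimal sketch of a post-training, sample-level check, assuming you have already collected each training sample's per-token loss under the converged model and whether greedy decoding reproduces its target. The `SampleStats` record, the `flag_unlearned` helper, and the 0.5 loss threshold are hypothetical illustrations. This is not the paper's diagnostic framework, which additionally attributes each failure to one of the five causes.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class SampleStats:
    # Hypothetical per-sample record gathered after training finishes.
    sample_id: str
    token_losses: list[float]  # per-token cross-entropy on the training target
    exact_match: bool  # did greedy decoding reproduce the target?


def aggregate_loss(samples: list[SampleStats]) -> float:
    """Dashboard-style metric: mean of the per-sample mean losses."""
    return mean(mean(s.token_losses) for s in samples)


def flag_unlearned(samples: list[SampleStats], loss_threshold: float = 0.5) -> list[str]:
    """Return ids of samples the converged model still fails on.

    A sample is flagged if decoding misses the target or if its mean
    loss stays above a (hypothetical) threshold.
    """
    return [
        s.sample_id
        for s in samples
        if not s.exact_match or mean(s.token_losses) > loss_threshold
    ]


if __name__ == "__main__":
    # 99 well-learned samples plus one the model never picked up.
    data = [SampleStats(f"s{i}", [0.02, 0.02], True) for i in range(99)]
    data.append(SampleStats("hard", [1.2, 0.9], False))
    print(f"aggregate loss: {aggregate_loss(data):.2f}")
    print("unlearned:", flag_unlearned(data))
```

With 99 well-learned samples and one hard one, the aggregate loss stays close to zero while `flag_unlearned` still surfaces the hard sample, which is the kind of gap the paper describes.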

Key takeaways:

- SFT convergence doesn't mean everything was learned. Models systematically miss specific subsets of their own training data.
- Five failure modes each have distinct diagnostic signals and interventions. No single fix covers all of them.
- Add sample-level learning checks post-training instead of relying on aggregate metrics alone.

02 Reasoning Not Every Score Needs Deep Thinking

Generative reward models (GRMs) score responses by reasoning through them with chain-of-thought (CoT). That is a good idea in theory, but most samples are easy to judge. Applying CoT uniformly wastes compute, and the resulting reasoning chains vary wildly in quality.

E-GRM takes a straightforward approach: generate several quick judgments in parallel. If they agree, the model is treated as confident and outputs the score directly. If they diverge, it triggers CoT for deeper reasoning. The uncertainty signal comes entirely from the model's own generation behavior, with no external labels and no task-specific thresholds.
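The gating logic can be sketched as follows, assuming `quick_judge` samples a short, direct score (temperature above zero, so repeated calls can differ) and `deep_judge` runs a full CoT evaluation. Unanimous agreement across `k` samples is used as the consistency rule; that rule, `k=4`, and all function names are illustrative assumptions rather than E-GRM's published procedure.

```python
from collections import Counter
from itertools import cycle
from typing import Callable

# Hypothetical judge signature: (prompt, response) -> integer score.
Judge = Callable[[str, str], int]


def selective_score(
    prompt: str,
    response: str,
    quick_judge: Judge,
    deep_judge: Judge,
    k: int = 4,
) -> tuple[int, bool]:
    """Return (score, used_cot).

    Draw k quick judgments. If all k agree, treat the model as confident
    and return that score without CoT; otherwise fall back to the more
    expensive chain-of-thought judge.
    """
    # Sequential for clarity; a real deployment would batch these calls.
    votes = [quick_judge(prompt, response) for _ in range(k)]
    top_score, count = Counter(votes).most_common(1)[0]
    if count == k:  # consistent generations signal low uncertainty
        return top_score, False
    return deep_judge(prompt, response), True


if __name__ == "__main__":
    steady = lambda p, r: 8  # always gives the same quick score
    noisy_votes = cycle([3, 7])
    noisy = lambda p, r: next(noisy_votes)  # alternates between two scores
    deep = lambda p, r: 5  # stand-in for the CoT judge
    print(selective_score("q", "a", steady, deep))  # (8, False)
    print(selective_score("q", "a", noisy, deep))  # (5, True)
```

Requiring unanimity keeps the sketch free of a tunable agreement threshold; a softer rule (for example, majority share above some cutoff) would trigger CoT less often at the cost of introducing exactly the kind of threshold the paper says it avoids.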

On multiple reasoning benchmarks, this selective strategy reduces inference cost while improving accuracy. Forcing reasoning on easy samples apparently introduces noise rather than clarity.
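A back-of-envelope cost model shows when selective CoT pays off. Assume each of the $k$ quick judgments costs $c_q$ tokens, one CoT judgment costs $c_{\text{CoT}}$ tokens, and a fraction $p$ of samples end up triggering CoT. These symbols are illustrative, not quantities reported in the paper.

$$
C_{\text{uniform}} = c_{\text{CoT}}, \qquad C_{\text{selective}} = k\,c_q + p\,c_{\text{CoT}}
$$

$$
C_{\text{selective}} < C_{\text{uniform}} \iff k\,c_q < (1-p)\,c_{\text{CoT}}
$$

With made-up numbers $k = 4$, $c_q = 50$, $c_{\text{CoT}} = 1000$, and $p = 0.3$, the selective cost is $200 + 300 = 500$ tokens per sample, half the uniform cost. The saving shrinks as $p$ grows, so the approach works best when most samples really are easy.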

Key takeaways:

- Generation consistency provides a clean uncertainty estimate without external features, telling you when deep reasoning is actually needed.
- Selective CoT improves accuracy while cutting cost. Indiscriminate reasoning can hurt.
- For teams deploying reward models, this is a practical efficiency lever worth trying.

Source: Reason Only When Needed: Efficient Generative Reward Modeling via Model-Internal Uncertainty

03 Code Intelligence Agent Rankings Collapse Under Budget Constraints

Competitive programming and production engineering share one reality: every submission, test run, and compute second has a cost. Yet mainstream coding agent benchmarks assume unlimited resources.

USACOArena borrows ACM-ICPC rules and introduces a strict credit system: every generated token, every local test, and every elapsed second deducts from a fixed budget. Agents must make real tradeoffs between accuracy and resource consumption. In early results, both frontier single agents and agent swarms fail to find the optimal balance point. Behavior is highly path-dependent: different starting strategies lead to wildly different outcomes.
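One way to picture the credit rule is a ledger that every agent action must pass through. The sketch below assumes integer prices per generated token, per local test, and per elapsed second. The class name, the prices, and the choice to reject an overspending action outright (rather than charge it partially) are hypothetical and do not reflect USACOArena's actual specification.

```python
from dataclasses import dataclass


class BudgetExhausted(Exception):
    """Raised when an action would overspend the fixed credit budget."""


@dataclass
class CreditLedger:
    # Hypothetical integer prices; the benchmark's real rates may differ.
    budget: int
    per_token: int = 1
    per_local_test: int = 50
    per_second: int = 2
    spent: int = 0

    @property
    def remaining(self) -> int:
        return self.budget - self.spent

    def charge(self, tokens: int = 0, local_tests: int = 0, seconds: int = 0) -> int:
        """Deduct the cost of one agent action and return the remaining credits."""
        cost = (
            tokens * self.per_token
            + local_tests * self.per_local_test
            + seconds * self.per_second
        )
        if cost > self.remaining:
            raise BudgetExhausted(f"action costs {cost}, only {self.remaining} left")
        self.spent += cost
        return self.remaining


if __name__ == "__main__":
    ledger = CreditLedger(budget=1000)
    # 300 tokens + 2 local tests + 10 seconds = 300 + 100 + 20 = 420 credits.
    print(ledger.charge(tokens=300, local_tests=2, seconds=10))  # 580
    try:
        ledger.charge(tokens=600)  # another long generation would overspend
    except BudgetExhausted as err:
        print("stop:", err)
```

Under these made-up prices one local test costs as much as 50 generated tokens, so an agent that tests after every small edit burns credits much faster than one that reasons longer before testing. Tradeoffs of this kind are what the benchmark forces agents to make.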

"Highest score with unlimited resources" does not equal "best value in deployment."

Key takeaways:

- Agent rankings under unlimited resources may not hold under budget constraints.
- Swarm-of-agents scale advantages don't necessarily survive credit limits.
- For production deployment, the tradeoff between token efficiency and decision quality matters more than raw accuracy.

Source: Credit-Budgeted ICPC-Style Coding: When Agents Must Pay for Every Decision

04 Safety Blinding Models to Rules Beats Breaking Them

Previous multimodal jailbreak attacks take a brute-force approach: optimize image perturbations to directly force harmful outputs. This ACL paper tries something different. Instead of fighting safety mechanisms, it manipulates attention patterns so the model never "sees" safety instructions at all.

The method uses a push-pull formulation: suppress attention to safety-related tokens in the system prompt while anchoring generation on adversarial image features. On Qwen-VL, the attack success rate rises from a 68.8% baseline to 94.4%, and the optimization converges 40% faster.

The mechanistic finding matters most. Successful attacks suppress attention to safety instructions by 80%. The model doesn't "know the rules but choose to break them." It fails to retrieve the rules during generation. Safety teams need to take this attack surface seriously: attention-based safety retrieval may not be sufficient.
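On the defensive side, the reported 80% suppression suggests a cheap runtime signal: how much attention the generated tokens still pay to the safety instructions in the system prompt. The sketch below assumes you can export a head-averaged attention matrix (rows are generated positions, columns are prompt positions) and know which positions hold the safety instructions. The helper names and the 50% alarm threshold are hypothetical, and the paper does not propose this monitor.

```python
def safety_attention_mass(attn: list[list[float]], safety_positions: list[int]) -> float:
    """Average share of attention that generated tokens place on safety tokens.

    attn[i][j] is the (head-averaged) weight that query position i assigns
    to key position j; each row is assumed to sum to 1.
    """
    if not attn:
        raise ValueError("no attention rows")
    per_query = [sum(row[j] for j in safety_positions) for row in attn]
    return sum(per_query) / len(per_query)


def relative_drop(baseline: float, observed: float) -> float:
    """Fractional drop in safety attention relative to a clean baseline."""
    return 1.0 - observed / baseline


def looks_blinded(baseline: float, observed: float, max_drop: float = 0.5) -> bool:
    # Hypothetical alarm rule: flag a generation whose safety attention
    # falls by more than max_drop relative to clean inputs.
    return relative_drop(baseline, observed) > max_drop


if __name__ == "__main__":
    safety = [0, 1]  # positions of the safety instruction in the prompt
    clean = [[0.5, 0.3, 0.2], [0.4, 0.4, 0.2]]
    attacked = [[0.1, 0.06, 0.84], [0.08, 0.08, 0.84]]
    base = safety_attention_mass(clean, safety)
    seen = safety_attention_mass(attacked, safety)
    print(f"drop: {relative_drop(base, seen):.2f}, blinded: {looks_blinded(base, seen)}")
```

A monitor like this only detects the symptom the paper measured; whether it catches real attacks without too many false alarms would need testing on actual model attention.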

Key takeaways:

- The attack vector shifts from "overpower safety" to "make safety invisible." A more subtle threat.
- Attention manipulation is more efficient than direct harmful-output optimization, reducing gradient conflict by 45%.
- Defense teams should reassess whether attention-based safety retrieval mechanisms are sufficient.