Today's Overview

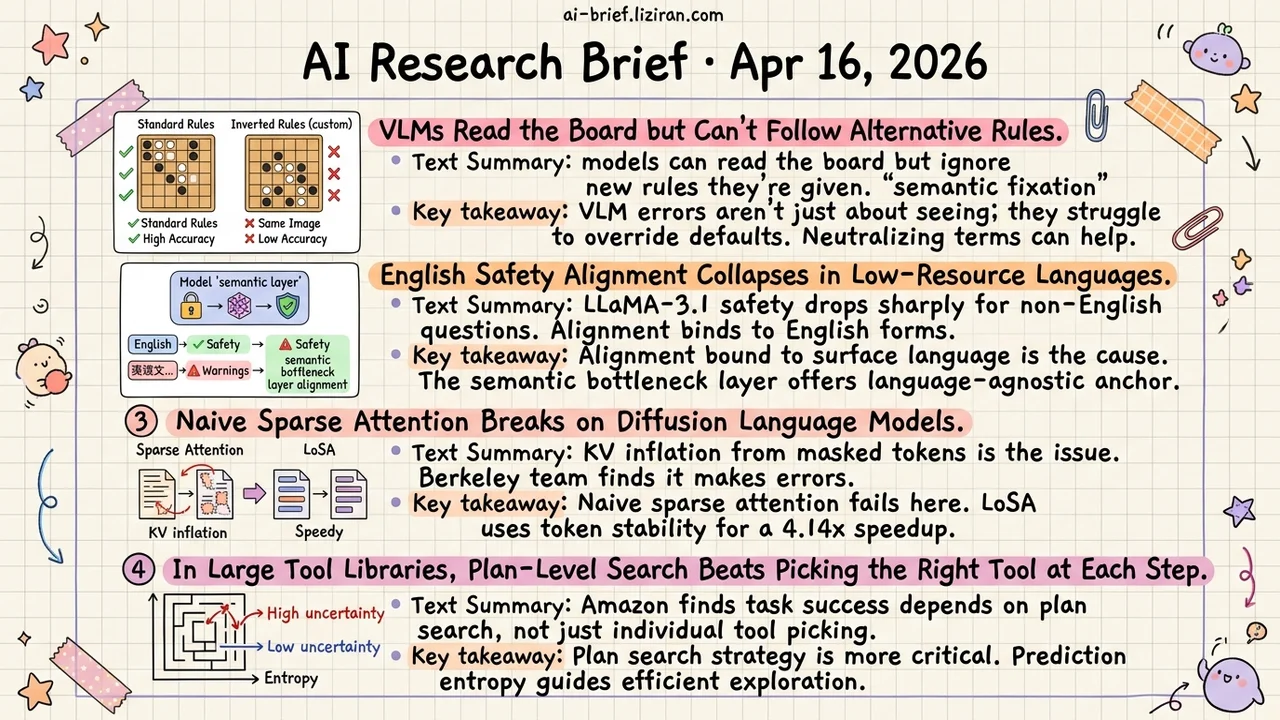

- VLMs Read the Board but Can't Follow Alternative Rules. 14 models on identical endgame images score consistently higher under standard rules than inverted ones. Researchers call it "semantic fixation" — a warning for any application requiring models to follow custom rules.

- English Safety Alignment Collapses in Low-Resource Languages. LASA anchors alignment at the model's semantic bottleneck layer, cutting LLaMA-3.1's average attack success rate from 24.7% to 2.8%.

- Naive Sparse Attention Breaks on Diffusion Language Models. KV inflation from masked tokens is the root cause. LoSA exploits local invariance in token states during denoising, with a measured 4.14x speedup on an A6000.

- In Large Tool Libraries, Plan-Level Search Beats Picking the Right Tool at Each Step. Amazon uses prediction entropy to allocate search budget: explore more where uncertainty is high, push forward where it's low.

Featured

01 Multimodal Change the Rules, VLM Accuracy Collapses

14 VLMs, identical endgame board images. Under standard rules, accuracy is consistently high. Switch to inverted rules — same image, same prompt structure — and accuracy drops sharply. This isn't a perception failure. The models read the board correctly but can't override default semantic mappings like "black pieces = first player."

Researchers call this semantic fixation: even when the prompt explicitly states alternative rules, models fall back on trained associations. The experimental design isolates the effect cleanly: four board games, paired standard/inverted rule sets, identical terminal states. An intervention experiment confirms the mechanism. Replacing piece names with semantically neutral labels improves inverted-rule performance; swapping back to meaningful names brings the gap back.

For teams building VLM applications: if your use case requires the model to classify, label, or judge by custom criteria, passing perception benchmarks doesn't mean it follows your rules. Test rule-mapping ability separately.
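One way to test rule-mapping separately is to log every trial with its rule set and label style, then compare accuracy across the paired conditions. The sketch below is a minimal illustration, not the paper's evaluation harness: the `Trial` record, the condition names, and `fixation_gap` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Trial:
    rule_set: str   # "standard" or "inverted" (hypothetical condition names)
    labels: str     # "semantic" (real piece names) or "neutral" (placeholder names)
    correct: bool   # did the model pick the right outcome under the stated rules?


def accuracy(trials, rule_set, labels):
    """Fraction of correct trials for one (rule_set, labels) condition."""
    hits = [t.correct for t in trials if t.rule_set == rule_set and t.labels == labels]
    return sum(hits) / len(hits) if hits else float("nan")


def fixation_gap(trials, labels):
    """Standard-rule accuracy minus inverted-rule accuracy on the same images."""
    return accuracy(trials, "standard", labels) - accuracy(trials, "inverted", labels)


if __name__ == "__main__":
    # Toy records for illustration only; these are not results from the paper.
    toy = [
        Trial("standard", "semantic", True),
        Trial("inverted", "semantic", False),
        Trial("standard", "neutral", True),
        Trial("inverted", "neutral", True),
    ]
    print("gap with semantic labels:", fixation_gap(toy, "semantic"))
    print("gap with neutral labels:", fixation_gap(toy, "neutral"))
```

A large gap that shrinks when you switch to neutral labels points to fixation rather than perception. If the gap stays the same under both label styles, the cause is probably elsewhere.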

Key takeaways:
- VLM errors aren't always perception errors. Semantic fixation causes models to ignore prompt-specified alternative rules.
- Neutralizing terminology reduces fixation effects and can serve as a prompt engineering technique at deployment time.
- Applications requiring VLMs to follow custom rules should test rule-mapping ability independently of perception metrics.

Source: Beyond Perception Errors: Semantic Fixation in Large Vision-Language Models

02 Safety English Alignment Doesn't Mean Global Alignment

LLM safety alignment has a structural blind spot. Training uses predominantly English data, so safety behavior binds to English surface forms rather than the model's actual semantic representations. Ask the same harmful question in a low-resource language and attack success rates jump from single digits to double digits.

LASA identifies a "semantic bottleneck layer" inside the model — an intermediate layer where representations are driven by meaning, not language identity — and anchors safety alignment there. LLaMA-3.1-8B-Instruct's average attack success rate drops from 24.7% to 2.8%. Qwen2.5 and Qwen3 variants stabilize at 3–4%. For teams deploying multilingually, this approach treats the root cause rather than patching language by language.
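To make "driven by meaning, not language identity" concrete, here is one hypothetical heuristic for scoring layers. It is not LASA's procedure. It assumes you have already extracted one vector per (meaning, language) pair at each layer, and it rewards layers where translations of the same sentence sit closer together than different sentences in the same language. All function names are illustrative.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def layer_score(reps):
    """reps maps (meaning_id, language) -> vector for ONE layer.

    Score = mean similarity of same-meaning/different-language pairs
          - mean similarity of different-meaning/same-language pairs.
    """
    same_meaning, same_lang = [], []
    keys = list(reps)
    for i, (m1, l1) in enumerate(keys):
        for m2, l2 in keys[i + 1:]:
            sim = cosine(reps[(m1, l1)], reps[(m2, l2)])
            if m1 == m2 and l1 != l2:
                same_meaning.append(sim)
            elif m1 != m2 and l1 == l2:
                same_lang.append(sim)

    def mean(xs):
        return sum(xs) / len(xs) if xs else 0.0

    return mean(same_meaning) - mean(same_lang)


def pick_candidate_layer(per_layer_reps):
    """Return (index of the highest-scoring layer, list of all scores)."""
    scores = [layer_score(reps) for reps in per_layer_reps]
    best = max(range(len(scores)), key=scores.__getitem__)
    return best, scores
```

A high score only suggests that a layer groups inputs by meaning. Whether anchoring alignment there lowers attack success rates still has to be measured per language.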

Key takeaways:
- Safety alignment bound to surface-level language features is the root cause of multilingual safety cliffs, not insufficient training data.
- The semantic bottleneck layer provides an actionable, language-agnostic anchor point for alignment.
- Multilingual deployment teams should evaluate real safety performance in low-resource languages, not just English benchmarks.

Source: LASA: Language-Agnostic Semantic Alignment at the Semantic Bottleneck for LLM Safety

03 Architecture Sparse Attention Breaks on Diffusion LMs

Diffusion language models (DLMs) gain parallel decoding over autoregressive models, but attention's memory bottleneck at long contexts remains. Sparse attention seems like the obvious fix. A Berkeley team found it actually makes things worse: masked tokens share identical initial KV values, so the union of selected KV pages across queries expands rather than shrinks. The paper calls this KV inflation. Sparsity introduces more errors instead of saving compute.
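The union effect is easy to see in a toy setting. The sketch below is not the paper's measurement code: the page scores, the per-query top-k selection rule, and the function names are assumptions. It shows only that a per-query budget of k pages does not bound how many pages must be loaded once several queries are processed together.

```python
def top_k_pages(scores, k):
    """Indices of the k highest-scoring KV pages for one query."""
    ranked = sorted(range(len(scores)), key=lambda p: scores[p], reverse=True)
    return set(ranked[:k])


def union_density(per_query_scores, k):
    """Fraction of all KV pages touched when every query keeps its own top-k."""
    selected = set()
    for scores in per_query_scores:
        selected |= top_k_pages(scores, k)
    num_pages = len(per_query_scores[0])
    return len(selected) / num_pages


if __name__ == "__main__":
    # Toy scores: three queries over six pages, each preferring different pages.
    disjoint = [
        [0.9, 0.8, 0.1, 0.1, 0.1, 0.1],
        [0.1, 0.1, 0.9, 0.8, 0.1, 0.1],
        [0.1, 0.1, 0.1, 0.1, 0.9, 0.8],
    ]
    print("per-query budget:", 2 / 6)
    print("union density:", union_density(disjoint, k=2))
```

When the queries agree on which pages matter, the union stays near the per-query budget. When they disagree, the union approaches dense attention even though each individual query is sparse.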

LoSA takes a different angle. During denoising, most tokens' hidden states barely change between consecutive steps. Only a small set of "active" tokens shift significantly. LoSA caches attention results for stable tokens and runs sparse attention only on active ones, drastically reducing actual KV loads. Under aggressive sparsity settings, reported average accuracy improves by 9 points at 1.54x lower attention density. Measured speedup on an A6000: 4.14x.
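The caching idea can be sketched in a few lines. This is a simplified illustration under stated assumptions, not LoSA's implementation: the relative-change test, the `threshold` parameter, and the `sparse_attend` callback are hypothetical stand-ins for whatever the paper actually uses.

```python
import math


def l2(v):
    """Euclidean norm of a vector."""
    return math.sqrt(sum(x * x for x in v))


def active_tokens(prev_states, curr_states, threshold):
    """Indices of tokens whose hidden state moved by more than `threshold`
    (relative to the previous norm) between two denoising steps."""
    active = []
    for i, (p, c) in enumerate(zip(prev_states, curr_states)):
        delta = l2([a - b for a, b in zip(c, p)])
        scale = l2(p) or 1.0  # avoid dividing by zero for all-zero states
        if delta / scale > threshold:
            active.append(i)
    return active


def update_attention(cached_out, prev_states, curr_states, threshold, sparse_attend):
    """Reuse cached attention outputs for stable tokens and recompute only
    the active ones via the caller-supplied `sparse_attend(i, states)`."""
    active = active_tokens(prev_states, curr_states, threshold)
    out = list(cached_out)
    for i in active:
        out[i] = sparse_attend(i, curr_states)
    return out, active
```

The fewer tokens cross the threshold at each step, the fewer KV pages need to be fetched. The threshold trades speed against staleness of the cached outputs.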

Key takeaways:
- Naive sparse attention fails on diffusion language models due to KV inflation. Autoregressive intuitions don't transfer directly.
- LoSA exploits local invariance of token states during denoising, restricting sparse computation to active tokens only.
- Attention efficiency is the engineering bottleneck DLMs must solve to become practical at long contexts.

Source: LoSA: Locality Aware Sparse Attention for Block-Wise Diffusion Language Models

04 Agent More Tools? Search Strategy Beats Tool Selection

In multi-step tasks, agents may face hundreds of candidate tools at each step. The search space grows exponentially with plan length. Amazon built a large-scale e-commerce benchmark (SLATE) and found that task success depends less on picking the right tool per step and more on plan-level search efficiency and self-correction.

EGB (Entropy-Guided Branching) uses the model's prediction entropy to decide which decision nodes deserve exploration. High entropy means the model is uncertain: branch out. Confident predictions get the greedy treatment: push forward, save compute. The idea is straightforward but addresses a real scaling problem. Exhaustive search is infeasible with large tool sets. Pure greedy search gets stuck. You need a mechanism to allocate search budget where it matters. Generalization beyond e-commerce needs verification, but using entropy for exploration-exploitation balance is a useful reference for teams building agents over large tool libraries.
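The branching decision can be sketched as follows. This is a minimal illustration of entropy-gated expansion, not the EGB algorithm as published: the threshold, the branch cap, and the tool names are hypothetical, and the model's per-step tool distribution is assumed to be available as a plain dictionary.

```python
import math


def entropy(probs):
    """Shannon entropy (in nats) of a probability distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def tools_to_expand(tool_probs, threshold, max_branches):
    """Pick which candidate tools to explore at one decision node.

    tool_probs: dict mapping tool name -> model probability for this step.
    High entropy: branch into the top `max_branches` tools.
    Low entropy: follow the single most likely tool (greedy).
    """
    ranked = sorted(tool_probs, key=tool_probs.get, reverse=True)
    if entropy(tool_probs.values()) > threshold:
        return ranked[:max_branches]
    return ranked[:1]


if __name__ == "__main__":
    # Hypothetical tool names and probabilities, for illustration only.
    confident = {"lookup_order": 0.97, "search_catalog": 0.02, "issue_refund": 0.01}
    uncertain = {"lookup_order": 0.40, "search_catalog": 0.35, "issue_refund": 0.25}
    print(tools_to_expand(confident, threshold=0.5, max_branches=2))
    print(tools_to_expand(uncertain, threshold=0.5, max_branches=2))
```

Search cost then scales with the number of uncertain steps rather than with plan length times the number of tools, which is what makes this approach attractive for large tool libraries.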

Key takeaways:
- Plan-level search strategy affects multi-step task success more than single-step tool selection accuracy.
- Prediction entropy allocates search budget: more exploration at high uncertainty, faster execution at low uncertainty.
- Teams building large-scale tool integrations should invest in search efficiency, not just tool retrieval quality.

Source: Long-Horizon Plan Execution in Large Tool Spaces through Entropy-Guided Branching