Today's Overview

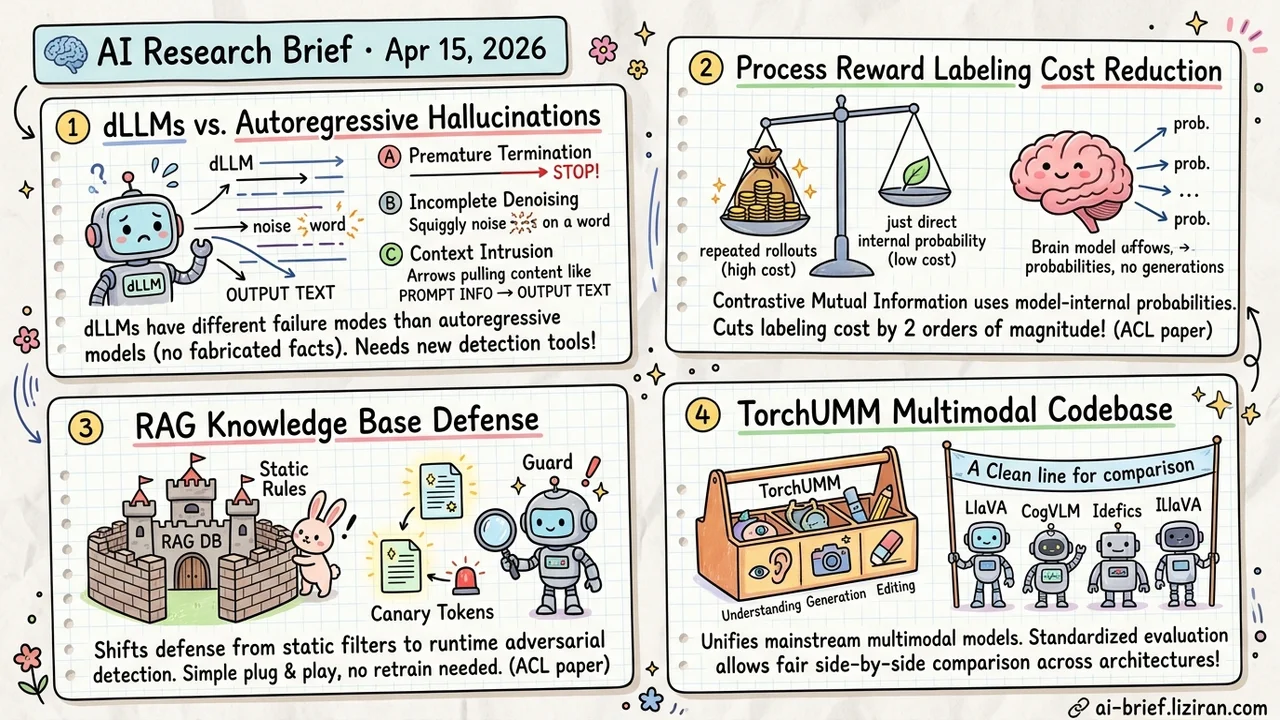

- dLLMs hallucinate in fundamentally different ways from autoregressive models. The first controlled comparison identifies three unique failure modes (premature termination, incomplete denoising, context intrusion), meaning existing detection tools need redesign.

- Contrastive mutual information slashes process reward labeling cost: 98% fewer generated tokens and 84% less dataset construction time. Step-level signals come directly from model probabilities, with no repeated rollouts needed. Accepted at ACL.

- RAG knowledge base defense shifts from static rules to runtime adversarial games. Canary tokens, borrowed from the stack canary concept in software security, enable continuous detection and plug in with no architecture changes.

- TorchUMM brings mainstream unified multimodal models (UMMs) into one codebase. It covers understanding, generation, and editing, enabling the first apples-to-apples comparison across architectures.

Featured

01 Architecture dLLM Hallucinations Look Nothing Like Autoregressive Ones

DMax doubled the parallel efficiency of diffusion LLMs (dLLMs) just last week. Speed is improving, but a more fundamental question has gone unanswered: how do dLLMs actually fail? This ACL paper runs the first controlled comparison against autoregressive models, matching architecture, parameter count, and pretrained weights. dLLMs do hallucinate more than their autoregressive counterparts, but the real finding is that they fail in completely different ways.

Three failure modes unique to dLLMs emerge: premature termination (generation stops mid-sequence), incomplete denoising (output retains noise artifacts), and context intrusion (prompt content bleeds into generated text). None overlap with the "fabricating facts" hallucinations typical of autoregressive models. Existing hallucination detection and mitigation tools won't transfer directly.
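As an illustration only, purpose-built checks for these three modes could start from simple heuristics like the ones below. This is a minimal sketch, not the paper's method: the `<|mask|>` token string, the word and overlap thresholds, and every function name are assumptions.

```python
import re

MASK_TOKEN = "<|mask|>"  # assumed mask-token string; the real one varies by model


def incomplete_denoising(output: str) -> bool:
    # Residual mask tokens mean some positions were never denoised
    return MASK_TOKEN in output


def premature_termination(output: str, min_words: int = 5) -> bool:
    # Very short output, or text that stops without sentence-final punctuation
    text = output.replace(MASK_TOKEN, "").strip()
    return len(text.split()) < min_words or not text.endswith((".", "!", "?"))


def _ngrams(text: str, n: int) -> set[tuple[str, ...]]:
    words = re.findall(r"\w+", text.lower())
    return {tuple(words[i:i + n]) for i in range(len(words) - n + 1)}


def context_intrusion(prompt: str, output: str, n: int = 4, threshold: float = 0.3) -> bool:
    # Flag outputs whose n-grams are largely copied verbatim from the prompt
    out = _ngrams(output, n)
    if not out:
        return False
    return len(out & _ngrams(prompt, n)) / len(out) > threshold


def flag_failures(prompt: str, output: str) -> dict[str, bool]:
    # One boolean per dLLM-specific failure mode
    return {
        "premature_termination": premature_termination(output),
        "incomplete_denoising": incomplete_denoising(output),
        "context_intrusion": context_intrusion(prompt, output),
    }
```

None of these checks looks for fabricated facts, which is the point: they target symptoms an autoregressive-oriented detector would not be built to catch.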

One inference-time result stands out: quasi-autoregressive generation saturates early, while non-sequential decoding continues to improve, a concrete direction for better dLLM reliability. This paper doesn't say dLLMs are broken. It says they break in new ways that need new tooling.
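The two decoding styles differ mainly in which masked positions get committed at each denoising step. The sketch below assumes confidence-ranked unmasking as one common form of non-sequential decoding; the paper may use other schedules, and `select_positions` and its strategy names are hypothetical.

```python
def select_positions(masked: list[int], confidence: dict[int, float],
                     k: int, strategy: str) -> list[int]:
    """Pick which still-masked positions to commit in one denoising step."""
    if strategy == "quasi_ar":
        # Commit the leftmost masked positions: effectively a left-to-right schedule
        chosen = sorted(masked)[:k]
    elif strategy == "confidence":
        # Commit the positions the model is most confident about, anywhere in the sequence
        chosen = sorted(masked, key=lambda p: confidence[p], reverse=True)[:k]
    else:
        raise ValueError(f"unknown strategy: {strategy}")
    return sorted(chosen)
```

With `masked=[1, 2, 3, 4]`, `confidence={1: 0.1, 2: 0.9, 3: 0.5, 4: 0.95}` and `k=2`, the `"quasi_ar"` strategy returns `[1, 2]`, while `"confidence"` returns `[2, 4]`, skipping the low-confidence position 1 that a left-to-right schedule would be forced to commit.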

Key takeaways:
- Three dLLM-specific failure modes (premature termination, incomplete denoising, context intrusion) don't overlap with autoregressive hallucinations.
- Existing hallucination detection and mitigation tools can't transfer directly. dLLM evaluation requires purpose-built reliability testing.
- Non-sequential decoding shows continuous improvement at inference time, offering a path to better dLLM reliability.

02 Reasoning Why Sample Rollouts When Model Probabilities Already Tell You?

Contrastive Pointwise Mutual Information (CPMI) starts from an intuitive idea: a good reasoning step should increase the model's confidence in the correct answer. Contrast against a set of wrong answers as negatives, and the signal gets cleaner. This contrastive signal comes directly from model internals. No Monte Carlo rollouts. No generating complete reasoning chains over and over.
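A minimal sketch of the contrastive idea, assuming the step score is the step's log-probability gain on the correct answer minus its average gain on the wrong answers. The paper's exact CPMI formula, normalization, and choice of negatives may differ; `step_score` is a hypothetical name, and in practice the log-probabilities would come from scoring each candidate answer with the model before and after appending the step.

```python
from statistics import mean


def pmi_gain(logp_before: float, logp_after: float) -> float:
    # Pointwise gain: how much appending the step raised log p(answer | question, steps)
    return logp_after - logp_before


def step_score(before: dict[str, float], after: dict[str, float],
               correct: str, negatives: list[str]) -> float:
    """Contrastive step score: gain on the correct answer minus mean gain on wrong answers."""
    positive = pmi_gain(before[correct], after[correct])
    negative = mean(pmi_gain(before[a], after[a]) for a in negatives)
    return positive - negative
```

The contrast is what cleans up the signal: a step that raises every answer's log-probability by the same amount scores zero, because it adds confidence without favoring the correct answer.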

Results: 84% reduction in dataset construction time, 98% fewer tokens generated, and higher accuracy on process-level evaluation and math reasoning benchmarks. Accepted at ACL. The method needs no additional training and no large-scale sampling. For teams that want process verification on their reasoning tasks but are blocked by labeling cost, this is one of the lightest paths available.
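For scale, a percentage reduction $r$ corresponds to a $1/(1-r)$ multiplicative saving:

$$\frac{1}{1-0.98} = 50\times \text{ fewer generated tokens}, \qquad \frac{1}{1-0.84} = 6.25\times \text{ less dataset construction time}$$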

Key takeaways:
- Contrastive mutual information replaces Monte Carlo sampling, cutting process reward labeling cost sharply (98% fewer generated tokens, 84% less dataset construction time).
- The method uses model-internal probabilities directly. No extra training or large-scale rollouts required.
- For teams blocked on process reward model (PRM) labeling cost, this is currently one of the most lightweight deployment options.

Source: Efficient Process Reward Modeling via Contrastive Mutual Information

03 Safety RAG Defense Goes From Static Rules to Runtime Games

Protecting RAG knowledge bases from leakage has been a static affair: filter malicious prompts, restrict output formats, add access controls. Attackers iterate. Static rules get bypassed eventually. CanaryRAG takes a different approach, borrowing the stack canary concept from software security.

Canary tokens are embedded in retrieved document chunks, reframing defense as a dual-path runtime integrity game. Any attempt to extract raw content triggers anomalous canary behavior, enabling real-time detection. The key advantage: plug-and-play integration with no model retraining or architecture changes. Impact on normal task performance and inference latency is minimal. Accepted at ACL.
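A heavily simplified illustration of the canary idea, assuming the simplest possible check: a fresh random marker is attached to every retrieved chunk, and any output that reproduces the marker is treated as verbatim extraction. This is not CanaryRAG's dual-path integrity game; the marker format and `make_canary`, `wrap_chunks`, `leaks_raw_context`, and `guarded_answer` are hypothetical.

```python
import secrets


def make_canary() -> str:
    # Fresh, unguessable marker per request (hypothetical format)
    return f"[[canary:{secrets.token_hex(8)}]]"


def wrap_chunks(chunks: list[str], canary: str) -> list[str]:
    # Bracket every retrieved chunk with the canary before it enters the prompt
    return [f"{canary} {chunk} {canary}" for chunk in chunks]


def leaks_raw_context(output: str, canary: str) -> bool:
    # A normal answer paraphrases the chunks; dumping them verbatim drags the canary along
    return canary in output


def guarded_answer(output: str, canary: str) -> str:
    # Check at runtime, per response, instead of relying on static prompt filters alone
    if leaks_raw_context(output, canary):
        return "[blocked: possible knowledge-base extraction]"
    return output
```

Like a stack canary, the marker carries no meaning of its own; it only signals that a boundary was crossed. Because it is regenerated per request, an attacker cannot learn it in advance.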

The shift from static filtering to runtime adversarial detection matters more than this specific method. Teams running RAG in production should watch this direction. Actual generalization against unknown attack strategies still needs validation.

Key takeaways:
- RAG defense shifts from static filtering to runtime adversarial games, with better resilience against iterative attacks.
- Plug-and-play design requires no architecture changes, lowering the integration barrier for production environments.
- The change in defense thinking matters more for deployed RAG teams than any single technique.

Source: Detecting RAG Extraction Attack via Dual-Path Runtime Integrity Game

04 Multimodal Why Apples-to-Apples Multimodal Comparisons Barely Exist

Unified multimodal model (UMM) architectures keep multiplying. Every group uses different training recipes, implementations, and evaluation setups. Fair side-by-side comparison requires manual alignment — time-consuming and error-prone. TorchUMM aims to close this infrastructure gap.

The codebase unifies mainstream UMMs under one roof, covering understanding, generation, and editing. It provides standardized evaluation protocols and post-training interfaces. Models of various scales and design philosophies are integrated, with evaluation spanning perception, reasoning, compositionality, and instruction following.

This isn't another benchmark leaderboard. It's an experimental platform for running different architectures under identical conditions. Teams doing multimodal model selection or comparative research should track whether it gains community adoption. Coverage and maintenance activity will determine its long-term value.
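The core idea of a unified codebase can be sketched as one shared model interface plus one evaluation loop, so that score differences come from the models rather than from the harness. Everything here (`UnifiedModel`, `UnderstandingCase`, `evaluate_understanding`, exact-match scoring, the `ConstantModel` stub) is hypothetical and does not reproduce TorchUMM's actual interfaces or protocols.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import Callable


class UnifiedModel(ABC):
    """Hypothetical shared interface for understanding, generation, and editing."""

    name: str = "unnamed"

    @abstractmethod
    def understand(self, image: bytes, question: str) -> str: ...

    @abstractmethod
    def generate(self, prompt: str, seed: int) -> bytes: ...

    @abstractmethod
    def edit(self, image: bytes, instruction: str, seed: int) -> bytes: ...


@dataclass(frozen=True)
class UnderstandingCase:
    image: bytes
    question: str
    reference: str


def exact_match(prediction: str, reference: str) -> float:
    return float(prediction.strip().lower() == reference.strip().lower())


def evaluate_understanding(models: list[UnifiedModel],
                           cases: list[UnderstandingCase],
                           scorer: Callable[[str, str], float] = exact_match) -> dict[str, float]:
    # Every model sees the same cases in the same order and is graded by the same scorer
    if not cases:
        raise ValueError("need at least one evaluation case")
    scores: dict[str, float] = {}
    for model in models:
        per_case = [scorer(model.understand(c.image, c.question), c.reference) for c in cases]
        scores[model.name] = sum(per_case) / len(per_case)
    return scores


class ConstantModel(UnifiedModel):
    """Toy stand-in that always gives the same answer, for wiring checks only."""

    def __init__(self, name: str, answer: str) -> None:
        self.name = name
        self.answer = answer

    def understand(self, image: bytes, question: str) -> str:
        return self.answer

    def generate(self, prompt: str, seed: int) -> bytes:
        return b""

    def edit(self, image: bytes, instruction: str, seed: int) -> bytes:
        return image
```

Fixing the cases, their order, and the scorer in one place is what makes the comparison apples-to-apples; real architectures would plug in as further `UnifiedModel` subclasses.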

Key takeaways:
- Fragmented multimodal architectures make fair comparison nearly impossible. TorchUMM is the first unified evaluation and post-training codebase.
- Covers understanding, generation, and editing with standardized side-by-side comparison.
- Useful for model selection teams, but long-term maintenance and community adoption are the key variables.

Source: TorchUMM: A Unified Multimodal Model Codebase for Evaluation, Analysis, and Post-training