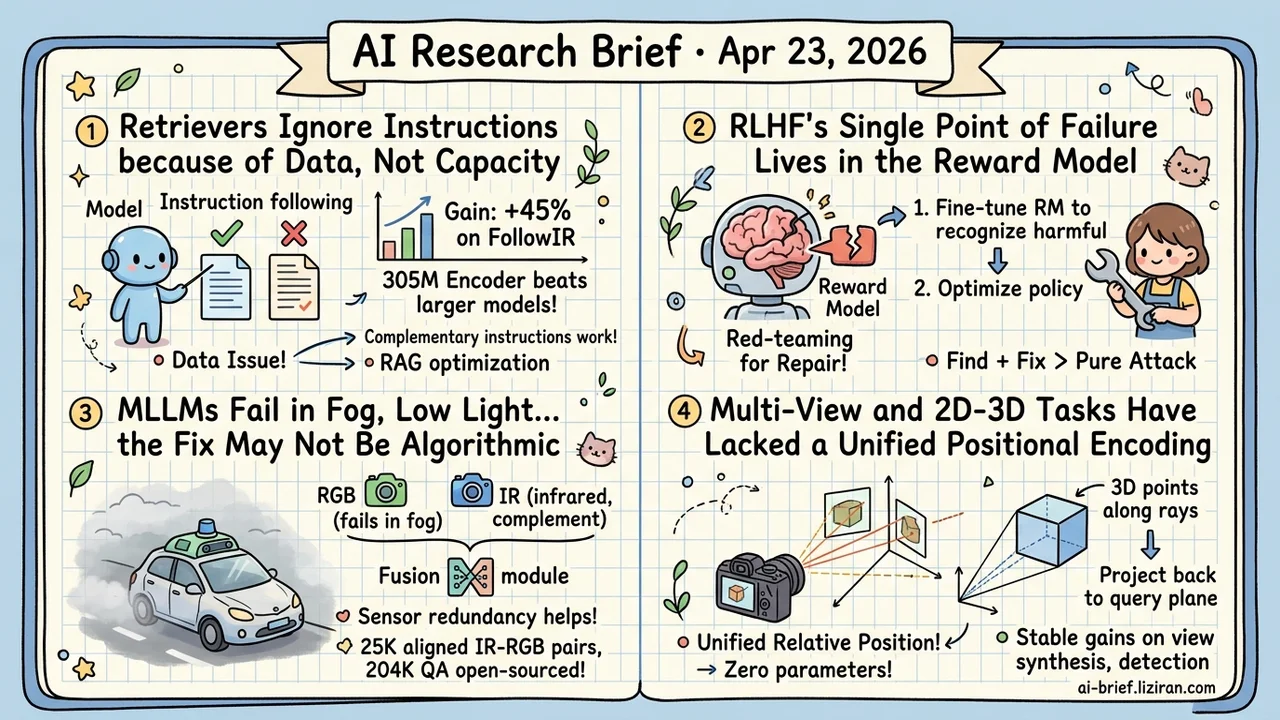

Today's Overview

- Retrievers Ignore Instructions Because of Data, Not Capacity: IF-IR synthesizes contrastive samples from complementary instruction pairs with label reversal. A 305M encoder gains 45% on FollowIR and beats general embeddings of comparable or larger size.

- RLHF's Single Point of Failure Lives in the Reward Model: ARES pushes red-teaming from "find the vulnerability" to end-to-end repair of the policy-reward system, closer to what teams with live RLHF pipelines actually need.

- MLLMs Fail in Fog, Low Light, and Motion Blur, and the Fix May Not Be Algorithmic: DUALVISION adds an infrared channel for modal complementarity and open-sources 25K aligned IR-RGB images with 204K QA annotations, cutting the cost of trying IR on existing MLLMs.

- Multi-View and 2D-3D Tasks Have Lacked a Unified Positional Encoding: URoPE samples 3D points along camera rays and projects them back to the query plane. Parameter-free, compatible with existing RoPE kernels, with stable gains on novel view synthesis, 3D detection, tracking, and depth estimation.

Featured

01 Retrieval Retrievers Understand Topics, Not Constraints

If you've built RAG, you've hit this: the user says "find docs about X but not from Y," and the retriever returns results about X while ignoring the exclusion. The root cause is the training objective — current retrievers optimize for semantic relevance and barely register instruction constraints. IF-IR attacks this from the data side.

The setup: a query, a positive matching both topic and instruction, and a hard negative that's topic-relevant but violates the instruction. An LLM then generates a complementary instruction that flips the labels between those same two documents. The same candidate pair with reversed labels under complementary instructions forces the model to re-evaluate through the instruction. It can't lean on fixed topical cues.
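The label-reversal setup above can be sketched as a small data-construction step. This is a minimal illustration, not the paper's pipeline: the `Triple` type, the `dual_view_samples` helper, and the example strings are all hypothetical, and the complementary instruction is written by hand here, whereas in IF-IR an LLM generates it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Triple:
    """One contrastive sample: instruction + query, a positive, and a hard negative."""
    query: str
    instruction: str
    positive: str
    negative: str


def dual_view_samples(query, instruction, complementary_instruction, doc_a, doc_b):
    """Build two triples over the same document pair with reversed labels.

    doc_a satisfies `instruction`; doc_b is on-topic but violates it.
    Under `complementary_instruction` the roles swap.
    """
    original = Triple(query, instruction, positive=doc_a, negative=doc_b)
    reversed_view = Triple(query, complementary_instruction, positive=doc_b, negative=doc_a)
    return [original, reversed_view]


# Hypothetical example data, for illustration only.
samples = dual_view_samples(
    query="vector database benchmarks",
    instruction="Exclude vendor-published results.",
    complementary_instruction="Only include vendor-published results.",
    doc_a="Independent benchmark of four vector databases.",
    doc_b="Vendor whitepaper benchmarking its own vector database.",
)
for s in samples:
    print(s.instruction, "->", s.positive)
```

Because both triples share the query and the two documents, the instruction is the only signal that separates the positive from the negative.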

A 305M-parameter encoder gains 45% on FollowIR, beating general embedding models of comparable or larger size. The paper also notes data diversity and instruction supervision are complementary: diversity preserves general retrieval quality, and supervision handles instruction sensitivity.

Key takeaways:

- Instruction neglect is a data problem, not capacity. No architecture change needed.

- Complementary instruction pairs with label reversal suit any RAG retriever with filter, exclude, or attribute constraints.

- A 305M model outperforming larger embeddings shows instruction-following depends on targeted data, not scale.

Source: Dual-View Training for Instruction-Following Information Retrieval

02 Safety Who Repairs the RLHF Reward Model After Red-Teaming?

The reward model is an overlooked single point of failure in RLHF. If it misses a class of unsafe behavior, the policy inherits the blind spot. Past red-teaming stopped at "found the exploit" and called it done. ARES pushes the loop one step forward.

A Safety Mentor composes adversarial prompts from topic, persona, tactic, and goal components, surfacing blind spots in both the core LLM and the RM. Repair runs in two stages: fine-tune the RM to recognize harmful content, then use the improved RM to optimize the policy.
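The compositional step can be pictured as enumerating one value per axis. The sketch below is only an illustration of that idea: the component pools are placeholders, `compose_specs` and `TEMPLATE` are hypothetical names, and the exhaustive enumeration stands in for whatever adaptive selection ARES actually uses.

```python
from itertools import product

# Placeholder component pools; a real red team would curate these.
COMPONENTS = {
    "topic": ["<topic-A>", "<topic-B>"],
    "persona": ["<persona-A>", "<persona-B>"],
    "tactic": ["<tactic-A>"],
    "goal": ["<goal-A>", "<goal-B>"],
}

# Hypothetical layout for turning one combination into a prompt specification.
TEMPLATE = "Persona: {persona}\nTactic: {tactic}\nTopic: {topic}\nGoal: {goal}"


def compose_specs(components):
    """Yield every combination of one value per axis as a dict."""
    axes = list(components)
    for values in product(*(components[axis] for axis in axes)):
        yield dict(zip(axes, values))


specs = list(compose_specs(COMPONENTS))
print(len(specs))  # 2 * 2 * 1 * 2 = 8 combinations
print(TEMPLATE.format(**specs[0]))
```

Keeping the axes explicit means a failure can be traced back to the specific topic, persona, tactic, or goal that exposed it.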

The abstract alone doesn't say how durable the repair is; judging that requires the full benchmarks. Still, for teams running real RLHF pipelines, the integrated "find + fix" pattern is more useful than pure attack evaluation. You want the system fixed, not a vulnerability list.

Key takeaways:

- Reward models are RLHF's single point of failure, and alignment gaps propagate through them into policy.

- Red-teaming's value isn't finding jailbreaks. It's closing the loop into repair.

- Treat the RM as an iteratively repaired component, not a frozen judge.

Source: ARES: Adaptive Red-Teaming and End-to-End Repair of Policy-Reward System

03 Multimodal MLLMs Fail in Fog and Low Light. Add a Sensor.

MLLMs look sharp on clean RGB images, then degrade on fog, low light, or motion blur — exactly the conditions autonomous driving, robotics, and surveillance actually operate in. DUALVISION skips more data augmentation and adds an infrared channel for modal complementarity. A lightweight fusion module uses patch-level local cross-attention to inject IR into the MLLM alongside RGB.
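To make "patch-level local cross-attention" concrete, here is a minimal sketch under strong assumptions: RGB and IR patches share one grid, there are no learned query/key/value projections, and fusion is a plain residual add. The function name and `radius` parameter are hypothetical, and DUALVISION's actual module may differ.

```python
import math


def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def local_cross_attention(rgb, ir, grid_w, radius=1):
    """Fuse IR patch features into RGB patch features.

    rgb, ir: lists of feature vectors in row-major order on the same patch grid.
    Each RGB patch (query) attends only to IR patches (keys and values) within
    `radius` grid cells; the attended IR feature is added to the RGB feature.
    """
    grid_h = len(rgb) // grid_w
    dim = len(rgb[0])
    fused = []
    for idx, q in enumerate(rgb):
        row, col = divmod(idx, grid_w)
        neighbours = [
            r * grid_w + c
            for r in range(max(0, row - radius), min(grid_h, row + radius + 1))
            for c in range(max(0, col - radius), min(grid_w, col + radius + 1))
        ]
        weights = softmax([dot(q, ir[n]) / math.sqrt(dim) for n in neighbours])
        attended = [sum(w * ir[n][d] for w, n in zip(weights, neighbours)) for d in range(dim)]
        fused.append([a + b for a, b in zip(q, attended)])
    return fused


# Toy 2x2 grid with 2-dimensional patch features (made-up numbers).
rgb = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]
ir = [[0.2, 0.0], [0.0, 0.2], [0.1, 0.1], [0.3, 0.3]]
fused = local_cross_attention(rgb, ir, grid_w=2, radius=1)
```

Restricting attention to a local window keeps the cost linear in the number of patches, which is one plausible reading of "lightweight" here.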

They open-source 25K IR-RGB image pairs, 204K QA annotations, and a 500-pair evaluation set called DV-500, so reproduction cost is relatively low. The paper claims stable gains across visual degradations, but judging the marginal win over "pure RGB + stronger augmentation" requires reading the full benchmarks.

Key takeaways:

- Teams in driving, robotics, or surveillance can treat degraded visual conditions as a sensing problem too, and consider sensor redundancy rather than only algorithmic fixes.

- A lightweight fusion module plus public aligned datasets lowers the cost of trying IR on existing MLLMs.

- Relative gains over strong pure-RGB baselines need the full paper and benchmark details to pin down.

Source: DUALVISION: RGB-Infrared Multimodal Large Language Models for Robust Visual Reasoning

04 Architecture Positional Encoding for Multi-View Transformers

RoPE is standard for 1D sequences and regular grids, but multi-view vision has lacked a unified relative position embedding. Query and key patches sit in different camera coordinate systems, and the geometric relationship doesn't reduce to pixel distance. URoPE samples 3D points along camera rays at preset depths, then projects back onto the query image plane. That reuses the 2D RoPE implementation while bringing camera-intrinsic awareness and global-coordinate invariance.
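The geometric step (sample points along a key patch's camera ray, then project them into the query view) can be sketched with a plain pinhole model. This is an illustration under assumptions, not the authors' code: intrinsics are given as `(fx, fy, cx, cy)`, `R` and `t` map key-camera coordinates to query-camera coordinates, and how URoPE combines the projected coordinates before applying 2D RoPE is not covered here.

```python
def project_key_ray_to_query(pixel, key_K, query_K, R, t, depths):
    """Project depth samples along a key pixel's ray into the query image plane.

    pixel:   (u, v) location of the key patch in the key image.
    key_K, query_K: pinhole intrinsics as (fx, fy, cx, cy).
    R, t:    rotation (3x3 nested list) and translation mapping key-camera
             coordinates to query-camera coordinates.
    depths:  preset depths at which to sample the ray.
    Returns one (u, v) query-plane coordinate per sample in front of the camera.
    """
    u, v = pixel
    fx, fy, cx, cy = key_K
    qfx, qfy, qcx, qcy = query_K
    direction = ((u - cx) / fx, (v - cy) / fy, 1.0)  # ray through the pixel, z = 1
    coords = []
    for d in depths:
        p_key = [d * c for c in direction]
        x, y, z = (sum(R[i][j] * p_key[j] for j in range(3)) + t[i] for i in range(3))
        if z <= 0:
            continue  # sample falls behind the query camera
        coords.append((qfx * x / z + qcx, qfy * y / z + qcy))
    return coords


IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
K = (500.0, 500.0, 320.0, 240.0)  # made-up intrinsics

# Query camera shifted sideways: nearer depth samples move further in the image.
shifted = project_key_ray_to_query((100.0, 50.0), K, K, IDENTITY, [1.0, 0.0, 0.0], [1.0, 2.0])
print(shifted)
```

The projected coordinates live in the query image's pixel space, which is why an existing 2D RoPE implementation can consume them unchanged.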

The authors show stable gains on novel view synthesis, 3D detection, tracking, and depth estimation — covering 2D-2D, 2D-3D, and temporal settings. It's parameter-free and compatible with existing RoPE-optimized attention kernels, so integration cost is small.

Validation so far is between camera views. Whether it generalizes to point clouds, irregular meshes, or graph structures needs future work.

Key takeaways:

- For multi-view or 2D-3D joint modeling in Transformers, URoPE is worth trying as the default relative position encoding.

- Zero added parameters and kernel compatibility keep deployment cost low.

- Point clouds, meshes, graphs, and molecules aren't validated yet. Wait before adopting if that's your domain.

Source: URoPE: Universal Relative Position Embedding across Geometric Spaces

Today's Observation

The interesting thing in IF-IR isn't the complementary instruction synthesis itself — contrastive sample synthesis is old. It's how they turn "the retriever doesn't read instructions" into something provable. The paper first runs a diagnostic: build pairs where the same two candidate documents flip labels under two complementary instructions. If the model's relative score on this pair stays flat across both instructions, it never used the instruction at all. It's coasting on topic relevance. Once this control exists, the problem shifts from "model underperforms" (vague) to "model outputs approximate constants on the instruction dimension" (a concrete, attackable defect).
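The diagnostic described above reduces to a flip-rate check. The sketch below assumes a scorer with the signature `score(instruction, query, doc) -> float`; that signature, the function names, and both toy scorers are hypothetical stand-ins for a real retriever, not the paper's evaluation code.

```python
def instruction_flip_rate(score, pairs):
    """Fraction of pairs whose preferred document flips under the complementary instruction.

    Each pair is (query, instr, complementary_instr, doc_a, doc_b), where doc_a
    should win under instr and doc_b should win under complementary_instr.
    """
    flips = 0
    for query, instr, comp, doc_a, doc_b in pairs:
        margin = score(instr, query, doc_a) - score(instr, query, doc_b)
        margin_comp = score(comp, query, doc_a) - score(comp, query, doc_b)
        if margin > 0 and margin_comp < 0:
            flips += 1
    return flips / len(pairs)


def topic_only_score(instruction, query, doc):
    """Toy scorer that ignores the instruction: word overlap between query and doc."""
    return len(set(query.lower().split()) & set(doc.lower().split()))


def instruction_aware_score(instruction, query, doc):
    """Toy scorer that adjusts the topical score by a hand-coded instruction check."""
    wants_vendor = instruction.startswith("Only")
    is_vendor = "vendor" in doc.lower()
    return topic_only_score(instruction, query, doc) + (1 if wants_vendor == is_vendor else -1)


pairs = [(
    "vector database benchmarks",
    "Exclude vendor-published results.",
    "Only include vendor-published results.",
    "Independent vector database benchmarks",
    "Vendor vector database benchmarks",
)]
print(instruction_flip_rate(topic_only_score, pairs))
print(instruction_flip_rate(instruction_aware_score, pairs))
```

A flip rate near zero on pairs like these is the signal that the retriever is scoring on topic alone.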

This diagnostic pattern matters because it targets a common practitioner pain point. Your model gains on benchmarks, then fails in production: which dimension did it miss? Usually we guess ("probably missed the long tail," "probably picked up a shortcut feature"), then patch with more data, regularization, or a bigger model. IF-IR flips the order. Hypothesize the suspect dimension first. Design controlled inputs where every other variable is fixed and only that dimension flips. If the model output doesn't flip with it, that dimension never entered the decision. ARES, also covered today, follows the same pattern: adaptive red-teaming walks along topic, persona, tactic, and goal axes to surface reward-model blind spots. Same directed control, different domain.

A concrete move: next time your model gains offline and fails in production, spend half a day designing a controlled experiment. Fix every other variable, flip only the suspected dimension, and check whether the output flips with it. If it doesn't, the model never learned that dimension, and synthesizing contrastive pairs along that one axis is likely to help more than collecting more data.