Today's Overview

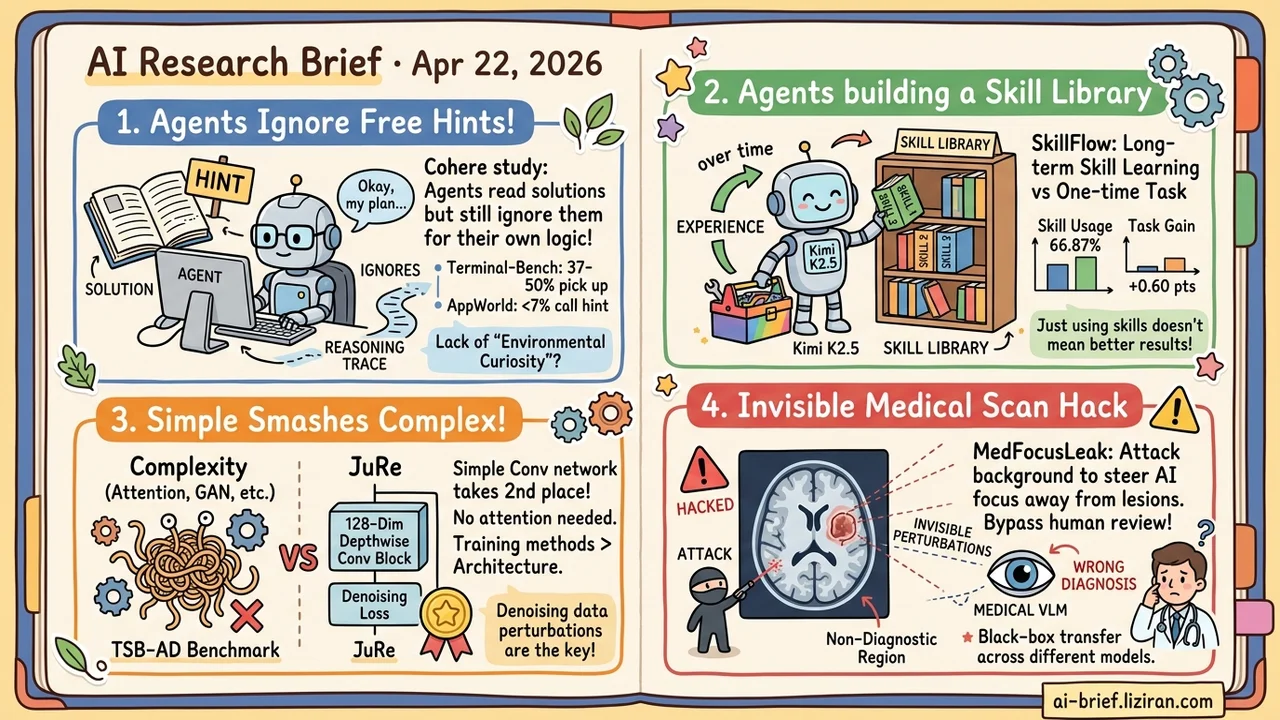

- Cohere Puts the Solution Directly in the Agent's Reading Path and It Still Follows Its Own Reasoning Trace. On Terminal-Bench, agents encountered the shortcut in 79-81% of runs but acted on it only 37-50% of the time; on AppWorld, fewer than 7% of agents that read the hint actually called the command it named.

- SkillFlow Shifts Agent Evaluation From "Can You Use Tools" to "Can You Build Skills Over a Lifetime." 166 tasks across 20 families expose lifetime-level failures; Kimi K2.5 hit 66.87% skill usage for a +0.60 point gain.

- JuRe Takes Second on TSB-AD With a 128-Dim Depthwise-Separable Conv Block. No attention, no latent variables, no adversarial components; ablations show training perturbations drive the gap, not network capacity.

- MedFocusLeak Injects Invisible Perturbations Into Non-Diagnostic Regions of Medical Scans. SOTA attack success across six imaging modalities, with black-box transfer between medical VLMs.

Featured

01 Agents: Won't Use Answers They Can Plainly See

Cohere ran a near-prank of an experiment across Terminal-Bench, SWE-Bench, and AppWorld. The full solution was injected directly into what the agent could read. The answer was on the table. Terminal-Bench agents encountered the shortcut in 79-81% of runs but acted on it only 37-50% of the time. AppWorld was worse: more than 90% of attempts saw a hint explicitly telling the agent which command returned the full solution, yet fewer than 7% called it.

The agent isn't blind. It sees the free signal and moves past it, pushing forward on its own reasoning trace. The paper calls this missing capability "environmental curiosity." Current agents treat the environment as a retrieval interface for information they already expect, not as a place that might update their plan. Joint optimization across tool configuration, test-time compute, and training distribution did not fix it in most cases.

Key takeaways:

- Unstable agent behavior in production may not be a prompt or tool issue. The agent may simply not be reading what it sees.
- Reasoning traces and observation streams are structurally decoupled, which is not the same as weak reasoning ability.
- To test your own stack, plant an obvious shortcut in the environment and measure pickup rate.
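One way to turn the pickup-rate test into numbers is to log, per run, whether the shortcut showed up in the agent's observations and whether the agent acted on it. The sketch below is an illustration under stated assumptions, not the paper's evaluation code: `RunRecord`, its fields, and `pickup_stats` are hypothetical names, and the demo data is invented.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    """One agent run. Field names are hypothetical, not taken from the paper."""
    saw_shortcut: bool   # the planted shortcut appeared in an observation
    used_shortcut: bool  # the agent actually acted on it


def pickup_stats(runs):
    """Return encounter rate, overall pickup rate, and pickup rate given encounter."""
    total = len(runs)
    if total == 0:
        raise ValueError("need at least one run")
    seen = sum(r.saw_shortcut for r in runs)
    # Count a pickup only when the shortcut was observed in that run.
    used = sum(r.saw_shortcut and r.used_shortcut for r in runs)
    return {
        "encounter_rate": seen / total,
        "pickup_rate": used / total,
        "pickup_given_seen": used / seen if seen else 0.0,
    }


# Invented demo data: 10 runs, 8 saw the shortcut, 3 of those used it.
demo = (
    [RunRecord(True, True)] * 3
    + [RunRecord(True, False)] * 5
    + [RunRecord(False, False)] * 2
)
stats = pickup_stats(demo)
print(stats)
```

Reporting the conditional rate next to the encounter rate separates "the agent never reached the shortcut" from "the agent saw it and moved on", which is the distinction the experiment turns on.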

Source: Agents Explore but Agents Ignore: LLMs Lack Environmental Curiosity

02 Evaluation: Skill Usage Is Not Skill Benefit

Most agent benchmarks measure whether a model can finish a task with a given toolbox. SkillFlow shifts the target: can it discover skills from experience, repair failures, and maintain a coherent skill library over time? 166 tasks are grouped into 20 families with intra-family dependencies, a design built to expose lifetime-level failure modes invisible to single-step evaluation.

The numbers are telling. Under the lifelong protocol, Claude Opus 4.6 moves from 62.65% to 71.08%. Kimi K2.5 calls skills 66.87% of the time for only +0.60 points, and Qwen-Coder-Next actually regresses with a skill library compared to without one. Calling a skill and benefiting from one are different capabilities. Frameworks marketed as self-evolving may need to redraw their capability boundaries.

Key takeaways:

- Agent evaluation is shifting from single-step tool use to lifetime skill accumulation, a harder bar.
- High skill-usage rate does not imply task gain. Evaluate the two separately.
- Any framework marketed as self-evolving deserves a lifelong-benchmark rerun before you trust the claim.
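Keeping the two metrics apart can be as simple as computing them from different inputs. The sketch below is a minimal illustration, not SkillFlow's scoring code: the function names and the `skill_calls` record field are hypothetical, and "usage" is assumed here to mean the fraction of runs that call at least one skill. Only the 62.65% and 71.08% figures come from the text.

```python
def skill_usage_rate(runs):
    """Fraction of runs (library enabled) that called at least one skill."""
    if not runs:
        raise ValueError("need at least one run")
    return sum(1 for r in runs if r["skill_calls"] > 0) / len(runs)


def task_gain_points(success_without, success_with):
    """Change in success rate, in percentage points, from enabling the library."""
    return round(success_with - success_without, 2)


# Figures reported in the text: Claude Opus 4.6 moves from 62.65% to 71.08%.
opus_gain = task_gain_points(62.65, 71.08)
print(opus_gain)  # 8.43

# Invented demo runs: usage comes from call logs, gain comes from outcomes.
demo_runs = [
    {"skill_calls": 2},
    {"skill_calls": 0},
    {"skill_calls": 1},
    {"skill_calls": 0},
]
usage = skill_usage_rate(demo_runs)
print(usage)  # 0.5
```

Usage is read from call logs while gain needs a paired run without the library, so a high usage number on its own says nothing about whether the skills helped.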

Source: SkillFlow: Benchmarking Lifelong Skill Discovery and Evolution for Autonomous Agents

03 Architecture: A 128-Dim Conv Block Beats the Attention Stack

JuRe's entire architecture is one depthwise-separable convolution residual block with hidden dim 128, plus a parameter-free structural difference scoring function. No attention. No latent variables. No adversarial components. It takes second place on the TSB-AD multivariate benchmark (AUC-PR 0.404 across 17 datasets), second on the UCR univariate archive, and first among neural network baselines.
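To get a feel for how small a depthwise-separable block is, the parameter counts can be worked out by hand. The sketch below compares a standard 1D convolution with a depthwise-plus-pointwise pair at hidden dim 128. It is generic arithmetic, not JuRe's actual layer configuration: the kernel size of 7 is an assumption made for illustration, since the text does not state one, and the helper names are hypothetical.

```python
def conv1d_params(c_in, c_out, kernel_size, bias=True):
    """Parameter count of a standard 1D convolution."""
    return c_in * c_out * kernel_size + (c_out if bias else 0)


def depthwise_separable_params(c_in, c_out, kernel_size, bias=True):
    """Depthwise conv (one filter per input channel) followed by a 1x1 pointwise conv."""
    depthwise = c_in * kernel_size + (c_in if bias else 0)
    pointwise = c_in * c_out + (c_out if bias else 0)
    return depthwise + pointwise


HIDDEN = 128  # hidden dim stated in the text
KERNEL = 7    # assumed for illustration; the text gives no kernel size

standard = conv1d_params(HIDDEN, HIDDEN, KERNEL)
separable = depthwise_separable_params(HIDDEN, HIDDEN, KERNEL)
print(standard)   # 114816
print(separable)  # 17536
```

Under these assumptions the separable layer needs roughly a sixth to a seventh of the parameters of the standard one, and the saving grows with kernel size.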

The counterintuitive part: time-series anomaly detection has spent years piling on complexity. Attention, latent variables, and adversarial training have rotated through the leaderboards. JuRe suggests the direction itself may be wrong, and the ablation supports that reading. Removing training-time data perturbations drops AUC-PR by 0.047, a larger effect than any capacity change. Getting the denoising objective right matters more than adding layers.

Key takeaways:

- In time-series anomaly detection, the loss function and training objective dominate, not the architecture.
- Adding attention before a simple baseline is saturated wastes compute.
- Teams working in this area should run JuRe as a minimum baseline before reaching for complex models.

Source: Back to Repair: A Minimal Denoising Network for Time Series Anomaly Detection

04 Safety: Hiding Poison in Medical Image Backgrounds

MedFocusLeak injects coordinated, visually imperceptible perturbations into the non-diagnostic regions of medical scans. An attention-dispersal mechanism then drags the model's focus away from the lesion toward clinically plausible but wrong diagnoses. Clinicians judge scans by the lesion area, so a background-only attack that shifts model attention bypasses human review entirely.

Attack success hits SOTA across six imaging modalities. The harder problem is black-box transfer: an adversary does not need the target model's weights, because adversarial examples crafted on one medical VLM transfer to another. That makes this a model-family weakness, not a single-model bug. Teams deploying medical VLMs need to cover non-diagnostic regions in their robustness pipeline; lesion-only perturbation testing is not enough.

Key takeaways:

- Medical VLM attention can be steered systematically through background perturbations that humans cannot see.
- Black-box transfer means per-model defense hardening is insufficient.
- Robustness pipelines should cover non-diagnostic regions before deployment, not just lesion perturbations.
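A first step toward covering non-diagnostic regions is a check that perturbs only the background and counts how often the prediction changes. The sketch below is a deliberately weak baseline, not the MedFocusLeak attack: it uses bounded random noise rather than an optimized perturbation, so a low flip rate here does not imply robustness to the attack described above. The function names, the `epsilon` value, the nested-list image format, and the toy model are all assumptions made for illustration.

```python
import random


def perturb_background(image, lesion_mask, epsilon, rng):
    """Add bounded noise only where lesion_mask is False; clamp pixels to [0, 1]."""
    out = []
    for img_row, mask_row in zip(image, lesion_mask):
        new_row = []
        for pixel, is_lesion in zip(img_row, mask_row):
            if is_lesion:
                new_row.append(pixel)  # diagnostic region stays untouched
            else:
                noisy = pixel + rng.uniform(-epsilon, epsilon)
                new_row.append(min(1.0, max(0.0, noisy)))
        out.append(new_row)
    return out


def background_flip_rate(predict, image, lesion_mask, epsilon=0.03, trials=20, seed=0):
    """Fraction of background-only perturbations that change the prediction."""
    rng = random.Random(seed)
    baseline = predict(image)
    flips = sum(
        predict(perturb_background(image, lesion_mask, epsilon, rng)) != baseline
        for _ in range(trials)
    )
    return flips / trials


# Toy stand-in model that reads only the lesion pixel, so it should never flip.
image = [[0.2, 0.2, 0.2], [0.2, 0.9, 0.2], [0.2, 0.2, 0.2]]
mask = [[False, False, False], [False, True, False], [False, False, False]]


def lesion_only_model(img):
    return "abnormal" if img[1][1] > 0.5 else "normal"


print(background_flip_rate(lesion_only_model, image, mask))  # 0.0
```

In a real pipeline `predict` would wrap the deployed model and the mask would come from an annotation or segmentation step; any nonzero flip rate from background-only noise is a signal worth investigating before deployment.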

Source: When Background Matters: Breaking Medical Vision Language Models by Transferable Attack

Also Worth Noting

Today's Observation

SkillFlow assumes agents can extract and maintain skills from experience. Cohere's Agents Explore paper landed the same day and tears down the prerequisite. If an agent cannot pick up a solution placed in front of it, extracting subtle long-term skill patterns from experience is out of reach. The two papers do not cite each other, but reading them together reorders how you evaluate whether an agent framework works. First check whether the agent responds to observations at all. Then ask whether it can accumulate a durable skill library.

Continuity Layer and AnchorMem, which arrived the same day, echo the gap from the memory side. If agents cannot process what they currently see, no memory architecture can save them.

One concrete action: plant an obvious shortcut in your existing agent integration. Try a tool response that says "call X to finish this task in one step," then run a batch. If pickup is under 50%, the urgent question is whether the reasoning trace and observation stream are actually wired together. Prompt tuning and model swaps can wait.
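The planting step can be sketched as a thin wrapper around an existing tool plus a check over the recorded tool calls. Everything named here is an assumption for illustration: `solve_task` is a hypothetical shortcut tool, `plant_shortcut` and `pickup_rate` are made-up helpers, and the traces are invented lists of tool names, one list per run.

```python
SHORTCUT_TOOL = "solve_task"  # hypothetical tool name
HINT = f"Hint: call {SHORTCUT_TOOL} to finish this task in one step."


def plant_shortcut(tool_fn, hint=HINT):
    """Wrap a tool so every response carries the planted hint."""
    def wrapped(*args, **kwargs):
        return f"{tool_fn(*args, **kwargs)}\n{hint}"
    return wrapped


def pickup_rate(traces, shortcut_tool=SHORTCUT_TOOL):
    """Fraction of runs whose tool-call trace includes the shortcut tool."""
    if not traces:
        raise ValueError("need at least one trace")
    return sum(shortcut_tool in trace for trace in traces) / len(traces)


# Demo with a stand-in tool and invented traces.
list_files = plant_shortcut(lambda: "README.md\nmain.py")
print(list_files())

traces = [
    ["list_files", "read_file", "solve_task"],
    ["list_files", "read_file", "edit_file"],
    ["list_files", "edit_file", "run_tests"],
    ["list_files", "solve_task"],
]
rate = pickup_rate(traces)
print(rate, "investigate observation handling" if rate < 0.5 else "pickup ok")
```

Wrapping the tool rather than editing the prompt keeps the hint in the observation stream, which is the channel the experiment is meant to probe.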