Today's Overview

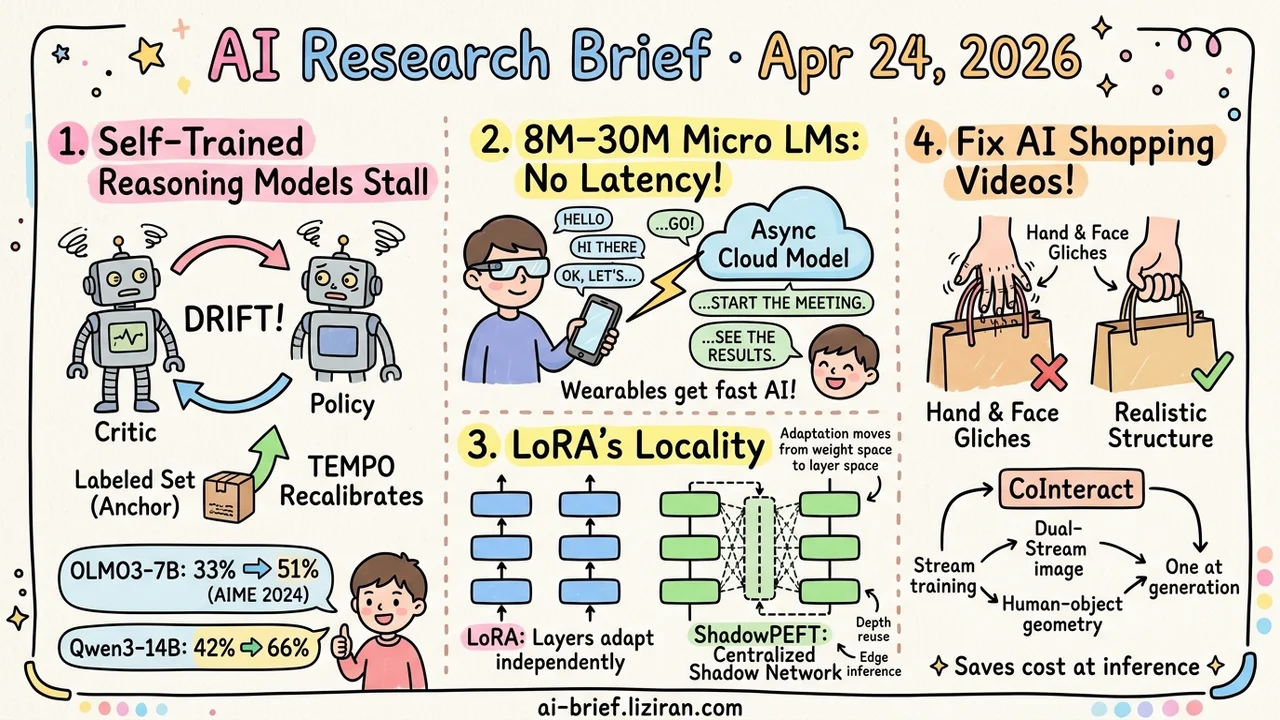

- Self-Trained Reasoning Models Stall Because the Critic Drifts. TEMPO recalibrates the critic against a small labeled set. OLMO3-7B jumps from 33% to 51% on AIME 2024, Qwen3-14B from 42% to 66%. Diversity holds.

- 8M–30M Micro LMs Write the First 4–8 Words On-Device. A cloud model continues asynchronously. From the user's perspective, latency disappears; the device-vs-cloud question stops being either-or.

- LoRA's "Locality" Is a Diagnostic Axis Worth Isolating. ShadowPEFT moves adaptation from weight space to layer space using a centralized shadow network. Same architectural signal as the B-matrix symmetry paper from two days ago.

- What Gives Away an AI Shopping Video Isn't Picture Quality. It's hand and face anomalies plus fingers clipping through products. CoInteract bakes spatial structure into generation through dual-stream training, with the auxiliary stream removed at inference, so generation cost stays flat.

Featured

01 Reasoning: Self-Training Stalls Because the Critic Drifts

Test-time training was supposed to deliver free gains for reasoning models. The setup: keep updating parameters at inference using unlabeled problems. Multiple teams hit the same wall: more compute stops moving the needle, and output diversity collapses with it.

TEMPO's diagnosis: when the model trains on self-generated rewards, the critic drifts with the policy. The reward signal loses its external anchor, and the loop stalls. The fix is to periodically recalibrate the critic against a small labeled set. Formalized as expectation-maximization (EM), this turns out to be exactly the calibration step prior methods skipped.
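The drift-and-recalibrate idea can be illustrated with a toy simulation. This is a minimal sketch, not TEMPO's algorithm: the `Critic` class, the constant `drift_per_step`, and the mean-error recalibration rule are all assumptions made for illustration. It only shows why an external labeled anchor bounds the critic's error while an unanchored loop lets it grow.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Critic:
    """Toy critic whose score is the true reward plus an accumulated bias."""
    bias: float = 0.0

    def score(self, true_reward: float) -> float:
        return true_reward + self.bias

    def recalibrate(self, labeled: List[Tuple[float, float]]) -> None:
        # labeled holds (critic_score, ground_truth_reward) pairs.
        # Subtracting the mean error re-aligns scores with ground truth.
        mean_error = sum(s - t for s, t in labeled) / len(labeled)
        self.bias -= mean_error


def max_critic_bias(steps: int, recalibrate_every: Optional[int],
                    drift_per_step: float = 0.05) -> float:
    """Return the largest absolute bias seen over the run."""
    critic = Critic()
    labeled_truth = [0.0, 0.5, 1.0]  # hypothetical small labeled set
    worst = 0.0
    for step in range(1, steps + 1):
        # Assumption: each self-training step nudges the critic by a fixed amount.
        critic.bias += drift_per_step
        worst = max(worst, abs(critic.bias))
        if recalibrate_every and step % recalibrate_every == 0:
            pairs = [(critic.score(t), t) for t in labeled_truth]
            critic.recalibrate(pairs)
    return worst


if __name__ == "__main__":
    print("no anchor:  ", max_critic_bias(100, None))
    print("anchor k=10:", max_critic_bias(100, 10))
```

In this toy setting the unanchored run accumulates bias for all 100 steps, while recalibrating every 10 steps caps the bias at roughly ten steps of drift.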

OLMO3-7B climbs from 33.0% to 51.1% on AIME 2024. Qwen3-14B goes from 42.3% to 65.8%. Diversity holds. If you're working on self-improvement (training a reasoning model on data it generated itself), this paper turns "extra training stops working" from vague intuition into a locatable, fixable failure mode.

Key takeaways:

- Self-trained reasoning models stall because the critic drifts with the policy. Periodic recalibration against a small labeled set is the missing EM step.

- OLMO3-7B goes from 33% to 51% on AIME 2024; Qwen3-14B from 42% to 66%, with diversity preserved.

- For teams working on self-improvement, the lesson is structural: your reward signal needs an external anchor, otherwise additional training feeds itself.

Source: TEMPO: Scaling Test-time Training for Large Reasoning Models

02 Efficiency: Eight Words On-Device, Then the Cloud Continues

On-device model design used to be a binary: big enough to be useful, or small enough to be fast. μLMs reframe it. An 8M–30M parameter model on the device produces only the first 4–8 words of a response, instantly. The cloud model picks up asynchronously. From the user's perspective, latency disappears.

The cloud is no longer the responder. It's the continuator. Three error-correction mechanisms catch cases where the local opening drifts off-track. For smart glasses, smartwatches, AR — products bottlenecked by both battery and latency — this is a deployment-ready division of labor.
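The opener-then-continuator split can be sketched with `asyncio`. This is a hedged illustration only: `local_opener`, `cloud_continue`, the canned strings, and the 50 ms simulated latency are hypothetical stand-ins, and the three error-correction mechanisms are not modeled here.

```python
import asyncio
from typing import Callable, List


async def local_opener(prompt: str) -> str:
    """Stand-in for the on-device micro LM: returns a short opening right away."""
    return "Sure, here is a quick"


async def cloud_continue(prompt: str, opening: str) -> str:
    """Stand-in for the cloud model, which sees the opening and continues it."""
    await asyncio.sleep(0.05)  # simulated network and inference latency
    return " summary of what you asked for."


async def respond(prompt: str, display: Callable[[str], None]) -> None:
    opening = await local_opener(prompt)
    display(opening)  # the user sees text before the cloud has answered
    continuation = await cloud_continue(prompt, opening)
    display(continuation)  # the cloud extends the opening instead of restarting


def demo(prompt: str) -> List[str]:
    """Collect the chunks in the order a user would see them."""
    shown: List[str] = []
    asyncio.run(respond(prompt, shown.append))
    return shown


if __name__ == "__main__":
    print("".join(demo("What is on my calendar?")))
```

The design point is that the cloud call receives the local opening as input, so its output is a continuation of text that is already on screen.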

What the abstract doesn't cover: behavior under network jitter. If the local opening lands but the cloud doesn't catch up in time, what does the user see? Worth checking the full paper.

Key takeaways:

- μLMs redefine the on-device model from "complete generator" to "opener," ending the device-vs-cloud either-or.

- 8M–30M parameters match the language quality of 70M–256M models, pushing the usable floor for tiny models down.

- Wearable and AR responsive AI now has an engineering path; jitter behavior still needs empirical validation.

Source: Micro Language Models Enable Instant Responses

03 Training: Should PEFT Be Centralized or Distributed?

LoRA inserts an independent low-rank perturbation per weight matrix. Each layer adapts on its own; nothing coordinates across weights. That "locality" is itself a diagnostic axis worth isolating.

ShadowPEFT goes the other direction. It maintains a parallel shadow state in each transformer layer and evolves it through depth-shared modules. Adaptation moves from weight space to layer space — centralized, not distributed. The shadow module decouples from the backbone, so it can be reused across depths, pretrained independently, even deployed in detached mode (a useful property for edge inference).
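A little arithmetic makes the distributed versus centralized contrast concrete. The LoRA count below follows the standard formulation (one `A` and one `B` per adapted matrix). The shared-module shape is purely an assumption for illustration (one down-projection and one up-projection reused at every layer) and is not taken from the ShadowPEFT paper; all dimensions are illustrative.

```python
def lora_param_count(num_layers: int, matrices_per_layer: int,
                     d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters when every adapted matrix has its own adapter.

    Each adapted matrix gets its own A (rank x d_in) and B (d_out x rank),
    so the total grows linearly with depth.
    """
    return num_layers * matrices_per_layer * rank * (d_in + d_out)


def shared_module_param_count(d_model: int, d_shadow: int) -> int:
    """Trainable parameters for a hypothetical depth-shared module.

    Assumption: a single down-projection (d_model x d_shadow) and a single
    up-projection (d_shadow x d_model), reused at every layer, so the total
    does not depend on the number of layers.
    """
    return 2 * d_model * d_shadow


if __name__ == "__main__":
    distributed = lora_param_count(num_layers=32, matrices_per_layer=2,
                                   d_in=4096, d_out=4096, rank=8)
    centralized = shared_module_param_count(d_model=4096, d_shadow=512)
    print(distributed, centralized)
```

With these illustrative numbers both budgets come to 4,194,304 trainable parameters: the distributed design spends them on 64 independent rank-8 adapters, while the shared design spends them on one much wider module applied at every depth.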

Benchmarks land roughly even with LoRA and DoRA at matched parameter budgets. No dramatic gain. Pair this with the LoRA paper from two days ago that approached the same problem through B-matrix symmetry, and a pattern emerges: PEFT tooling is examining LoRA's basic shape from multiple angles.

Key takeaways:

- "LoRA's locality" is a diagnostic axis parallel to but independent of weight symmetry; PEFT's basic shape still has design space to explore.

- Shadow modules support depth reuse, independent pretraining, and detached deployment, which is practically valuable for edge.

- If you build PEFT tools or infrastructure, track the "centralized vs distributed" dividing line, not single-paper benchmark deltas.

Source: ShadowPEFT: Shadow Network for Parameter-Efficient Fine-Tuning

04 Video Gen: Hands and Faces Tell on AI Video

In human-product interaction videos, viewers spot AI through two specific signals: hand and face structural glitches, and fingers passing through products. Both jar more than overall visual quality.

CoInteract pulls these failures out as explicit constraints inside a Diffusion Transformer. A Human-Aware MoE routes hand and face tokens to lightweight regional experts for finer structural stability. Dual-stream training adds an auxiliary stream that encodes human-object interaction geometry, regularizing the shared backbone weights. At inference, the auxiliary stream is removed entirely, so generation cost stays flat.
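The "auxiliary stream at training, nothing extra at inference" pattern can be sketched in a few lines. This is a toy under stated assumptions, not CoInteract's architecture: `DualStreamToy`, the doubling backbone, the squared-error losses, and `aux_weight` are all hypothetical, and the Human-Aware MoE routing is not modeled.

```python
from typing import List


def mse(a: List[float], b: List[float]) -> float:
    """Mean squared error between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


class DualStreamToy:
    """Toy model with a shared backbone and a training-only auxiliary stream."""

    def __init__(self) -> None:
        self.aux_calls = 0  # counts how often the auxiliary stream runs

    def backbone(self, x: List[float]) -> List[float]:
        # Stand-in for the shared generator weights.
        return [2.0 * v for v in x]

    def aux_stream(self, features: List[float], geometry: List[float]) -> float:
        # Assumption: the auxiliary stream scores agreement between backbone
        # features and an interaction-geometry target.
        self.aux_calls += 1
        return mse(features, geometry)

    def training_loss(self, x: List[float], target: List[float],
                      geometry: List[float], aux_weight: float = 0.1) -> float:
        features = self.backbone(x)
        # The auxiliary term regularizes the backbone during training only.
        return mse(features, target) + aux_weight * self.aux_stream(features, geometry)

    def generate(self, x: List[float]) -> List[float]:
        # Inference touches only the backbone, so its cost is unchanged.
        return self.backbone(x)


if __name__ == "__main__":
    model = DualStreamToy()
    print(model.training_loss([1.0], target=[2.0], geometry=[3.0]))
    print(model.generate([1.0, 2.0]), model.aux_calls)
```

The auxiliary stream shapes the shared weights through the loss, but `generate` never calls it, which is why dropping it at inference adds no cost.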

The shift is conceptual: encode spatial structure into generation, don't patch it afterward. Magnitudes need the full paper, since the abstract reports only qualitative wins ("significantly better than existing methods") with no concrete metrics. For teams automating ecommerce or short-form ad video, this line is worth tracking. Eliminating these two giveaways will likely move conversion rates more than another tier of overall fidelity.

Key takeaways:

- Hand and face structure plus finger clipping are the first tells viewers use to flag AI video; eliminating them likely moves conversion more than overall fidelity.

- The structure stream is removed entirely at inference, so generation cost is unchanged.

- The abstract reports only qualitative wins; specific gains need to be checked in the full paper.

Today's Observation

Two unrelated lines of work, TEMPO on test-time training and ShadowPEFT on PEFT, converge on the same structural diagnosis: the ceiling isn't capacity or compute, it's the assumption of "local independence." TTT lets the model score itself, but self-generated rewards have no external anchor, so the critic drifts along with the policy. LoRA puts an independent low-rank perturbation on every weight matrix, with no coordination across them. The proposed fixes are oddly symmetric. TTT adds an external calibrator to re-anchor the critic. PEFT replaces distributed perturbations with one centralized shadow network. Neither paper cites the other, but both land on the same architectural call: the missing piece is a global anchor across steps or across weights.

If you're working on reasoning self-improvement or PEFT infrastructure, the next thing to audit isn't "which hyperparameter to tune." Draw out your training or adaptation pipeline and look for a global anchor. Is there a small labeled set passing through every k steps? Do all LoRA layers share a centralized representation? Add "local vs global" as a diagnostic axis in your experiment notes. Next time something refuses to budge, you'll have one more direction to check before falling back into the learning-rate-and-rank loop.