Today's Overview

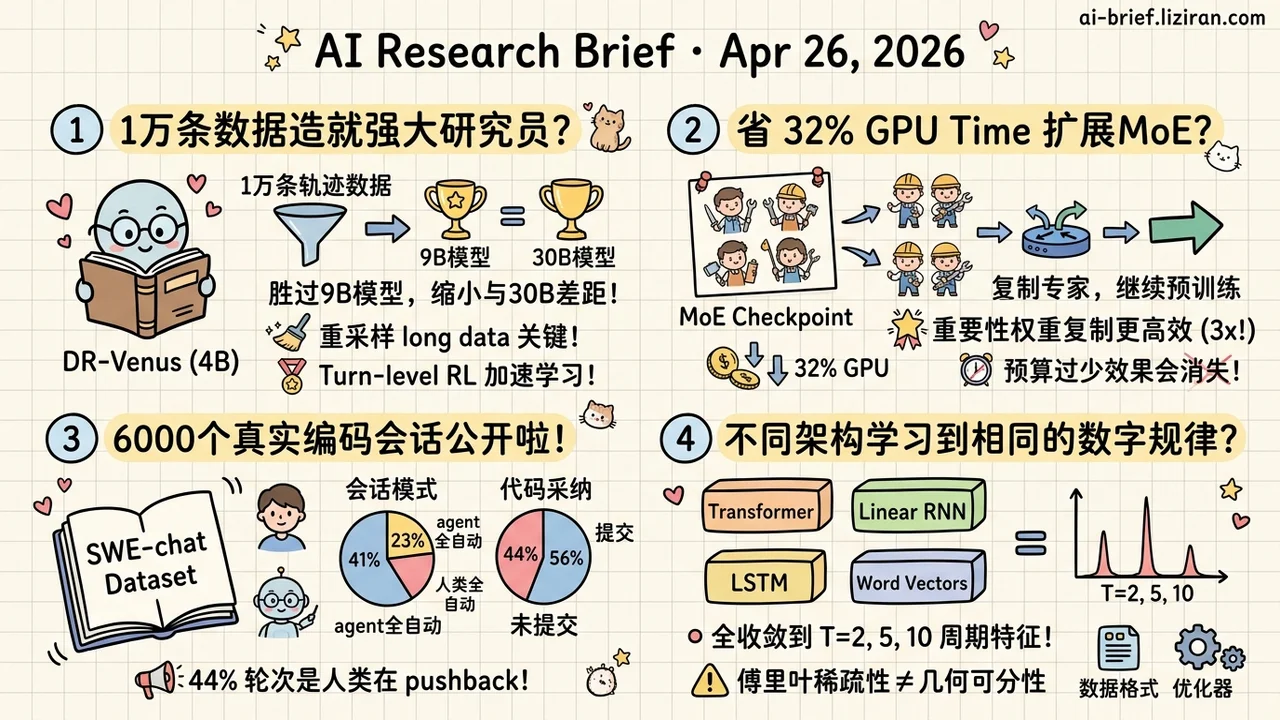

- 10K Open Trajectories Train a 4B Deep Research Agent. DR-Venus combines agentic SFT with turn-level RL to deliver an edge-deployable agent that beats sub-9B agentic models and narrows the gap to the 30B class.

- Expanding MoE Experts from a Checkpoint Saves 32% GPU Time. Expert Upcycling copies experts and expands the router, then lets experts re-differentiate during continued pretraining; choosing which experts to copy by gradient importance widens the gain by more than 3x on tight budgets.

- 6,000 Real Coding-Agent Conversations Finally Public. SWE-chat shows bimodal usage: 41% of sessions offload nearly everything to the agent, while in 23% the human writes all the code. Only 44% of agent code lands in commits, and 44% of turns are pushback.

- Four Architectures Learn the Same Number Representations. Transformer, Linear RNN, LSTM, and word vectors all converge on T=2, 5, 10 Fourier-domain periodic features, but whether mod-T is linearly classifiable still depends on data format and optimizer.

Featured

01 Agent: Squeezing 10K Trajectories Until They Train a 4B Agent

The bottleneck for training deep research agents isn't compute or parameter count. It's high-quality long-horizon trajectories, and open-source supply is thin. DR-Venus delivers a recipe that works on just 10K open trajectories.

Stage one is agentic SFT. The key moves are strict data cleaning and resampling long trajectories so they aren't drowned out by ordinary samples in each batch. Stage two builds RL on top of IGPO with turn-level rewards, using information gain and format regularization as supervision signals to densify credit assignment across long sequences.
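The resampling idea in stage one can be sketched as weighted sampling with replacement. This is a minimal illustration, not the DR-Venus pipeline: the function name, the `long_turns` threshold, and the `long_weight` factor are all hypothetical knobs chosen for the toy example.

```python
import random


def resample_by_length(trajectories, n_samples, long_turns=20, long_weight=3.0, seed=0):
    """Draw an SFT training set in which long trajectories are oversampled.

    trajectories: list of trajectories, each a list of turns.
    long_turns and long_weight are hypothetical values, not taken from the paper.
    """
    rng = random.Random(seed)
    # Long trajectories get a larger sampling weight than ordinary ones.
    weights = [long_weight if len(t) >= long_turns else 1.0 for t in trajectories]
    return rng.choices(trajectories, weights=weights, k=n_samples)


# Toy pool: 90 short trajectories (5 turns) and 10 long ones (40 turns).
short = [["turn"] * 5 for _ in range(90)]
long_ = [["turn"] * 40 for _ in range(10)]

sample = resample_by_length(short + long_, n_samples=1000)
long_share = sum(len(t) >= 20 for t in sample) / len(sample)
print(f"long share in pool: 0.10, in resampled set: {long_share:.2f}")
```

With these toy weights the expected long-trajectory share rises from 10% to 25% (30 of 120 weight units), which is the kind of shift you would compare against your own batch composition.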

The recipe beats sub-9B agentic models on multiple deep research benchmarks and narrows the gap to the 30B class. The abstract is light on stage-two reward specifics, so the exact reward shaping and weighting need the full paper. Stage one's data handling is concrete enough to compare against your own pipeline today.
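To make the turn-level reward idea concrete, here is a rough sketch under stated assumptions. It assumes information gain is measured as the change in the probability the policy assigns to the reference answer after each turn, and it subtracts a flat penalty for malformed turns. Both the measurement and the `format_penalty` value are illustrative guesses, not the paper's reward.

```python
def turn_level_rewards(answer_probs, format_ok, format_penalty=0.1):
    """Assign one reward per turn instead of one reward per trajectory.

    answer_probs: probability of the reference answer before the first turn
                  and after each turn (length = number of turns + 1).
    format_ok:    one bool per turn, True if the turn is well-formed.
    format_penalty is a hypothetical weight, not a value from the paper.
    """
    if len(answer_probs) != len(format_ok) + 1:
        raise ValueError("answer_probs needs exactly one more entry than format_ok")
    rewards = []
    for t, ok in enumerate(format_ok):
        gain = answer_probs[t + 1] - answer_probs[t]  # assumed information gain
        rewards.append(gain - (0.0 if ok else format_penalty))
    return rewards


# Toy trajectory with four turns; the third turn is malformed and unhelpful.
probs = [0.05, 0.10, 0.40, 0.35, 0.80]
fmt = [True, True, False, True]
rewards = turn_level_rewards(probs, fmt)
print([round(r, 2) for r in rewards])
```

Each turn receives its own signal, so a useful search step in the middle of a long trajectory is credited even when the final outcome reward is sparse.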

Key takeaways:
- Resampling long trajectories beats blindly adding data when supply is tight.
- Turn-level rewards using information gain ease credit assignment for sparse long-horizon tasks.
- Stage one's data recipe is the immediately actionable piece; stage two's RL details still need the full paper.

Source: DR-Venus: Towards Frontier Edge-Scale Deep Research Agents with Only 10K Open Data

02 Training: Don't Retrain Your MoE, Copy and Re-Specialize

At 7B-13B scale, expanding experts from an existing MoE checkpoint saves 32% of GPU time versus training from scratch, with validation loss matching. The mechanism: copy experts and expand the router so an E-expert model becomes mE-expert, while per-token active expert count stays the same. Inference cost doesn't move.
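The copy-and-expand step can be sketched with plain lists standing in for weight tensors. This is a toy illustration of uniform duplication, not the authors' code: `upcycle` and `top_k` are hypothetical helper names, and real routers and experts would be framework tensors.

```python
import copy


def upcycle(experts, router_rows, m):
    """Turn an E-expert layer into an m*E-expert layer by duplication.

    experts:     list of E expert weight objects (nested lists here).
    router_rows: list of E router weight vectors, one per expert.
    """
    new_experts, new_router = [], []
    for weights, row in zip(experts, router_rows):
        for _ in range(m):
            new_experts.append(copy.deepcopy(weights))  # independent copy
            new_router.append(list(row))                # matching router row
    return new_experts, new_router


def top_k(logits, k):
    """Indices of the k largest router logits (ties keep the lower index)."""
    return sorted(range(len(logits)), key=lambda i: -logits[i])[:k]


experts = [[[1.0, 0.0]], [[0.0, 2.0]]]   # E = 2 toy expert matrices
router = [[1.0, 0.0], [0.0, 1.0]]
new_experts, new_router = upcycle(experts, router, m=2)

x = [0.3, 0.9]                            # one token's hidden state
logits = [sum(w * xi for w, xi in zip(row, x)) for row in new_router]
active = top_k(logits, k=1)               # k is unchanged by the expansion
print(len(new_experts), active)
```

The expert count doubles while `k` stays fixed, which is why per-token compute does not change. The copies start out identical, so they only become useful once continued pretraining pushes them apart.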

Copying gives a warm start. Initial loss begins well below random init, and continued pretraining then breaks symmetry so experts re-specialize. The authors add a non-uniform copy rule that picks experts to duplicate by gradient importance score. On a tight continue-pretraining budget, this widens the quality-gap closure by more than 3x.
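One way to picture a non-uniform copy rule is to hand out the new expert slots in proportion to an importance score. The sketch below uses largest-remainder rounding, which is my own illustrative choice; the source only says experts are picked by gradient importance, and how that score is computed is left as an input here.

```python
def allocate_copies(importance, n_new):
    """Split n_new extra expert slots across experts by importance score.

    importance: one non-negative score per existing expert (assumed given).
    Returns how many extra copies each expert receives.
    Proportional allocation is a hypothetical rule, not the paper's.
    """
    total = sum(importance)
    if total <= 0:
        raise ValueError("importance scores must sum to a positive value")
    quotas = [n_new * s / total for s in importance]
    copies = [int(q) for q in quotas]            # whole slots first
    leftover = n_new - sum(copies)
    # Remaining slots go to the largest fractional remainders.
    by_remainder = sorted(
        range(len(quotas)), key=lambda i: quotas[i] - copies[i], reverse=True
    )
    for i in by_remainder[:leftover]:
        copies[i] += 1
    return copies


scores = [2, 14, 3, 1]        # toy importance scores for E = 4 experts
extra = allocate_copies(scores, n_new=4)
print(extra)
```

Uniform copying would give every expert one extra copy; here the highest-scoring expert absorbs most of the new capacity and low-scoring experts get none.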

For teams holding an MoE base that need more experts, this beats a from-scratch quote. The 32% savings depends on enough continue-pretraining for the copied experts to actually diverge. Cut the budget short and the win evaporates.

Key takeaways:
- Teams with existing MoE checkpoints can grow expert count without retraining, with matched-quality runs saving 32% GPU time.
- Non-uniform copying by gradient importance widens the quality-gap closure by more than 3x over uniform copying, and matters most on tight budgets.
- The 32% number assumes enough continued pretraining for experts to re-differentiate; too short a budget loses the savings.

Source: Expert Upcycling: Shifting the Compute-Efficient Frontier of Mixture-of-Experts

03 Code Intelligence: First Real Numbers on How People Use Coding Agents

Coding-agent product teams have been judging real usage on intuition and scattered feedback. SWE-chat assembles real sessions from open repos into one dataset: 6,000 sessions, 63K user prompts, 355K tool calls, with ongoing updates promised.

A few numbers worth memorizing. 41% of sessions are pure vibe coding where the agent writes nearly all the code; 23% are sessions where the human writes everything. Usage is bimodal, not a smooth mix. Of the code the agent produces, only 44% lands in final commits, and agent-written code introduces more security vulnerabilities than human-written code. 44% of turns are users pushing back through corrections, error reports, or interruptions.

These numbers sit closer to live distribution than any curated benchmark. Treat it as infrastructure rather than a conclusion. The abstract only promises a living dataset, so the real value depends on what the research community digs out of it.

Key takeaways:
- Real usage is bimodal, not uniformly mixed; product design has to serve "fully delegated" and "human-driven" users separately.
- Only 44% of agent code gets adopted and 44% of turns are pushback, exposing a clear gap between benchmark scores and real adoption.
- This is a dataset, not an evaluation; coding-agent teams should track follow-up analyses built on top of it.

Source: SWE-chat: Coding Agent Interactions From Real Users in the Wild

04 Interpretability: Four Architectures, Same Number Representations

Four wildly different architectures — Transformer, Linear RNN, LSTM, and classic word vectors — independently learn periodic Fourier-domain features at T=2, 5, and 10 when trained on natural text. Representation research usually hunts for what each architecture does differently. This paper reports the opposite: data structure forces the same solution.

There's a second layer hiding underneath. Fourier-domain spikes are necessary but not sufficient. Whether a number's residue mod T can be read off with a linear classifier, which the paper calls geometric separability, is a separate test, and only some models pass it. The paper proves Fourier sparsity doesn't imply geometric separability, then shows data, architecture, optimizer, and tokenizer all shape the second step.
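A toy example helps separate the two levels. The sketch below builds a single synthetic feature with period 10, confirms its spectrum has one spike at that period, and then shows that the same feature cannot tell residue 1 from residue 9. It is an illustration of the distinction, not the paper's proof or its probing setup; the feature and helper names are made up for the example.

```python
import cmath
import math


def dft_magnitudes(values):
    """Magnitudes of the discrete Fourier transform for frequencies 0..N/2."""
    n = len(values)
    return [
        abs(sum(v * cmath.exp(-2j * math.pi * k * t / n) for t, v in enumerate(values))) / n
        for k in range(n // 2 + 1)
    ]


def dominant_period(values):
    """Period of the strongest non-constant frequency component."""
    mags = dft_magnitudes(values)
    k = max(range(1, len(mags)), key=lambda i: mags[i])  # skip the DC term
    return len(values) / k


N = 100
T = 10
# One synthetic feature dimension evaluated on the integers 0..N-1.
feature = [math.cos(2 * math.pi * n / T) for n in range(N)]
print(dominant_period(feature))

# Fourier-sparse, yet residues 1 and 9 land on the same value, so no
# classifier on this single feature can separate them.
collide = math.isclose(feature[1], feature[9])
print(collide)
```

Adding the matching sine component would place the ten residues at distinct points on a circle, which is one way a representation can move from "has the spike" to "is usable for classification".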

One striking detail: multi-token addition problems trigger geometric separation, while the same problem in single-token form does not. This isn't a new mechanistic finding. It carves out two resolution levels for the old question of how similar different models actually are.

Key takeaways:
- Cross-architecture convergence: four architectures all learn T=2, 5, 10 periodic representations, suggesting number representation is more data-driven than architecture-driven.
- Common patterns hide second-order differences; Fourier sparsity ≠ geometric separability, so "shared pattern" and "pattern usable for classification" are two different things.
- Data formatting matters more than expected; multi-token versus single-token addition changes whether geometric separation emerges.

Source: Convergent Evolution: How Different Language Models Learn Similar Number Representations

Also Worth Noting

Today's Observation

DR-Venus and Expert Upcycling both used the word "frontier" today, but they pushed in opposite directions. DR-Venus drives the deep-research-agent capability frontier down to 4B and edge deployment. Expert Upcycling pushes the MoE compute-efficient frontier toward larger total parameters while cutting actual training cost. Two independent paths chip at the default assumption that frontier means biggest model plus most compute. One shows small sizes can grab capabilities once thought cloud-only. The other shows big sizes don't have to pay from-scratch training prices.

For practitioners, model selection has stopped being a single track. "We use a frontier model" used to point to one lineage. Today it splits into at least two.

Concrete actions: before saying "frontier model" again, pause one second and ask whether you want the absolute capability ceiling or matched capability at lower cost. These have separated. If you have a dense or small MoE checkpoint and are weighing whether to scale, put Expert Upcycling alongside the from-scratch quote, not as an afterthought. If you're evaluating whether edge-side deep research is feasible, run DR-Venus's stage-one data recipe on a few hundred of your own trajectories before deciding.