Today's Overview

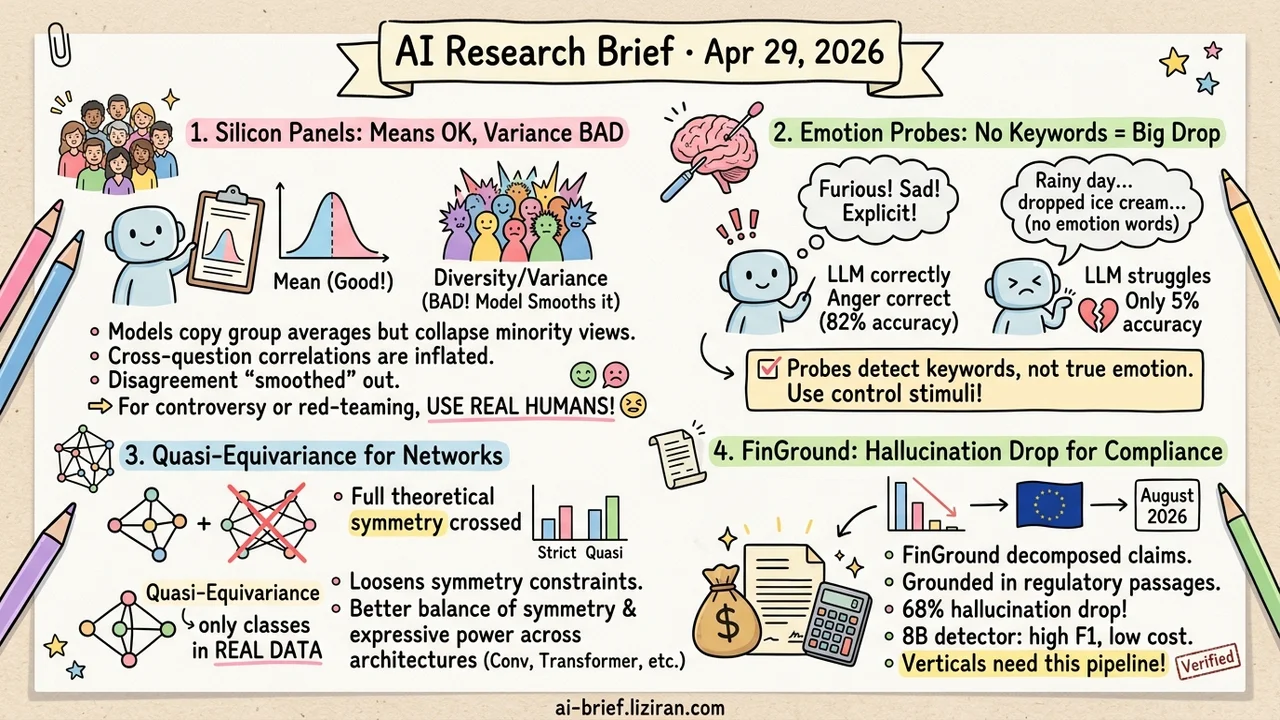

- Silicon Panels Match the Mean and Distort the Variance. Stanford used 277 professional philosophers as ground truth; seven open and closed models all replicate the aggregate distribution, but cross-question correlations come out systematically inflated and minority views collapse. Anything that depends on the shape of disagreement gets a smoothed signal.

- Emotion Probe Accuracy Drops From 82% to 5% Once Keywords Are Removed. MIT's AIPsy-Affect ships 480 paired stimuli with all emotion words surgically excised, and most published "emotion features" lose their signal under this baseline. New probing, SAE, and steering work without a keyword-free control deserves a discount.

- Metanetwork Symmetry Constraints Get Loosened a Notch. Quasi-equivariant networks abandon full theoretical symmetry and hold only on the equivalence classes that actually appear in real data, verified across feedforward, conv, and transformer architectures.

- FinGround Drops Hallucination Rate 68% by Grounding Atomic Facts to Regulatory Passages. An 8B distilled detector keeps 91.4% F1 at $0.003 per query. The EU AI Act's August 2026 deadline turns "fewer hallucinations" from product risk into compliance risk; the verify-then-ground decomposition ports cleanly to any vertical needing fact-level traceability.

Featured

01 Safety: Silicon Panels Get Means Right, Variance Wrong

Replacing real respondents with LLMs in survey panels is something the field already knows works in aggregate. Stanford confirms that part. The new contribution separates "reproducing individual stances" from "preserving the cross-question correlation structure." Using 277 professional philosophers' public PhilPeople positions as ground truth, the team compared seven open and closed models. Individual reproduction is acceptable. But the correlations between judgments come out systematically higher than reality.

The models implicitly assume that experts hold tightly coordinated positions across unrelated questions. Minority views, edge stances, and internal conflicts collapse inside the silicon panel. The pattern holds at scale on the PhilPapers 2020 Survey (N=1785), and DPO fine-tuning does not change the tendency.

Synthetic user studies, alignment moral panels, and behavioral simulations stay usable when analysis runs on means or majority votes. Anything that depends on the shape of disagreement — measuring controversy, finding long-tail preferences, adversarial red-teaming — receives a homogenized version. Real-respondent samples or explicit heterogeneity modeling become non-optional.
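The gap between "means match" and "correlation structure matches" is easy to see on a toy example. The sketch below is not the paper's evaluation code. The two panels are made-up data, and `mean_abs_correlation` is a hypothetical, deliberately crude coupling score. It only illustrates how two panels can agree on every per-question mean while differing completely in how answers co-vary.

```python
from itertools import combinations
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences (non-constant inputs)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def question_means(panel):
    """Per-question endorsement rate; each row of `panel` is one respondent."""
    return [mean(column) for column in zip(*panel)]


def mean_abs_correlation(panel):
    """Average |r| over all question pairs (hypothetical coupling score)."""
    columns = list(zip(*panel))
    pairs = list(combinations(range(len(columns)), 2))
    return sum(abs(pearson(columns[i], columns[j])) for i, j in pairs) / len(pairs)


# Toy data, invented for illustration: three yes/no questions per respondent.
# The "human" panel holds every combination of answers once, so the
# questions are uncorrelated.
human_panel = [
    (1, 1, 0), (1, 0, 1), (0, 1, 1), (0, 0, 0),
    (1, 1, 1), (1, 0, 0), (0, 1, 0), (0, 0, 1),
]
# The "silicon" panel answers all-yes or all-no: same means, lockstep answers.
silicon_panel = [
    (1, 1, 1), (1, 1, 1), (1, 1, 1), (1, 1, 1),
    (0, 0, 0), (0, 0, 0), (0, 0, 0), (0, 0, 0),
]

print("human means:  ", question_means(human_panel))
print("silicon means:", question_means(silicon_panel))
print("human coupling:  ", mean_abs_correlation(human_panel))
print("silicon coupling:", mean_abs_correlation(silicon_panel))
```

Any analysis that reads only `question_means` cannot tell these panels apart, which is why a correlation or variance check belongs next to the aggregate number.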

Key takeaways:

- Silicon panels are trustworthy on means, untrustworthy on variance; confirm which signal you actually need before you commit.
- Disagreement-driven analysis (controversy, long-tail preferences, red-teaming) requires real respondents or explicit heterogeneity modeling.
- Neither DPO nor model scale fixes this; it looks like a structural side effect of the training objective itself.

Source: The Collapse of Heterogeneity in Silicon Philosophers

02 Interpretability: Are Probes Detecting Anger or the Word "Furious"?

Mechanistic interpretability work on emotion typically uses stimuli containing explicit emotion words — "I am furious." When the probe fires, is the model recognizing anger or recognizing the word "furious"? MIT's AIPsy-Affect provides 480 clinical stimuli built to settle this. 192 use pure narrative scenes to elicit Plutchik's eight basic emotions, with all emotion keywords surgically removed. Another 192 are matched neutral controls, identical in structure but with the affective content stripped.

Three NLP defense tests confirm the design. Bag-of-words sees only situational vocabulary. A contextual classifier still detects the presence of emotion (p<10⁻¹⁵), but classification accuracy lands at just 5.2% on the keyword-free set, against 82.5% on a keyword-containing control. Past "emotion features" that fired reliably on keyword-rich stimuli now have a falsifier.
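A keyword-free control can be wired into an evaluation with very little code. The sketch below is an assumption-laden illustration, not AIPsy-Affect's tooling. The four example sentences, the tiny `KEYWORD_TO_LABEL` lexicon, and the `lexical_classifier` stand-in are all invented here. It splits an evaluation set by keyword presence and reports accuracy per condition, so a purely lexical detector shows up as a collapse on the keyword-free half.

```python
import re

# Hypothetical mini-lexicon; a real control would use a full emotion word list.
KEYWORD_TO_LABEL = {
    "furious": "anger",
    "angry": "anger",
    "sad": "sadness",
    "afraid": "fear",
}


def find_keywords(text):
    """Return the lexicon words that appear as whole tokens in `text`."""
    tokens = re.findall(r"[a-z']+", text.lower())
    return [token for token in tokens if token in KEYWORD_TO_LABEL]


def lexical_classifier(text):
    """Stand-in model that only reads keywords (worst case for leakage)."""
    hits = find_keywords(text)
    return KEYWORD_TO_LABEL[hits[0]] if hits else "unknown"


def accuracy_by_condition(examples, classify):
    """Accuracy split by whether the stimulus contains an emotion keyword."""
    buckets = {"keyword": [], "keyword_free": []}
    for text, label in examples:
        condition = "keyword" if find_keywords(text) else "keyword_free"
        buckets[condition].append(classify(text) == label)
    return {name: sum(hits) / len(hits) for name, hits in buckets.items() if hits}


# Invented stimuli: two with explicit emotion words, two narrative-only.
EXAMPLES = [
    ("I am furious that he lied to me.", "anger"),
    ("She felt sad after the call.", "sadness"),
    ("He read the message twice, then snapped the pencil in half.", "anger"),
    ("She set two plates on the table before remembering.", "sadness"),
]

result = accuracy_by_condition(EXAMPLES, lexical_classifier)
print(result)
```

Swapping `lexical_classifier` for a probe or SAE-feature readout keeps the same report shape: one headline number per condition, side by side.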

Key takeaways:

- Running linear probing, SAEs, or steering vectors on keyword-free paired stimuli cleanly separates lexical signal from genuine emotion representation.
- Existing emotion-probing results need to be rerun through this battery; the circuit you found may be a vocabulary circuit.
- Adding this control is now a low-cost methodological obligation for new work in the area.

03 Architecture: Loosen Metanetwork Symmetry, Gain Expressiveness

Treating neural network weights as input has an unavoidable problem. The same function corresponds to many different parameter sets, and feeding raw weights treats equivalent networks as different samples. Earlier work enforces strict equivariance, meaning consistent behavior under every theoretical symmetry, at the cost of sparse structure and reduced expressive power. This paper introduces quasi-equivariance: require consistency only across the equivalence classes that actually appear in real data, not across the full theoretical symmetry group. The result is sufficient for engineering purposes and computationally manageable.

The trade lands well across three architecture families. Feedforward, convolutional, and transformer all show better balance between symmetry preservation and expressive power than the strict-equivariance baselines.
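The underlying symmetry problem is easy to reproduce. The sketch below does not implement quasi-equivariance. It only demonstrates the best-known weight-space symmetry, hidden-unit permutation, on a tiny tanh MLP with made-up weights. The helper names (`mlp`, `permute_hidden`, `flatten`) are illustrative.

```python
import math


def mlp(x, w1, b1, w2, b2):
    """One-hidden-layer tanh MLP with scalar output; w1 has one row per hidden unit."""
    hidden = [
        math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
        for row, b in zip(w1, b1)
    ]
    return sum(w * h for w, h in zip(w2, hidden)) + b2


def permute_hidden(w1, b1, w2, perm):
    """Reorder hidden units: rows of w1, entries of b1, and entries of w2 together."""
    return [w1[i] for i in perm], [b1[i] for i in perm], [w2[i] for i in perm]


def flatten(w1, b1, w2):
    """Naive 'raw weights as input' encoding of the network."""
    return [w for row in w1 for w in row] + list(b1) + list(w2)


# Made-up weights for a 2-input, 3-hidden-unit network.
w1 = [[0.5, -1.0], [1.5, 0.25], [-0.75, 2.0]]
b1 = [0.1, -0.2, 0.3]
w2 = [1.0, -2.0, 0.5]
b2 = 0.05

w1_p, b1_p, w2_p = permute_hidden(w1, b1, w2, perm=[2, 0, 1])

x = [0.3, -0.7]
y = mlp(x, w1, b1, w2, b2)
y_permuted = mlp(x, w1_p, b1_p, w2_p, b2)

# Same function (up to floating-point summation order), different raw encoding.
print("outputs match:    ", math.isclose(y, y_permuted, rel_tol=1e-12))
print("raw weights match:", flatten(w1, b1, w2) == flatten(w1_p, b1_p, w2_p))
```

A metanetwork that reads `flatten(...)` directly sees two unrelated inputs here, which is the failure that both strict and quasi-equivariant designs are trying to avoid.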

Key takeaways:

- Quasi-equivariance trades full theoretical symmetry for stronger expressiveness on the structures that actually show up.
- The approach applies cleanly to all three mainstream architecture families, with low porting cost.
- Teams working on model merging, weight editing, and hypernetworks should compare their own symmetry choices against this.

Source: Quasi-Equivariant Metanetworks

04 Retrieval: When Every Fact Has to Be Traceable

The EU AI Act's high-risk deadline lands in August 2026. In finance, law, and medicine, "the model made up a number" stops being a product defect and becomes a compliance event. FinGround's approach isn't another RAG layer. It decomposes answers into atomic claims, each verified against a specific passage in the regulatory source. Computational claims also run through a formula-reconstruction path, since 43% of errors are arithmetic — pure semantic matching never catches them.

With retrieval held flat, hallucination rate drops 68% below the strongest baseline. An 8B distilled detector keeps 91.4% F1 at $0.003 per query. The financial framing is mostly cosmetic. What ports cleanly is the verify-then-ground decomposition itself; any vertical that demands fact-level traceability can pick up the same pipeline.
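The verify-then-ground idea with type-routed paths can be sketched in a few dozen lines. Everything below is an assumption made for illustration and not FinGround's implementation: the `Claim` structure, the two toy passages, the `gross_margin` formula, and the exact-token matching rule are all hypothetical. A real system would use a learned verifier and would extract claims automatically. The point is only the routing: textual claims are grounded to a passage, computational claims are recomputed.

```python
import math
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical source passages, keyed by an invented passage id.
PASSAGES = {
    "reg-1": "Institutions must maintain a minimum capital ratio of 8 percent.",
    "fin-1": "Revenue was 120 and cost of sales was 90.",
}

# Hypothetical formula table for the computational path.
FORMULAS = {"gross_margin": lambda revenue, cost: (revenue - cost) / revenue}


@dataclass
class Claim:
    text: str
    kind: str  # "textual" or "computational"
    formula: Optional[str] = None
    operands: tuple = ()
    stated_value: Optional[float] = None


def tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def ground_textual(claim):
    """Id of the first passage containing every claim token, else None."""
    for passage_id, passage in PASSAGES.items():
        if tokens(claim.text) <= tokens(passage):
            return passage_id
    return None


def check_computational(claim):
    """Recompute the figure from source operands and compare to the stated value."""
    recomputed = FORMULAS[claim.formula](*claim.operands)
    return math.isclose(recomputed, claim.stated_value, abs_tol=1e-9)


def verify(claim):
    """Route each atomic claim to the path that matches its type."""
    if claim.kind == "computational":
        return check_computational(claim)
    return ground_textual(claim) is not None


CLAIMS = [
    Claim("minimum capital ratio of 8 percent", "textual"),
    Claim("minimum capital ratio of 12 percent", "textual"),
    # Operands come from passage fin-1; the stated 30% is an arithmetic error.
    Claim("gross margin was 30 percent", "computational",
          formula="gross_margin", operands=(120, 90), stated_value=0.30),
]

for claim in CLAIMS:
    print(f"{verify(claim)!s:5}  {claim.text}")
```

The third claim is the case semantic matching misses: every word is plausible and the operands are in the source, but the recomputed margin is 0.25.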

Key takeaways:

- The compliance deadline turns "reduce hallucinations" from product wishlist into regulatory requirement; expect more work in this direction.
- Atomic-claim verification with type-routed paths fits vertical scenarios better than generic hallucination detection.
- Legal and medical RAG teams can borrow this pipeline structure directly, without waiting for a domain-specific version.

Source: FinGround: Detecting and Grounding Financial Hallucinations via Atomic Claim Verification

Also Worth Noting

Today's Observation

Silicon Philosophers and AIPsy-Affect are unrelated papers (one on philosophy-domain silicon panels, one on mechanistic interpretability of emotion), but they land in the same shape. A measurement that previously looked like it worked collapses under a finer metric. Silicon panels match aggregate opinion on the coarse view, then heterogeneity flattens. Emotion probes fire reliably on keyword-rich stimuli, then most of the signal disappears once keywords come out. Two papers do not make a trend, but they do share a methodological lesson.

For practitioners: any "this method works" claim that relies on a single aggregate metric, or only on common stimuli, may be carrying hidden measurement bias. Not unusable, but you don't know whether you're measuring real signal or leakage. A practical checklist: (1) when reporting an aggregate metric, report a distribution-shape or variance metric alongside; (2) take any conclusion built on common stimuli and rerun it on a control set with leakage features deliberately removed; (3) put control experiments at the same prominence as headline results, not buried in the appendix. Next time you take over or review a probing, eval, or simulation result, lead with: "if the leakage signal were removed, would the headline still hold?" That one question saves a lot of rework.