Today's Overview

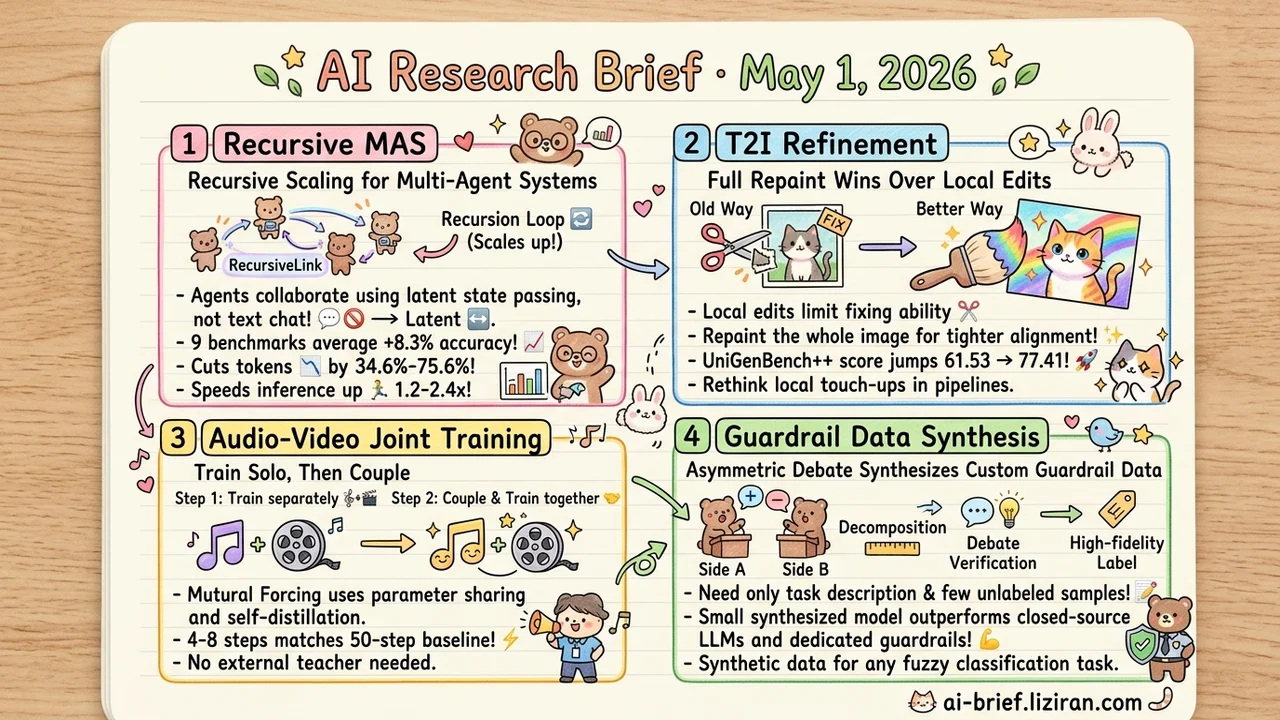

- Recursive Scaling Moves From Single Models to Multi-Agent Systems. RecursiveMAS casts the entire multi-agent setup as one latent-space recursive computation, posting +8.3% accuracy on average across 9 benchmarks while cutting tokens 34.6%-75.6% and speeding inference 1.2-2.4x.

- T2I Refinement Works Better When You Repaint the Whole Image. Editing-based pipelines compress the modification space too aggressively. A full repaint lifts UniGenBench++ from 61.53 to 77.41, calling the default "local refinement" path into question.

- Audio-Video Joint Training: Train Solo, Then Couple. Mutual Forcing uses two-stage training plus self-distillation, matching a 50-step baseline at 4-8 steps and dropping the external teacher model entirely.

- Asymmetric Debate Synthesizes Custom Guardrail Data. BARRED needs only a task description and a few unlabeled samples to outperform closed-source LLMs and dedicated guardrails. The recipe ports to any fuzzy-boundary classification task.

Featured

01 Multi-Agent Systems Get a Scaling Knob — Recursion

Looped and recursive language models opened a new scaling axis: iterating the same model over its latent state to deepen reasoning. RecursiveMAS extends that idea from single models to multi-agent systems. A lightweight RecursiveLink module passes latent state between agents, with the entire multi-agent setup cast as one unified latent-space recursive computation.
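To make the "recursion depth as a knob" idea concrete, here is a toy sketch. It is not the paper's implementation: `make_agent`, `recursive_link`, and `run_recursive_mas` are hypothetical names, each agent is a stand-in nonlinear map over a small latent vector, and the link is an arbitrary mixing step chosen only for illustration. The point is the control flow: the whole agent chain is looped `depth` times, and state moves between agents as a latent vector rather than as decoded text.

```python
import math

def make_agent(scale):
    """Hypothetical agent: a fixed nonlinear map over a latent vector."""
    def agent(latent):
        return [math.tanh(scale * x) for x in latent]
    return agent

def recursive_link(latent, weight=0.5):
    """Hypothetical link module: blends each coordinate with the vector mean.
    The real RecursiveLink is not described in the abstract."""
    mean = sum(latent) / len(latent)
    return [(1 - weight) * x + weight * mean for x in latent]

def run_recursive_mas(agents, latent, depth):
    """Loop the full agent chain `depth` times without decoding to text."""
    hops = 0
    for _ in range(depth):
        for agent in agents:
            latent = recursive_link(agent(latent))
            hops += 1  # one latent hand-off between agents
    return latent, hops

agents = [make_agent(0.9), make_agent(1.1), make_agent(1.0)]
start = [0.2, -0.4, 0.8]

shallow, shallow_hops = run_recursive_mas(agents, start, depth=1)
deep, deep_hops = run_recursive_mas(agents, start, depth=4)
print(shallow_hops, deep_hops)  # 3 12
```

In this toy, raising `depth` adds compute without adding agents, parameters, or context, which is the sense in which recursion acts as a separate scaling axis.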

The framing matters because multi-agent has lacked a clean scaling knob. Parameters, context, and agent count all feel like the wrong axis. Recursion already worked at the single-model level, so the open question was whether agent collaboration could ride the same lever.

Across 9 benchmarks (math, science, medicine, search, code) and 4 collaboration modes, accuracy rises 8.3% on average. Tokens drop 34.6%-75.6%. Inference speeds up 1.2-2.4x. Latent-space recursion skips the token round-trips that text-based dialogue requires, which matters more for production deployment than the raw accuracy gain. The abstract doesn't break results down by scenario or explain how RecursiveLink moves latent state between heterogeneous agents, so whether the single-model recipe really transfers to multi-agent setups has to be checked against the full paper.
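A percentage reduction is easy to misread, so it helps to convert the reported token range into a "times fewer tokens" factor. The helper below is a small illustrative calculation; `token_ratio` is a name introduced here, and the only inputs are the two percentages quoted above.

```python
def token_ratio(reduction_pct):
    """Convert a percentage token reduction into an 'N times fewer' factor."""
    return 1.0 / (1.0 - reduction_pct / 100.0)

low = token_ratio(34.6)
high = token_ratio(75.6)
print(f"{low:.2f}x to {high:.2f}x fewer tokens")  # 1.53x to 4.10x fewer tokens
```

So the reported savings correspond to roughly 1.5x to 4.1x fewer tokens than the text-based baseline.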

Key takeaways:

- Multi-agent systems now have an explicit scaling axis: recursion depth.

- Latent-space state passing replaces text-based multi-turn dialogue and cuts token cost by 34.6%-75.6% in the reported results.

- Teams building multi-agent frameworks should track the RecursiveLink implementation. That module is where this transfer succeeds or fails.

Source: Recursive Multi-Agent Systems

02 T2I Refinement: Don't Edit Locally, Repaint Whole

Unified multimodal models doing T2I refinement default to refinement-via-editing. The model produces an editing instruction, modifies only the misaligned regions, and preserves the rest pixel-for-pixel. This paper inverts the assumption. Editing instructions describe changes coarsely, and pixel-level preservation compresses the modification space until the fix can't fully land.

The new approach drops the editing instruction entirely. Initial-image semantic tokens plus the target prompt drive a full repaint. All three benchmarks improve. UniGenBench++ shows the largest gain, rising from 61.53 to 77.41. Geneval moves from 0.78 to 0.91, also a clear step.
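The two benchmarks are reported on different scales (UniGenBench++ on a 0-100 scale here, Geneval on 0-1), so absolute deltas are not directly comparable. The short calculation below puts both on a relative footing. It uses only the four numbers quoted above; `gain` is a helper name introduced for illustration.

```python
def gain(before, after):
    """Return (absolute delta, relative improvement in percent)."""
    absolute = after - before
    relative_pct = 100.0 * absolute / before
    return absolute, relative_pct

scores = {"UniGenBench++": (61.53, 77.41), "Geneval": (0.78, 0.91)}

for name, (before, after) in scores.items():
    absolute, relative = gain(before, after)
    print(f"{name}: +{absolute:.2f} absolute, +{relative:.1f}% relative")
# UniGenBench++: +15.88 absolute, +25.8% relative
# Geneval: +0.13 absolute, +16.7% relative
```

On a relative basis UniGenBench++ still shows the bigger improvement, about 26% versus about 17% for Geneval.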

The conclusion is simple. Refinement doesn't have to mean small edits. Giving the model more room to modify produces tighter alignment. Teams running image product pipelines that default to "local touch-ups" as post-processing should reconsider whether that path still wins.

Key takeaways:

- T2I refinement doesn't require small edits. More modification room produces better alignment.

- The bottleneck on editing-based pipelines isn't model capability. Pixel-level preservation chokes the modification space.

- If your image pipeline relies on local touch-ups, reevaluate whether that default still beats a full repaint.

03 Audio-Video Joint Training: Train Solo, Then Couple

The naive approach to joint audio-video generation is training from scratch on paired data. Mutual Forcing reverses the order. Each generator trains to maturity separately, then both couple into a unified joint training stage. Joint optimization happens last, not first.

Streaming generation deserves more attention here. Prior recipes like Self-Forcing trained a bidirectional teacher first and then distilled it into a causal generator over multiple stages. Mutual Forcing skips that. It runs directly on an autoregressive model. Few-step and many-step modes share weights. Many-step boosts few-step through self-distillation. Few-step generates historical context during training to tighten train-inference consistency. Both modes reinforce each other.

This eliminates the external teacher, lets the model learn directly from real paired data, and makes training sequence length more flexible. The headline number — 4-8 steps matching a 50-step baseline — looks strong. Long-video audio-visual sync still needs to be judged from demos.
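As a rough sense of scale for the step counts, the snippet below computes how many times fewer sampling steps the few-step mode runs compared with the 50-step baseline. It assumes every step costs about the same, which the source does not state, so treat the result as an upper bound on the speedup from step count alone. `step_reduction` is a name introduced here.

```python
def step_reduction(baseline_steps, few_steps):
    """How many times fewer sampling steps the few-step mode runs.
    Assumes equal cost per step (an assumption, not a reported figure)."""
    return baseline_steps / few_steps

for steps in (4, 8):
    print(f"{steps} steps: {step_reduction(50, steps):.2f}x fewer than 50")
# 4 steps: 12.50x fewer than 50
# 8 steps: 6.25x fewer than 50
```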

Key takeaways:

- A useful order for multimodal joint training: mature each modality alone before coupling. This avoids tearing the model between two objectives from step one.

- Self-distillation works through parameter sharing. Many-step teaches few-step, and few-step feeds historical context back. No external teacher is needed.

- The 4-8-step parity with 50 steps is a strong efficiency number. Long-video sync quality still has to be judged from demos.

Source: Mutual Forcing: Dual-Mode Self-Evolution for Fast Autoregressive Audio-Video Character Generation

04 Asymmetric Debate Beats Paying for Labels

For fuzzy-boundary classification tasks, the expensive part is never the model. It's annotation. BARRED's core mechanism is asymmetric debate. The policy's domain space is decomposed into multiple dimensions for coverage, then two agents argue from opposite sides of the boundary about whether a sample should be classified positive or negative. Once disagreement resolves, the surviving label is high-fidelity.

A task description and a small unlabeled sample set are enough to synthesize training data sufficient for a small model. Across multiple custom policies, the result outperforms closed-source LLMs and specialized guardrail models. Ablations show dimension decomposition and debate verification both matter. Decomposition delivers diversity. Debate delivers label correctness.
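The two ingredients, dimension decomposition for coverage and debate for label verification, can be sketched as a small pipeline. This is a toy under loud assumptions, not BARRED's actual procedure: the dimensions, the scoring rules, the `margin` threshold, and every function name are hypothetical. The two debaters are keyword stubs standing in for LLM calls, and dropping samples where neither side wins clearly is an illustrative choice.

```python
from itertools import product

# Hypothetical policy dimensions; a real run would derive them from the task description.
DIMENSIONS = {
    "topic": ["refund request", "account takeover"],
    "tone": ["polite", "threatening"],
}

def synthesize_candidates(dimensions):
    """Cross the dimension values so each region of the policy space is covered."""
    keys = list(dimensions)
    return [dict(zip(keys, combo)) for combo in product(*dimensions.values())]

def argue_violation(sample):
    """Stub debater arguing the sample violates the policy (an LLM call in practice)."""
    score = 0.0
    if sample["topic"] == "account takeover":
        score += 0.6
    if sample["tone"] == "threatening":
        score += 0.3
    return score

def argue_compliance(sample):
    """Stub debater arguing the sample complies with the policy."""
    score = 0.0
    if sample["topic"] == "refund request":
        score += 0.6
    if sample["tone"] == "polite":
        score += 0.3
    return score

def debate_label(sample, margin=0.5):
    """Keep a label only when one side wins by at least `margin`; otherwise return None."""
    violation = argue_violation(sample)
    compliance = argue_compliance(sample)
    if violation - compliance >= margin:
        return "violation"
    if compliance - violation >= margin:
        return "compliant"
    return None  # debate unresolved, sample is not used for training

candidates = synthesize_candidates(DIMENSIONS)
labeled = [(s, debate_label(s)) for s in candidates]
dataset = [(s, label) for s, label in labeled if label is not None]
print(len(candidates), len(dataset))  # 4 2
```

In this toy, the two mixed cases (a polite takeover attempt, a threatening refund request) are exactly the boundary samples where the debate does not resolve, which is where a real debate round would need to do the most work.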

The paper's setting is guardrails, but the underlying recipe is more general. Using LLMs to manufacture their own labeled training data should port to any classification task with fuzzy boundaries and high annotation cost.

Key takeaways:

- Custom policies no longer force a choice between "feed a large model" and "train a classifier." Synthetic data makes the small-model path workable.

- Asymmetric debate adds the most value on boundary samples. Generic safety datasets are thin in exactly that region.

- Swap guardrails for your own classification task (content moderation, intent detection, compliance filtering) and the methodology transfers.

Source: BARRED: Synthetic Training of Custom Policy Guardrails via Asymmetric Debate