Today's Overview

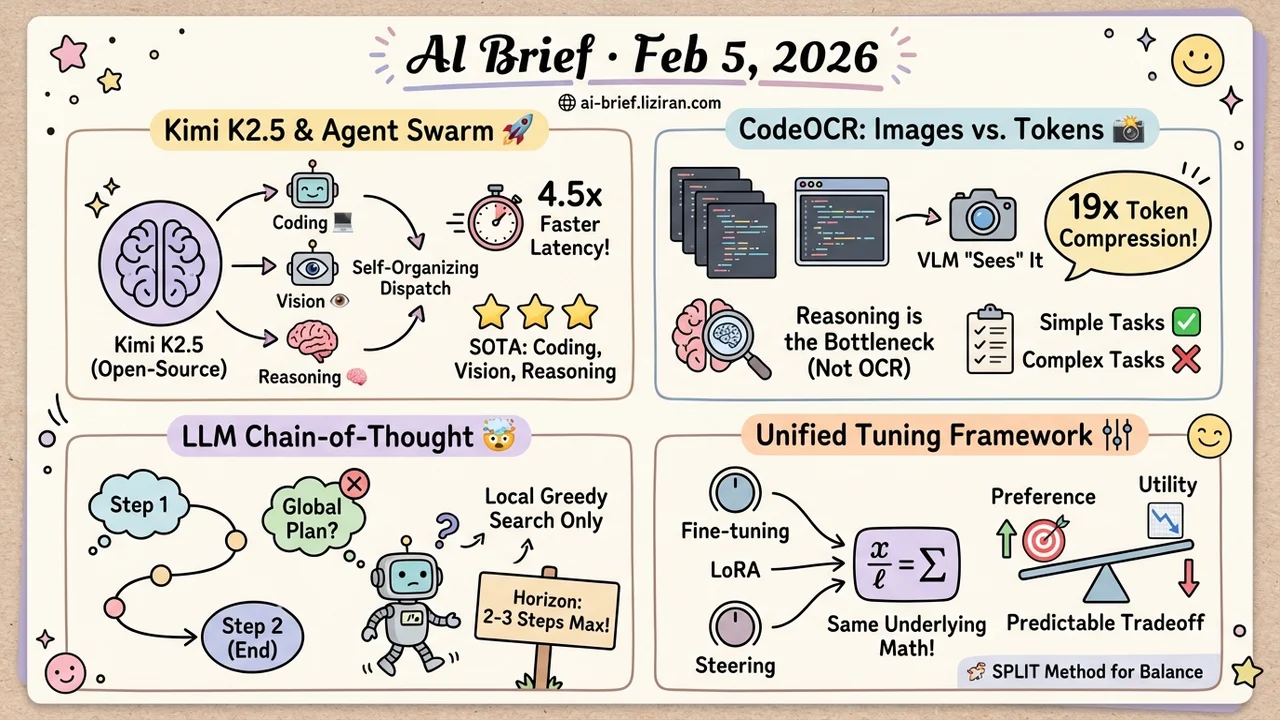

- Kimi K2.5 Goes Open-Source With Agent Swarm, Cutting Multi-Agent Latency by 4.5x — Moonshot AI releases an open-weight multimodal agent model with joint text-vision training and a self-organizing parallel dispatch framework, hitting SOTA across coding, vision, and reasoning.

- Feed source code screenshots to a vision model: 19x token compression with near-zero accuracy loss on simple tasks. CodeOCR finds the bottleneck isn't OCR quality but VLM code semantic reasoning.

- LLM Chain-of-Thought has no global planning ability — probing experiments reveal models can only "see" 2-3 steps ahead; long reasoning chains run on local greedy search, not foresight.

- Fine-tuning, LoRA, and activation steering look different, but a Zhejiang University team finds all three share the same underlying math. A predictable preference-utility tradeoff governs them all.

Featured

01 Agent Agent Swarm Makes Orchestration Self-Organizing

A core bottleneck of agent systems is orchestration — how do sub-agents divide work, run in parallel, and recover from failures? Most solutions rely on hand-defined DAGs or central schedulers.

Kimi K2.5 takes a different route. Its Agent Swarm framework lets the model decompose complex tasks into heterogeneous sub-problems and dynamically dispatch them across agents for parallel execution, reducing latency by up to 4.5x over single-agent baselines.
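To make the latency argument concrete, here is a minimal sketch of why dispatching independent sub-problems in parallel beats running them one after another. It is not Kimi K2.5's implementation: in Agent Swarm the model itself decides the decomposition, whereas here the sub-task list is hard-coded, and `run_subagent`, `SUBTASKS`, and the sleep durations are hypothetical stand-ins for real agent calls.

```python
import asyncio
import time


async def run_subagent(name: str, seconds: float) -> str:
    """Hypothetical sub-agent: sleeps to simulate work, then reports back."""
    await asyncio.sleep(seconds)
    return f"{name}: done"


async def run_sequential(tasks: dict[str, float]) -> list[str]:
    """Single-agent baseline: handle each sub-problem one after another."""
    return [await run_subagent(name, secs) for name, secs in tasks.items()]


async def run_parallel(tasks: dict[str, float]) -> list[str]:
    """Swarm-style dispatch: start every sub-agent at once and wait for all."""
    coros = (run_subagent(name, secs) for name, secs in tasks.items())
    return list(await asyncio.gather(*coros))


def timed(coro) -> tuple[list[str], float]:
    """Run a coroutine to completion and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coro)
    return result, time.perf_counter() - start


# Assumed sub-problems and simulated durations (illustrative only).
SUBTASKS = {"search": 0.05, "code": 0.05, "vision": 0.05, "summarize": 0.05}

if __name__ == "__main__":
    _, seq_t = timed(run_sequential(SUBTASKS))
    _, par_t = timed(run_parallel(SUBTASKS))
    print(f"sequential: {seq_t:.2f}s, parallel: {par_t:.2f}s")
```

With four equally sized, fully independent sub-tasks, the parallel run takes roughly as long as the slowest sub-task rather than the sum of all of them. Real workloads have dependencies and uneven sub-task sizes, so the achievable speedup depends on how cleanly the task decomposes.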

The model itself is a multimodal agent model trained via joint text-vision optimization: joint pre-training, zero-vision SFT (text-only SFT that still improves vision capability), and joint RL. It reports state-of-the-art results across coding, vision, reasoning, and agentic benchmarks. The weights are open.

Key takeaways:

- Agent Swarm is self-organizing orchestration without hand-crafted workflows

- Joint text-vision training shows multimodal capabilities reinforce rather than hinder each other

- Open weights mean the community can reproduce and iterate immediately

Source: Kimi K2.5: Visual Agentic Intelligence

02 Code Intelligence Code as Screenshots: 19x Fewer Tokens

LLMs processing source code face a straightforward problem: more code means more tokens, and cost scales linearly. CodeOCR proposes a counterintuitive alternative — render code as images and let vision-language models "see" it instead of "reading" it. A single screenshot compresses the same content to 1/19th the token count.
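As a back-of-the-envelope illustration of that ratio, the sketch below converts a text-token count into an estimated image-mode token count. It assumes the 19x figure holds as a constant, which is a simplification: the ratio achieved in practice will depend on rendering resolution and on how a given VLM tokenizes images. The function names are hypothetical.

```python
import math


def image_mode_tokens(text_tokens: int, compression_ratio: float = 19.0) -> int:
    """Estimate the tokens needed in image mode, rounding up.

    Assumes a fixed compression ratio (19x is the headline figure).
    """
    return math.ceil(text_tokens / compression_ratio)


def token_savings(text_tokens: int, compression_ratio: float = 19.0) -> float:
    """Fraction of tokens saved by switching from text to image input."""
    return 1 - image_mode_tokens(text_tokens, compression_ratio) / text_tokens


if __name__ == "__main__":
    text_tokens = 95_000  # assumed size of a codebase excerpt, in text tokens
    print(image_mode_tokens(text_tokens))       # 5000
    print(f"{token_savings(text_tokens):.1%}")  # 94.7%
```

Under this assumption, a 95,000-token excerpt would need about 5,000 tokens as screenshots, a saving of roughly 95%.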

Testing 14 mainstream VLMs across 12 code understanding tasks, the team finds that image mode matches text accuracy on simple tasks (code summarization, clone detection) but falls noticeably short on tasks requiring precise reasoning (e.g., vulnerability detection).

The bottleneck isn't OCR quality — it's the vision model's ability to reason about code semantics.

Key takeaways:

- 19x token compression via the visual route is attractive for massive codebases

- Current VLM code reasoning is the bottleneck, not recognition accuracy

- Teams building code analysis tools should watch how this visual path complements the text path

Source: CodeOCR: On the Effectiveness of Vision Language Models in Code Understanding

03 Reasoning CoT Plans Only 2-3 Steps Ahead

The common assumption is that LLMs writing long reasoning chains are "planning" — mapping out an overall strategy, then executing step by step. This study tests that assumption directly by probing the model's internal states for planning horizon.

The finding is sobering: latent planning during CoT generation covers only the next 2-3 steps, nowhere near global planning. Those seemingly well-structured reasoning chains are closer to local greedy search — each step picks the locally best move without a global blueprint.

This also explains why LLMs routinely go off-track on tasks requiring long-range planning.
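A toy example shows how a short planning horizon can send a search off-track. This is not the paper's probing method; it is a hand-built graph (the `GRAPH` rewards and node names are invented for illustration) where a planner that looks only 2-3 steps ahead commits to a branch that pays off early, while a planner with a longer horizon finds the better route.

```python
# Toy graph: each node maps to {next_node: reward}. Branch A pays early,
# branch B pays late. All values are invented for illustration.
GRAPH = {
    "start": {"A": 5, "B": 1},
    "A": {"A1": 1},
    "A1": {"A2": 1},
    "A2": {"end_a": 1},
    "B": {"B1": 1},
    "B1": {"B2": 1},
    "B2": {"end_b": 20},
    "end_a": {},
    "end_b": {},
}


def best_value(node: str, depth: int) -> int:
    """Best cumulative reward reachable from `node` within `depth` steps."""
    if depth == 0 or not GRAPH[node]:
        return 0
    return max(r + best_value(nxt, depth - 1) for nxt, r in GRAPH[node].items())


def rollout(start: str, horizon: int) -> tuple[list[str], int]:
    """Walk the graph, choosing each move by looking `horizon` steps ahead."""
    node, total, path = start, 0, [start]
    while GRAPH[node]:
        options = GRAPH[node]
        # Score each move by its reward plus the best it can see beyond it.
        nxt = max(options, key=lambda n: options[n] + best_value(n, horizon - 1))
        total += options[nxt]
        node = nxt
        path.append(node)
    return path, total


if __name__ == "__main__":
    for horizon in (2, 3, 4):
        path, total = rollout("start", horizon)
        print(horizon, " -> ".join(path), total)
```

With a horizon of 2 or 3 the planner takes branch A for a total reward of 8; only at horizon 4 does it see the late payoff on branch B and reach 23. Each individual step is locally sensible, yet the chain as a whole misses the better plan.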

Key takeaways:

- CoT's planning ability has been overestimated; the actual horizon is just 2-3 steps

- Chain quality depends on local decision quality, not global foresight

- For prompt engineering and agent design: break problems into short local decision chains externally instead of relying on end-to-end model planning

Source: No Global Plan in Chain-of-Thought: Uncover the Latent Planning Horizon of LLMs

04 Interpretability Fine-Tuning, LoRA, and Steering Are One Thing

The tools for controlling LLM behavior keep multiplying: full fine-tuning, LoRA low-rank adaptation, activation steering. These are usually studied as separate techniques. A Zhejiang University team shows they share a unified framework: all three are dynamic weight updates triggered by a control signal, expressible in the same mathematical form.

Within this framework, the team introduces a preference-utility analysis. Preference measures how far the model shifts toward the target concept; utility measures how coherent the output remains. The two trade off predictably: stronger control pushes preference up but utility down. From the activation manifold perspective, utility drops mainly when intervention pushes representations off the model's effective generation manifold.
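One way to see how the three methods could share a form is to write each as a change to a single linear layer. The sketch below uses the standard textbook definitions of each technique, not the paper's own notation; the symbols $\Delta W$, $\Delta b$, $B$, $A$, $\alpha$, $r$, $\lambda$, and $v$ are assumptions introduced here for illustration.

```latex
% Base layer
h = W x + b

% Full fine-tuning: a learned dense update to the weights
h' = (W + \Delta W)\, x + b

% LoRA: the update is constrained to rank r
h' = \left(W + \tfrac{\alpha}{r} B A\right) x + b,
\qquad B \in \mathbb{R}^{d \times r},\; A \in \mathbb{R}^{r \times k}

% Activation steering: add a scaled direction v to the activation,
% which is equivalent to a bias update \Delta b = \lambda v
h' = W x + b + \lambda v

% Common form covering all three
h' = (W + \Delta W)\, x + (b + \Delta b)
```

In this reading, the methods differ in which of $\Delta W$ and $\Delta b$ is nonzero and how it is parameterized, and the strength of the intervention (the size of the update, or $\lambda$ for steering) is the knob that trades preference against utility.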

Building on this analysis, the team's SPLIT method improves preference while better preserving utility.

Key takeaways:

- The mathematical unification makes cross-method comparison and selection systematic

- The preference-utility tradeoff is predictable, removing the need to tune by feel each time

- Teams working on alignment and behavior control now have a framework for evaluating different intervention strategies side by side

Source: Why Steering Works: Toward a Unified View of Language Model Parameter Dynamics

Also Worth Noting

Today's Observation

Today's papers share a common thread: dissolving artificial boundaries between techniques. Kimi K2.5 breaks the wall between text and vision training. CodeOCR breaks the assumption that code must be processed as text. Why Steering Works breaks the theoretical divide between model control methods. UniReason breaks the task boundary between generation and editing. And the CoT planning horizon finding serves as a reality check — model capability boundaries need honest assessment too. Agent teams should take particular note: rather than hoping the model will plan long-range on its own, design architectures that decompose planning into 2-3-step local decision chains.