Today's Overview

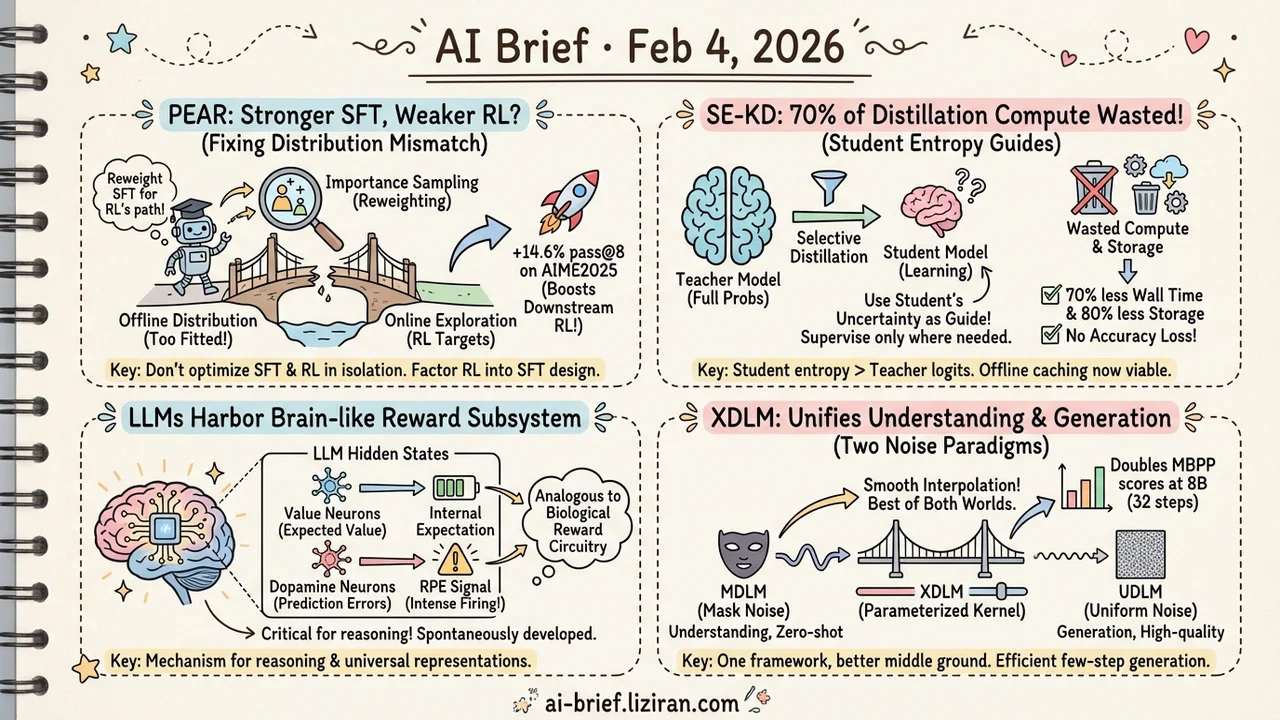

- Stronger SFT, weaker RL? PEAR uses importance sampling to reweight the SFT loss for downstream RL, boosting pass@8 by up to 14.6% on AIME2025.

- 70% of knowledge distillation compute is wasted — SE-KD uses the student model's own entropy to select distillation positions, cutting wall time by 70% and storage by 80% with no accuracy loss.

- LLMs harbor a brain-like reward subsystem in their hidden states — Stanford finds value neurons encoding expected value and dopamine neurons encoding prediction errors.

- Discrete diffusion models no longer have to choose between understanding and generation — XDLM unifies two noise paradigms, doubling MBPP scores at 8B scale in 32 steps.

Featured

01 Training Better SFT Makes Worse RL Models

This may be one of the most counterintuitive phenomena in training: a carefully optimized SFT model, after identical RL training, underperforms a model that started from a weaker SFT checkpoint.

PEAR traces the cause. Standard SFT trains on offline data, but RL explores online. The better your SFT, the more tightly the model fits the offline distribution, and the further it drifts from what RL will actually explore. PEAR fixes this at the SFT stage by reweighting the loss with importance sampling, biasing training toward the distributions RL will visit. It operates at token, block, and sequence granularity with minimal overhead.
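To make "importance-sampling reweighting at token, block, and sequence granularity" concrete, here is a minimal sketch. It is not PEAR's implementation: the summary does not give the exact weighting, so we assume each token carries a log-probability under a target distribution (a stand-in for what RL will sample) and under the distribution that produced the offline data. The function names, the `clip` value, and the block size are all hypothetical.

```python
import math

# Hypothetical sketch, not PEAR's code: the target/data log-prob inputs and the
# clipping scheme are assumptions made for illustration.

def importance_weights(logp_target, logp_data, granularity="token", block_size=4, clip=5.0):
    """Return one weight per token, shared within each token/block/sequence group.

    logp_target: log-probs of the offline tokens under the target (RL-like) policy.
    logp_data:   log-probs of the same tokens under the policy that produced the data.
    A group's weight is the product of its per-token ratios p_target / p_data,
    capped at `clip` to bound variance (the cap is applied in log space to avoid overflow).
    """
    n = len(logp_target)
    log_ratio = [a - b for a, b in zip(logp_target, logp_data)]
    if granularity == "token":
        groups = [range(i, i + 1) for i in range(n)]
    elif granularity == "block":
        groups = [range(s, min(s + block_size, n)) for s in range(0, n, block_size)]
    elif granularity == "sequence":
        groups = [range(n)]
    else:
        raise ValueError(f"unknown granularity: {granularity!r}")
    weights = [0.0] * n
    for group in groups:
        log_w = min(sum(log_ratio[i] for i in group), math.log(clip))
        w = math.exp(log_w)
        for i in group:
            weights[i] = w
    return weights


def reweighted_nll(logp_model, weights):
    """Importance-weighted SFT loss: mean of -w_i * log p(token_i).

    In a real trainer the weights would be detached (treated as constants),
    so gradients flow only through logp_model.
    """
    return -sum(w * lp for w, lp in zip(weights, logp_model)) / len(logp_model)


lp_target = [-1.0, -1.0, -1.0, -1.0]
lp_data = [-2.0, -2.0, -1.0, -1.0]
print(importance_weights(lp_target, lp_data, "block", block_size=2))
```

Sharing one weight across a block or a whole sequence trades per-token precision for lower variance; coarser groups multiply more ratios together, which is why some form of clipping is usually needed.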

Consistent post-RL improvements on Qwen 2.5/3 and DeepSeek-distilled models, with pass@8 gains up to 14.6% on AIME2025.

Key takeaways:
- SFT and RL should not be optimized in isolation; distribution mismatch is the root cause
- Importance-sampling reweighting is a low-cost fix that stacks on top of standard SFT
- If you train reasoning models in two stages, factor RL into your SFT design from day one

Source: Good SFT Optimizes for SFT, Better SFT Prepares for Reinforcement Learning

02 Training Most Distillation Compute Does Nothing

When distilling a large model into a small one, the standard approach supervises every token position with the teacher's full probability distribution. Intuitively, though, not every position matters equally: where the student is already confident, extra guidance adds little.

SE-KD systematically decomposes selective distillation along three axes: position, class, and sample. The finding: the student's own entropy is the best importance signal, so supervise only where the student is uncertain. Combining all three axes (SE-KD 3X) makes it practical to cache teacher outputs offline instead of running teacher inference in real time during training.
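The position axis of this idea can be sketched in a few lines: score each position by the entropy of the student's predictive distribution, keep only the most uncertain fraction, and compute the distillation loss there. This is a minimal illustration under our own assumptions, not SE-KD's code. The top-k selection rule, the `keep_ratio` parameter, and the use of forward KL are hypothetical choices, and the class and sample axes (and the offline caching they enable) are omitted.

```python
import math

# Hypothetical sketch of student-entropy position selection for distillation.
# keep_ratio, top-k selection, and forward KL are illustrative assumptions.

def log_softmax(logits):
    """Numerically stable log-softmax over one position's logits."""
    m = max(logits)
    lse = m + math.log(sum(math.exp(x - m) for x in logits))
    return [x - lse for x in logits]


def entropy(logits):
    """Shannon entropy (in nats) of softmax(logits)."""
    return -sum(math.exp(lp) * lp for lp in log_softmax(logits))


def select_positions(student_logits, keep_ratio=0.3):
    """Indices of the positions where the student is most uncertain."""
    ents = [entropy(row) for row in student_logits]
    k = max(1, round(keep_ratio * len(ents)))
    ranked = sorted(range(len(ents)), key=lambda i: ents[i], reverse=True)
    return sorted(ranked[:k])


def selective_kd_loss(student_logits, teacher_logits, keep_ratio=0.3):
    """Forward KL(teacher || student), averaged over the selected positions only."""
    positions = select_positions(student_logits, keep_ratio)
    total = 0.0
    for i in positions:
        lt = log_softmax(teacher_logits[i])
        ls = log_softmax(student_logits[i])
        total += sum(math.exp(a) * (a - b) for a, b in zip(lt, ls))
    return total / len(positions), positions


# Toy example: 4 positions, vocabulary of 3. Positions 1 and 3 are near-uniform
# (high entropy), so with keep_ratio=0.5 only those two receive teacher supervision.
student = [[10.0, 0.0, 0.0], [0.0, 0.0, 0.0], [5.0, 0.0, 0.0], [0.1, 0.0, 0.0]]
teacher = [[0.0, 0.0, 0.0]] * 4
loss, kept = selective_kd_loss(student, teacher, keep_ratio=0.5)
```

Only the kept positions need teacher distributions at all, which is the intuition behind why selective supervision reduces both compute and the storage needed for cached teacher outputs.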

Wall time down 70%, peak memory down 18%, storage down 80% — all without sacrificing accuracy.

Key takeaways:
- Student entropy beats teacher logits as a distillation guide
- Three-axis selection makes offline caching viable, slashing infrastructure requirements
- Distillation no longer needs to be exhaustive, which is good news for resource-constrained teams

Source: Rethinking Selective Knowledge Distillation

03 Interpretability LLMs Grew a Brain-Like Reward System

What does RL training actually wire into an LLM's internals? Taking a cue from neuroscience, a Stanford team found a sparse "reward subsystem" in the model's hidden states. Value neurons encode the model's internal estimate of how valuable its current state is, analogous to the brain's reward circuitry.

More strikingly, when expected and actual rewards diverge, a separate set of dopamine neurons fires intensely, encoding exactly the reward prediction error (RPE) signal. These neurons are robust across datasets, scales, and architectures, and transfer significantly between models fine-tuned from the same base.

Intervention experiments confirm they are critical for reasoning.
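In standard RL and neuroscience usage, the reward prediction error is the temporal-difference error δ_t = r_t + γV(s_{t+1}) − V(s_t): it is zero when reward arrives as expected and large when expectation and outcome diverge. The sketch below computes δ_t and then ranks hidden units by how strongly their activations correlate with it. This correlation-based search is a hypothetical illustration of what "a neuron encodes the RPE" could mean operationally; it is not the Stanford team's procedure, and the activations here are toy numbers.

```python
import math

# Hypothetical probing sketch: TD-error RPE plus correlation ranking of units.
# Not the paper's method; activations and values are toy inputs.

def td_errors(values, rewards, gamma=1.0):
    """delta_t = r_t + gamma * V(s_{t+1}) - V(s_t), with V = 0 after the last step."""
    deltas = []
    for t, (v, r) in enumerate(zip(values, rewards)):
        v_next = values[t + 1] if t + 1 < len(values) else 0.0
        deltas.append(r + gamma * v_next - v)
    return deltas


def pearson(xs, ys):
    """Pearson correlation; returns 0.0 if either series is constant."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    return cov / (sx * sy) if sx > 0 and sy > 0 else 0.0


def rank_units(activations, target, top_k=3):
    """Indices of hidden units whose activation best tracks `target` (by |r|).

    activations[t][j] is unit j's activation at step t.
    """
    n_units = len(activations[0])
    scores = []
    for j in range(n_units):
        column = [row[j] for row in activations]
        scores.append((abs(pearson(column, target)), j))
    scores.sort(reverse=True)
    return [j for _, j in scores[:top_k]]


# Toy episode: an unexpected reward at step 1 produces a positive RPE there.
deltas = td_errors([0.2, 0.4, 0.6, 0.8], [0.0, 1.0, 0.0, 0.0])
# Unit 0 is constant, unit 1 tracks the RPE, unit 2 is unrelated noise.
acts = [[1.0, 2 * d + 1, u] for d, u in zip(deltas, [0.3, -0.1, 0.5, 0.2])]
rpe_candidates = rank_units(acts, deltas, top_k=1)
```

Swapping `deltas` for the value estimates themselves gives the analogous search for value-like units; a correlation alone would not establish a causal role, which is what intervention experiments are for.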

Key takeaways:
- RL-trained LLMs spontaneously develop brain-like reward structures
- Value and dopamine neurons offer a new mechanistic lens into how these models reason
- Cross-model transferability hints at representations shared across models fine-tuned from the same base

Source: Sparse Reward Subsystem in Large Language Models

04 Architecture Discrete Diffusion: No More Tradeoffs

Two paradigms dominate discrete diffusion for text: MDLM (mask noise) excels at semantic understanding and zero-shot generalization; UDLM (uniform noise) excels at few-step, high-quality generation. Each has blind spots.

XDLM's key insight is that both are special cases of the same framework. A parameterized stationary noise kernel lets you interpolate smoothly between them. XDLM beats UDLM by 5.4 points on zero-shot text understanding, and on few-step image generation it lowers FID from 80.8 to 54.1.
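One common way to write a discrete-diffusion forward process is q(x_t | x_0) = α_t · onehot(x_0) + (1 − α_t) · π, where π is the stationary distribution the noise drives tokens toward. Putting all of π's mass on a [MASK] token gives MDLM-style absorbing noise; spreading it uniformly over the vocabulary gives UDLM-style uniform noise. The sketch below mixes the two with a hypothetical parameter `lam`. It only illustrates how a single kernel can contain both paradigms as endpoints; XDLM's actual kernel parameterization and noise schedule may differ.

```python
import random

# Hypothetical sketch: a mask/uniform mixture as the stationary distribution.
# `lam` and this exact mixture are assumptions, not XDLM's published kernel.

def stationary_dist(num_tokens, lam):
    """pi over num_tokens real tokens plus one [MASK] token at the last index.

    lam = 1.0 -> all mass on [MASK]           (MDLM-style absorbing noise)
    lam = 0.0 -> uniform over the real tokens (UDLM-style uniform noise)
    """
    return [(1.0 - lam) / num_tokens] * num_tokens + [lam]


def forward_marginal(x0, alpha_t, num_tokens, lam):
    """q(x_t | x_0) = alpha_t * onehot(x_0) + (1 - alpha_t) * pi."""
    probs = [(1.0 - alpha_t) * p for p in stationary_dist(num_tokens, lam)]
    probs[x0] += alpha_t
    return probs


def corrupt(tokens, alpha_t, num_tokens, lam, rng):
    """Sample x_t independently at each position from the forward marginal."""
    out = []
    for x0 in tokens:
        probs = forward_marginal(x0, alpha_t, num_tokens, lam)
        out.append(rng.choices(range(len(probs)), weights=probs, k=1)[0])
    return out


rng = random.Random(0)
noisy = corrupt([0, 1, 2, 3], alpha_t=0.5, num_tokens=4, lam=0.5, rng=rng)
```

Intermediate values of `lam` corrupt some tokens into [MASK] and others into random real tokens, which is one way to picture a "middle ground" between the two paradigms.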

Scaled to an 8B language model, XDLM scores 15.0 on MBPP in just 32 steps — doubling the baseline. Code is open-sourced.

Key takeaways:
- MDLM and UDLM sit at opposite ends of one mathematical framework; XDLM finds a better middle ground
- Few-step, high-quality generation matters directly for inference-budget-constrained scenarios
- 8B-scale validation suggests the approach scales, so it is worth tracking if you work on generative models

Source: Balancing Understanding and Generation in Discrete Diffusion Models

Also Worth Noting

Today's Observation

Today's two training optimization papers point to the same theme: mismatches hiding in plain sight within the training pipeline. PEAR exposes the distribution mismatch between SFT and RL. SE-KD exposes the mismatch between where teacher supervision is spent and where the student actually needs it. Neither proposes a fundamentally new method; both revisit assumptions baked into standard practice and get significant gains from simple corrections. Meanwhile, Stanford's reward subsystem work and PolySAE both push forward our understanding of model internals, one from the RL perspective, the other from feature interactions. If you run post-training pipelines, it is worth revisiting your SFT stage with "preparing for RL" as an explicit design objective.