Today's Overview

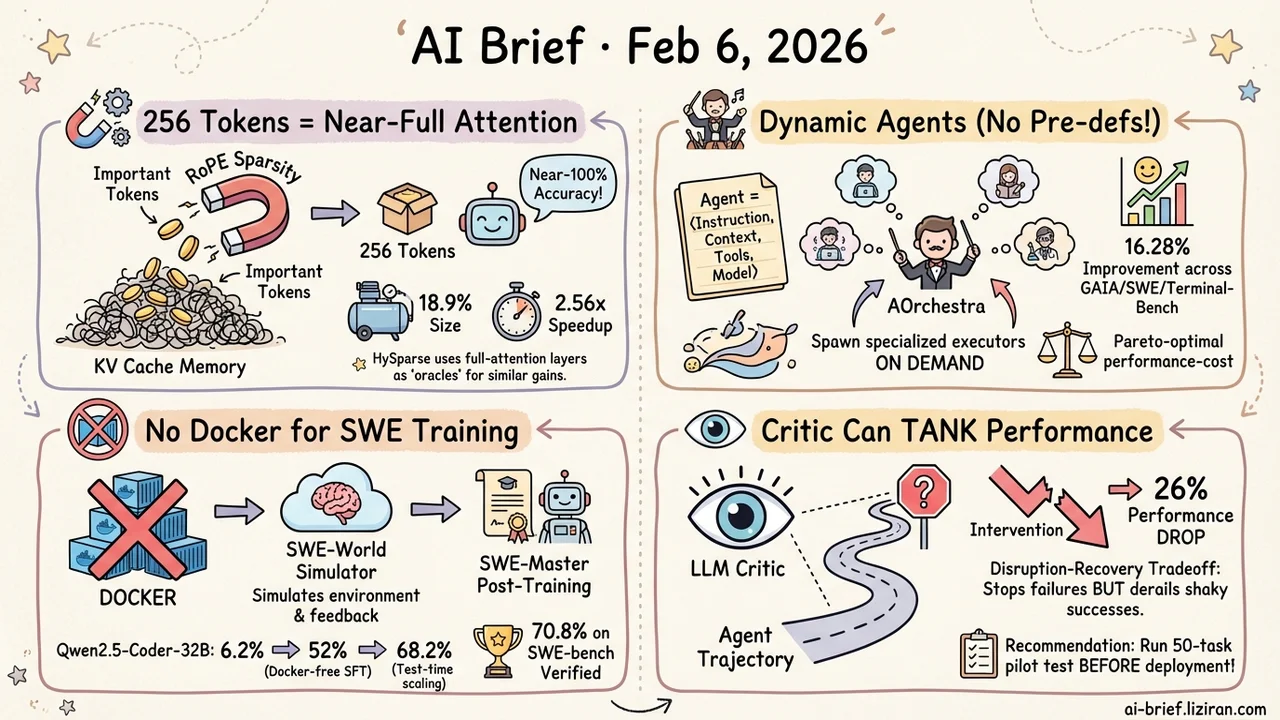

- 256 Tokens Is All You Need for Near-Full Attention: FASA discovers frequency-level sparsity hidden in RoPE positional encoding and uses it for token selection. Keeping 256 tokens recovers nearly 100% of full-KV performance on LongBench, and 18.9% of the cache yields a 2.56x speedup on AIME24.

- Multi-agent systems no longer need pre-defined sub-agents: let the system spawn specialized executors on demand. AOrchestra achieves a 16.28% relative improvement over the strongest baseline across GAIA, SWE-Bench, and Terminal-Bench.

- Training SWE Agents No Longer Requires Docker — SWE-World replaces containerized execution feedback with a learned environment simulator; paired with SWE-Master's post-training framework, an open-source 32B model hits 70.8% on SWE-bench Verified.

- Predicting agent failure doesn't necessarily prevent it. An LLM critic tanks one model's performance by 26 percentage points after deployment.

Featured

01 Efficiency RoPE Hides a Free Lunch for KV Cache

The biggest cost of long-context inference isn't compute — it's KV cache memory. Tens of gigabytes of cache put consumer hardware out of reach. Existing token pruning approaches either use static rules that risk losing critical information, or heuristic strategies with unstable results.

FASA finds a more elegant angle: within RoPE's frequency chunks, only a small subset of "dominant" chunks closely tracks full attention head behavior — and these can identify important tokens at essentially zero extra cost. On LongBench, retaining just 256 tokens reaches nearly 100% of full-KV performance; on AIME24, 18.9% of the cache yields a 2.56x speedup.
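To make the selection idea concrete, here is a minimal NumPy sketch, not FASA's actual implementation. It assumes the interleaved RoPE layout (chunk `i` covers dimensions `2i` and `2i+1`), that the set of dominant chunks is already known, and that a fixed token budget is used. The function names `chunk_scores`, `select_tokens`, and `sparse_attention` are hypothetical.

```python
import numpy as np

def chunk_scores(q, K, chunks):
    """Partial attention logits computed from the given frequency chunks only.

    Assumption: interleaved RoPE layout, so chunk i covers dims (2i, 2i+1).
    """
    dims = np.array([d for i in chunks for d in (2 * i, 2 * i + 1)])
    return K[:, dims] @ q[dims]

def select_tokens(q, K, dominant_chunks, budget):
    """Return sorted indices of the `budget` tokens with the highest partial logits."""
    scores = chunk_scores(q, K, dominant_chunks)
    budget = min(budget, K.shape[0])
    top = np.argpartition(-scores, budget - 1)[:budget]
    return np.sort(top)

def sparse_attention(q, K, V, keep):
    """Softmax attention restricted to the kept token indices."""
    logits = K[keep] @ q / np.sqrt(q.shape[0])
    weights = np.exp(logits - logits.max())  # subtract max for numerical stability
    weights /= weights.sum()
    return weights @ V[keep]

# Toy usage: 4 cached tokens, head dim 4 (two frequency chunks), budget of 2.
q = np.array([1.0, 0.0, 0.0, 0.0])
K = np.array([[1.0, 0.0, 9.0, 9.0],
              [5.0, 0.0, 0.0, 0.0],
              [3.0, 0.0, 0.0, 0.0],
              [0.0, 0.0, 0.0, 0.0]])
V = np.eye(4)
keep = select_tokens(q, K, dominant_chunks=[0], budget=2)
out = sparse_attention(q, K, V, keep)
```

The ranking pass touches only the dominant dimensions of each key, which is why the selection step is cheap; the full dot product is paid only for the tokens that survive.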

A concurrent paper, HySparse, attacks the same problem from the architecture side — using a few full-attention layers as "oracles" to guide token selection in sparse layers. In an 80B MoE model, just 5 full-attention layers enable nearly 10x KV cache compression.

Key takeaways:

- RoPE itself contains exploitable sparsity signals, lighter than training a separate importance predictor

- The 256-token result shows most context is highly redundant

- Teams doing inference deployment should look at FASA and HySparse as complementary approaches

Source: FASA: Frequency-aware Sparse Attention

02 Agent No Pre-Defined Workflows — Build Agents on Demand

Multi-agent systems keep getting more complex, but there's an awkward limitation: sub-agents are typically pre-defined, and they choke on novel subtask types.

AOrchestra proposes a natural abstraction — any agent is a tuple of ⟨instruction, context, tools, model⟩. With this "recipe," the system ditches a fixed agent library. Instead, a central orchestrator constructs specialized agents at each step: which tools, which model, what context — all decided dynamically.

The payoff goes beyond flexibility. It enables Pareto-optimal performance-cost tradeoffs — lightweight models handle simple subtasks, heavy models only where needed. Across GAIA, SWE-Bench, and Terminal-Bench, AOrchestra paired with Gemini-3-Flash achieves 16.28% relative improvement over the strongest baseline.
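A rough sketch of the agent-as-tuple idea follows. It is an illustration under assumptions, not AOrchestra's code: the `AgentSpec` class, the `spawn_agent` helper, the capability tags, and the two model tier names are all hypothetical, and the real orchestrator presumably makes these choices with an LLM rather than with lookups.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class AgentSpec:
    """The four-part recipe: instruction, context, tools, model."""
    instruction: str
    context: str
    tools: tuple
    model: str

# Hypothetical model tiers; real deployments would name concrete models.
MODEL_TIERS = {"simple": "light-model", "hard": "heavy-model"}

def spawn_agent(subtask: dict, notes: dict, tool_registry: dict) -> AgentSpec:
    """Compose a specialized agent for one subtask instead of picking from a fixed library."""
    needs = subtask["needs"]
    # Keep only tools whose capabilities overlap with what the subtask needs.
    tools = tuple(sorted(t for t, caps in tool_registry.items() if caps & needs))
    # Pass along only the notes relevant to this subtask.
    context = "\n".join(notes[k] for k in sorted(needs) if k in notes)
    # Route easy subtasks to the cheap model, hard ones to the expensive model.
    model = MODEL_TIERS["hard" if subtask["hard"] else "simple"]
    return AgentSpec(subtask["goal"], context, tools, model)

registry = {"shell": {"exec"}, "browser": {"web"}, "editor": {"code", "exec"}}
notes = {"code": "Repo uses pytest."}
fixer = spawn_agent({"goal": "Fix failing test", "needs": {"code", "exec"}, "hard": True},
                    notes, registry)
searcher = spawn_agent({"goal": "Find the changelog", "needs": {"web"}, "hard": False},
                       notes, registry)
```

Because the model is just one field of the tuple, cost control falls out of the same composition step that chooses tools and context.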

Key takeaways:

- Agent-as-tuple turns "building agents" from an engineering problem into a composition problem

- Dynamic model selection inherently supports cost control

- The approach is framework-agnostic and can, in theory, be layered on top of any agent system

Source: AOrchestra: Automating Sub-Agent Creation for Agentic Orchestration

03 Code Intelligence Training SWE Agents Without Docker

The single biggest infrastructure burden of training SWE agents? Docker environments. Every code fix task needs a dependency-complete, test-executable container — eye-wateringly expensive to build and maintain.

SWE-World and SWE-Master are a one-two punch from the same team. SWE-World trains an LLM-based "environment simulator" that takes an agent's action trajectory and directly predicts execution outcomes and test feedback — completely bypassing physical Docker environments. Beyond saving resources, the simulator enables test-time scaling: virtually evaluating multiple candidate solutions at inference without real execution.
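The test-time scaling step can be sketched as best-of-N selection with the simulator as the judge. This is a minimal sketch under assumptions: it treats the simulator as a callable that maps a candidate to a single predicted pass probability, and `pick_best_candidate` and `toy_simulator` are hypothetical stand-ins rather than anything from the SWE-World codebase.

```python
from typing import Callable, Sequence

def pick_best_candidate(candidates: Sequence[str],
                        simulate: Callable[[str], float]) -> str:
    """Score every candidate with the simulator and return the top one.

    No container is started: `simulate` stands in for the learned environment
    model and returns a predicted probability that the tests would pass.
    Ties are broken in favor of the earliest candidate.
    """
    scored = [(simulate(c), -i) for i, c in enumerate(candidates)]
    best_score, neg_index = max(scored)
    return candidates[-neg_index]

# Hypothetical stand-in for the learned simulator: a fixed lookup table.
PREDICTED_PASS = {"patch_a": 0.2, "patch_b": 0.9, "patch_c": 0.9}

def toy_simulator(candidate: str) -> float:
    return PREDICTED_PASS[candidate]

best = pick_best_candidate(["patch_a", "patch_b", "patch_c"], toy_simulator)
```

The quality of the final pick depends entirely on how well the simulator's predictions track real test outcomes, so any real use would need that calibration checked first.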

SWE-Master provides the companion post-training framework, covering teacher trajectory synthesis, long-horizon SFT, and RL with execution feedback. Together they push Qwen2.5-Coder-32B from 6.2% to 52% with Docker-free SFT, 55% with Docker-free RL, and 68.2% with test-time scaling (TTS). SWE-Master alone reaches 61.4% via RL and 70.8% at TTS@8.

Key takeaways:

- The environment simulator dramatically lowers the infrastructure barrier, opening the door wider for the open-source community

- Simulator plus TTS is a win-win: save Docker at training time, use the simulator to pick the best solution at inference time

- Both projects are open-source

Source: SWE-World: Building Software Engineering Agents in Docker-Free Environments

04 Agent Your Agent Supervisor Might Be Helping Backward

The intuition sounds reasonable: have an LLM critic monitor agent execution, predict failure, and intervene proactively to boost success rates. This paper finds the opposite can happen: intervention does not necessarily help, and it can make things much worse.

The researchers trained a binary LLM critic with strong offline accuracy (AUROC 0.94), but in deployment it caused a 26 percentage point performance collapse on one model while barely affecting another. The reason is a disruption-recovery tradeoff: intervention can save doomed trajectories, but it also derails trajectories that look shaky mid-run but would have succeeded on their own.

The team's practical recommendation: run a 50-task pilot test before deployment to determine whether intervention actually helps or hurts.
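The tradeoff can be made concrete with some back-of-the-envelope arithmetic. The sketch below is a simplified model assumed for illustration, not the paper's analysis: the function names are hypothetical and the rates are made-up numbers, not reported results. It treats the critic as flagging failing runs at rate `tpr` and succeeding runs at rate `fpr`, with a flagged failing run being rescued at rate `recovery` and a flagged succeeding run being derailed at rate `disruption`.

```python
def net_intervention_effect(base_success: float, tpr: float, fpr: float,
                            recovery: float, disruption: float) -> float:
    """Expected change in success rate once the critic is allowed to intervene."""
    rescued = (1.0 - base_success) * tpr * recovery   # doomed runs that get saved
    derailed = base_success * fpr * disruption        # healthy runs that get broken
    return rescued - derailed

def pilot_delta(baseline: list, intervened: list) -> float:
    """Observed success-rate difference between two pilot runs (lists of booleans)."""
    return sum(intervened) / len(intervened) - sum(baseline) / len(baseline)

# Illustrative numbers only: a fairly accurate critic that still hurts on net,
# because rescue is hard (10%) while derailing a healthy run is easy (50%).
predicted = net_intervention_effect(base_success=0.6, tpr=0.9, fpr=0.2,
                                    recovery=0.1, disruption=0.5)

# A 50-task pilot, with and without intervention, measures the same quantity directly.
observed = pilot_delta(baseline=[True] * 30 + [False] * 20,
                       intervened=[True] * 25 + [False] * 25)
```

With these inputs the predicted effect is negative even though the critic catches 90% of failures, which is the pattern a small pilot is meant to expose before full deployment.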

Key takeaways:

- High offline prediction accuracy does not guarantee positive online intervention, a critical blind spot in agent reliability research

- The disruption-recovery tradeoff means conservative strategies (less intervention) may be safer than aggressive ones

- A 50-task pilot is cheap; any team shipping agent systems should add it to their deployment checklist

Source: Accurate Failure Prediction in Agents Does Not Imply Effective Failure Prevention

Also Worth Noting

Today's Observation

A clear technical trend emerges today: KV cache compression is moving from point optimizations to systematic solutions. FASA attacks it from RoPE frequency structure, HySparse from architecture-level hybridization of full and sparse attention, and Quant VideoGen from quantization for video generation. The three papers solve the same problem from different angles, and their methods are theoretically stackable.

The other notable thread is infrastructure cost reduction for SWE agent training: SWE-World demonstrates that replacing Docker with a learned simulator is viable, which could significantly accelerate open-source SWE agent iteration.

Teams working on inference deployment should watch FASA and HySparse for combination potential; teams building coding agents should look into SWE-World's simulator approach.