Today's Overview

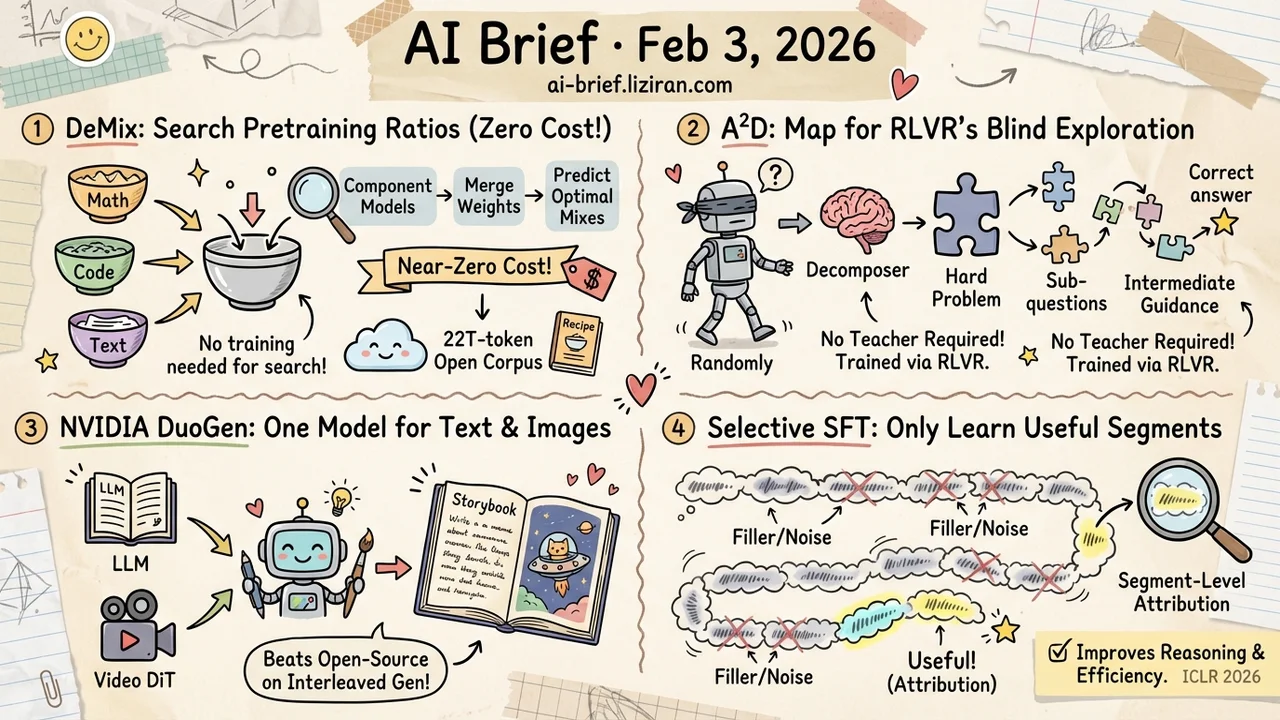

- Search pretraining data ratios without training — DeMix merges component models to predict optimal mixes at near-zero cost, ships a 22T-token open corpus.

- RLVR's blind exploration gets a map — A²D trains a decomposer to break hard problems into sub-questions, then uses them as intermediate guidance, no teacher required.

- NVIDIA wants one model writing text and drawing illustrations — DuoGen pairs a multimodal LLM with a video DiT, beating open-source peers on interleaved generation.

- Most tokens in long reasoning chains are filler — selective SFT on useful segments is enough — Segment-Level Attribution finds the passages that actually drive reasoning, ICLR 2026.

Featured

01 Training Stop Guessing Your Data Ratios

Every LLM pretraining run faces one critical decision: what ratio of math, code, and general text? Standard practice either runs tiny proxy experiments (unreliable at scale) or tries ratios one by one on full runs (prohibitively expensive).

DeMix takes a clever detour. Train "component models" on each candidate dataset separately, then simulate different mixtures by merging their weights. Search is now fully decoupled from training — try as many ratios as you want without a single additional run.
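To make the idea concrete, here is a minimal sketch of a merge-then-score search. It assumes the merge is a plain ratio-weighted average of component weights, which the summary above does not confirm; `merge_components`, `search_ratios`, and the toy scoring function are hypothetical names for illustration, not DeMix's API.

```python
from typing import Callable, Dict, List

# Parameter name -> flat list of values (a stand-in for real tensors).
Weights = Dict[str, List[float]]


def merge_components(components: Dict[str, Weights],
                     ratios: Dict[str, float]) -> Weights:
    """Ratio-weighted average of component weights (assumed merge rule)."""
    total = sum(ratios.values())
    if total <= 0:
        raise ValueError("ratios must sum to a positive value")
    names = list(ratios)
    reference = components[names[0]]
    merged: Weights = {}
    for param, values in reference.items():
        merged[param] = [
            sum(ratios[n] / total * components[n][param][i] for n in names)
            for i in range(len(values))
        ]
    return merged


def search_ratios(components: Dict[str, Weights],
                  candidates: List[Dict[str, float]],
                  score_fn: Callable[[Weights], float]) -> Dict[str, float]:
    """Pick the candidate mix whose merged model scores highest.

    No training happens here: each candidate costs one merge plus one evaluation.
    """
    return max(candidates,
               key=lambda r: score_fn(merge_components(components, r)))


# Toy data: two "component models" with a single two-value parameter each.
components = {
    "math": {"w": [1.0, 0.0]},
    "code": {"w": [0.0, 1.0]},
}
candidates = [{"math": 0.5, "code": 0.5}, {"math": 0.8, "code": 0.2}]

# Hypothetical evaluation: closeness to an arbitrary target, standing in
# for a real benchmark score.
target = [0.8, 0.2]


def toy_score(weights: Weights) -> float:
    return -sum((a - b) ** 2 for a, b in zip(weights["w"], target))


best = search_ratios(components, candidates, toy_score)
```

In a real setting `score_fn` would run a benchmark on the merged model, which is where the remaining search cost sits.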

The result is better benchmark performance at lower search cost. More valuable still is the accompanying DeMix Corpora, a 22T-token open pretraining dataset with validated mixture recipes.

Key takeaways:

- Model merging is not just an inference trick; it doubles as a pretraining data search tool

- Search-training decoupling means near-zero cost to explore unlimited ratios

- The 22T-token open corpus is especially attractive for teams with limited pretraining budgets

02 Reasoning Give RLVR a Path, Not Just a Score

RLVR (RL with Verifiable Rewards) is the dominant approach for training reasoning models, but it has a fundamental gap: the reward signal only says whether the final answer is correct and gives no guidance during the process itself. On hard problems, the model explores mostly at random.

A²D addresses this in two steps. First, train a "decomposer" via RLVR itself to break complex problems into simpler sub-questions. Then annotate the training set with these sub-questions and feed them as intermediate navigation during RLVR training. The decomposer needs no teacher distillation — it is trained purely through RLVR.
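The annotation step can be pictured with a small sketch. It assumes the sub-questions are simply appended to the prompt as a numbered list; how A²D actually injects them is not stated above. `annotate_with_subquestions` and `toy_decomposer` are hypothetical names, and the toy decomposer stands in for the RLVR-trained model.

```python
from typing import Callable, List


def annotate_with_subquestions(question: str,
                               decomposer: Callable[[str], List[str]]) -> str:
    """Attach decomposer output to a training prompt as numbered hints."""
    sub_questions = decomposer(question)
    if not sub_questions:
        # Nothing to add: fall back to the original prompt.
        return question
    hints = "\n".join(f"{i}. {s}" for i, s in enumerate(sub_questions, 1))
    return f"{question}\n\nSub-questions to work through:\n{hints}"


def toy_decomposer(question: str) -> List[str]:
    """Hypothetical stand-in for the trained decomposer."""
    return [
        "What quantities are given?",
        "Which relation links them to the unknown?",
    ]


prompt = annotate_with_subquestions(
    "A train covers 120 km in 1.5 hours. What is its average speed?",
    toy_decomposer,
)
```

The reward in this sketch would still be computed on the final answer only; the sub-questions change what the policy sees, not how it is scored.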

Experiments show it works as a plug-and-play module on top of different RLVR algorithms.

Key takeaways:

- RLVR's "outcome-only reward" limits learning efficiency on hard problems

- Problem decomposition injects intermediate signal without external supervision

- Plug-and-play design means seamless integration with existing RLVR pipelines

Source: Adaptive Ability Decomposing for Unlocking Large Reasoning Model Effective Reinforcement Learning

03 Multimodal One Model That Writes and Illustrates

Interleaved generation — a model producing text and images in alternation — is one of multimodal AI's most appealing promises. Imagine a model writing a tutorial while generating step-by-step diagrams. Existing approaches fall short due to insufficient training data and weak base models.

NVIDIA's DuoGen attacks this systematically. On the data side, it rewrites curated web pages into multimodal conversations and synthesizes diverse everyday scenarios. On the architecture side, it pairs a pretrained multimodal LLM with a video-generation DiT, using a two-stage strategy that first tunes the LLM then aligns the DiT — sidestepping the cost of pretraining a vision model from scratch.

On both public and custom benchmarks, DuoGen beats existing open-source models on text quality, image fidelity, and text-image alignment.

Key takeaways:

- The bottleneck is not any single module but the data + architecture + alignment stack as a whole

- Reusing a video DiT for image generation is smart: video pretraining gives natural visual coherence

- NVIDIA is doubling down on unified generation; worth tracking for open-source release updates

Source: DuoGen: Towards General Purpose Interleaved Multimodal Generation

04 Reasoning 80% of Reasoning Chains Is Noise

Large reasoning models solve problems by generating long chains of thought, but most of the content is repetition, truncation, or filler. When you SFT on these chains, the model imitates the noise indiscriminately, dragging down performance.

Segment-Level Attribution takes a direct approach: use integrated gradient attribution to quantify each token's contribution to the final answer, then score segments on two dimensions — attribution strength and direction consistency. High-strength segments with mixed directions indicate genuine deliberation. Uniformly positive or negative segments are shallow patterns.

Training computes loss only on the important segments and masks the rest. Across multiple models and datasets, this selective SFT improves both accuracy and output efficiency. ICLR 2026.
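A rough sketch of the selection step follows, taking per-token attributions as given (the integrated-gradients computation is not shown). The two formulas and the thresholds are assumptions chosen for illustration, not the paper's definitions: strength is the mean absolute attribution, and consistency is |sum| / sum of |values|, which is 1.0 when every token pushes the same way.

```python
from typing import List, Tuple


def segment_scores(attr: List[float]) -> Tuple[float, float]:
    """Return (strength, direction consistency) for one segment."""
    if not attr:
        return 0.0, 1.0
    abs_sum = sum(abs(a) for a in attr)
    strength = abs_sum / len(attr)
    # 1.0 means all attributions share a sign; values near 0 mean mixed signs.
    consistency = abs(sum(attr)) / abs_sum if abs_sum > 0 else 1.0
    return strength, consistency


def loss_mask(token_attr: List[float],
              segments: List[Tuple[int, int]],
              min_strength: float,
              max_consistency: float) -> List[int]:
    """Mark tokens in high-strength, mixed-direction segments with 1.

    Segments are half-open (start, end) index ranges over token_attr.
    """
    mask = [0] * len(token_attr)
    for start, end in segments:
        strength, consistency = segment_scores(token_attr[start:end])
        if strength >= min_strength and consistency <= max_consistency:
            for i in range(start, end):
                mask[i] = 1
    return mask


# Toy attributions: a strong mixed segment, a weak one, a strong uniform one.
token_attr = [0.9, -0.8, 0.7, 0.1, 0.1, 0.1, 0.6, 0.7, 0.8]
segments = [(0, 3), (3, 6), (6, 9)]
mask = loss_mask(token_attr, segments, min_strength=0.5, max_consistency=0.6)
```

During SFT, a mask like this would multiply the per-token loss so that only the selected segments contribute gradients.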

Key takeaways:

- Reasoning chain value density varies enormously, so full SFT trains the model on filler

- Attribution analysis automatically separates real reasoning from busywork

- Selective training is a practical, low-cost lever for improving reasoning model quality

Source: Segment-Level Attribution for Selective Learning of Long Reasoning Traces

Today's Observation

Two directions converge today. DeMix's "search data ratios via model merging" and A²D's "guide RLVR with sub-questions" join yesterday's Golden Goose in a multi-day streak targeting data efficiency in LLM training — not finding more data, but using data more intelligently. Meanwhile, Segment-Level Attribution and the Latent-CoT analysis both reveal the true structure of reasoning chains: the genuinely useful steps are far fewer than they appear. If you work on reasoning model training or data engineering, "automated data-mix search" and "reasoning chain quality filtering" are two threads worth following.