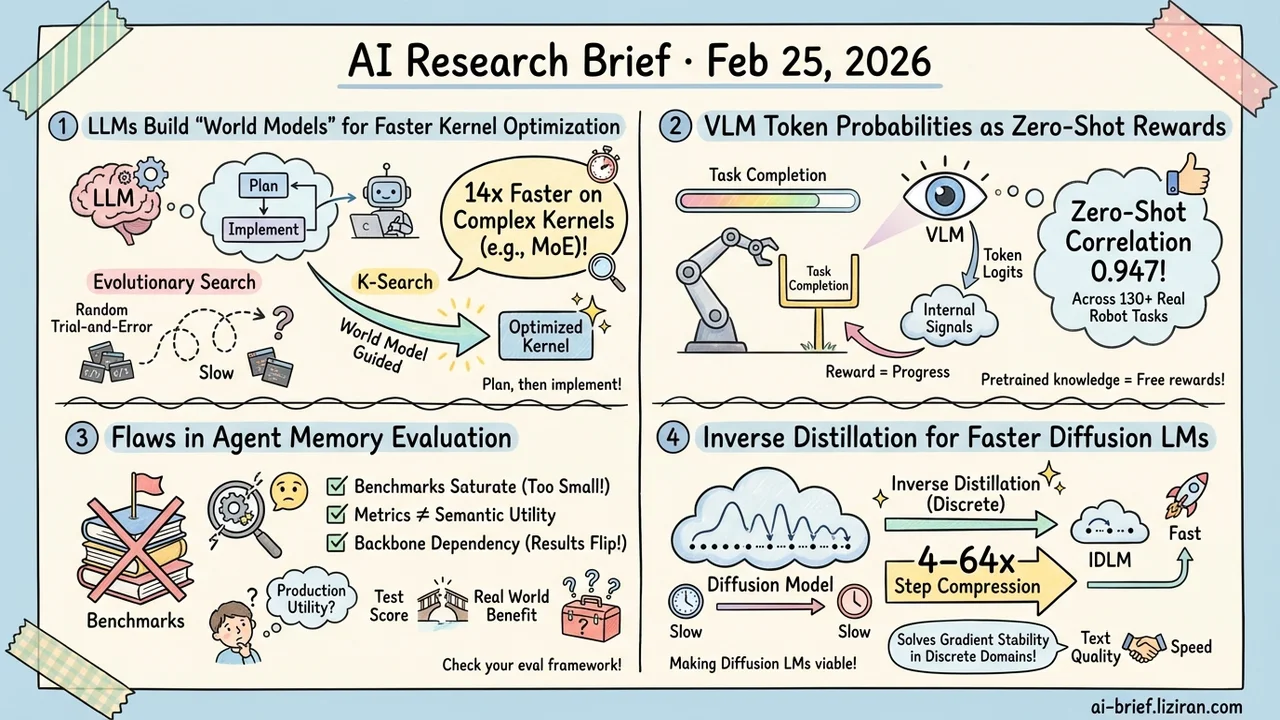

Today's Overview

- An LLM builds an internal "world model" of kernel behavior to plan optimization paths. On complex kernels like MoE, the kernels it finds are up to 14.3x faster than those from evolutionary search, turning operator tuning from random trial-and-error into guided exploration.

- VLM token probabilities double as reward signals. Pretrained model logits encode task-progress information. Zero-shot correlation hits 0.947 across 130+ real robot tasks.

- Agent memory evaluation has structural flaws. Benchmarks saturate, metrics disconnect from semantic utility, and swapping the backbone model flips conclusions. The survey works as a checklist for teams building agent systems.

- Inverse distillation moves from continuous to discrete domains for diffusion language models. Solving uniqueness and gradient stability unlocks 4–64x step compression.

Featured

01 Code Intelligence Let the LLM Think Before Touching the Kernel

Existing LLM kernel optimization methods treat the model as a random code generator — mutate, evaluate, repeat inside an evolutionary loop. Complex kernels that need coordinated multi-step edits break this approach. Intermediate steps may temporarily degrade performance, so the search discards promising paths too early.

K-Search separates high-level algorithmic planning from low-level code implementation. The LLM maintains an internal "world model" of kernel behavior. It reasons about an optimization strategy first, then writes the implementation. The world model and search co-evolve: execution feedback updates the model's understanding, sharpening future plans.
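The difference between mutate-and-evaluate and plan-then-implement can be sketched on a toy problem. This is not K-Search's implementation: the latency table, the edit names (`tile`, `fuse`), the plan names, and the `beliefs` dictionary standing in for the world model are all hypothetical, chosen so that each edit regresses on its own and only the pair pays off.

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical latencies in microseconds for a toy kernel, keyed by the set of
# edits applied. Either edit alone regresses; together they pay off.
LATENCY_US: Dict[frozenset, float] = {
    frozenset(): 100.0,
    frozenset({"tile"}): 120.0,
    frozenset({"fuse"}): 110.0,
    frozenset({"tile", "fuse"}): 40.0,
}

# Hypothetical multi-step plans and the latency the "world model" expects from each.
PLANS: Dict[str, List[str]] = {"tile_then_fuse": ["tile", "fuse"], "fuse_only": ["fuse"]}
PRIOR_US: Dict[str, float] = {"tile_then_fuse": 60.0, "fuse_only": 90.0}


def measure(edits: List[str]) -> float:
    """Stand-in for compiling and benchmarking a kernel variant."""
    return LATENCY_US[frozenset(edits)]


def greedy_search(candidates: List[str]) -> Tuple[List[str], float]:
    """Mutate-and-evaluate baseline: keep an edit only if it helps immediately."""
    applied: List[str] = []
    best = measure(applied)
    improved = True
    while improved:
        improved = False
        for edit in candidates:
            if edit in applied:
                continue
            latency = measure(applied + [edit])
            if latency < best:
                applied.append(edit)
                best = latency
                improved = True
    return applied, best


def planned_search(
    plans: Dict[str, List[str]], prior_us: Dict[str, float], rounds: int
) -> Tuple[Optional[str], float, Dict[str, float]]:
    """Pick the most promising plan, run it to completion, then update beliefs."""
    beliefs = dict(prior_us)  # crude stand-in for the world model
    best_plan, best = None, measure([])
    tried = set()
    for _ in range(rounds):
        untried = [name for name in plans if name not in tried]
        if not untried:
            break
        name = min(untried, key=lambda n: beliefs[n])
        latency = measure(plans[name])  # judged only after every step is applied
        beliefs[name] = latency         # execution feedback corrects the belief
        tried.add(name)
        if latency < best:
            best_plan, best = name, latency
    return best_plan, best, beliefs


print(greedy_search(["tile", "fuse"]))
print(planned_search(PLANS, PRIOR_US, rounds=2))
```

On this toy table the greedy loop never leaves the 100 µs baseline, because both single edits look like regressions, while the planned search reaches 40 µs by evaluating the two-step plan as a whole.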

On FlashInfer's GQA, MLA, and MoE kernels, the kernels K-Search produces average 2.1x faster than those from the best evolutionary search baseline, with up to 14.3x speedup on MoE. On GPUMode's TriMul benchmark, it reaches 1030μs on H100, beating both evolutionary search and hand-tuned solutions. For ML infra teams, the takeaway is direct: operator tuning doesn't have to be brute-force search. Teaching the model to reason about optimization paths may be the more efficient route.

Key takeaways:

- Upgrades kernel optimization from random mutation to world-model-guided planning. Can traverse non-monotonic optimization paths that evolutionary search abandons.

- Gains are largest on complex kernels (14.3x on MoE), modest on simple ones. The method's advantage scales with structural complexity.

- The "plan then implement" approach generalizes to other code optimization tasks requiring multi-step structural transformations.

Source: K-Search: LLM Kernel Generation via Co-Evolving Intrinsic World Model

02 Robotics Token Probabilities Are Hidden Reward Signals

Reinforcement learning for robotics has a classic problem: sparse rewards. The robot acts for many steps and only learns success or failure at the end. The standard fix is training a dedicated reward model for intermediate feedback, but that introduces its own generalization headache — switch the task or the robot and it stops working.

TOPReward takes a different angle. It extracts task-progress signals directly from a pretrained VLM's token output probabilities. No additional training needed. The insight: VLMs already learned "how far along is this task" from pretraining data. That knowledge lives in the logits. Nobody had extracted it before.
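As a rough illustration of reading a progress signal out of logits: the digest does not spell out TOPReward's prompt or which token it scores, so the sketch below assumes a prompt that asks whether the task is complete and takes the log-probability of an affirmative token. The three-token vocabulary, the `YES_TOKEN_ID` index, and the per-frame logits are made up for the example.

```python
import math
from typing import List


def log_softmax(logits: List[float]) -> List[float]:
    """Numerically stable log-softmax over one vocabulary-sized logit vector."""
    peak = max(logits)
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return [x - log_sum for x in logits]


def progress_signal(logits: List[float], done_token_id: int) -> float:
    """Log-probability assigned to the (assumed) 'task is complete' token."""
    return log_softmax(logits)[done_token_id]


# Hypothetical next-token logits at three points in an episode.
# Toy vocabulary: index 0 = "yes", 1 = "no", 2 = anything else.
YES_TOKEN_ID = 0
frame_logits = [
    [-1.0, 2.0, 0.0],  # early: the model leans towards "no"
    [0.0, 1.0, 0.0],   # midway: less sure
    [2.0, 0.0, 0.0],   # late: the model leans towards "yes"
]

signals = [progress_signal(logits, YES_TOKEN_ID) for logits in frame_logits]
print(signals)
```

With a real VLM the logit vectors would come from a forward pass over the video prefix plus the prompt. The point of the sketch is only that the reward is read from the output distribution, with no extra head and no fine-tuning.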

Zero-shot on 130+ real robot tasks, TOPReward achieves 0.947 progress correlation. The previous best method (GVL) on the same open-source model scores near zero. The gap is not incremental. This finding likely extends beyond robotics: pretrained models carry far richer internal signals than current pipelines exploit.
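The digest does not define "progress correlation". One common reading, assumed here, is a rank correlation between the per-frame progress scores and the chronological order of the frames in a successful episode, so a score of 1.0 means the signal rises monotonically with time. The sketch below computes that quantity (Spearman's rho) with the standard library; the example scores are invented.

```python
import math
from typing import List


def average_ranks(values: List[float]) -> List[float]:
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


def progress_correlation(scores: List[float]) -> float:
    """Spearman correlation between progress scores and frame order."""
    frame_index = [float(i) for i in range(len(scores))]
    return pearson(average_ranks(scores), average_ranks(frame_index))


print(progress_correlation([0.1, 0.2, 0.3, 0.4]))  # monotone signal
print(progress_correlation([0.1, 0.3, 0.2, 0.4]))  # one out-of-order frame
```

A signal that scores near zero under this measure carries essentially no ordering information about the episode, which is why the gap between the two methods reads as a difference in kind.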

Key takeaways:

- Extracts reward signals directly from VLM token probabilities. Zero-shot generalization across 130+ real tasks.

- Pretrained model internals encode structured knowledge that goes largely unused in standard inference pipelines.

- Generalization to complex, long-horizon tasks still needs more validation.

Source: TOPReward: Token Probabilities as Hidden Zero-Shot Rewards for Robotics

03 Agent Agent Memory Evaluation May Be Broken at the Root

Teams building agent systems know the feeling: the memory module scores well on benchmarks, but in production the benefit is unclear. You can't tell if it's actually helping. This survey offers an explanation. The problem may not be your implementation. It may be the evaluation framework itself.

The authors dissect current evaluation methods for agent memory and surface structural issues. Benchmarks are too small, causing saturation effects. Metrics fail to capture semantic utility. The same memory approach tested on a different backbone model produces wildly different results. They categorize memory architectures into four structural types and analyze each one's latency and throughput overhead — costs that most papers ignore entirely.
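Two of these failure modes are easy to check on your own results. The sketch below is not from the survey: the function names, the 0.02 tolerance, and the scores for the two memory methods (`flat_log`, `graph`) on two backbones are hypothetical. It flags method pairs whose ranking reverses between backbones and benchmarks where every method sits at the ceiling.

```python
from itertools import combinations
from typing import Dict, List, Tuple

Scores = Dict[str, Dict[str, float]]  # backbone -> method -> benchmark score


def ranking_flips(scores: Scores) -> List[Tuple[str, str]]:
    """Method pairs that are ordered one way on one backbone and the other way on another."""
    methods = sorted(next(iter(scores.values())))
    flips = []
    for a, b in combinations(methods, 2):
        # +1 if a beats b on a backbone, -1 if b beats a, 0 on a tie.
        signs = {(s[a] > s[b]) - (s[a] < s[b]) for s in scores.values()}
        if 1 in signs and -1 in signs:
            flips.append((a, b))
    return flips


def is_saturated(method_scores: Dict[str, float], ceiling: float = 1.0, tol: float = 0.02) -> bool:
    """True when every method is within `tol` of the ceiling, so the benchmark no longer separates them."""
    return all(ceiling - score <= tol for score in method_scores.values())


# Hypothetical results for two memory designs on two backbones.
results: Scores = {
    "backbone_a": {"flat_log": 0.71, "graph": 0.78},
    "backbone_b": {"flat_log": 0.80, "graph": 0.74},
}

print(ranking_flips(results))
print(is_saturated({"flat_log": 0.99, "graph": 0.985}))
```

If `ranking_flips` returns anything, a claim like "graph memory beats a flat log" holds only for a specific backbone and should be reported that way.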

This isn't a paper proposing a new solution. It's a diagnostic checklist for reassessing whether your memory module is doing what you think it's doing.

Key takeaways:

- Existing benchmarks saturate easily. High scores don't mean the memory system actually works in practice.

- Memory maintenance imposes latency and throughput costs that matter in production deployments.

- Backbone dependency is a real risk. Swap the underlying model and your evaluation conclusions may flip entirely.

Source: Anatomy of Agentic Memory: Taxonomy and Empirical Analysis of Evaluation and System Limitations

04 Efficiency Inverse Distillation Enters Discrete Space for Diffusion LMs

Diffusion models show strong text generation quality, but multi-step sampling makes inference far slower than autoregressive models. That's a deployment blocker. IDLM ports inverse distillation — already proven in continuous diffusion — to discrete text domains. The port is non-trivial. Discrete spaces break backpropagation stability, and the inverse distillation objective lacks a uniqueness guarantee, risking convergence to suboptimal solutions.

The authors provide a theoretical proof of solution uniqueness and introduce a gradient-stable relaxation method. The result: 4–64x step compression across multiple diffusion language models with generation quality preserved. This class of work determines whether diffusion LMs leave the lab. Generation quality means nothing if inference cost stays prohibitive.
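To put the compression range in concrete terms, here is the arithmetic under two assumptions that are not from the paper: a teacher sampler with 256 denoising steps (a made-up figure) and one model forward pass per step, so that sampling cost scales roughly with step count.

```python
def compressed_steps(teacher_steps: int, factor: int) -> int:
    """Sampling steps left after compressing by `factor` (rounded up, at least 1)."""
    return max(1, -(-teacher_steps // factor))


TEACHER_STEPS = 256  # hypothetical; the digest does not give the original step counts

for factor in (4, 64):
    steps = compressed_steps(TEACHER_STEPS, factor)
    print(f"{factor}x compression: {TEACHER_STEPS} -> {steps} forward passes per sample")
```

Under these assumptions the low end of the range leaves 64 forward passes per sample and the high end leaves 4.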

Key takeaways:

- Multi-step sampling is the core barrier to deploying diffusion language models in production.

- Moving inverse distillation from continuous to discrete domains requires solving uniqueness and gradient stability, two problems absent in the continuous case.

- 4–64x step compression is a necessary step toward making diffusion LMs viable for real-world use.

Source: IDLM: Inverse-distilled Diffusion Language Models

Also Worth Noting

Today's Observation

We default to treating large models as black boxes: feed input, take output, ignore the middle. But the "byproducts" of generation — probability distribution shapes, internal state representations — carry exploitable information that mostly gets discarded.

TOPReward is a direct example. VLM token probability distributions encode task-progress information. Use them as rewards, skip the dedicated reward model, and zero-shot correlation hits 0.947 on 130+ real robot tasks. K-Search exploits internal signals from another angle: the LLM builds a world model of kernel behavior, using that internal understanding to guide search rather than mutating blindly. Neither work introduces external supervision — no hand-labeled reward functions, no manually designed benchmarks. Both extract usable structure from knowledge the model already accumulated during pretraining.

The logic is intuitive. A model trained on massive data encodes far more than "given input, predict output." Distribution shapes, intermediate activations, relative confidence across tokens — all carry the model's understanding of the world. Standard pipelines use only the final output layer.
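Two of the signals mentioned here, distribution shape and relative confidence across tokens, take only a few lines to compute from a logit vector. The sketch below uses entropy for shape and the gap between the two most likely tokens for confidence. Both are generic measures picked for illustration, not something either paper prescribes, and the logits are invented.

```python
import math
from typing import List


def softmax(logits: List[float]) -> List[float]:
    """Numerically stable softmax over one logit vector."""
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def entropy_bits(probs: List[float]) -> float:
    """Shannon entropy in bits: high when the model is spread across many tokens."""
    return -sum(p * math.log2(p) for p in probs if p > 0.0)


def top2_margin(probs: List[float]) -> float:
    """Gap between the two most likely tokens: small when the model is torn."""
    first, second = sorted(probs, reverse=True)[:2]
    return first - second


uncertain = softmax([0.0, 0.0, 0.0, 0.0])  # hypothetical: no preference at all
confident = softmax([6.0, 0.0, 0.0, 0.0])  # hypothetical: one clear winner

print(entropy_bits(uncertain), top2_margin(uncertain))
print(entropy_bits(confident), top2_margin(confident))
```

A pipeline that keeps only the argmax token treats these two cases identically, which is the information loss the paragraph above describes.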

As the marginal cost of external annotation rises and pretrained model capability grows, mining internal signals becomes increasingly cost-effective. If you're building a system that needs reward signals or evaluation functions, check what your foundation model already knows internally. It may be cheaper and more generalizable than training a dedicated module from scratch.