Today's Overview

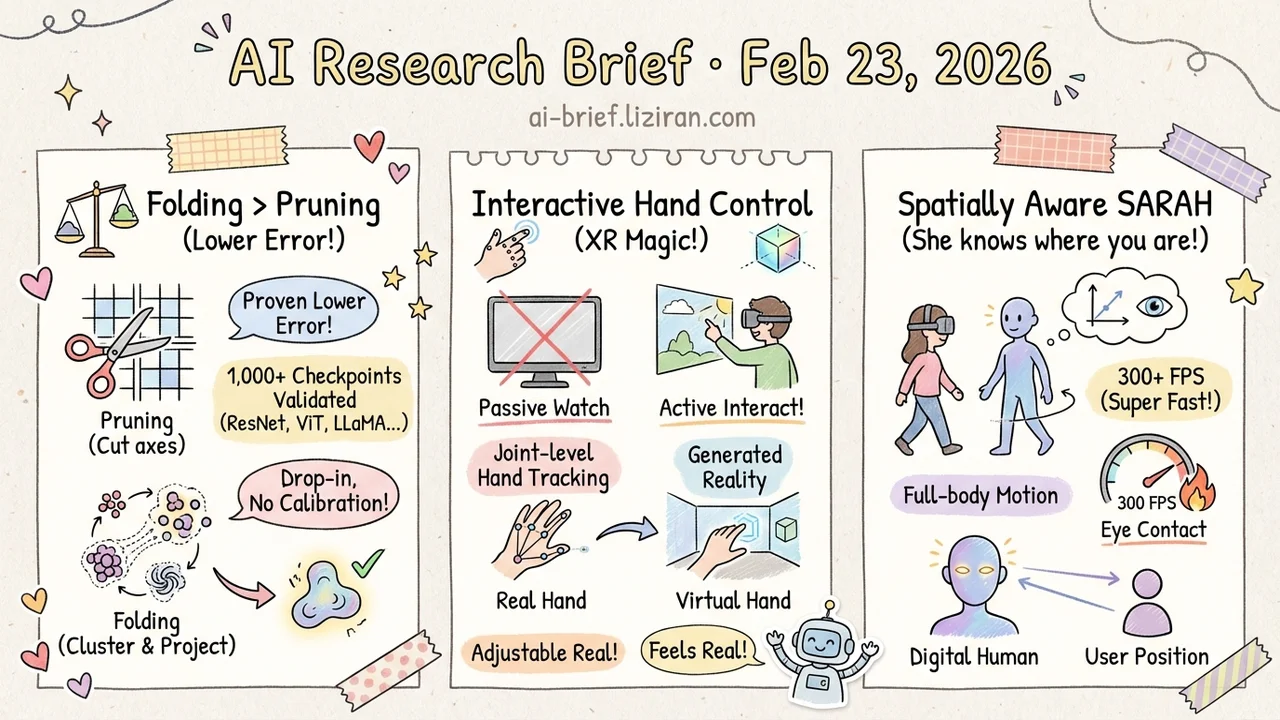

- Weight folding outperforms pruning at moderate-to-high compression rates. An ICLR 2026 paper proves that folding yields lower reconstruction error and validates the result across 1,000+ checkpoints.

- Video generation models can now track your fingers. Joint-level hand control makes XR scenes interactive, not just watchable.

- VR conversational agents finally know where you're standing. SARAH generates spatially aware full-body motion at 300+ FPS for streaming VR deployment.

Featured

01 Efficiency Why Folding Beats Pruning for Model Compression

Structured pruning is the default move for deploying large models. Cut unimportant channels or layers, get a smaller, faster model. But pruning is an axis-aligned projection — it zeros out entire dimensions. Folding takes a different geometric path: cluster similar weights and project onto a low-rank subspace. The authors prove that within a rank distance of one, folding produces strictly smaller reconstruction error than pruning.

The empirical validation is thorough: over 1,000 checkpoints spanning ResNet, ViT, CLIP, and LLaMA-family models. Folding wins at moderate-to-high compression rates across the board. Pruning only catches up under specific training configurations. No calibration data required — folding works as a drop-in replacement for existing pruning pipelines.
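
The geometric contrast between the two projections can be sketched in a few lines of pure Python. This is a toy illustration under stated assumptions, not the paper's implementation: rows of a small random matrix stand in for channels, pruning keeps the largest-norm rows, and folding is plain Lloyd-style k-means that replaces each row with its cluster mean. The helper names (`prune_rows`, `fold_rows`, `recon_error`) are hypothetical, and so is the choice to seed k-means with the kept rows plus one zero centroid. With that seeding, folding starts from the pruned solution with one extra cluster, and each Lloyd step can only lower the error from there.

```python
import random

def sq_dist(a, b):
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def recon_error(W, W_hat):
    """Squared Frobenius error between two matrices stored as row lists."""
    return sum(sq_dist(r, s) for r, s in zip(W, W_hat))

def prune_rows(W, k):
    """Axis-aligned projection: keep the k largest-norm rows, zero the rest."""
    zero = [0.0] * len(W[0])
    order = sorted(range(len(W)), key=lambda i: sq_dist(W[i], zero))
    keep = set(order[-k:])
    pruned = [list(W[i]) if i in keep else list(zero) for i in range(len(W))]
    return pruned, sorted(keep)

def fold_rows(W, init_centroids, n_iter=20):
    """Lloyd-style k-means: every row is replaced by its cluster mean."""
    centroids = [list(c) for c in init_centroids]

    def assign():
        # Index of the nearest centroid for every row.
        return [min(range(len(centroids)),
                    key=lambda c: sq_dist(row, centroids[c]))
                for row in W]

    for _ in range(n_iter):
        labels = assign()
        for c in range(len(centroids)):
            members = [W[i] for i, lab in enumerate(labels) if lab == c]
            if members:  # an empty cluster keeps its previous centroid
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    labels = assign()
    return [list(centroids[lab]) for lab in labels], labels

# Toy data (assumption): 24 "channels" of width 8, fixed seed for repeatability.
rng = random.Random(0)
W = [[rng.gauss(0.0, 1.0) for _ in range(8)] for _ in range(24)]

k = 6
W_pruned, keep = prune_rows(W, k)

# Seed folding with the kept rows plus a zero centroid (k + 1 clusters),
# which reproduces the pruned matrix before any refinement.
init = [W[i] for i in keep] + [[0.0] * 8]
W_folded, labels = fold_rows(W, init)

print("pruning error:", recon_error(W, W_pruned))
print("folding error:", recon_error(W, W_folded))
```

In this sketch the folded matrix keeps the original shape so the two errors are directly comparable. A deployed folded layer would store only the cluster means and merge the matching inputs of the next layer.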

Key takeaways:

- Pruning projects along coordinate axes; folding projects onto low-rank subspaces with provably lower error
- 1,000+ checkpoint evaluation confirms folding dominates at moderate-to-high compression
- Calibration-free and drop-in compatible, so worth adding to your compression baseline comparisons

Source: Cut Less, Fold More: Model Compression through the Lens of Projection Geometry

02 Multimodal Video World Models Can Finally Track Your Hands

XR has a specific demand that most video generation research ignores: the model must respond to tracked body motion in real time. Current video world models accept text or keyboard input at best. That's nowhere near "reach out and touch a virtual object."

Generated Reality conditions a diffusion transformer on both 6DoF head pose and joint-level hand poses. A bidirectional video diffusion teacher is trained with this conditioning, then distilled into a causal, streaming system that generates first-person virtual environments. Human subjects reported significantly higher perceived control compared to baselines. Video generation is shifting from passive viewing to active manipulation — directly relevant for teams building XR products.
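
The difference between a bidirectional teacher and a causal, streaming student can be pictured as an attention mask over frames. The summary above does not say how Generated Reality enforces causality, so the sketch below only shows one common approach, a frame-level block-causal mask, under that assumption. The function name `frame_mask` and its parameters are hypothetical.

```python
def frame_mask(n_frames, tokens_per_frame, causal):
    """Build a boolean attention mask over video tokens.

    mask[q][k] is True when query token q may attend to key token k.
    Bidirectional: every token sees every frame.
    Causal (assumed block-causal): a token sees its own frame and earlier ones.
    """
    n_tokens = n_frames * tokens_per_frame
    mask = []
    for q in range(n_tokens):
        q_frame = q // tokens_per_frame
        row = []
        for k in range(n_tokens):
            k_frame = k // tokens_per_frame
            row.append(k_frame <= q_frame if causal else True)
        mask.append(row)
    return mask

# Three frames with two tokens each.
teacher_mask = frame_mask(3, 2, causal=False)  # sees past and future frames
student_mask = frame_mask(3, 2, causal=True)   # sees only current and past frames

for row in student_mask:
    print("".join("x" if allowed else "." for allowed in row))
```

A mask like the causal one is what allows frame-by-frame generation: a new frame can be produced as soon as the latest head and hand poses arrive, without waiting for future frames.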

Key takeaways:

- First video world model conditioned on both head pose and joint-level hand articulation
- Bidirectional-to-causal distillation enables streaming interactive generation
- XR video generation is moving from "watch" to "interact"

03 Multimodal Your VR Avatar Finally Looks at You

The most common failure in conversational digital humans isn't lip sync or gesture timing. It's that the avatar doesn't look at you. Walk to its side, and it keeps gesturing toward empty space. SARAH fixes this spatial awareness gap.

Given the user's position and dyadic audio, SARAH generates full-body motion including orientation, gaze, and gestures. The architecture combines a causal transformer VAE with flow matching conditioned on user trajectory and audio. Eye contact intensity is adjustable at inference time through classifier-free guidance — no retraining needed. On Embody 3D, it hits state-of-the-art motion quality at 300+ FPS, 3x faster than non-causal baselines. Already validated on a live VR system. Spatial awareness is what separates a digital human that feels like an animation from one that feels like a presence.
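
To see how a single inference-time knob can adjust eye contact without retraining, here is a minimal sketch of classifier-free guidance applied to a flow-matching velocity field. It assumes the standard guidance blend of an unconditional and a conditional prediction; the summary above does not give SARAH's exact formulation. The two toy velocity functions are hypothetical stand-ins for a trained model, and all function names are illustrative.

```python
def guided_velocity(v_uncond, v_cond, scale):
    """Standard classifier-free guidance blend of two velocity predictions.

    scale = 0 gives the unconditional prediction, scale = 1 the conditional
    one, and scale > 1 extrapolates further toward the condition.
    """
    return [u + scale * (c - u) for u, c in zip(v_uncond, v_cond)]

def euler_sample(x0, velocity_fn, n_steps=10):
    """Integrate dx/dt = velocity_fn(x, t) from t = 0 to t = 1 with Euler steps."""
    x = list(x0)
    dt = 1.0 / n_steps
    for step in range(n_steps):
        v = velocity_fn(x, step * dt)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x

# Hypothetical stand-ins for a trained network: constant velocity fields.
def toy_uncond(x, t):
    return [0.0 for _ in x]

def toy_cond(x, t):
    return [1.0 for _ in x]

def sample_with_scale(scale):
    """Run the sampler with a given guidance scale, no retraining involved."""
    return euler_sample(
        [0.0, 0.0],
        lambda x, t: guided_velocity(toy_uncond(x, t), toy_cond(x, t), scale),
    )

for scale in (0.0, 1.0, 2.0):
    print(scale, sample_with_scale(scale))
```

In this toy setup the guidance scale moves the sample linearly between and beyond the two predictions. In a real system the same scalar would be exposed as a runtime setting for how strongly the generated motion follows the gaze condition.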

Key takeaways:

- First real-time causal method for spatially aware conversational motion, 300+ FPS
- Eye contact intensity tunable at inference without retraining
- Spatial awareness is the missing capability that makes digital humans feel present