Today's Overview

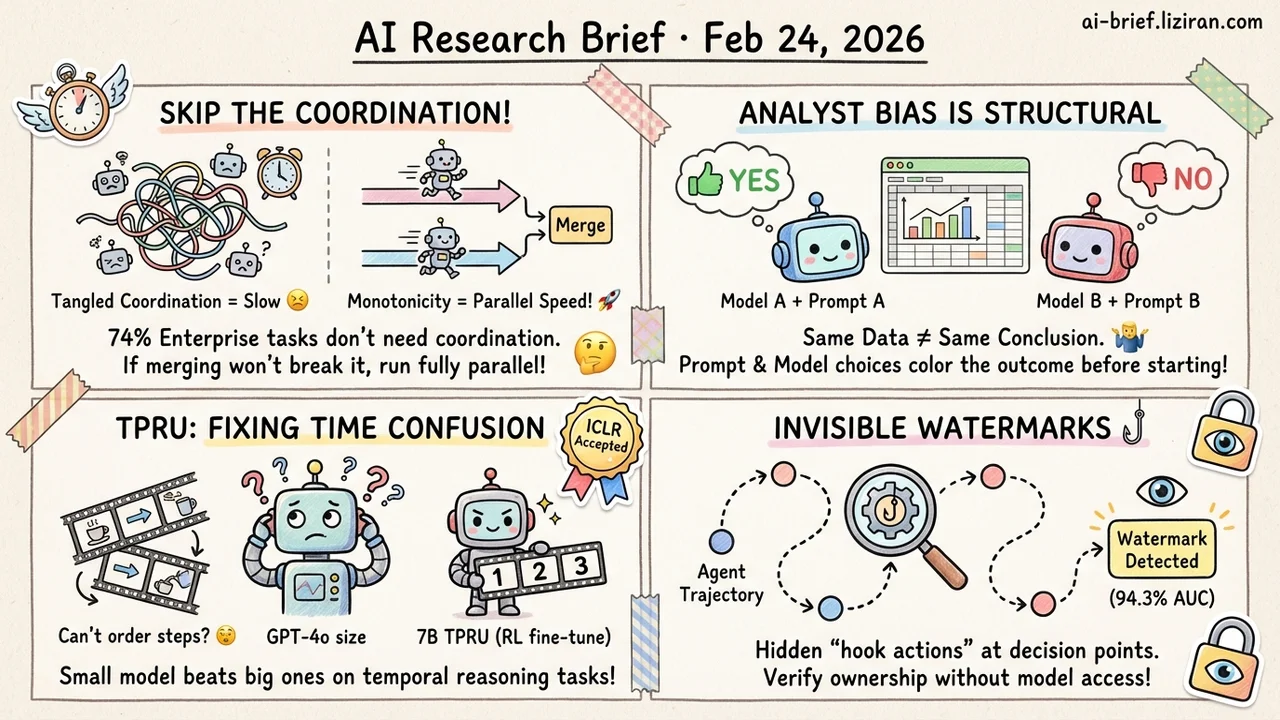

- 74% of 65 analyzed enterprise workflows don't need inter-agent coordination. Monotonicity analysis provides a formal test: if new information can only add to conclusions and never overturn them, the task can run fully in parallel and merge at the end, with no orchestration overhead.

- Multiple AI analysts examining the same data frequently reach contradictory conclusions. The disagreement isn't noise. It's structural. Prompt wording and model choice color the outcome before analysis even begins.

- Multimodal models understand video content fine, but accuracy drops sharply on step ordering. TPRU uses RL fine-tuning on a 7B model to beat GPT-4o on temporal reasoning tasks. Accepted at ICLR.

- Agent trajectory data now has a black-box watermarking scheme. Hidden hook actions embedded at decision points achieve 94.3 detection AUC without requiring access to model weights.

Featured

01 Agent Half Your Multi-Agent Orchestration Might Be Unnecessary

Coordination overhead in multi-agent systems often costs more than the task itself. That's not hyperbole; it's measurable. The real question isn't "how to coordinate better" but "does this task need coordination at all?"

This paper borrows a precise criterion from distributed systems theory: monotonicity. A task is monotone when new information only supplements conclusions, never overturns them. Monotone tasks let agents work independently and merge results at the end. No intermediate synchronization needed. The team analyzed 65 enterprise workflows and found 74% satisfy the monotonicity condition. On 13,417 occupational tasks from the O*NET database, 42% qualified.

That translates to 24–57% of coordination overhead that is unnecessary for correctness. Every team building multi-agent pipelines should audit their orchestration layer. State synchronization, sequential dependencies, intermediate checkpoints: how many can you just delete?
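One way to picture the monotonicity test is a brute-force check on a small sample of inputs. This is a minimal sketch, not the paper's procedure: `is_monotone`, `flag_overdue`, `pick_cheapest`, and the sample data are all hypothetical names and values chosen for illustration. A task is modeled as a function from a set of facts to a set of conclusions, and it passes if adding facts never removes a conclusion.

```python
from itertools import combinations

def is_monotone(task, facts):
    """Brute-force check: for every S subset of T, task(S) must be a subset of task(T)."""
    facts = list(facts)
    subsets = [frozenset(c)
               for r in range(len(facts) + 1)
               for c in combinations(facts, r)]
    return all(task(s) <= task(t)
               for s in subsets for t in subsets if s <= t)

def flag_overdue(invoices):
    """Hypothetical monotone task: each invoice is judged on its own."""
    return frozenset(inv_id for inv_id, days_late in invoices if days_late > 30)

def pick_cheapest(quotes):
    """Hypothetical non-monotone task: a new quote can overturn the winner."""
    if not quotes:
        return frozenset()
    vendor, _ = min(quotes, key=lambda q: q[1])
    return frozenset({vendor})

# Illustrative sample data (made up for this sketch).
INVOICES = frozenset({("inv-1", 45), ("inv-2", 10), ("inv-3", 60)})
QUOTES = frozenset({("vendor-a", 10), ("vendor-b", 5)})

print(is_monotone(flag_overdue, INVOICES))   # monotone: safe to split across agents
print(is_monotone(pick_cheapest, QUOTES))    # not monotone: needs a final comparison

# For this per-item task, merging shard results by union matches the full run.
shard_a = frozenset({("inv-1", 45)})
shard_b = INVOICES - shard_a
print(flag_overdue(shard_a) | flag_overdue(shard_b) == flag_overdue(INVOICES))
```

The exhaustive subset check grows exponentially, so it only suits tiny samples. The union-of-shards equality holds here because each invoice is judged independently; a monotone task whose conclusions depend on several facts jointly would need a different merge.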

Key takeaways:

- Monotonicity is a formal criterion for whether a task needs coordination. If new information can't overturn old conclusions, parallelize fully.
- 74% of tested enterprise workflows satisfy the monotonicity condition. Most orchestration layers carry dead weight.
- Before optimizing your coordination protocol, test whether you need one at all. Eliminating unnecessary orchestration saves more than improving it.

Source: When Coordination Is Avoidable: A Monotonicity Analysis of Organizational Tasks

02 Evaluation Same Data, Different AI Analysts, Opposite Conclusions

Give different LLMs the same dataset and the same hypothesis to test. The results are as unsettling as human "many-analysts" studies: effect sizes, p-values, and even binary "does the data support the hypothesis?" judgments scatter widely. Completely opposite conclusions appear regularly.

The disagreement is structural, not random. Implicit decisions at each step — variable preprocessing, model selection, inference approach — create systematic forks across analysis paths. Worse, these forks are steerable. Swap the persona prompt or the underlying model and the distribution of conclusions shifts wholesale. This holds even after filtering out methodologically unsound analyses.

For teams using AI agents for data-driven decisions, a single "let AI analyze this" run arrives pre-colored by prompt wording and model choice. The bias is real but invisible from the output alone.
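To make the spread visible rather than hidden behind one run, the outputs of several prompt and model configurations can be summarized side by side. The sketch below is an assumption about how a team might do this, not the paper's protocol: `summarize_runs`, the configuration labels, and every number in `RUNS` are placeholders invented for illustration.

```python
from collections import Counter
from statistics import median

def summarize_runs(runs):
    """Summarize effect sizes and verdicts across analysis configurations.

    Each run is a dict with 'config' (label), 'effect' (float),
    and 'supports' (bool: does this run say the data supports the hypothesis?).
    """
    effects = [r["effect"] for r in runs]
    verdicts = Counter(r["supports"] for r in runs)
    return {
        "n_runs": len(runs),
        "effect_min": min(effects),
        "effect_median": median(effects),
        "effect_max": max(effects),
        # True when some runs find a positive effect and others a negative one.
        "sign_flip": min(effects) < 0 < max(effects),
        "share_supporting": verdicts[True] / len(runs),
    }

# Placeholder results, invented for this sketch (not from the paper).
RUNS = [
    {"config": "model-A / neutral persona", "effect": 0.31, "supports": True},
    {"config": "model-A / skeptic persona", "effect": -0.05, "supports": False},
    {"config": "model-B / neutral persona", "effect": 0.12, "supports": True},
    {"config": "model-B / skeptic persona", "effect": -0.20, "supports": False},
]

summary = summarize_runs(RUNS)
for key, value in summary.items():
    print(f"{key}: {value}")
```

A `sign_flip` of `True` or a `share_supporting` far from 0 or 1 is a signal that the conclusion depends on configuration and should not be reported as a single answer.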

Key takeaways:

- AI analyst disagreement is structural, driven by implicit decisions along the analysis path rather than random error.
- Changing the prompt or the model shifts conclusions directionally. A single analysis run shouldn't be taken at face value.
- Treat "analyst degrees of freedom" as a known risk. Run multiple configurations and cross-validate before acting on results.

Source: Many AI Analysts, One Dataset: Navigating the Agentic Data Science Multiverse

03 Robotics Multimodal Models See Video, but Can't Follow the Steps

Multimodal models handle static frames well enough. Show them a robot manipulation video and ask "pick up the cup, then open the cabinet, then place it inside" — accuracy drops hard. This capability gap blocks embodied AI deployment. Robot manipulation and GUI navigation both demand understanding action sequences and causal relationships.

TPRU tackles this with a large-scale temporal reasoning dataset covering robot manipulation, daily activities, and more. Three task types: temporal reordering, next-frame prediction, and previous-frame recall. The key design choice: high-quality negative samples that force models from passive viewing into active verification. After RL fine-tuning, a 7B model jumps from 50% to 76% accuracy, surpassing GPT-4o and other much larger models. The gains generalize to external benchmarks.
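As a rough illustration of the temporal reordering task type, the sketch below builds one item from an ordered list of frame identifiers and scores a prediction with an exact-match reward. Everything here is hypothetical: `make_reordering_item`, `near_miss`, and `reward` are invented names, and the adjacent-swap negative is only one guess at what a hard negative could look like. It does not reproduce TPRU's data pipeline or reward design.

```python
import random

def make_reordering_item(frames, rng):
    """Shuffle distinct frame ids; 'answer' lists shuffled positions in true order."""
    shuffled = frames[:]
    # Reshuffle until the presented order differs from the true order.
    while shuffled == frames and len(frames) > 1:
        rng.shuffle(shuffled)
    answer = [shuffled.index(f) for f in frames]
    return {"shuffled": shuffled, "answer": answer}

def near_miss(answer, rng):
    """Hypothetical hard negative: swap two adjacent steps of the correct order."""
    i = rng.randrange(len(answer) - 1)
    wrong = answer[:]
    wrong[i], wrong[i + 1] = wrong[i + 1], wrong[i]
    return wrong

def reward(predicted, answer):
    """Exact-match reward: 1.0 only if the whole sequence is right."""
    return 1.0 if predicted == answer else 0.0

rng = random.Random(0)
FRAMES = ["grasp_cup", "open_cabinet", "place_cup", "close_cabinet"]
item = make_reordering_item(FRAMES, rng)
negative = near_miss(item["answer"], rng)

print(item["shuffled"])
print(reward(item["answer"], item["answer"]))  # correct ordering
print(reward(negative, item["answer"]))        # near-miss ordering
```

A near-miss negative differs from the correct answer by a single swap, so a model cannot score well by recognizing the scene alone; it has to verify the order of each step.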

Key takeaways:

- Temporal and procedural understanding is the core bottleneck for multimodal models in embodied settings.
- TPRU's RL-fine-tuned 7B model beats GPT-4o on temporal tasks. Data quality and task design matter more than parameter count.
- The paper is accepted at ICLR and the method is open-sourced. Teams working on robotics or embodied intelligence can build on it directly.

Source: TPRU: Advancing Temporal and Procedural Understanding in Large Multimodal Models

04 Safety Copyright Protection for Agent Trajectory Data

A high-quality agent trajectory encodes task design, strong-model generation, and human curation. The cost isn't trivial. Yet these datasets have almost no copyright protection today. They get copied, distilled, and reused with no way to trace origin.

ActHook borrows from software engineering's hook mechanism. It inserts covert "hook actions" at agent decision points, activated only by a specific key. Normal task execution stays unaffected. An agent trained on watermarked data produces these hook actions at detectably higher rates, enabling black-box verification. No model weight access required — just observe output behavior. Across math reasoning, web search, and software engineering agent tasks, detection AUC hits 94.3 with near-zero performance degradation.
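The black-box verification idea can be sketched as a rate comparison: count how often hook actions appear in an agent's output trajectories, then measure how well that rate separates agents trained on watermarked data from clean ones. This is a simplified sketch under stated assumptions, not ActHook's detector: `hook_rate`, `auc`, the action names, and the toy trajectories are hypothetical, and the key-based activation described above is left out.

```python
def hook_rate(trajectories, hook_actions):
    """Fraction of all observed actions that are hook actions."""
    total = sum(len(t) for t in trajectories)
    hits = sum(action in hook_actions for t in trajectories for action in t)
    return hits / total if total else 0.0

def auc(pos_scores, neg_scores):
    """Probability that a positive outscores a negative (ties count half)."""
    wins = sum((p > n) + 0.5 * (p == n)
               for p in pos_scores for n in neg_scores)
    return wins / (len(pos_scores) * len(neg_scores))

# Hypothetical hook action and toy trajectories, invented for this sketch.
HOOKS = {"recheck_cache"}
suspect_agent = [["search", "recheck_cache", "answer"], ["search", "answer"]]
clean_agent = [["search", "answer"], ["search", "open_page", "answer"]]

print(hook_rate(suspect_agent, HOOKS))
print(hook_rate(clean_agent, HOOKS))

# One hook rate per agent: watermarked-trained vs. clean (illustrative values).
print(auc([0.3, 0.2], [0.1, 0.2]))
```

Only observed actions are needed, which is what makes the check black-box. A real detector would also need a statistical test for a single suspect model, since AUC describes separation across many models rather than a verdict on one.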

Key takeaways:

- The production cost of high-quality agent trajectory data makes copyright protection inevitable.
- ActHook embeds covert hook actions for black-box watermark detection, independent of model weight access.
- Teams building agent datasets should track the maturation of these protection mechanisms.

Source: Watermarking LLM Agent Trajectories

Today's Observation

Imagine you're designing a multi-agent data analysis system. Instinct says: add a coordination layer so agents share intermediate results, align their analytical approach, then output a consensus. Two papers from today dismantle two reasonable-sounding defaults in that design.

The coordination-avoidability paper says: if each agent's sub-analysis is monotone — new data supplements but never overturns — the coordination layer is pure waste. Run independently, merge at the end, same result.

The analyst-multiverse paper says: even after agents analyze independently, you can't simply take the consensus. Conclusion variance isn't noise. It's a systematic product of implicit decisions along each analysis path. Majority voting doesn't eliminate structural bias.

Stack these two findings and a concrete engineering lesson emerges. "Coordination" and "aggregation" are independent design decisions. Don't bundle them. Many systems default to a linear "coordinate → consensus → output" pipeline. A better approach: use the monotonicity criterion to identify which subtasks need no coordination at all, then cut the orchestration cost. For results that do need aggregation, don't extract consensus. Preserve disagreement. Surface the full spread of analysis paths to decision-makers, letting humans judge which divergences are methodological noise and which reflect genuine uncertainty. Add trajectory watermarking for data provenance, and each analysis path gains an anchor for source and credibility.

Action item: Audit your multi-agent pipeline. Separate the coordination layer from the aggregation layer. For monotone subtasks, delete the coordination. For aggregation, replace "extract consensus" with "present the disagreement distribution." Let downstream decision-makers see the full spectrum of conclusions, not a single potentially misleading point estimate.
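The separation of the two layers can be sketched as follows. This is one possible shape under stated assumptions, not a reference design: `run_pipeline`, `disagreement_distribution`, `fake_agent`, and the `monotone` flag on each subtask are hypothetical, and the flag is assumed to come from a prior monotonicity audit.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor

def run_pipeline(subtasks, run_agent):
    """Run monotone subtasks in parallel; run the rest in sequence with context."""
    independent = [s for s in subtasks if s["monotone"]]
    coordinated = [s for s in subtasks if not s["monotone"]]
    with ThreadPoolExecutor() as pool:
        # No synchronization between these agents; results merge at the end.
        results = list(pool.map(run_agent, independent))
    for subtask in coordinated:
        # Coordination is kept only where it is needed: each agent sees prior results.
        results.append(run_agent(subtask, tuple(results)))
    return results

def disagreement_distribution(conclusions):
    """Aggregation layer: report the share of each conclusion, not a single winner."""
    counts = Counter(conclusions)
    total = sum(counts.values())
    return {conclusion: n / total for conclusion, n in counts.items()}

def fake_agent(subtask, context=()):
    """Stand-in for a real agent call; returns the name and how much context it saw."""
    return (subtask["name"], len(context))

SUBTASKS = [
    {"name": "clean_data", "monotone": True},
    {"name": "flag_outliers", "monotone": True},
    {"name": "pick_final_model", "monotone": False},
]

results = run_pipeline(SUBTASKS, fake_agent)
print(results)
print(disagreement_distribution(["supports", "rejects", "supports", "supports"]))
```

Keeping `run_pipeline` and `disagreement_distribution` as separate functions mirrors the point above: whether agents coordinate while working and how their conclusions are presented afterward are independent choices.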