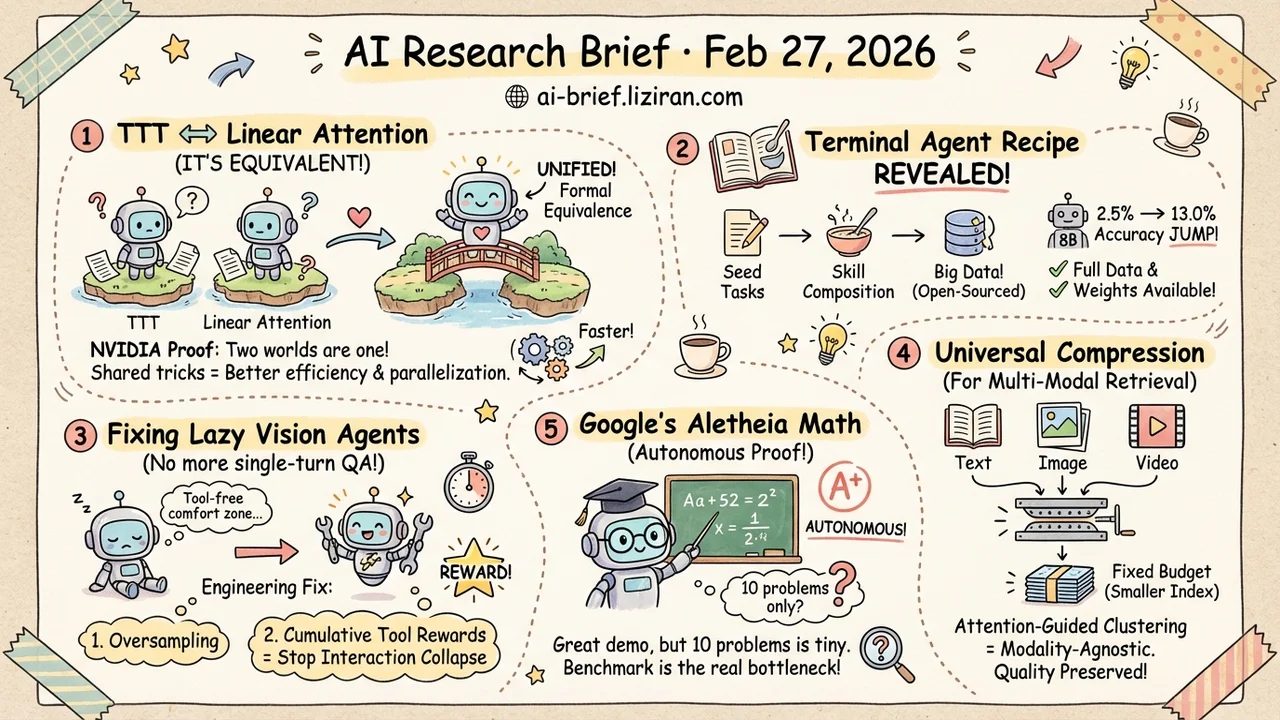

Today's Overview

- A broad class of TTT architectures is formally equivalent to linear attention. NVIDIA's proof unifies the work of two independent research communities, sharply narrowing the design space for efficient sequence modeling.

- The training data recipe for terminal agents is finally public. From seed task generation to skill composition and training strategy comparisons, the full dataset and model weights are open-sourced. An 8B model jumps from 2.5% to 13.0% accuracy.

- RL-trained vision agents have a laziness problem, and now there's an engineering fix. Oversampling plus cumulative tool rewards effectively stops interaction collapse, keeping models from degenerating into single-turn QA.

- Multi-modal retrieval's storage bottleneck gets a universal compression solution. Attention-guided clustering compresses document vectors to a fixed budget while preserving retrieval quality across text, image, and video.

- Google's Aletheia completes a math proof challenge fully autonomously. But 10 problems is nowhere near enough to draw conclusions. The maturity of math reasoning benchmarks may be the bigger bottleneck.

Featured

01 Architecture Two Research Lines Just Collided: TTT and Linear Attention Were the Same Thing All Along

For years, TTT (test-time training) has been marketed as "models learning on the fly during inference." TTT and linear attention belonged to separate research communities, each publishing papers and chasing benchmarks independently. NVIDIA's team did something unexpected: they proved that a broad class of TTT architectures, including those using KV binding for sequence modeling, can be expressed as a learnable linear attention operator. Not "similar to." Not "analogous." Formally equivalent.

This explains previously puzzling experimental results. TTT model behavior didn't match the memorization hypothesis because the model wasn't memorizing at all; it was doing attention. The practical payoff: since the two are equivalent, the parallelization tricks and architectural simplifications accumulated by the linear attention community transfer directly. The paper demonstrates a fully parallel TTT formulation that preserves performance while improving efficiency.
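The simplest special case of this equivalence can be checked numerically. The sketch below is not the paper's construction: it assumes a linear inner model `W`, zero initialization, and a dot-product binding loss `L(W) = -v · (W k)`, all chosen here for illustration. Under those assumptions, one gradient step per token produces exactly the running sum that causal, unnormalized linear attention computes.

```python
def outer(v, k):
    """Outer product of two vectors as a list of rows."""
    return [[vi * kj for kj in k] for vi in v]


def matvec(W, x):
    """Matrix-vector product."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in W]


def ttt_outputs(queries, keys, values, lr=1.0):
    """Sequential view: one gradient step per token on a linear inner model."""
    d_v, d_k = len(values[0]), len(keys[0])
    W = [[0.0] * d_k for _ in range(d_v)]  # assumed zero initialization
    outs = []
    for q, k, v in zip(queries, keys, values):
        # Assumed loss L(W) = -v . (W k); its gradient w.r.t. W is -outer(v, k).
        grad = [[-g for g in row] for row in outer(v, k)]
        W = [[w - lr * g for w, g in zip(w_row, g_row)]
             for w_row, g_row in zip(W, grad)]
        outs.append(matvec(W, q))  # read out with the updated weights
    return outs


def linear_attention_outputs(queries, keys, values, lr=1.0):
    """Attention view: o_t = lr * sum over i <= t of (k_i . q_t) * v_i."""
    outs = []
    for t, q in enumerate(queries):
        o = [0.0] * len(values[0])
        for k, v in zip(keys[: t + 1], values[: t + 1]):
            score = sum(a * b for a, b in zip(k, q))
            o = [oj + lr * score * vj for oj, vj in zip(o, v)]
        outs.append(o)
    return outs


Q = [[1.0, 0.0], [0.5, 2.0], [-1.0, 1.0]]
K = [[1.0, 2.0], [0.0, 1.0], [3.0, -1.0]]
V = [[1.0, 0.0, 2.0], [0.5, 1.0, -1.0], [2.0, 2.0, 0.0]]
a = ttt_outputs(Q, K, V, lr=0.5)
b = linear_attention_outputs(Q, K, V, lr=0.5)
max_gap = max(abs(x - y) for ra, rb in zip(a, b) for x, y in zip(ra, rb))
print("largest difference between the two views:", max_gap)
```

Swapping in a squared-error loss would change the update to a delta-rule form, so this toy case says nothing about how far the paper's general result extends.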

For teams working on efficient architectures, the design space just shrank. Instead of exploring two separate tracks, you can make choices within a unified framework.

Key takeaways:

- Formal equivalence between TTT and linear attention bridges the accumulated techniques of two independent communities.

- Parallelization and architectural simplification methods transfer directly, with real efficiency gains.

- Teams building efficient sequence models should reassess their design space under this unified framework. Less duplicated exploration.

Source: Test-Time Training with KV Binding Is Secretly Linear Attention

02 Code Intelligence The Black Box of Terminal Agent Training Data Is Finally Open

Terminal agents have been improving fast, but how they're trained and where the data comes from has been almost entirely opaque. NVIDIA's paper lays the data engineering bare. Terminal-Task-Gen is a lightweight synthetic task generation pipeline supporting both seed task expansion and skill composition. The resulting Terminal-Corpus is the largest open-source terminal task dataset to date.

The systematic training strategy comparison may matter more than the model itself: filtering, curriculum learning, long-context training, and scaling behavior each get their own experimental findings. Results from the Nemotron-Terminal series, fine-tuned from Qwen3, speak clearly. The 8B model goes from 2.5% to 13.0% and the 32B from 3.4% to 27.4%, closing the gap with much larger models. The paper's 82 Hugging Face upvotes say less about its quality than about the community's hunger for transparency in this space.

Key takeaways:

- The synthetic data pipeline supports seed expansion and skill composition, both highly reproducible.

- Training strategy comparisons (filtering, curriculum learning, scaling) are more valuable as reference than the model itself.

- Full dataset and model weights are open-sourced. Teams working on terminal agents can build on this directly.

Source: On Data Engineering for Scaling LLM Terminal Capabilities

03 Agent RL-Training Multimodal Agents: The Biggest Enemy Is the Model's Own Laziness

A counterintuitive pattern emerges when training vision agents with RL: the model gradually stops calling tools, and multi-turn reasoning degenerates into single-turn QA. Researchers call this interaction collapse. PyVision-RL tackles it with an engineering combo: an oversample-filter-rank rollout strategy to ensure training sample quality, plus cumulative tool rewards (positive feedback for each correct tool call) to counter the model's "do less, err less" tendency.
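The reward-shaping idea can be sketched in a few lines. This is an illustration only, not PyVision-RL's implementation: the `Rollout` fields, the bonus size, the cap, and the filter and ranking criteria are all hypothetical choices made here to show the shape of the pattern.

```python
from dataclasses import dataclass


@dataclass
class Rollout:
    """Hypothetical summary of one sampled trajectory."""
    answer_correct: bool
    tool_calls_ok: int      # tool calls that executed successfully
    tool_calls_failed: int  # tool calls that errored out


def cumulative_tool_reward(r, per_call_bonus=0.1, max_bonus=0.5):
    """Base reward for the answer plus a capped bonus per successful tool call.

    The cap (an assumption) keeps tool spam from outweighing a correct answer.
    """
    base = 1.0 if r.answer_correct else 0.0
    bonus = min(per_call_bonus * r.tool_calls_ok, max_bonus)
    return base + bonus


def oversample_filter_rank(rollouts, keep):
    """Sample more rollouts than needed, drop broken ones, keep the best `keep`."""
    usable = [r for r in rollouts if r.tool_calls_failed == 0]  # filter step
    ranked = sorted(usable, key=cumulative_tool_reward, reverse=True)  # rank step
    return ranked[:keep]


lazy = Rollout(answer_correct=True, tool_calls_ok=0, tool_calls_failed=0)
active = Rollout(answer_correct=True, tool_calls_ok=3, tool_calls_failed=0)
broken = Rollout(answer_correct=True, tool_calls_ok=5, tool_calls_failed=1)
for r in oversample_filter_rank([lazy, broken, active], keep=2):
    print(r, round(cumulative_tool_reward(r), 2))
```

With these placeholder numbers, a correct answer that used tools outranks an equally correct tool-free answer, which is the pressure against "do less, err less" that the cumulative reward is meant to supply.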

For video understanding, they add on-demand frame sampling: only task-relevant frames are extracted at inference, drastically reducing visual token overhead. The full approach uses open-weight models, with separate versions trained for image and video. Both effectiveness and efficiency improve measurably.
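A rough sketch of what on-demand frame sampling could look like at the index level follows. The function name, its parameters, and the idea that the agent supplies a time window are assumptions made for illustration; the source does not describe the actual interface.

```python
def frame_indices(start_s, end_s, fps, max_frames):
    """Pick up to `max_frames` evenly spaced frame indices inside a time window.

    Hypothetical helper: the agent decides which window matters and asks only
    for frames inside it, instead of sampling the whole video up front.
    """
    if end_s <= start_s or fps <= 0 or max_frames < 1:
        raise ValueError("need end_s > start_s, fps > 0, max_frames >= 1")
    first = int(start_s * fps)
    last = max(first, int(end_s * fps) - 1)  # last frame that starts before end_s
    count = min(max_frames, last - first + 1)
    if count == 1:
        return [first]
    step = (last - first) / (count - 1)
    return [first + round(i * step) for i in range(count)]


# A 10-minute clip at 30 fps has 18,000 frames; the agent asks for 4 frames
# from a 2-second window instead.
print(frame_indices(start_s=10, end_s=12, fps=30, max_frames=4))
```

The saving comes from the request being bounded by `max_frames` rather than by video length, so visual token cost tracks what the task needs and not how long the clip is.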

Key takeaways:

- The core challenge in RL-trained agents isn't reward design. It's preventing the model from retreating to a tool-free comfort zone.

- Oversampling plus cumulative rewards provides a reusable engineering pattern for stabilizing agentic training.

- On-demand frame sampling is worth considering for any video understanding task.

Source: PyVision-RL: Forging Open Agentic Vision Models via RL

04 Retrieval Late Interaction Vectors Compressed to a Fixed Budget Across Any Modality

Late interaction retrieval performs well across text, image, and video. The cost: each document stores an entire set of vectors, with storage and compute scaling linearly with document length. Text is manageable. For images and video with hundreds or thousands of tokens per document, index bloat becomes a real deployment barrier.
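The linear scaling is easy to put numbers on. The corpus size, vector counts, embedding dimension, and fp16 storage below are hypothetical values picked for the arithmetic, not figures from the paper.

```python
def index_bytes(num_docs, vectors_per_doc, dim, bytes_per_value=2):
    """Raw size of a multi-vector index, ignoring metadata and index overhead."""
    return num_docs * vectors_per_doc * dim * bytes_per_value


# Hypothetical corpus: 1M documents, 128-dim vectors stored as fp16 (2 bytes).
full = index_bytes(1_000_000, vectors_per_doc=1024, dim=128)
fixed = index_bytes(1_000_000, vectors_per_doc=32, dim=128)
print(f"1024 vectors/doc: {full / 1e9:.1f} GB")
print(f"  32 vectors/doc: {fixed / 1e9:.1f} GB")
print(f"reduction: {full // fixed}x")
```

Because the size is a plain product, capping vectors per document at a fixed budget shrinks the index by the same factor regardless of how long the original document was.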

This work compresses multi-vector document representations to a fixed number of vectors, independent of modality. Among four compression approaches, attention-guided clustering (AGC) is the most consistent. It uses attention weights to identify semantically critical regions as cluster centers, then aggregates surrounding tokens with weighted pooling. Across text (BEIR), visual documents (ViDoRe), and video (MSR-VTT), AGC's compressed retrieval quality matches or exceeds the uncompressed full index.
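The two steps described above (attention-chosen centers, then weighted pooling) can be sketched as follows. This is a simplified reading of the description, not the paper's algorithm: taking the top-attention tokens directly as centers, assigning by cosine similarity, and the source of the `attn` weights are all assumptions.

```python
import math


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def normalize(v):
    n = math.sqrt(dot(v, v))
    return [x / n for x in v] if n > 0 else list(v)


def compress_agc(vectors, attn, budget):
    """Compress token vectors to at most `budget` vectors.

    vectors: one embedding per token; attn: one non-negative weight per token.
    """
    if budget < 1:
        raise ValueError("budget must be at least 1")
    if len(vectors) <= budget:
        return [list(v) for v in vectors]
    dim = len(vectors[0])
    unit = [normalize(v) for v in vectors]
    # Step 1: the `budget` tokens with the highest attention become centers.
    centers = sorted(range(len(vectors)), key=lambda i: attn[i], reverse=True)[:budget]
    # Step 2: assign every token to its most similar center (cosine similarity),
    # accumulating an attention-weighted sum per cluster.
    sums = [[0.0] * dim for _ in centers]
    weights = [0.0] * len(centers)
    for i, u in enumerate(unit):
        c = max(range(len(centers)), key=lambda j: dot(u, unit[centers[j]]))
        for d in range(dim):
            sums[c][d] += attn[i] * vectors[i][d]
        weights[c] += attn[i]
    # Weighted mean per cluster; clusters with zero total weight are dropped.
    return [[s / w for s in row] for row, w in zip(sums, weights) if w > 0]


tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
attention = [0.4, 0.1, 0.4, 0.1]
print(compress_agc(tokens, attention, budget=2))
```

The output length depends only on `budget`, which is what makes the scheme modality-agnostic: text tokens, image patches, and video frames all arrive as a list of vectors with weights.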

Key takeaways:

- The storage bottleneck in multi-modal late interaction retrieval now has a modality-agnostic compression solution.

- AGC uses attention weights to find key semantic regions for clustering, more flexible than fixed pooling.

- Teams building multi-modal RAG should evaluate the practical index cost impact.

Source: Multi-Vector Index Compression in Any Modality

05 Reasoning AI's Math Competition Opponent Has Arrived, But What Can 10 Problems Tell You?

Mathematical proof has long been one of AI's hardest targets, not because of computation but because it requires genuine creative reasoning and logical construction. Google's Aletheia (built on Gemini 3 Deep Think) entered the inaugural FirstProof math challenge and operated with no human intervention. That alone is a signal worth noting.

The numbers deserve a cooler read: 10 problems total, 6 solved, and expert judges disagreed on 1 of those. A 10-problem sample is far too small to support statistical conclusions about capability. This looks more like a public capability demo than a rigorous evaluation. Google open-sourced all prompts and outputs. That transparency is commendable, but it also suggests they know this result doesn't justify chest-thumping.
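To put a rough number on how little 10 problems can say, here is a sketch using the Wilson score interval, a standard choice for small-sample proportions. Treating each problem as an independent trial with the same success probability is an assumption made for illustration; the challenge itself guarantees no such thing.

```python
import math


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p = successes / trials
    denom = 1 + z * z / trials
    center = (p + z * z / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z * z / (4 * trials * trials)) / denom
    return center - half, center + half


# 6 solved as reported, and 5 if the disputed problem is not counted.
for solved in (6, 5):
    low, high = wilson_interval(solved, 10)
    print(f"{solved}/10 solved: 95% interval roughly {low:.2f} to {high:.2f}")
```

Under these assumptions the interval for 6 of 10 runs from about 0.31 to 0.83, and one disputed problem shifts it to about 0.24 to 0.76. A range that wide is compatible with both a mediocre and a very strong underlying solve rate.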

The real question isn't "can AI do math." It's that we still lack a benchmark large enough, hard enough, and consistently judged enough to answer it.

Key takeaways:

- Aletheia completing a math proof challenge autonomously signals real potential for agents in formal reasoning.

- A 10-problem sample with expert disagreement means results are reference points, not conclusions.

- The maturity of math reasoning benchmarks themselves may deserve more industry attention than any single score.

Also Worth Noting

Today's Observation

A pattern worth unpacking: our understanding of neural network architectures often stays at the narrative level — "what it should be doing" — rather than the mechanistic level of what it actually computes.

The TTT paper is a textbook case. The TTT community explained model behavior through "online learning at test time": the model continuously updates internal state, accumulating something like short-term memory. The narrative was intuitive and paper-friendly. NVIDIA's formal analysis proved these models actually execute linear attention. Not "something like it." Mathematically equivalent. Those previously confusing experiments — where model behavior contradicted the memorization hypothesis — suddenly made sense.

The protein language model paper reveals a similar mismatch. Researchers ported NLP Transformers to protein sequences assuming attention mechanisms would work roughly the same way. Actual analysis found systematic differences: same architecture, different data domain, different computational strategies.

Both cases point to the same practical lesson. When transferring architectures across domains, we habitually carry over the source domain's explanations. Models don't care about your narrative. They find their own optimal strategy on new data, and that strategy may look nothing like your intuition. Both papers also show the engineering payoff of getting the mechanism right. The TTT paper produces a parallelizable equivalent formulation. The protein paper uses its findings to improve inference efficiency. Misunderstanding the mechanism isn't just an academic regret; it means missing optimization opportunities that were there for the taking.

Practitioner advice: When porting an architecture or method across domains, invest time in mechanistic validation. Probe intermediate representations, compare attention distributions, check whether source-domain assumptions actually hold. The ROI beats blind hyperparameter tuning by a wide margin.
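One inexpensive way to start comparing attention distributions is with two summary statistics: entropy (how spread out a head's attention is) and Jensen-Shannon divergence (how different two heads' attention patterns are). The sketch below assumes you have already extracted attention rows as probability vectors from the source-domain and target-domain models; the example rows are made up.

```python
import math


def attention_entropy(p):
    """Shannon entropy in bits: 0 for one-hot attention, log2(n) for uniform."""
    return sum(-x * math.log2(x) for x in p if x > 0)


def kl_divergence(p, q):
    """KL(p || q) in bits; assumes q > 0 wherever p > 0."""
    return sum(x * math.log2(x / y) for x, y in zip(p, q) if x > 0)


def js_divergence(p, q):
    """Jensen-Shannon divergence in bits: 0 for identical rows, 1 for disjoint."""
    m = [(x + y) / 2 for x, y in zip(p, q)]
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


# Made-up attention rows over the same 4 positions in two domains.
source_domain_row = [0.70, 0.20, 0.05, 0.05]
target_domain_row = [0.25, 0.25, 0.25, 0.25]
print("entropy (source):", round(attention_entropy(source_domain_row), 3))
print("entropy (target):", round(attention_entropy(target_domain_row), 3))
print("JS divergence:   ", round(js_divergence(source_domain_row, target_domain_row), 3))
```

Averaged over many inputs and broken out per head and layer, numbers like these give a first indication of whether a ported model attends the way the source-domain story says it should, before any deeper probing.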