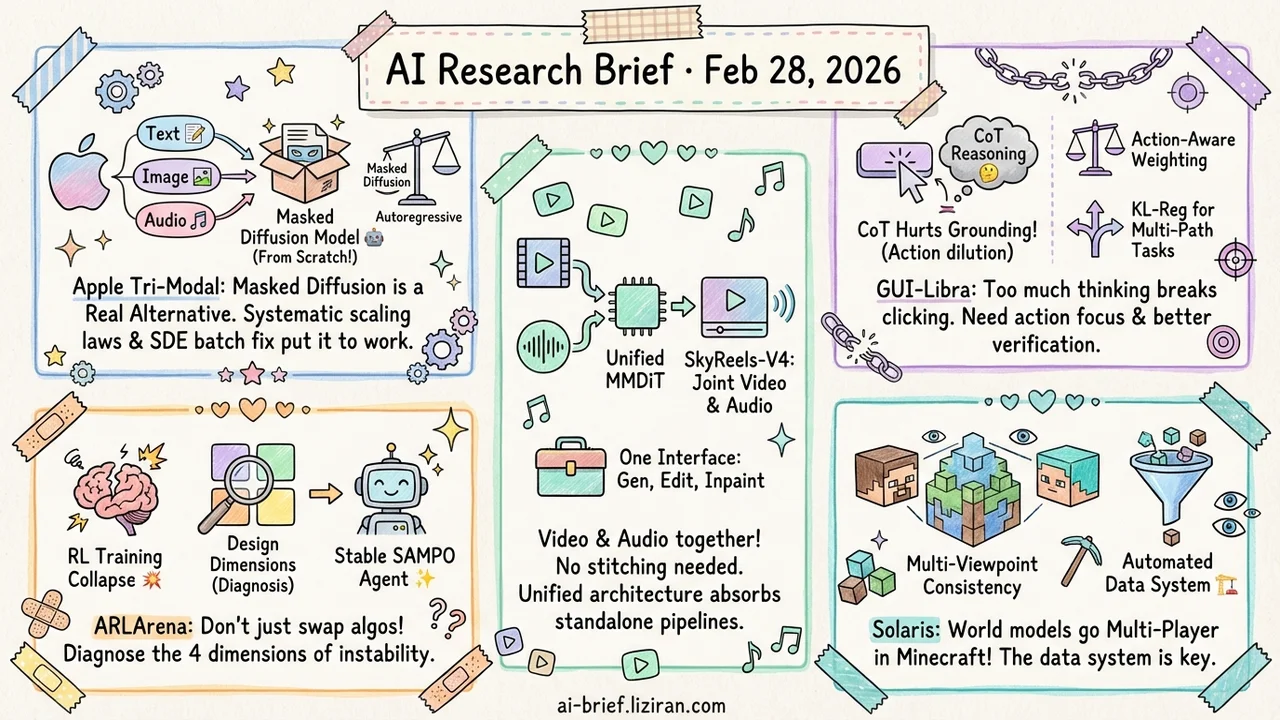

Today's Overview

- Apple trains a tri-modal masked diffusion model from scratch. Systematic testing of scaling laws, modality mixing, and noise schedules makes this directly actionable for teams working on multi-modal diffusion. Masked diffusion is emerging as a real alternative to autoregressive generation.

- Agentic RL training collapse now has a diagnostic framework. ARLArena decomposes policy gradients into four design dimensions and ablates each one to find instability sources. More effective than swapping algorithms blindly.

- SkyReels-V4 generates video and audio together via dual-stream MMDiT. Text-to-video, inpainting, and editing all collapse into a single interface. Unified architectures are absorbing standalone modality pipelines.

- Adding CoT reasoning to GUI agents actually hurts grounding. GUI-Libra identifies the root causes: action token dilution and incomplete step-level verification. Targeted fixes follow.

- World models go multi-player and multi-viewpoint. Solaris achieves consistent multi-agent simulation in Minecraft. The automated data collection system may outlast the model itself.

Featured

01 Architecture Tri-Modal Masked Diffusion: How Far Can One Model Go?

Apple trained a 3B-parameter masked diffusion model from scratch across text, image, and audio. Not fine-tuning on top of an existing language model. A full pre-training redesign. The direction itself matters: masked diffusion (generating content by progressively unmasking tokens) is becoming a viable alternative to autoregressive generation. Apple chose this path for a large-scale, systematic exploration.
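To make "progressively unmasking tokens" concrete, here is a minimal toy sketch of the sampling loop. It is not Apple's sampler: `toy_denoiser` is a hypothetical stand-in that proposes random tokens with random confidences where a trained network would go, and the even per-step reveal schedule is an assumption chosen for simplicity.

```python
import random

MASK = "<mask>"


def toy_denoiser(tokens, vocab, rng):
    """Hypothetical stand-in for a trained model.

    Returns {position: (proposed_token, confidence)} for every masked position.
    A real model would predict these from the partially unmasked sequence.
    """
    return {
        i: (rng.choice(vocab), rng.random())
        for i, tok in enumerate(tokens)
        if tok == MASK
    }


def unmask_sample(length, steps, vocab, seed=0):
    """Start fully masked, then commit the most confident proposals each step."""
    rng = random.Random(seed)
    tokens = [MASK] * length
    for step in range(steps):
        proposals = toy_denoiser(tokens, vocab, rng)
        if not proposals:
            break  # everything is already unmasked
        remaining_steps = steps - step
        # Reveal an even share of the still-masked positions (ceiling division),
        # so the final step always reveals whatever is left.
        n_reveal = -(-len(proposals) // remaining_steps)
        ranked = sorted(proposals.items(), key=lambda kv: kv[1][1], reverse=True)
        for pos, (tok, _confidence) in ranked[:n_reveal]:
            tokens[pos] = tok
    return tokens
```

For example, `unmask_sample(8, 4, ["a", "b", "c"])` starts from eight masks and commits two tokens per step. Unlike autoregressive decoding, positions are filled in confidence order rather than left to right.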

The team trained on 6.4T tokens and methodically tested multi-modal scaling laws, modality mixing ratios, noise schedules, and batch size effects. These engineering details are directly reusable. One practical contribution stands out: an SDE-based batch size reparameterization that decouples "physical batch size" (limited by GPU memory) from "logical batch size" (affecting gradient variance). No more expensive sweeps to find the optimal batch size.
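This digest does not reproduce the paper's reparameterization, so the sketch below shows a related, well-known idea instead: the square-root scaling rule that SDE analyses of Adam suggest for transferring hyperparameters when the batch size changes by a factor κ. Treat it as an illustration of what "tune once, rescale instead of sweeping" can look like, not as Apple's method. `AdamConfig` and `rescale_for_batch` are hypothetical names.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class AdamConfig:
    batch_size: int
    lr: float
    beta1: float
    beta2: float
    eps: float


def rescale_for_batch(cfg, new_batch_size):
    """Square-root scaling rule for Adam (assumed here, not taken from the paper).

    With kappa = new_batch / old_batch:
      lr      -> lr * sqrt(kappa)
      1-beta1 -> kappa * (1-beta1)
      1-beta2 -> kappa * (1-beta2)
      eps     -> eps / sqrt(kappa)
    """
    kappa = new_batch_size / cfg.batch_size
    beta1 = 1.0 - kappa * (1.0 - cfg.beta1)
    beta2 = 1.0 - kappa * (1.0 - cfg.beta2)
    if not (0.0 <= beta1 < 1.0 and 0.0 <= beta2 < 1.0):
        raise ValueError("batch size change too large for this rule to stay valid")
    return AdamConfig(
        batch_size=new_batch_size,
        lr=cfg.lr * math.sqrt(kappa),
        beta1=beta1,
        beta2=beta2,
        eps=cfg.eps / math.sqrt(kappa),
    )
```

Under this rule, a configuration tuned at batch size 256 with `lr=1e-4`, `beta1=0.9`, `beta2=0.999` maps to `lr=2e-4`, `beta1=0.6`, `beta2=0.996` at batch size 1024. Whether the paper's rule takes this exact form should be checked against the paper itself.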

Text generation, text-to-image, and text-to-speech all reach usable quality, but this is a design space exploration, not a leaderboard push. The paper title says it plainly. The question for practitioners isn't whether this specific model ships. It's whether masked diffusion as a unified multi-modal generation path deserves a share of your attention budget.

Key takeaways:

- Apple's from-scratch tri-modal masked diffusion model provides a systematic reference for scaling laws and engineering parameters. Directly useful for teams on the same path.
- SDE reparameterization decouples physical and logical batch size, solving a real pain point in diffusion training.
- Masked diffusion is becoming a credible multi-modal alternative to autoregressive generation. Worth tracking, but not yet proven at scale.

Source: The Design Space of Tri-Modal Masked Diffusion Models

02 Agent Agentic RL Doesn't Need More Algorithms. It Needs a Stability Diagnosis.

Papers on training LLM agents with RL keep multiplying. The awkward reality: training collapses constantly, reproduction is fragile, and hyperparameter tuning feels like guesswork. ARLArena doesn't propose yet another agent system. It asks why training breaks in the first place.

The approach decomposes policy gradients into four core design dimensions and ablates each one on a standardized test bed. This isolates the primary sources of instability. The resulting method, SAMPO, achieves stable training across multiple agent tasks. For teams doing agentic RL, the diagnostic framework may matter more than the final algorithm. Knowing which dimension is failing beats swapping algorithms at random.

Key takeaways:

- Agentic RL training collapse is a systematic problem. Decompose and diagnose, don't guess and swap.
- SAMPO addresses instability along four core dimensions, with consistent cross-task results.
- Teams training agents can apply this ablation framework directly to diagnose their own training failures.

Source: ARLArena: A Unified Framework for Stable Agentic Reinforcement Learning

03 Video Gen Dual-Stream MMDiT Puts Video and Audio in One Generator

The core design of SkyReels-V4: a dual-stream MMDiT (multi-modal diffusion Transformer) where one branch generates video and the other produces time-aligned audio. Both branches share a single multi-modal LLM as text encoder. Video and audio are no longer "generated separately, stitched later." They're aware of each other from the start.

The capability coverage matters more than the architecture. Text-to-video, image-to-video, video continuation, inpainting, editing: all handled through a unified channel-concatenation interface. No separate pipeline per task. It supports 1080p at 32 FPS for up to 15 seconds. A joint generation strategy ("full sequence at low resolution, keyframes at high resolution") keeps compute manageable. This is one of the most capable open video foundation models available. Unified architectures are steadily absorbing what used to be standalone modality pipelines.
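One way to picture a channel-concatenation interface: every task feeds the generator the same tensor layout, and only the conditioning content and mask differ. The sketch below is an assumption about how such an interface can be laid out, not SkyReels-V4's actual code. Spatial dimensions are collapsed so each frame is a flat list of channel values, masking is per frame rather than per pixel, and `known_frames_for` and `build_model_input` are hypothetical names.

```python
def known_frames_for(task, num_frames, prefix=1, keep=()):
    """Return the set of frame indices the model receives as conditioning."""
    if task == "text_to_video":
        return set()                                 # nothing given, generate all
    if task == "image_to_video":
        return {0}                                   # first frame is the input image
    if task == "continuation":
        return set(range(min(prefix, num_frames)))   # a clip prefix is given
    if task == "inpainting":
        return set(keep)                             # arbitrary frames are kept
    raise ValueError(f"unknown task: {task}")


def build_model_input(noisy, reference, known):
    """Concatenate [noisy latent | conditioning latent | mask] along channels.

    noisy, reference: lists of frames, each frame a list of channel values.
    known: frame indices whose reference content is visible to the model.
    """
    rows = []
    for t, (noisy_frame, ref_frame) in enumerate(zip(noisy, reference)):
        visible = t in known
        cond = list(ref_frame) if visible else [0.0] * len(ref_frame)
        mask = [1.0 if visible else 0.0]
        rows.append(list(noisy_frame) + cond + mask)
    return rows
```

With this layout, switching from text-to-video to inpainting changes only the `known` set. The network input always has `2 * C + 1` channels per frame, which is why one set of weights can serve every task.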

Key takeaways:

- Dual-stream MMDiT achieves joint video-audio generation with no post-hoc alignment needed.
- Generation, inpainting, and editing share a single interface. Video toolchains get much simpler.
- Unified multi-modal architectures are becoming the default for video foundation models. Teams building vertical pipelines should reassess.

Source: SkyReels-V4: Multi-modal Video-Audio Generation, Inpainting and Editing model

04 Agent CoT Reasoning Hurts GUI Agent Grounding. Here's Why.

A counterintuitive finding when training GUI agents: adding chain-of-thought reasoning via SFT actually degrades grounding ability (accurately clicking and operating target elements). Reasoning tokens dilute the model's focus on actions and coordinates. A second problem surfaces during RL: step-level verification is inherently incomplete for GUI tasks. Multiple valid action paths exist for the same goal, but training treats a single demonstration as the only correct answer. Valid alternative actions get penalized, so offline step-level metrics and actual task completion rates diverge.

GUI-Libra addresses both problems directly. During SFT, it mixes "reasoning + action" and "direct action" data, with explicit weighting on action tokens. During RL, it introduces KL-regularized trust regions for partially verifiable scenarios, plus success-rate-adaptive scaling to reduce the impact of unreliable negative gradients. Step accuracy and end-to-end completion rates improve on both web and mobile benchmarks. No expensive online data collection required.
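The "explicit weighting on action tokens" idea can be sketched as a weighted negative log-likelihood. This is a minimal illustration under assumptions, not GUI-Libra's implementation: `weighted_sft_loss` is a hypothetical name, the per-token log-probabilities are taken as given, and `action_weight=2.0` is an arbitrary example value.

```python
def weighted_sft_loss(token_logprobs, is_action, action_weight=2.0):
    """Weighted mean negative log-likelihood over one target sequence.

    token_logprobs: log p(target token) for each position (values <= 0).
    is_action: True where the token belongs to the action or coordinates,
               False where it belongs to the chain-of-thought reasoning.
    action_weight: how much more an action token counts than a reasoning token.
    """
    if len(token_logprobs) != len(is_action):
        raise ValueError("token_logprobs and is_action must have the same length")
    weights = [action_weight if flag else 1.0 for flag in is_action]
    total = sum(-lp * w for lp, w in zip(token_logprobs, weights))
    # Normalize by total weight so the loss scale stays comparable
    # between long reasoning traces and short direct-action samples.
    return total / sum(weights)
```

With a long reasoning trace and a short action, an unweighted mean lets the reasoning tokens dominate the gradient. Raising `action_weight` shifts the loss back toward the tokens that determine where the agent clicks.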

Key takeaways:

- SFT with CoT damages GUI agent grounding. Action-aware token weighting is needed to restore balance.
- Step-level RL verification fails on multi-path tasks. KL regularization stabilizes training under partial verifiability.
- Agent post-training can't just copy general-purpose pipelines. Task characteristics dictate training strategy.

05 Multimodal Multi-Player World Models Start With the Data Problem

Video world models have operated under one unchallenged assumption: simulate a single agent's viewpoint. Real environments are inherently multi-agent. Multiple participants act simultaneously, each with independent observations that still need spatial consistency. Solaris takes the first step in Minecraft, training a video world model that generates multiple player viewpoints while maintaining coherence across them.

The infrastructure deserves more attention than the model. An automated multi-player data collection system captures synchronized video and actions from multiple agents continuously, accumulating 12.64 million frames of multiplayer gameplay. Training proceeds in stages from single-player to multi-player modeling. Checkpointed Self Forcing solves the memory problem for long-horizon training.

Key takeaways:

- Multi-agent video data is extremely scarce. The automated collection system may prove more valuable long-term than the model itself.
- Single-viewpoint to multi-viewpoint is a necessary step for world models that simulate real environments.
- Code and model are open-sourced. Teams working on multi-agent scenarios can reuse the data pipeline directly.

Source: Solaris: Building a Multiplayer Video World Model in Minecraft

Also Worth Noting

Today's Observation

Three papers today converge on the same bet: unify modalities inside a single architecture and train from scratch. Apple's tri-modal masked diffusion handles text, image, and audio together. SkyReels-V4 jointly generates video and audio through dual-stream MMDiT. VecGlypher uses a multi-modal language model to output SVG directly.

This breaks sharply from the past year's dominant approach: freeze a backbone and bolt on adapters to support new modalities. That path optimized for cost, since a frozen base plus lightweight modules could support a new modality cheaply. But more teams are now willing to pay the from-scratch pre-training price for genuine modality alignment, which suggests the adapter approach may be hitting its ceiling. Apple's systematic testing of multi-modal scaling laws is telling: they're not building a demo. They're evaluating how far this path can go.

The question worth tracking: at what data and compute scale does unified from-scratch training systematically beat the stitched-together approach? That crossover point directly determines technology choices for mid-size teams. If it falls below 10B parameters, the cost advantage of the adapter path shrinks fast. Teams working on multi-modal systems should start running comparative experiments on their own data rather than assuming adapters are good enough.