Today's Overview

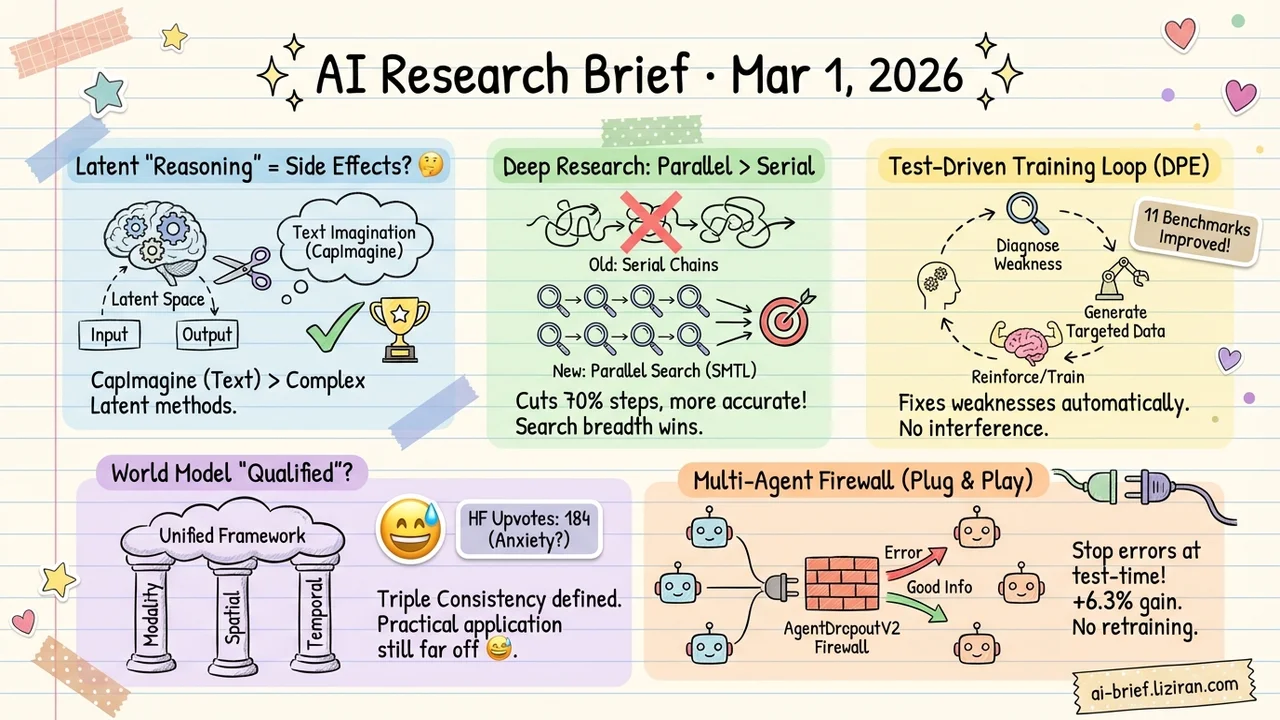

- Latent reasoning gains come from side effects, not reasoning itself. Causal mediation analysis reveals a causal disconnect between latent tokens and both inputs and outputs. A simple text-based "imagination" baseline outperforms complex latent-space methods.

- Deep research agent cuts 70% of reasoning steps and gets more accurate. Parallel evidence gathering replaces serial reasoning chains. Search breadth beats reasoning depth.

- "Test-driven error correction" from education psychology enters multimodal training. A diagnostic-reinforcement loop automatically identifies model weaknesses and generates targeted data. Eleven benchmarks improve without interfering with each other.

- A framework for what makes a world model "qualified" arrives, but practical application is far off. Triple consistency (modality, spatial, temporal) provides a unified evaluation lens. The 184 HF upvotes reflect community anxiety more than a solved problem.

- Multi-agent error propagation gets a plug-and-play firewall. Test-time pruning intercepts misinformation flow without retraining or changing topology. Average improvement on math reasoning benchmarks: 6.3 percentage points.

Featured

01 [Multimodal] Latent Reasoning Performance Gains May Have Nothing to Do With Reasoning

Latent visual reasoning is one of the hottest directions in multimodal AI this year: let the model "imagine" in hidden states, simulating human visual reasoning. Sounds elegant. This paper applies causal mediation analysis to take the mechanism apart and finds two surprising disconnects. First, large perturbations to the input barely change the latent tokens. They aren't actually "looking" at the input. Second, perturbing the latent tokens barely changes the final answer. Their causal influence on the output is negligible.
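The two checks can be sketched on a toy pipeline. The example assumes a deterministic model in which an input `x` produces latent tokens `z = latent(x)`, and the answer is `answer(x, z)`. Both functions are hypothetical stand-ins, not the paper's models. The decomposition is the standard mediation split. The direct effect perturbs the input while holding the latent fixed. The indirect effect keeps the input and patches in the latent computed from the perturbed input. A small indirect share means the latent barely carries the input's influence to the answer.

```python
def latent(x):
    # Hypothetical latent module: barely depends on its input (the first disconnect)
    return [0.5 + 0.01 * xi for xi in x]


def answer(x, z):
    # Hypothetical answer head: driven mainly by the input, weakly by the latent (the second disconnect)
    return sum(x) + 0.05 * sum(z)


def mediation_effects(x, x_perturbed):
    """Split the output change under an input perturbation into direct and latent-mediated parts."""
    z, z_p = latent(x), latent(x_perturbed)
    base = answer(x, z)
    total = answer(x_perturbed, z_p) - base
    direct = answer(x_perturbed, z) - base    # perturb the input, hold the latent fixed
    indirect = answer(x, z_p) - base          # keep the input, patch in the perturbed latent
    return total, direct, indirect


def latent_sensitivity(x, x_perturbed):
    """Ratio of latent displacement to input displacement (L2 norms)."""
    dz = sum((a - b) ** 2 for a, b in zip(latent(x), latent(x_perturbed))) ** 0.5
    dx = sum((a - b) ** 2 for a, b in zip(x, x_perturbed)) ** 0.5
    return dz / dx


if __name__ == "__main__":
    x, x_p = [1.0, 2.0, 3.0], [2.0, 3.0, 4.0]
    total, direct, indirect = mediation_effects(x, x_p)
    print(f"total={total:.4f} direct={direct:.4f} indirect={indirect:.4f}")
    print(f"latent sensitivity={latent_sensitivity(x, x_p):.3f}")
```

In this linear toy, total = direct + indirect holds exactly. With real nonlinear models the parts need not sum, so the three effects are usually reported separately.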

Probing experiments confirm that latent tokens encode very little visual information and are highly similar to each other. The model is doing something in latent space, but that something is most likely not reasoning. It looks more like a side effect of extra compute and attention patterns.

The authors propose CapImagine, a straightforward alternative: use text for explicit "imagination." It significantly outperforms the complex latent-space methods on visual reasoning benchmarks. Every team working on latent reasoning should take this causal analysis seriously. Does your performance gain survive the same test?

Key takeaways:
- Causal mediation analysis exposes a disconnect between latent tokens and both inputs and outputs. Performance gains likely stem from side effects, not reasoning.
- A simple text-based imagination approach beats complex latent-space methods on visual reasoning benchmarks.
- Teams pursuing latent reasoning need causal verification of where their gains actually come from.

Source: Imagination Helps Visual Reasoning, But Not Yet in Latent Space

02 [Agent] Cut 70% of Reasoning Steps, Get Higher Accuracy

The default move in deep research agents is deeper reasoning chains: think harder before acting. SMTL inverts this. It breaks serial long-chain reasoning into parallel evidence gathering, efficiently managing information within a limited context window. On BrowseComp, reasoning steps drop 70.7% compared to Mirothinker-v1.0 while accuracy rises to 48.6%.
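The step-count arithmetic behind "parallel instead of serial" is easier to see in a sketch. This is not SMTL's implementation. The `search` coroutine, the batch `width`, and the `context_budget` eviction rule are hypothetical placeholders. The serial agent spends one step per query. The parallel agent issues a batch of queries per step and keeps only a bounded amount of evidence in context.

```python
import asyncio


async def search(query: str) -> str:
    # Hypothetical search tool; a real agent would call a search API here
    await asyncio.sleep(0)
    return f"evidence for {query!r}"


async def serial_gather(queries):
    steps, evidence = 0, []
    for q in queries:  # one tool call per reasoning step
        evidence.append(await search(q))
        steps += 1
    return steps, evidence


async def parallel_gather(queries, width=4, context_budget=6):
    steps, evidence = 0, []
    for i in range(0, len(queries), width):
        batch = queries[i:i + width]
        # One step issues several searches concurrently
        results = await asyncio.gather(*(search(q) for q in batch))
        evidence.extend(results)
        # Crude context management (an assumption): evict the oldest evidence beyond the budget
        evidence = evidence[-context_budget:]
        steps += 1
    return steps, evidence


if __name__ == "__main__":
    queries = [f"sub-question {i}" for i in range(8)]
    print(asyncio.run(serial_gather(queries))[0])    # 8
    print(asyncio.run(parallel_gather(queries))[0])  # 2
```

The toy numbers only illustrate the mechanism. The 70.7% reduction reported on BrowseComp comes from the paper, not from this sketch.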

The framework also introduces a unified data synthesis pipeline. One agent handles both factual QA and open-ended research, hitting 75.7% on GAIA and 82.0% on Xbench. Training uses SFT plus RL. The core bet: trade reasoning depth for search breadth. Search more, think less.

Key takeaways:
- Parallel evidence gathering replaces serial reasoning chains, cutting 70% of steps without sacrificing accuracy.
- Unified data synthesis lets one agent generalize across task types. No per-scenario training required.
- Teams building research agents should reassess "stack more reasoning depth" as the default architecture choice.

Source: Search More, Think Less: Rethinking Long-Horizon Agentic Search for Efficiency and Generalization

03 [Training] Diagnostic-Driven Training: Fix What the Model Gets Wrong

"Test-driven error correction beats repetitive practice." That classic finding from education psychology now runs inside multimodal model training. DPE (Diagnostic-driven Progressive Evolution) adds a spiral diagnostic loop: evaluate the current model, locate weak capabilities, then deploy multiple agents to automatically generate training data targeting those weaknesses. The agents call search engines and image editing tools to construct realistic, diverse samples. After each training round, diagnose again and generate the next batch for newly exposed gaps.
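The spiral loop can be sketched as diagnose, generate, train, and repeat. This is a toy under stated assumptions, not DPE itself. `ToyModel`, the integer per-capability scores, `generate_targeted_data`, and the fixed gain per 100 samples are all hypothetical. In particular, "no interference" holds here by construction, because each batch only touches its own skill. The paper reports that as an empirical result.

```python
from dataclasses import dataclass, field


@dataclass
class ToyModel:
    # Hypothetical per-capability scores (0-100), standing in for benchmark results
    skills: dict = field(default_factory=dict)


def diagnose(model, threshold=70):
    # Step 1: evaluate and return the capabilities that fall below the bar
    return [name for name, score in model.skills.items() if score < threshold]


def generate_targeted_data(weak_skills, per_skill=100):
    # Step 2: stand-in for the multi-agent generator (search and image-editing tools)
    return {skill: per_skill for skill in weak_skills}


def train(model, data, gain_per_100=10):
    # Step 3: toy update; each batch of targeted samples lifts only its own skill
    for skill, n in data.items():
        model.skills[skill] = min(100, model.skills[skill] + gain_per_100 * n // 100)


def diagnostic_loop(model, rounds=5, threshold=70):
    history = []
    for _ in range(rounds):
        weak = diagnose(model, threshold)  # re-diagnose after every round
        history.append(weak)
        if not weak:
            break
        train(model, generate_targeted_data(weak))
    return history


if __name__ == "__main__":
    model = ToyModel({"ocr": 80, "counting": 50, "spatial": 62})
    print(diagnostic_loop(model))  # [['counting', 'spatial'], ['counting'], []]
    print(model.skills)            # {'ocr': 80, 'counting': 70, 'spatial': 72}
```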

Tested on Qwen3-VL-8B and Qwen2.5-VL-7B across eleven benchmarks, the method improves performance consistently without the usual "fix one thing, break another" pattern. The loop runs without manual data balancing.

Key takeaways:
- A "diagnose then reinforce" closed loop identifies and patches model weaknesses automatically.
- Multi-agent data generation eliminates dependence on human annotation and static datasets.
- Eleven benchmarks improve without mutual interference. Teams doing multimodal training should take note of the methodology.

Source: From Blind Spots to Gains: Diagnostic-Driven Iterative Training for Large Multimodal Models

04 [Multimodal] What Makes a World Model "Qualified"? A Framework, Not Yet an Answer

After Sora, "world model" became a buzzword, yet nobody could clearly define the requirements a world model should actually satisfy. This paper proposes "triple consistency": modality consistency (semantic alignment across language, vision, etc.), spatial consistency (geometric correctness), and temporal consistency (causal coherence). The framework pulls scattered evaluation criteria from different subfields into a single coordinate system. CoW-Bench ships alongside it for unified evaluation of video generation and multimodal models.

The 184 HF upvotes reflect anxiety about the question itself, not confidence that this paper answers it. As a theoretical framework, its value depends entirely on whether it guides concrete model design downstream. For now, it reads more like an ambitious proposal than an actionable engineering roadmap.

Key takeaways:
- Triple consistency (modality, spatial, temporal) defines world model requirements under a unified evaluation lens.
- CoW-Bench enables cross-comparison of video generation and multimodal models, though benchmark discriminability still needs validation.
- The real test for framework papers is whether they translate into architecture design guidance. That hasn't happened yet.

Source: The Trinity of Consistency as a Defining Principle for General World Models

05 [Agent] A Plug-and-Play Firewall for Multi-Agent Error Propagation

Multi-agent systems have a structural problem: one agent outputs bad information, downstream agents reason on top of it, and the error amplifies layer by layer. Existing fixes require redesigning agent topology or fine-tuning models. Both are expensive to deploy.

AgentDropoutV2 takes a different approach. It adds an active firewall at inference time. A retrieval-augmented rectifier intercepts each agent's output, identifies errors using historical failure patterns as priors, and either corrects or drops the output before it reaches downstream agents. Correction intensity adapts dynamically to task difficulty. On math reasoning benchmarks, average performance improves by 6.3 percentage points. No retraining, no topology changes. Plug and play.
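The keep/correct/drop decision can be sketched as follows. This is a minimal sketch, not AgentDropoutV2's rectifier. Retrieval is approximated with token-overlap (Jaccard) similarity against a small bank of past failure descriptions. `FAILURE_BANK`, `base_threshold`, the 0.15 difficulty slope, and the optional `correct` hook are hypothetical. Higher difficulty lowers the similarity needed to flag an output, so harder tasks get stricter screening.

```python
import re

# Hypothetical library of past failure descriptions, used as retrieval priors
FAILURE_BANK = [
    "divided by zero when the denominator cancels",
    "dropped the negative sign when expanding the square",
    "used degrees instead of radians",
]


def tokens(text):
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def jaccard(a, b):
    return len(a & b) / len(a | b) if a | b else 0.0


def firewall(message, difficulty, correct=None, base_threshold=0.35):
    """Return (action, message) for one agent output before it reaches downstream agents.

    difficulty is assumed to lie in [0, 1]; harder tasks lower the flagging threshold.
    """
    msg_tokens = tokens(message)
    best = max(jaccard(msg_tokens, tokens(p)) for p in FAILURE_BANK)
    threshold = base_threshold - 0.15 * difficulty
    if best < threshold:
        return "keep", message
    fixed = correct(message) if correct else None  # hypothetical repair hook
    if fixed is not None:
        return "correct", fixed
    return "drop", None


if __name__ == "__main__":
    print(firewall("a negative square", difficulty=0.0))  # ('keep', 'a negative square')
    print(firewall("a negative square", difficulty=1.0))  # ('drop', None)
```

The same borderline output passes on an easy task and is dropped on a hard one. That is where false positives come from: a stricter threshold also catches correct outputs that merely resemble past failures.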

Key takeaways:
- Test-time pruning fits deployed multi-agent systems better than structural redesign or retraining.
- Retrieval-augmented correction using failure patterns is more precise than rule-based filtering.
- The key deployment risk: false positives. Dropping correct outputs carries its own cost.

Also Worth Noting

Today's Observation

Three independent papers today perform the same operation: take a plausible technical narrative apart and check whether the actual mechanism matches the surface story. The Imagination paper uses causal mediation analysis to show that latent tokens have little causal effect on the answer, so the gains most likely come from side effects of extra compute and attention patterns rather than from latent-space reasoning. SMTL finds that stacking reasoning depth in deep research agents returns far less than expanding evidence breadth. Scale Can't Overcome Pragmatics traces VLM reasoning shortfalls to training data: humans omit the obvious when describing images, and that reporting bias transfers directly to models. The reasoning capacity of the model itself isn't the bottleneck.

Different targets, same exposed pattern: a method works, a plausible explanation gets attached, and investment doubles down on that explanation. The problem is that "plausible" doesn't mean "causal." The latent reasoning case is the clearest example. Without causal analysis, teams would keep investing in larger latent spaces and more elaborate imagination mechanisms, when the more likely driver was extra compute.

This pattern applies directly to everyday technical decisions. Next time a method produces good results on your project, spend half a day on an ablation study. Confirm where the performance gain actually comes from before deciding where to double down. Half a day of verification can save weeks of optimizing the wrong thing.
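A leave-one-out ablation is the simplest version of that half-day check. The sketch below assumes a hypothetical `evaluate(config)` function that scores your system with a given set of components enabled. `toy_evaluate` and its numbers are invented for illustration and are not results from any paper. The useful output is the per-component delta, which shows where the gain actually sits.

```python
def ablate(evaluate, components):
    """Leave-one-out ablation: score the full system, then remove each component in turn."""
    full_config = frozenset(components)
    full = evaluate(full_config)
    return full, {c: full - evaluate(full_config - {c}) for c in components}


def toy_evaluate(config):
    # Hypothetical scores for illustration only: most of the gain sits in a side component
    score = 50
    score += 1 if "latent_reasoning" in config else 0
    score += 8 if "extra_compute" in config else 0
    return score


if __name__ == "__main__":
    full, deltas = ablate(toy_evaluate, ["latent_reasoning", "extra_compute"])
    print(full, deltas)  # 59 {'latent_reasoning': 1, 'extra_compute': 8}
```

In a real project, "extra_compute" would typically be a matched control, such as the same number of added tokens with their content removed. That lets the ablation separate the method's idea from the compute it happens to add.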