Today's Overview

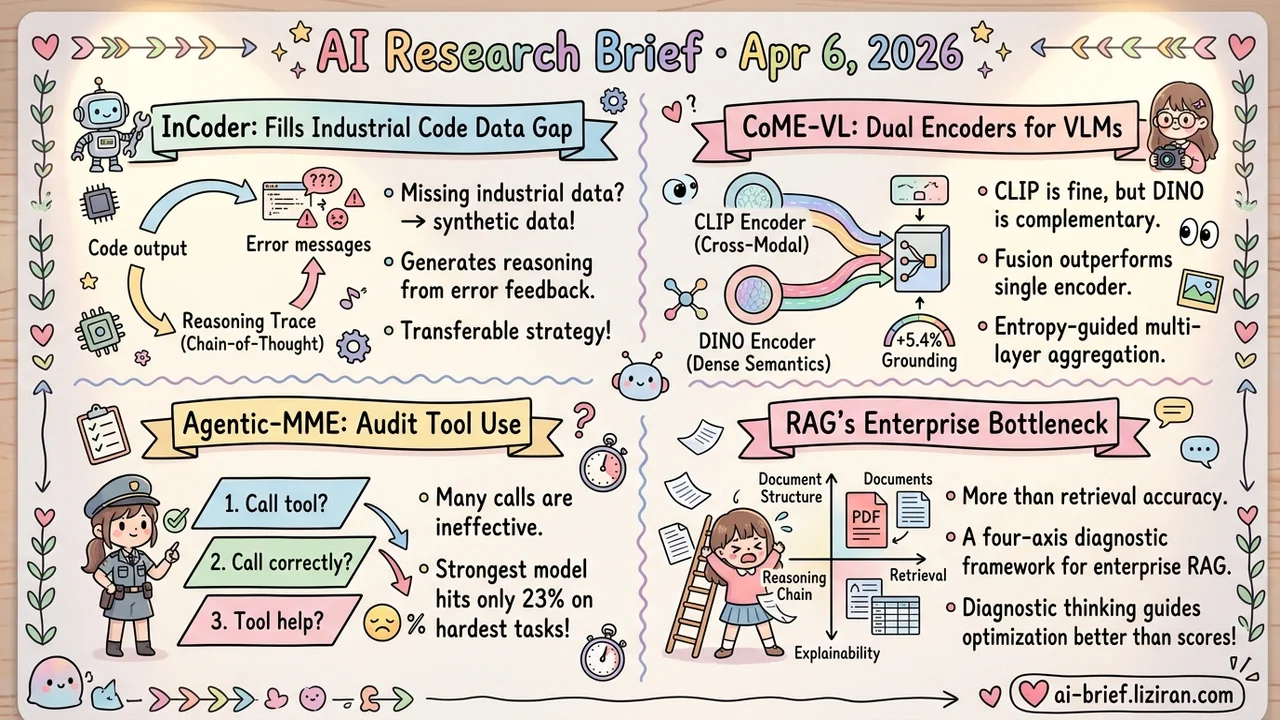

- Open-Source 32B Reaches Top Tier for Hardware Code Debugging. InCoder distills reasoning chains from engineers' actual error-fix cycles. It ranks among the best open-source models on LiveCodeBench and CAD-Coder, though KernelBench at 38% shows GPU optimization is still far from production-ready.

- CLIP's Spatial Blindness Is Baked Into Its Training Objective. CoME-VL fuses CLIP with DINO at the representation level, lifting grounding tasks by 5.4%. The real value: systematic ablation data for anyone evaluating dual-encoder designs.

- Agents That "Got It Right" May Just Be Guessing. Agentic-MME evaluates multimodal agents on process, not just final answers. The strongest model manages only 23% on hard tasks. An overthinking metric exposes step-efficiency gaps hidden by accuracy scores.

- RAG Failures Are Multi-Dimensional; a Single Accuracy Number Can't Find the Bottleneck. This AAAI paper splits diagnosis into four axes: reasoning complexity, retrieval difficulty, document structure, and explainability. It moves teams from blanket tuning to targeted fixes.

Featured

01 Code Intelligence Can You Distill a Hardware Engineer's Debugging Instinct?

Debugging chip designs and GPU kernels differs from debugging ordinary software. The error signal isn't a compile failure; it's a timing violation or a missed performance target, and localizing it takes domain experience. InCoder-32B-Thinking attacks this with Error-driven Chain-of-Thought: it synthesizes reasoning traces from multi-turn dialogues in which environment errors drive the fix cycle, explicitly modeling the "break → locate → repair" loop engineers live in.
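To make the "break → locate → repair" loop concrete, here is a minimal sketch of how a multi-turn error-fix dialogue could be flattened into a single reasoning trace. The `FixTurn` record, its field names, the `build_trace` helper, and the sample error text are all hypothetical illustrations, not the paper's data format.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class FixTurn:
    """One turn of a hypothetical error-fix dialogue (illustrative fields only)."""
    code: str        # candidate code submitted this turn
    env_error: str   # error reported by the simulator or profiler ("" if it passed)
    diagnosis: str   # where the fault was located ("" if nothing failed)


def build_trace(turns: List[FixTurn]) -> str:
    """Flatten the dialogue into a break / locate / repair reasoning trace."""
    steps = []
    for i, turn in enumerate(turns, start=1):
        if turn.env_error:
            steps.append(f"Step {i}: break: {turn.env_error}")
            steps.append(f"Step {i}: locate: {turn.diagnosis}")
        else:
            steps.append(f"Step {i}: repair verified, no environment error")
    return "\n".join(steps)


# Made-up example: a timing violation followed by a fix that passes.
turns = [
    FixTurn(
        code="assign y = a + b + c + d;",
        env_error="timing violation: setup slack -0.4 ns",
        diagnosis="adder chain too long for one clock cycle",
    ),
    FixTurn(code="pipelined two-stage adder", env_error="", diagnosis=""),
]
trace = build_trace(turns)
print(trace)
```

The point of the sketch is only that the environment's error message, not a generic prompt, is what triggers each reasoning step.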

An Industrial Code World Model (ICWM) trained on Verilog simulation and GPU profiling traces lets the model predict execution outcomes before compilation, enabling self-verification. At 32B parameters and open-source, it hits 81.3% on LiveCodeBench v5, 84.0% on CAD-Coder, and 38.0% on KernelBench — open-source top tier across both general and industrial benchmarks.

KernelBench at 38.0% is the honest number. GPU kernel optimization remains early-stage even for the best models. Teams working on hardware code intelligence should start tracking this line of work, but should not expect it to work out of the box.

Key takeaways:

- Distilling reasoning from engineers' actual error-fix trajectories fits hardware debugging better than generic CoT
- 32B open-source scale reaching top tier on industrial code benchmarks lowers the barrier for hardware teams to experiment
- KernelBench at 38.0% is a reminder that GPU optimization tasks still have a long way to go

Source: InCoder-32B-Thinking: Industrial Code World Model for Thinking

02 Multimodal CLIP Isn't Enough, but the Problem Isn't CLIP

CLIP as the visual encoder in VLMs is almost an industry default. But contrastive learning optimizes for global semantic alignment, which structurally discards dense spatial information: object positions, local details, fine-grained regions. CoME-VL doesn't replace CLIP. It patches the gap by fusing CLIP with self-supervised DINO at the representation level.

The method uses entropy-guided multi-layer aggregation with orthogonal constraints to cut redundancy, plus RoPE-enhanced cross-attention to align the two encoders' heterogeneous token grids. Visual understanding improves by 4.9% on average, grounding by 5.4%, and RefCOCO detection reaches SOTA.
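As a rough illustration of what "entropy-guided multi-layer aggregation" could mean, the sketch below weights each layer's feature vector by the entropy of its softmax-normalized activations. This is one plausible reading under stated assumptions, not the paper's formulation: the `entropy` and `aggregate_layers` helpers, the choice to give higher-entropy layers more weight, and the toy vectors are all hypothetical. It uses plain Python lists rather than tensors to stay self-contained.

```python
import math
from typing import List, Tuple


def softmax(xs: List[float]) -> List[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def entropy(feature: List[float]) -> float:
    """Shannon entropy (in nats) of a feature vector after softmax normalization."""
    probs = softmax(feature)
    return -sum(p * math.log(p) for p in probs if p > 0)


def aggregate_layers(layers: List[List[float]]) -> Tuple[List[float], List[float]]:
    """Weighted sum of per-layer features.

    Assumption: layer weights are a softmax over per-layer entropies,
    so higher-entropy layers contribute more.
    """
    weights = softmax([entropy(f) for f in layers])
    dim = len(layers[0])
    fused = [sum(w * f[d] for w, f in zip(weights, layers)) for d in range(dim)]
    return fused, weights


# Toy input: one flat (high-entropy) layer and one peaked (low-entropy) layer.
layers = [[1.0, 1.0, 1.0], [10.0, 0.0, 0.0]]
fused, weights = aggregate_layers(layers)
print(weights)
```

A real implementation would operate on token grids from both encoders and add the orthogonality constraint and cross-attention described above; none of that is modeled here.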

The engineering takeaway matters more than the headline numbers. How much does dual-encoder fusion buy, and what is the most efficient way to do it? The paper's ablations provide data on both questions. Grounding and detection gains outpace general understanding, which is consistent with dense spatial semantics being CLIP's structural weak spot.

Key takeaways:

- Contrastive and self-supervised encoders have complementary information trade-offs; fusion beats replacement
- Modular design plugs into existing VLM pipelines at low cost, no architecture changes needed
- Grounding and detection gains are larger than general understanding, confirming CLIP's spatial deficit

Source: CoME-VL: Scaling Complementary Multi-Encoder Vision-Language Learning

03 Evaluation Right Answer, Wrong Process: Agents Need Step-Level Evaluation

Multimodal agent evaluation has a blind spot. A model can score well on final answers without ever calling a tool, having simply guessed from parametric memory. Agentic-MME targets this with process-level verification: 418 real tasks, each with step-by-step checkpoints that took 10+ person-hours to annotate, evaluating whether the model called the right tool, used it correctly, and used it efficiently.

The efficiency dimension is particularly sharp. The benchmark introduces an overthinking metric relative to human trajectories, measuring whether a model burns far more steps than necessary. Results: the top model (Gemini3-pro) reaches 56.3% overall accuracy but drops to 23.0% on Level-3 tasks. Process-level evaluation reveals gaps far wider than final-answer metrics suggest.
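A minimal sketch of the two process-level ideas, assuming simple definitions: overthinking as the excess of model steps over the human trajectory, and a checkpoint score as the fraction of annotated tool calls the model made in order. The function names, both formulas, and the tool names are hypothetical; the benchmark's actual metric definitions may differ.

```python
from typing import List


def overthinking_ratio(model_steps: int, human_steps: int) -> float:
    """Extra steps relative to the human trajectory; 0.0 means no excess."""
    if human_steps <= 0:
        raise ValueError("human_steps must be positive")
    return max(0.0, (model_steps - human_steps) / human_steps)


def checkpoint_score(expected_tools: List[str], called_tools: List[str]) -> float:
    """Fraction of annotated checkpoints whose tool was called, respecting order."""
    if not expected_tools:
        return 0.0
    hits, pos = 0, 0
    for tool in expected_tools:
        try:
            # Search only after the previously matched call to enforce ordering.
            pos = called_tools.index(tool, pos) + 1
            hits += 1
        except ValueError:
            continue  # checkpoint missed; keep scanning from the same position
    return hits / len(expected_tools)


# Made-up trajectories: the human needed 4 steps, the model took 12.
print(overthinking_ratio(12, 4))
print(checkpoint_score(["crop", "ocr", "search"], ["search", "crop", "ocr"]))
```

Under these definitions, a model that answers correctly without calling any tool gets a checkpoint score of 0.0, which is exactly the case that final-answer accuracy hides.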

Key takeaways:

- Final-answer accuracy masks the real state of tool use; process-level evaluation is a more reliable signal
- The overthinking metric directly informs deployment cost estimation
- Even the strongest model collapses on hard tasks; agentic capabilities are nowhere near mature

Source: Agentic-MME: What Agentic Capability Really Brings to Multimodal Intelligence?

04 Retrieval RAG Evaluation Stuck on Accuracy? No Wonder It Breaks in Production

Split RAG evaluation from "final accuracy" into four independent axes — reasoning complexity, retrieval difficulty, document structure, explainability — and the picture changes completely. This AAAI paper starts from a practical observation: in enterprise settings, RAG fails for entangled reasons. The same 70% accuracy might mean the system chokes on cross-document reasoning or on parsing unstructured documents. The optimization path differs entirely.
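One way a team might apply this in practice is to tag each evaluation query along the four axes and report accuracy per bucket instead of a single number. The sketch below assumes that setup; the axis keys, the label values (`single_hop`, `cross_document`, and so on), and the sample records are hypothetical and not taken from the paper.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

AXES = (
    "reasoning_complexity",
    "retrieval_difficulty",
    "document_structure",
    "explainability",
)


def accuracy_by_axis(results: List[dict]) -> Dict[Tuple[str, str], float]:
    """Accuracy for every (axis, label) bucket found in the results."""
    totals = defaultdict(int)
    correct = defaultdict(int)
    for record in results:
        for axis in AXES:
            key = (axis, record[axis])
            totals[key] += 1
            correct[key] += int(record["correct"])
    return {key: correct[key] / totals[key] for key in totals}


def row(reasoning: str, structure: str, is_correct: bool) -> dict:
    """Build one made-up evaluation record (two axes held constant for brevity)."""
    return {
        "reasoning_complexity": reasoning,
        "retrieval_difficulty": "easy",
        "document_structure": structure,
        "explainability": "cited",
        "correct": is_correct,
    }


results = [
    row("single_hop", "plain_text", True),
    row("single_hop", "table", True),
    row("cross_document", "plain_text", False),
    row("cross_document", "table", False),
]
acc = accuracy_by_axis(results)
for key in sorted(acc):
    print(key, acc[key])
```

In this toy data the overall accuracy is 50%, and the per-bucket view shows that every failure sits in the cross-document bucket while document structure makes no difference, which points at reasoning rather than parsing as the place to spend effort.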

The four-axis diagnostic framework doesn't discover new problems. It turns "something feels off" into "here's what's off" — a structured method that fills the gap between academic benchmarks and production debugging. For teams building RAG products, this taxonomy is more useful than chasing higher benchmark scores.

Key takeaways:

- Enterprise RAG failure modes are multi-dimensional; a single accuracy metric can't pinpoint the bottleneck
- The four-axis framework shifts pipeline optimization from blanket tuning to targeted repair
- Teams building RAG products can adopt this taxonomy to structure their internal evaluation