Today's Overview

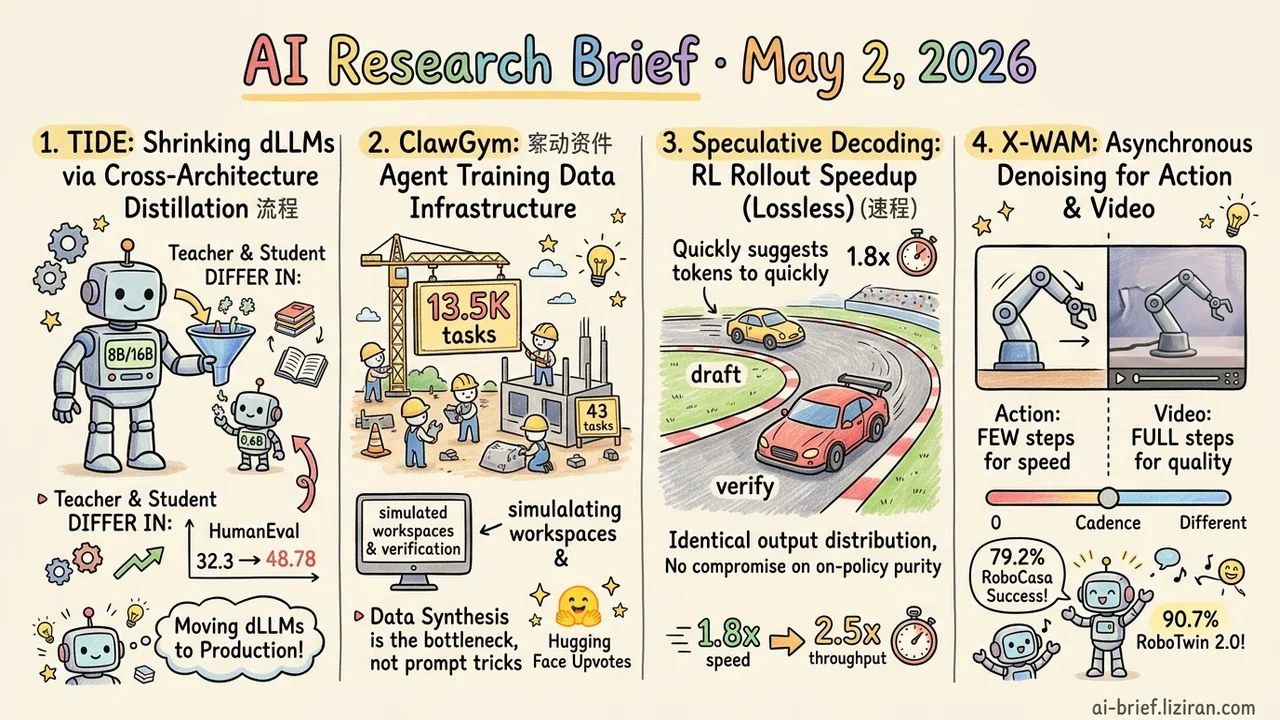

- Cross-Architecture Distillation Shrinks dLLMs From 8B to 0.6B. TIDE is the first dLLM distillation framework where teacher and student differ in architecture, attention mechanism, and tokenizer at once. HumanEval rises from the AR baseline's 32.3 to 48.78, and the average gain across 8 benchmarks is 1.53 points.

- Agent Training Data Synthesis Is Becoming the New Infrastructure Layer. ClawGym ships 13.5K persona-driven tasks, simulated workspaces, and hybrid verification. 43 HF upvotes top every highlight today.

- Speculative Decoding Becomes a Lossless Speedup Primitive for RL Rollouts. 1.8x throughput at 8B, 2.5x simulated end-to-end at 235B with an async pipeline. On-policy purity stays intact.

- Asynchronous Denoising Lets Action and Video Run on Different Cadences in One Diffusion. X-WAM pretrains on 5,800 hours of robot data. RoboCasa hits 79.2% success, RoboTwin 2.0 hits 90.7%.

Featured

01 Cross-Architecture Distillation Closes the dLLM Deployment Gap

Diffusion language models offer real advantages: parallel decoding and bidirectional context. But reaching state-of-the-art results still demands 8B-16B parameters, with deployment costs an order of magnitude higher than equivalent AR models. Prior dLLM distillation work compressed inference steps within a single architecture. TIDE instead attempts cross-architecture distillation, where teacher and student differ in architecture, attention mechanism, and tokenizer all at once. That's the missing piece for moving dLLMs from papers to production.

The framework has three modules. TIDAL adjusts distillation strength based on training progress and diffusion timestep, since teacher reliability varies across noise levels. CompDemo uses complementary mask splitting so the teacher still produces useful predictions under heavy masking. Reverse CALM handles chunk-level likelihood alignment across tokenizers.
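
To make the CompDemo idea concrete, here is a minimal sketch of one way complementary mask splitting could work. It assumes the masked positions are split into two disjoint halves and the teacher runs once per half with the other half revealed. The function names, the `[MASK]` string, and the even random split are all illustrative assumptions, not details taken from the paper.

```python
import random

def complementary_split(masked_positions, seed=0):
    """Split masked positions into two disjoint halves that together cover all of them."""
    rng = random.Random(seed)
    shuffled = list(masked_positions)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return sorted(shuffled[:half]), sorted(shuffled[half:])

def teacher_views(tokens, masked_positions, mask_token="[MASK]", seed=0):
    """Build two teacher inputs (hypothetical helper).

    Each view keeps one half of the positions masked and reveals the other half,
    so the teacher sees more context than the heavily masked student input.
    Returns a list of (view_tokens, positions_still_masked) pairs.
    """
    part_a, part_b = complementary_split(masked_positions, seed)
    views = []
    for still_masked in (part_a, part_b):
        hidden = set(still_masked)
        view = [mask_token if i in hidden else tok for i, tok in enumerate(tokens)]
        views.append((view, still_masked))
    return views

tokens = ["def", "add", "(", "a", ",", "b", ")", ":"]
for view, targets in teacher_views(tokens, masked_positions=[1, 2, 3, 4, 5, 6]):
    print(targets, view)
```

In this sketch the student would still train on the fully masked input; only the teacher gets the two easier views, and each masked position receives a teacher prediction from exactly one of them.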

Distilling 8B dense and 16B MoE teachers into a 0.6B student gains 1.53 points on average across 8 benchmarks. HumanEval jumps from the AR baseline's 32.3 to 48.78. Code generation benefits most; other tasks see more measured gains. Both pipelines in the abstract are dLLM→dLLM. Whether AR teachers work as a starting point, and how much precision cross-tokenizer alignment loses, depends on numbers in the paper itself.
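
The cross-tokenizer alignment question is easier to reason about with a toy example. The sketch below is not Reverse CALM itself. It only illustrates the general idea of chunk-level alignment, under the assumption that both tokenizers segment the same text and that chunks end wherever the two segmentations share a character boundary. All function names and the sample tokens are hypothetical.

```python
def token_ends(tokens):
    """Cumulative character offsets at which each token ends."""
    ends, pos = [], 0
    for tok in tokens:
        pos += len(tok)
        ends.append(pos)
    return ends

def shared_boundaries(teacher_tokens, student_tokens):
    """Character offsets where both tokenizations end a token."""
    if "".join(teacher_tokens) != "".join(student_tokens):
        raise ValueError("tokenizations must cover the same text")
    return sorted(set(token_ends(teacher_tokens)) & set(token_ends(student_tokens)))

def chunk_logprobs(tokens, logprobs, boundaries):
    """Sum per-token log-probabilities into chunks ending at shared boundaries."""
    boundary_set = set(boundaries)
    chunks, running, pos = [], 0.0, 0
    for tok, lp in zip(tokens, logprobs):
        pos += len(tok)
        running += lp
        if pos in boundary_set:
            chunks.append(running)
            running = 0.0
    return chunks

teacher = ["def", " add", "(a", ",", " b)"]
student = ["de", "f", " add", "(", "a", ", b", ")"]
bounds = shared_boundaries(teacher, student)
print(bounds)
print(chunk_logprobs(teacher, [-1, -2, -3, -4, -5], bounds))
print(chunk_logprobs(student, [-1] * 7, bounds))
```

Once both models report one log-likelihood per shared chunk, the two lists have equal length and can be compared directly. Any precision loss comes from what gets summed away inside each chunk, which is the part only the paper's numbers can quantify.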

Key takeaways:

- Cross-architecture distillation (architecture, attention, and tokenizer differing simultaneously) is the missing link between dLLM lab work and production. TIDE is the first framework filling that gap.

- The 0.6B student's 32.3→48.78 jump on code generation is striking. The 1.53-point average across 8 benchmarks is steadier. Read the magnitudes carefully.

- Teams hoping to use AR teachers for dLLM students should wait for the paper. Both abstract pipelines are still dLLM→dLLM.

Source: Turning the TIDE: Cross-Architecture Distillation for Diffusion Large Language Models

02 Verifiable Synthetic Data Is the Real Agent Bottleneck

Agent training has been a cottage industry. Each team builds its own environments, trajectories, and reward signals, and almost nothing transfers across teams. ClawGym's center of gravity isn't another agent loop. It targets the hardest piece to externalize: systematic synthesis of verifiable training data. Its 13.5K tasks come from persona-driven intent plus skill-level operations, paired with simulated workspaces and hybrid verification, then routed through supervised fine-tuning and lightweight RL pipelines.

43 upvotes on HF top every highlight today. The community signal is direct: missing data infrastructure blocks more teams than missing prompt tricks. The abstract doesn't show actual quality distribution of the synthesized tasks, or how big the gap to real workflows is. That call needs measurement after release.

For teams building their own agents, the right question isn't "should I swap in a new loop?" It's "is my training data synthesis pipeline industrial-grade?"

Key takeaways:

- Data synthesis and environment integration are turning into the agent infrastructure layer, closer to the current bottleneck than yet another agent loop.

- 43 HF upvotes beat every highlight today. Demand for data infrastructure outweighs new prompt or loop tricks.

- Real task quality and the gap to actual workflows aren't disclosed. Wait for the code release before betting on it.

Source: ClawGym: A Scalable Framework for Building Effective Claw Agents

03 Speeding Up RL Rollouts Without Touching On-Policy

The bottleneck in RL post-training is rollout generation. Autoregressive decoding emits one token at a time and consumes most of the training time. Existing speedups usually compromise: off-policy execution, replay, low-precision generation. They save compute but bend on-policy purity.

This work ports speculative decoding (small drafter, large verifier) from inference into the NeMo-RL+vLLM rollout stack as a lossless primitive. The output distribution stays identical to the original model's, so the on-policy assumption holds. At 8B with synchronous RL, rollout throughput rises 1.8x. At 235B with an async pipeline, the simulated end-to-end speedup is 2.5x.
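
The "lossless" property comes from the accept/reject rule used in speculative sampling. The sketch below shows that textbook rule on a toy three-token vocabulary, assuming a single drafted token per step and hand-picked distributions (`p` for the verifier, `q` for the drafter). It is an illustration of the principle, not the NeMo-RL or vLLM code path.

```python
import random

def speculative_step(p, q, rng):
    """Draft one token from q, then accept or resample so the output follows p.

    p: verifier (target) probabilities, q: drafter probabilities.
    Returns (token, accepted).
    """
    vocab = range(len(p))
    x = rng.choices(vocab, weights=q)[0]          # drafter proposes a token
    if rng.random() < min(1.0, p[x] / q[x]):      # verifier accepts with prob min(1, p/q)
        return x, True
    # On rejection, resample from the normalized residual max(0, p - q).
    residual = [max(0.0, pi - qi) for pi, qi in zip(p, q)]
    return rng.choices(vocab, weights=residual)[0], False

p = [0.6, 0.3, 0.1]   # toy verifier distribution (assumed values)
q = [0.2, 0.5, 0.3]   # toy drafter distribution (assumed values)
rng = random.Random(0)
n = 20000
counts, accepted = [0, 0, 0], 0
for _ in range(n):
    tok, ok = speculative_step(p, q, rng)
    counts[tok] += 1
    accepted += ok
print([c / n for c in counts], accepted / n)
```

The empirical token frequencies should track `p` rather than `q`, which is why rollouts stay on-policy. The acceptance rate, by contrast, depends on how closely `q` matches `p`.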

Speculative decoding's actual gain depends on draft acceptance rate. RL training drifts the policy distribution as it progresses, and the abstract doesn't say whether acceptance rate stays stable through that drift. That's the variable to test before deploying.
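
A back-of-the-envelope model shows how sensitive the gain is to acceptance rate. The sketch assumes each drafted token is accepted independently with probability `alpha` and that the drafter proposes `k` tokens per verifier pass. Both are simplifying assumptions, and the numbers below are illustrative inputs, not measurements from the paper.

```python
def expected_tokens_per_verify(alpha, k):
    """Expected tokens emitted per verifier pass under i.i.d. acceptance.

    Accepted drafts form a prefix; the verifier always contributes one more token,
    giving (1 - alpha**(k + 1)) / (1 - alpha).
    """
    if not 0.0 <= alpha <= 1.0 or k < 0:
        raise ValueError("alpha must be in [0, 1] and k must be non-negative")
    if alpha == 1.0:
        return float(k + 1)
    return (1 - alpha ** (k + 1)) / (1 - alpha)

# Hypothetical drift: acceptance rate falling as the policy moves away from the drafter.
for alpha in (0.9, 0.8, 0.7, 0.6):
    print(alpha, round(expected_tokens_per_verify(alpha, k=4), 2))
```

Tokens per verifier pass is an upper bound on speedup, since drafting itself costs time. Logging acceptance rate across training steps is the direct way to check whether drift erodes the gain.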

Key takeaways:

- For teams that need to speed up RL post-training without compromising on-policy purity, speculative decoding is one of the few options that doesn't change the training regime.

- The 1.8x to 2.5x speedup comes from engineering integration (NeMo-RL+vLLM+MTP/Eagle3), not new algorithms.

- Acceptance rate stability under policy drift is the variable to verify yourself before deployment.

Source: Accelerating RL Post-Training Rollouts via System-Integrated Speculative Decoding

04 One Diffusion, Two Cadences: Action Fast, Video Slow

Unified world models like UWM put video generation and action prediction in one diffusion framework, but they model only 2D pixel space and can't decode actions in real time. X-WAM splits the same denoising process into two cadences: actions take a few steps for fast decoding, while video takes the full step count for quality. Training samples from the joint distribution to align with the asymmetric inference cadences.
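
The two-cadence idea can be pictured as a pair of noise schedules sharing one loop. The sketch below assumes linear schedules and specific step counts purely for illustration. The abstract does not give the actual schedule shape or step numbers, and the function names are hypothetical.

```python
def async_schedule(video_steps, action_steps):
    """Per-iteration (t_video, t_action) noise levels; 1.0 is pure noise, 0.0 is clean.

    The action stream reaches 0.0 after `action_steps` iterations and stays there,
    while the video stream keeps denoising for all `video_steps` iterations.
    """
    if not 0 < action_steps <= video_steps:
        raise ValueError("need 0 < action_steps <= video_steps")
    pairs = []
    for i in range(1, video_steps + 1):
        t_video = 1.0 - i / video_steps
        t_action = max(0.0, 1.0 - i / action_steps)
        pairs.append((t_video, t_action))
    return pairs

def action_ready_step(schedule):
    """First iteration (1-indexed) at which the action stream is fully denoised."""
    for i, (_, t_action) in enumerate(schedule, start=1):
        if t_action == 0.0:
            return i
    return None

schedule = async_schedule(video_steps=10, action_steps=2)
print(action_ready_step(schedule))
for t_video, t_action in schedule[:3]:
    print(round(t_video, 2), round(t_action, 2))
```

In this toy setup the action can be executed as soon as its stream is clean, while the video is still noisy. That is also why training has to cover (video noise, action noise) pairs that a single shared timestep would never produce.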

A lightweight depth prediction branch reuses the last few layers of the pretrained DiT for 4D reconstruction (video plus geometry), avoiding a full retrain. After pretraining on 5,800 hours of robot data, X-WAM reaches average success rates of 79.2% on RoboCasa and 90.7% on RoboTwin 2.0. Its 4D metrics also beat existing methods.

Latency and action frequency aren't in the abstract. How fast "real-time" actually runs, and whether it can drive closed-loop control, needs the paper itself to confirm.

Key takeaways:

- Asynchronous denoising lets one diffusion model run different step counts for action and video. Engineering compromise, not a new paradigm.

- 4D reconstruction comes from a lightweight depth branch without retraining the pretrained video DiT. Useful reference for teams reusing existing video base models.

- Latency for real-time isn't disclosed. Confirm that number before any deployment evaluation.

Source: Unified 4D World Action Modeling from Video Priors with Asynchronous Denoising

Also Worth Noting

Today's Observation

ClawGym tackles training data synthesis for agents. At runtime, OCR-Memory handles long-horizon trajectories with image-based recall. One sits before training, one sits at inference. The directions don't overlap, but both skip the "how to design an agent loop" layer and go directly at deeper infrastructure in the agent lifecycle. Both pulled solid community signal, and ClawGym's 43 HF upvotes beat every highlight today. That's not a coincidence. It extends a quiet line that's been forming for months. As prompt engineering and reasoning chains hit diminishing returns, what determines the agent ceiling is the plainer engineering question: where does verifiable training data come from, and how do long-horizon trajectories avoid blowing out the context window?

The move for practitioners isn't picking another loop structure. Audit two pipelines first. Is your training data synthesis industrial-grade, with task diversity, simulated environments, and automated verification all present? Can your runtime memory survive 100+ turn trajectories, not just remembering, but recalling and compressing too? If either falls short, no prompt tweak fills the gap.

Action item: Make a table listing your agent system's training data synthesis pipeline and runtime memory module piece by piece. Match it against ClawGym's three-piece kit (persona-driven intent, simulated workspace, hybrid verification) and OCR-Memory's non-text approach (trajectory-as-image, OCR recall). Whatever's blank is the engineering debt for next quarter.
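
One lightweight way to build that table is a small script. The sketch below hard-codes the reference capabilities named above; the pipeline labels, the inventory format, and the sample component are illustrative assumptions to adapt to your own system.

```python
# Reference capabilities named in the text; the grouping keys are illustrative labels.
REFERENCE = {
    "training data synthesis": [
        "persona-driven intent",
        "simulated workspace",
        "hybrid verification",
    ],
    "runtime memory": ["trajectory-as-image", "OCR recall"],
}

def audit(inventory):
    """Return {pipeline: [capabilities with no matching component]}."""
    gaps = {}
    for pipeline, capabilities in REFERENCE.items():
        have = inventory.get(pipeline, {})
        missing = [c for c in capabilities if not have.get(c)]
        if missing:
            gaps[pipeline] = missing
    return gaps

def render_table(inventory):
    """Render the audit as a plain-text table, marking empty cells as (blank)."""
    rows = ["pipeline | capability | your component"]
    for pipeline, capabilities in REFERENCE.items():
        for capability in capabilities:
            component = inventory.get(pipeline, {}).get(capability) or "(blank)"
            rows.append(f"{pipeline} | {capability} | {component}")
    return "\n".join(rows)

# Hypothetical inventory: only one capability is covered so far.
inventory = {"training data synthesis": {"simulated workspace": "container sandbox"}}
print(render_table(inventory))
print(audit(inventory))
```

A text-based memory design may legitimately leave the OCR-Memory rows blank. In that case, swap in the equivalent recall and compression capabilities rather than treating the blank as debt.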