Today's Overview

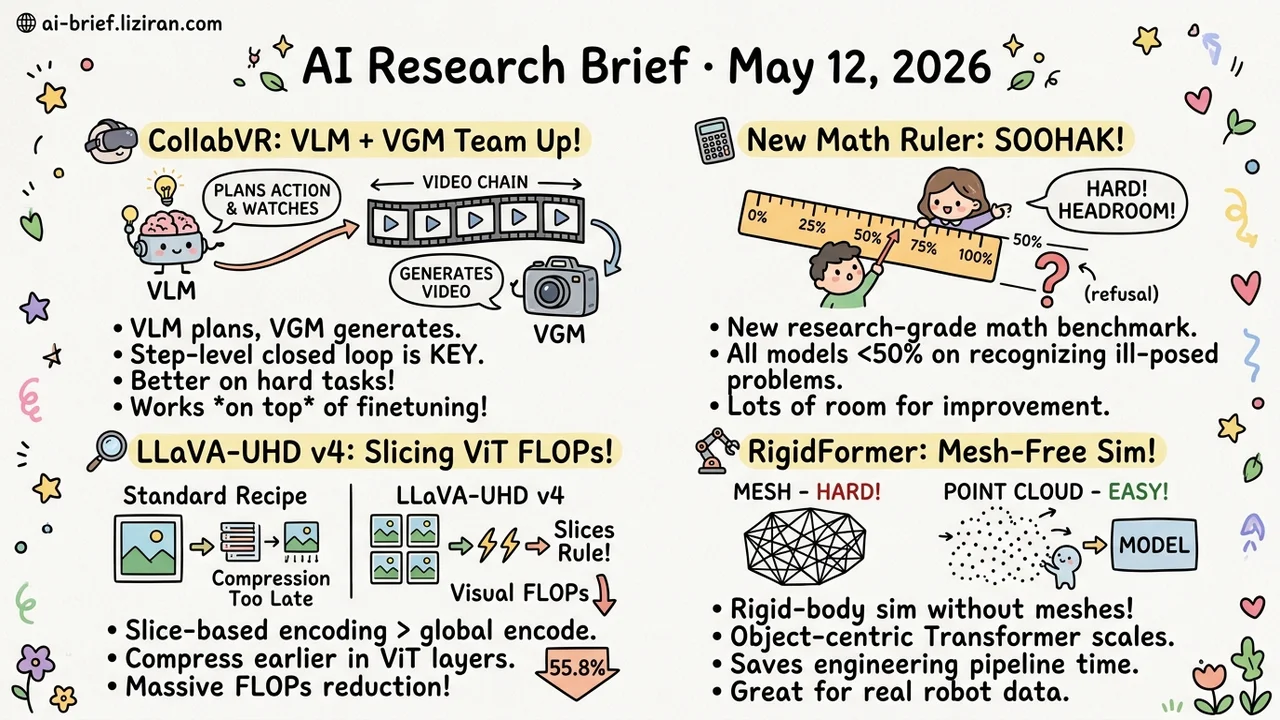

- CollabVR Splits Video Reasoning Between VLM and VGM. A step-level closed loop keeps long-horizon goals on track while curbing short-horizon simulation drift. External supervision stacks with VGM-side reasoning fine-tuning.

- The Next Math Ruler After IMO Gold. Soohak pins Gemini-3-Pro at 30.4% on research-grade problems and isolates refusal (recognizing ill-posed problems), where every model sits under 50%.

- MLLMs Optimize the Wrong Compression Layer. LLaVA-UHD v4 moves compression into shallow ViT layers and replaces global encoding with slice-based encoding. Visual encoding FLOPs drop 55.8% with downstream performance intact.

- Rigid-Body Sim Without the Mesh. RigidFormer feeds point clouds directly through an object-centric Transformer and scales past 200 objects. The win sits in the engineering pipeline, not the accuracy ceiling.

Featured

01 VLM and VGM Should Split the Video-Reasoning Load

"Thinking with Video" runs video generation models (VGMs) frame by frame to simulate physical processes or multi-step tasks, turning the generated Chain-of-Frames into reasoning traces. Even with the strongest VGM, two failure modes keep showing up. Goals drift on long-horizon tasks. Mid-clip simulation errors compound.

CollabVR argues that neither problem will be solved by scaling VGMs further. Add an explicit reasoning layer instead: a vision-language model (VLM). The architecture is straightforward. At each step the VLM plans the next action, watches the VGM-generated clip, then folds its diagnosis back into the next prompt to repair the trajectory.

Granularity is what matters. Planning the whole sequence upfront commits before any frame has been seen. Critiquing the whole clip at the end intervenes too late. A step-level closed loop sits in between and works. On Gen-ViRe and VBVR-Bench, the harder the task, the larger the gain. And this external supervision stacks on top of VGMs that have already gone through reasoning fine-tuning.
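The step-level loop is easy to picture in code. What follows is a minimal sketch, not CollabVR's implementation: `closed_loop`, `StepRecord`, and the three `toy_*` functions are hypothetical stand-ins for the VLM planner, the VGM, and the VLM critic, and the "clips" are plain strings.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class StepRecord:
    prompt: str      # what the planner asked the VGM to render
    clip: str        # stand-in for the generated clip
    diagnosis: str   # empty string means the critic accepted the clip


def closed_loop(
    goal: str,
    plan_step: Callable[[str, List[StepRecord], str], Optional[str]],
    generate_clip: Callable[[str], str],
    critique: Callable[[str, str, str], str],
    max_steps: int = 6,
) -> List[StepRecord]:
    """Plan one step, generate it, critique it, feed the critique back."""
    history: List[StepRecord] = []
    diagnosis = ""
    for _ in range(max_steps):
        prompt = plan_step(goal, history, diagnosis)
        if prompt is None:          # planner says the goal is reached
            break
        clip = generate_clip(prompt)
        diagnosis = critique(goal, prompt, clip)
        history.append(StepRecord(prompt, clip, diagnosis))
    return history


# --- toy stand-ins (assumptions, for illustration only) ---
STEPS = ["pick up cup", "move cup to shelf", "release cup"]


def toy_plan(goal: str, history: List[StepRecord], diagnosis: str) -> Optional[str]:
    done = sum(1 for r in history if r.diagnosis == "")
    if done >= len(STEPS):
        return None
    prompt = STEPS[done]
    if diagnosis:                   # retry the failed step with the fix attached
        prompt += f" (fix: {diagnosis})"
    return prompt


def toy_generate(prompt: str) -> str:
    # Pretend the generator drifts on the "move" step unless told how to fix it.
    if prompt.startswith("move") and "fix:" not in prompt:
        return "clip: cup drifts off frame"
    return f"clip: {prompt}"


def toy_critique(goal: str, prompt: str, clip: str) -> str:
    return "cup left the frame" if "drifts" in clip else ""


history = closed_loop("put the cup on the shelf", toy_plan, toy_generate, toy_critique)
```

In this toy run the second step fails once, the critic's diagnosis is appended to the retry prompt, and the loop then moves on. A whole-plan-upfront variant would never see that failure, and an end-only critique would see it after the later steps had already been generated on top of it.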

Key takeaways:

- "Video as reasoning" is shifting from one-model-bigger to VLM+VGM specialization. Long-horizon planning and short-horizon simulation split across two models.
- Step-level closed-loop checking is the engineering win available today. More effective than upfront planning or post-hoc critique.
- External supervision is orthogonal to a VGM's own reasoning fine-tuning. Stack them. No either-or.

Source: CollabVR: Collaborative Video Reasoning with Vision-Language and Video Generation Models

02 After IMO Gold, Math Evals Have a New Axis

Frontier models cleared IMO gold this year, and "math ability" as an eval dimension is losing resolution fast. Competition problems test step-by-step solutions. Real math research uses reasoning to push the knowledge frontier itself.

Soohak was written from scratch by 64 mathematicians. It has 439 problems, roughly an order of magnitude more than Riemann Bench (25) and FrontierMath-Tier 4 (50). On the Challenge subset, Gemini-3-Pro hits 30.4%, GPT-5 26.4%, Claude-Opus-4.5 10.4%. Open models sit below 15%. Headroom across the board.

The refusal subset is more interesting. It tests whether a model can recognize "this problem is ill-posed" and decline to answer. Every model lands below 50%. That points at a training target almost nobody has optimized for explicitly: teaching reasoning models to admit a problem shouldn't have an answer.
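To make the refusal axis concrete, here is a small sketch of how such a subset could be scored separately from ordinary accuracy. It is an assumption about the general shape of the metric, not Soohak's grading code: the `Graded` record, its fields, and `subset_scores` are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Graded:
    ill_posed: bool               # ground truth: the problem has no valid answer
    refused: bool                 # the model declined and flagged the problem
    answer_correct: bool = False  # only meaningful for well-posed problems


def _rate(hits: int, total: int) -> float:
    return hits / total if total else 0.0


def subset_scores(records: List[Graded]) -> Dict[str, float]:
    solvable = [r for r in records if not r.ill_posed]
    ill = [r for r in records if r.ill_posed]
    return {
        # Well-posed problems: credit only a correct, non-refused answer.
        "solve_accuracy": _rate(
            sum(r.answer_correct and not r.refused for r in solvable), len(solvable)
        ),
        # Ill-posed problems: credit only an explicit refusal.
        "refusal_accuracy": _rate(sum(r.refused for r in ill), len(ill)),
        # Guard against a model that refuses everything.
        "false_refusal_rate": _rate(sum(r.refused for r in solvable), len(solvable)),
    }


scores = subset_scores([
    Graded(ill_posed=False, refused=False, answer_correct=True),
    Graded(ill_posed=False, refused=True),
    Graded(ill_posed=True, refused=True),
    Graded(ill_posed=True, refused=False),
])
```

Tracking the false-refusal rate next to refusal accuracy matters for any training target built on this: a model that declines every hard problem would otherwise look perfect on the refusal subset.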

Key takeaways:

- Research-level math is becoming the next eval ruler, but even the best model barely clears 30%. Plenty of headroom.
- Refusal on ill-posed problems sits below 50% for every model. A new RL target nobody has pushed on.
- Dataset stays private until late 2026 to prevent contamination. Remote evaluation only. Anyone working on eval or reasoning products can request access early.

Source: Soohak: A Mathematician-Curated Benchmark for Evaluating Research-level Math Capabilities of LLMs

03 High-Res MLLMs Are Optimizing the Wrong Layer

The standard recipe for high-res MLLM processing has become almost reflexive. Global encode produces a long visual token sequence. Post-ViT compression squeezes it down. The compressed tokens go into the LLM. LLaVA-UHD v4 revisits this flow and points out an easy-to-miss fact. ViT's quadratic attention cost is already paid before token compression runs. Compression only saves on the LLM side.

The paper changes two things. On encoding strategy, slice-based encoding beats global encode across multiple benchmarks. Preserving local detail helps fine-grained perception more than chasing global attention. On compression placement, push it forward into shallow ViT layers (intra-ViT early compression). That is where the real FLOPs reduction lives.
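A back-of-the-envelope cost model shows why both changes hit the encoder where post-ViT compression cannot. The sketch below uses the common per-layer Transformer estimate (about 24·N·d² for projections and the MLP, plus 4·N²·d for attention). The token counts, width, depth, slice count, and keep ratio are illustrative assumptions, not LLaVA-UHD v4's configuration, and the model ignores slice overlap and any extra thumbnail view.

```python
def layer_flops(n_tokens: int, dim: int) -> int:
    """Approximate forward FLOPs of one Transformer layer."""
    linear = 24 * n_tokens * dim * dim          # QKV/out projections + 4x-wide MLP
    attention = 4 * n_tokens * n_tokens * dim   # QK^T scores + weighted sum over V
    return linear + attention


def vit_flops(n_tokens: int, dim: int, n_layers: int) -> int:
    return n_layers * layer_flops(n_tokens, dim)


def sliced_vit_flops(n_tokens: int, n_slices: int, dim: int, n_layers: int) -> int:
    """Encode each slice independently; attention never crosses slices."""
    per_slice = n_tokens // n_slices
    return n_slices * vit_flops(per_slice, dim, n_layers)


def early_compressed_vit_flops(
    n_tokens: int, dim: int, n_layers: int, compress_after: int, keep_ratio: float
) -> int:
    """Full-length tokens for the first layers, compressed tokens for the rest."""
    kept = int(n_tokens * keep_ratio)
    head = vit_flops(n_tokens, dim, compress_after)
    tail = vit_flops(kept, dim, n_layers - compress_after)
    return head + tail


# Illustrative numbers only (assumptions, not taken from the paper).
N, D, L = 4096, 1024, 24

global_cost = vit_flops(N, D, L)
post_vit_cost = global_cost                     # compressing after the ViT saves nothing here
sliced_cost = sliced_vit_flops(N, 4, D, L)
early_cost = early_compressed_vit_flops(N, D, L, compress_after=6, keep_ratio=0.25)

print(f"slice-based / global:      {sliced_cost / global_cost:.2f}")
print(f"early-compressed / global: {early_cost / global_cost:.2f}")
```

Slicing leaves the linear term unchanged and shrinks only the quadratic attention term, while early compression shrinks both terms for every layer after the compression point. Under this model a post-ViT reducer leaves the encoder bill exactly where it was.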

Combined, visual encoding FLOPs drop 55.8% with downstream performance flat or slightly up. The full paper is worth checking to see how the slice strategy extends to video or longer inputs.

Key takeaways:

- High-res MLLM optimization should move upstream into encoding. Tuning the token reducer is optimizing the wrong layer.
- Slice-based encoding deserves to be the default for new projects, not global encode.
- For MLLM inference optimization, profile ViT vs LLM FLOPs split before picking a target.

Source: LLaVA-UHD v4: What Makes Efficient Visual Encoding in MLLMs?

04 Rigid-Body Sim Without the Mesh

Learned rigid-body dynamics simulation has long been tied to meshes and vertex-level message passing. Inputs must be clean meshes. Point clouds, scan data, and other mesh-free representations can't go in directly.

RigidFormer replaces vertex-level interaction with an object-centric Transformer. Each object gets a compact anchor set. Anchor-Vertex Pooling injects local geometry and avoids attention over all vertices. To stay invariant under anchor reordering, the model uses Anchor-based RoPE. Differentiable Kabsch alignment then projects updates back onto the rigid-body manifold, so the network can't break physical constraints.
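The Kabsch step is the easiest piece to illustrate in isolation. The sketch below is the textbook SVD-based Kabsch alignment in NumPy, used to snap noisy per-point predictions back to a single rotation plus translation. It is not RigidFormer's code and is not wired into autograd; `kabsch` and `project_to_rigid` are illustrative names, and a differentiable version would run the same steps inside an autodiff framework.

```python
import numpy as np


def kabsch(source: np.ndarray, target: np.ndarray):
    """Best-fit rotation R and translation t with R @ source_i + t ~ target_i.

    Both inputs are (N, 3) arrays with points in corresponding order.
    """
    src_centroid = source.mean(axis=0)
    tgt_centroid = target.mean(axis=0)
    # 3x3 cross-covariance of the centered point sets.
    H = (source - src_centroid).T @ (target - tgt_centroid)
    U, _, Vt = np.linalg.svd(H)
    # Flip the last axis if needed so R is a proper rotation (det = +1).
    d = np.sign(np.linalg.det(Vt.T @ U.T))
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    t = tgt_centroid - R @ src_centroid
    return R, t


def project_to_rigid(rest_points: np.ndarray, predicted_points: np.ndarray) -> np.ndarray:
    """Replace free-form predictions by the closest rigid motion of the rest shape."""
    R, t = kabsch(rest_points, predicted_points)
    return rest_points @ R.T + t


# Toy check: a known rigid motion plus noise, standing in for network output.
rng = np.random.default_rng(0)
rest = rng.normal(size=(8, 3))
theta = 0.7
true_R = np.array([
    [np.cos(theta), -np.sin(theta), 0.0],
    [np.sin(theta),  np.cos(theta), 0.0],
    [0.0,            0.0,           1.0],
])
true_t = np.array([1.0, 2.0, 3.0])
noisy_prediction = rest @ true_R.T + true_t + 0.05 * rng.normal(size=rest.shape)
projected = project_to_rigid(rest, noisy_prediction)
```

Whatever the noisy prediction looks like, `projected` has exactly the same pairwise distances as `rest`, which is the sense in which the projection keeps the object rigid.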

Results match or beat mesh baselines, scale past 200 objects, and generalize across unseen point cloud resolutions. For robotics and physics simulation teams, what gets relaxed is the input-side preprocessing burden, not the model's accuracy ceiling. Whether to switch comes down to how much of your pipeline is mesh cleanup.

Key takeaways:

- Rigid-body sim drops the mesh requirement. Point clouds go in directly. Less preprocessing.
- Object-centric design ties compute to object count rather than vertex count. 200+ object scale is practical.
- Input-side simplification fits real robot data better. Accuracy gains are modest. The win is in the engineering pipeline.

Source: RigidFormer: Learning Rigid Dynamics using Transformers

Also Worth Noting

Today's Observation

Soohak and MLS-Bench landed the same day. Two versions of the same move. Push the eval target up from "apply existing knowledge" to "create new knowledge." One asks models to push open math problems forward. The other asks models to invent generalizable, scalable ML methods. Shared premise: frontier models are hitting the ceiling on "high-difficulty tasks with definite answers" (IMO gold, coding contests), and the next line needs to be drawn somewhere new.

The interesting move is the tier shift. Eval targets are sliding from problem-solving to method-invention. If current models score low on "create method," the next round of work shouldn't aim at squeezing out a few more competition points. The target becomes capability along the "propose problem → design method → verify" loop. How quickly scores on that loop improve will shape how training data gets built and how RL tasks get designed.

Practical step. If you're sitting on a reasoning model or eval product, apply for remote evaluation while both benchmarks are fresh, or read the refusal and method-invention problem formulations directly. Far more telling than the scoreboard. On the product side, revisit whether your model "just answers questions better" or "is starting to propose new approaches." The two answers point at very different deployment forms.