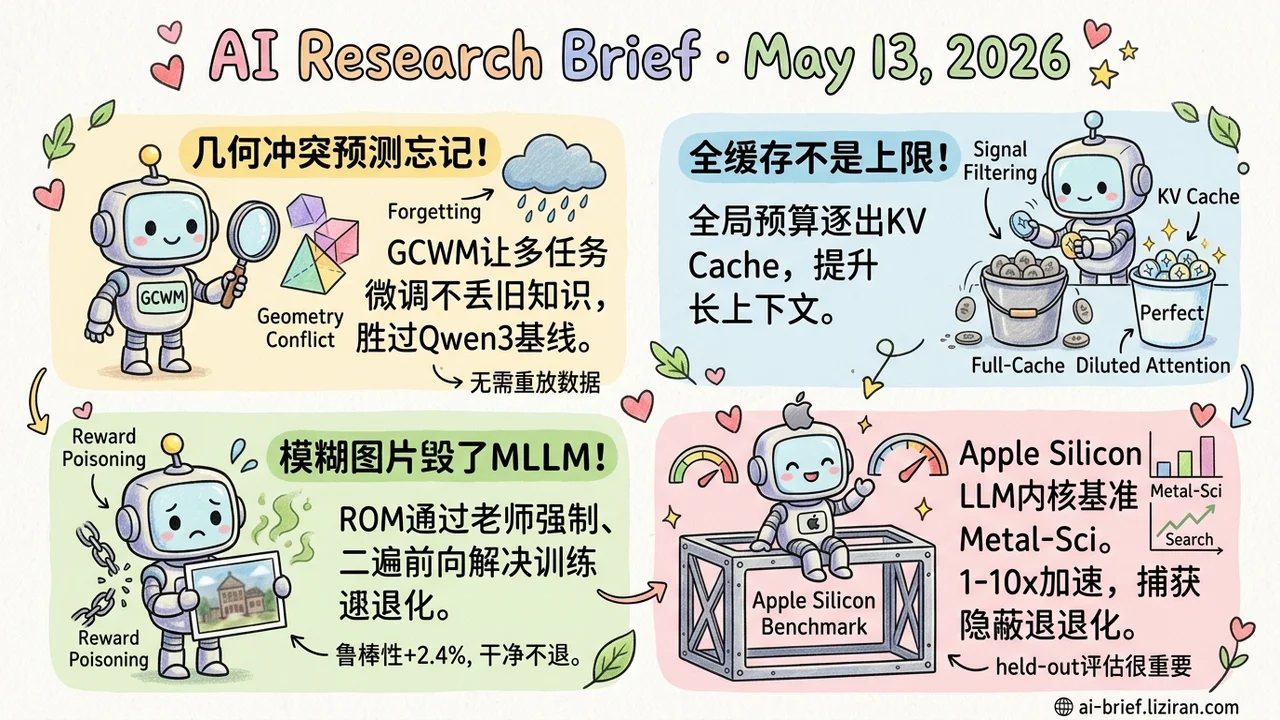

Today's Overview

- Geometry Conflict Predicts Continual Fine-Tuning Forgetting. Treating each task's parameter-update covariance as a measurable signal, GCWM beats data-free baselines on Qwen3 0.6B-14B across both domain and capability continual settings.

- Full-Cache Is No Longer the Ceiling for KV Eviction. Irrelevant tokens dilute attention in long context. A learnable global-budget eviction policy beats full-cache, and KV cache should be reframed as signal filtering.

- MLLMs Get Wrecked by Blurry Production Images. Throwing degraded samples into RL rollouts triggers reward poisoning. ROMA uses a second forward pass plus teacher forcing to handle visual degradation on the training side.

- Apple Silicon LLM Kernel Tuning Finally Has a Benchmark. Metal-Sci packages 10 scientific compute tasks, CPU baselines, roofline-anchored fitness, and an evolutionary search harness, with held-out sizes catching silent regressions.

Featured

01 Geometry Conflict Predicts Forgetting Before You Train

Continual post-training now has a measurable signal that lets you predict forgetting before it happens. Represent each post-training task by its update to the model parameters, then look at the covariance geometry of that update — where the update points and how it spreads. The paper's core observation: forgetting comes not from inherent task conflict, but from misalignment between the new task's induced geometry and the model's current geometry. Aligned, you get capability transfer. Misaligned, old knowledge gets squeezed out.

Building on this, GCWM (Geometry-Conflict Wasserstein Merging) constructs a shared metric using Gaussian Wasserstein barycenters and gates the merge based on geometric conflict. No replay data needed. Across Qwen3 from 0.6B to 14B, on both domain-continual and capability-continual scenarios, GCWM consistently beats other data-free baselines.
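
To make "covariance geometry" concrete, here is a minimal sketch of the standard closed-form squared 2-Wasserstein distance between two Gaussians, the quantity that Gaussian Wasserstein barycenters are built on. This is not GCWM's conflict criterion, which the abstract does not spell out. The function names and the choice to treat each task's updates as samples of a Gaussian are assumptions made for illustration.

```python
import numpy as np


def psd_sqrt(mat: np.ndarray) -> np.ndarray:
    """Square root of a symmetric PSD matrix via eigendecomposition."""
    sym = (mat + mat.T) / 2.0
    eigvals, eigvecs = np.linalg.eigh(sym)
    eigvals = np.clip(eigvals, 0.0, None)  # guard against tiny negative values
    return (eigvecs * np.sqrt(eigvals)) @ eigvecs.T


def update_covariance(updates: np.ndarray) -> np.ndarray:
    """Covariance of update vectors; rows are samples, columns are parameters."""
    return np.cov(updates, rowvar=False)


def gaussian_w2_squared(mean_a, cov_a, mean_b, cov_b) -> float:
    """Squared 2-Wasserstein distance between N(mean_a, cov_a) and N(mean_b, cov_b).

    W2^2 = ||mean_a - mean_b||^2 + tr(A + B - 2 (A^1/2 B A^1/2)^1/2)
    """
    root_a = psd_sqrt(cov_a)
    cross = psd_sqrt(root_a @ cov_b @ root_a)
    mean_term = float(np.sum((np.asarray(mean_a) - np.asarray(mean_b)) ** 2))
    cov_term = float(np.trace(cov_a + cov_b - 2.0 * cross))
    return mean_term + max(cov_term, 0.0)  # clamp round-off below zero


# Hypothetical usage: compare the update geometry of two tasks.
rng = np.random.default_rng(0)
task_a_updates = rng.normal(size=(200, 4))          # toy update samples
task_b_updates = rng.normal(size=(200, 4)) * 3.0    # same directions, wider spread
distance = gaussian_w2_squared(
    task_a_updates.mean(axis=0), update_covariance(task_a_updates),
    task_b_updates.mean(axis=0), update_covariance(task_b_updates),
)
```

A larger distance means the two update distributions point or spread differently. Whether and how GCWM thresholds such a quantity to gate the merge is something only the full paper can confirm.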

For teams running continual SFT pipelines, this could shift the design from "replay/regularization to clean up afterward" toward "predict first, then decide whether to train at all." The abstract doesn't disclose how expensive the criterion itself is to compute, so whether it can run cheaply on production-scale models still needs the full paper to confirm.

Key takeaways:

- Update covariance geometry is a sharper signal than "task similarity" or "task conflict" intuitions for predicting forgetting.
- GCWM works without replay data and lifts retention across Qwen3 sizes from 0.6B to 14B. Useful when memory or data compliance is constrained.
- Whether the criterion runs cheaply in production decides whether this becomes tooling. Check the full paper.

Source: Geometry Conflict: Explaining and Controlling Forgetting in LLM Continual Post-Training

02 Throwing Out Irrelevant Tokens Makes Long Context More Accurate

Anyone working on long-context inference defaults to one assumption: KV cache eviction aims to approximate full-cache, and full retention sets the upper bound. This paper says no. Too many irrelevant tokens dilute attention in long context and pull the model away from useful evidence.

The authors train a lightweight retention gate that scores each token's future utility. Tokens compete across layers, heads, and modalities under one shared memory budget. Memory drops sharply, and on long-context language reasoning, vision-language reasoning, and multi-turn dialogue, the result matches or beats full-cache.
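
The difference between a shared budget and per-layer budgets can be sketched in a few lines. The scores below are hypothetical stand-ins for the retention gate's output, the `(layer, head, token)` keying is an assumption, and the modality dimension is left out for brevity. This shows only the selection step, not the paper's trained policy.

```python
from typing import Dict, List, Tuple

Slot = Tuple[int, int, int]  # (layer, head, token position)


def keep_global(scores: Dict[Slot, float], budget: int) -> List[Slot]:
    """Keep the `budget` highest-scoring cache entries across all layers and heads."""
    ranked = sorted(scores, key=lambda slot: scores[slot], reverse=True)
    return sorted(ranked[:budget])


def keep_per_layer(scores: Dict[Slot, float], budget_per_layer: int) -> List[Slot]:
    """Baseline: every layer keeps its own top entries independently."""
    by_layer: Dict[int, Dict[Slot, float]] = {}
    for slot, score in scores.items():
        by_layer.setdefault(slot[0], {})[slot] = score
    kept: List[Slot] = []
    for layer_scores in by_layer.values():
        kept.extend(keep_global(layer_scores, budget_per_layer))
    return sorted(kept)


# Toy scores: layer 0 holds the useful tokens, layer 1 mostly noise.
toy_scores = {
    (0, 0, 0): 0.9, (0, 0, 1): 0.8, (0, 0, 2): 0.7,
    (1, 0, 0): 0.2, (1, 0, 1): 0.1, (1, 0, 2): 0.05,
}
shared = keep_global(toy_scores, budget=4)          # three slots from layer 0, one from layer 1
split = keep_per_layer(toy_scores, budget_per_layer=2)  # two slots from each layer
```

With the same total of four slots, the shared budget keeps all three high-utility entries in layer 0, while the per-layer split is forced to spend half its budget on low-scoring entries.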

KV cache stops being a compression problem and becomes a signal-filtering one. Eviction isn't just about cost. It can also lift long-context quality.

Key takeaways:

- Full-cache is no longer the ceiling for KV eviction. Learnable selective dropping can improve long-context reasoning quality.
- Global unified budget with cross-layer, cross-head, cross-modality competition beats traditional per-layer-independent eviction.
- Teams building RAG, long-document, or multi-turn agent products should reframe KV eviction from "compression tool" to "signal filtering mechanism."

Source: Make Each Token Count: Towards Improving Long-Context Performance with KV Cache Eviction

03 MLLMs That Reason in the Lab Fall Apart on a Single Blurry Photo

After GRPO and other critic-free RL methods pushed multimodal reasoning forward, deployment hits a wall. Slightly blurry surveillance frames, low-resolution scans, images that went through several JPEG passes — accuracy drops noticeably. Traditional visual robustness tricks (static data augmentation, value-based regularization) don't transplant cleanly into this RL setting. Feeding degraded images directly into rollout triggers reward poisoning. The model invents wrong reasoning paths over the blurry image and steers the policy off course.

ROMA uses a second forward pass with teacher forcing to evaluate the degraded view. Add a token-level KL penalty and "regularize only on correct trajectories," and the collapse is avoided. On Qwen3-VL 4B/8B across seven reasoning benchmarks, performance on seen and unseen degradations improves by 2.4% and 2.3% respectively. Clean-input accuracy holds.
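
One way to read "token-level KL penalty, only on correct trajectories" is sketched below. This is not ROMA's loss. The KL direction, the averaging scheme, and all function names are assumptions, and the degraded logits are assumed to come from re-scoring the same sampled tokens on the degraded image (the teacher-forced second pass).

```python
import math
from typing import List, Sequence

Logits = Sequence[float]


def log_softmax(logits: Logits) -> List[float]:
    """Numerically stable log-softmax over one token's vocabulary logits."""
    peak = max(logits)
    log_norm = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return [x - log_norm for x in logits]


def token_kl(clean_logits: Logits, degraded_logits: Logits) -> float:
    """KL(clean || degraded) at one token position (direction is an assumption)."""
    log_p = log_softmax(clean_logits)
    log_q = log_softmax(degraded_logits)
    return sum(math.exp(lp) * (lp - lq) for lp, lq in zip(log_p, log_q))


def masked_kl_penalty(
    clean: Sequence[Sequence[Logits]],
    degraded: Sequence[Sequence[Logits]],
    is_correct: Sequence[bool],
) -> float:
    """Mean token-level KL over trajectories marked correct; others are skipped."""
    total, count = 0.0, 0
    for clean_traj, degraded_traj, ok in zip(clean, degraded, is_correct):
        if not ok:
            continue  # wrong rollouts never pull the degraded view toward them
        for clean_tok, degraded_tok in zip(clean_traj, degraded_traj):
            total += token_kl(clean_tok, degraded_tok)
            count += 1
    return total / count if count else 0.0


# Two one-token trajectories over a two-word vocabulary (toy values).
clean_batch = [[[0.0, 0.0]], [[1.0, 0.0]]]
degraded_batch = [[[0.0, 0.0]], [[0.0, 1.0]]]
penalty_all = masked_kl_penalty(clean_batch, degraded_batch, [True, True])
penalty_masked = masked_kl_penalty(clean_batch, degraded_batch, [True, False])
```

Masking the second trajectory removes its disagreement from the penalty entirely, which is the mechanism that keeps wrong reasoning over a blurry image from shaping the policy.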

The numbers aren't striking. What's worth tracking is the framing: visual degradation as an RL training objective rather than a preprocessing or post-processing problem. Teams pushing multimodal models toward production should watch this training-side path.

Key takeaways:

- Real-world MLLM deployment suffers from variable image quality, but throwing degraded samples into RL rollout triggers reward poisoning. Fix on the training side, not the data side.
- ROMA evaluates degraded samples through a second teacher-forcing pass and confines regularization to correct trajectories, avoiding policy contamination.
- The 2-3% robustness gain is modest, but bringing visual degradation into the RL training loop is the more interesting move.

Source: Reinforcing Multimodal Reasoning Against Visual Degradation

04 LLM Kernel Tuning on Apple Silicon Finally Has Solid Ground

Almost all work on LLM-generated GPU kernels runs on CUDA. Apple Silicon teams doing inference or research have lacked a standard benchmark and search harness. Metal-Sci ships 10 scientific compute kernel tasks spanning six optimization patterns (stencil, n-body, Boltzmann, molecular dynamics, multi-kernel PDE, FFT), CPU reference implementations, a roofline-anchored fitness function, and a lightweight evolutionary search loop. The LLM iterates on kernel code on an M1 Pro by itself.
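
For readers unfamiliar with the roofline model, the sketch below shows the textbook bound and one plausible way to score a kernel against it. Metal-Sci's actual fitness function is not described here, so `roofline_fraction` is an assumption, and the hardware numbers are placeholders rather than M1 Pro specifications.

```python
def roofline_bound(flops: float, bytes_moved: float,
                   peak_flops: float, peak_bandwidth: float) -> float:
    """Attainable FLOP/s: min(peak compute, bandwidth * arithmetic intensity)."""
    intensity = flops / bytes_moved  # FLOPs per byte of memory traffic
    return min(peak_flops, peak_bandwidth * intensity)


def roofline_fraction(flops: float, bytes_moved: float, seconds: float,
                      peak_flops: float, peak_bandwidth: float) -> float:
    """Achieved throughput as a fraction of the roofline bound (hypothetical fitness)."""
    achieved = flops / seconds
    return achieved / roofline_bound(flops, bytes_moved, peak_flops, peak_bandwidth)


# Placeholder hardware limits, not measured values for any real chip.
PEAK_FLOPS = 1e12       # FLOP/s
PEAK_BANDWIDTH = 1e11   # bytes/s

# A memory-bound kernel: 1 FLOP per byte, finishing in 20 ms.
fraction = roofline_fraction(1e9, 1e9, 0.02, PEAK_FLOPS, PEAK_BANDWIDTH)
```

Anchoring fitness to the bound rather than to raw speedup makes scores comparable across kernels whose ceilings differ, since a memory-bound stencil and a compute-bound n-body kernel have very different attainable peaks.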

Three models compared: Claude Opus 4.7, Gemini 3.1 Pro, GPT 5.5. In-distribution speedups range from 1.00× to 10.7×. The real methodological contribution is the held-out-size evaluation. Opus's HMC kernel won on the search set but produced sampling errors at unseen dimensions. GPT's best FFT3D solution hit 2.95× in distribution but collapsed to 0.23× on the held-out 256³ set. Single search-set scores can't surface that kind of silent regression.
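
The held-out check is simple enough to bolt onto any auto-tuning loop. The sketch below is an assumed shape for such a check, not Metal-Sci's harness. The FFT3D speedups are the ones reported above, and the stencil numbers are invented for contrast.

```python
from typing import Dict, List


def silent_regressions(search: Dict[str, float],
                       held_out: Dict[str, float],
                       baseline: float = 1.0) -> List[str]:
    """Kernels that beat the baseline on the search set but lose on held-out sizes."""
    flagged = []
    for name, search_speedup in search.items():
        held_speedup = held_out.get(name)
        if held_speedup is None:
            continue  # no held-out measurement, nothing to compare
        if search_speedup > baseline and held_speedup < baseline:
            flagged.append(name)
    return sorted(flagged)


search_speedups = {"fft3d": 2.95, "stencil": 1.8}    # stencil value is illustrative
held_out_speedups = {"fft3d": 0.23, "stencil": 1.7}  # stencil value is illustrative
flagged = silent_regressions(search_speedups, held_out_speedups)
print(flagged)  # ['fft3d']
```

This only catches performance regressions. A failure like the HMC sampling errors needs a separate correctness comparison against the CPU reference at the held-out sizes.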

For Mac/iOS ML engineering teams, this is overdue infrastructure. Whether the toolchain is complete enough depends on how the community picks it up.

Key takeaways:

- Apple Silicon LLM kernel search has its first comparable public benchmark and harness. Mac/iOS ML engineering gets infrastructure it was missing.
- Held-out-size evaluation as mechanical oversight catches silent regressions that search-set scores hide. Worth borrowing for any auto-tuning pipeline.
- The 1.00× to 10.7× in-distribution spread shows the same LLM has very different ability across kernel patterns. Trusting a single point result will burn you.

Source: Metal-Sci: A Scientific Compute Benchmark for Evolutionary LLM Kernel Search on Apple Silicon

Also Worth Noting

Today's Observation

Read Geometry Conflict and KV Cache together and a recurring dynamic inside LLMs comes through. The default scaling assumption — train one more round = better, cache more tokens = better — doesn't actually hold monotonically inside the model. Geometry Conflict translates "will continued training trigger forgetting" into the alignment of parameter-update covariance geometry, giving a measurable predictor before you train. The KV Cache paper shows full-cache attention in long context gets diluted by irrelevant tokens, and learnable eviction doesn't just approximate full-cache. It beats it.

Both papers do the same thing. Use the model's internal geometry or attention structure to explain when "more" starts working in reverse, then build an online-readable trade-off tool from that explanation. Multi-Indicator Data Selection in Also Worth Noting does the same on the data side. Modeling the instruction-tuning selection function matters more than data volume, and joint task-model adaptive weights are sharper than static multi-dimensional heuristics.

Concrete step. If you're running a continual SFT pipeline, long-context inference, or a large-scale SFT data pipeline, pick one internal signal (parameter update covariance, attention distribution, single-sample contribution) and run a measurement pass. See whether the scaling assumption has already passed its inflection point in your setup. If it has, the next investment isn't "train a bit more / cache a bit more / collect a bit more data." Make that signal online-readable so the pipeline decides when to stop.