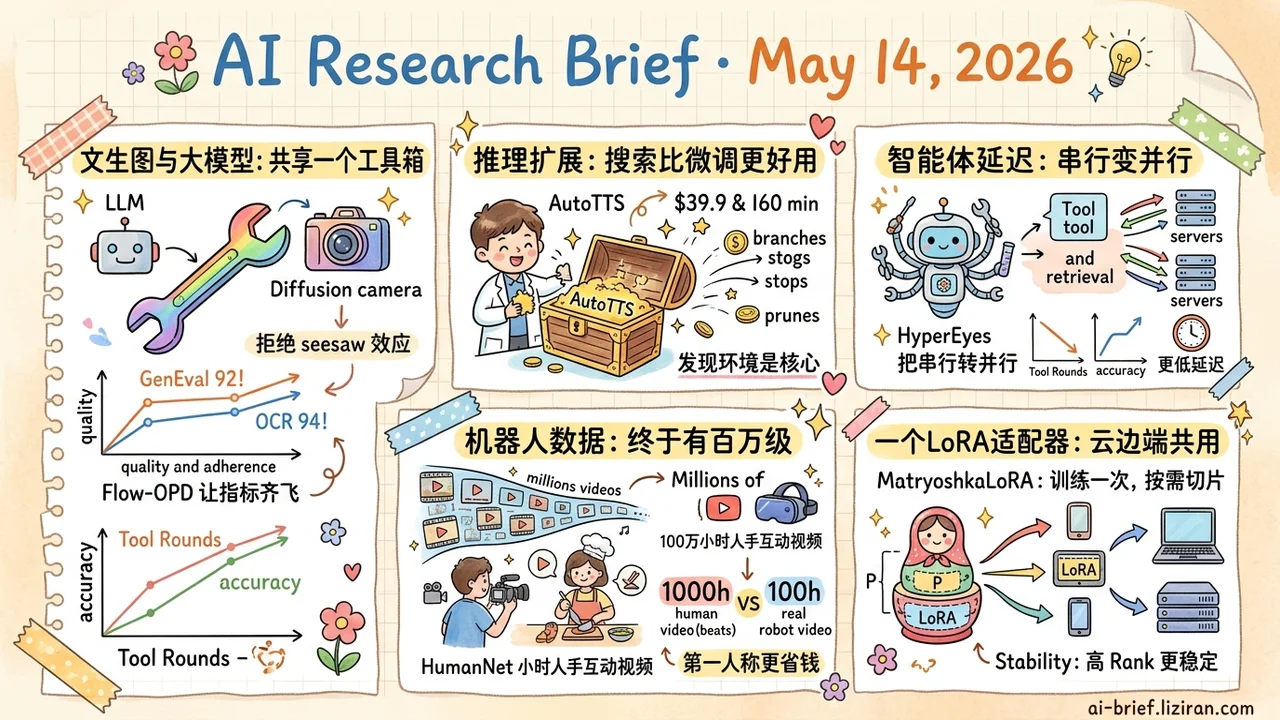

Today's Overview

- Image Generation Alignment and LLM Post-Training Now Share One Toolbox. Flow-OPD ports On-Policy Distillation to flow matching. SD 3.5 Medium hits GenEval 92 (up from 63) and OCR 94 (up from 59), about 10 points over plain GRPO.

- Test-Time Scaling Strategies Can Be Searched, Not Tuned. AutoTTS lifts researcher work up one level: build a discovery environment instead of designing a strategy. 160 minutes and $39.9 of search yield policies that transfer across benchmarks and model sizes.

- Agent Latency Bottlenecks Are Often Serialized Parallel Opportunities. HyperEyes turns independent sub-retrievals within a single round into parallel atomic actions. The 30B version gains 9.9% accuracy with 5.3× fewer tool-call rounds.

- Physical Interaction Data Finally Hits Million-Hour Scale. HumanNet ships 1M hours of human activity video with first- and third-person views. 1000 hours of first-person video beats 100 hours of real robot data for continued training.

- One LoRA Adapter Serves Cloud and Edge. MatryoshkaLoRA reorganizes rank as nested hierarchy. Pick a tier per device at deploy time. More stable than DyLoRA at the high-rank end.

Featured

01 Text-to-Image Borrows the LLM Post-Training Stack

LLM post-training has a classic problem. Multiple reward signals fight each other, and models drift into reward hacking. Flow matching (the dominant diffusion-style generative paradigm) hits the same trouble in multi-task alignment: the "seesaw effect" where one metric rises only as another falls. The shape of the problem is identical to LLM multi-objective alignment.

Flow-OPD ports the LLM-side solution wholesale. Train a specialist teacher per single-reward GRPO run, then distill them into one student via on-policy sampling and task routing. Add a task-agnostic "manifold anchoring" term to keep image quality from collapsing.

Task routing matters because direct multi-reward GRPO weights several rewards into one scalar and squeezes the same parameters at every gradient step. The objectives end up competing for gradient direction. OPD instead dispatches each sample to its specialist teacher, so multi-objective optimization becomes a hard split along the sample dimension and gradients stop canceling. Manifold anchoring is the safety net — pushing too hard on rewards slips the model into hack regions where metrics climb but quality dies. Anchoring constrains the policy to the natural-image manifold the model already learned.
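The routing idea can be made concrete with a toy loss. This is a minimal sketch under loud assumptions, not Flow-OPD's actual objective: the teachers are scalar stand-in functions, the distillation and anchoring terms are both plain squared errors, and the names `TEACHERS`, `reference_model`, `routed_loss`, and `anchor_weight` are hypothetical.

```python
# Hypothetical stand-ins: each "teacher" maps a sample to a target velocity.
# In the described method these would be specialists from single-reward GRPO runs.
def geneval_teacher(x: float) -> float:
    return 2.0 * x

def ocr_teacher(x: float) -> float:
    return -1.0 * x

TEACHERS = {"geneval": geneval_teacher, "ocr": ocr_teacher}

def reference_model(x: float) -> float:
    # Assumed form of the anchor: a frozen copy of the pretrained model.
    return 0.5 * x

def routed_loss(student, batch, anchor_weight=0.1):
    """Mean squared error against the routed teacher, plus an anchor penalty."""
    total = 0.0
    for x, task in batch:
        pred = student(x, task)
        target = TEACHERS[task](x)      # hard routing: exactly one teacher per sample
        anchor = reference_model(x)     # task-agnostic pull toward the reference
        total += (pred - target) ** 2 + anchor_weight * (pred - anchor) ** 2
    return total / len(batch)

def perfect_student(x: float, task: str) -> float:
    # A student that reproduces whichever teacher the sample is routed to.
    return TEACHERS[task](x)

batch = [(1.0, "geneval"), (2.0, "ocr")]
print(routed_loss(perfect_student, batch, anchor_weight=0.0))
print(routed_loss(perfect_student, batch, anchor_weight=0.1))
```

Each sample contributes a target from only one teacher, so no sample is pulled toward two rewards at once. The anchor term is the only part shared across tasks, and raising `anchor_weight` trades reward-following for closeness to the reference.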

On SD 3.5 Medium, GenEval climbs from 63 to 92, OCR from 59 to 94. About 10 points over direct GRPO. Caveat: the abstract only shows seesaw mitigation, not a quantified bound on cross-task negative transfer. Real scalability needs more task combinations.

Key takeaways:

- The "multi-objective fights plus reward hacking" problem in text-to-image alignment is structurally identical to LLM post-training. Methodologies are converging.

- Two-stage OPD (specialist teachers plus task-routed distillation) lifts hard metrics like GenEval and OCR together without sacrificing image quality.

- Migration cost from LLM post-training to image teams will keep dropping. Worth auditing which parts of your stack can be ported wholesale.

Source: Flow-OPD: On-Policy Distillation for Flow Matching Models

02 Outsource TTS Strategy Tuning to the LLM Itself

Test-time scaling (TTS, spending more compute at inference for better results) has been standard for two years. Strategies still come from researchers tuning heuristics by hand: when to branch, when to prune, when to stop. AutoTTS doesn't add another trick. It changes the workflow.

Researchers stop designing the strategy and instead build a discovery environment. Width-depth TTS becomes controller synthesis over pre-sampled inference trajectories. The search loop never re-queries the LLM, which compresses discovery cost to $39.9 and 160 minutes. Searched policies beat hand-built baselines on the accuracy-cost curve and transfer to unseen benchmarks and model sizes.
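Why the search is cheap becomes clearer with a toy replay loop. The sketch below is an illustration under assumptions, not AutoTTS's search procedure: the `CACHE` contents, the two-parameter controller `(width, stop_votes)`, and the grid search are all hypothetical. The point it shows is that once trajectories are pre-sampled, scoring a controller is pure replay with no model calls.

```python
from collections import Counter
from itertools import product

# Hypothetical cache: per question, the gold answer and pre-sampled
# (answer, token_cost) pairs collected once, before any search happens.
CACHE = [
    {"gold": "4", "samples": [("4", 10), ("5", 12), ("4", 11), ("4", 9)]},
    {"gold": "7", "samples": [("3", 8), ("7", 10), ("7", 10), ("7", 12)]},
    {"gold": "2", "samples": [("2", 5), ("2", 6), ("1", 7), ("2", 5)]},
]

def replay(controller, item):
    """Replay one controller over cached samples; returns (is_correct, cost)."""
    width, stop_votes = controller
    votes, cost = Counter(), 0
    for answer, tokens in item["samples"][:width]:
        votes[answer] += 1
        cost += tokens
        if votes[answer] >= stop_votes:  # early stop once an answer has enough votes
            break
    prediction = votes.most_common(1)[0][0]
    return prediction == item["gold"], cost

def evaluate(controller, cache):
    """Accuracy and mean token cost of a controller, computed offline."""
    results = [replay(controller, item) for item in cache]
    accuracy = sum(ok for ok, _ in results) / len(results)
    mean_cost = sum(cost for _, cost in results) / len(results)
    return accuracy, mean_cost

def search(cache, budget):
    """Grid-search controllers; best accuracy under a mean-cost budget, ties to the cheaper one."""
    candidates = product(range(1, 5), range(1, 4))  # (width, stop_votes)
    scored = [(c, *evaluate(c, cache)) for c in candidates]
    feasible = [s for s in scored if s[2] <= budget]
    return max(feasible, key=lambda s: (s[1], -s[2]))

best_controller, best_accuracy, best_cost = search(CACHE, budget=30)
print(best_controller, best_accuracy, best_cost)
```

A real discovery environment would search a far richer controller space than this grid, but the cost structure is the same: sampling is paid once, and every candidate strategy after that is evaluated for free.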

What matters here isn't a few extra points on math reasoning. Scaling-era methodology — don't optimize the result, build a discovery process that can scale — has moved from the training side to the inference side. As inference budgets grow, whoever automates strategy discovery first gets the compounding return.

Key takeaways:

- TTS strategies can now be searched, not tuned. The trick: pre-sampled trajectories plus controller synthesis push search cost to tens of dollars.

- Discovered strategies transfer across benchmarks and model sizes, which means the environment is more valuable than any one strategy.

- Inference product teams should decide now: keep paying engineers to tune heuristics, or start building a strategy-discovery pipeline.

Source: LLMs Improving LLMs: Agentic Discovery for Test-Time Scaling

03 Stop Serializing Independent Agent Queries

Recent agent work competes on who thinks longer with longer chains. HyperEyes pushes the other direction: emit independent sub-retrievals in parallel within a single round. Visual grounding and retrieval fuse into one atomic action, and the agent dispatches multiple visually-anchored queries at once instead of one tool call per entity.
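The difference between serialized and parallel dispatch is easy to see in code. This is a minimal sketch using Python's `asyncio`, not HyperEyes' implementation: `retrieve` is a hypothetical tool with a sleep standing in for network latency, and a "round" is counted as one model turn that waits on tool results.

```python
import asyncio

async def retrieve(entity: str) -> str:
    """Hypothetical retrieval tool; the sleep stands in for network latency."""
    await asyncio.sleep(0.01)
    return f"facts about {entity}"

async def serial_agent(entities):
    """One tool call per round: rounds grow with the number of entities."""
    results, rounds = [], 0
    for entity in entities:
        results.append(await retrieve(entity))
        rounds += 1
    return results, rounds

async def parallel_agent(entities):
    """All independent sub-retrievals dispatched together in a single round."""
    results = await asyncio.gather(*(retrieve(e) for e in entities))
    return list(results), 1

entities = ["red car", "street sign", "storefront"]
serial_results, serial_rounds = asyncio.run(serial_agent(entities))
parallel_results, parallel_rounds = asyncio.run(parallel_agent(entities))
print(serial_rounds, parallel_rounds)
```

Both agents return the same results in the same order, since `asyncio.gather` preserves input order. The parallel version only applies when the queries do not depend on each other's answers, which is the condition the paragraph above describes.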

Training stacks two reward layers. The macro layer uses TRACE-style trajectory rewards with progressively tightening reference values to suppress redundant calls. A micro on-policy distillation layer injects token-level correction signals on failed rollouts, easing the credit-assignment pain of sparse outcome rewards.

The 30B version posts 9.9% higher accuracy than comparable open-source agents and averages 5.3× fewer tool-call rounds. That second number matters more for production because it directly shapes latency and the token bill. HyperEyes also ships IMEB, a benchmark that scores reasoning cost alongside accuracy. Existing benchmarks reward call-count inflation, so a "good agent" is often just one that trades calls for points.

Key takeaways:

- Agent latency bottlenecks usually aren't single-step depth. They're parallel opportunities that got serialized. Worth a controlled audit.

- 5.3× fewer tool-call rounds, at higher accuracy, can be a several-fold swing in latency and token bills.

- Accuracy-only evals push call counts up. Bake cost into your internal benchmarks if you want them to reflect deployment.

04 Embodied AI Gets Its Own Internet

Vision and language scaled because the internet provided massive natural corpora. Embodied AI has been stuck on data for exactly this reason — the substrate is missing. HumanNet supplies it: 1M hours of human activity video, first- and third-person views, with action descriptions, hand and body signals, and other interaction-oriented annotations.

The 1M-hour mark is when this starts to matter. Physical interaction's long tail is much wider than text. A few thousand or tens of thousands of hours only cover benchmarks, not the real-world action distribution. Dual-view design is the other key choice. First-person captures manipulation detail, third-person captures scene context, and robot transfer needs both.

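The most interesting ablation: when continuing training from a Qwen VLM, 1000 hours of HumanNet first-person video beats 100 hours of real robot data. That is ten hours of human video for every hour of robot data, so the swap pays off whenever filming people costs less than a tenth as much per hour as collecting robot data. Filming people plausibly clears that bar with margin to spare.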

Key takeaways:

- Physical interaction datasets have entered million-hour scale, comparable to the internet's role for NLP as a foundational substrate.

- First-person human video may be a more economical scaling path than direct robot data.

- Embodied foundation model teams should re-examine the assumption that robot data is mandatory.

Source: HumanNet: Scaling Human-centric Video Learning to One Million Hours

05 One LoRA, Cloud and Edge

MatryoshkaLoRA's engineering value is direct: train once, then pick a rank at deploy time based on device capability. Cloud inference uses high rank for quality. Edge devices use low rank to save resources. No need to train a separate adapter per scenario.

The mechanism inserts a carefully designed fixed diagonal matrix P between LoRA components, so every sub-rank (a nested low-rank slice) sees a consistent gradient signal during training. That's the core difference from random-rank-sampling schemes like DyLoRA. There, high-rank performance often degrades because different ranks see incoherent gradients.
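The nested structure can be sketched in a few lines. This is an illustration under assumptions, not the paper's implementation: it treats the update as a sum of rank-1 terms scaled by the diagonal of P, the values in `p` are made up, and how P is actually designed is not covered in this summary. The helper names `outer`, `add`, and `delta_w` are hypothetical.

```python
def outer(col, row, scale):
    """Scaled outer product of one column of B with one row of A."""
    return [[scale * c * r for r in row] for c in col]

def add(m1, m2):
    """Element-wise sum of two equally shaped matrices."""
    return [[a + b for a, b in zip(r1, r2)] for r1, r2 in zip(m1, m2)]

def delta_w(B_cols, A_rows, p, rank):
    """Weight update from the first `rank` components: sum_i p[i] * b_i a_i^T."""
    d_out, d_in = len(B_cols[0]), len(A_rows[0])
    total = [[0.0] * d_in for _ in range(d_out)]
    for i in range(rank):
        total = add(total, outer(B_cols[i], A_rows[i], p[i]))
    return total

# Toy adapter with maximum rank 3 on a 2x2 weight (all values hypothetical).
B_cols = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]   # columns of B
A_rows = [[1.0, 2.0], [3.0, 4.0], [0.5, 0.5]]   # rows of A
p = [1.0, 0.5, 0.25]                            # diagonal of the fixed matrix P

edge_update = delta_w(B_cols, A_rows, p, rank=1)    # low-rank tier
cloud_update = delta_w(B_cols, A_rows, p, rank=3)   # full-rank tier
print(edge_update)
print(cloud_update)
```

Every lower tier is a prefix of the higher one, so picking a rank at deploy time is just truncation. No second set of weights is stored or trained.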

The paper also proposes AURAC (Area Under the Rank Accuracy Curve) for evaluating "overall performance across a rank set," which fits multi-tier serving better than single-point accuracy. Code is open at IST-DASLab/MatryoshkaLoRA.
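An area-under-curve metric of this kind could be computed as below. This is a sketch of one plausible reading, not the paper's definition: the trapezoidal rule, the linear rank axis, and the normalization by rank span are all assumptions, and the function name `aurac` is hypothetical.

```python
def aurac(ranks, accuracies):
    """Trapezoidal area under the rank-accuracy curve, normalized by the rank span.

    Assumed definition for illustration; the paper may use a different
    axis scaling or normalization.
    """
    if len(ranks) != len(accuracies) or len(ranks) < 2:
        raise ValueError("need at least two (rank, accuracy) points")
    points = sorted(zip(ranks, accuracies))
    area = 0.0
    for (r0, a0), (r1, a1) in zip(points, points[1:]):
        area += (r1 - r0) * (a0 + a1) / 2.0
    return area / (points[-1][0] - points[0][0])

ranks = [1, 2, 4, 8]
flat_adapter = aurac(ranks, [0.7, 0.7, 0.7, 0.7])
rising_adapter = aurac(ranks, [0.5, 0.6, 0.7, 0.8])
print(flat_adapter, rising_adapter)
```

With this normalization the score stays on the accuracy scale, so an adapter that holds 0.7 at every rank scores 0.7. That makes it easy to compare adapters that will be served at several tiers rather than at one fixed rank.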

Key takeaways:

- One adapter supports dynamic rank switching. Downgrade at deploy time by device or latency budget without retraining.

- More stable than DyLoRA at the high-rank end. Worth swapping in as your default.

- AURAC gives multi-tier serving teams a relevant evaluation metric.

Source: MatryoshkaLoRA: Learning Accurate Hierarchical Low-Rank Representations for LLM Fine-Tuning

Also Worth Noting

Today's Observation

Flow-OPD and AutoTTS look unrelated, but they point at the same concrete move: porting mature methodology from the LLM community back into adjacent fields. Flow-OPD ports On-Policy Distillation into flow-matching multi-objective alignment, where OPD originated as a tool for reconciling heterogeneous rewards in LLM post-training. AutoTTS ports the scaling-era workflow — build a discovery environment instead of tuning heuristics — from training to test-time scaling.

This isn't LLMs eating everything. Both paths have their own research communities. Rather, LLM post-training and inference infrastructure are becoming the general toolbox for AI research. The flip side worth watching: techniques still maintained as "image-generation tricks" or "inference-only hacks" may just be waiting for that methodology to arrive. Once it does, what looked like field-specific specialness gets exposed as "we didn't have better tools yet."

Concrete next step. Pull your team's tech-debt list from the past six months and pick three places where heuristics are duct-taping things together. For each, ask: has the LLM side solved a similarly shaped problem? If yes, the migration cost is probably lower than you think. Run a one-week feasibility spike before deciding whether to put it on next quarter's roadmap.